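- Without further ado, here is how to install it. (This is the environment for a CI/CD setup; later posts will build gitlab+docker+harbor+k8s+jenkins step by step. Skip any software you don't need.)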

Environment

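| Hostname | IP | Notes (extra software placed on these VMs to save machines; not needed if you only install k8s) |
|---|---|---|
| master | 192.168.47.100 | jenkins (needs at least 3 GB RAM) |
| node1 | 192.168.47.101 | harbor |
| node2 | 192.168.47.102 | gitlab (needs at least 4 GB RAM) |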

- Pay attention to the version dependencies between components

| Software | Version | Notes |
|---|---|---|
| OS | CentOS7 | / |
| Kernel | 5.19.8 | If the kernel is 3.10, upgrade it first (otherwise master initialization may fail) |
| JDK | 1.8, 11 | https://www.oracle.com/java/technologies/javase-downloads.html |
| Maven | 3.0.5 | https://maven.apache.org/ |
| docker | 20.10.17 | https://www.docker.com/ |
| Kubernetes | 1.24.4 | https://kubernetes.io/ |
| cri-docker | 0.2.3 | https://github.com/Mirantis/cri-dockerd |
| Gitlab | 15.4 | https://about.gitlab.com/ |
| Jenkins | 2.361.1-1.1 | Requires JDK 11 |
| Harbor | v2.6.0 | https://goharbor.io/ |

- How to check and upgrade the kernel

Check the kernel version

[root@k8s cgroup]# uname -r

3.10.0-327.el7.x86_64
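To script this check instead of reading uname -r by eye, a small helper can compare the kernel's major.minor version against a threshold. This is a minimal sketch: the function names are hypothetical, and the 4.0 cut-off in the usage line is an assumption that only separates CentOS 7's stock 3.10 kernel from an upgraded one; it is not an official Kubernetes minimum.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: decide whether a kernel release string is older than a
# given major.minor threshold. The threshold is the caller's choice.

# Print "major.minor" from a release such as 3.10.0-327.el7.x86_64
kernel_major_minor() {
  echo "$1" | cut -d. -f1,2
}

# Succeed (exit 0) if RELEASE ($1) is strictly older than MIN ($2, major.minor)
kernel_older_than() {
  local cur min="$2"
  cur="$(kernel_major_minor "$1")"
  # sort -V compares version strings numerically; the smaller one sorts first
  [ "$cur" != "$min" ] &&
    [ "$(printf '%s\n%s\n' "$cur" "$min" | sort -V | head -n 1)" = "$cur" ]
}

# Usage on a real host (4.0 is an assumed cut-off, not an official minimum):
# if kernel_older_than "$(uname -r)" 4.0; then echo "upgrade the kernel first"; fi
```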

Import the ELRepo repository

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

[root@k8s cgroup]# yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

Available Packages

elrepo-release.noarch

kernel-lt.x86_64

kernel-lt-devel.x86_64

kernel-lt-doc.noarch

kernel-lt-headers.x86_64

kernel-lt-tools.x86_64

kernel-lt-tools-libs.x86_64

kernel-lt-tools-libs-devel.x86_64

kernel-ml.x86_64

kernel-ml-devel.x86_64

kernel-ml-doc.noarch

kernel-ml-headers.x86_64

kernel-ml-tools.x86_64

kernel-ml-tools-libs.x86_64

kernel-ml-tools-libs-devel.x86_64

perf.x86_64

python-perf.x86_64

Install the new kernel (kernel-ml is the mainline branch; kernel-lt is the long-term support branch)

yum --enablerepo=elrepo-kernel install kernel-ml

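Edit the GRUB options so the newly installed kernel boots first (GRUB_DEFAULT=0 selects the first menu entry, and grub2-mkconfig lists the newest kernel first, as its output below shows)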

cp /etc/default/grub /etc/default/grub_bak

[root@k8s cgroup]# vim /etc/default/grub

[root@k8s cgroup]# cat /etc/default/grub

GRUB_TIMEOUT=5

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

# changed from GRUB_DEFAULT=saved to GRUB_DEFAULT=0

GRUB_DEFAULT=0

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="console"

GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rd.lvm.lv=centos/swap rhgb quiet"

GRUB_DISABLE_RECOVERY="true"
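The vim edit above can also be done non-interactively. The sketch below (the function name is hypothetical) makes the same backup and rewrites the GRUB_DEFAULT line with sed; try it on a copy of the file first if you want to check the result. On CentOS 7, keeping GRUB_DEFAULT=saved and running grub2-set-default 0 is another way to reach the same boot order.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set GRUB_DEFAULT=0 in a grub defaults file without vim.
# Entry 0 is the first menu entry, i.e. the newest kernel after grub2-mkconfig.
set_grub_default_zero() {
  local file="$1"
  cp "$file" "${file}_bak"   # same backup as the manual step above
  if grep -q '^GRUB_DEFAULT=' "$file"; then
    # Replace the existing value (e.g. "saved") with 0
    sed -i 's/^GRUB_DEFAULT=.*/GRUB_DEFAULT=0/' "$file"
  else
    # No GRUB_DEFAULT line yet: add one
    echo 'GRUB_DEFAULT=0' >> "$file"
  fi
}

# Usage on the host:
# set_grub_default_zero /etc/default/grub && grub2-mkconfig -o /boot/grub2/grub.cfg
```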

Regenerate the GRUB configuration

[root@k8s cgroup]# grub2-mkconfig -o /boot/grub2/grub.cfg

Generating grub configuration file ...

Found linux image: /boot/vmlinuz-5.19.8-1.el7.elrepo.x86_64

Found initrd image: /boot/initramfs-5.19.8-1.el7.elrepo.x86_64.img

Found linux image: /boot/vmlinuz-3.10.0-327.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-327.el7.x86_64.img

Found linux image: /boot/vmlinuz-0-rescue-575e24cf52124364b060acab9088f9b8

Found initrd image: /boot/initramfs-0-rescue-575e24cf52124364b060acab9088f9b8.img

done

Reboot and verify

reboot

uname -r

5.19.8-1.el7.elrepo.x86_64

- Environment setup (run on every node)

#!/bin/bash

# Completely remove any previously installed k8s environment

kubeadm reset -f

modprobe -r ipip

rm -rf ~/.kube/

rm -rf /etc/kubernetes/

rm -rf /etc/systemd/system/kubelet.service.d

rm -rf /etc/systemd/system/kubelet.service

rm -rf /usr/bin/kube*

rm -rf /etc/cni

rm -rf /opt/cni

rm -rf /var/lib/etcd

rm -rf /var/etcd

yum clean all

yum remove kube* -y

# Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

firewall-cmd --state

# Disable SELinux

setenforce 0

sed -i "s/SELINUX=.*/SELINUX=disabled/g" /etc/selinux/config

# Disable swap

# Temporarily

swapoff -a

# Permanently (takes effect after a reboot)

sed -i 's/\/dev\/mapper\/centos-swap/#\/dev\/mapper\/centos-swap/g' /etc/fstab

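# Let bridged traffic pass through iptables. Load br_netfilter now: the net.bridge.* sysctls below only exist once it is loaded (modules-load.d loads it at boot)

modprobe br_netfilter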

cat > /etc/modules-load.d/k8s.conf <<EOF

br_netfilter

EOF

cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

sudo sysctl --system

# /etc/hosts entries so the nodes can reach each other by name

echo 192.168.47.100 k8s-master >> /etc/hosts

echo 192.168.47.101 k8s-node >> /etc/hosts

echo 192.168.47.102 k8s-node2 >> /etc/hosts

# Switch to the Aliyun yum mirror (back up the existing repo files first)

cd /etc/yum.repos.d/

mkdir -p bak

mv *.repo bak/

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

yum clean all

yum makecache

# Install docker (yum-config-manager is provided by yum-utils, so install that first)

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

  "registry-mirrors": ["https://hzfyo6gg.mirror.aliyuncs.com"],

  "exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

yum install -y docker-ce

systemctl enable docker

systemctl daemon-reload

systemctl restart docker

# Install k8s 1.24.4

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

EOF

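# Pin kubectl too; otherwise yum pulls in the latest kubectl as a kubeadm dependency

yum install -y kubeadm-1.24.4 kubelet-1.24.4 kubectl-1.24.4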

systemctl enable kubelet
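The sed line in the script above only comments out /dev/mapper/centos-swap, so swap on another device or UUID would survive a reboot. A more general sketch (hypothetical function name; it assumes the standard fstab layout in which the third field is the filesystem type) comments out every active entry of type swap:

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out every active swap entry in an fstab-style file.
# Assumes the usual layout "device mountpoint fstype options dump pass".
disable_swap_in_fstab() {
  local file="$1" tmp
  tmp="$(mktemp)"
  # Leave existing comments alone; prefix "#" when the fs type is swap
  awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$file" > "$tmp" &&
    cat "$tmp" > "$file"   # cat keeps the original file's owner and permissions
  rm -f "$tmp"
}

# Usage on each node:
# cp /etc/fstab /etc/fstab.bak && disable_swap_in_fstab /etc/fstab && swapoff -a
```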

- Install JDK and Maven (for the CI/CD pipeline; optional)

yum install -y java-openjdk

yum install -y maven

mkdir /home/repository

vim /etc/maven/settings.xml

<settings xmlns="http://maven.apache.org/SETTINGS/1.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/SETTINGS/1.0.0 http://maven.apache.org/xsd/settings-1.0.0.xsd">

<!-- add: -->

<localRepository>/home/repository</localRepository>

<!-- change to: -->

<mirrors>

  <mirror>

    <id>aliyunmaven</id>

    <mirrorOf>*</mirrorOf>

    <name>Aliyun public repository</name>

    <url>https://maven.aliyun.com/repository/public</url>

  </mirror>

</mirrors>

Building the k8s cluster

📌 Install and configure cri-docker

- Package: Baidu Netdisk

- Link: https://pan.baidu.com/s/1DztUfYpEZdkzic7lg1yF1g (extraction code: raq6)

tar -xf cri-dockerd-0.2.3.amd64.tgz

cp cri-dockerd/cri-dockerd /usr/local/

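- Create the cri-docker systemd unit files, based on the upstream templates (ExecStart below points at /usr/local/cri-dockerd and sets the CNI plugin and pause image):

https://github.com/Mirantis/cri-dockerd/blob/master/packaging/systemd/cri-docker.service

https://github.com/Mirantis/cri-dockerd/blob/master/packaging/systemd/cri-docker.socket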

[root@k8s ~]# vim /usr/lib/systemd/system/cri-docker.service

[Unit]

Description=CRI Interface for Docker Application Container Engine

Documentation=https://docs.mirantis.com

After=network-online.target firewalld.service docker.service

Wants=network-online.target

Requires=cri-docker.socket

[Service]

Type=notify

# Use pause:3.7, the sandbox image kubeadm 1.24.4 expects (see kubeadm config images list)

ExecStart=/usr/local/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.7

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always

StartLimitBurst=3

StartLimitInterval=60s

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

[root@k8s ~]# vim /usr/lib/systemd/system/cri-docker.socket

[Unit]

Description=CRI Docker Socket for the API

PartOf=cri-docker.service

[Socket]

ListenStream=%t/cri-dockerd.sock

SocketMode=0660

SocketUser=root

SocketGroup=docker

[Install]

WantedBy=sockets.target

- Start cri-docker and enable it at boot

systemctl daemon-reload

systemctl restart cri-docker.service

systemctl enable cri-docker.service
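Before running kubeadm it helps to confirm that cri-dockerd actually created its socket. In cri-docker.socket, %t expands to /run for system units, and on CentOS 7 /var/run is a symlink to /run, so the socket appears as /var/run/cri-dockerd.sock. A minimal polling sketch (hypothetical helper; it only checks that the path exists, not that the daemon answers requests):

```shell
#!/usr/bin/env bash
# Hypothetical helper: wait up to TIMEOUT seconds (default 30) for PATH to appear.
wait_for_path() {
  local path="$1" timeout="${2:-30}" i=0
  while [ ! -e "$path" ]; do
    if [ "$i" -ge "$timeout" ]; then
      echo "timed out waiting for $path" >&2
      return 1
    fi
    sleep 1
    i=$((i + 1))
  done
  return 0
}

# Usage after starting the service:
# wait_for_path /var/run/cri-dockerd.sock 30 && systemctl is-active cri-docker
```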

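📌 Initialize the master

- You must pass --cri-socket unix:///var/run/cri-dockerd.sock not only to kubeadm init but also to kubeadm reset and kubeadm join. docker-ce also installs containerd, so without it kubeadm finds two CRI endpoints and refuses to pick one (see the kubeadm reset error below).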
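Because the same flags are repeated on every kubeadm init attempt, they can also be kept in a kubeadm config file. The sketch below writes one in the kubeadm.k8s.io/v1beta3 format used by kubeadm 1.24; the function name and file path are hypothetical, and the values mirror the command line in this article. It only covers kubeadm init; reset and join still take --cri-socket as shown in this article.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the kubeadm init options used in this article to a
# config file (kubeadm.k8s.io/v1beta3 format, understood by kubeadm 1.24).
write_kubeadm_config() {
  cat > "$1" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
nodeRegistration:
  criSocket: unix:///var/run/cri-dockerd.sock
  ignorePreflightErrors:
  - NumCPU
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.24.4
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.10.0.0/16
  podSubnet: 10.122.0.0/16
EOF
}

# Usage on the master:
# write_kubeadm_config kubeadm.yaml && kubeadm init --config kubeadm.yaml
```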

kubeadm init --image-repository registry.aliyuncs.com/google_containers --cri-socket unix:///var/run/cri-dockerd.sock --ignore-preflight-errors=NumCPU --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16 --kubernetes-version=1.24.4

# This error comes from the kernel being too old; skip it if you have already upgraded the kernel

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR SystemVerification]: unexpected kernel config: CONFIG_CGROUP_PIDS

[ERROR SystemVerification]: missing required cgroups: pids

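Error: [ERROR SystemVerification]: unexpected kernel config: CONFIG_CGROUP_PIDS and [ERROR SystemVerification]: missing required cgroups: pids

Fix: upgrade the kernel (the 3.10.0-327 kernel lacks the pids cgroup), following the kernel upgrade steps at the beginning of this article.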


kubeadm init --image-repository registry.aliyuncs.com/google_containers --cri-socket unix:///var/run/cri-dockerd.sock --ignore-preflight-errors=NumCPU --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16 --kubernetes-version=1.24.4

# This failed because the image is large and the pull timed out. You can import the images from my netdisk, or pull the required images manually

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image registry.aliyuncs.com/google_containers/etcd:3.5.3-0: output: E0914 13:11:22.857372 17990 remote_image.go:218] "PullImage from image service failed" err="rpc error: code = Unknown desc = context deadline exceeded" image="registry.aliyuncs.com/google_containers/etcd:3.5.3-0"

time="2022-09-14T13:11:22+08:00" level=fatal msg="pulling image: rpc error: code = Unknown desc = context deadline exceeded"

, error: exit status 1

Error: failed to pull image registry.aliyuncs.com/google_containers/etcd:3.5.3-0 (context deadline exceeded)

Cause: the etcd:3.5.3-0 image could not be downloaded in time

Fix 🔑: pull it manually

List the required images:

[root@k8s ~]# kubeadm config images list

I0914 14:02:13.369582 21306 version.go:255] remote version is much newer: v1.25.0; falling back to: stable-1.24

k8s.gcr.io/kube-apiserver:v1.24.4

k8s.gcr.io/kube-controller-manager:v1.24.4

k8s.gcr.io/kube-scheduler:v1.24.4

k8s.gcr.io/kube-proxy:v1.24.4

k8s.gcr.io/pause:3.7

k8s.gcr.io/etcd:3.5.3-0

k8s.gcr.io/coredns/coredns:v1.8.6

Pull the missing image from the Aliyun mirror:

[root@k8s ~]# docker pull registry.aliyuncs.com/google_containers/etcd:3.5.3-0

3.5.3-0: Pulling from google_containers/etcd

36698cfa5275: Pull complete

924f6cbb1ab3: Pull complete

11ade7be2717: Pull complete

8c6339f7974a: Pull complete

d846fbeccd2d: Pull complete

Digest: sha256:13f53ed1d91e2e11aac476ee9a0269fdda6cc4874eba903efd40daf50c55eee5

Status: Downloaded newer image for registry.aliyuncs.com/google_containers/etcd:3.5.3-0

registry.aliyuncs.com/google_containers/etcd:3.5.3-0
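Rather than pulling images one by one after each failure, they can be pre-pulled with retries. kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.24.4 --cri-socket unix:///var/run/cri-dockerd.sock does this in one command; the sketch below is a manual alternative that retries each docker pull. The helper names are hypothetical, and the name mapping assumes the mirror keeps only the last path component (k8s.gcr.io/coredns/coredns:v1.8.6 becomes google_containers/coredns:v1.8.6).

```shell
#!/usr/bin/env bash
# Hypothetical helpers for pre-pulling kubeadm images from the Aliyun mirror.
MIRROR=registry.aliyuncs.com/google_containers

# Map an upstream image name to the mirror by keeping only its last path component
mirror_image() {
  echo "${MIRROR}/${1##*/}"
}

# Run a command up to N times, sleeping RETRY_DELAY seconds (default 5) between tries
retry() {
  local n="$1" i=1
  shift
  while [ "$i" -le "$n" ]; do
    "$@" && return 0
    [ "$i" -lt "$n" ] && sleep "${RETRY_DELAY:-5}"
    i=$((i + 1))
  done
  return 1
}

# Usage on the master:
# for img in $(kubeadm config images list --kubernetes-version v1.24.4); do
#   retry 3 docker pull "$(mirror_image "$img")"
# done
```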

[root@k8s ~]# kubeadm init --image-repository registry.aliyuncs.com/google_containers --cri-socket unix:///var/run/cri-dockerd.sock --ignore-preflight-errors=NumCPU --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16 --kubernetes-version=1.24.4

[kubelet-check] Initial timeout of 40s passed.

error execution phase upload-config/kubelet: Error writing Crisocket information for the control-plane node: nodes "k8s" not found

To see the stack trace of this error execute with --v=5 or higher

Error: error execution phase upload-config/kubelet: Error writing Crisocket information for the control-plane node: nodes "k8s" not found

Fix 🔑: reset the node, clean up, and run init again.

Running swapoff -a && kubeadm reset on its own fails with: Found multiple CRI endpoints on the host. Please define which one do you wish to use by setting the 'criSocket' field in the kubeadm configuration file: unix:///var/run/containerd/containerd.sock, unix:///var/run/cri-dockerd.sock

So pass --cri-socket to kubeadm reset as well:

swapoff -a && kubeadm reset --cri-socket unix:///var/run/cri-dockerd.sock

systemctl daemon-reload && systemctl restart kubelet && iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

[root@k8s ~]# kubeadm init --image-repository registry.aliyuncs.com/google_containers --cri-socket unix:///var/run/cri-dockerd.sock --ignore-preflight-errors=NumCPU --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16 --kubernetes-version=1.24.4

I0914 14:56:03.338660 39811 version.go:255] remote version is much newer: v1.25.0; falling back to: stable-1.24

[init] Using Kubernetes version: v1.24.4

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.10.0.1 192.168.47.50]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s localhost] and IPs [192.168.47.50 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s localhost] and IPs [192.168.47.50 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 8.503409 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: j5h4wu.crmpe07onlh5h0o8

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.47.50:6443 --token j5h4wu.crmpe07onlh5h0o8 \

--discovery-token-ca-cert-hash sha256:0af083946d013ec301d311bc19a784be123f5baa15ca7cb2de12c292e288635e

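Run the commands as instructed. (This log was captured on a master named k8s at 192.168.47.50; with the host layout in the table above, your master would be 192.168.47.100.)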

[root@k8s ~]# mkdir -p $HOME/.kube

[root@k8s ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s ~]# chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s ~]# export KUBECONFIG=/etc/kubernetes/admin.conf

📌 kubectl command completion

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

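📌 Install the flannel network

- Install the flannel network plugin. raw.githubusercontent.com may be unreachable from some networks, so the GitHub hostnames are pinned to fixed IPs in /etc/hosts first; these addresses were valid at the time of writing and may have changed since.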

echo 52.74.223.119 github.com >> /etc/hosts

echo 192.30.253.119 gist.github.com >> /etc/hosts

echo 54.169.195.247 api.github.com >> /etc/hosts

echo 185.199.111.153 assets-cdn.github.com >> /etc/hosts

echo 151.101.64.133 raw.githubusercontent.com >> /etc/hosts

echo 151.101.108.133 user-images.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 gist.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 cloud.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 camo.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars0.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars1.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars2.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars3.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars4.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars5.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars6.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars7.githubusercontent.com >> /etc/hosts

echo 151.101.76.133 avatars8.githubusercontent.com >> /etc/hosts
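Appending with echo >> adds duplicate lines every time these commands are re-run. A hedged alternative is a small helper (hypothetical name) that appends an entry only when the hostname is not yet mapped in the file; if it is already mapped, the existing line is left untouched, even when its IP differs.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file (default /etc/hosts)
# only if NAME does not already appear as a hostname on a non-comment line.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # Fields 2..NF of a hosts line are hostnames/aliases; exit 0 if NAME is found
  if awk -v n="$name" '!/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                       END { exit !found }' "$file" 2>/dev/null; then
    return 0
  fi
  echo "$ip $name" >> "$file"
}

# Usage:
# add_host_entry 151.101.64.133 raw.githubusercontent.com
# add_host_entry 192.168.47.100 k8s-master
```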

[root@k8s ~]# wget --no-check-certificate https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Set "Network" in net-conf.json to the same CIDR as --pod-network-cidr (10.122.0.0/16):

[root@k8s ~]# vim kube-flannel.yml

  net-conf.json: |
    {
      "Network": "10.122.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
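The same change can be made without vim. This sketch (hypothetical function name) rewrites whatever CIDR follows "Network": in the downloaded manifest, assuming the key and its value sit on one line as in the snippet above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: set flannel's "Network" CIDR in kube-flannel.yml in place.
set_flannel_network() {
  local file="$1" cidr="$2"
  # Group 1 keeps the key and opening quote, group 2 the closing quote
  sed -i -E "s#(\"Network\": *\")[0-9./]+(\")#\1${cidr}\2#" "$file"
}

# Usage:
# set_flannel_network kube-flannel.yml 10.122.0.0/16 && grep '"Network"' kube-flannel.yml
```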

[root@k8s ~]# kubectl apply -f kube-flannel.yml

[root@k8s ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-flannel kube-flannel-ds-6t62g 1/1 Running 0 30s

kube-system coredns-74586cf9b6-jbvtt 0/1 ContainerCreating 0 10m

kube-system coredns-74586cf9b6-q5mlw 0/1 ContainerCreating 0 157m

kube-system etcd-k8s 1/1 Running 1 (38m ago) 158m

kube-system kube-apiserver-k8s 1/1 Running 1 (37m ago) 158m

kube-system kube-controller-manager-k8s 1/1 Running 8 (7m20s ago) 158m

kube-system kube-proxy-qdm7z 1/1 Running 1 (38m ago) 157m

kube-system kube-scheduler-k8s 1/1 Running 8 (7m20s ago) 158m

[root@k8s ~]# kubectl describe pod coredns-74586cf9b6-jbvtt -n kube-system

Warning FailedCreatePodSandBox 4m46s kubelet Failed to create pod sandbox: rpc error: code = Unknown desc = [failed to set up sandbox container "23afc3b1f16600c4139d25c54f0cc0f802ddc356b11d3962d405d2892d0b44bb" network for pod "coredns-74586cf9b6-jbvtt": networkPlugin cni failed to set up pod "coredns-74586cf9b6-jbvtt_kube-system" network: error getting ClusterInformation: connection is unauthorized: Unauthorized, failed to clean up sandbox container "23afc3b1f16600c4139d25c54f0cc0f802ddc356b11d3962d405d2892d0b44bb" network for pod "coredns-74586cf9b6-jbvtt": networkPlugin cni failed to teardown pod "coredns-74586cf9b6-jbvtt_kube-system" network: error getting ClusterInformation: connection is unauthorized: Unauthorized]

Error: Failed to create pod sandbox: networkPlugin cni failed to set up pod "coredns-74586cf9b6-jbvtt_kube-system" network: error getting ClusterInformation: connection is unauthorized: Unauthorized

Cause: leftovers from a previously uninstalled calico

Fix 🔑:

[root@k8s ~]# ipvsadm --clear

[root@k8s ~]# rm -rf /etc/cni/net.d/

[root@k8s ~]# kubectl delete -f kube-flannel.yml

[root@k8s ~]# kubectl create -f kube-flannel.yml

[root@k8s ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-flannel kube-flannel-ds-6t62g 1/1 Running 0 36s

kube-system coredns-74586cf9b6-jbvtt 1/1 Running 0 10m

kube-system coredns-74586cf9b6-q5mlw 1/1 Running 0 157m

kube-system etcd-k8s 1/1 Running 1 (38m ago) 158m

kube-system kube-apiserver-k8s 1/1 Running 1 (38m ago) 158m

kube-system kube-controller-manager-k8s 1/1 Running 8 (7m26s ago) 158m

kube-system kube-proxy-qdm7z 1/1 Running 1 (38m ago) 157m

kube-system kube-scheduler-k8s 1/1 Running 8 (7m26s ago) 158m
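To spot stuck pods, such as the coredns pods in ContainerCreating above, the pod list can be filtered. This sketch (hypothetical function name) reads kubectl get pods -A --no-headers output, assumes the default column order NAMESPACE NAME READY STATUS, skips Completed pods, and prints every other pod that is not Running with all of its containers ready:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "namespace/name status" for pods that are not fully up.
pods_not_ready() {
  awk '$4 == "Completed" { next }
       {
         split($3, r, "/")   # READY column, e.g. 0/1
         if ($4 != "Running" || r[1] != r[2]) print $1 "/" $2 " " $4
       }'
}

# Usage:
# kubectl get pods -A --no-headers | pods_not_ready
```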

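📌 Worker node configuration

Print the join command on the master (this creates a new token; bootstrap tokens expire after 24 hours by default):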

[root@k8s ~]# kubeadm token create --print-join-command

kubeadm join 192.168.47.50:6443 --token 0i4x0o.zkrwba68j2pf19um --discovery-token-ca-cert-hash sha256:473f633d586094fc423a1c1544fbef44dc46c736c747b8b6bfc6343207e10650

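Join the cluster by running this on each worker; the printed command does not include --cri-socket, so append it:

kubeadm join 192.168.47.50:6443 --token j5h4wu.crmpe07onlh5h0o8 --discovery-token-ca-cert-hash sha256:0af083946d013ec301d311bc19a784be123f5baa15ca7cb2de12c292e288635e --cri-socket unix:///var/run/cri-dockerd.sock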
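Since kubeadm token create --print-join-command prints the command without --cri-socket, a small helper (hypothetical name) can append the flag before the command is copied to the workers. It leaves the command unchanged if the flag is already there.

```shell
#!/usr/bin/env bash
# Hypothetical helper: ensure a kubeadm join command carries --cri-socket.
CRI_SOCKET=unix:///var/run/cri-dockerd.sock

with_cri_socket() {
  case "$1" in
    *--cri-socket*) echo "$1" ;;                         # already specified
    *) echo "$1 --cri-socket $CRI_SOCKET" ;;             # append the cri-dockerd socket
  esac
}

# Usage on the master, then run the printed line on each worker:
# with_cri_socket "$(kubeadm token create --print-join-command)"
```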

[root@k8s ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s Ready control-plane 170m v1.24.4

k8s-node NotReady <none> 74s v1.24.4

k8s-node2 NotReady <none> 54s v1.24.4

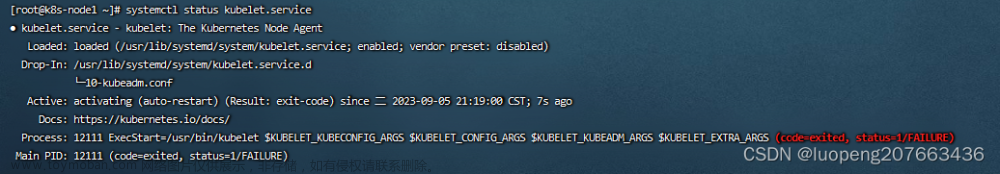

Check the kubelet log on a NotReady node:

journalctl -xeu kubelet -l

Container runtime network not ready" networkReady="NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized"

Error: network plugin is not ready: cni config uninitialized

Fix 🔑: copy the files under /etc/cni/net.d on the master to each affected node. Run the following on the node (k8s is the master's hostname in this log; with the /etc/hosts entries from the setup script it would be k8s-master):

mkdir -p /etc/cni/net.d/

scp k8s:/etc/cni/net.d/* /etc/cni/net.d/

[root@k8s ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s Ready control-plane 3h2m v1.24.4

k8s-node Ready <none> 12m v1.24.4

k8s-node2 Ready <none> 12m v1.24.4

📌 Install ingress-nginx

- Download the YAML

mkdir -p nginx-ingress

cd nginx-ingress

curl -O https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.2.0/deploy/static/provider/cloud/deploy.yaml
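Pulling a manifest's images on every node ahead of time avoids another round of image-pull timeouts. A minimal sketch (hypothetical function name) that lists the distinct image: values in a YAML file, assuming one image per line as in the manifest below:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each distinct container image referenced in a manifest.
manifest_images() {
  # Match "image: NAME" (optionally as a list item "- image:"), drop quotes, de-duplicate
  sed -nE 's/^[[:space:]-]*image:[[:space:]]*"?([^"[:space:]]+)"?.*$/\1/p' "$1" | sort -u
}

# Usage on each node:
# for img in $(manifest_images deploy.yaml); do docker pull "$img"; done
```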

- YAML content (this is the version with the errors already fixed, so you can copy it directly)

apiVersion: v1
kind: Namespace
metadata:
  labels:
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
  name: ingress-nginx

---

apiVersion: v1
automountServiceAccountToken: true
kind: ServiceAccount
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx
  namespace: ingress-nginx

---

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission
  namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx
  namespace: ingress-nginx
rules:
- apiGroups:
  - ""
  resources:
  - namespaces
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - configmaps
  - pods
  - secrets
  - endpoints
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - services
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - networking.k8s.io
  resources:
  - ingresses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - networking.k8s.io
  resources:
  - ingresses/status
  verbs:
  - update
- apiGroups:
  - networking.k8s.io
  resources:
  - ingressclasses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resourceNames:
  - ingress-controller-leader
  resources:
  - configmaps
  verbs:
  - get
  - update
- apiGroups:
  - ""
  resources:
  - configmaps
  verbs:
  - create
- apiGroups:
  - ""
  resources:
  - events
  verbs:
  - create
  - patch
- apiGroups:
  - ""
  resources:
  - configmaps
  verbs:
  - update

---

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission
  namespace: ingress-nginx
rules:
- apiGroups:
  - ""
  resources:
  - secrets
  verbs:
  - get
  - create

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx
rules:
- apiGroups:
  - ""
  resources:
  - configmaps
  - endpoints
  - nodes
  - pods
  - secrets
  - namespaces
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - services
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - networking.k8s.io
  resources:
  - ingresses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - events
  verbs:
  - create
  - patch
- apiGroups:
  - networking.k8s.io
  resources:
  - ingresses/status
  verbs:
  - update
- apiGroups:
  - networking.k8s.io
  resources:
  - ingressclasses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - "extensions"
  - "networking.k8s.io"
  resources:
  - ingresses
  verbs:
  - list
  - watch

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission
rules:
- apiGroups:
  - admissionregistration.k8s.io
  resources:
  - validatingwebhookconfigurations
  verbs:
  - get
  - update

---

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx
  namespace: ingress-nginx
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: ingress-nginx
subjects:
- kind: ServiceAccount
  name: ingress-nginx
  namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission
  namespace: ingress-nginx
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: ingress-nginx-admission
subjects:
- kind: ServiceAccount
  name: ingress-nginx-admission
  namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ingress-nginx
subjects:
- kind: ServiceAccount
  name: ingress-nginx
  namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ingress-nginx-admission
subjects:
- kind: ServiceAccount
  name: ingress-nginx-admission
  namespace: ingress-nginx

---

apiVersion: v1
data:
  allow-snippet-annotations: "true"
kind: ConfigMap
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-controller
  namespace: ingress-nginx

---

apiVersion: v1
kind: Service
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  externalTrafficPolicy: Local
  ports:
  - appProtocol: http
    name: http
    port: 80
    protocol: TCP
    targetPort: http
  - appProtocol: https
    name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
  type: LoadBalancer

---

apiVersion: v1
kind: Service
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-controller-admission
  namespace: ingress-nginx
spec:
  ports:
  - appProtocol: https
    name: https-webhook
    port: 443
    targetPort: webhook
  selector:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
  type: ClusterIP

---

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  minReadySeconds: 0
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app.kubernetes.io/component: controller
      app.kubernetes.io/instance: ingress-nginx
      app.kubernetes.io/name: ingress-nginx
  template:
    metadata:
      labels:
        app.kubernetes.io/component: controller
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/name: ingress-nginx
    spec:
      containers:
      - args:
        - /nginx-ingress-controller
        - --publish-service=$(POD_NAMESPACE)/ingress-nginx-controller
        - --election-id=ingress-controller-leader
        - --controller-class=k8s.io/ingress-nginx
        - --ingress-class=nginx
        - --configmap=$(POD_NAMESPACE)/ingress-nginx-controller
        - --validating-webhook=:8443
        - --validating-webhook-certificate=/usr/local/certificates/cert
        - --validating-webhook-key=/usr/local/certificates/key
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: LD_PRELOAD
          value: /usr/local/lib/libmimalloc.so
        image: bitnami/nginx-ingress-controller
        imagePullPolicy: IfNotPresent
        lifecycle:
          preStop:
            exec:
              command:
              - /wait-shutdown
        livenessProbe:
          failureThreshold: 5
          httpGet:
            path: /healthz
            port: 10254
            scheme: HTTP
          initialDelaySeconds: 10
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 1
        name: controller
        ports:
        - containerPort: 80
          name: http
          protocol: TCP
        - containerPort: 443
          name: https
          protocol: TCP
        - containerPort: 8443
          name: webhook
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /healthz
            port: 10254
            scheme: HTTP
          initialDelaySeconds: 10
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 1
        resources:
          requests:
            cpu: 100m
            memory: 90Mi
        securityContext:
          allowPrivilegeEscalation: true
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - ALL
          runAsUser: 101
        volumeMounts:
        - mountPath: /usr/local/certificates/
          name: webhook-cert
          readOnly: true
      dnsPolicy: ClusterFirst
      nodeSelector:
        kubernetes.io/os: linux
      serviceAccountName: ingress-nginx
      terminationGracePeriodSeconds: 300
      volumes:
      - name: webhook-cert
        secret:
          secretName: ingress-nginx-admission

---

apiVersion: batch/v1
kind: Job
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission-create
  namespace: ingress-nginx
spec:
  template:
    metadata:
      labels:
        app.kubernetes.io/component: admission-webhook
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
        app.kubernetes.io/version: 1.2.0
      name: ingress-nginx-admission-create
    spec:
      containers:
      - args:
        - create
        - --host=ingress-nginx-controller-admission,ingress-nginx-controller-admission.$(POD_NAMESPACE).svc
        - --namespace=$(POD_NAMESPACE)
        - --secret-name=ingress-nginx-admission
        env:
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        image: dyrnq/kube-webhook-certgen:v1.1.1
        imagePullPolicy: IfNotPresent
        name: create
        securityContext:
          allowPrivilegeEscalation: false
      nodeSelector:
        kubernetes.io/os: linux
      restartPolicy: OnFailure
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 2000
      serviceAccountName: ingress-nginx-admission

---

apiVersion: batch/v1
kind: Job
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission-patch
  namespace: ingress-nginx
spec:
  template:
    metadata:
      labels:
        app.kubernetes.io/component: admission-webhook
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
        app.kubernetes.io/version: 1.2.0
      name: ingress-nginx-admission-patch
    spec:
      containers:
      - args:
        - patch
        - --webhook-name=ingress-nginx-admission
        - --namespace=$(POD_NAMESPACE)
        - --patch-mutating=false
        - --secret-name=ingress-nginx-admission
        - --patch-failure-policy=Fail
        env:
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        image: dyrnq/kube-webhook-certgen:v1.1.1
        imagePullPolicy: IfNotPresent
        name: patch
        securityContext:
          allowPrivilegeEscalation: false
      nodeSelector:
        kubernetes.io/os: linux
      restartPolicy: OnFailure
      securityContext:
        fsGroup: 2000
        runAsNonRoot: true
        runAsUser: 2000
      serviceAccountName: ingress-nginx-admission

---

apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: nginx
spec:
  controller: k8s.io/ingress-nginx

---

apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingWebhookConfiguration
metadata:
  labels:
    app.kubernetes.io/component: admission-webhook
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.2.0
  name: ingress-nginx-admission
webhooks:
- admissionReviewVersions:
  - v1
  clientConfig:
    service:
      name: ingress-nginx-controller-admission
      namespace: ingress-nginx
      path: /networking/v1/ingresses
  failurePolicy: Fail
  matchPolicy: Equivalent
  name: validate.nginx.ingress.kubernetes.io
  rules:
  - apiGroups:
    - networking.k8s.io
    apiVersions:
    - v1
    operations:
    - CREATE
    - UPDATE
    resources:
    - ingresses
  sideEffects: None

Pull the images in advance (change the image names if needed)

[root@k8s ~]# docker search nginx-ingress-controller

[root@k8s ~]# docker pull bitnami/nginx-ingress-controller

[root@k8s ~]# docker images

bitnami/nginx-ingress-controller latest 80ae81101d41 8 months ago 388MB

[root@k8s ~]# docker tag bitnami/nginx-ingress-controller:latest bitnami/nginx-ingress-controller:v1.24.4

[root@k8s ~]# vim ingress-nginx.yaml

containers:
- name: nginx-ingress-controller
  image: bitnami/nginx-ingress-controller:v1.24.4
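If you prefer not to edit the image line in vim, the same retag can be done non-interactively. This is a minimal sketch, assuming GNU sed (the CentOS 7 default, needed for `-i`) and that the image sits on its own `image:` line as in the manifest above; the function name retag_image is hypothetical:

```shell
# Hypothetical helper: rewrite every "image: <old>" line in a manifest to "image: <new>".
# Assumes GNU sed for in-place editing; lines where <old> already carries a tag are left alone.
retag_image() {
  local file=$1 old=$2 new=$3
  local old_re
  # Escape dots so they match literally in the regex (image names rarely contain other metacharacters).
  old_re=$(printf '%s' "$old" | sed 's/\./\\./g')
  # Group 1 keeps the original indentation and the "image:" key; only the value is swapped.
  sed -i "s#^\([[:space:]]*image:[[:space:]]*\)${old_re}[[:space:]]*\$#\1${new}#" "$file"
}

# Usage (file name as used above):
#   retag_image ingress-nginx.yaml bitnami/nginx-ingress-controller bitnami/nginx-ingress-controller:v1.24.4
```

Anchoring the match at end of line keeps the command idempotent: running it twice does not append a second tag.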

[root@k8s-node2 ~]# docker pull dyrnq/kube-webhook-certgen:v1.1.1

v1.1.1: Pulling from dyrnq/kube-webhook-certgen

ec52731e9273: Pull complete

b90aa28117d4: Pull complete

Digest: sha256:64d8c73dca984af206adf9d6d7e46aa550362b1d7a01f3a0a91b20cc67868660

Status: Downloaded newer image for dyrnq/kube-webhook-certgen:v1.1.1

docker.io/dyrnq/kube-webhook-certgen:v1.1.1
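Because the Deployment and Jobs use imagePullPolicy: IfNotPresent, each node that may run these pods needs the images locally (or access to a registry). A small sketch for checking the current node before running kubectl apply, assuming the Docker CLI used above; both function names are hypothetical:

```shell
# Hypothetical helper: list the distinct images referenced by "image:" keys in a manifest.
manifest_images() {
  sed -n 's/^[[:space:]]*image:[[:space:]]*//p' "$1" | sort -u
}

# Hypothetical helper: print the manifest images the local Docker daemon does not have yet.
missing_images() {
  manifest_images "$1" | while read -r img; do
    # `docker image inspect` exits non-zero when the image is not present locally.
    docker image inspect "$img" >/dev/null 2>&1 || printf '%s\n' "$img"
  done
}

# Usage: pull whatever is reported, on every node that may run the pods (xargs -r is GNU).
#   missing_images deploy.yaml | xargs -r -n1 docker pull
```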

[root@k8s nginx-ingress]# kubectl apply -f deploy.yaml

namespace/ingress-nginx configured

serviceaccount/ingress-nginx created

serviceaccount/ingress-nginx-admission created

role.rbac.authorization.k8s.io/ingress-nginx created

role.rbac.authorization.k8s.io/ingress-nginx-admission created

clusterrole.rbac.authorization.k8s.io/ingress-nginx created

clusterrole.rbac.authorization.k8s.io/ingress-nginx-admission created

rolebinding.rbac.authorization.k8s.io/ingress-nginx created

rolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

configmap/ingress-nginx-controller created

service/ingress-nginx-controller created

service/ingress-nginx-controller-admission created

deployment.apps/ingress-nginx-controller created

job.batch/ingress-nginx-admission-create created

job.batch/ingress-nginx-admission-patch created

ingressclass.networking.k8s.io/nginx created

[root@k8s nginx-ingress]# kubectl get pods -n ingress-nginx

NAME READY STATUS RESTARTS AGE

ingress-nginx-admission-create-zpscz 0/1 Completed 0 10m

ingress-nginx-admission-patch-fbcrj 0/1 Completed 0 10m

ingress-nginx-controller-599c66746c-g2rmw 1/1 Running 0 7m41s
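Instead of eyeballing this listing, the check can be scripted. A sketch that reads `kubectl get pods --no-headers` output and succeeds only when every pod is either Completed (the two admission Jobs) or Running with all containers ready; the function name is hypothetical. The usage comment also shows the `kubectl wait` form suggested in the ingress-nginx installation docs:

```shell
# Hypothetical helper: read `kubectl get pods --no-headers` lines on stdin
# (columns: NAME READY STATUS RESTARTS AGE) and exit 0 only if every pod is
# Completed, or Running with READY "n/n". Empty input counts as a failure.
all_pods_healthy() {
  awk '
    { n++
      split($2, r, "/")                 # READY column, e.g. "1/1"
      if ($3 == "Completed") next       # finished admission Jobs are fine
      if ($3 != "Running" || r[1] != r[2]) bad = 1
    }
    END { exit (bad || n == 0) }
  '
}

# Usage (needs cluster access):
#   kubectl get pods -n ingress-nginx --no-headers | all_pods_healthy && echo "ingress-nginx is up"
# Or block until the controller pod is ready:
#   kubectl wait -n ingress-nginx --for=condition=ready pod \
#     --selector=app.kubernetes.io/component=controller --timeout=120s
```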

Check the logs

[root@k8s nginx-ingress]# kubectl logs -n ingress-nginx ingress-nginx-controller-599c66746c-g2rmw
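The controller logs in klog format, where each line starts with a severity letter (I, W, E or F) followed by the month and day, so warnings and errors can be filtered out of a long log. A minimal sketch with a hypothetical function name. Note that in the correct log output further down, the E lines about 127.0.0.1:10246 appear only during startup and are followed by "Backend successfully reloaded", so they look transient rather than a real failure:

```shell
# Hypothetical helper: keep only klog warning/error/fatal lines (W, E or F followed by MMDD)
# plus any line mentioning "forbidden", which is how the RBAC problems below show up.
# Like any grep filter, it exits 1 when nothing matches.
klog_problems() {
  grep -E '^[WEF][0-9]{4} |forbidden'
}

# Usage (pod name from `kubectl get pods -n ingress-nginx`):
#   kubectl logs -n ingress-nginx ingress-nginx-controller-599c66746c-g2rmw | klog_problems
```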

- Fixing errors in the logs

Error: Failed to list *v1beta1.Ingress: ingresses.networking.k8s.io is forbidden: User "system:serviceaccount:ingress-nginx:nginx-ingress-serviceaccount" cannot list resource "ingresses" in API group "networking.k8s.io" at the cluster scope

Fix 🔑: edit the ingress mandatory.yaml, add the following rule under the ClusterRole, then run kubectl apply -f again:

- apiGroups:
  - "extensions"
  - "networking.k8s.io"
  resources:
  - ingresses
  verbs:
  - list
  - watch

- Error 2

Error: Failed to update lock: configmaps "ingress-controller-leader" is forbidden: User "system:serviceaccount:ingress-nginx:nginx-ingress-serviceaccount" cannot update resource "configmaps" in API group "" in the namespace "ingress-nginx"

Fix 🔑: edit the ingress mandatory.yaml, add the following rule under the Role, then run kubectl apply -f again:

- apiGroups:
  - ""
  resources:
  - configmaps
  verbs:
  - update
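After re-applying, you can ask the API server directly whether the ServiceAccount now holds both permissions, instead of waiting for the errors to stop. A sketch using `kubectl auth can-i` with impersonation (it needs an admin kubeconfig); the function name is hypothetical, and the default ServiceAccount is the one named in the error messages. The deploy.yaml applied above creates a ServiceAccount called ingress-nginx, so pass that name if your errors mention it instead:

```shell
# Hypothetical helper: check the two permissions from the errors above for a ServiceAccount.
# `kubectl auth can-i` prints yes/no and exits non-zero on "no".
verify_ingress_rbac() {
  local ns=${1:-ingress-nginx}
  local sa=${2:-nginx-ingress-serviceaccount}
  local who="system:serviceaccount:${ns}:${sa}"
  # Error 1: cluster-wide list of networking.k8s.io ingresses (granted by the ClusterRole)
  kubectl auth can-i list ingresses.networking.k8s.io --all-namespaces --as="$who" || return 1
  # Error 2: update of configmaps in the controller's namespace (granted by the Role)
  kubectl auth can-i update configmaps --namespace "$ns" --as="$who" || return 1
}

# Usage:
#   verify_ingress_rbac                          # SA from the error messages
#   verify_ingress_rbac ingress-nginx ingress-nginx
```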

- Correct log output

[root@k8s nginx-ingress]# kubectl logs -n ingress-nginx ingress-nginx-controller-599c66746c-g2rmw

-------------------------------------------------------------------------------

NGINX Ingress controller

Release: 1.1.0

Build: 843a16a8

Repository: https://github.com/kubernetes/ingress-nginx

nginx version: nginx/1.21.5

-------------------------------------------------------------------------------

W0920 05:48:25.803155 1 client_config.go:615] Neither --kubeconfig nor --master was specified. Using the inClusterConfig. This might not work.

I0920 05:48:25.894694 1 main.go:223] "Creating API client" host="https://10.10.0.1:443"

I0920 05:48:26.606925 1 main.go:267] "Running in Kubernetes cluster" major="1" minor="24" git="v1.24.4" state="clean" commit="95ee5ab382d64cfe6c28967f36b53970b8374491" platform="linux/amd64"

I0920 05:48:27.275437 1 main.go:104] "SSL fake certificate created" file="/etc/ingress-controller/ssl/default-fake-certificate.pem"

I0920 05:48:28.096131 1 ssl.go:531] "loading tls certificate" path="/usr/local/certificates/cert" key="/usr/local/certificates/key"

I0920 05:48:28.363220 1 nginx.go:255] "Starting NGINX Ingress controller"

I0920 05:48:28.510903 1 event.go:282] Event(v1.ObjectReference{Kind:"ConfigMap", Namespace:"ingress-nginx", Name:"ingress-nginx-controller", UID:"6b2a0e79-65f5-43b8-b05b-ad6c5d068a77", APIVersion:"v1", ResourceVersion:"11568", FieldPath:""}): type: 'Normal' reason: 'CREATE' ConfigMap ingress-nginx/ingress-nginx-controller

I0920 05:48:30.447454 1 nginx.go:297] "Starting NGINX process"

I0920 05:48:30.455756 1 leaderelection.go:248] attempting to acquire leader lease ingress-nginx/ingress-controller-leader...

I0920 05:48:30.455860 1 nginx.go:317] "Starting validation webhook" address=":8443" certPath="/usr/local/certificates/cert" keyPath="/usr/local/certificates/key"

I0920 05:48:30.461818 1 controller.go:155] "Configuration changes detected, backend reload required"

I0920 05:48:30.539493 1 status.go:84] "New leader elected" identity="nginx-ingress-controller-64cc4648b8-m8pxs"

I0920 05:48:33.551937 1 controller.go:172] "Backend successfully reloaded"

I0920 05:48:33.551996 1 controller.go:183] "Initial sync, sleeping for 1 second"

I0920 05:48:33.553113 1 event.go:282] Event(v1.ObjectReference{Kind:"Pod", Namespace:"ingress-nginx", Name:"ingress-nginx-controller-599c66746c-g2rmw", UID:"7413a1d0-e3b7-4a1e-99d2-8e704a9d2ae3", APIVersion:"v1", ResourceVersion:"11935", FieldPath:""}): type: 'Normal' reason: 'RELOAD' NGINX reload triggered due to a change in configuration

W0920 05:48:34.566596 1 controller.go:201] Dynamic reconfiguration failed: Post "http://127.0.0.1:10246/configuration/backends": dial tcp 127.0.0.1:10246: connect: connection refused

E0920 05:48:34.566632 1 controller.go:205] Unexpected failure reconfiguring NGINX:

Post "http://127.0.0.1:10246/configuration/backends": dial tcp 127.0.0.1:10246: connect: connection refused

E0920 05:48:34.566698 1 queue.go:130] "requeuing" err="Post \"http://127.0.0.1:10246/configuration/backends\": dial tcp 127.0.0.1:10246: connect: connection refused" key="initial-sync"

I0920 05:48:34.566826 1 controller.go:155] "Configuration changes detected, backend reload required"

I0920 05:48:34.723516 1 controller.go:172] "Backend successfully reloaded"

I0920 05:48:34.723679 1 controller.go:183] "Initial sync, sleeping for 1 second"

I0920 05:48:34.761308 1 event.go:282] Event(v1.ObjectReference{Kind:"Pod", Namespace:"ingress-nginx", Name:"ingress-nginx-controller-599c66746c-g2rmw", UID:"7413a1d0-e3b7-4a1e-99d2-8e704a9d2ae3", APIVersion:"v1", ResourceVersion:"11935", FieldPath:""}): type: 'Normal' reason: 'RELOAD' NGINX reload triggered due to a change in configuration

I0920 05:49:09.840584 1 status.go:84] "New leader elected" identity="ingress-nginx-controller-599c66746c-g2rmw"

I0920 05:49:09.841730 1 leaderelection.go:258] successfully acquired lease ingress-nginx/ingress-controller-leader

- Testing

[root@k8s nginx-ingress]# kubectl create deploy echoserver --image=cilium/echoserver --replicas=2

deployment.apps/echoserver created

[root@k8s nginx-ingress]# kubectl get pods

NAME READY STATUS RESTARTS AGE

echoserver-8585bfb456-75jps 1/1 Running 0 3m52s

echoserver-8585bfb456-scnld 1/1 Running 0 3m53s

[root@k8s nginx-ingress]# kubectl expose deployment echoserver --port=80

service/echoserver exposed

[root@k8s nginx-ingress]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

echoserver ClusterIP 10.10.197.110 <none> 80/TCP 38s

kubernetes ClusterIP 10.10.0.1 <none> 443/TCP 165m

# Normally the two pod hostnames appear at random across requests

[root@k8s nginx-ingress]# curl http://10.10.197.110

Hostname: echoserver-8585bfb456-scnld

Pod Information:

-no pod information available-

Server values:

server_version=nginx: 1.13.3 - lua: 10008

Request Information:

client_address=::ffff:10.122.0.0

method=GET

real path=/

query=

request_version=1.1

request_scheme=http

request_uri=http://10.10.197.110:80/

Request Headers:

accept=*/*

host=10.10.197.110

user-agent=curl/7.29.0

Request Body:

-no body in request-

[root@k8s nginx-ingress]# curl http://10.10.197.110

Hostname: echoserver-8585bfb456-75jps

Pod Information:

-no pod information available-

Server values:

server_version=nginx: 1.13.3 - lua: 10008

Request Information:

client_address=::ffff:10.122.0.0

method=GET

real path=/

query=

request_version=1.1

request_scheme=http

request_uri=http://10.10.197.110:80/

Request Headers:

accept=*/*

host=10.10.197.110

user-agent=curl/7.29.0

Request Body:

-no body in request-
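Two manual curls only hint at the load balancing. A sketch that sends N requests and counts which pod answered each one, based on the Hostname: line that cilium/echoserver prints; the function names are hypothetical and curl is assumed to be installed:

```shell
# Hypothetical helper: count "Hostname:" lines per backend, most frequent first.
count_hostnames() {
  awk -F': ' '/^Hostname: / { c[$2]++ } END { for (h in c) print c[h], h }' | sort -rn
}

# Hypothetical helper: send N (default 10) GET requests to URL and tally the answering pods.
tally_backends() {
  local url=$1 n=${2:-10} i=0
  while [ "$i" -lt "$n" ]; do
    curl -s "$url"
    echo    # guarantee a newline so consecutive responses never merge onto one line
    i=$((i + 1))
  done | count_hostnames
}

# Usage (ClusterIP from `kubectl get svc` above):
#   tally_backends http://10.10.197.110 20
```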

- Testing the NodePort

[root@k8s nginx-ingress]# kubectl get svc -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller LoadBalancer 10.10.68.162 <pending> 80:31855/TCP,443:31968/TCP 23m

ingress-nginx-controller-admission ClusterIP 10.10.229.71 <none> 443/TCP 23m

Expose the nginx-ingress controller on a NodePort

[root@k8s nginx-ingress]# kubectl edit svc ingress-nginx-controller -n ingress-nginx

service/ingress-nginx-controller edited

# change type: LoadBalancer to type: NodePort

[root@k8s nginx-ingress]# kubectl get svc -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller NodePort 10.10.68.162 <none> 80:31855/TCP,443:31968/TCP 25m

ingress-nginx-controller-admission ClusterIP 10.10.229.71 <none> 443/TCP 25m
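The same change can be made without an interactive editor through kubectl patch, and the assigned HTTP NodePort can be read with a JSONPath query instead of from the PORT(S) column. A sketch with hypothetical function names. One caveat: this Service keeps externalTrafficPolicy: Local, so a NodePort only answers on nodes that run an ingress-nginx-controller pod; if curl to a node hangs, check where the controller landed with kubectl get pods -n ingress-nginx -o wide.

```shell
NS=ingress-nginx
SVC=ingress-nginx-controller

# Switch the controller Service from LoadBalancer to NodePort (same effect as the kubectl edit above).
expose_as_nodeport() {
  kubectl patch svc "$SVC" -n "$NS" -p '{"spec":{"type":"NodePort"}}'
}

# Print the nodePort assigned to the Service port named "http" (31855 in the output above).
http_nodeport() {
  kubectl get svc "$SVC" -n "$NS" -o jsonpath='{.spec.ports[?(@.name=="http")].nodePort}'
}

# Usage (<node-ip> is a placeholder for a node running the controller pod):
#   expose_as_nodeport
#   curl "http://<node-ip>:$(http_nodeport)"
```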

[root@k8s nginx-ingress]# curl http://192.168.47.50:31855

<html>

<head><title>404 Not Found</title></head>

<body>

<center><h1>404 Not Found</h1></center>

<hr><center>nginx</center>

</body>

</html>

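The 404 above comes from the controller's default backend: the NodePort is reachable, but no Ingress rule matches the request yet. Configure an Ingress rule: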

[root@k8s nginx-ingress]# vim ingress-echoserver-test.yaml

[root@k8s nginx-ingress]# cat ingress-echoserver-test.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-echoserver-test
spec:
  rules:
  - host: echoserver.test
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: echoserver
            port:
              number: 80
  ingressClassName: nginx

[root@k8s nginx-ingress]# kubectl apply -f ingress-echoserver-test.yaml

ingress.networking.k8s.io/ingress-echoserver-test created

[root@k8s nginx-ingress]# kubectl get ingress

NAME CLASS HOSTS ADDRESS PORTS AGE

ingress-echoserver-test nginx echoserver.test 10.10.68.162 80 91s

[root@k8s nginx-ingress]# curl -H "Host: echoserver.test" http://192.168.47.50:31855

Hostname: echoserver-8585bfb456-75jps

Pod Information:

-no pod information available-

Server values:

server_version=nginx: 1.13.3 - lua: 10008

Request Information:

client_address=::ffff:10.122.1.8

method=GET

real path=/

query=

request_version=1.1

request_scheme=http

request_uri=http://echoserver.test:80/

Request Headers:

accept=*/*

host=echoserver.test

user-agent=curl/7.29.0

x-forwarded-for=10.122.0.0

x-forwarded-host=echoserver.test

x-forwarded-port=80

x-forwarded-proto=http

x-forwarded-scheme=http

x-real-ip=10.122.0.0

x-request-id=57aa1432d2373b71fc966b7f08a01176

x-scheme=http

Request Body:

-no body in request-
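The -H "Host: ..." trick works for a quick check. To hit the rule by name from a browser you would normally add echoserver.test to /etc/hosts; for curl alone, --resolve pins the name to a node IP without touching /etc/hosts. A sketch with a hypothetical function name, using the IP and port from this walkthrough:

```shell
# Hypothetical helper: request http://HOST:PORT/PATH while resolving HOST to NODE_IP,
# so the Ingress host rule is matched without editing /etc/hosts.
curl_via_ingress() {
  local host=$1 node_ip=$2 port=$3 path=${4:-/}
  curl -s --resolve "${host}:${port}:${node_ip}" "http://${host}:${port}${path}"
}

# Usage (values from above):
#   curl_via_ingress echoserver.test 192.168.47.50 31855
# Equivalent /etc/hosts entry for browsers:
#   192.168.47.50 echoserver.test
```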

At this point the k8s cluster is fully set up; follow-up articles will cover deploying the complete CI/CD pipeline. Source: https://www.toymoban.com/news/detail-405414.html

If you run into any problems, leave a comment and I will reply quickly.

That concludes the article "k8s-1.24.4 detailed installation tutorial (images included)".