[Introduction to Artificial Intelligence] Building a Neural Network: Solving the MNIST Task with InceptionNet as an Example

1. Overall Approach

- Two principles and four steps.

1.1 Two Principles

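- Work from the macro level down to the micro level: settle the overall structure first, then fill in the details.
- Keep track of the shape of the data at every step.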
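For the second principle, almost every shape in this article follows from one rule, the output-size formula given in the PyTorch `Conv2d` and `MaxPool2d` documentation. It is written here for the case used throughout this article (dilation 1, and `ceil_mode=False` for pooling), with kernel size k, padding p and stride s; the width follows the same rule as the height. Note that `nn.MaxPool2d(2)` uses stride 2, because its stride defaults to the kernel size. The second and third lines work through the first two layers of the program below.

```latex
H_{\text{out}} = \left\lfloor \frac{H_{\text{in}} + 2p - k}{s} \right\rfloor + 1

\text{conv1 } (k = 5,\ p = 0,\ s = 1):\quad \left\lfloor \frac{28 + 2 \cdot 0 - 5}{1} \right\rfloor + 1 = 24

\text{MaxPool2d(2) } (k = 2,\ p = 0,\ s = 2):\quad \left\lfloor \frac{24 + 2 \cdot 0 - 2}{2} \right\rfloor + 1 = 12
```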

1.2 Four Steps

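- Prepare the data.
- Build the model.
- Choose the optimization strategy (loss function and optimizer).
- Complete the training and testing code.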
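To make the four steps concrete without any framework, here is a toy sketch that is not part of the original article: it fits the hypothetical target y = 2x + 1 with plain gradient descent in pure Python, and each step is labeled. The MNIST program later in the article follows the same order, with PyTorch doing the heavy lifting.

```python
# Toy illustration of the four steps (hypothetical example, not the MNIST program):
# fit y = 2x + 1 with gradient descent, using only the standard library.
import random

# Step 1: prepare the data (noise-free samples, split into train and test sets)
random.seed(0)
xs = [i / 10 for i in range(-20, 21)]
data = [(x, 2 * x + 1) for x in xs]
random.shuffle(data)
train_data, test_data = data[:30], data[30:]

# Step 2: build the model (a single weight and a single bias)
w, b = 0.0, 0.0

def model(x):
    return w * x + b

# Step 3: choose the optimization strategy (mean squared error + plain gradient descent)
lr = 0.1

def mse(pairs):
    return sum((model(x) - y) ** 2 for x, y in pairs) / len(pairs)

# Step 4: train, then test on the held-out points
for epoch in range(200):
    n = len(train_data)
    grad_w = sum(2 * (model(x) - y) * x for x, y in train_data) / n
    grad_b = sum(2 * (model(x) - y) for x, y in train_data) / n
    w -= lr * grad_w
    b -= lr * grad_b

print('w=%.3f b=%.3f test mse=%.6f' % (w, b, mse(test_data)))
```

The point is only the structure: data first, then the model, then the loss and update rule, and finally the loop that trains and evaluates.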

2. Example: Solving the MNIST Task with InceptionNet

2.1 Model Overview

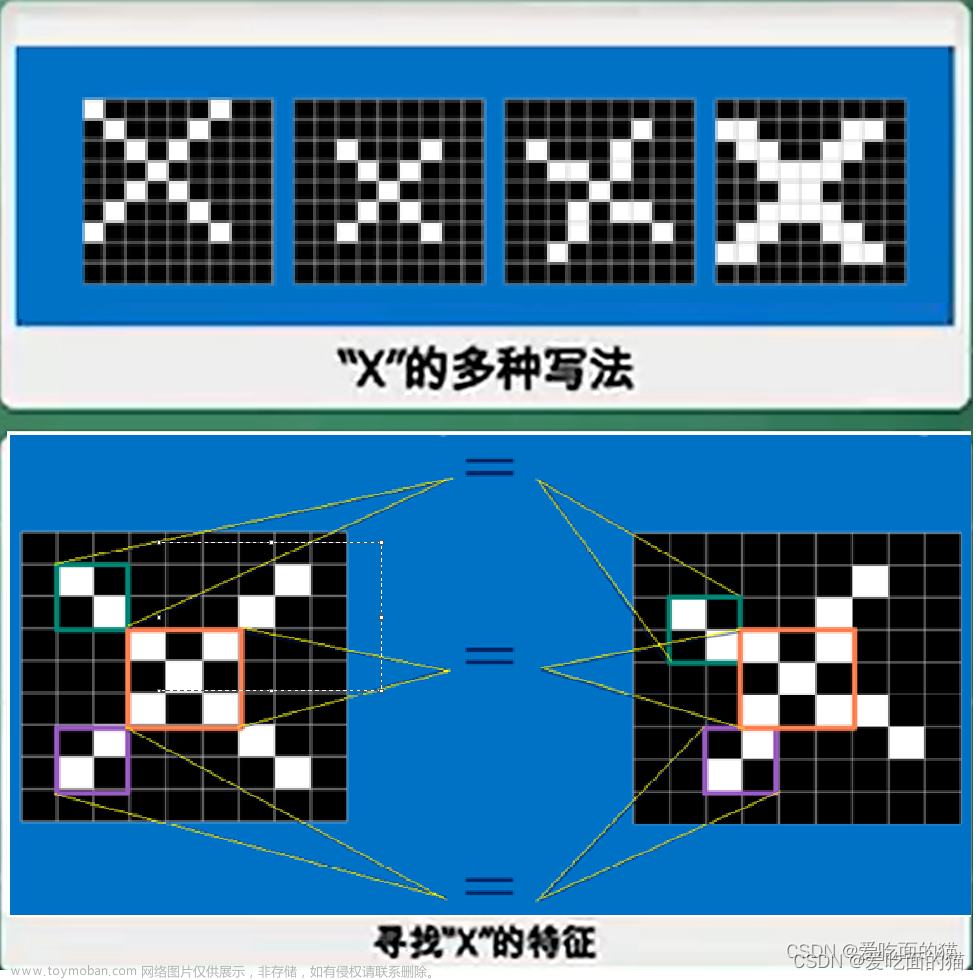

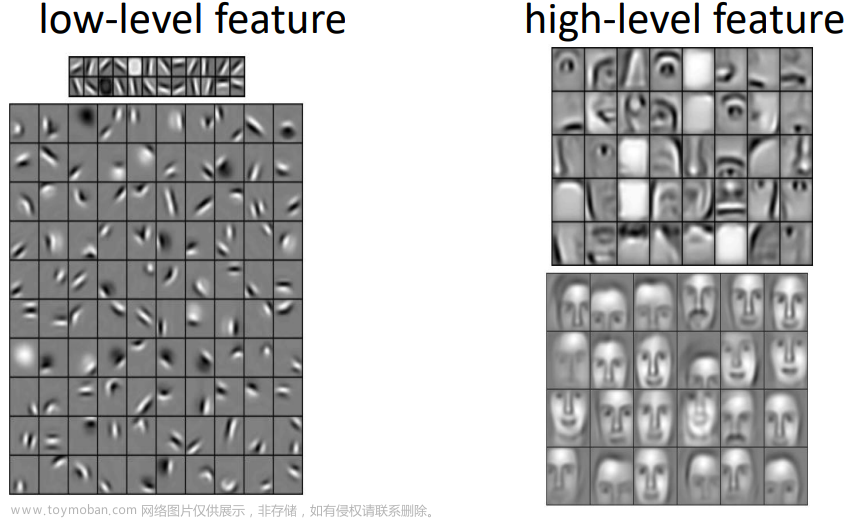

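- InceptionNet aims to improve model performance by making the network wider: instead of a single convolution per layer, it runs several branches with different kernel sizes in parallel on the same input and concatenates their outputs.

- Its core is the basic building unit, the Inception block, shown in the figures above.

- The complete network is built by stacking Inception blocks in depth.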
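"Wider" means the branches all see the same input and their outputs are stacked along the channel axis, which only works if every branch keeps the same height and width. Below is a dependency-free sketch of that concatenation, with feature maps modeled as nested Python lists indexed [C][H][W]; it stands in for the `torch.cat(outputs, dim=1)` call in the program, where dim 1 is the channel axis of an (N, C, H, W) tensor. The helper names `concat_channels` and `zeros` are hypothetical.

```python
def concat_channels(branches):
    """Concatenate feature maps stored as nested lists [C][H][W] along the channel axis."""
    h, w = len(branches[0][0]), len(branches[0][0][0])
    for fm in branches:
        # Every branch must produce maps of the same spatial size
        if len(fm[0]) != h or len(fm[0][0]) != w:
            raise ValueError('all branches must have the same height and width')
    merged = []
    for fm in branches:
        merged.extend(fm)  # append this branch's channels after the previous ones
    return merged

def zeros(c, h, w):
    """A feature map of c channels, each h x w, filled with 0.0."""
    return [[[0.0] * w for _ in range(h)] for _ in range(c)]

# Channel counts of the four branches of the Inceptiona block, on a 12 x 12 input
branches = [zeros(16, 12, 12), zeros(24, 12, 12), zeros(24, 12, 12), zeros(24, 12, 12)]
merged = concat_channels(branches)
print(len(merged), len(merged[0]), len(merged[0][0]))  # 88 12 12
```

The channel count grows (16 + 24 + 24 + 24 = 88) while the spatial size is unchanged, which is exactly what the next layer in the network has to be told about.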

2.2 The MNIST Task

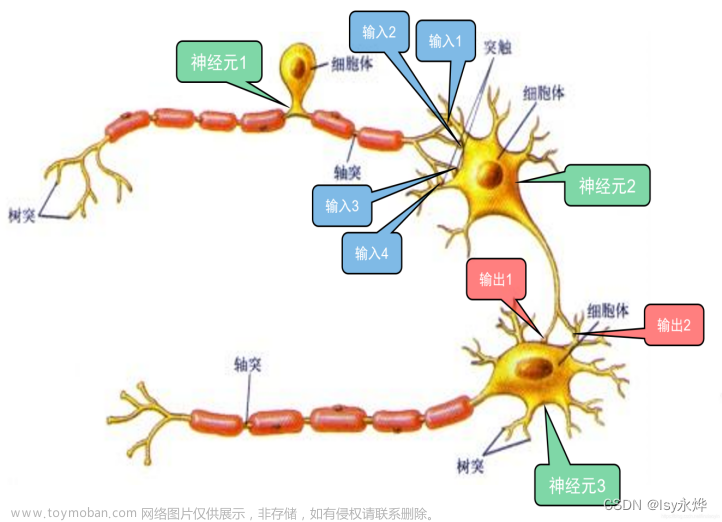

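- MNIST is an entry-level machine learning task.

- It is a handwritten digit recognition dataset: 28×28 grayscale images of the digits 0 to 9, with 60,000 training images and 10,000 test images.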

2.3 Complete Program

```python
# Imports
import torch
import torch.nn as nn
import torch.nn.functional as F
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.optim as optim
```

```python
"""Data preparation"""
batch_size = 64
transform = transforms.Compose([
    transforms.ToTensor(),                      # PIL image in [0, 255] -> tensor (1, 28, 28) in [0.0, 1.0]
    transforms.Normalize((0.1307,), (0.3081,))  # standardize with the MNIST mean and std
])

train_dataset = datasets.MNIST(root='./mnist/', train=True, download=True, transform=transform)
train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)
test_dataset = datasets.MNIST(root='./mnist/', train=False, download=True, transform=transform)
test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)
```
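The two transforms above are simple arithmetic on each pixel, and `batch_size` fixes how many iterations one epoch takes. The sketch below reproduces both in plain Python. It assumes the standard MNIST split (60,000 training and 10,000 test images) and `DataLoader`'s default `drop_last=False`, so the final batch is simply smaller. `to_normalized` is a hypothetical helper name.

```python
import math

MNIST_MEAN, MNIST_STD = 0.1307, 0.3081  # the constants passed to transforms.Normalize

def to_normalized(pixel):
    """Map a raw 8-bit pixel value the way ToTensor() followed by Normalize() does."""
    scaled = pixel / 255.0                     # ToTensor(): [0, 255] -> [0.0, 1.0]
    return (scaled - MNIST_MEAN) / MNIST_STD   # Normalize(): (x - mean) / std

# A black pixel (0) becomes about -0.42 and a white pixel (255) about 2.82
print(round(to_normalized(0), 4), round(to_normalized(255), 4))

# Number of batches per pass, keeping the smaller last batch (drop_last=False)
train_batches = math.ceil(60000 / 64)
test_batches = math.ceil(10000 / 64)
print(train_batches, test_batches)  # 938 157
```

After normalization the inputs are roughly zero-centered with unit spread, which generally makes SGD better behaved than feeding raw 0 to 255 values.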

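```python
"""Building the model"""
# The number of input channels must be specified when the block is created
class Inceptiona(nn.Module):
    def __init__(self, in_channels):
        super(Inceptiona, self).__init__()
        # Branch 1: a single 1x1 convolution
        self.branch1_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        # Branch 2: 1x1 convolution, then 5x5 convolution (padding=2 keeps H and W)
        self.branch5_5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5_5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)
        # Branch 3: 1x1 convolution, then two 3x3 convolutions (padding=1 keeps H and W)
        self.branch3_3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3_3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3_3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)
        # Branch 4: 3x3 average pooling (applied in forward), then a 1x1 convolution
        self.branch_pooling = nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        x1 = self.branch1_1(x)

        x2 = self.branch5_5_1(x)
        x2 = self.branch5_5_2(x2)

        x3 = self.branch3_3_1(x)
        x3 = self.branch3_3_2(x3)
        x3 = self.branch3_3_3(x3)

        x4 = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        x4 = self.branch_pooling(x4)

        # All branches keep the same H x W, so they can be concatenated along
        # the channel dimension (dim=1): 16 + 24 + 24 + 24 = 88 output channels
        outputs = [x1, x2, x3, x4]
        return torch.cat(outputs, dim=1)
```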
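The padding values in `Inceptiona` are not arbitrary: with stride 1 and an odd kernel size k, padding (k - 1) / 2 leaves the height and width unchanged, which is what lets `torch.cat` stack the branches. This plain-Python check applies the standard output-size rule (dilation 1) to every spatial layer in the block, for the two input sizes the block receives in this network (12 × 12 and 4 × 4). The helper `conv_out` and the `layers` table are hypothetical names introduced for the check.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Output height/width of a Conv2d or pooling layer (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1

# (kernel_size, padding) of the layers inside Inceptiona that touch H and W; stride is 1 everywhere
layers = {
    'branch1_1':   (1, 0),
    'branch5_5_2': (5, 2),
    'branch3_3_2': (3, 1),
    'branch3_3_3': (3, 1),
    'avg_pool2d':  (3, 1),
}

for size in (12, 4):  # input sizes seen by incep1 and incep2
    for name, (k, p) in layers.items():
        assert conv_out(size, k, p) == size, name  # "same" padding: p = (k - 1) // 2

branch_channels = [16, 24, 24, 24]  # output channels of x1, x2, x3, x4
print(sum(branch_channels))  # 88
```

Because the block always outputs 88 channels regardless of `in_channels`, the layer that follows it must accept 88 input channels.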

```python
# Build the complete network
class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(88, 20, kernel_size=5)  # 88 = channels output by Inceptiona
        self.incep1 = Inceptiona(in_channels=10)
        self.incep2 = Inceptiona(in_channels=20)
        self.mp = nn.MaxPool2d(2)
        self.fc = nn.Linear(1408, 10)                  # 1408 = 88 * 4 * 4

    def forward(self, x):
        batch_size = x.size(0)
        x = F.relu(self.mp(self.conv1(x)))  # (B, 1, 28, 28) -> (B, 10, 12, 12)
        x = self.incep1(x)                  # -> (B, 88, 12, 12)
        x = F.relu(self.mp(self.conv2(x)))  # -> (B, 20, 4, 4)
        x = self.incep2(x)                  # -> (B, 88, 4, 4)
        x = x.view(batch_size, -1)          # flatten -> (B, 1408)
        x = self.fc(x)                      # -> (B, 10) class scores (logits)
        return x
```
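The magic numbers 88 and 1408 in `Net` come straight from the "keep track of data shape" principle. A small plain-Python trace (a hypothetical helper, not part of the original program) walks a 28 × 28 input through the layers using the standard output-size rule and recovers the input size of `self.fc`.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Output height/width of a Conv2d or pooling layer (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1

def trace_net(size=28):
    """Return (layer, channels, height) after each stage of Net.forward."""
    shapes = [('input', 1, size)]
    size = conv_out(size, 5)             # conv1: kernel_size=5, no padding
    shapes.append(('conv1', 10, size))
    size = conv_out(size, 2, stride=2)   # MaxPool2d(2): kernel 2, stride 2
    shapes.append(('mp', 10, size))
    shapes.append(('incep1', 88, size))  # Inceptiona keeps H and W, outputs 88 channels
    size = conv_out(size, 5)             # conv2
    shapes.append(('conv2', 20, size))
    size = conv_out(size, 2, stride=2)
    shapes.append(('mp', 20, size))
    shapes.append(('incep2', 88, size))
    return shapes

shapes = trace_net()
for name, c, s in shapes:
    print('%-7s (%d, %d, %d)' % (name, c, s, s))
_, c, s = shapes[-1]
print('flattened features:', c * s * s)  # 1408
```

If the input resolution or any kernel size changes, rerunning a trace like this (or passing a dummy tensor through the real model and printing `x.shape`) is a quick way to find the new value for `nn.Linear`.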

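```python
"""Optimization strategy"""
model = Net()
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
model.to(device)  # move the model parameters to the chosen device
# CrossEntropyLoss combines log-softmax and negative log-likelihood,
# so the network should output raw scores (logits), not probabilities
criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)
```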
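To see what the loss and optimizer compute, here is a plain-Python sketch of both. `cross_entropy` mirrors `nn.CrossEntropyLoss()` with its default mean reduction over the batch, and `sgd_momentum_step` mirrors the momentum update described in the `torch.optim.SGD` documentation for the settings used here (dampening 0, no Nesterov, no weight decay): the velocity starts as the first gradient, then v = momentum · v + g and p = p - lr · v. Both function names are hypothetical and work on lists of floats rather than tensors.

```python
import math

def cross_entropy(logits, target):
    """Mean of -log(softmax(z)[y]) over a batch of raw score rows z and labels y."""
    total = 0.0
    for z, y in zip(logits, target):
        m = max(z)  # subtract the max before exp() for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in z))
        total += log_sum_exp - z[y]
    return total / len(logits)

def sgd_momentum_step(params, grads, buffers, lr=0.01, momentum=0.5):
    """One in-place update of params; buffers hold the velocity (None before the first step)."""
    for i, g in enumerate(grads):
        buffers[i] = g if buffers[i] is None else momentum * buffers[i] + g
        params[i] -= lr * buffers[i]

# Two equal scores give the target probability 0.5, so the loss is log 2 (about 0.6931)
print(cross_entropy([[0.0, 0.0]], [1]))

params, buffers = [1.0], [None]
sgd_momentum_step(params, [2.0], buffers)  # v = 2.0,             p = 1.0 - 0.01 * 2.0
sgd_momentum_step(params, [2.0], buffers)  # v = 0.5 * 2.0 + 2.0, p = 0.98 - 0.01 * 3.0
print(params[0])
```

With a constant gradient, momentum makes successive steps grow (here from 0.02 to 0.03), which is how it speeds up progress along consistent directions.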

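```python
"""Training and testing code"""
def train(epoch):
    running_loss = 0.0
    for batch_index, data in enumerate(train_loader, 0):
        inputs, target = data
        # Put the data on the same device as the model
        inputs, target = inputs.to(device), target.to(device)

        optimizer.zero_grad()  # clear the gradients left over from the previous step
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        # loss.item() returns a plain Python number rather than a tensor,
        # so accumulating it does not keep the computation graph alive
        running_loss += loss.item()
        if batch_index % 300 == 299:
            # Every 300 batches, print the average loss per batch over those 300 batches
            print('[%d,%5d] loss: %.3f' % (epoch + 1, batch_index + 1, running_loss / 300))
            running_loss = 0.0
```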
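A quick way to see when `train()` actually prints is to replay its `batch_index % 300 == 299` condition without any data. The sketch assumes the values used in this program (60,000 training images, `batch_size = 64`, default `drop_last=False`); `report_points` is a hypothetical helper.

```python
import math

def report_points(num_samples=60000, batch_size=64, every=300):
    """1-based batch numbers at which train() prints, and how many final batches go unreported."""
    num_batches = math.ceil(num_samples / batch_size)
    points = [i + 1 for i in range(num_batches) if i % every == every - 1]
    unreported = num_batches - points[-1] if points else num_batches
    return points, unreported

points, unreported = report_points()
print(points, unreported)  # [300, 600, 900] 38
```

So each epoch prints three averages, and the losses of the last 38 batches are never shown, because `running_loss` is reset to 0.0 at the start of the next `train()` call.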

```python
def test():
    correct = 0
    total = 0
    with torch.no_grad():
        # No gradients are needed during testing
        for data in test_loader:
            images, labels = data
            images, labels = images.to(device), labels.to(device)
            outputs = model(images)
            # The predicted class is the index of the largest score in each row
            _, predicted = torch.max(outputs.data, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    accuracy = 100 * correct / total
    print('accuracy on test set: %.2f %%' % accuracy)
    return accuracy  # return the test accuracy (in percent)
```
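The accuracy computation in `test()` reduces to "take the index of the largest score in each row and compare it with the label". This plain-Python sketch does the same on a small hypothetical batch of three-class scores, using `max` with a key in place of `torch.max(..., dim=1)`.

```python
def accuracy(outputs, labels):
    """Percentage of rows whose largest score sits at the label's index."""
    correct = 0
    for row, label in zip(outputs, labels):
        predicted = max(range(len(row)), key=row.__getitem__)  # argmax of the row
        correct += int(predicted == label)
    return 100 * correct / len(labels)

# Hypothetical scores for 4 samples and 3 classes; predictions are [1, 0, 2, 2]
scores = [[0.1, 2.3, -1.0],
          [1.5, 0.2, 0.3],
          [0.0, 0.1, 0.4],
          [2.0, 1.0, 3.0]]
labels = [1, 0, 1, 2]
print('accuracy on test set: %.2f %%' % accuracy(scores, labels))  # accuracy on test set: 75.00 %
```

Taking the argmax of the raw logits gives the same prediction as taking it after softmax, since softmax preserves the order of the scores; that is why `test()` never computes probabilities.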

```python
# Run training
if __name__ == '__main__':
    score_best = 0
    for epoch in range(10):
        train(epoch)
        score = test()
        # Save the weights whenever the test accuracy improves
        if score > score_best:
            score_best = score
            torch.save(model.state_dict(), "model.pth")
```

Article source: https://www.toymoban.com/news/detail-419523.html
