- Paper: SA-Net: Shuffle Attention for Deep Convolutional Neural Networks

- Code: https://github.com/wofmanaf/SA-Net

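Attention mechanisms in current CNNs fall mainly into two kinds: channel attention and spatial attention. Some existing methods (GCNet, CBAM, etc.) integrate both, which tends to cause convergence difficulty and a heavy computation burden. ECANet and SGE propose some optimizations, but they do not fully exploit the relationship between the channel and spatial dimensions. The authors therefore ask: "Can one fuse different attention modules in a lighter but more efficient way?"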

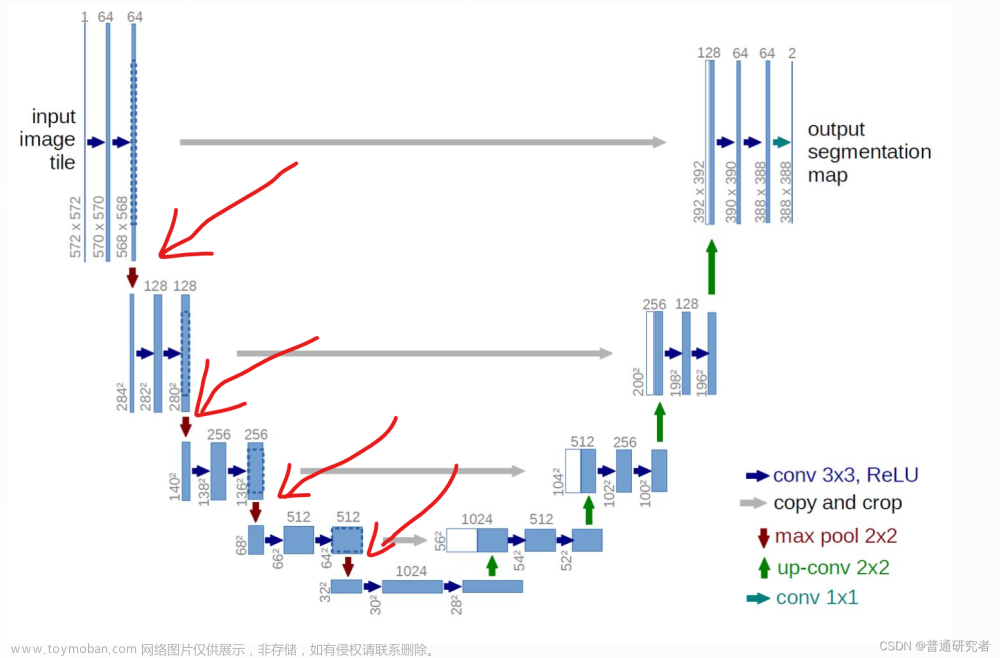

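To address this question, the authors propose shuffle attention; the overall framework is shown in the figure. The input features are first divided into $g$ groups, and each group is then split into two branches that compute channel attention and spatial attention respectively. Both attentions are computed with a fully connected layer followed by a sigmoid (in the code in step 2, the fully connected layer is a per-channel scale and shift). The outputs of the two branches are then concatenated and the groups are merged, giving a feature map with the same size as the input. Finally, a shuffle layer is applied.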
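The two branches can be written compactly as below. This is a sketch in notation assumed from the SA-Net paper: $X_{k1}$ and $X_{k2}$ are the two halves of the $k$-th group, $F_{gp}$ is global average pooling, $GN$ is group normalization, and $W_1, b_1, W_2, b_2$ are per-channel parameters (`cweight`, `cbias`, `sweight`, `sbias` in the code of step 2).

$$
\begin{aligned}
s &= F_{gp}(X_{k1}) = \frac{1}{H \times W} \sum_{i=1}^{H} \sum_{j=1}^{W} X_{k1}(i, j) \\
X'_{k1} &= \sigma(W_1 \cdot s + b_1) \cdot X_{k1} \\
X'_{k2} &= \sigma(W_2 \cdot GN(X_{k2}) + b_2) \cdot X_{k2}
\end{aligned}
$$

The output of group $k$ is the channel-wise concatenation of $X'_{k1}$ and $X'_{k2}$; since both gates are element-wise, each branch keeps the shape of its input.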

1. Add the ShuffleAttention.yaml file

    # Parameters
    nc: 80  # number of classes
    depth_multiple: 0.33  # model depth multiple
    width_multiple: 0.50  # layer channel multiple
    anchors:
      - [10,13, 16,30, 33,23]  # P3/8
      - [30,61, 62,45, 59,119]  # P4/16
      - [116,90, 156,198, 373,326]  # P5/32

    # YOLOv5 v6.0 backbone
    backbone:
      # [from, number, module, args]
      [[-1, 1, Conv, [64, 6, 2, 2]],  # 0-P1/2
       [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
       [-1, 3, C3, [128]],
       [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
       [-1, 6, C3, [256]],
       [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
       [-1, 9, C3, [512]],
       [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
       [-1, 3, C3, [1024]],
       [-1, 1, SPPF, [1024, 5]],  # 9
      ]

    # YOLOAir v6.0 head
    head:
      [[-1, 1, Conv, [512, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 6], 1, Concat, [1]],  # cat backbone P4
       [-1, 3, C3, [512, False]],  # 13

       [-1, 1, Conv, [256, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 4], 1, Concat, [1]],  # cat backbone P3
       [-1, 3, C3, [256, False]],  # 17 (P3/8-small)

       [-1, 1, Conv, [256, 3, 2]],
       [[-1, 14], 1, Concat, [1]],  # cat head P4
       [-1, 3, C3, [512, False]],  # 20 (P4/16-medium)

       [-1, 1, Conv, [512, 3, 2]],
       [[-1, 10], 1, Concat, [1]],  # cat head P5
       [-1, 3, C3, [1024, False]],  # 23 (P5/32-large)

       [-1, 1, ShuffleAttention, [1024]],  # 24 (modified: new attention layer)

       [[17, 20, 24], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
      ]
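Because the new layer is appended to the head, the P5 input of Detect moves from layer 23 to layer 24. One way to double-check the indices is to list the layers in order. This is a minimal sketch: the module names are copied from the YAML, while the plain-list layout is only an illustration and not how YOLOv5 parses the file.

```python
# Layer names in the order they appear in the YAML (index = layer number).
backbone = ["Conv", "Conv", "C3", "Conv", "C3", "Conv", "C3", "Conv", "C3", "SPPF"]
head = [
    "Conv", "Upsample", "Concat", "C3",
    "Conv", "Upsample", "Concat", "C3",
    "Conv", "Concat", "C3",
    "Conv", "Concat", "C3",
    "ShuffleAttention",
    "Detect",
]
layers = backbone + head

# The "from" field of the Detect layer in the YAML.
detect_inputs = [17, 20, 24]
detect_sources = [layers[i] for i in detect_inputs]

print(detect_sources)           # ['C3', 'C3', 'ShuffleAttention']
print(layers.index("Detect"))   # 25
```

If the attention layer were inserted elsewhere in the head, every `from` index that points past the insertion point would need the same kind of check.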

2. Configure common.py

Add the following module code to ./models/common.py:


    import numpy as np
    import torch
    from torch import nn
    from torch.nn import init
    from torch.nn.parameter import Parameter


    # https://arxiv.org/pdf/2102.00240.pdf
    class ShuffleAttention(nn.Module):

        def __init__(self, channel=512, reduction=16, G=8):
            # Note: reduction is accepted but not used by this module.
            super().__init__()
            self.G = G
            self.channel = channel
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.gn = nn.GroupNorm(channel // (2 * G), channel // (2 * G))
            # Per-channel scale and shift for the channel-attention branch
            self.cweight = Parameter(torch.zeros(1, channel // (2 * G), 1, 1))
            self.cbias = Parameter(torch.ones(1, channel // (2 * G), 1, 1))
            # Per-channel scale and shift for the spatial-attention branch
            self.sweight = Parameter(torch.zeros(1, channel // (2 * G), 1, 1))
            self.sbias = Parameter(torch.ones(1, channel // (2 * G), 1, 1))
            self.sigmoid = nn.Sigmoid()

        def init_weights(self):
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    init.kaiming_normal_(m.weight, mode='fan_out')
                    if m.bias is not None:
                        init.constant_(m.bias, 0)
                elif isinstance(m, nn.BatchNorm2d):
                    init.constant_(m.weight, 1)
                    init.constant_(m.bias, 0)
                elif isinstance(m, nn.Linear):
                    init.normal_(m.weight, std=0.001)
                    if m.bias is not None:
                        init.constant_(m.bias, 0)

        @staticmethod
        def channel_shuffle(x, groups):
            b, c, h, w = x.shape
            x = x.reshape(b, groups, -1, h, w)
            x = x.permute(0, 2, 1, 3, 4)

            # flatten
            x = x.reshape(b, -1, h, w)

            return x

        def forward(self, x):
            b, c, h, w = x.size()
            # group into subfeatures
            x = x.view(b * self.G, -1, h, w)  # bs*G, c//G, h, w

            # channel split
            x_0, x_1 = x.chunk(2, dim=1)  # bs*G, c//(2*G), h, w

            # channel attention
            x_channel = self.avg_pool(x_0)  # bs*G, c//(2*G), 1, 1
            x_channel = self.cweight * x_channel + self.cbias  # bs*G, c//(2*G), 1, 1
            x_channel = x_0 * self.sigmoid(x_channel)

            # spatial attention
            x_spatial = self.gn(x_1)  # bs*G, c//(2*G), h, w
            x_spatial = self.sweight * x_spatial + self.sbias  # bs*G, c//(2*G), h, w
            x_spatial = x_1 * self.sigmoid(x_spatial)  # bs*G, c//(2*G), h, w

            # concatenate along channel axis
            out = torch.cat([x_channel, x_spatial], dim=1)  # bs*G, c//G, h, w
            out = out.contiguous().view(b, -1, h, w)

            # channel shuffle
            out = self.channel_shuffle(out, 2)
            return out
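To see what the final `channel_shuffle(out, 2)` step does to the channel order, the reshape, permute and flatten sequence can be replayed on a plain list of channel indices. This is a minimal sketch without PyTorch; `shuffle_channel_order` is a hypothetical helper written for illustration and is not part of the module.

```python
def shuffle_channel_order(num_channels, groups):
    # Hypothetical helper: returns which original channel ends up at each
    # position after reshape(groups, -1) -> swap the two axes -> flatten.
    per_group = num_channels // groups
    grouped = [
        list(range(g * per_group, (g + 1) * per_group)) for g in range(groups)
    ]
    # Swapping the axes means taking the i-th channel of every group in turn.
    return [grouped[g][i] for i in range(per_group) for g in range(groups)]


order = shuffle_channel_order(8, 2)
print(order)  # [0, 4, 1, 5, 2, 6, 3, 7]
```

With two groups, channels from the first and second half of the tensor are interleaved, so information from different sub-features is mixed along the channel axis before the next layer.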

3. Configure yolo.py

In models/yolo.py, find the loop `for i, (f, n, m, args) in enumerate(d['backbone'] + d['head'])` in the parse_model() function (around line 258) and add the following code inside it, as an extra `elif` branch of the module-type checks.

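    elif m is ShuffleAttention:
        c1, c2 = ch[f], args[0]
        if c2 != no:
            c2 = make_divisible(c2 * gw, 8)
        # Build the module with the actual input channel count, not the raw YAML value
        args = [c1, *args[1:]]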
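The parameters of ShuffleAttention are sized from its `channel` argument, so the module has to be built with the width-scaled channel count rather than the raw value from the YAML. The arithmetic can be sketched as follows; `make_divisible` here is a local stand-in assumed to behave like YOLOv5's helper (round up to a multiple of the divisor), and `branch_channels` is a hypothetical name used only for this illustration.

```python
import math


def make_divisible(x, divisor):
    # Assumed to mirror YOLOv5's helper: round up to a multiple of divisor.
    return math.ceil(x / divisor) * divisor


def branch_channels(channel, G=8):
    # Channels per branch inside one group: channel // (2 * G).
    # This is also the length of cweight, cbias, sweight and sbias.
    return channel // (2 * G)


yaml_channels = 1024   # value written in ShuffleAttention.yaml
width_multiple = 0.50  # called gw inside parse_model()

scaled = make_divisible(yaml_channels * width_multiple, 8)
print(scaled)                          # 512
print(branch_channels(scaled))         # 32
print(branch_channels(yaml_channels))  # 64
```

With these settings the feature map reaching the layer has 512 channels, so each branch holds 32 of them; a module constructed with `channel=1024` would allocate 64-element parameters that cannot be broadcast against those 32 channels.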

4. Train the model

    python train.py --cfg ShuffleAttention.yaml
