吸取前人教训,写下此稿

笔者所用到的软件版本:

hadoop 2.7.3

hadoop-eclipse-plugin-2.7.3.jar

eclipse-java-2020-06-R-win32-x86_64

先从虚拟机下载hadoop 需要解压好和文件配置好的版本,方便后文配置伪分布式文件)

笔者linux的hadoop目录为:/usr/hadoop

下载到windows的某个目录,自行选择 笔者下载到的windows目录为D:\desktop\fsdownload\hadoop-2.7.3

下载eclipse和hadoop连接的插件 hadoop-eclipse-plugin-2.7.3.jar

(笔者hadoop版本为2.7.3,你使用的hadoop版本和插件版本需要对应)

链接:https://pan.baidu.com/s/1m5BCOa1vcNghG0TUklB7CA

提取码:e9mo

(此链接为hadoop-eclipse-plugin-2.7.3.jar)

将下载好的插件hadoop-eclipse-plugin-2.7.3.jar 复制到eclipse的plugins目录下

将winutils.exe文件复制到hadoop目录下的bin路径下

wintil.exe链接

链接:https://pan.baidu.com/s/1Ahzo8IyPhoxzvPSuT1nN7Q

提取码:bhip

配置hadoop环境变量

进入 设置--系统--关于--高级系统设置--环境变量

编辑path变量,增加内容为:%HADOOP_HOME%\bin

增加一个变量HADOOP_HOME

变量值为 hadoop的路径

开启Hadoop集群(此处省略。。自行开启)

开启集群和复制插件之后 开启eclipse,可以在eclipse左侧工作区看到DFS Locations

在windows菜单下选中perference

perence下选中hadoop map/reduce

设置hadoop的安装路径 前文有提到 笔者的安装路径为 D:\desktop\fsdownload\hadoop-2.7.3

建立与hadoop的连接 在eclipse下方工作区对Map/Reduce Locations 进行设置

右击 new hadoop location 进入设置

相关设置如图

笔者的core-site.xml(笔者路径为%HADOOP_HOME%/etc/hadoop/core-site.xml)文件中端口号为9000

设置完成之后可以在DFS Locations看到hdfs上的文件

新建项目hadoop1(项目名随意)

进入eclipse 点击左上角file--new--projects

输入项目名称

这样就创建好了一个mapreduce项目

执行MapReduce 项目前,需要将hadoop中有修改过的配置文件(如伪分布式需要core-site.xml 和 hdfs-site.xml),以及log4j.properties复制到项目下的 src文件夹中

(请忽略已经建好的包)

还需要将windows下的hosts文件修改 让ip和主机名映射

hosts文件路径为C:\Windows\System32\drivers\etc\hosts

而修改hosts文件需要管理员权限

可以先右击hosts文件 点击属性

将属性中的“只读”取消 确定并应用

然后通过管理员身份运行命令提示窗口

进入hosts文件目录上一级

输入notepad hosts 即可修改hosts文件

在最底下 输入相对应的映射关系即可

笔者为192.168.222.171 master746

在hadoop1项目中创建包和类

需要填写两个地方:在Package 处填写 org.apache.hadoop.examples;在Name 处填写wordcount。(包名、类名可以随意起)

此处笔者参考 http://t.csdn.cn/4WKHD

写入代码

package org.apache.hadoop.examples;

import java.io.IOException;

import java.util.Iterator;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class wordcount {

public wordcount() {

}

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

String[] otherArgs = (new GenericOptionsParser(conf, args)).getRemainingArgs();

if(otherArgs.length < 2) {

System.err.println("Usage: wordcount <in> [<in>...] <out>");

System.exit(2);

}

Job job = Job.getInstance(conf, "word count");

job.setJarByClass(wordcount.class);

job.setMapperClass(wordcount.TokenizerMapper.class);

job.setCombinerClass(wordcount.IntSumReducer.class);

job.setReducerClass(wordcount.IntSumReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

for(int i = 0; i < otherArgs.length - 1; ++i) {

FileInputFormat.addInputPath(job, new Path(otherArgs[i]));

}

FileOutputFormat.setOutputPath(job, new Path(otherArgs[otherArgs.length - 1]));

System.exit(job.waitForCompletion(true)?0:1);

}

public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

private IntWritable result = new IntWritable();

public IntSumReducer() {

}

public void reduce(Text key, Iterable<IntWritable> values, Reducer<Text, IntWritable, Text, IntWritable>.Context context) throws IOException, InterruptedException {

int sum = 0;

IntWritable val;

for(Iterator i$ = values.iterator(); i$.hasNext(); sum += val.get()) {

val = (IntWritable)i$.next();

}

this.result.set(sum);

context.write(key, this.result);

}

}

public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {

private static final IntWritable one = new IntWritable(1);

private Text word = new Text();

public TokenizerMapper() {

}

public void map(Object key, Text value, Mapper<Object, Text, Text, IntWritable>.Context context) throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while(itr.hasMoreTokens()) {

this.word.set(itr.nextToken());

context.write(this.word, one);

}

}

}

}

运行时右击空白处 点击 run as 然后点击 run configutations

前面写需要进行mapreduce计算的文件 必须原本就存在

后面写输出文件 必须原本不存在 不然均会报错

看到类似这样的运行结果就是成功啦

成功运行后 查看输出文件(若未及时出现 可以右击文件refresh)

做到这 eclipse和hadoop就算连接成啦

完结撒花~

跟着笔者的步骤可以避免笔者踩过的大部分坑

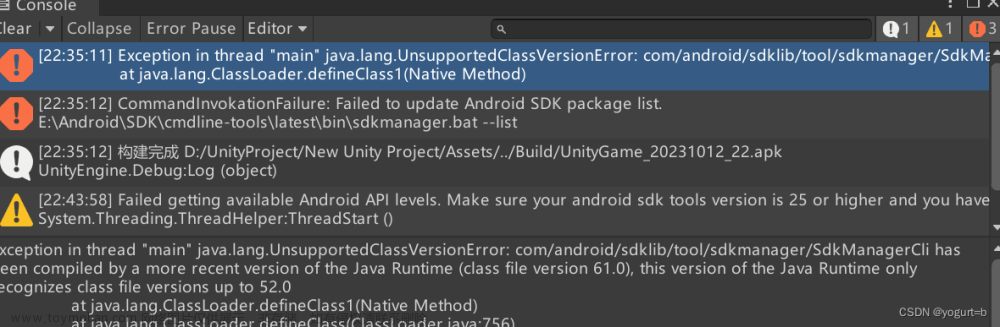

下面是我没有注明在文中的一些错误,欢迎大家一起来讨论

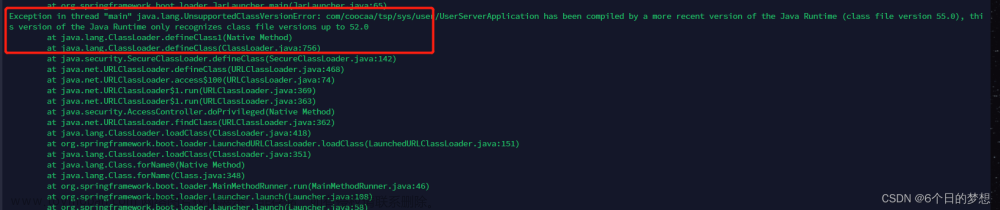

Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Wi

报错如下

Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Ljava/lang/String;I)Z

at org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Native Method)

at org.apache.hadoop.io.nativeio.NativeIO$Windows.access(NativeIO.java:609)

at org.apache.hadoop.fs.FileUtil.canRead(FileUtil.java:977)

at org.apache.hadoop.util.DiskChecker.checkAccessByFileMethods(DiskChecker.java:187)

at org.apache.hadoop.util.DiskChecker.checkDirAccess(DiskChecker.java:174)

at org.apache.hadoop.util.DiskChecker.checkDir(DiskChecker.java:108)

at org.apache.hadoop.fs.LocalDirAllocator$AllocatorPerContext.confChanged(LocalDirAllocator.java:285)

at org.apache.hadoop.fs.LocalDirAllocator$AllocatorPerContext.getLocalPathForWrite(LocalDirAllocator.java:344)

at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:150)

at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:131)

at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:115)

at org.apache.hadoop.mapred.LocalDistributedCacheManager.setup(LocalDistributedCacheManager.java:125)

at org.apache.hadoop.mapred.LocalJobRunner$Job.<init>(LocalJobRunner.java:163)

at org.apache.hadoop.mapred.LocalJobRunner.submitJob(LocalJobRunner.java:731)

at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:240)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1290)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1287)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657)

at org.apache.hadoop.mapreduce.Job.submit(Job.java:1287)

at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:1308)

at com.qs.WordcountDriver.main(WordcountDriver.java:44)

解决办法:

在创建的项目中 重新创建一个包,名为:org.apache.hadoop.io.nativeio

在包中创建一个类,名为NativeIO

内容为: 参考链接:http://t.csdn.cn/YxMOW

创建好在eclipse如下:

文章来源:https://www.toymoban.com/news/detail-432431.html

文章来源:https://www.toymoban.com/news/detail-432431.html

再运行,就可以解决了。文章来源地址https://www.toymoban.com/news/detail-432431.html

到了这里,关于eclipse和hadoop连接攻略(详细) Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Wi的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!