Server information

| Server IP | Role |
|---|---|
| 192.168.233.201 | master |
| 192.168.233.202 | worker |
| 192.168.233.203 | worker |

Preparation before installation

- Configure hostnames, passwordless SSH login, and hosts resolution

```bash
# Set the hostname
# Run on 192.168.233.201
hostnamectl set-hostname k8s-master
# Run on 192.168.233.202
hostnamectl set-hostname k8s-node1
# Run on 192.168.233.203
hostnamectl set-hostname k8s-node2

# Configure hosts resolution (run on all three machines):
# open /etc/hosts and add the following lines
vim /etc/hosts
192.168.233.201 k8s-master
192.168.233.202 k8s-node1
192.168.233.203 k8s-node2

# Configure passwordless SSH login (run on all three machines)
ssh-keygen
ssh-copy-id -i ~/.ssh/id_rsa.pub root@k8s-master
ssh-copy-id -i ~/.ssh/id_rsa.pub root@k8s-node1
ssh-copy-id -i ~/.ssh/id_rsa.pub root@k8s-node2
```
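Instead of editing `/etc/hosts` by hand with vim, the entries can also be appended idempotently, so running the step twice does not create duplicates. This is a minimal sketch, assuming the three IP/hostname pairs from the server table; the function name `add_cluster_hosts` is hypothetical, and the file path is a parameter so it can be tried on a scratch file first.

```bash
# Append the cluster's hosts entries, skipping any line that is already present.
# The IP/hostname pairs follow the server table; adjust them for your environment.
add_cluster_hosts() {
  local hosts_file="${1:-/etc/hosts}"
  local entry
  for entry in \
    "192.168.233.201 k8s-master" \
    "192.168.233.202 k8s-node1" \
    "192.168.233.203 k8s-node2"; do
    # -x: whole-line match, -F: fixed string (dots are not regex wildcards)
    grep -qxF "$entry" "$hosts_file" 2>/dev/null || echo "$entry" >> "$hosts_file"
  done
}

# Usage on each of the three machines (as root):
#   add_cluster_hosts /etc/hosts
```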

- Disable the firewall, SELinux, and swap; allow iptables to see bridged traffic; synchronize the system time

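```bash
# Run on all three machines

# Stop the firewall
systemctl stop firewalld.service
# Prevent the firewall from starting at boot
systemctl disable firewalld.service

# Set SELinux to permissive mode (effectively disabling it)
sudo setenforce 0
sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

# Turn off swap now, and comment out swap entries in /etc/fstab so it stays off after a reboot
swapoff -a
sed -ri 's/.*swap.*/#&/' /etc/fstab

# Allow iptables to see bridged traffic
cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

# Load br_netfilter now (modules-load.d only takes effect at boot), then apply the settings
sudo modprobe br_netfilter
sudo sysctl --system

# Synchronize the system time: install ntpdate
yum -y install ntpdate
# Sync the time
ntpdate -u pool.ntp.org
# After syncing, check with date that the time is correct
date
```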
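Before moving on, it can help to confirm that the settings above actually took effect. The sketch below is one possible check, not part of the original procedure; the helper names (`swap_is_off`, `sysctl_enabled`, `check_k8s_prereqs`) are hypothetical, and the file paths are parameters so the logic can be tested against sample files instead of the real `/proc` entries.

```bash
# Swap is off when /proc/swaps contains nothing but its header line.
swap_is_off() {
  local swaps_file="${1:-/proc/swaps}"
  [ "$(tail -n +2 "$swaps_file" | grep -c .)" -eq 0 ]
}

# A boolean kernel parameter is on when its /proc/sys file contains 1.
sysctl_enabled() {
  local proc_file="$1"
  [ -r "$proc_file" ] && [ "$(cat "$proc_file")" = "1" ]
}

# Print every prerequisite that is not met; return non-zero if any failed.
check_k8s_prereqs() {
  local ok=0
  swap_is_off || { echo "swap is still on"; ok=1; }
  sysctl_enabled /proc/sys/net/bridge/bridge-nf-call-iptables \
    || { echo "bridge-nf-call-iptables is not 1 (is br_netfilter loaded?)"; ok=1; }
  sysctl_enabled /proc/sys/net/bridge/bridge-nf-call-ip6tables \
    || { echo "bridge-nf-call-ip6tables is not 1"; ok=1; }
  # getenforce prints Enforcing, Permissive or Disabled
  if command -v getenforce >/dev/null 2>&1 && [ "$(getenforce)" = "Enforcing" ]; then
    echo "SELinux is still enforcing"; ok=1
  fi
  if command -v systemctl >/dev/null 2>&1 && systemctl is-active --quiet firewalld; then
    echo "firewalld is still running"; ok=1
  fi
  return "$ok"
}

# Usage on each machine:
#   check_k8s_prereqs && echo "prerequisites OK"
```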

- Reboot the servers

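Install Docker

```bash
# If the docker-ce yum repository is not configured yet, add it first (Aliyun mirror)
yum install -y yum-utils
yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce-20.10.8-3.el7
systemctl start docker
systemctl enable docker
```

Configure the Aliyun registry mirror (image accelerator) for Docker: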

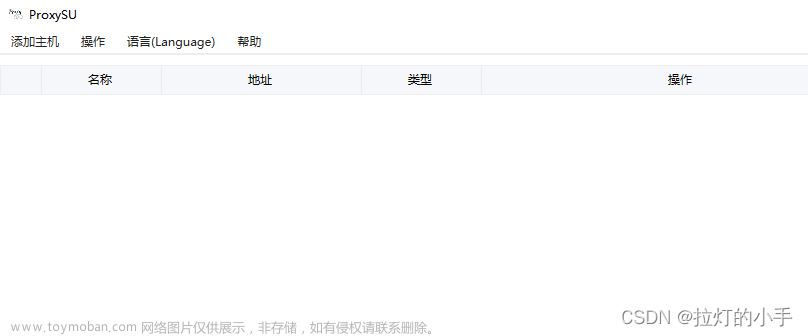
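The registry mirror is set through the `registry-mirrors` key in Docker's `daemon.json`. A minimal sketch, assuming you have copied your own accelerator address from the Aliyun Container Registry console: the URL in the usage line is a placeholder, not a real endpoint, and the helper name `write_docker_mirror_config` is hypothetical. Note that it overwrites any existing `daemon.json`.

```bash
# Write a daemon.json that contains only a registry mirror setting.
# Overwrites the target file; merge by hand if you already have other options in it.
write_docker_mirror_config() {
  local mirror_url="$1"
  local config_file="${2:-/etc/docker/daemon.json}"
  mkdir -p "$(dirname "$config_file")"
  cat > "$config_file" <<EOF
{
  "registry-mirrors": ["${mirror_url}"]
}
EOF
}

# Usage (as root, on every machine); replace the placeholder with your own address:
#   write_docker_mirror_config "https://<your-id>.mirror.aliyuncs.com"
#   systemctl daemon-reload && systemctl restart docker
#   docker info | grep -A1 "Registry Mirrors"
```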

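Install KubeSphere

```bash
# Run on all three servers: install required tools
yum install -y conntrack socat

# The following steps are run on one node only (here, the master)
# Download KubeKey and images from the China (CN) zone
export KKZONE=cn

# Download the installer
curl -sfL https://get-kk.kubesphere.io | VERSION=v3.0.2 sh -
chmod +x kk

# Generate the configuration file (config-sample.yaml)
./kk create config --with-kubernetes v1.23.10 --with-kubesphere v3.3.1

# Edit the IPs, timeouts, etcd type, and other settings in the config file, then install
./kk create cluster -f config-sample.yaml

# Follow the installation progress
kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-install -o jsonpath='{.items[0].metadata.name}') -f
```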
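Following the log with `-f` ties up a terminal for the whole installation. As an alternative, the log can be polled until it reports success. This sketch assumes that a successful ks-installer run prints the banner line `Welcome to KubeSphere!` near the end of its log, and reuses the `app=ks-install` label from the command above; the function names and the 30-second poll interval are hypothetical choices.

```bash
# Succeeds if the log text on stdin contains the installer's success banner.
installer_log_done() {
  grep -q "Welcome to KubeSphere!"
}

# Poll the ks-installer log until it reports success or the timeout (seconds) expires.
wait_for_kubesphere() {
  local timeout_s="${1:-3600}" interval_s=30 waited=0 pod
  while [ "$waited" -lt "$timeout_s" ]; do
    pod=$(kubectl get pod -n kubesphere-system -l app=ks-install \
      -o jsonpath='{.items[0].metadata.name}' 2>/dev/null)
    if [ -n "$pod" ] && kubectl logs -n kubesphere-system "$pod" 2>/dev/null | installer_log_done; then
      echo "KubeSphere installation finished"
      return 0
    fi
    sleep "$interval_s"
    waited=$((waited + interval_s))
  done
  echo "timed out after ${timeout_s}s" >&2
  return 1
}

# Usage on the master:
#   wait_for_kubesphere 5400
```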

Example config-sample.yaml:

```yaml
apiVersion: kubekey.kubesphere.io/v1alpha2
kind: Cluster
metadata:
  name: sample
spec:
  hosts:
  ## timeout is the timeout used during installation; increase it if the network is slow
  - {name: master, address: 192.168.233.201, internalAddress: 192.168.233.201, user: root, password: "root", timeout: 1200}
  - {name: node1, address: 192.168.233.202, internalAddress: 192.168.233.202, user: root, password: "root", timeout: 1200}
  - {name: node2, address: 192.168.233.203, internalAddress: 192.168.233.203, user: root, password: "root", timeout: 1200}
  roleGroups:
    etcd:
    - master
    master:
    - master
    control-plane:
    - master
    worker:
    - node1
    - node2
  controlPlaneEndpoint:
    ## Internal loadbalancer for apiservers
    # internalLoadbalancer: haproxy
    domain: lb.kubesphere.local
    address: ""
    port: 6443
  kubernetes:
    version: v1.23.10
    clusterName: cluster.local
    autoRenewCerts: true
    containerManager: docker
  ## The etcd type must be changed to kubeadm (newer versions default to kubekey)
  etcd:
    type: kubeadm
  network:
    plugin: calico
    kubePodsCIDR: 10.233.64.0/18
    kubeServiceCIDR: 10.233.0.0/18
    ## multus support. https://github.com/k8snetworkplumbingwg/multus-cni
    multusCNI:
      enabled: false
  registry:
    privateRegistry: ""
    namespaceOverride: ""
    registryMirrors: []
    insecureRegistries: []
  addons: []

---
apiVersion: installer.kubesphere.io/v1alpha1
kind: ClusterConfiguration
metadata:
  name: ks-installer
  namespace: kubesphere-system
  labels:
    version: v3.3.1
spec:
  persistence:
    storageClass: ""
  authentication:
    jwtSecret: ""
  zone: ""
  local_registry: ""
  namespace_override: ""
  # dev_tag: ""
  etcd:
    monitoring: false
    endpointIps: localhost
    port: 2379
    tlsEnable: true
  common:
    core:
      console:
        enableMultiLogin: true
        port: 30880
        type: NodePort
    # apiserver:
    #   resources: {}
    # controllerManager:
    #   resources: {}
    redis:
      enabled: false
      volumeSize: 2Gi
    openldap:
      enabled: false
      volumeSize: 2Gi
    minio:
      volumeSize: 20Gi
    monitoring:
      # type: external
      endpoint: http://prometheus-operated.kubesphere-monitoring-system.svc:9090
      GPUMonitoring:
        enabled: false
    gpu:
      kinds:
      - resourceName: "nvidia.com/gpu"
        resourceType: "GPU"
        default: true
    es:
      # master:
      #   volumeSize: 4Gi
      #   replicas: 1
      #   resources: {}
      # data:
      #   volumeSize: 20Gi
      #   replicas: 1
      #   resources: {}
      logMaxAge: 7
      elkPrefix: logstash
      basicAuth:
        enabled: false
        username: ""
        password: ""
      externalElasticsearchHost: ""
      externalElasticsearchPort: ""
  alerting:
    enabled: false
    # thanosruler:
    #   replicas: 1
    #   resources: {}
  auditing:
    enabled: false
    # operator:
    #   resources: {}
    # webhook:
    #   resources: {}
  devops:
    enabled: false
    # resources: {}
    jenkinsMemoryLim: 8Gi
    jenkinsMemoryReq: 4Gi
    jenkinsVolumeSize: 8Gi
  events:
    enabled: false
    # operator:
    #   resources: {}
    # exporter:
    #   resources: {}
    # ruler:
    #   enabled: true
    #   replicas: 2
    #   resources: {}
  logging:
    enabled: false
    logsidecar:
      enabled: true
      replicas: 2
      # resources: {}
  metrics_server:
    enabled: false
  monitoring:
    storageClass: ""
    node_exporter:
      port: 9100
      # resources: {}
    # kube_rbac_proxy:
    #   resources: {}
    # kube_state_metrics:
    #   resources: {}
    # prometheus:
    #   replicas: 1
    #   volumeSize: 20Gi
    #   resources: {}
    #   operator:
    #     resources: {}
    # alertmanager:
    #   replicas: 1
    #   resources: {}
    # notification_manager:
    #   resources: {}
    #   operator:
    #     resources: {}
    #   proxy:
    #     resources: {}
    gpu:
      nvidia_dcgm_exporter:
        enabled: false
        # resources: {}
  multicluster:
    clusterRole: none
  network:
    networkpolicy:
      enabled: false
    ippool:
      type: none
    topology:
      type: none
  openpitrix:
    store:
      enabled: false
  servicemesh:
    enabled: false
    istio:
      components:
        ingressGateways:
        - name: istio-ingressgateway
          enabled: false
        cni:
          enabled: false
  edgeruntime:
    enabled: false
    kubeedge:
      enabled: false
      cloudCore:
        cloudHub:
          advertiseAddress:
            - ""
        service:
          cloudhubNodePort: "30000"
          cloudhubQuicNodePort: "30001"
          cloudhubHttpsNodePort: "30002"
          cloudstreamNodePort: "30003"
          tunnelNodePort: "30004"
        # resources: {}
        # hostNetWork: false
      iptables-manager:
        enabled: true
        mode: "external"
        # resources: {}
      # edgeService:
      #   resources: {}
  terminal:
    timeout: 600
```
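The `hosts:` entries are YAML flow mappings, where each key must be followed by a colon and a space: written as `timeout:1200`, the text is likely read as a single key `timeout:1200` with no value, so the timeout would not be applied. If you have many nodes, generating these lines avoids that kind of typo. A small sketch; the helper name `gen_kk_hosts` and its "name ip" input format are hypothetical, and the shared user, password, and timeout default to the values used in this example.

```bash
# Read "<name> <ip>" lines on stdin and print KubeKey hosts entries.
# Arguments: user, password, timeout (shared by all nodes).
gen_kk_hosts() {
  local user="${1:-root}" password="${2:-root}" timeout="${3:-1200}"
  local name ip
  while read -r name ip; do
    [ -z "$name" ] && continue   # skip blank lines
    printf '  - {name: %s, address: %s, internalAddress: %s, user: %s, password: "%s", timeout: %s}\n' \
      "$name" "$ip" "$ip" "$user" "$password" "$timeout"
  done
}

# Usage:
#   printf 'master 192.168.233.201\nnode1 192.168.233.202\nnode2 192.168.233.203\n' | gen_kk_hosts
```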

If an error occurs during installation, uninstall and then reinstall:

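```bash
# Delete the cluster (pass the same config file that was used to create it)
./kk delete cluster -f config-sample.yaml

# If containers were already created in Docker and cannot be removed, kill kubelet and delete its binary
ps -ef | grep kubelet
kill -9 <PID>    # replace <PID> with the kubelet process ID shown by the previous command
rm -rf /usr/bin/kubelet    # adjust the path if kubelet lives elsewhere (check with: which kubelet)
```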
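`ps -ef | grep kubelet` also lists the `grep` process itself, and the kubelet PID is different on every machine, so it should not be copied from an example. The helper below (hypothetical name `kubelet_pids`) reads `ps -ef` output on stdin and prints only the PIDs whose command, column 8 of `ps -ef`, is a `kubelet` binary. If kubelet runs as a systemd service, as it usually does, `systemctl stop kubelet` is worth trying first, since a service configured to restart would otherwise just be relaunched after `kill -9`.

```bash
# Print the PIDs of kubelet processes from `ps -ef` output on stdin.
# Column 2 is the PID, column 8 is the command; the grep line itself is excluded.
kubelet_pids() {
  awk '$8 == "kubelet" || $8 ~ /\/kubelet$/ { print $2 }'
}

# Usage (as root):
#   ps -ef | kubelet_pids | xargs -r kill -9
```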

After installing KubeSphere, you also need to set up NFS storage:

```bash
# Install on all machines
yum install -y nfs-utils
mkdir -p /nfs/data

# Run on the master
echo "/nfs/data/ *(insecure,rw,sync,no_root_squash)" > /etc/exports
systemctl enable rpcbind
systemctl enable nfs-server
systemctl start rpcbind
systemctl start nfs-server
# Apply the export configuration
exportfs -r
# Check that the export is active
exportfs

# Run on the worker nodes
showmount -e k8s-master
mount -t nfs k8s-master:/nfs/data /nfs/data
```
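A mount made with the `mount` command lasts only until the next reboot. The provisioner Deployment mounts the share by itself, so keeping the share mounted on the worker nodes is optional; if you do want it, an `/etc/fstab` entry remounts it at boot, and the `_netdev` option delays mounting until the network is up. A minimal sketch with a hypothetical helper name, assuming the `k8s-master:/nfs/data` export used above:

```bash
# Add the NFS share to fstab once, so it is mounted again after a reboot.
add_nfs_fstab_entry() {
  local fstab="${1:-/etc/fstab}"
  local entry="k8s-master:/nfs/data /nfs/data nfs defaults,_netdev 0 0"
  grep -qxF "$entry" "$fstab" 2>/dev/null || echo "$entry" >> "$fstab"
}

# Usage on each worker node (as root):
#   add_nfs_fstab_entry && mount -a
```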

Finally, apply the YAML file on the k8s-master node:

```bash
kubectl apply -f nfs.yaml
```

Example nfs.yaml (article source: https://www.toymoban.com/news/detail-435395.html):

```yaml
## Create a StorageClass
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
parameters:
  archiveOnDelete: "true"  ## whether to back up (archive) the PV's contents when the PV is deleted
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/nfs-subdir-external-provisioner:v4.0.2
          # resources:
          #   limits:
          #     cpu: 10m
          #   requests:
          #     cpu: 10m
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.233.201  ## your NFS server address (the master node here)
            - name: NFS_PATH
              value: /nfs/data  ## directory shared by the NFS server
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.233.201
            path: /nfs/data
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
```

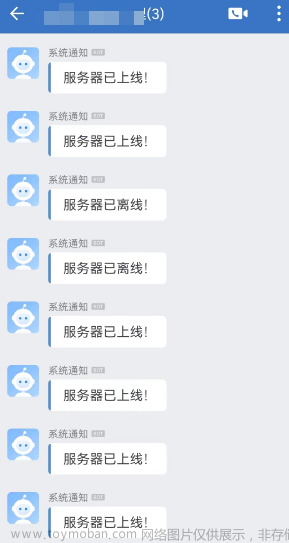
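A quick way to confirm that dynamic provisioning works is to create a small PersistentVolumeClaim against the `nfs-storage` class and check that it becomes `Bound`. The helper name `nfs_test_pvc`, the default claim name `nfs-test-pvc`, and the 1Mi size are hypothetical choices for this sketch; the function only prints the manifest, so it can be piped into `kubectl`.

```bash
# Print a PersistentVolumeClaim that requests storage from the nfs-storage class.
# Arguments: claim name, requested size.
nfs_test_pvc() {
  local name="${1:-nfs-test-pvc}" size="${2:-1Mi}"
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${name}
spec:
  storageClassName: nfs-storage
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: ${size}
EOF
}

# Usage on k8s-master:
#   nfs_test_pvc | kubectl apply -f -
#   kubectl get pvc nfs-test-pvc     # STATUS should change to Bound
#   ls /nfs/data                     # a subdirectory for the new volume should appear
#   nfs_test_pvc | kubectl delete -f -
```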

Common issues

Login fails with an api-server error

request to http://ks-apiserver/oauth/token failed, reason: getaddrinfo EAI_AGAIN ks-apiserver

Solution:

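- Edit the CoreDNS configuration:

```bash
kubectl -n kube-system edit cm coredns -o yaml
# This opens the ConfigMap in an editor; comment out the following lines:
#   forward . /etc/resolv.conf {
#      max_concurrent 1000
#   }
# (Without this block, CoreDNS no longer forwards non-cluster names to the upstream DNS servers in /etc/resolv.conf.)
```

- Delete the two CoreDNS pods so that they are recreated with the new configuration:

```bash
# Look up the pod names
kubectl get pods -n kube-system | grep coredns
# Delete the pods (replace the names with those returned by the previous command)
kubectl delete pod coredns-b5648d655-lm2qf -n kube-system
kubectl delete pod coredns-b5648d655-lxgxm -n kube-system
```

- Wait a moment, then log in again.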
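The CoreDNS pod names (such as `coredns-b5648d655-lm2qf`) differ from cluster to cluster. The sketch below, with the hypothetical helper name `coredns_pods`, reads `kubectl get pods -n kube-system` output on stdin and prints only the CoreDNS pod names, so they can be deleted without copying names by hand. Alternatively, assuming the Deployment is named `coredns`, `kubectl -n kube-system rollout restart deployment coredns` restarts the pods in one step.

```bash
# Print the names of coredns pods from `kubectl get pods` output on stdin.
# NR > 1 skips the header row; column 1 is the pod name.
coredns_pods() {
  awk 'NR > 1 && $1 ~ /^coredns-/ { print $1 }'
}

# Usage on k8s-master:
#   kubectl get pods -n kube-system | coredns_pods | xargs -r kubectl delete pod -n kube-system
```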
