2023.5更新

最近帮别人做了个手眼标定,然后我标定完了大概精度能到1mm左右。所以原文中误差10mm可能是当时那个臂本身的坐标系有问题。然后用的代码改成了基于python的,放在下面。

新来的小伙伴可以只参考前面的代码就可以完成标定了。

有问题的话可以留言,一起交流~

手眼标定需要的内容:

1.不同姿态下拍摄棋盘格的照片若干张(我用了10张)

2.记录下机械臂的姿态格式为(x,y,z,rx,ry,rz)其中姿态为rpy角,单位为deg, 需要保存成excel文件

代码中需要修改的内容:

1.相机内参矩阵self.K 以及畸变参数

2.棋盘格尺寸

3.存放img和excel的路径

import os

import cv2

import xlrd2

from math import *

import numpy as np

# p[321.501 242.543] f[606.25 605.65]

class Calibration:

def __init__(self):

self.K = np.array([[606.25, 0.00000000e+00, 321.501],

[0.00000000e+00, 605.65, 242.543],

[0.00000000e+00, 0.00000000e+00, 1.00000000e+00]], dtype=np.float64)

self.distortion = np.array([[0, 0, 0.0, 0.0, 0]])

self.target_x_number = 11

self.target_y_number = 8

self.target_cell_size = 20

def angle2rotation(self, x, y, z):

Rx = np.array([[1, 0, 0], [0, cos(x), -sin(x)], [0, sin(x), cos(x)]])

Ry = np.array([[cos(y), 0, sin(y)], [0, 1, 0], [-sin(y), 0, cos(y)]])

Rz = np.array([[cos(z), -sin(z), 0], [sin(z), cos(z), 0], [0, 0, 1]])

R = Rz @ Ry @ Rx

return R

def gripper2base(self, x, y, z, tx, ty, tz):

thetaX = x / 180 * pi

thetaY = y / 180 * pi

thetaZ = z / 180 * pi

R_gripper2base = self.angle2rotation(thetaX, thetaY, thetaZ)

T_gripper2base = np.array([[tx], [ty], [tz]])

Matrix_gripper2base = np.column_stack([R_gripper2base, T_gripper2base])

Matrix_gripper2base = np.row_stack((Matrix_gripper2base, np.array([0, 0, 0, 1])))

R_gripper2base = Matrix_gripper2base[:3, :3]

T_gripper2base = Matrix_gripper2base[:3, 3].reshape((3, 1))

return R_gripper2base, T_gripper2base

def target2camera(self, img):

gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

ret, corners = cv2.findChessboardCorners(gray, (self.target_x_number, self.target_y_number), None)

corner_points = np.zeros((2, corners.shape[0]), dtype=np.float64)

for i in range(corners.shape[0]):

corner_points[:, i] = corners[i, 0, :]

object_points = np.zeros((3, self.target_x_number * self.target_y_number), dtype=np.float64)

count = 0

for i in range(self.target_y_number):

for j in range(self.target_x_number):

object_points[:2, count] = np.array(

[(self.target_x_number - j - 1) * self.target_cell_size,

(self.target_y_number - i - 1) * self.target_cell_size])

count += 1

retval, rvec, tvec = cv2.solvePnP(object_points.T, corner_points.T, self.K, distCoeffs=self.distortion)

Matrix_target2camera = np.column_stack(((cv2.Rodrigues(rvec))[0], tvec))

Matrix_target2camera = np.row_stack((Matrix_target2camera, np.array([0, 0, 0, 1])))

R_target2camera = Matrix_target2camera[:3, :3]

T_target2camera = Matrix_target2camera[:3, 3].reshape((3, 1))

return R_target2camera, T_target2camera

def process(self, img_path, pose_path):

image_list = []

for root, dirs, files in os.walk(img_path):

if files:

for file in files:

image_name = os.path.join(root, file)

image_list.append(image_name)

R_target2camera_list = []

T_target2camera_list = []

for img_path in image_list:

img = cv2.imread(img_path)

R_target2camera, T_target2camera = self.target2camera(img)

R_target2camera_list.append(R_target2camera)

T_target2camera_list.append(T_target2camera)

R_gripper2base_list = []

T_gripper2base_list = []

data = xlrd2.open_workbook(pose_path)

table = data.sheets()[0]

for row in range(table.nrows):

x = table.cell_value(row, 3)

y = table.cell_value(row, 4)

z = table.cell_value(row, 5)

tx = table.cell_value(row, 0)

ty = table.cell_value(row, 1)

tz = table.cell_value(row, 2)

R_gripper2base, T_gripper2base = self.gripper2base(x, y, z, tx, ty, tz)

R_gripper2base_list.append(R_gripper2base)

T_gripper2base_list.append(T_gripper2base)

R_camera2base, T_camera2base = cv2.calibrateHandEye(R_gripper2base_list, T_gripper2base_list,

R_target2camera_list, T_target2camera_list)

return R_camera2base, T_camera2base, R_gripper2base_list, T_gripper2base_list, R_target2camera_list, T_target2camera_list

def check_result(self, R_cb, T_cb, R_gb, T_gb, R_tc, T_tc):

for i in range(len(R_gb)):

RT_gripper2base = np.column_stack((R_gb[i], T_gb[i]))

RT_gripper2base = np.row_stack((RT_gripper2base, np.array([0, 0, 0, 1])))

# print(RT_gripper2base)

RT_camera_to_gripper = np.column_stack((R_cb, T_cb))

RT_camera_to_gripper = np.row_stack((RT_camera_to_gripper, np.array([0, 0, 0, 1])))

print(RT_camera_to_gripper)#这个就是手眼矩阵

RT_target_to_camera = np.column_stack((R_tc[i], T_tc[i]))

RT_target_to_camera = np.row_stack((RT_target_to_camera, np.array([0, 0, 0, 1])))

# print(RT_target_to_camera)

RT_target_to_base = RT_gripper2base @ RT_camera_to_gripper @ RT_target_to_camera

print("第{}次验证结果为:".format(i))

print(RT_target_to_base)

print('')

if __name__ == "__main__":

image_path = r"I:\data_handeye\img"

pose_path = r"I:\pose.xlsx"

calibrator = Calibration()

R_cb, T_cb, R_gb, T_gb, R_tc, T_tc = calibrator.process(image_path, pose_path)

calibrator.check_result(R_cb, T_cb, R_gb, T_gb, R_tc, T_tc)

结果展示:

下面三个矩阵代表的是在不同姿态下解算得到标定板在基坐标系下的姿态,最后一列是位置,单位为mm,可以看出精度差不多在1mm左右。

第1次验证结果为:

[[ 3.98925017e-03 9.99742174e-01 -2.23533483e-02 -3.90700113e+02]

[ 9.99982733e-01 -4.08467234e-03 -4.22477708e-03 -1.11656116e+02]

[-4.31499393e-03 -2.23361086e-02 -9.99741206e-01 -5.01618116e+00]

[ 0.00000000e+00 0.00000000e+00 0.00000000e+00 1.00000000e+00]]

第2次验证结果为:

[[ 2.05751209e-03 9.99866123e-01 -1.62327447e-02 -3.90254641e+02]

[ 9.99993197e-01 -2.10692535e-03 -3.02753423e-03 -1.13056000e+02]

[-3.06133010e-03 -1.62264051e-02 -9.99863657e-01 -6.26002256e+00]

[ 0.00000000e+00 0.00000000e+00 0.00000000e+00 1.00000000e+00]]

第3次验证结果为:

[[ 4.87875821e-03 9.99819129e-01 -1.83822511e-02 -3.91281959e+02]

[ 9.99986520e-01 -4.91059054e-03 -1.68694875e-03 -1.11955299e+02]

[-1.77691133e-03 -1.83737731e-02 -9.99829609e-01 -6.29677977e+00]

[ 0.00000000e+00 0.00000000e+00 0.00000000e+00 1.00000000e+00]]

------------------下面为原文---------------------

一、前期准备

1.1 手眼标定原理

手眼标定的原理网上有很多,推荐几篇博客:

手眼标定_全面细致的推导过程

机器人手眼标定

深入浅出地理解机器人手眼标定

简单来说需要求解一个AX=XB的矩阵方程,具体求解方法可以看

手眼标定AX=XB求解方法(文献总结)

1.2 Aruco返回位姿的原理

ArUco标记最初由S.Garrido-Jurado等人在2014年发表的论文Automatic generation and detection of highly reliable fiducial markers under occlusion中提出。ArUco的全称是Augmented Reality University of Cordoba。Aruco是一种类似二维码的定位标记辅助工具,通过在环境中部署Markers,可以辅助机器人进行定位。

OpenCV Aruco 参数源码完整解析理解!

ArUco Marker检测原理

1.3 生成一个Aruco Marker

在线aruco生成网站,注意要选择original格式,打印出来之后备用。

1.4 安装aruco_ros包

cd ~/catkin_ws/src

git clone https://github.com/pal-robotics/aruco_ros.git

cd ..

catkin_make

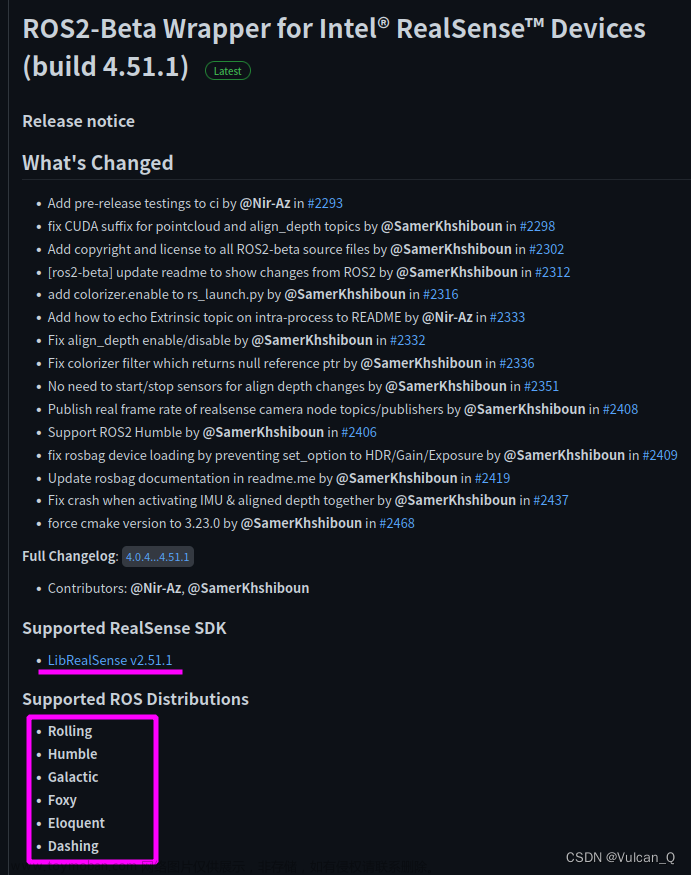

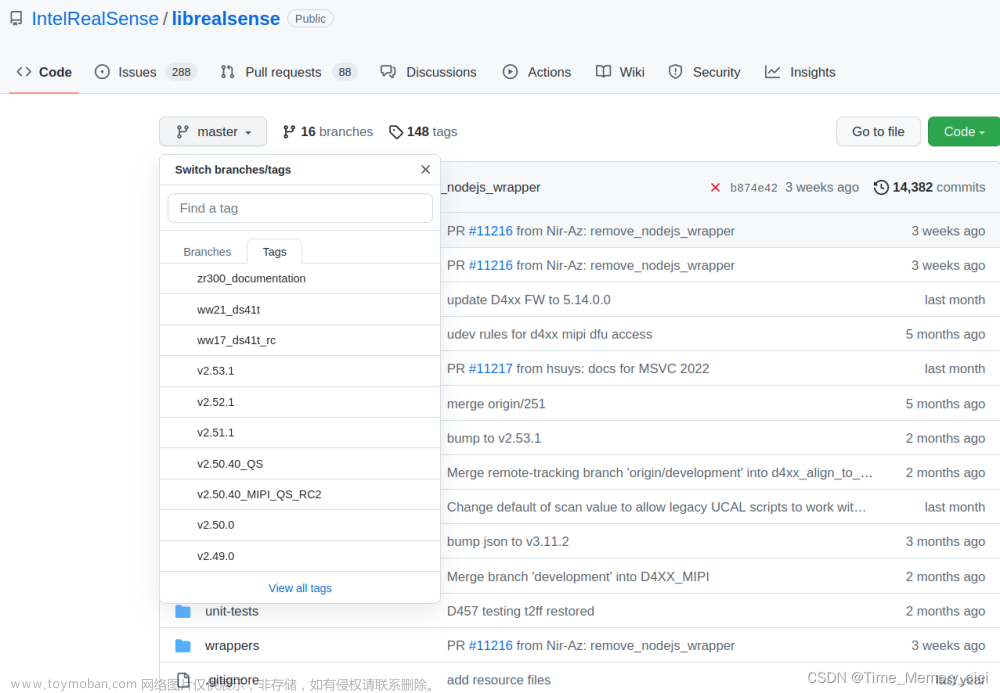

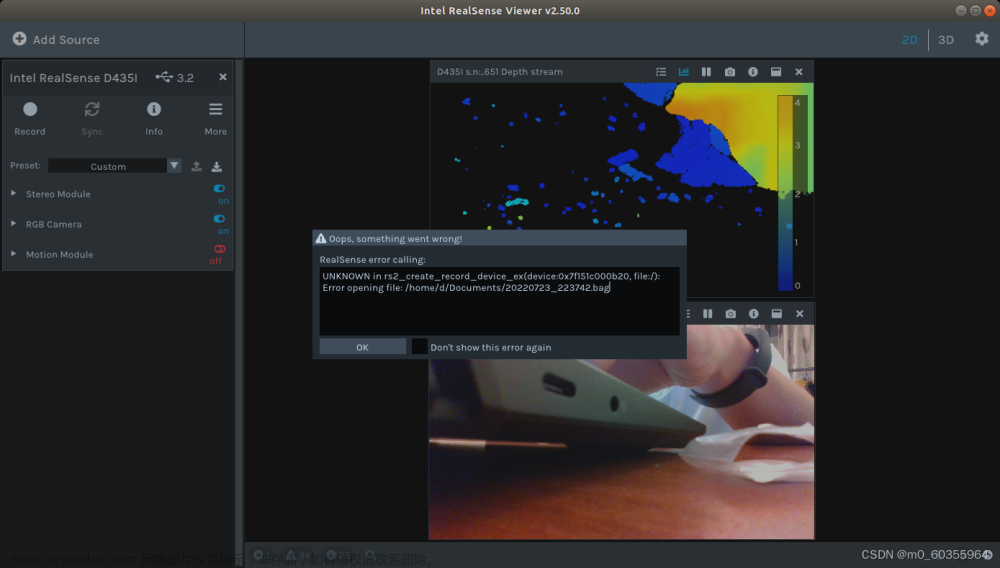

1.5 安装realsense_ros包

按照GitHub上的步骤安装即可。

https://github.com/IntelRealSense/realsense-ros

二、实验环境

- 机械臂:rokae xMate3 pro

- 相机:realsense d435i

- Aruco id:123 size:100mm

- ubuntu 16.04 ROS+ win 10 RobotAssist

- 安装方式:eye in hand

三、实验过程

3.1 配置Aruco launch文件

roscd aruco_ros

cd launch

gedit single.launch

修改single.launch文件,需要修改markerId markerSize为你生成的aruco的id和size,以及/camera_info /image指向realsense发布的话题,camera_frame改为/camera_link。

<launch>

<arg name="markerId" default="123"/>

<arg name="markerSize" default="0.1"/> <!-- in m -->

<arg name="eye" default="left"/>

<arg name="marker_frame" default="aruco_marker_frame"/>

<arg name="ref_frame" default=""/> <!-- leave empty and the pose will be published wrt param parent_name -->

<arg name="corner_refinement" default="LINES" /> <!-- NONE, HARRIS, LINES, SUBPIX -->

<node pkg="aruco_ros" type="single" name="aruco_single">

<remap from="/camera_info" to="/camera/color/camera_info" />

<remap from="/image" to="/camera/color/image_raw" />

<param name="image_is_rectified" value="True"/>

<param name="marker_size" value="$(arg markerSize)"/>

<param name="marker_id" value="$(arg markerId)"/>

<param name="reference_frame" value="$(arg ref_frame)"/>

<param name="camera_frame" value="/camera_link"/>

<param name="marker_frame" value="$(arg marker_frame)" />

<param name="corner_refinement" value="$(arg corner_refinement)" />

</node>

</launch>

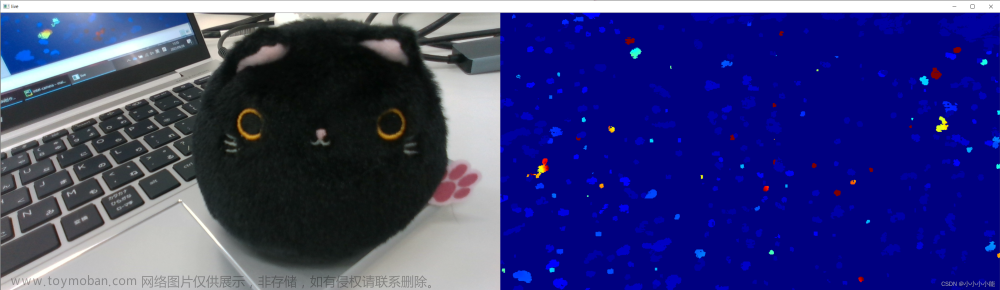

3.2 获取Aruco相对于相机的位姿

运行aruco_ros+realsense节点

roslaunch realsense2_camera rs_camera.launch

roslaunch aruco_ros single.launch

查看显示的图像和返回的位姿

rosrun image_view image_view image:=/aruco_single/result

rostopic echo /aruco_single/pose

3.3 获取机械臂末端的位姿:

使用Rokae自带的上位机HMI软件Robot Assist,可以简单读取。

其中ABC为“ZYX”型欧拉角,Q1~Q4为(w,x,y,z)四元数。

3.4 Opencv求解手眼矩阵

代码参考手眼标定(一):Opencv4实现手眼标定及手眼系统测试 ,加了点修改。

aruco返回的位姿为四元数形式,因此将attitudeVectorToMatrix函数的输入改成了 位置+欧拉角–>旋转矩阵(输入为nx6),位置+四元数—>旋转矩阵(输入为nx7)。

#include <iostream>

#include <string>

#include <opencv2/opencv.hpp>

#include <opencv2/core.hpp>

#include <opencv2/imgproc.hpp>

#include <opencv2/calib3d.hpp>

#include <iterator>

#include <algorithm>

using namespace cv;

using namespace std;

template <typename T>

std::ostream& operator<< (std::ostream& out, const std::vector<T>& v) {

if (!v.empty()) {

out << '[';

std::copy(v.begin(), v.end(), std::ostream_iterator<T>(out, ", "));

out << "\b\b]";

}

return out;

}

int num = 16;

//相机中组标定板的位姿,x,y,z,w,x,y,z

Mat_<double> CalPose = (cv::Mat_<double>(num, 7) <<

0.01963879, -0.009811901, 0.205815151, -0.472094888, 0.101775888, -0.345924179, 0.804428087,

0.018775674, -0.008453256, 0.187205523, -0.486293263, 0.154739671, -0.358078652, 0.781891409,

0.012794545, -0.004475131, 0.181938171, 0.50586501, -0.228167491, 0.37478512, -0.742681831,

0.032269519, -0.00216203, 0.173868418, 0.520070883, -0.334713601, 0.409799027, -0.670490746,

0.036921501, -0.00520581, 0.173751414, 0.509143837, -0.389764156, 0.430712138, -0.635093308,

0.028183416, -0.003799309, 0.157465994, -0.493999273, 0.434277098, -0.443106472, 0.609118031,

0.011437201, 0.00248707, 0.160097286, 0.505064681, -0.477579273, 0.433387075, -0.573588135,

0.032602217, 0.021788951, 0.224987134, -0.45692465, 0.54759083, -0.46897897, 0.520982603,

0.012125032, -0.002286719, 0.17284067, -0.469627705, 0.49778925, -0.506302662, 0.524703054,

0.005448693, -0.004084263, 0.196215734, 0.420676917, -0.55355367, 0.57085096, -0.43673613,

-0.001541587, -0.000362108, 0.187365457, 0.386895866, -0.565030889, 0.607491241, -0.402499782,

0.0283868, 0.00129835, 0.21059823, 0.367601287, -0.614671423, 0.616489404, -0.327091961,

0.026122695, -0.006088101, 0.222446054, -0.360286232, 0.636446394, -0.615347513, 0.294070155,

0.016605999, 0.002255499, 0.214847937, -0.322620688, 0.64815307, -0.642728715, 0.250426214,

-0.004833174, -0.00322185, 0.206448808, -0.318973207, 0.693907003, -0.618815043, 0.183894282,

-0.023912154, -0.006630629, 0.213570014, -0.312473203, 0.731833827, -0.595186057, 0.111952241);

//机械臂末端的位姿,x,y,z,w,x,y,z

Mat_<double> ToolPose = (cv::Mat_<double>(num, 7) <<

0.508533, -0.260803, 0.292825, -0.0002, 0.2843, 0.8712, 0.4003,

0.527713, -0.230407, 0.300856, 0.0012, 0.2461, 0.8979, 0.365,

0.544016, -0.192872, 0.326135, 0.0075, 0.1894, 0.9311, 0.3117,

0.552381, -0.149794, 0.342366, 0.0208, 0.113, 0.9692, 0.2178,

0.564071, -0.118316, 0.356974, 0.0196, 0.0855, 0.9829, 0.1617,

0.574263, -0.073508, 0.354265, 0.0123, 0.0659, 0.991, 0.1162,

0.573574, -0.038738, 0.363898, 0.01, 0.0166, 0.9961, 0.0866,

0.563025, -0.000004, 0.432336, 0, 0, 1, 0,

0.561599, 0.018044, 0.376489, 0.0398, 0.0384, 0.9984, 0.0135,

0.553613, 0.117616, 0.38041, 0.0523, 0.0278, 0.993, -0.102,

0.546303, 0.163889, 0.350932, 0.0606, 0.0419, 0.9854, -0.1537,

0.527343, 0.191386, 0.356761, 0.066, -0.0058, 0.9719, -0.2261,

0.525386, 0.218939, 0.352163, 0.0683, -0.0293, 0.9638, -0.2561,

0.510583, 0.26087, 0.30687, 0.0742, -0.0232, 0.9467, -0.3125,

0.494977, 0.290977, 0.257741, 0.0708, -0.089, 0.9264, -0.359,

0.464551, 0.322355, 0.214358, 0.0712, -0.1525, 0.8982, -0.4062);

//R和T转RT矩阵

Mat R_T2RT(Mat& R, Mat& T)

{

Mat RT;

Mat_<double> R1 = (cv::Mat_<double>(4, 3) << R.at<double>(0, 0), R.at<double>(0, 1), R.at<double>(0, 2),

R.at<double>(1, 0), R.at<double>(1, 1), R.at<double>(1, 2),

R.at<double>(2, 0), R.at<double>(2, 1), R.at<double>(2, 2),

0.0, 0.0, 0.0);

cv::Mat_<double> T1 = (cv::Mat_<double>(4, 1) << T.at<double>(0, 0), T.at<double>(1, 0), T.at<double>(2, 0), 1.0);

cv::hconcat(R1, T1, RT);//C=A+B左右拼接

return RT;

}

//RT转R和T矩阵

void RT2R_T(Mat& RT, Mat& R, Mat& T)

{

cv::Rect R_rect(0, 0, 3, 3);

cv::Rect T_rect(3, 0, 1, 3);

R = RT(R_rect);

T = RT(T_rect);

}

//判断是否为旋转矩阵

bool isRotationMatrix(const cv::Mat& R)

{

cv::Mat tmp33 = R({ 0,0,3,3 });

cv::Mat shouldBeIdentity;

shouldBeIdentity = tmp33.t() * tmp33;

cv::Mat I = cv::Mat::eye(3, 3, shouldBeIdentity.type());

return cv::norm(I, shouldBeIdentity) < 1e-6;

}

/** @brief 欧拉角 -> 3*3 的R

* @param eulerAngle 角度值

* @param seq 指定欧拉角xyz的排列顺序如:"xyz" "zyx"

*/

cv::Mat eulerAngleToRotatedMatrix(const cv::Mat& eulerAngle, const std::string& seq)

{

CV_Assert(eulerAngle.rows == 1 && eulerAngle.cols == 3);

eulerAngle /= 180 / CV_PI;

cv::Matx13d m(eulerAngle);

auto rx = m(0, 0), ry = m(0, 1), rz = m(0, 2);

auto xs = std::sin(rx), xc = std::cos(rx);

auto ys = std::sin(ry), yc = std::cos(ry);

auto zs = std::sin(rz), zc = std::cos(rz);

cv::Mat rotX = (cv::Mat_<double>(3, 3) << 1, 0, 0, 0, xc, -xs, 0, xs, xc);

cv::Mat rotY = (cv::Mat_<double>(3, 3) << yc, 0, ys, 0, 1, 0, -ys, 0, yc);

cv::Mat rotZ = (cv::Mat_<double>(3, 3) << zc, -zs, 0, zs, zc, 0, 0, 0, 1);

cv::Mat rotMat;

if (seq == "zyx") rotMat = rotX * rotY * rotZ;

else if (seq == "yzx") rotMat = rotX * rotZ * rotY;

else if (seq == "zxy") rotMat = rotY * rotX * rotZ;

else if (seq == "xzy") rotMat = rotY * rotZ * rotX;

else if (seq == "yxz") rotMat = rotZ * rotX * rotY;

else if (seq == "xyz") rotMat = rotZ * rotY * rotX;

else {

cv::error(cv::Error::StsAssert, "Euler angle sequence string is wrong.",

__FUNCTION__, __FILE__, __LINE__);

}

if (!isRotationMatrix(rotMat)) {

cv::error(cv::Error::StsAssert, "Euler angle can not convert to rotated matrix",

__FUNCTION__, __FILE__, __LINE__);

}

return rotMat;

//cout << isRotationMatrix(rotMat) << endl;

}

/** @brief 四元数转旋转矩阵

* @note 数据类型double; 四元数定义 q = w + x*i + y*j + z*k

* @param q 四元数输入{w,x,y,z}向量

* @return 返回旋转矩阵3*3

*/

cv::Mat quaternionToRotatedMatrix(const cv::Vec4d& q)

{

double w = q[0], x = q[1], y = q[2], z = q[3];

double x2 = x * x, y2 = y * y, z2 = z * z;

double xy = x * y, xz = x * z, yz = y * z;

double wx = w * x, wy = w * y, wz = w * z;

cv::Matx33d res{

1 - 2 * (y2 + z2), 2 * (xy - wz), 2 * (xz + wy),

2 * (xy + wz), 1 - 2 * (x2 + z2), 2 * (yz - wx),

2 * (xz - wy), 2 * (yz + wx), 1 - 2 * (x2 + y2),

};

return cv::Mat(res);

}

/** @brief ((四元数||欧拉角||旋转向量) && 转移向量) -> 4*4 的Rt

* @param m 1*6 || 1*7的矩阵 -> 6 {x,y,z, rx,ry,rz} 7 {x,y,z, qw,qx,qy,qz}

* @param useQuaternion 如果是1*7的矩阵,判断是否使用四元数计算旋转矩阵

* @param seq 如果通过欧拉角计算旋转矩阵,需要指定欧拉角xyz的排列顺序如:"xyz" "zyx" 为空表示旋转向量

*/

cv::Mat attitudeVectorToMatrix(cv::Mat m, bool useQuaternion, const std::string& seq)

{

CV_Assert(m.total() == 6 || m.total() == 7);

if (m.cols == 1)

m = m.t();

cv::Mat tmp = cv::Mat::eye(4, 4, CV_64FC1);

//如果使用四元数转换成旋转矩阵则读取m矩阵的第第四个成员,读4个数据

if (useQuaternion) // normalized vector, its norm should be 1.

{

cv::Vec4d quaternionVec = m({ 3, 0, 4, 1 });

quaternionToRotatedMatrix(quaternionVec).copyTo(tmp({ 0, 0, 3, 3 }));

// cout << norm(quaternionVec) << endl;

}

else

{

cv::Mat rotVec;

if (m.total() == 6)

rotVec = m({ 3, 0, 3, 1 }); //6

else

rotVec = m({ 7, 0, 3, 1 }); //10

//如果seq为空表示传入的是旋转向量,否则"xyz"的组合表示欧拉角

if (0 == seq.compare(""))

cv::Rodrigues(rotVec, tmp({ 0, 0, 3, 3 }));

else

eulerAngleToRotatedMatrix(rotVec, seq).copyTo(tmp({ 0, 0, 3, 3 }));

}

tmp({ 3, 0, 1, 3 }) = m({ 0, 0, 3, 1 }).t();

//std::swap(m,tmp);

return tmp;

}

int main()

{

//定义手眼标定矩阵

std::vector<Mat> R_gripper2base;

std::vector<Mat> t_gripper2base;

std::vector<Mat> R_target2cam;

std::vector<Mat> t_target2cam;

Mat R_cam2gripper = (Mat_<double>(3, 3));

Mat t_cam2gripper = (Mat_<double>(3, 1));

vector<Mat> images;

size_t num_images = num;

// 读取末端,标定板的姿态矩阵 4*4

std::vector<cv::Mat> vecHb, vecHc;

cv::Mat Hcb;//定义相机camera到末端grab的位姿矩阵

Mat tempR, tempT;

for (size_t i = 0; i < num_images; i++)//计算标定板位姿

{

cv::Mat tmp = attitudeVectorToMatrix(CalPose.row(i), true, ""); //转移向量转旋转矩阵

vecHc.push_back(tmp);

RT2R_T(tmp, tempR, tempT);

R_target2cam.push_back(tempR);

t_target2cam.push_back(tempT);

}

//cout << R_target2cam << endl;

for (size_t i = 0; i < num_images; i++)//计算机械臂位姿

{

//cv::Mat tmp = attitudeVectorToMatrix(ToolPose.row(i), true, "zyx");

cv::Mat tmp = attitudeVectorToMatrix(ToolPose.row(i), true, ""); //机械臂位姿为欧拉角-旋转矩阵

//tmp = tmp.inv();//机械臂基座位姿为欧拉角-旋转矩阵

vecHb.push_back(tmp);

RT2R_T(tmp, tempR, tempT);

R_gripper2base.push_back(tempR);

t_gripper2base.push_back(tempT);

}

//cout << t_gripper2base[0] << " " << t_gripper2base[1] << " " << t_gripper2base[2] << " " << endl;

//cout << t_gripper2base << endl;

//手眼标定,CALIB_HAND_EYE_TSAI法为11ms,最快

calibrateHandEye(R_gripper2base, t_gripper2base, R_target2cam, t_target2cam, R_cam2gripper, t_cam2gripper, CALIB_HAND_EYE_TSAI);

Hcb = R_T2RT(R_cam2gripper, t_cam2gripper);//矩阵合并

std::cout << "Hcb 矩阵为: " << std::endl;

std::cout << Hcb << std::endl;

cout << "是否为旋转矩阵:" << isRotationMatrix(Hcb) << std::endl << std::endl;//判断是否为旋转矩阵

//Tool_In_Base*Hcg*Cal_In_Cam,用第一组和第二组进行对比验证

cout << "第一组和第二组手眼数据验证:" << endl;

cout << vecHb[0] * Hcb * vecHc[0] << endl << vecHb[1] * Hcb * vecHc[1] << endl << endl;//.inv()

cout << "标定板在相机中的位姿:" << endl;

cout << vecHc[1] << endl;

cout << "手眼系统反演的位姿为:" << endl;

//用手眼系统预测第一组数据中标定板相对相机的位姿,是否与vecHc[1]相同

cout << Hcb.inv() * vecHb[1].inv() * vecHb[0] * Hcb * vecHc[0] << endl << endl;

cout << "----手眼系统测试----" << endl;

cout << "机械臂下标定板XYZ为:" << endl;

for (int i = 0; i < vecHc.size(); ++i)

{

cv::Mat cheesePos{ 0.0,0.0,0.0,1.0 };//4*1矩阵,单独求机械臂下,标定板的xyz

cv::Mat worldPos = vecHb[i] * Hcb * vecHc[i] * cheesePos;

cout << i << ": " << worldPos.t() << endl;

}

getchar();

}

3.5 实验结果

精度还没有做到极致,目前误差在1cm以内。

四、相关思考总结

4.1 多种姿态的表示方法

代码中的attitudeVectorToMatrix可以实现:位置+欧拉角–>旋转矩阵(输入为nx6)位置+四元数—>旋转矩阵(输入为nx7)

-

欧拉角到旋转矩阵的算法不唯一。当使用欧拉角计算时务必注意欧拉角的顺序以及输入的应为角度值。如果不知道欧拉角的顺序,可以使用https://quaternions.online/进行验证。

-

四元数到旋转矩阵的算法唯一。但是四元数会有两种表示方式

(w,x,y,z)和(x,y,z,w),attitudeVectorToMatrix函数中使用(w,x,y,z),但是aruco返回的姿态的四元数为(x,y,z,w)一定要记得修改!

4.2 机器人的末端坐标系

- 机械臂的末端坐标系是以最后一个轴的旋转面作为原始平面建立的,不是末端法兰的平面,两者之间至少有20cm的距离。

- 代码中的数据求得的手眼矩阵是相机相对于机械臂末端的转换矩阵。但是我的机械臂末端装了六维力传感器以及一个抓手,如果想要得到相对于抓手末端的手眼矩阵是不是单纯的对z值进行增减即可?当时我第一时间想到可以将原始数据机械臂位姿的z都减少一个相同的值,来模拟末端装了一个tcp,再代入程序进行求解得到如下数据。发现手眼矩阵并无变化,而是标定板在基坐标系下的z值减少了。仔细一想,发现问题在于不同姿态下末端不一定铅锤于平面,因此这样的数据程序认为是你的标定板放低了,而不是末端装了个tcp。

- 回到手眼矩阵的本质,是相对于某一个坐标系的旋转+平移。理论上可以对手眼矩阵的平移向量z值加以修改,较为简单的转换到tcp坐标系下,但是这个z值好像难以把控。尝试后还是决定再做一次手眼标定,直接将tcp坐标系加入到机械臂末端。

4.3 如何提升精度

AX=XB求解方法为Tsai-Lenz 两步法,网上有其提升精度的注意点如下。说的很抽象,我看的似懂非懂,也没人做过对照实验。希望大家多多讨论交流。

(1) 不管采集多少组用于标定的运动数据,每组运动使运动角度最大。

(2) 使两组运动的旋转轴角度最大。

(3) 每组运动中机械臂末端运动距离尽量小,可通路径规划实现该条件。

(4) 尽量减小相机中心到标定板的距离,可使用适当小的标定板。

(5) 尽量采集多组用于求解的数据。

(6) 使用高精度的相机标定方法。

(7) 尽量提高机械臂的绝对定位精度,如果该条件达不到,至少需要提高相对运动精度。文章来源:https://www.toymoban.com/news/detail-441527.html

五、参考文献

手眼标定_全面细致的推导过程

机器人手眼标定

深入浅出地理解机器人手眼标定

手眼标定AX=XB求解方法(文献总结)

OpenCV Aruco 参数源码完整解析理解

ArUco Marker检测原理

https://github.com/IntelRealSense/realsense-ros

手眼标定(二):Tsai 求解方法文章来源地址https://www.toymoban.com/news/detail-441527.html

到了这里,关于(已修正精度 1mm左右)Realsense d435i深度相机+Aruco+棋盘格+OpenCV手眼标定全过程记录的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!