前言

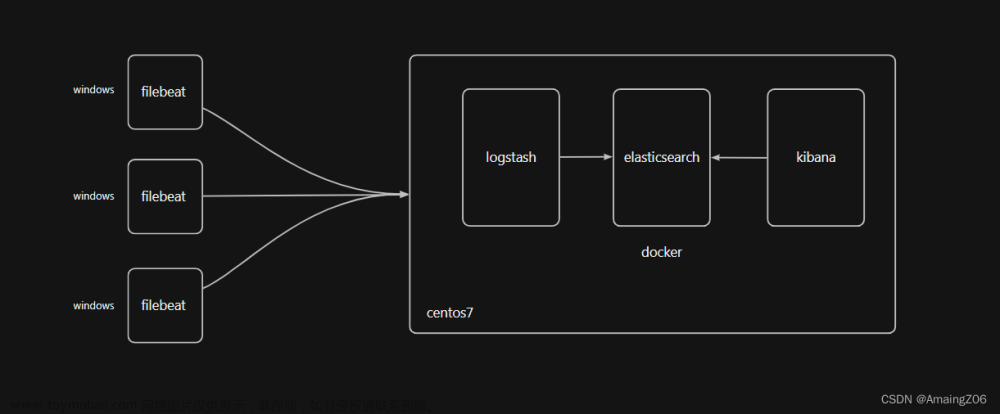

EFK简介

Elasticsearch 是一个实时的、分布式的可扩展的搜索引擎,允许进行全文、结构化搜索,它通常用于索引和搜索大量日志数据,也可用于搜索许多不同类型的文档。

FileBeats 是数据采集的得力工具。将 Beats 和您的容器一起置于服务器上,或者将 Beats 作为函数加以部署,然后便可在 Elastisearch 中集中处理数据。如果需要更加强大的处理性能,Beats 还能将数据输送到 Logstash 进行转换和解析。

Kibana 核心产品搭载了一批经典功能:柱状图、线状图、饼图、旭日图,等等。不仅如此,您还可以使用 Vega 语法来设计独属于您自己的可视化图形。所有这些都利用 Elasticsearch 的完整聚合功能。

Elasticsearch 通常与 Kibana 一起部署,Kibana 是 Elasticsearch 的一个功能强大的数据可视化 Dashboard,Kibana 允许你通过 web 界面来浏览 Elasticsearch 日志数据。

ELK和EFK的区别:

ELK 是现阶段众多企业单位都在使用的一种日志分析系统,它能够方便的为我们收集你想要的日志并且展示出来

ELK是Elasticsearch、Logstash、Kibana的简称,这三者都是开源软件,通常配合使用。

- Elasticsearch –>存储数据

是一个实时的分布式搜索和分析引擎,它可以用于全文搜索,结构化搜索以及分析。它是一个建立在全文搜索引擎 Apache Lucene 基础上的搜索引擎,使用 Java 语言编写,能对大容量的数据进行接近实时的存储、搜索和分析操作。

- Logstash –> 收集数据

数据收集引擎。它支持动态的从各种数据源搜集数据,并对数据进行过滤、分析、丰富、统一格式等操作,然后存储到用户指定的位置。

- Kibana –> 展示数据

数据分析和可视化平台。通常与 Elasticsearch 配合使用,对其中数据进行搜索、分析和以统计图表的方式展示。

EFK是ELK日志分析系统的一个变种,加入了filebeat 可以更好的收集到资源日志 来为我们的日志分析做好准备工作。

优缺点

Filebeat 相对 Logstash 的优点:

侵入低,无需修改 elasticsearch 和 kibana 的配置;

性能高,IO 占用率比 logstash 小太多;

当然 Logstash 相比于 FileBeat 也有一定的优势,比如 Logstash 对于日志的格式化处理能力,FileBeat 只是将日志从日志文件中读取出来,当然如果收集的日志本身是有一定格式的,FileBeat 也可以格式化,但是相对于Logstash 来说,效果差很多。

下面搭建一个简单的EFK日志收集系统,基于最新的版本8.7,ELK三个软件版本最好要保持一致,不然会出现问题。

一、下载

下载版本8.7.0

Es地址:https://www.elastic.co/cn/downloads/elasticsearch

Filebeat地址:https://www.elastic.co/cn/downloads/beats/filebeat

Kibana地址:https://www.elastic.co/cn/downloads/kibana

二、使用步骤

1.安装es

解压ES安装文件

修改config目录中的elasticsearch.yml,添加两行配置,防止跨域。

http.cors.enabled: true

http.cors.allow-origin: "*"

修改host

network.host: 192.168.100.22

进入bin目录

cmd中运行elasticsearch

从启动日志中拷贝出默认用户名

修改elasticsearch.yml文件,xpack.security.http.ssl:enabled设置为false。

访问localhost:9200,输入用户elastic和拷贝出的密码

能访问,代表成功了

![搭建EFK(Elasticsearch+Filebeat+Kibana)日志收集系统[windows]](https://imgs.yssmx.com/Uploads/2023/06/475312-1.png)

2.安装kibana

解压kibana

修改config下kibana.yml

修改配置:

i18n.locale: "zh-CN"

server.host: "192.168.100.22"

server.port: 5601

重置es中kibana账号的密码

进入es的bin目录

执行:

elasticsearch-reset-password -u kibana

将生成的密码拷贝出来,配置到kibana.yml中

elasticsearch.username: "kibana"

elasticsearch.password: "MK=iUF0fuJYXx-QbC=TF"

进入kibana的bin目录启动kibana.bat

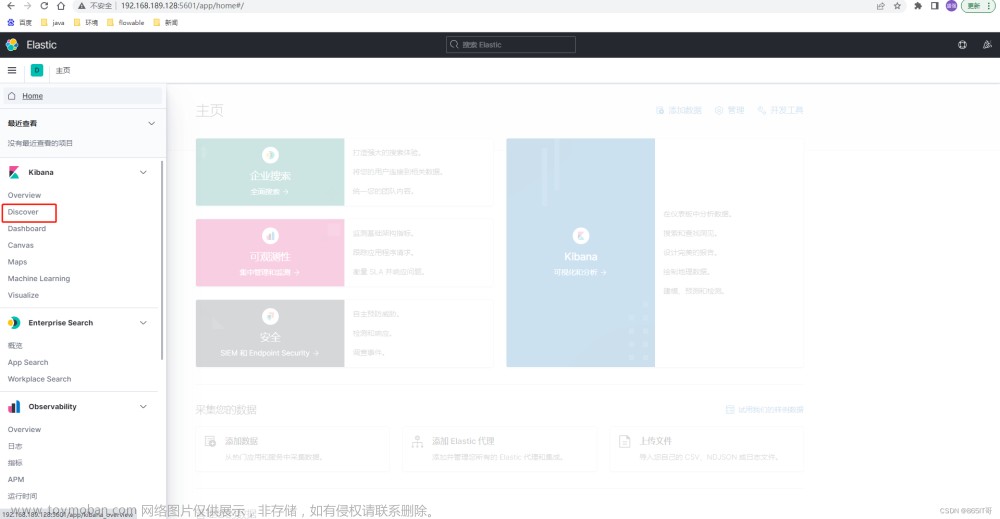

访问http://192.168.100.22:5601,输入用户名elastic,密码为安装es时拷贝出来的密码![搭建EFK(Elasticsearch+Filebeat+Kibana)日志收集系统[windows]](https://imgs.yssmx.com/Uploads/2023/06/475312-2.png)

3.安装filebeat

解压filebeat

修改filebeat.yml,配置说明见下面的注释

......

# ============================== Filebeat inputs ===============================

filebeat.inputs:

# Each - is an input. Most options can be set at the input level, so

# you can use different inputs for various configurations.

# Below are the input specific configurations.

# filestream is an input for collecting log messages from files.

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-access-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\*\log_access.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-access

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-error-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\log_error.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-error

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-info-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\log_info.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-info

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-warn-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\log_warn.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-warn

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

......

setup.ilm.enabled: false # 如果要创建多个索引,需要将此项设置为 false

setup.template.name: station_log # 设置模板的名称

setup.template.pattern: station_log-* # 设置模板的匹配方式,索引的前缀要和这里保持一致

setup.template.overwrite: true # 开启新设置的模板

setup.template.enabled: false # 关掉默认的模板配置

# ---------------------------- Elasticsearch Output ----------------------------

output.elasticsearch:

# Array of hosts to connect to.

hosts: ["192.168.100.22:9200"]

index: station_log-%{[fields.type]}-%{+yyyy.MM.dd} # 设置索引名称,后面引用的 fields.type 变量。此处的配置应该可以省略(不符合下面创建索引条件的日志,会使用该索引)

indices: # 使用 indices 代表要创建多个索引

- index: station_log-newframe-log-access-%{+yyyy.MM.dd} # 设置 日志的索引,注意索引前面的 station_log 要与setup.template.pattern 的配置相匹配

when.equals: # 设置创建索引的条件:当 fields.type 的值等于 newframe-log-access 时才生效

fields.type: newframe-log-access

- index: station_log-newframe-log-error-%{+yyyy.MM.dd}

when.equals:

fields.type: newframe-log-error

- index: station_log-newframe-log-info-%{+yyyy.MM.dd}

when.equals:

fields.type: newframe-log-info

- index: station_log-newframe-log-warn-%{+yyyy.MM.dd}

when.equals:

fields.type: newframe-log-warn

# Protocol - either `http` (default) or `https`.

#protocol: "https"

# Authentication credentials - either API key or username/password.

#api_key: "id:api_key"

username: "elastic"

password: "QbxGioLkbOy_jNkuvCkV"

......

启动:

filebeat.exe setup

filebeat.exe -e -c filebeat.yml

启动后查看索引,多了几个日志的索引![搭建EFK(Elasticsearch+Filebeat+Kibana)日志收集系统[windows]](https://imgs.yssmx.com/Uploads/2023/06/475312-3.png) 文章来源:https://www.toymoban.com/news/detail-475312.html

文章来源:https://www.toymoban.com/news/detail-475312.html

4.在kibana查看日志

访问kibana:

http://192.168.100.22:5601/

Discover

创建数据视图,查看日志,如下图![搭建EFK(Elasticsearch+Filebeat+Kibana)日志收集系统[windows]](https://imgs.yssmx.com/Uploads/2023/06/475312-4.png) 文章来源地址https://www.toymoban.com/news/detail-475312.html

文章来源地址https://www.toymoban.com/news/detail-475312.html

附完整的filebeat.yml

###################### Filebeat Configuration Example #########################

# This file is an example configuration file highlighting only the most common

# options. The filebeat.reference.yml file from the same directory contains all the

# supported options with more comments. You can use it as a reference.

#

# You can find the full configuration reference here:

# https://www.elastic.co/guide/en/beats/filebeat/index.html

# For more available modules and options, please see the filebeat.reference.yml sample

# configuration file.

# ============================== Filebeat inputs ===============================

filebeat.inputs:

# Each - is an input. Most options can be set at the input level, so

# you can use different inputs for various configurations.

# Below are the input specific configurations.

# filestream is an input for collecting log messages from files.

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-access-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\*\log_access.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-access

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-error-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\log_error.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-error

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-info-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\log_info.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-info

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

- type: log

# Unique ID among all inputs, an ID is required.

id: my-newframe-warn-id

# Change to true to enable this input configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- D:\ideaProjects\newframe-by-maven\logs\dmo-uaa\log_warn.log

#- c:\programdata\elasticsearch\logs\*

# 设置fields,标记此日志

fields:

type: newframe-log-warn

multiline.type: pattern

multiline.pattern: '^\d{4}-\d{2}-\d{2}'

multiline.negate: true

multiline.match: after

#multiline.pattern: '^[[:space:]]+(at|\.{3})[[:space:]]+\b|^Caused by:'

#multiline.negate: false

#multiline.match: after

# Exclude lines. A list of regular expressions to match. It drops the lines that are

# matching any regular expression from the list.

# Line filtering happens after the parsers pipeline. If you would like to filter lines

# before parsers, use include_message parser.

#exclude_lines: ['^DBG']

# Include lines. A list of regular expressions to match. It exports the lines that are

# matching any regular expression from the list.

# Line filtering happens after the parsers pipeline. If you would like to filter lines

# before parsers, use include_message parser.

#include_lines: ['^ERR', '^WARN']

# Exclude files. A list of regular expressions to match. Filebeat drops the files that

# are matching any regular expression from the list. By default, no files are dropped.

#prospector.scanner.exclude_files: ['.gz$']

# Optional additional fields. These fields can be freely picked

# to add additional information to the crawled log files for filtering

#fields:

# level: debug

# review: 1

# ============================== Filebeat modules ==============================

filebeat.config.modules:

# Glob pattern for configuration loading

path: ${path.config}/modules.d/*.yml

# Set to true to enable config reloading

reload.enabled: false

# Period on which files under path should be checked for changes

#reload.period: 10s

# ======================= Elasticsearch template setting =======================

setup.template.settings:

index.number_of_shards: 1

#index.codec: best_compression

#_source.enabled: false

# ================================== General ===================================

# The name of the shipper that publishes the network data. It can be used to group

# all the transactions sent by a single shipper in the web interface.

#name:

# The tags of the shipper are included in their own field with each

# transaction published.

#tags: ["service-X", "web-tier"]

# Optional fields that you can specify to add additional information to the

# output.

#fields:

# env: staging

# ================================= Dashboards =================================

# These settings control loading the sample dashboards to the Kibana index. Loading

# the dashboards is disabled by default and can be enabled either by setting the

# options here or by using the `setup` command.

#setup.dashboards.enabled: false

# The URL from where to download the dashboards archive. By default this URL

# has a value which is computed based on the Beat name and version. For released

# versions, this URL points to the dashboard archive on the artifacts.elastic.co

# website.

#setup.dashboards.url:

# =================================== Kibana ===================================

# Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.

# This requires a Kibana endpoint configuration.

setup.kibana:

# Kibana Host

# Scheme and port can be left out and will be set to the default (http and 5601)

# In case you specify and additional path, the scheme is required: http://localhost:5601/path

# IPv6 addresses should always be defined as: https://[2001:db8::1]:5601

#host: "localhost:5601"

# Kibana Space ID

# ID of the Kibana Space into which the dashboards should be loaded. By default,

# the Default Space will be used.

#space.id:

# =============================== Elastic Cloud ================================

# These settings simplify using Filebeat with the Elastic Cloud (https://cloud.elastic.co/).

# The cloud.id setting overwrites the `output.elasticsearch.hosts` and

# `setup.kibana.host` options.

# You can find the `cloud.id` in the Elastic Cloud web UI.

#cloud.id:

# The cloud.auth setting overwrites the `output.elasticsearch.username` and

# `output.elasticsearch.password` settings. The format is `<user>:<pass>`.

#cloud.auth:

# ================================== Outputs ===================================

# Configure what output to use when sending the data collected by the beat.

setup.ilm.enabled: false # 如果要创建多个索引,需要将此项设置为 false

setup.template.name: station_log # 设置模板的名称

setup.template.pattern: station_log-* # 设置模板的匹配方式,索引的前缀要和这里保持一致

setup.template.overwrite: true # 开启新设置的模板

setup.template.enabled: false # 关掉默认的模板配置

# ---------------------------- Elasticsearch Output ----------------------------

output.elasticsearch:

# Array of hosts to connect to.

hosts: ["192.168.100.22:9200"]

index: station_log-%{[fields.type]}-%{+yyyy.MM.dd} # 设置索引名称,后面引用的 fields.type 变量。此处的配置应该可以省略(不符合下面创建索引条件的日志,会使用该索引)

indices: # 使用 indices 代表要创建多个索引

- index: station_log-newframe-log-access-%{+yyyy.MM.dd} # 设置 日志的索引,注意索引前面的 station_log 要与setup.template.pattern 的配置相匹配

when.equals: # 设置创建索引的条件:当 fields.type 的值等于 newframe-log-access 时才生效

fields.type: newframe-log-access

- index: station_log-newframe-log-error-%{+yyyy.MM.dd}

when.equals:

fields.type: newframe-log-error

- index: station_log-newframe-log-info-%{+yyyy.MM.dd}

when.equals:

fields.type: newframe-log-info

- index: station_log-newframe-log-warn-%{+yyyy.MM.dd}

when.equals:

fields.type: newframe-log-warn

# Protocol - either `http` (default) or `https`.

#protocol: "https"

# Authentication credentials - either API key or username/password.

#api_key: "id:api_key"

username: "elastic"

password: "QbxGioLkbOy_jNkuvCkV"

# ------------------------------ Logstash Output -------------------------------

#output.logstash:

# The Logstash hosts

#hosts: ["localhost:5044"]

# Optional SSL. By default is off.

# List of root certificates for HTTPS server verifications

#ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

# Certificate for SSL client authentication

#ssl.certificate: "/etc/pki/client/cert.pem"

# Client Certificate Key

#ssl.key: "/etc/pki/client/cert.key"

# ================================= Processors =================================

processors:

- add_host_metadata:

when.not.contains.tags: forwarded

- add_cloud_metadata: ~

- add_docker_metadata: ~

- add_kubernetes_metadata: ~

# ================================== Logging ===================================

# Sets log level. The default log level is info.

# Available log levels are: error, warning, info, debug

#logging.level: debug

# At debug level, you can selectively enable logging only for some components.

# To enable all selectors use ["*"]. Examples of other selectors are "beat",

# "publisher", "service".

#logging.selectors: ["*"]

# ============================= X-Pack Monitoring ==============================

# Filebeat can export internal metrics to a central Elasticsearch monitoring

# cluster. This requires xpack monitoring to be enabled in Elasticsearch. The

# reporting is disabled by default.

# Set to true to enable the monitoring reporter.

#monitoring.enabled: false

# Sets the UUID of the Elasticsearch cluster under which monitoring data for this

# Filebeat instance will appear in the Stack Monitoring UI. If output.elasticsearch

# is enabled, the UUID is derived from the Elasticsearch cluster referenced by output.elasticsearch.

#monitoring.cluster_uuid:

# Uncomment to send the metrics to Elasticsearch. Most settings from the

# Elasticsearch output are accepted here as well.

# Note that the settings should point to your Elasticsearch *monitoring* cluster.

# Any setting that is not set is automatically inherited from the Elasticsearch

# output configuration, so if you have the Elasticsearch output configured such

# that it is pointing to your Elasticsearch monitoring cluster, you can simply

# uncomment the following line.

#monitoring.elasticsearch:

# ============================== Instrumentation ===============================

# Instrumentation support for the filebeat.

#instrumentation:

# Set to true to enable instrumentation of filebeat.

#enabled: false

# Environment in which filebeat is running on (eg: staging, production, etc.)

#environment: ""

# APM Server hosts to report instrumentation results to.

#hosts:

# - http://localhost:8200

# API Key for the APM Server(s).

# If api_key is set then secret_token will be ignored.

#api_key:

# Secret token for the APM Server(s).

#secret_token:

# ================================= Migration ==================================

# This allows to enable 6.7 migration aliases

#migration.6_to_7.enabled: true

到了这里,关于搭建EFK(Elasticsearch+Filebeat+Kibana)日志收集系统[windows]的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!