需要源码请点赞关注收藏后评论区留言私信~~~

一、目标检测的概念

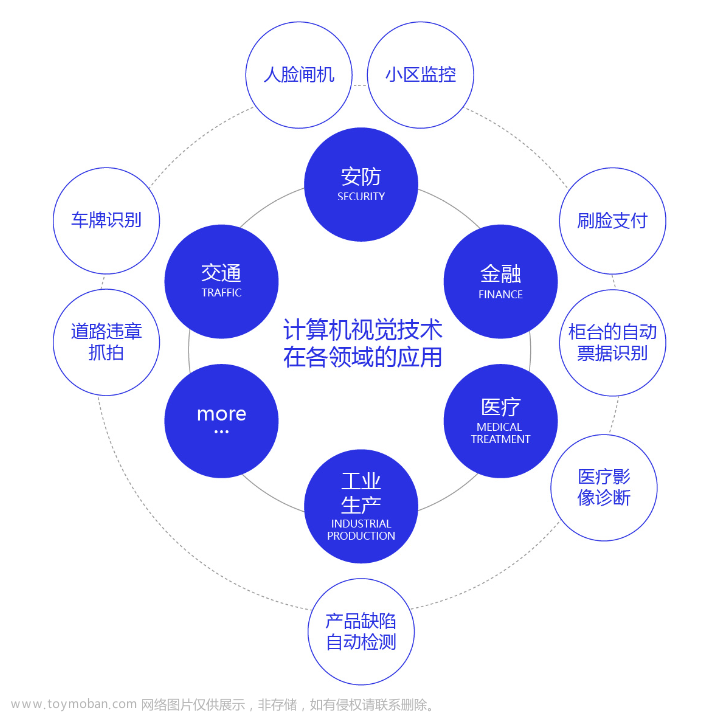

目标检测是计算机视觉和数字图像处理的一个热门方向,广泛应用于机器人导航、智能视频监控、工业检测、航空航天等诸多领域,通过计算机视觉减少对人力资本的消耗,具有重要的现实意义。因此,目标检测也就成为了近年来理论和应用的研究热点,它是图像处理和计算机视觉学科的重要分支,也是智能监控系统的核心部分,同时目标检测也是泛身份识别领域的一个基础性的算法,对后续的人脸识别、步态识别、人群计数、实例分割等任务起着至关重要的作用。

目标检测的任务是找出图像中所有感兴趣的目标,并确定它们的位置和类别,由于各类物体有不同的形状,姿态,加上成像时受光照,遮挡等因素的干扰,目标检测一直是计算机视觉领域最严峻的挑战之一

二、目标检测算法评价指标

目标检测需要预测出目标的具体位置以及目标类别,对于一个目标是否检测正确,首先要确定预测类别置信度是否达到阈值,之后确定预测框与实际框的重合度大小是否超过规定阈值,针对重合度的定义,通常采用IoU来代表,IoU是指对目标预测框与实际框之间的交集面积与两个框之间并集面积之比,IoU越大表示预测框与实际框之间重合度越高 检测得越准确

准确率为对于某个预测类别来说,预测正确的框占所有预测框的比例,而召回率为对于某个预测类别来说,预测正确的框占所有真实框的比例,两个指标计算方法如下

其中TP表示正确预测到的正样本数量,FP表示错误预测的正样本数量,FN表示错误预测的负真实样本数量

AP表示平均精准度,简单来说就是对PR曲线上的Precision值求均值,对于PR曲线来说,我们使用积分来进行计算

在实际应用中,我们并不直接对该PR曲线进行计算,而是对PR曲线进行平滑处理,即对PR曲线上的每个点,Precision的值取该点右侧最大的Precision的值

深度卷积神经网络目标检测算法性能对比如下

| 检测框架 |

mAP |

FPS |

| R-FCN |

79.4 |

7 |

| Faster R-CNN |

76.4 |

5 |

| SSD500 |

76.8 |

19 |

| YOLO |

63.4 |

45 |

| YOLO v2 |

78.6 |

40 |

| YOLO v3 |

82.3 |

39 |

三、目标检测项目实战

用到的训练集为VOC数据集,效果展示如下

数字代表了检测物体在图片中的相对坐标

分类如下

四、代码

项目结构如下

keras_frcnn文件夹里面存放着实现Faster R-CNN所用的各种类和方法

下面testing文件夹里面放着测试代码

部分代码如下 需要全部代码请点赞关注收藏后评论区私信

from keras.layers import Layer

import keras.backend as K

if K.backend() == 'tensorflow':

import tensorflow as tf

class RoiPoolingConv(Layer):

'''ROI pooling layer for 2D inputs.

See Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition,

K. He, X. Zhang, S. Ren, J. Sun

# Arguments

pool_size: int

Size of pooling region to use. pool_size = 7 will result in a 7x7 region.

num_rois: number of regions of interest to be used

# Input shape

list of two 4D tensors [X_img,X_roi] with shape:

X_img:

`(1, channels, rows, cols)` if dim_ordering='th'

or 4D tensor with shape:

`(1, rows, cols, channels)` if dim_ordering='tf'.

X_roi:

`(1,num_rois,4)` list of rois, with ordering (x,y,w,h)

# Output shape

3D tensor with shape:

`(1, num_rois, channels, pool_size, pool_size)`

'''

def __init__(self, pool_size, num_rois, **kwargs):

self.dim_ordering = K.common.image_dim_ordering()

assert self.dim_ordering in {'tf', 'th'}, 'dim_ordering must be in {tf, th}'

self.pool_size = pool_size

self.num_rois = num_rois

super(RoiPoolingConv, self).__init__(**kwargs)

def build(self, input_shape):

if self.dim_ordering == 'th':

self.nb_channels = input_shape[0][1]

elif self.dim_ordering == 'tf':

self.nb_channels = input_shape[0][3]

def compute_output_shape(self, input_shape):

if self.dim_ordering == 'th':

return None, self.num_rois, self.nb_channels, self.pool_size, self.pool_size

else:

return None, self.num_rois, self.pool_size, self.pool_size, self.nb_channels

def call(self, x, mask=None):

assert(len(x) == 2)

img = x[0]

rois = x[1]

input_shape = K.shape(img)

outputs = []

for roi_idx in range(self.num_rois):

x = rois[0, roi_idx, 0]

y = rois[0, roi_idx, 1]

w = rois[0, roi_idx, 2]

h = rois[0, roi_idx, 3]

row_length = w / float(self.pool_size)

col_length = h / float(self.pool_size)

num_pool_regions = self.pool_size

#NOTE: the RoiPooling implementation differs between theano and tensorflow due to the lack of a resize op

# in theano. The theano implementation is much less efficient and leads to long compile times

if self.dim_ordering == 'th':

for jy in range(num_pool_regions):

for ix in range(num_pool_regions):

x1 = x + ix * row_length

x2 = x1 + row_length

y1 = y + jy * col_length

y2 = y1 + col_length

x1 = K.cast(x1, 'int32')

x2 = K.cast(x2, 'int32')

y1 = K.cast(y1, 'int32')

y2 = K.cast(y2, 'int32')

x2 = x1 + K.maximum(1,x2-x1)

y2 = y1 + K.maximum(1,y2-y1)

new_shape = [input_shape[0], input_shape[1],

y2 - y1, x2 - x1]

x_crop = img[:, :, y1:y2, x1:x2]

xm = K.reshape(x_crop, new_shape)

pooled_val = K.max(xm, axis=(2, 3))

outputs.append(pooled_val)

elif self.dim_ordering == 'tf':

x = K.cast(x, 'int32')

y = K.cast(y, 'int32')

w = K.cast(w, 'int32')

h = K.cast(h, 'int32')

rs = tf.image.resize(img[:, y:y+h, x:x+w, :], (self.pool_size, self.pool_size))

outputs.append(rs)

final_output = K.concatenate(outputs, axis=0)

final_output = K.reshape(final_output, (1, self.num_rois, self.pool_size, self.pool_size, self.nb_channels))

if self.dim_ordering == 'th':

final_output = K.permute_dimensions(final_output, (0, 1, 4, 2, 3))

else:

final_output = K.permute_dimensions(final_output, (0, 1, 2, 3, 4))

return final_output

def get_config(self):

config = {'pool_size': self.pool_size,

'num_rois': self.num_rois}

base_config = super(RoiPoolingConv, self).get_config()

return dict(list(base_config.items()) + list(config.items()))

数据生成器代码如下文章来源:https://www.toymoban.com/news/detail-476240.html

from __future__ import absolute_import

import numpy as np

import cv2

import random

import copy

from . import data_augment

import threading

import itertools

def union(au, bu, area_intersection):

area_a = (au[2] - au[0]) * (au[3] - au[1])

area_b = (bu[2] - bu[0]) * (bu[3] - bu[1])

area_union = area_a + area_b - area_intersection

return area_union

def intersection(ai, bi):

x = max(ai[0], bi[0])

y = max(ai[1], bi[1])

w = min(ai[2], bi[2]) - x

h = min(ai[3], bi[3]) - y

if w < 0 or h < 0:

return 0

return w*h

def iou(a, b):

# a and b should be (x1,y1,x2,y2)

if a[0] >= a[2] or a[1] >= a[3] or b[0] >= b[2] or b[1] >= b[3]:

return 0.0

area_i = intersection(a, b)

area_u = union(a, b, area_i)

return float(area_i) / float(area_u + 1e-6)

def get_new_img_size(width, height, img_min_side=600):

if width <= height:

f = float(img_min_side) / width

resized_height = int(f * height)

resized_width = img_min_side

else:

f = float(img_min_side) / height

resized_width = int(f * width)

resized_height = img_min_side

return resized_width, resized_height

class SampleSelector:

def __init__(self, class_count):

# ignore classes that have zero samples

self.classes = [b for b in class_count.keys() if class_count[b] > 0]

self.class_cycle = itertools.cycle(self.classes)

self.curr_class = next(self.class_cycle)

def skip_sample_for_balanced_class(self, img_data):

class_in_img = False

for bbox in img_data['bboxes']:

cls_name = bbox['class']

if cls_name == self.curr_class:

class_in_img = True

self.curr_class = next(self.class_cycle)

break

if class_in_img:

return False

else:

return True

def calc_rpn(C, img_data, width, height, resized_width, resized_height, img_length_calc_function):

downscale = float(C.rpn_stride)

anchor_sizes = C.anchor_box_scales

anchor_ratios = C.anchor_box_ratios

num_anchors = len(anchor_sizes) * len(anchor_ratios)

# calculate the output map size based on the network architecture

(output_width, output_height) = img_length_calc_function(resized_width, resized_height)

n_anchratios = len(anchor_ratios)

# initialise empty output objectives

y_rpn_overlap = np.zeros((output_height, output_width, num_anchors))

y_is_box_valid = np.zeros((output_height, output_width, num_anchors))

y_rpn_regr = np.zeros((output_height, output_width, num_anchors * 4))

num_bboxes = len(img_data['bboxes'])

num_anchors_for_bbox = np.zeros(num_bboxes).astype(int)

best_anchor_for_bbox = -1*np.ones((num_bboxes, 4)).astype(int)

best_iou_for_bbox = np.zeros(num_bboxes).astype(np.float32)

best_x_for_bbox = np.zeros((num_bboxes, 4)).astype(int)

best_dx_for_bbox = np.zeros((num_bboxes, 4)).astype(np.float32)

# get the GT box coordinates, and resize to account for image resizing

gta = np.zeros((num_bboxes, 4))

for bbox_num, bbox in enumerate(img_data['bboxes']):

# get the GT box coordinates, and resize to account for image resizing

gta[bbox_num, 0] = bbox['x1'] * (resized_width / float(width))

gta[bbox_num, 1] = bbox['x2'] * (resized_width / float(width))

gta[bbox_num, 2] = bbox['y1'] * (resized_height / float(height))

gta[bbox_num, 3] = bbox['y2'] * (resized_height / float(height))

# rpn ground truth

for anchor_size_idx in range(len(anchor_sizes)):

for anchor_ratio_idx in range(n_anchratios):

anchor_x = anchor_sizes[anchor_size_idx] * anchor_ratios[anchor_ratio_idx][0]

anchor_y = anchor_sizes[anchor_size_idx] * anchor_ratios[anchor_ratio_idx][1]

for ix in range(output_width):

# x-coordinates of the current anchor box

x1_anc = downscale * (ix + 0.5) - anchor_x / 2

x2_anc = downscale * (ix + 0.5) + anchor_x / 2

# ignore boxes that go across image boundaries

if x1_anc < 0 or x2_anc > resized_width:

continue

for jy in range(output_height):

# y-coordinates of the current anchor box

y1_anc = downscale * (jy + 0.5) - anchor_y / 2

y2_anc = downscale * (jy + 0.5) + anchor_y / 2

# ignore boxes that go across image boundaries

if y1_anc < 0 or y2_anc > resized_height:

continue

# bbox_type indicates whether an anchor should be a target

bbox_type = 'neg'

# this is the best IOU for the (x,y) coord and the current anchor

# note that this is different from the best IOU for a GT bbox

best_iou_for_loc = 0.0

for bbox_num in range(num_bboxes):

# get IOU of the current GT box and the current anchor box

curr_iou = iou([gta[bbox_num, 0], gta[bbox_num, 2], gta[bbox_num, 1], gta[bbox_num, 3]], [x1_anc, y1_anc, x2_anc, y2_anc])

# calculate the regression targets if they will be needed

if curr_iou > best_iou_for_bbox[bbox_num] or curr_iou > C.rpn_max_overlap:

cx = (gta[bbox_num, 0] + gta[bbox_num, 1]) / 2.0

cy = (gta[bbox_num, 2] + gta[bbox_num, 3]) / 2.0

cxa = (x1_anc + x2_anc)/2.0

cya = (y1_anc + y2_anc)/2.0

tx = (cx - cxa) / (x2_anc - x1_anc)

ty = (cy - cya) / (y2_anc - y1_anc)

tw = np.log((gta[bbox_num, 1] - gta[bbox_num, 0]) / (x2_anc - x1_anc))

th = np.log((gta[bbox_num, 3] - gta[bbox_num, 2]) / (y2_anc - y1_anc))

if img_data['bboxes'][bbox_num]['class'] != 'bg':

# all GT boxes should be mapped to an anchor box, so we keep track of which anchor box was best

if curr_iou > best_iou_for_bbox[bbox_num]:

best_anchor_for_bbox[bbox_num] = [jy, ix, anchor_ratio_idx, anchor_size_idx]

best_iou_for_bbox[bbox_num] = curr_iou

best_x_for_bbox[bbox_num,:] = [x1_anc, x2_anc, y1_anc, y2_anc]

best_dx_for_bbox[bbox_num,:] = [tx, ty, tw, th]

# we set the anchor to positive if the IOU is >0.7 (it does not matter if there was another better box, it just indicates overlap)

if curr_iou > C.rpn_max_overlap:

bbox_type = 'pos'

num_anchors_for_bbox[bbox_num] += 1

# we update the regression layer target if this IOU is the best for the current (x,y) and anchor position

if curr_iou > best_iou_for_loc:

best_iou_for_loc = curr_iou

best_regr = (tx, ty, tw, th)

# if the IOU is >0.3 and <0.7, it is ambiguous and no included in the objective

if C.rpn_min_overlap < curr_iou < C.rpn_max_overlap:

# gray zone between neg and pos

if bbox_type != 'pos':

bbox_type = 'neutral'

# turn on or off outputs depending on IOUs

if bbox_type == 'neg':

y_is_box_valid[jy, ix, anchor_ratio_idx + n_anchratios * anchor_size_idx] = 1

y_rpn_overlap[jy, ix, anchor_ratio_idx + n_anchratios * anchor_size_idx] = 0

elif bbox_type == 'neutral':

y_is_box_valid[jy, ix, anchor_ratio_idx + n_anchratios * anchor_size_idx] = 0

y_rpn_overlap[jy, ix, anchor_ratio_idx + n_anchratios * anchor_size_idx] = 0

elif bbox_type == 'pos':

y_is_box_valid[jy, ix, anchor_ratio_idx + n_anchratios * anchor_size_idx] = 1

y_rpn_overlap[jy, ix, anchor_ratio_idx + n_anchratios * anchor_size_idx] = 1

start = 4 * (anchor_ratio_idx + n_anchratios * anchor_size_idx)

y_rpn_regr[jy, ix, start:start+4] = best_regr

# we ensure that every bbox has at least one positive RPN region

for idx in range(num_anchors_for_bbox.shape[0]):

if num_anchors_for_bbox[idx] == 0:

# no box with an IOU greater than zero ...

if best_anchor_for_bbox[idx, 0] == -1:

continue

y_is_box_valid[

best_anchor_for_bbox[idx,0], best_anchor_for_bbox[idx,1], best_anchor_for_bbox[idx,2] + n_anchratios *

best_anchor_for_bbox[idx,3]] = 1

y_rpn_overlap[

best_anchor_for_bbox[idx,0], best_anchor_for_bbox[idx,1], best_anchor_for_bbox[idx,2] + n_anchratios *

best_anchor_for_bbox[idx,3]] = 1

start = 4 * (best_anchor_for_bbox[idx,2] + n_anchratios * best_anchor_for_bbox[idx,3])

y_rpn_regr[

best_anchor_for_bbox[idx,0], best_anchor_for_bbox[idx,1], start:start+4] = best_dx_for_bbox[idx, :]

y_rpn_overlap = np.transpose(y_rpn_overlap, (2, 0, 1))

y_rpn_overlap = np.expand_dims(y_rpn_overlap, axis=0)

y_is_box_valid = np.transpose(y_is_box_valid, (2, 0, 1))

y_is_box_valid = np.expand_dims(y_is_box_valid, axis=0)

y_rpn_regr = np.transpose(y_rpn_regr, (2, 0, 1))

y_rpn_regr = np.expand_dims(y_rpn_regr, axis=0)

pos_locs = np.where(np.logical_and(y_rpn_overlap[0, :, :, :] == 1, y_is_box_valid[0, :, :, :] == 1))

neg_locs = np.where(np.logical_and(y_rpn_overlap[0, :, :, :] == 0, y_is_box_valid[0, :, :, :] == 1))

num_pos = len(pos_locs[0])

# one issue is that the RPN has many more negative than positive regions, so we turn off some of the negative

# regions. We also limit it to 256 regions.

num_regions = 256

if len(pos_locs[0]) > num_regions/2:

val_locs = random.sample(range(len(pos_locs[0])), len(pos_locs[0]) - num_regions/2)

y_is_box_valid[0, pos_locs[0][val_locs], pos_locs[1][val_locs], pos_locs[2][val_locs]] = 0

num_pos = num_regions/2

if len(neg_locs[0]) + num_pos > num_regions:

val_locs = random.sample(range(len(neg_locs[0])), len(neg_locs[0]) - num_pos)

y_is_box_valid[0, neg_locs[0][val_locs], neg_locs[1][val_locs], neg_locs[2][val_locs]] = 0

y_rpn_cls = np.concatenate([y_is_box_valid, y_rpn_overlap], axis=1)

y_rpn_regr = np.concatenate([np.repeat(y_rpn_overlap, 4, axis=1), y_rpn_regr], axis=1)

return np.copy(y_rpn_cls), np.copy(y_rpn_regr)

class threadsafe_iter:

"""Takes an iterator/generator and makes it thread-safe by

serializing call to the `next` method of given iterator/generator.

"""

def __init__(self, it):

self.it = it

self.lock = threading.Lock()

def __iter__(self):

return self

def next(self):

with self.lock:

return next(self.it)

def threadsafe_generator(f):

"""A decorator that takes a generator function and makes it thread-safe.

"""

def g(*a, **kw):

return threadsafe_iter(f(*a, **kw))

return g

def get_anchor_gt(all_img_data, class_count, C, img_length_calc_function, backend, mode='train'):

# The following line is not useful with Python 3.5, it is kept for the legacy

# all_img_data = sorted(all_img_data)

sample_selector = SampleSelector(class_count)

while True:

if mode == 'train':

np.random.shuffle(all_img_data)

for img_data in all_img_data:

try:

if C.balanced_classes and sample_selector.skip_sample_for_balanced_class(img_data):

continue

# read in image, and optionally add augmentation

if mode == 'train':

img_data_aug, x_img = data_augment.augment(img_data, C, augment=True)

else:

img_data_aug, x_img = data_augment.augment(img_data, C, augment=False)

(width, height) = (img_data_aug['width'], img_data_aug['height'])

(rows, cols, _) = x_img.shape

assert cols == width

assert rows == height

# get image dimensions for resizing

(resized_width, resized_height) = get_new_img_size(width, height, C.im_size)

# resize the image so that smalles side is length = 600px

x_img = cv2.resize(x_img, (resized_width, resized_height), interpolation=cv2.INTER_CUBIC)

try:

y_rpn_cls, y_rpn_regr = calc_rpn(C, img_data_aug, width, height, resized_width, resized_height, img_length_calc_function)

except:

continue

# Zero-center by mean pixel, and preprocess image

x_img = x_img[:,:, (2, 1, 0)] # BGR -> RGB

x_img = x_img.astype(np.float32)

x_img[:, :, 0] -= C.img_channel_mean[0]

x_img[:, :, 1] -= C.img_channel_mean[1]

x_img[:, :, 2] -= C.img_channel_mean[2]

x_img /= C.img_scaling_factor

x_img = np.transpose(x_img, (2, 0, 1))

x_img = np.expand_dims(x_img, axis=0)

y_rpn_regr[:, y_rpn_regr.shape[1]//2:, :, :] *= C.std_scaling

if backend == 'tf':

x_img = np.transpose(x_img, (0, 2, 3, 1))

y_rpn_cls = np.transpose(y_rpn_cls, (0, 2, 3, 1))

y_rpn_regr = np.transpose(y_rpn_regr, (0, 2, 3, 1))

yield np.copy(x_img), [np.copy(y_rpn_cls), np.copy(y_rpn_regr)], img_data_aug

except Exception as e:

print(e)

continue

创作不易 觉得有帮助请点赞关注收藏~~~文章来源地址https://www.toymoban.com/news/detail-476240.html

到了这里,关于【Keras计算机视觉】Faster R-CNN神经网络实现目标检测实战(附源码和数据集 超详细)的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!