0. Version Information

Hadoop:3.1.0

CentOS:7.6

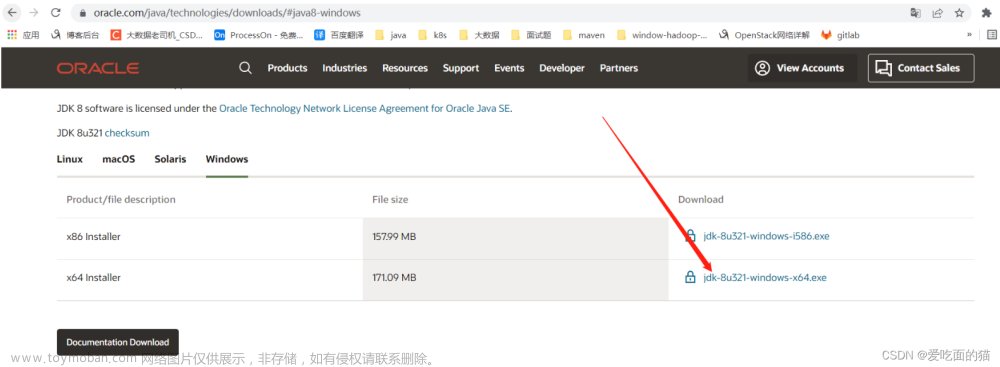

JDK:1.8

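1. Installing CentOS

There are plenty of tutorials online for this, so no screenshots are included here.

[Give the virtual machine as much memory as you can; otherwise Hive may run out of memory at runtime.]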

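2. Hadoop Single-Node Configuration

Create a tools directory to hold the installation packages (/opt/tools in the commands later in this guide).

Upload the Hadoop and JDK installation packages to it.

Create a server directory to hold the extracted files (/opt/server in the commands later in this guide).

Extract the JDK.

Configure the environment variables.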
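A minimal sketch of the environment-variable step, assuming the JDK archive unpacked to /opt/server/jdk1.8.0_371 (the directory name is an assumption; check what the extraction actually created). `PROFILE` and `JDK_DIR` are helper variables introduced here for illustration. `PROFILE` defaults to a scratch file so the snippet can be tried safely; on the real machine it would be set to /etc/profile.

```shell
# Assumption: the JDK was extracted to this directory; adjust to the actual name.
JDK_DIR="/opt/server/jdk1.8.0_371"
# Scratch file by default; use PROFILE=/etc/profile on the real host.
PROFILE="${PROFILE:-$(mktemp)}"

# Append the JDK variables. $JDK_DIR is expanded now; the escaped
# \$PATH and \$JAVA_HOME are written literally and expand when the file is sourced.
cat >> "$PROFILE" <<EOF
export JAVA_HOME=$JDK_DIR
export PATH=\$PATH:\$JAVA_HOME/bin
EOF

# Load the variables into the current shell and show the result.
. "$PROFILE"
echo "JAVA_HOME=$JAVA_HOME"
```

On the real host, `java -version` should then report a 1.8 runtime.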

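Configure passwordless SSH login

Map the IP address to the host name, so that the host name can be used in place of the IP address from now on.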
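The mapping could be added along these lines. The IP address and the host name `server` are the ones that appear elsewhere in this guide; `HOSTS_FILE` is a helper variable introduced here, defaulting to a scratch file so the snippet is safe to try. On the real machine it would be /etc/hosts.

```shell
# IP and host name as used elsewhere in this guide; replace with your VM's values.
HOST_IP="192.168.163.129"
HOST_NAME="server"
# Scratch file by default; use HOSTS_FILE=/etc/hosts on the real host.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

# Add the mapping only if the host name is not mapped yet, so re-running is harmless.
if ! grep -qw "$HOST_NAME" "$HOSTS_FILE"; then
  echo "$HOST_IP $HOST_NAME" >> "$HOSTS_FILE"
fi
cat "$HOSTS_FILE"
```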

Generate the public and private keys.

Check the generated key pair and append the public key to the authorized keys file.
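A sketch of the key steps, assuming OpenSSH's `ssh-keygen` is installed. `SSH_DIR` is a helper variable introduced here; it defaults to a scratch directory, and on the real machine it would be `$HOME/.ssh`.

```shell
# Scratch directory by default; use SSH_DIR="$HOME/.ssh" on the real host.
SSH_DIR="${SSH_DIR:-$(mktemp -d)}"
mkdir -p "$SSH_DIR" && chmod 700 "$SSH_DIR"

# Generate an RSA key pair with an empty passphrase, unless one already exists.
[ -f "$SSH_DIR/id_rsa" ] || ssh-keygen -t rsa -N "" -f "$SSH_DIR/id_rsa" -q

# Inspect the generated files, then authorize the public key for login.
ls "$SSH_DIR"
cat "$SSH_DIR/id_rsa.pub" >> "$SSH_DIR/authorized_keys"
chmod 600 "$SSH_DIR/authorized_keys"
```

With the keys in `$HOME/.ssh` and the host mapping in place, `ssh server` should log in without asking for a password.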

Extract Hadoop.

Configure Hadoop

Edit the configuration files.
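The file contents for this step are not shown, so the following is only a plausible minimal configuration, not necessarily what was used. Assumptions: `fs.defaultFS` is `hdfs://server:8020` (the address the Flume sink uses later in this guide), `dfs.replication` is 1 because there is a single DataNode, and the JDK lives in /opt/server/jdk1.8.0_371. `CONF_DIR` is a helper variable that defaults to a scratch directory.

```shell
# Scratch directory by default; on the real host use
# CONF_DIR=/opt/server/hadoop-3.1.0/etc/hadoop (the two XML files are overwritten).
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# core-site.xml: address of the default file system (the NameNode).
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://server:8020</value>
  </property>
</configuration>
EOF

# hdfs-site.xml: keep one replica per block on a single-node setup.
cat > "$CONF_DIR/hdfs-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF

# hadoop-env.sh: tell the Hadoop scripts where Java is (assumed JDK path).
echo 'export JAVA_HOME=/opt/server/jdk1.8.0_371' >> "$CONF_DIR/hadoop-env.sh"
ls "$CONF_DIR"
```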

Initialize and start HDFS

Turn off the firewall.

HDFS must be initialized (formatted) before it is started for the first time.
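The two steps above can be sketched as follows. The `run` helper and the `DRY_RUN` switch are introduced here for illustration: by default the commands are only printed, and setting `DRY_RUN=0` on the real CentOS host executes them. The Hadoop path is the one used throughout this guide.

```shell
# Print commands by default; set DRY_RUN=0 on the real host to execute them.
DRY_RUN="${DRY_RUN:-1}"
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Stop firewalld now and keep it off after reboot, so the web UIs are reachable.
run systemctl stop firewalld
run systemctl disable firewalld

# Format the NameNode. Do this once only: reformatting discards the HDFS metadata.
run /opt/server/hadoop-3.1.0/bin/hdfs namenode -format
```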

Configure the users that start the daemons.
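Hadoop 3 refuses to start the HDFS daemons as root unless the corresponding `HDFS_*_USER` variables are defined. One way to define them, sketched here, is to append them to hadoop-env.sh; this is an alternative to editing the start and stop scripts directly and may differ from what was originally done. `HADOOP_ENV` is a helper variable that defaults to a scratch file.

```shell
# Scratch file by default; on the real host use
# HADOOP_ENV=/opt/server/hadoop-3.1.0/etc/hadoop/hadoop-env.sh
HADOOP_ENV="${HADOOP_ENV:-$(mktemp)}"

# Run the NameNode, DataNode, and SecondaryNameNode as root.
for daemon in NAMENODE DATANODE SECONDARYNAMENODE; do
  echo "export HDFS_${daemon}_USER=root" >> "$HADOOP_ENV"
done

grep '_USER=' "$HADOOP_ENV"
```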

Configure the environment variables, so that the start scripts can be run from any directory.
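A sketch of what this could look like, using the Hadoop path from this guide. `PROFILE` is a helper variable that defaults to a scratch file; on the real machine it would be /etc/profile.

```shell
# Scratch file by default; use PROFILE=/etc/profile on the real host.
PROFILE="${PROFILE:-$(mktemp)}"

# The quoted 'EOF' writes $PATH and $HADOOP_HOME literally into the file.
cat >> "$PROFILE" <<'EOF'
export HADOOP_HOME=/opt/server/hadoop-3.1.0
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
EOF

# Reload and check that the sbin directory (start-dfs.sh, start-yarn.sh) is on PATH.
. "$PROFILE"
case ":$PATH:" in
  *":$HADOOP_HOME/sbin:"*) echo "sbin is on PATH" ;;
  *) echo "sbin is missing from PATH" ;;
esac
```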

Start HDFS

[root@localhost ~]# start-dfs.sh

Verify that it started successfully

Method 1:

[root@localhost ~]# jps

58466 Jps

54755 NameNode

55401 SecondaryNameNode

54938 DataNode

Method 2: open the web UI in a browser, at the virtual machine's address on port 9870

192.168.163.129:9870

Configure the Hadoop (YARN) environment

Edit the configuration files mapred-site.xml and yarn-site.xml

[root@localhost ~]# cd /opt/server/hadoop-3.1.0/etc/hadoop/

[root@localhost hadoop]# vim mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>

</property>

</configuration>

[root@localhost hadoop]# vim yarn-site.xml

<configuration>

<property>

<!-- Auxiliary service run on the NodeManager. It must be set to mapreduce_shuffle before MapReduce programs can run on YARN. -->

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

Start the services

[root@localhost sbin]# pwd

/opt/server/hadoop-3.1.0/sbin

[root@localhost sbin]# vim start-yarn.sh

[root@localhost sbin]# vim stop-yarn.sh

# Add the following lines at the top of both files

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

[root@localhost ~]# start-yarn.sh

Verify that it started successfully

Method 1:

[root@localhost ~]# jps

96707 NodeManager

54755 NameNode

55401 SecondaryNameNode

54938 DataNode

96476 ResourceManager

98686 Jps

Method 2: open the web UI in a browser, at the virtual machine's address on port 8088

192.168.163.129:8088

3. Installing and Deploying Hive

Have the Hive and MySQL installation packages ready.

Install MySQL

Remove the mariadb package that ships with CentOS 7

[root@server ~]# rpm -qa|grep mariadb

mariadb-libs-5.5.60-1.el7_5.x86_64

[root@server ~]# rpm -e mariadb-libs-5.5.60-1.el7_5.x86_64 --nodeps

Extract MySQL

[root@server ~]# mkdir /opt/server/mysql

[root@server mysql]# cd /opt/tools/

[root@server tools]# tar -xvf mysql-5.7.34-1.el7.x86_64.rpm-bundle.tar -C /opt/server/mysql/

Run the installation

# Install the dependencies

[root@server tools]# yum -y install libaio

[root@server tools]# yum -y install libncurses*

[root@server tools]# yum -y install perl perl-devel

# Switch to the installation directory and install the packages in this order

[root@server tools]# cd /opt/server/mysql/

[root@server mysql]# rpm -ivh mysql-community-common-5.7.34-1.el7.x86_64.rpm

[root@server mysql]# rpm -ivh mysql-community-libs-5.7.34-1.el7.x86_64.rpm

[root@server mysql]# rpm -ivh mysql-community-client-5.7.34-1.el7.x86_64.rpm

[root@server mysql]# rpm -ivh mysql-community-server-5.7.34-1.el7.x86_64.rpm

Start MySQL and look up the temporary root password in the log

[root@server mysql]# systemctl start mysqld.service

[root@server mysql]# cat /var/log/mysqld.log | grep password

2023-06-15T07:04:14.100925Z 1 [Note] A temporary password is generated for root@localhost: !=qcAerHW5*r

Change the initial temporary password

[root@server mysql]# mysql -u root -p

Enter password: # the temporary password from the log above: !=qcAerHW5*r

mysql> set global validate_password_policy=0;

mysql> set global validate_password_length=1;

mysql> set password=password('root');

Grant remote connection privileges

mysql> grant all privileges on *.* to 'root'@'%' identified by 'root';

mysql> flush privileges;

Enable start on boot and check that it took effect

[root@server mysql]# systemctl enable mysqld

[root@server mysql]# systemctl list-unit-files | grep mysqld

mysqld.service enabled

mysqld@.service disabled

MySQL control commands

# Stop, check status, start

systemctl stop mysqld

systemctl status mysqld

systemctl start mysqld

Hive installation and configuration

Extract Hive

[root@server mysql]# cd /opt/tools

[root@server tools]# ls

apache-hive-3.1.2-bin.tar.gz hadoop-3.1.0.tar.gz jdk-8u371-linux-x64.tar.gz mysql-5.7.34-1.el7.x86_64.rpm-bundle.tar

[root@server tools]# tar -zxvf apache-hive-3.1.2-bin.tar.gz -C /opt/server

Add the MySQL JDBC driver to the lib directory of the Hive installation
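The copy can be wrapped in a small helper so that a missing jar is reported instead of silently skipped. `install_jdbc_driver` is a hypothetical function written for this sketch, and the jar file name in the commented call is also hypothetical, since no driver version is named here; use the file that was actually downloaded.

```shell
# Copy a JDBC driver jar into a lib directory and show the installed file.
install_jdbc_driver() {
  jar="$1"      # path to the driver jar
  lib_dir="$2"  # destination lib directory
  if [ ! -f "$jar" ]; then
    echo "driver jar not found: $jar" >&2
    return 1
  fi
  cp "$jar" "$lib_dir/" && ls -l "$lib_dir/$(basename "$jar")"
}

# Hypothetical jar name; replace it with the actual driver file.
# install_jdbc_driver /opt/tools/mysql-connector-java-5.1.49.jar /opt/server/apache-hive-3.1.2-bin/lib
```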

Edit Hive's environment file to specify the Hadoop installation path

cd /opt/server/apache-hive-3.1.2-bin/conf

cp hive-env.sh.template hive-env.sh

vim hive-env.sh
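The lines added to hive-env.sh are not shown; a plausible version is sketched below. `HADOOP_HOME` follows from the step description, while `HIVE_CONF_DIR` is an optional extra added here as an assumption. `HIVE_ENV` is a helper variable that defaults to a scratch file.

```shell
# Scratch file by default; on the real host use
# HIVE_ENV=/opt/server/apache-hive-3.1.2-bin/conf/hive-env.sh
HIVE_ENV="${HIVE_ENV:-$(mktemp)}"

cat >> "$HIVE_ENV" <<'EOF'
# Where Hadoop is installed
export HADOOP_HOME=/opt/server/hadoop-3.1.0
# Where Hive's configuration files live (optional, assumed)
export HIVE_CONF_DIR=/opt/server/apache-hive-3.1.2-bin/conf
EOF

grep '^export' "$HIVE_ENV"
```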

Create the hive-site.xml configuration file, which holds the address, driver, user name, and password of the MySQL database that stores the metadata

[root@server conf]# vim hive-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- MySQL settings for metadata storage; the host name "server" is resolved through /etc/hosts -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://server:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false&amp;useUnicode=true&amp;characterEncoding=UTF-8</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>root</value>

</property>

</configuration>

Initialize the metastore database

[root@server conf]# cd /opt/server/apache-hive-3.1.2-bin/bin

[root@server bin]# ./schematool -dbType mysql -initSchema

Start Hive

Add the environment variables

[root@server conf]# vim /etc/profile

[root@server conf]# source /etc/profile

# The Hadoop services must be running before Hive is started

[root@server ~]# start-dfs.sh

[root@server ~]# start-yarn.sh

# Start Hive

[root@server ~]# hive

hive> create database test;

hive> use test;

hive> create table t_student(id int, name varchar(255));

hive> insert into table t_student values(1,'baobei');

4. Installing and Deploying Flume and Nginx

Installing the Flume log collection tool

Download the Flume installation package.

Extract it

tar -zxvf apache-flume-1.9.0-bin.tar.gz -C /opt/server/

Edit the Flume configuration file flume-env.sh

[root@server tools]# cd /opt/server/apache-flume-1.9.0-bin/conf

[root@server conf]# ls

flume-conf.properties.template flume-env.ps1.template flume-env.sh.template log4j.properties

[root@server conf]# cp flume-env.sh.template flume-env.sh

[root@server conf]# vim flume-env.sh
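The edit to flume-env.sh is not shown. It most likely sets `JAVA_HOME`, which is what this sketch assumes. The JDK path is an assumption, and `FLUME_ENV` is a helper variable that defaults to a scratch file.

```shell
# Scratch file by default; on the real host use
# FLUME_ENV=/opt/server/apache-flume-1.9.0-bin/conf/flume-env.sh
FLUME_ENV="${FLUME_ENV:-$(mktemp)}"
# Assumed JDK location; adjust to the actual directory.
JDK_DIR="/opt/server/jdk1.8.0_371"

echo "export JAVA_HOME=$JDK_DIR" >> "$FLUME_ENV"
tail -n 1 "$FLUME_ENV"
```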

Installing the Nginx web server

yum install epel-release

yum update

yum -y install nginx --nogpgcheck

Start the nginx service

[root@server conf]# systemctl start nginx

View the site's access log

[root@server nginx]# cd /var/log/nginx

[root@server nginx]# cat access.log

Copy the Hadoop jars that Flume needs for writing to HDFS into Flume's lib directory

[root@server nginx]# cp /opt/server/hadoop-3.1.0/share/hadoop/common/*.jar /opt/server/apache-flume-1.9.0-bin/lib

[root@server nginx]# cp /opt/server/hadoop-3.1.0/share/hadoop/common/lib/*.jar /opt/server/apache-flume-1.9.0-bin/lib

[root@server nginx]# cp /opt/server/hadoop-3.1.0/share/hadoop/hdfs/*.jar /opt/server/apache-flume-1.9.0-bin/lib

Create the configuration file taildir-hdfs.conf, which monitors the log under /var/log/nginx

[root@server nginx]# cd /opt/server/apache-flume-1.9.0-bin/conf/

[root@server conf]# vim taildir-hdfs.conf

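# Name the source, sink, and channel of agent a3

a3.sources = r3

a3.sinks = k3

a3.channels = c3

# Describe the source: follow the nginx access log with a TAILDIR source

a3.sources.r3.type = TAILDIR

a3.sources.r3.filegroups = f1

a3.sources.r3.filegroups.f1 = /var/log/nginx/access.log

# Records the position up to which each file has been read

a3.sources.r3.positionFile = /opt/server/apache-flume-1.9.0-bin/tail_dir.json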

# Describe the sink

a3.sinks.k3.type = hdfs

a3.sinks.k3.hdfs.path = hdfs://server:8020/user/tailDir

a3.sinks.k3.hdfs.fileType = DataStream

# Roll the temporary file into a target file once it reaches about 128 MB (unit: bytes; default: 1024). Set to 0 to disable size-based rolling.

a3.sinks.k3.hdfs.rollSize = 134217700

# Roll the temporary file into a target file once it holds this many events (default: 10). Set to 0 to disable count-based rolling.

a3.sinks.k3.hdfs.rollCount = 0

# Roll the file every 10 seconds (default: 30). Set to 0 to disable time-based rolling.

a3.sinks.k3.hdfs.rollInterval = 10

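# When Flume detects that HDFS is replicating a block, it rolls the file automatically, which overrides the roll settings above. Setting this to 1 keeps block replication from triggering rolls.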

a3.sinks.k3.hdfs.minBlockReplicas = 1

# Use a channel which buffers events in memory

a3.channels.c3.type = memory

a3.channels.c3.capacity = 1000

a3.channels.c3.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r3.channels = c3

a3.sinks.k3.channel = c3

Start Flume

[root@server apache-flume-1.9.0-bin]# bin/flume-ng agent -c ./conf -f ./conf/taildir-hdfs.conf -n a3 -Dflume.root.logger=INFO,console

5. Installing Sqoop

Upload the installation package.

Extract it

[root@server tools]# tar -zxvf sqoop-1.4.7.bin__hadoop-2.6.0.tar.gz -C /opt/server/

Edit the configuration file

[root@server tools]# cd /opt/server/sqoop-1.4.7.bin__hadoop-2.6.0/conf/

[root@server conf]# cp sqoop-env-template.sh sqoop-env.sh

[root@server conf]# vim sqoop-env.sh
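The contents of sqoop-env.sh are not shown. The sketch below sets the variables that the Sqoop template provides for Hadoop and Hive, using the install paths from this guide; treat it as an assumption about what was entered. `SQOOP_ENV`, `HADOOP_DIR`, and `HIVE_DIR` are helper variables introduced here, and `SQOOP_ENV` defaults to a scratch file.

```shell
# Scratch file by default; on the real host use
# SQOOP_ENV=/opt/server/sqoop-1.4.7.bin__hadoop-2.6.0/conf/sqoop-env.sh
SQOOP_ENV="${SQOOP_ENV:-$(mktemp)}"
HADOOP_DIR="/opt/server/hadoop-3.1.0"
HIVE_DIR="/opt/server/apache-hive-3.1.2-bin"

# Point Sqoop at the Hadoop and Hive installations.
{
  echo "export HADOOP_COMMON_HOME=$HADOOP_DIR"
  echo "export HADOOP_MAPRED_HOME=$HADOOP_DIR"
  echo "export HIVE_HOME=$HIVE_DIR"
} >> "$SQOOP_ENV"

cat "$SQOOP_ENV"
```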

Upload the MySQL JDBC driver jar to Sqoop's lib directory
