Apache Hive 系列文章

1、apache-hive-3.1.2简介及部署(三种部署方式-内嵌模式、本地模式和远程模式)及验证详解

2、hive相关概念详解–架构、读写文件机制、数据存储

3、hive的使用示例详解-建表、数据类型详解、内部外部表、分区表、分桶表

4、hive的使用示例详解-事务表、视图、物化视图、DDL(数据库、表以及分区)管理详细操作

5、hive的load、insert、事务表使用详解及示例

6、hive的select(GROUP BY、ORDER BY、CLUSTER BY、SORT BY、LIMIT、union、CTE)、join使用详解及示例

7、hive shell客户端与属性配置、内置运算符、函数(内置运算符与自定义UDF运算符)

8、hive的关系运算、逻辑预算、数学运算、数值运算、日期函数、条件函数和字符串函数的语法与使用示例详解

9、hive的explode、Lateral View侧视图、聚合函数、窗口函数、抽样函数使用详解

10、hive综合示例:数据多分隔符(正则RegexSerDe)、url解析、行列转换常用函数(case when、union、concat和explode)详细使用示例

11、hive综合应用示例:json解析、窗口函数应用(连续登录、级联累加、topN)、拉链表应用

12、Hive优化-文件存储格式和压缩格式优化与job执行优化(执行计划、MR属性、join、优化器、谓词下推和数据倾斜优化)详细介绍及示例

13、java api访问hive操作示例

本文介绍了hive的分组、排序、CTE以及join的详细操作及示例。

本文依赖hive环境可用。

本文分为2个部分,即select的使用和join的使用。

一、Hive SQL-DQL-Select查询数据

从哪里查询取决于FROM关键字后面的table_reference。可以是普通物理表、视图、join结果或子查询结果。表名和列名不区分大小写。

1、GROUP BY、ORDER BY、CLUSTER BY、SORT BY、LIMIT语法及示例

1)、语法

[WITH CommonTableExpression (, CommonTableExpression)*]

SELECT [ALL | DISTINCT] select_expr, select_expr, ...

FROM table_reference

[WHERE where_condition]

[GROUP BY col_list]

[ORDER BY col_list]

[CLUSTER BY col_list

| [DISTRIBUTE BY col_list] [SORT BY col_list]

]

[LIMIT [offset,] rows];

2)、示例

------------案例:美国Covid-19新冠数据之select查询---------------

--step1:创建普通表t_usa_covid19

drop table if exists t_usa_covid19;

CREATE TABLE t_usa_covid19(

count_date string,

county string,

state string,

fips int,

cases int,

deaths int)

row format delimited fields terminated by ",";

--将源数据load加载到t_usa_covid19表对应的路径下

load data local inpath '/usr/local/bigdata/us-covid19-counties.dat' into table t_usa_covid19;

select * from t_usa_covid19;

--step2:创建一张分区表 基于count_date日期,state州进行分区

CREATE TABLE if not exists t_usa_covid19_p(

county string,

fips int,

cases int,

deaths int)

partitioned by(count_date string,state string)

row format delimited fields terminated by ",";

--step3:使用动态分区插入将数据导入t_usa_covid19_p中

set hive.exec.dynamic.partition.mode = nonstrict;

insert into table t_usa_covid19_p partition (count_date,state)

select county,fips,cases,deaths,count_date,state from t_usa_covid19;

---------------Hive SQL select查询基础语法------------------

--1、select_expr

--查询所有字段或者指定字段

select * from t_usa_covid19_p;

select county, cases, deaths from t_usa_covid19_p;

--查询匹配正则表达式的所有字段

SET hive.support.quoted.identifiers = none; --反引号不在解释为其他含义,被解释为正则表达式

--查询以c开头的字段

select `^c.*` from t_usa_covid19_p;

0: jdbc:hive2://server4:10000> select `^c.*` from t_usa_covid19_p limit 3;

+-------------------------+------------------------+-----------------------------+

| t_usa_covid19_p.county | t_usa_covid19_p.cases | t_usa_covid19_p.count_date |

+-------------------------+------------------------+-----------------------------+

| Autauga | 5554 | 2021-01-28 |

| Baldwin | 17779 | 2021-01-28 |

| Barbour | 1920 | 2021-01-28 |

+-------------------------+------------------------+-----------------------------+

--查询当前数据库

select current_database(); --省去from关键字

--查询使用函数

select count(county) from t_usa_covid19_p;

--2、ALL DISTINCT

--返回所有匹配的行

select state from t_usa_covid19_p;

--相当于

select all state from t_usa_covid19_p;

--返回所有匹配的行 去除重复的结果

select distinct state from t_usa_covid19_p;

--多个字段distinct 整体去重

select county,state from t_usa_covid19_p;

select distinct county,state from t_usa_covid19_p;

select distinct sex from student;

0: jdbc:hive2://server4:10000> select distinct sex from student;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+------+

| sex |

+------+

| 女 |

| 男 |

+------+

--3、WHERE CAUSE

select * from t_usa_covid19_p where 1 > 2; -- 1 > 2 返回false

select * from t_usa_covid19_p where 1 = 1; -- 1 = 1 返回true

--where条件中使用函数 找出州名字母长度超过10位的有哪些

select * from t_usa_covid19_p where length(state) >10 ;

--where子句支持子查询

SELECT *

FROM A

WHERE A.a IN (SELECT foo FROM B);

--注意:where条件中不能使用聚合函数

--报错 SemanticException:Not yet supported place for UDAF 'count'

--聚合函数要使用它的前提是结果集已经确定。

--而where子句还处于“确定”结果集的过程中,因而不能使用聚合函数。

select state,count(deaths) from t_usa_covid19_p where count(deaths) >100 group by state;

0: jdbc:hive2://server4:10000> select state,count(deaths) from t_usa_covid19_p where count(deaths) >100 group by state;

Error: Error while compiling statement: FAILED: SemanticException [Error 10128]: Line 1:54 Not yet supported place for UDAF 'count' (state=42000,code=10128)

--可以使用Having实现

select state,count(deaths)

from t_usa_covid19_p group by state

having count(deaths) > 100;

--4、分区查询、分区裁剪

--找出来自加州,累计死亡人数大于1000的县 state字段就是分区字段 进行分区裁剪 避免全表扫描

select * from t_usa_covid19_p where state ="California" and deaths > 1000;

--多分区裁剪

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California" and deaths > 1000;

--5、GROUP BY

--根据state州进行分组

--SemanticException:Expression not in GROUP BY key 'deaths'

--deaths不是分组字段 报错

--state是分组字段 可以直接出现在select_expr中

select state,deaths from t_usa_covid19_p where count_date = "2021-01-28" group by state;

--被聚合函数应用

select state,sum(deaths) from t_usa_covid19_p where count_date = "2021-01-28" group by state;

--6、having

--统计死亡病例数大于10000的州

--where语句中不能使用聚合函数 语法报错

select state,sum(deaths) from t_usa_covid19_p where count_date = "2021-01-28" and sum(deaths) >10000 group by state;

--先where分组前过滤(此处是分区裁剪),再进行group by分组, 分组后每个分组结果集确定 再使用having过滤

select state,sum(deaths) from t_usa_covid19_p

where count_date = "2021-01-28"

group by state

having sum(deaths) > 10000;

--这样写更好 即在group by的时候聚合函数已经作用得出结果 having直接引用结果过滤 不需要再单独计算一次了

select state,sum(deaths) as cnts from t_usa_covid19_p

where count_date = "2021-01-28"

group by state

having cnts> 10000;

--7、limit

--没有限制返回2021.1.28 加州的所有记录

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California";

--返回结果集的前5条

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California" limit 5;

--返回结果集从第3行(含)开始 共3行 以下是查询结果比较

--[LIMIT [offset,] rows]

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California" order by deaths desc limit 2,3; --注意 第一个参数偏移量是从0开始的

0: jdbc:hive2://server4:10000> select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California" order by deaths desc ;

+-------------------------+-----------------------+------------------------+-------------------------+-----------------------------+------------------------+

| t_usa_covid19_p.county | t_usa_covid19_p.fips | t_usa_covid19_p.cases | t_usa_covid19_p.deaths | t_usa_covid19_p.count_date | t_usa_covid19_p.state |

+-------------------------+-----------------------+------------------------+-------------------------+-----------------------------+------------------------+

| Los Angeles | 6037 | 1098363 | 16107 | 2021-01-28 | California |

| Riverside | 6065 | 270105 | 3058 | 2021-01-28 | California |

| Orange | 6059 | 241648 | 2868 | 2021-01-28 | California |

| San Diego | 6073 | 233033 | 2534 | 2021-01-28 | California |

| San Bernardino | 6071 | 271189 | 1776 | 2021-01-28 | California |

| Santa Clara | 6085 | 100468 | 1345 | 2021-01-28 | California |

| Sacramento | 6067 | 85427 | 1216 | 2021-01-28 | California |

| Fresno | 6019 | 86886 | 1122 | 2021-01-28 | California |

。。。。

0: jdbc:hive2://server4:10000> select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California" order by deaths desc limit 2,3;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------------------------+-----------------------+------------------------+-------------------------+-----------------------------+------------------------+

| t_usa_covid19_p.county | t_usa_covid19_p.fips | t_usa_covid19_p.cases | t_usa_covid19_p.deaths | t_usa_covid19_p.count_date | t_usa_covid19_p.state |

+-------------------------+-----------------------+------------------------+-------------------------+-----------------------------+------------------------+

| Orange | 6059 | 241648 | 2868 | 2021-01-28 | California |

| San Diego | 6073 | 233033 | 2534 | 2021-01-28 | California |

| San Bernardino | 6071 | 271189 | 1776 | 2021-01-28 | California |

+-------------------------+-----------------------+------------------------+-------------------------+-----------------------------+------------------------+

---------------Hive SQL select查询高阶语法------------------

---1、order by

--根据字段进行排序

--默认asc, nulls first 也可以手动指定nulls last

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California"

order by deaths ;

--指定desc nulls last

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California"

order by deaths desc;

--强烈建议将LIMIT与ORDER BY一起使用。避免数据集行数过大

--当hive.mapred.mode设置为strict严格模式时,使用不带LIMIT的ORDER BY时会引发异常。

select * from t_usa_covid19_p where count_date = "2021-01-28" and state ="California"

order by deaths desc

limit 3;

--2、cluster by

--根据指定字段将数据分组,每组内再根据该字段正序排序(只能正序)。根据同一个字段,分且排序。

select * from student;

--不指定reduce task个数

--日志显示:Number of reduce tasks not specified. Estimated from input data size: 1

select * from student cluster by num;

--分组规则hash散列(分桶表规则一样):Hash_Func(col_name) % reducetask个数

--分为几组取决于reducetask的个数(结果见下图)

--手动设置reduce task个数

set mapreduce.job.reduces =2;

select * from student cluster by num;

--3、distribute by + sort by

--案例:把学生表数据根据性别分为两个部分,每个分组内根据年龄的倒序排序。

--错误

select * from student cluster by sex order by age desc;

select * from student cluster by sex sort by age desc;

CLUSTER BY无法单独完成,因为分和排序的字段只能是同一个;

ORDER BY更不能在这里使用,因为是全局排序,只有一个输出,无法满足分的需求。

--正确

--DISTRIBUTE BY +SORT BY就相当于把CLUSTER BY的功能一分为二

--前提:DISTRIBUTE BY 是在多个reduce的时候才会有效果,否则不能看到效果

--1.DISTRIBUTE BY负责根据指定字段分组;

--2.SORT BY负责分组内排序规则。

--分组和排序的字段可以不同。

set mapreduce.job.reduces=3;

select * from student distribute by sex sort by age desc;

--下面两个语句执行结果一样

select * from student distribute by num sort by num;

select * from student cluster by num;

set mapreduce.job.reduces =2;

select * from student cluster by num;

执行结果如下:

set mapreduce.job.reduces=3;

select * from student distribute by sex sort by age desc;

执行结果如下:

2、CLUSTER、 DISTRIBUTE、SORT、ORDER BY总结

- order by全局排序,因此只有一个reducer,结果输出在一个文件中,当输入规模大时,需要较长的计算时间。

- distribute by根据指定字段将数据分组,算法是hash散列。sort by是在分组之后,每个组内局部排序。

- cluster by既有分组,又有排序,但是两个字段只能是同一个字段。

如果distribute和sort的字段是同一个时,cluster by = distribute by + sort by

3、Union联合查询

UNION用于将来自于多个SELECT语句的结果合并为一个结果集。

使用DISTINCT关键字与只使用UNION默认值效果一样,都会删除重复行。1.2.0之前的Hive版本仅支持UNION ALL,在这种情况下不会消除重复的行。

使用ALL关键字,不会删除重复行,结果集包括所有SELECT语句的匹配行(包括重复行)。

每个select_statement返回的列的数量和名称必须相同。

---------------Union联合查询----------------------------

--语法规则

select_statement UNION [ALL | DISTINCT] select_statement UNION [ALL | DISTINCT] select_statement ...;

--使用DISTINCT关键字与使用UNION默认值效果一样,都会删除重复行。

select num,name from student_local

UNION

select num,name from student_hdfs;

--和上面一样

select num,name from student_local

UNION DISTINCT

select num,name from student_hdfs;

--使用ALL关键字会保留重复行。

select num,name from student_local

UNION ALL

select num,name from student_hdfs limit 2;

--如果要将ORDER BY,SORT BY,CLUSTER BY,DISTRIBUTE BY或LIMIT应用于单个SELECT

--请将子句放在括住SELECT的括号内

SELECT num,name FROM (select num,name from student_local LIMIT 2) subq1

UNION

SELECT num,name FROM (select num,name from student_hdfs LIMIT 3) subq2;

--如果要将ORDER BY,SORT BY,CLUSTER BY,DISTRIBUTE BY或LIMIT子句应用于整个UNION结果

--请将ORDER BY,SORT BY,CLUSTER BY,DISTRIBUTE BY或LIMIT放在最后一个之后。

select num,name from student_local

UNION

select num,name from student_hdfs

order by num desc;

------------子查询Subqueries--------------

--from子句中子查询(Subqueries)

--子查询

SELECT num

FROM (

select num,name from student_local

) tmp;

--包含UNION ALL的子查询的示例

SELECT t3.name

FROM (

select num,name from student_local

UNION distinct

select num,name from student_hdfs

) t3;

--where子句中子查询(Subqueries)

--不相关子查询,相当于IN、NOT IN,子查询只能选择一个列。

--(1)执行子查询,其结果不被显示,而是传递给外部查询,作为外部查询的条件使用。

--(2)执行外部查询,并显示整个结果。

SELECT *

FROM student_hdfs

WHERE student_hdfs.num IN (select num from student_local limit 2);

--相关子查询,指EXISTS和NOT EXISTS子查询

--子查询的WHERE子句中支持对父查询的引用

SELECT A

FROM T1

WHERE EXISTS (SELECT B FROM T2 WHERE T1.X = T2.Y);

4、Common Table Expressions(CTE)

公用表表达式(CTE)是一个临时结果集:该结果集是从WITH子句中指定的简单查询派生而来的,紧接在SELECT或INSERT关键字之前。

CTE仅在单个语句的执行范围内定义。

CTE可以在 SELECT,INSERT, CREATE TABLE AS SELECT或CREATE VIEW AS SELECT语句中使用。

-----------------Common Table Expressions(CTE)-----------------------------------

--select语句中的CTE

with q1 as (select num,name,age from student where num = 95002)

select * from q1;

-- from风格

with q1 as (select num,name,age from student where num = 95002)

from q1 select *;

-- chaining CTEs 链式

with q1 as ( select * from student where num = 95002),

q2 as ( select num,name,age from q1)

select * from (select num from q2) a;

-- union

with q1 as (select * from student where num = 95002),

q2 as (select * from student where num = 95004)

select * from q1 union all select * from q2;

--视图,CTAS和插入语句中的CTE

-- insert

create table s1 like student;

with q1 as ( select * from student where num = 95002)

from q1

insert overwrite table s1

select *;

select * from s1;

-- ctas

create table s2 as

with q1 as ( select * from student where num = 95002)

select * from q1;

-- view

create view v1 as

with q1 as ( select * from student where num = 95002)

select * from q1;

select * from v1;

二、Hive SQL Join连接操作

join语法的出现是用于根据两个或多个表中的列之间的关系,从这些表中共同组合查询数据

在Hive中,当下版本3.1.2总共支持6种join语法。分别是:

inner join(内连接)、left join(左连接)、right join(右连接)full outer join(全外连接)、left semi join(左半开连接)、cross join(交叉连接,也叫做笛卡尔乘积)。

1、join语法

join_table:

table_reference [INNER] JOIN table_factor [join_condition]

| table_reference {LEFT|RIGHT|FULL} [OUTER] JOIN table_reference join_condition

| table_reference LEFT SEMI JOIN table_reference join_condition

| table_reference CROSS JOIN table_reference [join_condition] (as of Hive 0.10)

join_condition:

ON expression

-- 1、table_reference:是join查询中使用的表名,也可以是子查询别名(查询结果当成表参与join)。

-- 2、table_factor:与table_reference相同,是联接查询中使用的表名,也可以是子查询别名。

-- 3、join_condition:join查询关联的条件,如果在两个以上的表上需要连接,则使用AND关键字。

2、数据准备

--table1: 员工表

CREATE TABLE employee(

id int,

name string,

deg string,

salary int,

dept string

)

row format delimited

fields terminated by ',';

--table2:员工住址信息表

CREATE TABLE employee_address (

id int,

hno string,

street string,

city string

)

row format delimited

fields terminated by ',';

--table3:员工联系方式表

CREATE TABLE employee_connection (

id int,

phno string,

email string

)

row format delimited

fields terminated by ',';

--加载数据到表中

load data local inpath '/usr/local/bigdata/employee.txt' into table employee;

load data local inpath '/usr/local/bigdata/employee_address.txt' into table employee_address;

load data local inpath '/usr/local/bigdata/employee_connection.txt' into table employee_connection;

0: jdbc:hive2://server4:10000> select * from employee;

+--------------+----------------+---------------+------------------+----------------+

| employee.id | employee.name | employee.deg | employee.salary | employee.dept |

+--------------+----------------+---------------+------------------+----------------+

| 1201 | gopal | manager | 50000 | TP |

| 1202 | manisha | cto | 50000 | TP |

| 1203 | khalil | dev | 30000 | AC |

| 1204 | prasanth | dev | 30000 | AC |

| 1206 | kranthi | admin | 20000 | TP |

+--------------+----------------+---------------+------------------+----------------+

0: jdbc:hive2://server4:10000> select * from employee_address ;

+----------------------+-----------------------+--------------------------+------------------------+

| employee_address.id | employee_address.hno | employee_address.street | employee_address.city |

+----------------------+-----------------------+--------------------------+------------------------+

| 1201 | 288A | vgiri | jublee |

| 1202 | 108I | aoc | ny |

| 1204 | 144Z | pgutta | hyd |

| 1206 | 78B | old city | la |

| 1207 | 720X | hitec | ny |

+----------------------+-----------------------+--------------------------+------------------------+

0: jdbc:hive2://server4:10000> select * from employee_connection;

+-------------------------+---------------------------+----------------------------+

| employee_connection.id | employee_connection.phno | employee_connection.email |

+-------------------------+---------------------------+----------------------------+

| 1201 | 2356742 | gopal@tp.com |

| 1203 | 1661663 | manisha@tp.com |

| 1204 | 8887776 | khalil@ac.com |

| 1205 | 9988774 | prasanth@ac.com |

| 1206 | 1231231 | kranthi@tp.com |

+-------------------------+---------------------------+----------------------------+

3、inner join 内连接

内连接是最常见的一种连接,它也被称为普通连接,其中inner可以省略:inner join == join ;

只有进行连接的两个表中都存在与连接条件相匹配的数据才会被留下来。

--1、inner join

select e.id,e.name,e_a.city,e_a.street

from employee e

inner join employee_address e_a on e.id =e_a.id;

--等价于 inner join=join

select e.id,e.name,e_a.city,e_a.street

from employee e

join employee_address e_a on e.id =e_a.id;

--等价于 隐式连接表示法

select e.id,e.name,e_a.city,e_a.street

from employee e , employee_address e_a

where e.id =e_a.id;

-- 查询员工的地址

0: jdbc:hive2://server4:10000> select e.*,a.street from employee e join employee_address a on e.id = a.id;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------+-----------+----------+-----------+---------+-----------+

| e.id | e.name | e.deg | e.salary | e.dept | a.street |

+-------+-----------+----------+-----------+---------+-----------+

| 1201 | gopal | manager | 50000 | TP | vgiri |

| 1202 | manisha | cto | 50000 | TP | aoc |

| 1204 | prasanth | dev | 30000 | AC | pgutta |

| 1206 | kranthi | admin | 20000 | TP | old city |

+-------+-----------+----------+-----------+---------+-----------+

0: jdbc:hive2://server4:10000> select e.*,a.street from employee e ,employee_address a where e.id = a.id;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------+-----------+----------+-----------+---------+-----------+

| e.id | e.name | e.deg | e.salary | e.dept | a.street |

+-------+-----------+----------+-----------+---------+-----------+

| 1201 | gopal | manager | 50000 | TP | vgiri |

| 1202 | manisha | cto | 50000 | TP | aoc |

| 1204 | prasanth | dev | 30000 | AC | pgutta |

| 1206 | kranthi | admin | 20000 | TP | old city |

+-------+-----------+----------+-----------+---------+-----------+

4、left join 左连接

left join中文叫做是左外连接(Left Outer Join)或者左连接,其中outer可以省略,left outer join是早期的写法。

left join的核心就在于left左。左指的是join关键字左边的表,简称左表。

通俗解释:join时以左表的全部数据为准,右边与之关联;左表数据全部返回,右表关联上的显示返回,关联不上的显示null返回。

--2、left join

select e.id,e.name,e_conn.phno,e_conn.email

from employee e

left join employee_connection e_conn on e.id =e_conn.id;

--等价于 left outer join

select e.id,e.name,e_conn.phno,e_conn.email

from employee e

left outer join employee_connection e_conn on e.id =e_conn.id;

0: jdbc:hive2://server4:10000> select e.*,c.phno,c.email from employee e left join employee_connection c on e.id = c.id;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------+-----------+----------+-----------+---------+----------+-----------------+

| e.id | e.name | e.deg | e.salary | e.dept | c.phno | c.email |

+-------+-----------+----------+-----------+---------+----------+-----------------+

| 1201 | gopal | manager | 50000 | TP | 2356742 | gopal@tp.com |

| 1202 | manisha | cto | 50000 | TP | NULL | NULL |

| 1203 | khalil | dev | 30000 | AC | 1661663 | manisha@tp.com |

| 1204 | prasanth | dev | 30000 | AC | 8887776 | khalil@ac.com |

| 1206 | kranthi | admin | 20000 | TP | 1231231 | kranthi@tp.com |

+-------+-----------+----------+-----------+---------+----------+-----------------+

5、right join 右连接

right join中文叫做是右外连接(Right Outer Jion)或者右连接,其中outer可以省略。

right join的核心就在于Right右。右指的是join关键字右边的表,简称右表。

通俗解释:join时以右表的全部数据为准,左边与之关联;右表数据全部返回,左表关联上的显示返回,关联不上的显示null返回。

right join和left join之间很相似,重点在于以哪边为准,也就是一个方向的问题。

--3、right join

select e.id,e.name,e_conn.phno,e_conn.email

from employee e

right join employee_connection e_conn on e.id =e_conn.id;

--等价于 right outer join

select e.id,e.name,e_conn.phno,e_conn.email

from employee e

right outer join employee_connection e_conn on e.id =e_conn.id;

0: jdbc:hive2://server4:10000> select e.*,c.id,c.phno,c.email from employee e right join employee_connection c on e.id = c.id;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------+-----------+----------+-----------+---------+-------+----------+------------------+

| e.id | e.name | e.deg | e.salary | e.dept | c.id | c.phno | c.email |

+-------+-----------+----------+-----------+---------+-------+----------+------------------+

| 1201 | gopal | manager | 50000 | TP | 1201 | 2356742 | gopal@tp.com |

| 1203 | khalil | dev | 30000 | AC | 1203 | 1661663 | manisha@tp.com |

| 1204 | prasanth | dev | 30000 | AC | 1204 | 8887776 | khalil@ac.com |

| NULL | NULL | NULL | NULL | NULL | 1205 | 9988774 | prasanth@ac.com |

| 1206 | kranthi | admin | 20000 | TP | 1206 | 1231231 | kranthi@tp.com |

+-------+-----------+----------+-----------+---------+-------+----------+------------------+

6、full outer join 全外连接

full outer join 等价 full join ,中文叫做全外连接或者外连接。

包含左、右两个表的全部行,不管另外一边的表中是否存在与它们匹配的行;

在功能上:等价于对这两个数据集合分别进行左外连接和右外连接,然后再使用消去重复行的操作将上述两个结果集合并为一个结果集。

--4、full outer join

select e.id,e.name,e_a.city,e_a.street

from employee e

full outer join employee_address e_a on e.id =e_a.id;

--等价于

select e.id,e.name,e_a.city,e_a.street

from employee e

full join employee_address e_a on e.id =e_a.id;

0: jdbc:hive2://server4:10000> select e.*,c.id,c.phno,c.email from employee e full join employee_connection c on e.id = c.id;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------+-----------+----------+-----------+---------+-------+----------+------------------+

| e.id | e.name | e.deg | e.salary | e.dept | c.id | c.phno | c.email |

+-------+-----------+----------+-----------+---------+-------+----------+------------------+

| 1201 | gopal | manager | 50000 | TP | 1201 | 2356742 | gopal@tp.com |

| 1202 | manisha | cto | 50000 | TP | NULL | NULL | NULL |

| 1203 | khalil | dev | 30000 | AC | 1203 | 1661663 | manisha@tp.com |

| 1204 | prasanth | dev | 30000 | AC | 1204 | 8887776 | khalil@ac.com |

| NULL | NULL | NULL | NULL | NULL | 1205 | 9988774 | prasanth@ac.com |

| 1206 | kranthi | admin | 20000 | TP | 1206 | 1231231 | kranthi@tp.com |

+-------+-----------+----------+-----------+---------+-------+----------+------------------+

7、left semi join 左半开连接

左半开连接(LEFT SEMI JOIN)会返回左边表的记录,前提是其记录对于右边的表满足ON语句中的判定条件。

从效果上来看有点像inner join之后只返回左表的结果。

--5、left semi join 但是只返回左表全部数据, 只不过效率高一些

select *

from employee e

left semi join employee_address e_addr on e.id =e_addr.id;

--相当于 inner join 只不过效率高一些

select e.*

from employee e

inner join employee_address e_addr on e.id =e_addr.id;

0: jdbc:hive2://server4:10000> select * from employee e left semi join employee_address e_addr on e.id =e_addr.id;

WARN : Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

+-------+-----------+----------+-----------+---------+

| e.id | e.name | e.deg | e.salary | e.dept |

+-------+-----------+----------+-----------+---------+

| 1201 | gopal | manager | 50000 | TP |

| 1202 | manisha | cto | 50000 | TP |

| 1204 | prasanth | dev | 30000 | AC |

| 1206 | kranthi | admin | 20000 | TP |

+-------+-----------+----------+-----------+---------+

8、cross join 交叉连接

交叉连接cross join,将会返回被连接的两个表的笛卡尔积,返回结果的行数等于两个表行数的乘积。对于大表来说,cross join慎用。

在SQL标准中定义的cross join就是无条件的inner join。返回两个表的笛卡尔积,无需指定关联键。

在HiveSQL语法中,cross join 后面可以跟where子句进行过滤,或者on条件过滤。

--6、cross join

--下列A、B、C 执行结果相同,但是效率不一样

--A:

select a.*,b.* from employee a,employee_address b where a.id=b.id;

--B:

select * from employee a cross join employee_address b on a.id=b.id;

select * from employee a cross join employee_address b where a.id=b.id;

--C:

select * from employee a inner join employee_address b on a.id=b.id;

--一般不建议使用方法A和B,因为如果有WHERE子句的话,往往会先生成两个表行数乘积的行的数据表然后才根据WHERE条件从中选择。

--因此,如果两个需要求交集的表太大,将会非常非常慢,不建议使用。

--A:

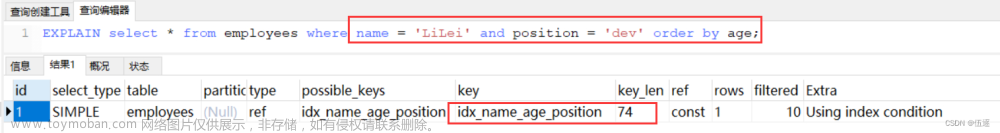

explain select a.*,b.* from employee a,employee_address b where a.id=b.id;

--B:

explain select * from employee a cross join employee_address b on a.id=b.id;

--C:

explain select * from employee a inner join employee_address b on a.id=b.id;

9、注意事项

1)、允许使用复杂的联接表达式,支持非等值连接

SELECT a.* FROM a JOIN b ON (a.id = b.id)

SELECT a.* FROM a JOIN b ON (a.id = b.id AND a.department = b.department)

SELECT a.* FROM a LEFT OUTER JOIN b ON (a.id <> b.id)

2)、同一查询中可以连接2个以上的表

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key2)

3)、如果每个表在联接子句中使用相同的列,则Hive将多个表上的联接转换为单个MR作业

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key1)

--由于联接中仅涉及b的key1列,因此被转换为1个MR作业来执行

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key2)

--会转换为两个MR作业,因为在第一个连接条件中使用了b中的key1列,而在第二个连接条件中使用了b中的key2列。

-- 第一个map / reduce作业将a与b联接在一起,然后将结果与c联接到第二个map / reduce作业中。

4)、大小表的查询位置

join时的最后一个表会通过reducer流式传输,并在其中缓冲之前的其他表,因此,将大表放置在最后有助于减少reducer阶段缓存数据所需要的内存文章来源:https://www.toymoban.com/news/detail-516085.html

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key1)

--由于联接中仅涉及b的key1列,因此被转换为1个MR作业来执行,并且表a和b的键的特定值的值被缓冲在reducer的内存中。

--然后,对于从c中检索的每一行,将使用缓冲的行来计算联接。

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key2)

--计算涉及两个MR作业。其中的第一个将a与b连接起来,并缓冲a的值,同时在reducer中流式传输b的值。

-- 在第二个MR作业中,将缓冲第一个连接的结果,同时将c的值通过reducer流式传输。

5)、在join的时候,可以通过语法STREAMTABLE提示指定要流式传输的表

--如果省略STREAMTABLE提示,则Hive将流式传输最右边的表。

SELECT /*+ STREAMTABLE(a) */ a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key1)

--a,b,c三个表都在一个MR作业中联接,并且表b和c的键的特定值的值被缓冲在reducer的内存中。

-- 然后,对于从a中检索到的每一行,将使用缓冲的行来计算联接。如果省略STREAMTABLE提示,则Hive将流式传输最右边的表。

6)、join在WHERE条件之前进行

7)、如果除一个要连接的表之外的所有表都很小,则可以将其作为仅map作业执行(map join)

SELECT /*+ MAPJOIN(b) */ a.key, a.value FROM a

JOIN b ON a.key = b.key

--不需要reducer。对于A的每个Mapper,B都会被完全读取。限制是不能执行FULL / RIGHT OUTER JOIN b。

以上,介绍了hive的分组、排序、CTE以及join的详细操作及示例。文章来源地址https://www.toymoban.com/news/detail-516085.html

到了这里,关于6、hive的select(GROUP BY、ORDER BY、CLUSTER BY、SORT BY、LIMIT、union、CTE)、join使用详解及示例的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!