【What is SAM】

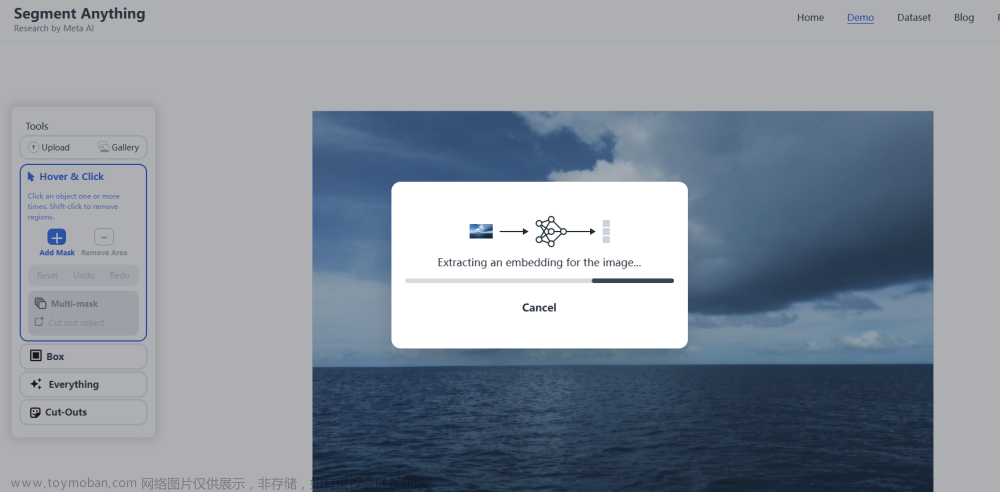

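Meta AI recently released and open-sourced the Segment Anything Model (SAM), a foundation model, on its website. In essence, it applies the GPT approach (a Transformer-based architecture) to give computers a general ability to understand the individual "objects" in an image. SAM is a large promptable image segmentation model that gains its generalization ability from training on massive data; the paper explores text prompts, while the released code takes points, boxes, and masks as prompts. Image segmentation is an important task in computer vision: it helps identify the different objects in an image and separate them from the background, which is especially important in applications such as autonomous driving (detecting other cars, pedestrians, and obstacles) and medical imaging (extracting specific structures or potential lesions).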

Official site:

Segment Anything | Meta AI

GitHub:

GitHub - facebookresearch/segment-anything: The repository provides code for running inference with the SegmentAnything Model (SAM), links for downloading the trained model checkpoints, and example notebooks that show how to use the model.

Paper:

https://arxiv.org/abs/2304.02643

【Environment setup】

First, download the source code into your PyTorch environment:

GitHub - facebookresearch/segment-anything: The repository provides code for running inference with the SegmentAnything Model (SAM), links for downloading the trained model checkpoints, and example notebooks that show how to use the model.

Install the dependencies:

pip install opencv-python pycocotools matplotlib onnxruntime onnx

Install SAM:

cd segment-anything

pip install -e .

Download a checkpoint:

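Download one of the three checkpoints listed below. The models are ordered from largest to smallest; with less than 8 GB of GPU memory, choose vit_b, which is what the code in this article uses.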

default or vit_h: ViT-H SAM model.

vit_l: ViT-L SAM model.

vit_b: ViT-B SAM model.
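
As a rough illustration of the choice above, the following sketch maps a GPU memory budget to a model type and to the checkpoint file to download. The file names and download prefix are the ones listed in the segment-anything README. `pick_model_type` and `checkpoint_url` are hypothetical helpers, and only the "below 8 GB means vit_b" rule comes from this article; the 16 GB boundary between vit_l and vit_h is an assumption made for the example.

```python
# Checkpoint file names as listed in the segment-anything README.
CHECKPOINT_FILES = {
    "vit_h": "sam_vit_h_4b8939.pth",
    "vit_l": "sam_vit_l_0b3195.pth",
    "vit_b": "sam_vit_b_01ec64.pth",
}
BASE_URL = "https://dl.fbaipublicfiles.com/segment_anything/"


def pick_model_type(vram_gb: float) -> str:
    """Hypothetical helper: choose a SAM variant from available GPU memory.

    Only the 'below 8 GB -> vit_b' rule comes from the article; the 16 GB
    boundary between vit_l and vit_h is an assumption for illustration.
    """
    if vram_gb < 8:
        return "vit_b"
    if vram_gb < 16:
        return "vit_l"
    return "vit_h"


def checkpoint_url(model_type: str) -> str:
    """Build the download URL for the given model type."""
    return BASE_URL + CHECKPOINT_FILES[model_type]


if __name__ == "__main__":
    model_type = pick_model_type(6)
    print(model_type, checkpoint_url(model_type))
```

The returned model type is the same string that is later used as the key into `sam_model_registry`, so the two settings stay in sync.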

【Inference test】

The notebooks directory of the source tree provides test code and images:

automatic_mask_generator_example.ipynb : automatically finds all masks in an image

predictor_example.ipynb : finds the mask for a region you select manually

onnx_model_example.ipynb : tooling for the ONNX format of the model

The tests below use .py scripts, converted from the notebooks as follows:

pip install -i https://pypi.tuna.tsinghua.edu.cn/simple jupyter

jupyter nbconvert --to script predictor_example.ipynb

jupyter nbconvert --to script automatic_mask_generator_example.ipynb

The test code needs a matplotlib version below 3.6; 3.5.3 is used here (for example, pip install matplotlib==3.5.3).

The two scripts differ mainly in which SAM class they import:

from segment_anything import sam_model_registry, SamPredictor

from segment_anything import sam_model_registry, SamAutomaticMaskGenerator

SamPredictor => takes the coordinates of a prompt point (input_point) and extracts the mask that contains that point, along with possible parent masks that enclose it.

    masks, scores, logits = predictor.predict(
        point_coords=input_point,
        point_labels=input_label,
        multimask_output=True,
    )
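
With multimask_output=True the call returns several candidate masks, each paired with a score that is the model's own estimate of mask quality. When only one mask is wanted, a common approach is to keep the highest-scoring candidate. The sketch below shows that selection on stand-in values; `best_mask_index` is a hypothetical helper, not part of the segment_anything package.

```python
def best_mask_index(scores):
    """Return the index of the highest score.

    Works on a plain list as well as on the 1-D array of scores
    returned by predictor.predict(...).
    """
    return max(range(len(scores)), key=lambda i: float(scores[i]))


if __name__ == "__main__":
    # Stand-in values for illustration; in the real script these come
    # from predictor.predict(...).
    scores = [0.81, 0.97, 0.93]
    masks = ["mask_0", "mask_1", "mask_2"]
    i = best_mask_index(scores)
    print(i, masks[i])
```

In the real script, `masks[best_mask_index(scores)]` would then be the single mask to display or save.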

The full script is as follows:

    import cv2
    import matplotlib.pyplot as plt
    import numpy as np
    from segment_anything import sam_model_registry, SamPredictor


    def show_mask(mask, ax, random_color=False):
        # Overlay the mask on the axes as a semi-transparent color layer
        if random_color:
            color = np.concatenate([np.random.random(3), np.array([0.6])], axis=0)
        else:
            color = np.array([30 / 255, 144 / 255, 255 / 255, 0.6])
        h, w = mask.shape[-2:]
        mask_image = mask.reshape(h, w, 1) * color.reshape(1, 1, -1)
        ax.imshow(mask_image)


    def show_points(coords, labels, ax, marker_size=375):
        # Label 1 marks a foreground point (green), label 0 a background point (red)
        pos_points = coords[labels == 1]
        neg_points = coords[labels == 0]
        ax.scatter(pos_points[:, 0], pos_points[:, 1], color='green', marker='*', s=marker_size, edgecolor='white',
                   linewidth=1.25)
        ax.scatter(neg_points[:, 0], neg_points[:, 1], color='red', marker='*', s=marker_size, edgecolor='white',
                   linewidth=1.25)


    if __name__ == '__main__':
        # Config: vit_h, vit_l, vit_b go from largest to smallest; pick vit_b for 8 GB of GPU memory
        sam_checkpoint = "C:\\workspace\\pycharm_workspace\\pytorch\\src\\segment-anything\\sam_vit_b_01ec64.pth"
        # vit_h (default), vit_l, vit_b
        model_type = "vit_b"

        # Instantiate the model
        sam = sam_model_registry[model_type](checkpoint=sam_checkpoint)
        sam.to(device="cuda")
        predictor = SamPredictor(sam)

        # OpenCV loads images as BGR; SAM expects RGB
        image = cv2.imread("C:\\workspace\\pycharm_workspace\\pytorch\\src\\segment-anything\\notebooks\\images\\truck.jpg")
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        predictor.set_image(image)

        # One foreground prompt point at pixel (x=500, y=375)
        input_point = np.array([[500, 375]])
        input_label = np.array([1])

        plt.figure(figsize=(10, 10))
        plt.imshow(image)
        show_points(input_point, input_label, plt.gca())
        plt.axis('on')
        plt.show()

        masks, scores, logits = predictor.predict(
            point_coords=input_point,
            point_labels=input_label,
            multimask_output=True,
        )

        # Loop over each extracted mask and display it with its score
        for i, (mask, score) in enumerate(zip(masks, scores)):
            plt.figure(figsize=(10, 10))
            plt.imshow(image)
            show_mask(mask, plt.gca())
            show_points(input_point, input_label, plt.gca())
            plt.title(f"Mask {i + 1}, Score: {score:.3f}", fontsize=18)
            plt.axis('off')
            plt.show()

SamAutomaticMaskGenerator => generates all possible masks directly.

masks = mask_generator.generate(image)
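
generate returns a list with one dict per mask. According to the SamAutomaticMaskGenerator docstring, each dict includes an `area` in pixels, a `bbox` in XYWH format, and a `predicted_iou` quality estimate, alongside the `segmentation` array. The sketch below shows one way to post-process such records; `filter_masks` and `xywh_to_xyxy` are hypothetical helpers, the thresholds are arbitrary, and the records are stand-ins with made-up values.

```python
def xywh_to_xyxy(bbox):
    """Convert an [x, y, width, height] box to (x0, y0, x1, y1)."""
    x, y, w, h = bbox
    return (x, y, x + w, y + h)


def filter_masks(anns, min_area=0, min_iou=0.0):
    """Hypothetical helper: drop small or low-confidence masks, largest first."""
    kept = [
        a for a in anns
        if a["area"] >= min_area and a["predicted_iou"] >= min_iou
    ]
    return sorted(kept, key=lambda a: a["area"], reverse=True)


if __name__ == "__main__":
    # Stand-in records with made-up values; real ones come from
    # mask_generator.generate(image) and also carry a "segmentation" array.
    anns = [
        {"area": 120, "bbox": [10, 20, 12, 10], "predicted_iou": 0.91},
        {"area": 9000, "bbox": [0, 0, 100, 90], "predicted_iou": 0.98},
        {"area": 5000, "bbox": [5, 5, 100, 50], "predicted_iou": 0.60},
    ]
    for a in filter_masks(anns, min_area=1000, min_iou=0.88):
        print(a["area"], xywh_to_xyxy(a["bbox"]))
```

Sorting by area in descending order mirrors what the article's show_anns function does before drawing, so that large masks are painted first and small ones stay visible on top.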

The full script is as follows:

    import sys
    sys.path.append("..")
    from segment_anything import sam_model_registry, SamAutomaticMaskGenerator
    import numpy as np
    import torch
    import matplotlib.pyplot as plt
    import cv2


    def show_anns(anns):
        if len(anns) == 0:
            return
        # Draw the largest masks first so smaller ones stay visible on top
        sorted_anns = sorted(anns, key=(lambda x: x['area']), reverse=True)
        ax = plt.gca()
        ax.set_autoscale_on(False)
        for ann in sorted_anns:
            m = ann['segmentation']
            # Fill an image with one random color and use the mask as its alpha channel
            img = np.ones((m.shape[0], m.shape[1], 3))
            color_mask = np.random.random((1, 3)).tolist()[0]
            for i in range(3):
                img[:, :, i] = color_mask[i]
            ax.imshow(np.dstack((img, m * 0.35)))


    if __name__ == '__main__':
        sam_checkpoint = "C:\\workspace\\pycharm_workspace\\pytorch\\src\\segment-anything\\sam_vit_b_01ec64.pth"
        model_type = "vit_b"
        device = "cuda"

        sam = sam_model_registry[model_type](checkpoint=sam_checkpoint)
        sam.to(device=device)
        mask_generator = SamAutomaticMaskGenerator(sam)

        # OpenCV loads images as BGR; SAM expects RGB
        image = cv2.imread('images/dog.jpg')
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

        masks = mask_generator.generate(image)
        print(len(masks))
        print(masks[0].keys())

        plt.figure(figsize=(20, 20))
        plt.imshow(image)
        show_anns(masks)
        plt.axis('off')
        plt.show()

【Exporting the model to ONNX】

The repository provides a notebook for ONNX conversion, which can be turned into a script:

jupyter nbconvert --to script onnx_model_example.ipynb

As before, just change the model type and checkpoint file:

checkpoint = "C:\\workspace\\pycharm_workspace\\pytorch\\src\\segment-anything\\sam_vit_b_01ec64.pth"

model_type = "vit_b"

This produces two ONNX files; the one with "quantized" in its name holds the quantized weights.

【ONNX deployment】Java

For code that deploys the ONNX model from Java, see this separate article:

http://t.csdn.cn/A07aE

Article source: https://www.toymoban.com/news/detail-526707.html
