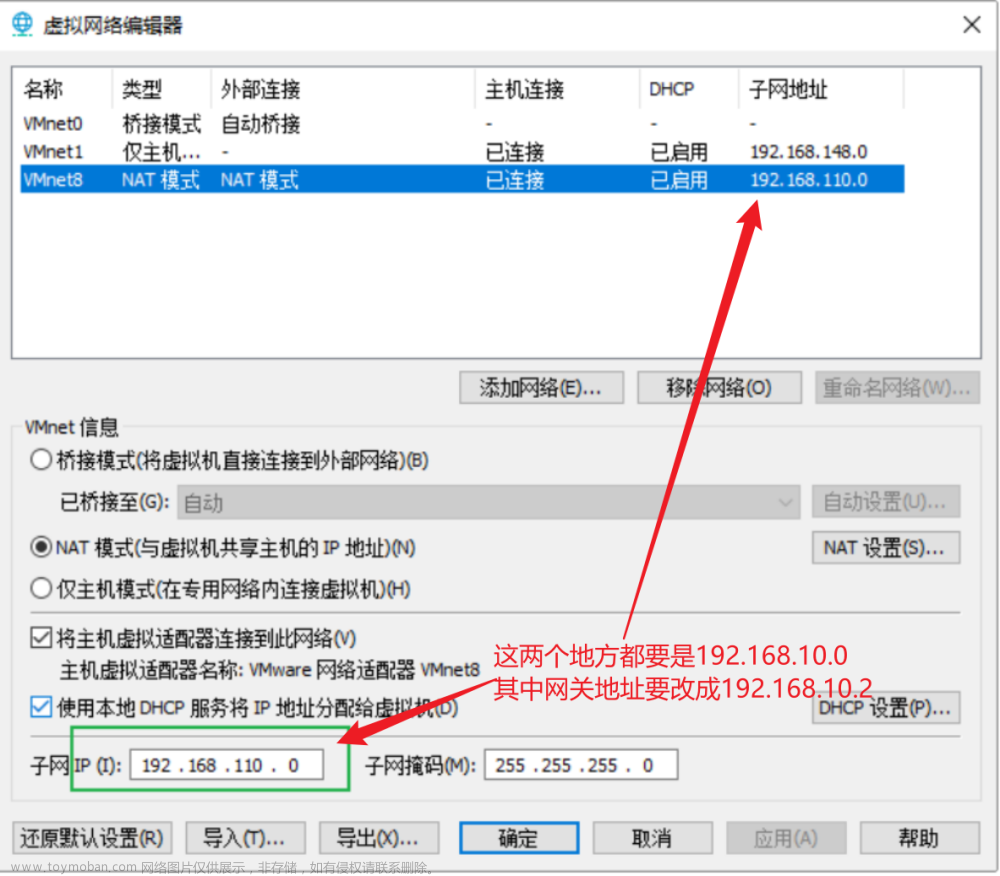

Address plan

hadoop-master 192.168.43.141

hadoop-slave1 192.168.43.142

hadoop-slave2 192.168.43.143

Download links for the core packages

Link: https://pan.baidu.com/s/1OwKLvZAaw8AtVaO_c6mvtw?pwd=1234

Extraction code: 1234

MySQL 5.6: wget http://repo.mysql.com/mysql-community-release-el6-5.noarch.rpm

Scala: wget https://downloads.lightbend.com/scala/2.12.4/scala-2.12.4.tgz

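Pre-installation steps (on all three hosts)

iptables -F #flush the firewall rules

service iptables save #save the firewall configuration

setenforce 0 #switch SELinux to permissive mode until the next reboot

vim /etc/selinux/config #disable SELinux permanently (takes effect after a reboot)

SELINUX=disabled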
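The same change to the SELinux config file can be made without opening an editor. The following is a minimal sketch, not part of the original procedure: `disable_selinux_in` is a hypothetical helper name, and the demonstration runs on a temporary file so the real /etc/selinux/config is left untouched.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite the SELINUX= line of the given config file.
disable_selinux_in() {
    local config_file="$1"
    # Only the line that starts with "SELINUX=" is rewritten;
    # "SELINUXTYPE=..." does not match because of the "=" in the pattern.
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$config_file" > "${config_file}.tmp" \
        && mv "${config_file}.tmp" "$config_file"
}

# Demonstration on a temporary file with typical contents.
demo_file="$(mktemp)"
printf '# sample\nSELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$demo_file"
disable_selinux_in "$demo_file"
grep '^SELINUX' "$demo_file"
rm -f "$demo_file"
```

On a real host the call would be `disable_selinux_in /etc/selinux/config`, run as root on each of the three machines.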

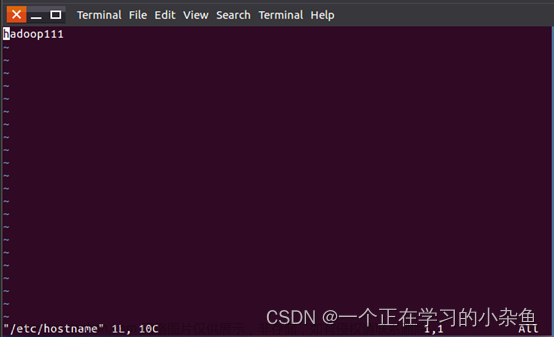

Set the hostname (on each of the three hosts, using that host's own name)

vim /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=hadoop-master

vim /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=hadoop-slave1

vim /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=hadoop-slave2

Edit the hosts file (on all three hosts)

vim /etc/hosts

192.168.43.141 hadoop-master

192.168.43.142 hadoop-slave1

192.168.43.143 hadoop-slave2
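Because the three entries must be added on every host, it can help to script the step so that running it twice does not create duplicates. This is a sketch under that assumption: `add_host_entry` is a hypothetical helper, and the demonstration writes to a temporary file rather than to /etc/hosts.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file unless that exact line exists.
add_host_entry() {
    local hosts_file="$1" ip="$2" name="$3"
    # -x matches the whole line, -F treats the text literally (dots are not wildcards).
    if ! grep -qxF "${ip} ${name}" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}

# Demonstration: adding the entries twice still leaves three lines.
demo_hosts="$(mktemp)"
for round in 1 2; do
    add_host_entry "$demo_hosts" 192.168.43.141 hadoop-master
    add_host_entry "$demo_hosts" 192.168.43.142 hadoop-slave1
    add_host_entry "$demo_hosts" 192.168.43.143 hadoop-slave2
done
cat "$demo_hosts"
rm -f "$demo_hosts"
```

To apply it for real, pass /etc/hosts as the first argument on each machine.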

SSH configuration (on all three hosts)

#Generate a key pair (public and private key)

ssh-keygen -t rsa

#Press Enter three times to accept the defaults

#Execute on Master

cat /root/.ssh/id_rsa.pub > /root/.ssh/authorized_keys

chmod 600 /root/.ssh/authorized_keys

#Append the slaves' public keys to the file on Master

ssh 192.168.43.142 cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

ssh 192.168.43.143 cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

#Copy the combined key file to the slave nodes

scp /root/.ssh/authorized_keys root@192.168.43.142:/root/.ssh/authorized_keys

scp /root/.ssh/authorized_keys root@192.168.43.143:/root/.ssh/authorized_keys
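Before moving on, it is worth confirming that key-based login really works, since the later scp and start-all.sh steps depend on it. The sketch below is an addition, not part of the original steps; `check_passwordless_ssh` is a hypothetical helper name, and it only relies on the standard OpenSSH options `BatchMode` and `ConnectTimeout`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report, per host, whether root can log in without a password.
check_passwordless_ssh() {
    local host failed=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of prompting for a password.
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "root@${host}" true; then
            echo "OK ${host}"
        else
            echo "FAILED ${host}"
            failed=1
        fi
    done
    # Non-zero exit status if any host could not be reached without a password.
    return "$failed"
}
```

A typical call on the master would be `check_passwordless_ssh 192.168.43.141 192.168.43.142 192.168.43.143`. The first connection to a new host may still ask to confirm the host key, which BatchMode treats as a failure, so connect once by hand first if that happens.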

Install the JDK (on the master host)

Extract the JDK

tar zxvf jdk1.8.0_111.tar.gz

#Execute on Master

#Configure the JDK environment variables

vim ~/.bashrc

export JAVA_HOME=/usr/local/src/jdk1.8.0_111

export JAVA_BIN=/usr/local/src/jdk1.8.0_111/bin

export JRE_HOME=/usr/local/src/jdk1.8.0_111/jre

export CLASSPATH=/usr/local/src/jdk1.8.0_111/jre/lib:/usr/local/src/jdk1.8.0_111/lib:/usr/local/src/jdk1.8.0_111/jre/lib/charsets.jar

export PATH=$PATH:$JAVA_HOME/bin:$JRE_HOME/bin

#Distribute the file to the other nodes

scp /root/.bashrc root@192.168.43.142:/root/.bashrc

scp /root/.bashrc root@192.168.43.143:/root/.bashrc

Copy the JDK to the slave hosts

#Execute on Master

scp -r /usr/local/src/jdk1.8.0_111 root@192.168.43.142:/usr/local/src/jdk1.8.0_111

scp -r /usr/local/src/jdk1.8.0_111 root@192.168.43.143:/usr/local/src/jdk1.8.0_111

Install Hadoop (on the master host)

tar zxvf hadoop-2.6.1.tar.gz

cd hadoop-2.6.1/etc/hadoop

vim hadoop-env.sh

export JAVA_HOME=/usr/local/src/jdk1.8.0_111

vim yarn-env.sh

export JAVA_HOME=/usr/local/src/jdk1.8.0_111

vim slaves

hadoop-master

hadoop-slave1

hadoop-slave2

vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://192.168.43.141:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/src/hadoop-2.6.1/tmp</value>

</property>

</configuration>

vim hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop-master:9001</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/src/hadoop-2.6.1/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/src/hadoop-2.6.1/dfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

</configuration>

vim mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

vim yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>hadoop-master:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>hadoop-master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>hadoop-master:8035</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>hadoop-master:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>hadoop-master:8088</value>

</property>

</configuration>
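After editing several XML files by hand, a quick way to catch typos is to read a setting back out of the file. The following is only a sketch: `get_hadoop_property` is a hypothetical helper, and it assumes the simple layout used in this article, where each `<name>` is followed by its `<value>` and neither element spans multiple lines. It is not a general XML parser.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of one property from a Hadoop *-site.xml file.
get_hadoop_property() {
    local xml_file="$1" key="$2"
    awk -v key="$key" '
        # Note when the <name> element for the requested key has been seen.
        index($0, "<name>" key "</name>") { found = 1 }
        # The first <value> element at or after that point holds the setting.
        found && match($0, /<value>[^<]*<\/value>/) {
            # Strip the 7-character "<value>" and 8-character "</value>" tags.
            print substr($0, RSTART + 7, RLENGTH - 15)
            exit
        }
    ' "$xml_file"
}
```

For example, `get_hadoop_property core-site.xml fs.defaultFS` should print the HDFS address configured earlier. Once Hadoop is on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the value that Hadoop itself resolves.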

#Create the temporary directory and the HDFS storage directories

mkdir /usr/local/src/hadoop-2.6.1/tmp

mkdir -p /usr/local/src/hadoop-2.6.1/dfs/name

mkdir -p /usr/local/src/hadoop-2.6.1/dfs/data

#Configure the environment variables

vim ~/.bashrc

export HADOOP_HOME=/usr/local/src/hadoop-2.6.1

export PATH=$PATH:$HADOOP_HOME/bin

#Reload the environment variables

source ~/.bashrc

#Copy the installation directory to the slaves

scp -r /usr/local/src/hadoop-2.6.1 root@192.168.43.142:/usr/local/src/hadoop-2.6.1

scp -r /usr/local/src/hadoop-2.6.1 root@192.168.43.143:/usr/local/src/hadoop-2.6.1

hadoop namenode -format #format the NameNode

./sbin/start-all.sh #start the cluster (run this from /usr/local/src/hadoop-2.6.1; mind the path)

jps #check which daemons are running

http://hadoop-master:8088 #YARN web monitoring page
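Reading the jps listing by eye is error-prone, so the check can be scripted. This sketch is an addition: `missing_daemons` is a hypothetical helper that reads jps output on standard input and prints every expected daemon that is absent. With the slaves file above, which also lists hadoop-master, the master would be expected to run NameNode, SecondaryNameNode, ResourceManager, DataNode and NodeManager.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `jps` output on stdin and print each expected
# daemon name (given as arguments) that does not appear in it.
missing_daemons() {
    local jps_output daemon
    jps_output="$(cat)"
    for daemon in "$@"; do
        # The second column of jps output is the process name; -x requires an
        # exact match, so "NameNode" is not satisfied by "SecondaryNameNode".
        if ! printf '%s\n' "$jps_output" | awk '{print $2}' | grep -qx "$daemon"; then
            echo "$daemon"
        fi
    done
}
```

On the master this could be run as `jps | missing_daemons NameNode SecondaryNameNode ResourceManager DataNode NodeManager`; empty output means nothing is missing. On a slave, pass only `DataNode NodeManager`.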

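Hive installation

MySQL

yum list installed | grep mysql #list the MySQL packages already installed

yum -y remove mysql-libs.x86_64 #remove the preinstalled package

rpm -ivh mysql-community-release-el6-5.noarch.rpm #install the MySQL repository package

yum repolist all | grep mysql #list the MySQL repositories

yum install mysql-community-server -y #install the server

chkconfig --list mysqld #check that the service was installed

chkconfig mysqld on #start the service on boot

service mysqld start #start the MySQL service

mysql -uroot -p #log in (the default password is empty) to change the password

use mysql; #work on the mysql system database

update user set password=password('123456') where user='root'; #set the account password (MySQL 5.6 stores the hash in the password column)

flush privileges; #reload the privilege tables

grant all privileges on *.* to 'root'@'%' identified by '123456' with grant option; #allow remote access (MySQL 5.6)

service mysqld stop #stop the MySQL service

service mysqld start #start the MySQL service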

tar zxvf apache-hive-1.2.2-bin.tar.gz

tar zxvf mysql-connector-java-5.1.45.tar.gz

#Copy the JDBC connector library into Hive's lib directory (Hive must be extracted first)

cp mysql-connector-java-5.1.45/mysql-connector-java-5.1.45-bin.jar /usr/local/src/apache-hive-1.2.2-bin/lib

#Edit the Hive configuration file

cd apache-hive-1.2.2-bin/conf

vim hive-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hadoop-master:3306/metastore?createDatabaseIfNotExist=true</value>

<description>JDBC connect string for a JDBC metastore; the metastore database is created if it does not exist</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password to use against metastore database</description>

</property>

</configuration>

#Add the environment variables (on all three hosts)

vim ~/.bashrc

export HIVE_HOME=/usr/local/src/apache-hive-1.2.2-bin

export PATH=$HIVE_HOME/bin:$PATH

#Reload the environment variables

source ~/.bashrc

#Copy the installation directory to the slaves

scp -r /usr/local/src/apache-hive-1.2.2-bin root@hadoop-slave1:/usr/local/src/apache-hive-1.2.2-bin

scp -r /usr/local/src/apache-hive-1.2.2-bin root@hadoop-slave2:/usr/local/src/apache-hive-1.2.2-bin

#Start Hive

hive

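#Fix for "Terminal initialization failed; falling back to unsupported"

cd $HADOOP_HOME/share/hadoop/yarn/lib

find jline* #look for the jline jar

rm -rf jline-2.12.jar #delete that jar

#The jar found here may not be version 2.12; it depends on the jline version you have installed. Afterwards, copy the jline jar from the Hive lib directory into the Hadoop directory

cp $HIVE_HOME/lib/jline-2.12.jar $HADOOP_HOME/share/hadoop/yarn/lib/

#Restart the Hadoop cluster and Hive

stop-all.sh

start-all.sh

#Start Hive

hive

#Check in MySQL that the metastore database has been created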
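The check can also be done from the shell instead of an interactive MySQL session. This is a sketch only: `metastore_exists` is a hypothetical helper, the database name defaults to the `metastore` used in the JDBC URL above, and the `mysql` client prompts for the root password because of `-p`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the given database (default: metastore) exists.
metastore_exists() {
    local db_name="${1:-metastore}"
    # -N omits the column header and -e runs a single statement, so the output
    # is just the matching database name, or nothing if it does not exist.
    mysql -uroot -p -N -e "SHOW DATABASES LIKE '${db_name}';" | grep -qx "$db_name"
}
```

Usage: `metastore_exists && echo "metastore database found"`. Hive only creates the database once it first connects to the metastore, so run the check after starting `hive` at least once.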

Spark installation

#Extract the packages

tar zxvf spark-1.6.3-bin-hadoop2.6.tgz

tar zxvf scala-2.12.4.tgz

#Edit the Spark configuration files

cd /usr/local/src/spark-1.6.3-bin-hadoop2.6/conf

vim spark-env.sh

export SCALA_HOME=/usr/local/src/scala-2.12.4

export JAVA_HOME=/usr/local/src/jdk1.8.0_111

export HADOOP_HOME=/usr/local/src/hadoop-2.6.1

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

SPARK_MASTER_IP=hadoop-master

SPARK_LOCAL_DIRS=/usr/local/src/spark-1.6.3-bin-hadoop2.6

SPARK_DRIVER_MEMORY=1G

vim slaves

hadoop-slave1

hadoop-slave2

#Copy the installation directories to the slaves

scp -r /usr/local/src/spark-1.6.3-bin-hadoop2.6 root@192.168.43.142:/usr/local/src/spark-1.6.3-bin-hadoop2.6

scp -r /usr/local/src/spark-1.6.3-bin-hadoop2.6 root@192.168.43.143:/usr/local/src/spark-1.6.3-bin-hadoop2.6

scp -r /usr/local/src/scala-2.12.4 root@192.168.43.142:/usr/local/src/scala-2.12.4

scp -r /usr/local/src/scala-2.12.4 root@192.168.43.143:/usr/local/src/scala-2.12.4
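The same pair of scp commands recurs for the JDK, Hadoop, Hive, Spark and Scala, so a small loop can replace them. The sketch below is an addition: `distribute_dir` is a hypothetical helper. It copies into the parent directory on the remote side, which is a deliberate difference from the commands above: the result is the same path on the slave whether or not the target directory already exists.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy a local directory to the same path on each listed host.
distribute_dir() {
    local dir="$1" host
    shift
    for host in "$@"; do
        # Copy into the parent directory so the directory keeps its own name
        # on the remote side, e.g. /usr/local/src/ receives scala-2.12.4.
        scp -r "$dir" "root@${host}:$(dirname "$dir")/"
    done
}
```

Usage on the master: `distribute_dir /usr/local/src/scala-2.12.4 192.168.43.142 192.168.43.143`.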

#Start the cluster

cd /usr/local/src/spark-1.6.3-bin-hadoop2.6/sbin

./start-all.sh

#Web monitoring page

hadoop-master:8080

#Local mode (run this and the following commands from /usr/local/src/spark-1.6.3-bin-hadoop2.6)

MASTER=local[2] ./bin/run-example SparkPi 10

#Cluster: Standalone

./bin/spark-submit --class org.apache.spark.examples.SparkPi --master spark://hadoop-master:7077 lib/spark-examples-1.6.3-hadoop2.6.0.jar 100

#Cluster: Spark on YARN

./bin/spark-submit --class org.apache.spark.examples.SparkPi --master yarn-cluster lib/spark-examples-1.6.3-hadoop2.6.0.jar 10
