
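ChatGPT is powered by OpenAI's most advanced models, gpt-3.5-turbo and gpt-4. We can use the OpenAI API to build our own applications with gpt-3.5-turbo or gpt-4.

Chat models take a series of messages as input and return an AI-written message as output.

This guide illustrates the chat format with a few example API calls.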

1. Import the openai library

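```python
# if needed, install the OpenAI Python library.
# The examples below use the pre-1.0 interface (openai.ChatCompletion, openai.Model, openai.error),
# which was removed in openai>=1.0, so pin the library below 1.0.
%pip install --upgrade "openai<1"
```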

Requirement already satisfied: openai in /Users/linxi/anaconda3/envs/pytorch18/lib/python3.8/site-packages (0.27.8)

Note: you may need to restart the kernel to use updated packages.

```python
# import the OpenAI Python library for calling the OpenAI API
import os

import openai

# set the API key; read it from an environment variable instead of hard-coding it in the notebook
openai.api_key = os.getenv("OPENAI_API_KEY")

model_list = openai.Model.list()  # list of models available to this API key

# print only the GPT-related model IDs
for model in model_list["data"]:
    if "gpt" in model["id"]:
        print(model["id"])
```

gpt-3.5-turbo-16k-0613

gpt-3.5-turbo-16k

gpt-3.5-turbo-0301

gpt-3.5-turbo

gpt-3.5-turbo-0613

2. An example chat API call

A chat API call has two required inputs:

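- model: the name of the model you want to use (e.g., gpt-3.5-turbo, gpt-4, gpt-3.5-turbo-0613, gpt-3.5-turbo-16k-0613)
- messages: a list of message objects, where each object has two required fields:
  - role: the role of the messenger (either system, user, or assistant)
  - content: the content of the message (e.g., Write me a beautiful poem)

Messages can also contain an optional name field, which gives the messenger a name, e.g., example-user, Alice, BlackbeardBot. Names may not contain spaces.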
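As a quick way to check a messages list against the rules above before sending it, here is a minimal sketch. The helper name `validate_messages` is hypothetical (it is not part of the openai library), and it checks only the constraints listed here: the two required fields, the three roles, and the no-spaces rule for name. The API may enforce further rules that this sketch does not know about.

```python
from typing import Dict, List

# the three roles described above
VALID_ROLES = {"system", "user", "assistant"}


def validate_messages(messages: List[Dict[str, str]]) -> List[str]:
    """Hypothetical helper: return a list of problems found (empty if none)."""
    problems = []
    for i, message in enumerate(messages):
        # both required fields must be present
        for field in ("role", "content"):
            if field not in message:
                problems.append(f"message {i}: missing required field '{field}'")
        role = message.get("role")
        if role is not None and role not in VALID_ROLES:
            problems.append(f"message {i}: unknown role '{role}'")
        # the optional name field may not contain spaces
        name = message.get("name")
        if name is not None and " " in name:
            problems.append(f"message {i}: name '{name}' contains a space")
    return problems


print(validate_messages([{"role": "user", "content": "Knock knock."}]))
print(validate_messages([{"role": "pirate", "name": "Black beard", "content": "Arr"}]))
```

An empty list means no problems were found; otherwise each entry names the offending message by its index.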

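As of June 2023, you can also optionally submit a list of functions that tell GPT whether it can generate JSON to feed into a function. For details, see the [documentation](https://platform.openai.com/docs/guides/gpt/function-calling), the [API reference](https://platform.openai.com/docs/api-reference/chat), or the OpenAI Cookbook guide How to call functions with chat models.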
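A minimal sketch of what a functions list looked like in the June 2023 function-calling format, and of how a returned function call can be read back. The function `get_current_weather` and its parameters are hypothetical, and `sample_message` is hand-written to mirror the shape of a function-call reply (a `function_call` object whose `arguments` field is a JSON-encoded string); it is not real API output. With the pre-1.0 library the list would be passed as `openai.ChatCompletion.create(model=..., messages=..., functions=functions)`, which is not executed here.

```python
import json

# a hypothetical function, described with a JSON Schema "parameters" object
functions = [
    {
        "name": "get_current_weather",
        "description": "Get the current weather for a city",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string", "description": "City name, e.g. Paris"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    }
]


def parse_function_call(message):
    """Return (name, arguments dict) if the assistant asked to call a function, else None."""
    call = message.get("function_call")
    if call is None:
        return None
    # the arguments arrive as a JSON-encoded string, so decode them
    return call["name"], json.loads(call["arguments"])


# hand-written example shaped like an assistant message that requests a function call
sample_message = {
    "role": "assistant",
    "content": None,
    "function_call": {"name": "get_current_weather", "arguments": '{"city": "Paris"}'},
}
print(parse_function_call(sample_message))
```

Because the arguments are generated by the model, they are not guaranteed to be valid JSON or to match the schema, so real code should be prepared for `json.loads` to raise.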

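Typically, a conversation starts with a system message that tells the assistant how to behave, followed by alternating user and assistant messages, but you are not required to follow this format.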
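The typical layout described above (an optional system message, then alternating user and assistant turns, ending with the new user message) can be assembled with a small helper. `build_conversation` is a hypothetical name used for illustration; it only produces the plain list of dicts that the messages parameter expects.

```python
from typing import Dict, List, Optional, Sequence, Tuple


def build_conversation(
    system: Optional[str],
    history: Sequence[Tuple[str, str]],
    new_user_message: str,
) -> List[Dict[str, str]]:
    """Hypothetical helper: system message, past (user, assistant) turns, then the new user message."""
    messages = []
    if system is not None:  # the system message is optional
        messages.append({"role": "system", "content": system})
    for user_text, assistant_text in history:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": new_user_message})
    return messages


# rebuilds the knock-knock conversation used in the example request below
knock_knock = build_conversation(
    "You are a helpful assistant.",
    [("Knock knock.", "Who's there?")],
    "Orange.",
)
print(knock_knock)
```

Passing `None` as the system prompt and an empty history gives the single-user-message form, which is also how a non-conversational instruction can be sent.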

Let's look at an example chat API call to see how the chat format works in practice.

```python
# Example OpenAI Python library request
MODEL = "gpt-3.5-turbo-16k-0613"

response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Knock knock."},
        {"role": "assistant", "content": "Who's there?"},
        {"role": "user", "content": "Orange."},
    ],
    temperature=0,
)

response
```

```
<OpenAIObject chat.completion id=chatcmpl-7avh8y3M0d47CBJGYQxUXQZnisQYP at 0x7fcc3044ce50> JSON: {
  "id": "chatcmpl-7avh8y3M0d47CBJGYQxUXQZnisQYP",
  "object": "chat.completion",
  "created": 1689035942,
  "model": "gpt-3.5-turbo-16k-0613",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "Orange who?"
      },
      "finish_reason": "stop"
    }
  ],
  "usage": {
    "prompt_tokens": 35,
    "completion_tokens": 3,
    "total_tokens": 38
  }
}
```

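The response object has a few fields:

- id: the ID of the request
- object: the type of object returned (e.g., chat.completion)
- created: the timestamp of the request
- model: the full name of the model used to generate the response
- usage: the number of tokens used to generate the reply, counting prompt, completion, and total
- choices: a list of completion objects (only one, unless you set n greater than 1)
  - message: the message object generated by the model, with role and content
  - finish_reason: the reason the model stopped generating text (either stop, or length if the max_tokens limit was reached)
  - index: the index of the completion in the list of choices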
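To make the field list concrete, the sketch below reads those fields from a plain dict that copies the JSON printed above. In the pre-1.0 library the returned OpenAIObject supports the same dict-style indexing, but using a plain dict keeps the example runnable offline; `summarize_response` is a hypothetical helper.

```python
# a plain-dict copy of the response printed above
example_response = {
    "id": "chatcmpl-7avh8y3M0d47CBJGYQxUXQZnisQYP",
    "object": "chat.completion",
    "created": 1689035942,
    "model": "gpt-3.5-turbo-16k-0613",
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": "Orange who?"},
            "finish_reason": "stop",
        }
    ],
    "usage": {"prompt_tokens": 35, "completion_tokens": 3, "total_tokens": 38},
}


def summarize_response(response):
    """Hypothetical helper: pull out the most commonly used fields of a chat completion."""
    choice = response["choices"][0]  # only one choice unless n > 1
    usage = response["usage"]
    return {
        "reply": choice["message"]["content"],
        "finish_reason": choice["finish_reason"],
        # "length" means the reply was cut off by max_tokens
        "truncated": choice["finish_reason"] == "length",
        "total_tokens": usage["total_tokens"],
    }


print(summarize_response(example_response))
```

Note that total_tokens is simply prompt_tokens plus completion_tokens (35 + 3 = 38 here).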

Extract just the reply with:

```python
response['choices'][0]['message']['content']
```

'Orange who?'

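Even non-conversational tasks can fit into the chat format, by placing the instruction in the first user message.

For example, to ask the model to explain asynchronous programming in the style of the pirate Blackbeard, we can structure the conversation as follows: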

```python
# example with a system message
response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Explain asynchronous programming in the style of the pirate Blackbeard."},
    ],
    temperature=0,
)

print(response['choices'][0]['message']['content'])
```

Arr, me matey! Let me tell ye a tale of asynchronous programming, in the style of the fearsome pirate Blackbeard!

Ye see, in the world of programming, there be times when ye need to perform tasks that take a long time to complete. These tasks might be fetchin' data from a faraway server, or performin' complex calculations. Now, in the olden days, programmers would wait patiently for these tasks to finish before movin' on to the next one. But that be a waste of time, me hearties!

Asynchronous programming be like havin' a crew of scallywags workin' on different tasks at the same time. Instead of waitin' for one task to finish before startin' the next, ye can set sail on multiple tasks at once! This be a mighty efficient way to get things done, especially when ye be dealin' with slow or unpredictable tasks.

In the land of JavaScript, we use a special technique called callbacks to achieve this. When ye start a task, ye pass along a callback function that be called once the task be completed. This way, ye can move on to other tasks while ye be waitin' for the first one to finish. It be like sendin' yer crewmates off on different missions, while ye be plannin' the next raid!

But beware, me mateys! Asynchronous programming can be a treacherous sea to navigate. Ye need to be careful with the order in which ye be executin' tasks, and make sure ye be handlin' any errors that might arise. It be a bit more complex than the traditional way of doin' things, but the rewards be worth it!

So, me hearties, if ye be lookin' to make yer programs faster and more efficient, give asynchronous programming a try. Just remember to keep a weather eye on yer code, and ye'll be sailin' the high seas of programming like a true pirate!

```python
# example without a system message
response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "user", "content": "Explain asynchronous programming in the style of the pirate Blackbeard."},
    ],
    temperature=0,
)

print(response['choices'][0]['message']['content'])
```

Arr, me hearties! Gather 'round and listen up, for I be tellin' ye about the mysterious art of asynchronous programming, in the style of the fearsome pirate Blackbeard!

Now, ye see, in the world of programming, there be times when we need to perform tasks that take a mighty long time to complete. These tasks might involve fetchin' data from the depths of the internet, or performin' complex calculations that would make even Davy Jones scratch his head.

In the olden days, we pirates used to wait patiently for each task to finish afore movin' on to the next one. But that be a waste of precious time, me hearties! We be pirates, always lookin' for ways to be more efficient and plunder more booty!

That be where asynchronous programming comes in, me mateys. It be a way to tackle multiple tasks at once, without waitin' for each one to finish afore movin' on. It be like havin' a crew of scallywags workin' on different tasks simultaneously, while ye be overseein' the whole operation.

Ye see, in asynchronous programming, we be breakin' down our tasks into smaller chunks called "coroutines." Each coroutine be like a separate pirate, workin' on its own task. When a coroutine be startin' its work, it don't wait for the task to finish afore movin' on to the next one. Instead, it be movin' on to the next task, lettin' the first one continue in the background.

Now, ye might be wonderin', "But Blackbeard, how be we know when a task be finished if we don't be waitin' for it?" Ah, me hearties, that be where the magic of callbacks and promises come in!

When a coroutine be startin' its work, it be attachin' a callback or a promise to the task. This be like leavin' a message in a bottle, tellin' the task to send a signal when it be finished. Once the task be done, it be sendin' a signal to the callback or fulfillin' the promise, lettin' the coroutine know that it be time to handle the results.

This way, me mateys, we be able to keep our ship sailin' smoothly, with multiple tasks bein' worked on at the same time. We be avoidin' the dreaded "blocking" that be slowin' us down, and instead, we be makin' the most of our time on the high seas of programming.

So, me hearties, remember this: asynchronous programming be like havin' a crew of efficient pirates, workin' on different tasks at once. It be all about breakin' down tasks into smaller chunks, attachin' callbacks or promises to 'em, and lettin' 'em run in the background while ye be movin' on to the next adventure.

Now, go forth, me mateys, and embrace the power of asynchronous programming! May ye plunder the treasures of efficiency and sail the seas of productivity! Arrrr!

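3. Tips for instructing gpt-3.5-turbo-0301

Best practices for instructing models may change from model version to model version. The advice that follows applies to gpt-3.5-turbo-0301 and may not apply to future models.

System messages

The system message can be used to prime the assistant with different personalities or behaviors, such as the role-play personas (a "catgirl", for instance) that people often ask for.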

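Be aware that gpt-3.5-turbo-0301 does not generally pay as much attention to the system message as gpt-4-0314 or gpt-3.5-turbo-0613. Therefore, for gpt-3.5-turbo-0301, I recommend placing important instructions in the user message instead. Some developers have found success in continually moving the system message near the end of the conversation, to keep the model's attention from drifting away as conversations get longer.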
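The workaround of re-inserting the system message near the end of the conversation can be sketched as below. This is one possible reading of that advice, assuming "near the end" means just before the latest message; the helper name `move_system_to_end` is hypothetical, and whether it helps should be checked on your own conversations. System messages that carry a name (such as the few-shot examples later in this guide) are left in place.

```python
from typing import Dict, List


def move_system_to_end(messages: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Hypothetical helper: put instruction-style system messages right before the last message.

    System messages that carry a "name" (e.g. few-shot examples) stay where they are.
    Returns a new list and leaves the input unchanged.
    """
    def is_instruction(m):
        return m["role"] == "system" and "name" not in m

    instructions = [m for m in messages if is_instruction(m)]
    others = [m for m in messages if not is_instruction(m)]
    if not others:
        return list(instructions)
    # keep the other messages in order, slotting the instructions in before the final one
    return others[:-1] + instructions + others[-1:]


conversation = [
    {"role": "system", "content": "Answer in one sentence."},
    {"role": "user", "content": "What is a fraction?"},
    {"role": "assistant", "content": "A fraction represents part of a whole."},
    {"role": "user", "content": "What is a denominator?"},
]
for m in move_system_to_end(conversation):
    print(m["role"], "->", m["content"])
```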

```python
# An example of a system message that primes the assistant to explain concepts in great depth
response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "system", "content": "You are a friendly and helpful teaching assistant. You explain concepts in great depth using simple terms, and you give examples to help people learn. At the end of each explanation, you ask a question to check for understanding"},
        {"role": "user", "content": "Can you explain how fractions work?"},
    ],
    temperature=0,
)

print(response["choices"][0]["message"]["content"])
```

Of course! Fractions are a way to represent parts of a whole. They are made up of two numbers: a numerator and a denominator. The numerator tells you how many parts you have, and the denominator tells you how many equal parts make up the whole.

Let's take an example to understand this better. Imagine you have a pizza that is divided into 8 equal slices. If you eat 3 slices, you can represent that as the fraction 3/8. Here, the numerator is 3 because you ate 3 slices, and the denominator is 8 because the whole pizza is divided into 8 slices.

Fractions can also be used to represent numbers less than 1. For example, if you eat half of a pizza, you can write it as 1/2. Here, the numerator is 1 because you ate one slice, and the denominator is 2 because the whole pizza is divided into 2 equal parts.

Now, let's practice! If you eat 4 out of 6 slices of a pizza, how would you write that as a fraction?

```python
# An example of a system message that primes the assistant to give brief, to-the-point answers
response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "system", "content": "You are a laconic assistant. You reply with brief, to-the-point answers with no elaboration."},
        {"role": "user", "content": "Can you explain how fractions work?"},
    ],
    temperature=0,
)

print(response["choices"][0]["message"]["content"])
```

Fractions represent parts of a whole. They have a numerator (top number) and a denominator (bottom number).

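Few-shot prompting

In some cases, it's easier to show the model what you want than to tell it. Giving a few examples (few-shot prompting) often gets you closer to the desired output than describing it directly.

One way to show the model what you want is with faked example messages.

For example: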

```python
# An example of a faked few-shot conversation to prime the model into translating business jargon to simpler speech
response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "system", "content": "You are a helpful, pattern-following assistant."},
        {"role": "user", "content": "Help me translate the following corporate jargon into plain English."},
        {"role": "assistant", "content": "Sure, I'd be happy to!"},
        {"role": "user", "content": "New synergies will help drive top-line growth."},
        {"role": "assistant", "content": "Things working well together will increase revenue."},
        {"role": "user", "content": "Let's circle back when we have more bandwidth to touch base on opportunities for increased leverage."},
        {"role": "assistant", "content": "Let's talk later when we're less busy about how to do better."},
        {"role": "user", "content": "This late pivot means we don't have time to boil the ocean for the client deliverable."},
    ],
    temperature=0,
)

print(response["choices"][0]["message"]["content"])
```

This sudden change in direction means we don't have enough time to complete the entire project for the client.
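If you reuse this pattern, the faked exchange can be generated from a list of (input, output) pairs. A minimal sketch; `few_shot_messages` is a hypothetical helper that reproduces the message list of the request above, with each example encoded as a faked user turn followed by a faked assistant turn.

```python
from typing import Dict, List, Sequence, Tuple


def few_shot_messages(
    system: str,
    instruction: str,
    acknowledgement: str,
    examples: Sequence[Tuple[str, str]],
    query: str,
) -> List[Dict[str, str]]:
    """Hypothetical helper: encode examples as faked user/assistant turns."""
    messages = [
        {"role": "system", "content": system},
        {"role": "user", "content": instruction},
        {"role": "assistant", "content": acknowledgement},
    ]
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # the real request comes last, as a normal user message
    messages.append({"role": "user", "content": query})
    return messages


jargon_messages = few_shot_messages(
    "You are a helpful, pattern-following assistant.",
    "Help me translate the following corporate jargon into plain English.",
    "Sure, I'd be happy to!",
    [
        ("New synergies will help drive top-line growth.",
         "Things working well together will increase revenue."),
        ("Let's circle back when we have more bandwidth to touch base on opportunities for increased leverage.",
         "Let's talk later when we're less busy about how to do better."),
    ],
    "This late pivot means we don't have time to boil the ocean for the client deliverable.",
)
print([m["role"] for m in jargon_messages])
```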

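To help clarify that the example messages are not part of a real conversation and should not be referred back to by the model, you can try setting the name field of system messages to example_user and example_assistant.

Transforming the few-shot example above, we could write: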

```python
# The business jargon translation example, but with example names for the example messages
response = openai.ChatCompletion.create(
    model=MODEL,
    messages=[
        {"role": "system", "content": "You are a helpful, pattern-following assistant that translates corporate jargon into plain English."},
        {"role": "system", "name": "example_user", "content": "New synergies will help drive top-line growth."},
        {"role": "system", "name": "example_assistant", "content": "Things working well together will increase revenue."},
        {"role": "system", "name": "example_user", "content": "Let's circle back when we have more bandwidth to touch base on opportunities for increased leverage."},
        {"role": "system", "name": "example_assistant", "content": "Let's talk later when we're less busy about how to do better."},
        {"role": "user", "content": "This late pivot means we don't have time to boil the ocean for the client deliverable."},
    ],
    temperature=0,
)

print(response["choices"][0]["message"]["content"])
```

This sudden change in direction means we don't have enough time to complete the entire project for the client.
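The name-based variant can be produced the same way. In this sketch (`few_shot_with_names` is a hypothetical helper) each example becomes a pair of system messages tagged example_user and example_assistant, as in the request above, and only the real query uses the user role.

```python
from typing import Dict, List, Sequence, Tuple


def few_shot_with_names(
    system: str, examples: Sequence[Tuple[str, str]], query: str
) -> List[Dict[str, str]]:
    """Hypothetical helper: mark examples with name fields so they read as examples, not dialogue."""
    messages = [{"role": "system", "content": system}]
    for example_input, example_output in examples:
        messages.append({"role": "system", "name": "example_user", "content": example_input})
        messages.append({"role": "system", "name": "example_assistant", "content": example_output})
    # the real request is the only user message
    messages.append({"role": "user", "content": query})
    return messages


named = few_shot_with_names(
    "You are a helpful, pattern-following assistant that translates corporate jargon into plain English.",
    [
        ("New synergies will help drive top-line growth.",
         "Things working well together will increase revenue."),
        ("Let's circle back when we have more bandwidth to touch base on opportunities for increased leverage.",
         "Let's talk later when we're less busy about how to do better."),
    ],
    "This late pivot means we don't have time to boil the ocean for the client deliverable.",
)
print([m.get("name", m["role"]) for m in named])
```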

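Not every attempt at engineering a conversation will succeed at first.

If your first attempts fail, don't be afraid to experiment with different ways of priming or conditioning the model.

For example, one developer discovered an increase in accuracy when they inserted a user message that said "Great job so far, these have been perfect", which helped condition the model into providing higher-quality responses.

For more ideas on how to improve the reliability of the models, consider reading the guide on [techniques to improve reliability](…/ Techniques_to_to_improve_reliability.md). It was written for non-chat models, but many of its principles still apply.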

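4. Counting tokens

When you submit a request, the API transforms the messages into a sequence of tokens, and usage is billed by the number of tokens consumed.

The number of tokens used affects: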

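- the cost of the request
- the time it takes to generate the response
- when the reply gets cut off from hitting the maximum token limit (4,096 for gpt-3.5-turbo or 8,192 for gpt-4)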
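Because the prompt and the reply share the same limit, the room left for the reply is the limit minus the prompt tokens. A small arithmetic sketch using the 4,096 and 8,192 limits quoted above; these figures are for the base gpt-3.5-turbo and gpt-4 models of mid-2023 (variants such as gpt-3.5-turbo-16k have larger windows), and the `CONTEXT_LIMITS` table and `max_reply_tokens` helper are illustrative names, not library APIs.

```python
# context-window sizes quoted in the text (mid-2023 values; check current docs before relying on them)
CONTEXT_LIMITS = {"gpt-3.5-turbo": 4096, "gpt-4": 8192}


def max_reply_tokens(model: str, prompt_tokens: int) -> int:
    """Tokens left for the reply once the prompt is counted (prompt + reply share the window)."""
    limit = CONTEXT_LIMITS[model]
    if prompt_tokens >= limit:
        raise ValueError(f"prompt uses {prompt_tokens} tokens, limit for {model} is {limit}")
    return limit - prompt_tokens


# e.g. a 35-token prompt, the size of the knock-knock request above
print(max_reply_tokens("gpt-3.5-turbo", 35))
```

A reply that needs more than this budget is cut off and comes back with finish_reason set to length.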

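You can use the following function to count the number of tokens that a list of messages will use.

Note that the exact way tokens are counted from messages may change from model to model. Treat the counts from the function below as an estimate, not a timeless guarantee.

In particular, requests that use the optional functions input will consume extra tokens on top of the estimates calculated below.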

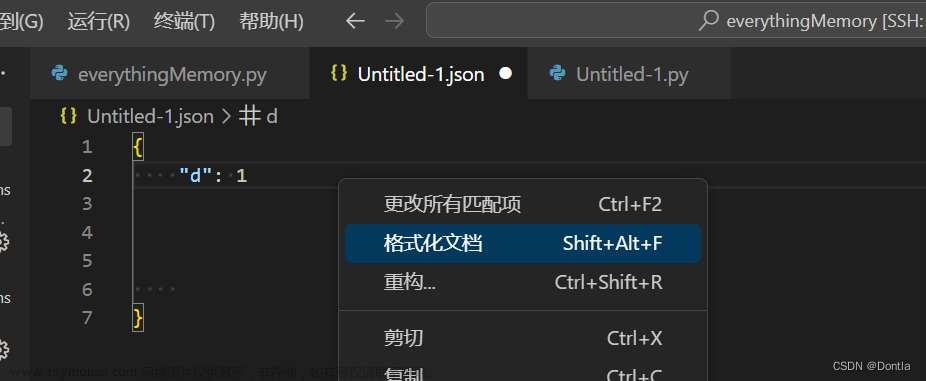

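Read more about counting tokens in the OpenAI Cookbook guide How to count tokens with tiktoken. Counting requires the tiktoken library, so install it first.

Article source: https://www.toymoban.com/news/detail-544506.html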

```python
!pip install --upgrade tiktoken
```

Collecting tiktoken

Successfully installed tiktoken-0.4.0

```python
import tiktoken


def num_tokens_from_messages(messages, model="gpt-3.5-turbo-0613"):
    """Return the number of tokens used by a list of messages."""
    try:
        encoding = tiktoken.encoding_for_model(model)
    except KeyError:
        print("Warning: model not found. Using cl100k_base encoding.")
        encoding = tiktoken.get_encoding("cl100k_base")
    if model in {
        "gpt-3.5-turbo-0613",
        "gpt-3.5-turbo-16k-0613",
        "gpt-4-0314",
        "gpt-4-32k-0314",
        "gpt-4-0613",
        "gpt-4-32k-0613",
    }:
        tokens_per_message = 3
        tokens_per_name = 1
    elif model == "gpt-3.5-turbo-0301":
        tokens_per_message = 4  # every message follows <|start|>{role/name}\n{content}<|end|>\n
        tokens_per_name = -1  # if there's a name, the role is omitted
    elif "gpt-3.5-turbo" in model:
        print("Warning: gpt-3.5-turbo may update over time. Returning num tokens assuming gpt-3.5-turbo-0613.")
        return num_tokens_from_messages(messages, model="gpt-3.5-turbo-0613")
    elif "gpt-4" in model:
        print("Warning: gpt-4 may update over time. Returning num tokens assuming gpt-4-0613.")
        return num_tokens_from_messages(messages, model="gpt-4-0613")
    else:
        raise NotImplementedError(
            f"""num_tokens_from_messages() is not implemented for model {model}. See https://github.com/openai/openai-python/blob/main/chatml.md for information on how messages are converted to tokens."""
        )
    num_tokens = 0
    for message in messages:
        num_tokens += tokens_per_message
        for key, value in message.items():
            num_tokens += len(encoding.encode(value))
            if key == "name":
                num_tokens += tokens_per_name
    num_tokens += 3  # every reply is primed with <|start|>assistant<|message|>
    return num_tokens
```
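To see where the fixed overheads in num_tokens_from_messages come from without installing tiktoken, the sketch below repeats the same bookkeeping with a stand-in tokenizer that counts one token per whitespace-separated word. That tokenizer (`toy_encode`) and the `toy_num_tokens` wrapper are assumptions for illustration only; they do not match cl100k_base, so only the overhead arithmetic (per-message and per-name constants plus 3 tokens to prime the reply) carries over.

```python
def toy_encode(text: str) -> list:
    """Stand-in tokenizer (NOT cl100k_base): one 'token' per whitespace-separated word."""
    return text.split()


def toy_num_tokens(messages, tokens_per_message=3, tokens_per_name=1):
    """Same accounting as num_tokens_from_messages, but with toy_encode."""
    num_tokens = 0
    for message in messages:
        num_tokens += tokens_per_message  # fixed per-message overhead
        for key, value in message.items():
            num_tokens += len(toy_encode(value))  # role, name and content are all encoded
            if key == "name":
                num_tokens += tokens_per_name
    return num_tokens + 3  # reply priming


msgs = [
    {"role": "system", "content": "Be brief."},
    {"role": "user", "name": "alice", "content": "Hello there"},
]
print(toy_num_tokens(msgs))
```

With the 0613 constants the first message costs 3 + 1 + 2 = 6, the second 3 + 1 + 1 + 1 + 2 = 8, and the reply priming adds 3, for 17 in total.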

Next, we use the function above to compute how many tokens different models consume for the same input, and compare the results with the counts reported by the API.

```python
# let's verify the function above matches the OpenAI API response
import openai

example_messages = [
    {
        "role": "system",
        "content": "You are a helpful, pattern-following assistant that translates corporate jargon into plain English.",
    },
    {
        "role": "system",
        "name": "example_user",
        "content": "New synergies will help drive top-line growth.",
    },
    {
        "role": "system",
        "name": "example_assistant",
        "content": "Things working well together will increase revenue.",
    },
    {
        "role": "system",
        "name": "example_user",
        "content": "Let's circle back when we have more bandwidth to touch base on opportunities for increased leverage.",
    },
    {
        "role": "system",
        "name": "example_assistant",
        "content": "Let's talk later when we're less busy about how to do better.",
    },
    {
        "role": "user",
        "content": "This late pivot means we don't have time to boil the ocean for the client deliverable.",
    },
]

for model in [
    "gpt-3.5-turbo-0301",
    "gpt-3.5-turbo-0613",
    "gpt-3.5-turbo",
    "gpt-4-0314",
    "gpt-4-0613",
    "gpt-4",
]:
    print(model)
    # example token count from the function defined above
    print(f"{num_tokens_from_messages(example_messages, model)} prompt tokens counted by num_tokens_from_messages().")
    # example token count from the OpenAI API
    try:
        response = openai.ChatCompletion.create(
            model=model,
            messages=example_messages,
            temperature=0,
            max_tokens=1,  # we're only counting input tokens here, so let's not waste tokens on the output
        )
        print(f'{response["usage"]["prompt_tokens"]} prompt tokens counted by the OpenAI API.')
        print()
    except openai.error.OpenAIError as e:
        print(e)
        print()
```

gpt-3.5-turbo-0301

127 prompt tokens counted by num_tokens_from_messages().

127 prompt tokens counted by the OpenAI API.

gpt-3.5-turbo-0613

129 prompt tokens counted by num_tokens_from_messages().

129 prompt tokens counted by the OpenAI API.

gpt-3.5-turbo

Warning: gpt-3.5-turbo may update over time. Returning num tokens assuming gpt-3.5-turbo-0613.

129 prompt tokens counted by num_tokens_from_messages().

129 prompt tokens counted by the OpenAI API.

gpt-4-0314

129 prompt tokens counted by num_tokens_from_messages().

The model: `gpt-4-0314` does not exist

gpt-4-0613

129 prompt tokens counted by num_tokens_from_messages().

The model: `gpt-4-0613` does not exist

gpt-4

Warning: gpt-4 may update over time. Returning num tokens assuming gpt-4-0613.

129 prompt tokens counted by num_tokens_from_messages().

The model: `gpt-4` does not exist
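The 2-token gap between gpt-3.5-turbo-0301 (127) and gpt-3.5-turbo-0613 (129) comes only from the per-message constants in num_tokens_from_messages: both models use the cl100k_base encoding, so the encoded role, name and content strings cost the same under either. example_messages has 6 messages, 4 of which carry a name. A quick check of that arithmetic, using only numbers from the code and output above:

```python
# counts taken from example_messages above
n_messages = 6  # entries in example_messages
n_names = 4     # entries that carry a "name" field


def overhead(tokens_per_message, tokens_per_name):
    # per-message cost + per-name adjustment + 3 tokens priming the reply
    return n_messages * tokens_per_message + n_names * tokens_per_name + 3


overhead_0301 = overhead(4, -1)  # constants from the gpt-3.5-turbo-0301 branch
overhead_0613 = overhead(3, 1)   # constants from the gpt-3.5-turbo-0613 branch
content_tokens = 127 - overhead_0301  # tokens spent on the encoded strings themselves

print(overhead_0301, overhead_0613, content_tokens)
print(content_tokens + overhead_0613)  # reproduces the 129 reported for gpt-3.5-turbo-0613
```

The overheads come out to 23 and 25 tokens, leaving 104 tokens of encoded text in both cases.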

That concludes this article in the "Python Meets OpenAI" tutorial series, part 1: how to format inputs to ChatGPT models.