GoogLeNet卷积神经网络-笔记

GoogLeNet是2014年ImageNet比赛的冠军,

它的主要特点是网络不仅有深度,

还在横向上具有“宽度”。

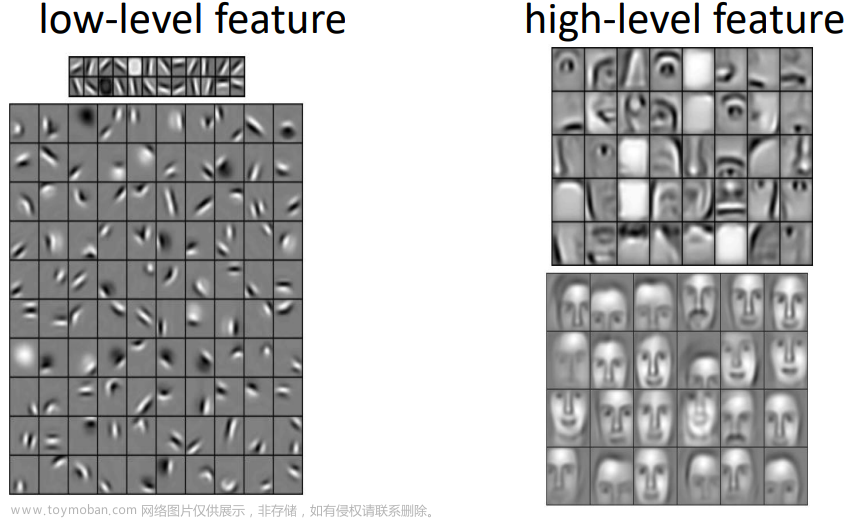

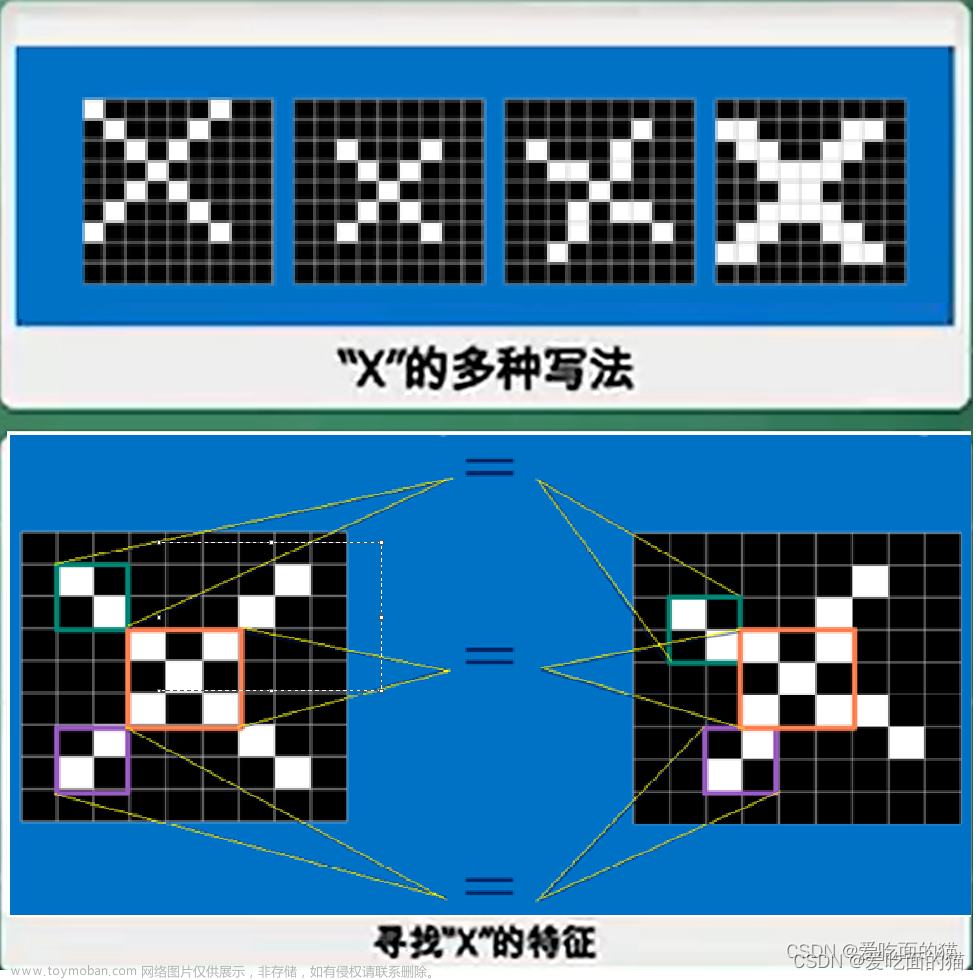

由于图像信息在空间尺寸上的巨大差异,

如何选择合适的卷积核来提取特征就显得比较困难了。

空间分布范围更广的图像信息适合用较大的卷积核来提取其特征;

而空间分布范围较小的图像信息则适合用较小的卷积核来提取其特征。

为了解决这个问题,

GoogLeNet提出了一种被称为Inception模块的方案。

Inception模块结构图

GoogleNet模型网络结构图

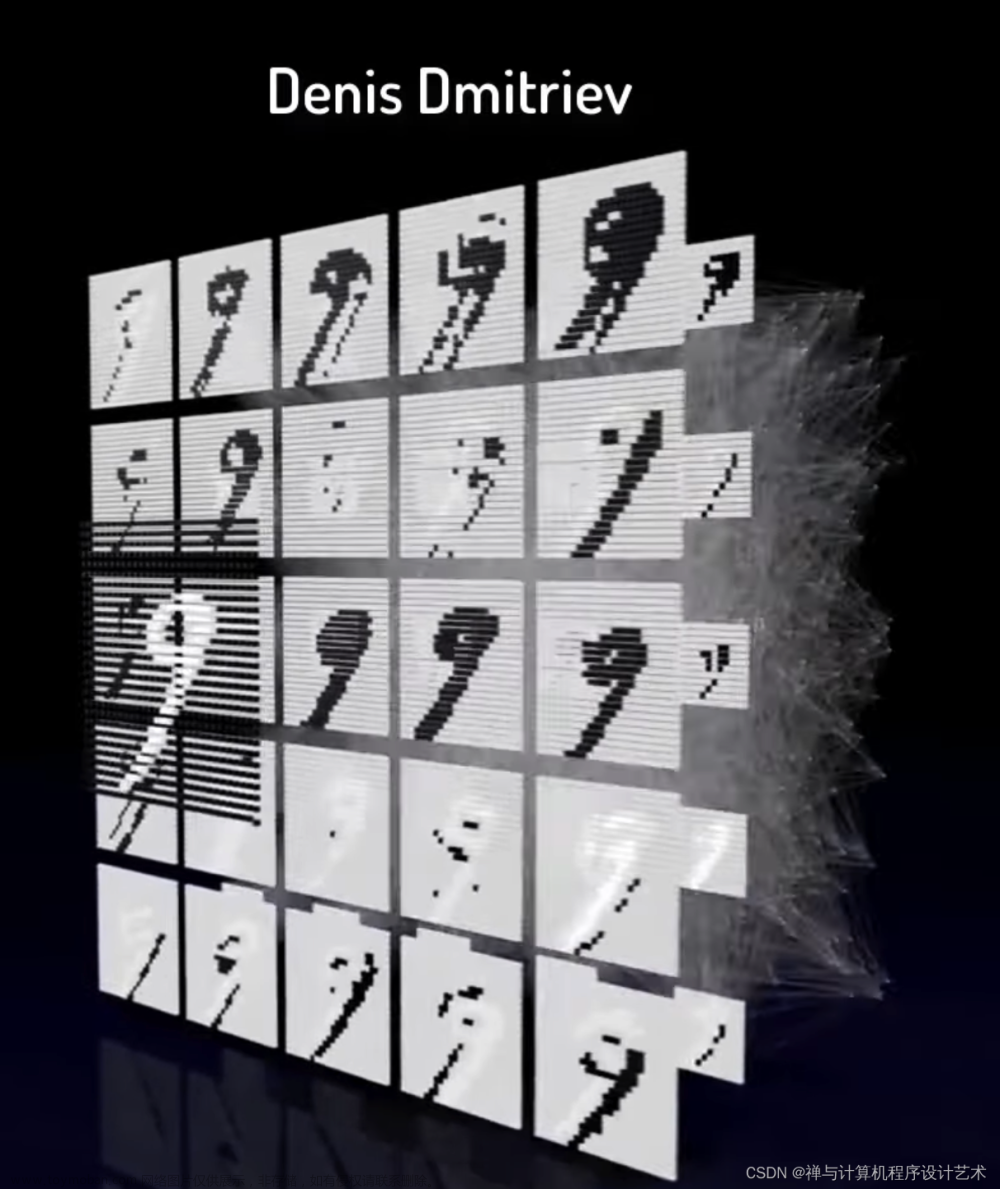

测试结果为:

通过运行结果可以发现,使用GoogLeNet在眼疾筛查数据集iChallenge-PM上,loss能有效的下降,经过5个epoch的训练,在验证集上的准确率可以达到95%左右。

实测准确率为0.95左右

[validation] accuracy/loss: 0.9575/0.1915

[validation] accuracy/loss: 0.9500/0.2322

#输出结果:

PS E:\project\python> & D:/ProgramData/Anaconda3/python.exe e:/project/python/PM/GoogLeNet_PM.py

W0803 18:25:55.522811 8308 gpu_resources.cc:61] Please NOTE: device: 0, GPU Compute Capability: 6.1, Driver API Version: 12.2, Runtime API Version: 10.2

W0803 18:25:55.532805 8308 gpu_resources.cc:91] device: 0, cuDNN Version: 7.6.

116

start training ...

epoch: 0, batch_id: 0, loss is: 0.6920

epoch: 0, batch_id: 20, loss is: 0.8546

[validation] accuracy/loss: 0.7100/0.5381

epoch: 1, batch_id: 0, loss is: 0.6177

epoch: 1, batch_id: 20, loss is: 0.4581

[validation] accuracy/loss: 0.9400/0.3120

epoch: 2, batch_id: 0, loss is: 0.2858

epoch: 2, batch_id: 20, loss is: 0.5234

[validation] accuracy/loss: 0.5975/0.5757

epoch: 3, batch_id: 0, loss is: 0.6338

epoch: 3, batch_id: 20, loss is: 0.3180

[validation] accuracy/loss: 0.9575/0.1915

epoch: 4, batch_id: 0, loss is: 0.1087

epoch: 4, batch_id: 20, loss is: 0.3728

[validation] accuracy/loss: 0.9500/0.2322

PS E:\project\python>

'''

GoogleNet网模型中子图层Shape[N,C,H,W],w参数,b参数[Cout]

PS E:\project\python> & D:/ProgramData/Anaconda3/python.exe e:/project/python/PM/GoogLeNet_PM.py

W0803 20:27:47.303915 15396 gpu_resources.cc:61] Please NOTE: device: 0, GPU Compute Capability: 6.1, Driver API Version: 12.2, Runtime API Version: 10.2

W0803 20:27:47.311910 15396 gpu_resources.cc:91] device: 0, cuDNN Version: 7.6.

116

(10, 3, 224, 224)

[10, 3, 224, 224]

conv2d_0 [10, 64, 224, 224] [64, 3, 7, 7] [64]

max_pool2d_0 [10, 64, 112, 112]

conv2d_1 [10, 64, 112, 112] [64, 64, 1, 1] [64]

conv2d_2 [10, 192, 112, 112] [192, 64, 3, 3] [192]

max_pool2d_1 [10, 192, 56, 56]

print block3-1:

conv2d_3 [10, 64, 56, 56] [64, 192, 1, 1] [64]

conv2d_4 [10, 96, 56, 56] [96, 192, 1, 1] [96]

conv2d_5 [10, 128, 56, 56] [128, 96, 3, 3] [128]

conv2d_6 [10, 16, 56, 56] [16, 192, 1, 1] [16]

conv2d_7 [10, 32, 56, 56] [32, 16, 5, 5] [32]

max_pool2d_2 [10, 192, 56, 56]

conv2d_8 [10, 32, 56, 56] [32, 192, 1, 1] [32]

print block3-2:

conv2d_9 [10, 128, 56, 56] [128, 256, 1, 1] [128]

conv2d_10 [10, 128, 56, 56] [128, 256, 1, 1] [128]

conv2d_11 [10, 192, 56, 56] [192, 128, 3, 3] [192]

conv2d_12 [10, 32, 56, 56] [32, 256, 1, 1] [32]

conv2d_13 [10, 96, 56, 56] [96, 32, 5, 5] [96]

max_pool2d_3 [10, 256, 56, 56]

conv2d_14 [10, 64, 56, 56] [64, 256, 1, 1] [64]

max_pool2d_4 [10, 480, 28, 28]

print block4_1:

conv2d_15 [10, 192, 28, 28] [192, 480, 1, 1] [192]

conv2d_16 [10, 96, 28, 28] [96, 480, 1, 1] [96]

conv2d_17 [10, 208, 28, 28] [208, 96, 3, 3] [208]

conv2d_18 [10, 16, 28, 28] [16, 480, 1, 1] [16]

conv2d_19 [10, 48, 28, 28] [48, 16, 5, 5] [48]

max_pool2d_5 [10, 480, 28, 28]

conv2d_20 [10, 64, 28, 28] [64, 480, 1, 1] [64]

print block4_2:

conv2d_21 [10, 160, 28, 28] [160, 512, 1, 1] [160]

conv2d_22 [10, 112, 28, 28] [112, 512, 1, 1] [112]

conv2d_23 [10, 224, 28, 28] [224, 112, 3, 3] [224]

conv2d_24 [10, 24, 28, 28] [24, 512, 1, 1] [24]

conv2d_25 [10, 64, 28, 28] [64, 24, 5, 5] [64]

max_pool2d_6 [10, 512, 28, 28]

conv2d_26 [10, 64, 28, 28] [64, 512, 1, 1] [64]

print block4_3:

conv2d_27 [10, 128, 28, 28] [128, 512, 1, 1] [128]

conv2d_28 [10, 128, 28, 28] [128, 512, 1, 1] [128]

conv2d_29 [10, 256, 28, 28] [256, 128, 3, 3] [256]

conv2d_30 [10, 24, 28, 28] [24, 512, 1, 1] [24]

conv2d_31 [10, 64, 28, 28] [64, 24, 5, 5] [64]

max_pool2d_7 [10, 512, 28, 28]

conv2d_32 [10, 64, 28, 28] [64, 512, 1, 1] [64]

print block4_4:

conv2d_33 [10, 112, 28, 28] [112, 512, 1, 1] [112]

conv2d_34 [10, 144, 28, 28] [144, 512, 1, 1] [144]

conv2d_35 [10, 288, 28, 28] [288, 144, 3, 3] [288]

conv2d_36 [10, 32, 28, 28] [32, 512, 1, 1] [32]

conv2d_37 [10, 64, 28, 28] [64, 32, 5, 5] [64]

max_pool2d_8 [10, 512, 28, 28]

conv2d_38 [10, 64, 28, 28] [64, 512, 1, 1] [64]

print block4_5:

conv2d_39 [10, 256, 28, 28] [256, 528, 1, 1] [256]

conv2d_40 [10, 160, 28, 28] [160, 528, 1, 1] [160]

conv2d_41 [10, 320, 28, 28] [320, 160, 3, 3] [320]

conv2d_42 [10, 32, 28, 28] [32, 528, 1, 1] [32]

conv2d_43 [10, 128, 28, 28] [128, 32, 5, 5] [128]

max_pool2d_9 [10, 528, 28, 28]

conv2d_44 [10, 128, 28, 28] [128, 528, 1, 1] [128]

max_pool2d_10 [10, 832, 14, 14]

print block5_1:

conv2d_45 [10, 256, 14, 14] [256, 832, 1, 1] [256]

conv2d_46 [10, 160, 14, 14] [160, 832, 1, 1] [160]

conv2d_47 [10, 320, 14, 14] [320, 160, 3, 3] [320]

conv2d_48 [10, 32, 14, 14] [32, 832, 1, 1] [32]

conv2d_49 [10, 128, 14, 14] [128, 32, 5, 5] [128]

max_pool2d_11 [10, 832, 14, 14]

conv2d_50 [10, 128, 14, 14] [128, 832, 1, 1] [128]

print block5_2:

conv2d_51 [10, 384, 14, 14] [384, 832, 1, 1] [384]

conv2d_52 [10, 192, 14, 14] [192, 832, 1, 1] [192]

conv2d_53 [10, 384, 14, 14] [384, 192, 3, 3] [384]

conv2d_54 [10, 48, 14, 14] [48, 832, 1, 1] [48]

conv2d_55 [10, 128, 14, 14] [128, 48, 5, 5] [128]

max_pool2d_12 [10, 832, 14, 14]

conv2d_56 [10, 128, 14, 14] [128, 832, 1, 1] [128]

adaptive_avg_pool2d_0 [10, 1024, 1, 1]

linear_0 [10, 1] [1024, 1] [1]

PS E:\project\python>

测试源代码如下所示:文章来源:https://www.toymoban.com/news/detail-629216.html

# GoogLeNet模型代码

#GoogLeNet卷积神经网络-笔记

import numpy as np

import paddle

from paddle.nn import Conv2D, MaxPool2D, AdaptiveAvgPool2D, Linear

## 组网

import paddle.nn.functional as F

# 定义Inception块

class Inception(paddle.nn.Layer):

def __init__(self, c0, c1, c2, c3, c4, **kwargs):

'''

Inception模块的实现代码,

c1,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

c2,图(b)中第二条支路卷积的输出通道数,数据类型是tuple或list,

其中c2[0]是1x1卷积的输出通道数,c2[1]是3x3

c3,图(b)中第三条支路卷积的输出通道数,数据类型是tuple或list,

其中c3[0]是1x1卷积的输出通道数,c3[1]是3x3

c4,图(b)中第一条支路1x1卷积的输出通道数,数据类型是整数

'''

super(Inception, self).__init__()

# 依次创建Inception块每条支路上使用到的操作

self.p1_1 = Conv2D(in_channels=c0,out_channels=c1, kernel_size=1, stride=1)

self.p2_1 = Conv2D(in_channels=c0,out_channels=c2[0], kernel_size=1, stride=1)

self.p2_2 = Conv2D(in_channels=c2[0],out_channels=c2[1], kernel_size=3, padding=1, stride=1)

self.p3_1 = Conv2D(in_channels=c0,out_channels=c3[0], kernel_size=1, stride=1)

self.p3_2 = Conv2D(in_channels=c3[0],out_channels=c3[1], kernel_size=5, padding=2, stride=1)

self.p4_1 = MaxPool2D(kernel_size=3, stride=1, padding=1)

self.p4_2 = Conv2D(in_channels=c0,out_channels=c4, kernel_size=1, stride=1)

# # 新加一层batchnorm稳定收敛

# self.batchnorm = paddle.nn.BatchNorm2D(c1+c2[1]+c3[1]+c4)

def forward(self, x):

# 支路1只包含一个1x1卷积

p1 = F.relu(self.p1_1(x))

# 支路2包含 1x1卷积 + 3x3卷积

p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))

# 支路3包含 1x1卷积 + 5x5卷积

p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))

# 支路4包含 最大池化和1x1卷积

p4 = F.relu(self.p4_2(self.p4_1(x)))

# 将每个支路的输出特征图拼接在一起作为最终的输出结果

return paddle.concat([p1, p2, p3, p4], axis=1)

# return self.batchnorm()

class GoogLeNet(paddle.nn.Layer):

def __init__(self):

super(GoogLeNet, self).__init__()

# GoogLeNet包含五个模块,每个模块后面紧跟一个池化层

# 第一个模块包含1个卷积层

self.conv1 = Conv2D(in_channels=3,out_channels=64, kernel_size=7, padding=3, stride=1)

# 3x3最大池化

self.pool1 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第二个模块包含2个卷积层

self.conv2_1 = Conv2D(in_channels=64,out_channels=64, kernel_size=1, stride=1)

self.conv2_2 = Conv2D(in_channels=64,out_channels=192, kernel_size=3, padding=1, stride=1)

# 3x3最大池化

self.pool2 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第三个模块包含2个Inception块

self.block3_1 = Inception(192, 64, (96, 128), (16, 32), 32)

self.block3_2 = Inception(256, 128, (128, 192), (32, 96), 64)

# 3x3最大池化

self.pool3 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第四个模块包含5个Inception块

self.block4_1 = Inception(480, 192, (96, 208), (16, 48), 64)

self.block4_2 = Inception(512, 160, (112, 224), (24, 64), 64)

self.block4_3 = Inception(512, 128, (128, 256), (24, 64), 64)

self.block4_4 = Inception(512, 112, (144, 288), (32, 64), 64)

self.block4_5 = Inception(528, 256, (160, 320), (32, 128), 128)

# 3x3最大池化

self.pool4 = MaxPool2D(kernel_size=3, stride=2, padding=1)

# 第五个模块包含2个Inception块

self.block5_1 = Inception(832, 256, (160, 320), (32, 128), 128)

self.block5_2 = Inception(832, 384, (192, 384), (48, 128), 128)

# 全局池化,用的是global_pooling,不需要设置pool_stride

self.pool5 = AdaptiveAvgPool2D(output_size=1)

self.fc = Linear(in_features=1024, out_features=1)

def forward(self, x):

x = self.pool1(F.relu(self.conv1(x)))

x = self.pool2(F.relu(self.conv2_2(F.relu(self.conv2_1(x)))))

x = self.pool3(self.block3_2(self.block3_1(x)))

x = self.block4_3(self.block4_2(self.block4_1(x)))

x = self.pool4(self.block4_5(self.block4_4(x)))

x = self.pool5(self.block5_2(self.block5_1(x)))

x = paddle.reshape(x, [x.shape[0], -1])

x = self.fc(x)

return x

#=================================

import PM

# 创建模型

model = GoogLeNet()

print(len(model.parameters()))

opt = paddle.optimizer.Momentum(learning_rate=0.001, momentum=0.9, parameters=model.parameters(), weight_decay=0.001)

# 启动训练过程

PM.train_pm(model, opt)

—the—end—文章来源地址https://www.toymoban.com/news/detail-629216.html

到了这里,关于GoogLeNet卷积神经网络-笔记的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!