背景:

由于测试环境的磁盘满了,导致多个NodeManager出现不健康状态,查看了下,基本都是data空间满导致,不是删除日志文件等就能很快解决的,只能删除一些历史没有用的数据。于是从大文件列表中,找出2018年的spark作业的历史中间文件并彻底删除(跳过回收站)

/usr/local/hadoop-2.6.3/bin/hdfs dfs -rm -r -skipTrash /user/hadoop/.sparkStaging/application_1542856934835_1063/Job_20181128135837.jar

问题产生过程:

hdfs删除大量历史文件过程中,standby的namenode节点gc停顿时间过长退出了

当时没注意stanby已经退出了,还在继续删除数据,后面发现stanby停了后,于是重启stanby的NN,

启动时active的namenode已经删除了许多文件,导致两个namenode保存的数据块信息不一致了,出现大量数据块不一致的报错,使得所有的DataNode在与NameNode节点汇报心跳时,超时而被当做dead节点。

问题现象:

active的namenode的webUI里datanode状态正常,

但是standby的webUI里datanode全部dead,日志显示datanode频繁连接standby的NN且被远程standby的NN连接关闭,standby的NN显示一直在添加新的数据块

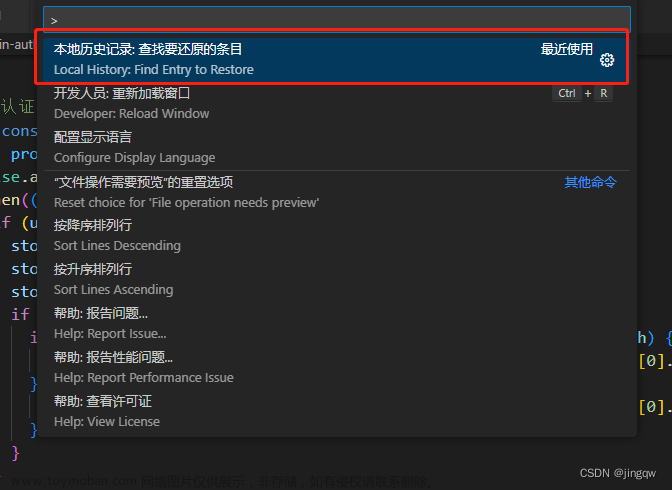

解决过程:

【重启stanby的NN】重启stanby的NN,重启后,stanby的NN存在GC停顿时间长的日志,之后出现大量写数据时管道断开(java.io.IOException: Broken pipe)的报错,stanby的节点列表还都是dead状态,且DataNode节点的日志大量报与stanby的NN的rpc端连接被重置错误(Connection reset by peer),这个过程之前还不知道原理,原来DN也会往stanby的NN发送报告信息。

【active的NN诡异的挂掉】stanby的NN重启一段时间后发现active的NN也挂掉了,而且日志没有明显的报错,于是重启active的NN,过后发现active和stanby的NN的50070的webUI中DataNode列表都是dead了,且DataNode节点的日志依然大量报与NN的rpc端连接被重置错误(Connection reset by peer)

【尝试重启DataNode】尝试重启DataNode让其重新注册,发现重启后还是依然报与NN的rpc连接重置的错

【刷新节点操作】问了位大神后,尝试刷新节点,让全部节点重新注册,发现刷新节点失败,也是报的rpc连接被重置问题

【排查网络问题】由于服务并没有挂掉,且对应的rpc端口也有监听,猜测是网络、dns、防火墙、时间同步等问题,让运维一起排查都反馈没问题,不过运维帮忙反馈该rpc端口有时可以连接有时超时,连接中有大量的close_wait,一般情况下说明程序已经没有响应了,导致客户端主动断开请求。

【调整active的NN堆内存大小重启并刷新节点】于是猜想是不是现在active的NN的堆内存不足了,导致大量的rpc请求被阻塞,于是尝试调大active的NN的堆内存大小,停止可能影响NN性能的JobHistoryServer、Balancer和自身的agent监控服务,重启,重启后发现active的DN节点列表已恢复正常,但是stanby的DN节点列表还都是dead,尝试再次刷新节点,发现有刷新成功和刷新失败的rpc连接重置的日志,观察节点列表仍然还不能恢复正常

【发现JN挂掉了一个】于是查看stanby的DN的日志,发现报了JN连接异常的错误,发现确实active的NN中的JN挂了,重启JN,以为节点恢复了,发现还是不行,大神指点JN挂掉其实无所谓,确实,理论上数据块信息都是在NN,挂掉最多导致部分新数据没有,后面还会补上的

【重启stanby的NN】在大神的指点下,这时候重启stanby的NN能解决90%的问题,重启后果然active和stanby的DN列表都恢复正常了。

相关日志:

stanby的NameNode因gc停顿时间过长导致退出日志

2019-08-20 14:06:38,841 INFO org.apache.hadoop.hdfs.server.namenode.FileJournalManager: Finalizing edits file /usr/local/hadoop-2.6.3/data/dfs/name/current/edits_inprogress_0000000000203838246 -> /usr/local/hadoop-2.6.3/data/dfs/name/current/edits_0000000000203838246-0000000000203838808

2019-08-20 14:06:38,841 INFO org.apache.hadoop.hdfs.server.namenode.FSEditLog: Starting log segment at 203838809

2019-08-20 14:06:40,408 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1069ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=1555ms

2019-08-20 14:06:45,513 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 2139ms

GC pool 'PS MarkSweep' had collection(s): count=2 time=2638ms

2019-08-20 14:06:45,513 INFO org.apache.hadoop.hdfs.server.blockmanagement.CacheReplicationMonitor: Rescanning after 30749 milliseconds

2019-08-20 14:07:03,010 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 3326ms

GC pool 'PS MarkSweep' had collection(s): count=11 time=14667ms

2019-08-20 14:07:14,188 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1009ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=1509ms

2019-08-20 14:07:18,175 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 2179ms

GC pool 'PS MarkSweep' had collection(s): count=2 time=2678ms

2019-08-20 14:07:19,723 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1047ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=1540ms

standby的Namenode重启后因合并editLog日志和数据块删除等操作导致gc停顿日志

The number of live datanodes 15 has reached the minimum number 0. Safe mode will be turned off automatically once the thresholds have been reached.

2019-08-20 14:54:31,557 INFO org.apache.hadoop.hdfs.StateChange: STATE* Safe mode ON.

The reported blocks 447854 needs additional 1040262 blocks to reach the threshold 0.9990 of total blocks 1489605.

The number of live datanodes 15 has reached the minimum number 0. Safe mode will be turned off automatically once the thresholds have been reached.

2019-08-20 14:54:32,799 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1387ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=1886ms

2019-08-20 14:54:39,305 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 3923ms

GC pool 'PS MarkSweep' had collection(s): count=4 time=4422ms

2019-08-20 14:54:55,588 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 2695ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=3195ms

2019-08-20 14:56:11,593 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1670ms

GC pool 'PS MarkSweep' had collection(s): count=6 time=6936ms

2019-08-20 14:56:14,517 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 2424ms

GC pool 'PS MarkSweep' had collection(s): count=30 time=41545ms

2019-08-20 14:56:16,459 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1441ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=1942ms

2019-08-20 14:56:17,653 ERROR org.mortbay.log: Error for /jmx

2019-08-20 14:56:28,608 ERROR org.mortbay.log: /jmx?qry=Hadoop:service=NameNode,name=FSNamesystemState

2019-08-20 14:56:26,419 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 1309ms

GC pool 'PS MarkSweep' had collection(s): count=1 time=1809ms

2019-08-20 14:56:23,558 ERROR org.mortbay.log: Error for /jmx

2019-08-20 14:56:21,164 ERROR org.mortbay.log: handle failed

2019-08-20 14:56:19,957 ERROR org.mortbay.log: Error for /jmxstandby的Namenode重启后的写数据报错

The reported blocks 448402 needs additional 1039714 blocks to reach the threshold 0.9990 of total blocks 1489605.

The number of live datanodes 15 has reached the minimum number 0. Safe mode will be turned off automatically once the thresholds have been reached.

2019-08-20 14:58:08,273 WARN org.apache.hadoop.ipc.Server: IPC Server handler 5 on 9001, call org.apache.hadoop.hdfs.server.protocol.DatanodeProtocol.blockReceivedAndDeleted from 10.104.108.220:63143 Call#320840 Retry#0: output error

2019-08-20 14:58:08,290 INFO org.apache.hadoop.ipc.Server: IPC Server handler 5 on 9001 caught an exception

java.io.IOException: Broken pipe

at sun.nio.ch.FileDispatcherImpl.write0(Native Method)

at sun.nio.ch.SocketDispatcher.write(SocketDispatcher.java:47)

at sun.nio.ch.IOUtil.writeFromNativeBuffer(IOUtil.java:93)

at sun.nio.ch.IOUtil.write(IOUtil.java:65)

at sun.nio.ch.SocketChannelImpl.write(SocketChannelImpl.java:471)

at org.apache.hadoop.ipc.Server.channelWrite(Server.java:2574)

at org.apache.hadoop.ipc.Server.access$1900(Server.java:135)

at org.apache.hadoop.ipc.Server$Responder.processResponse(Server.java:978)

at org.apache.hadoop.ipc.Server$Responder.doRespond(Server.java:1043)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2095)

2019-08-20 14:58:08,273 WARN org.apache.hadoop.ipc.Server: IPC Server handler 1 on 9001, call org.apache.hadoop.hdfs.server.protocol.DatanodeProtocol.blockReceivedAndDeleted from 10.104.101.45:8931 Call#2372642 Retry#0: output error

2019-08-20 14:58:08,290 INFO org.apache.hadoop.ipc.Server: IPC Server handler 1 on 9001 caught an exception

java.io.IOException: Broken pipe

at sun.nio.ch.FileDispatcherImpl.write0(Native Method)

at sun.nio.ch.SocketDispatcher.write(SocketDispatcher.java:47)

at sun.nio.ch.IOUtil.writeFromNativeBuffer(IOUtil.java:93)

at sun.nio.ch.IOUtil.write(IOUtil.java:65)

at sun.nio.ch.SocketChannelImpl.write(SocketChannelImpl.java:471)

at org.apache.hadoop.ipc.Server.channelWrite(Server.java:2574)

at org.apache.hadoop.ipc.Server.access$1900(Server.java:135)

at org.apache.hadoop.ipc.Server$Responder.processResponse(Server.java:978)

at org.apache.hadoop.ipc.Server$Responder.doRespond(Server.java:1043)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2095)

active的NN诡异地无明显报错的挂掉

2019-08-20 16:27:55,477 INFO org.apache.hadoop.hdfs.StateChange: BLOCK* allocateBlock: /user/hive/warehouse/bi_ucar.db/fact_complaint_detail/.hive-staging_hive_2019-08-20_16-27-19_940_4374739327044819866-1/-ext-10000/_temporary/0/_temporary/attempt_201908201627_0030_m_000199_0/part-00199. BP-535417423-10.104.104.128-1535976912717 blk_1089864054_16247563{blockUCState=UNDER_CONSTRUCTION, primaryNodeIndex=-1, replicas=[ReplicaUnderConstruction[[DISK]DS-77fcebb8-363e-4b79-8eb6-974db40231cb:NORMAL:10.104.108.157:50010|RBW], ReplicaUnderConstruction[[DISK]DS-6425eb5e-a10e-4f44-ae1e-eb0170d7e5c5:NORMAL:10.104.108.212:50010|RBW], ReplicaUnderConstruction[[DISK]DS-16b95ffb-ac8a-4c34-86bc-e0ee58380a60:NORMAL:10.104.108.170:50010|RBW]]}

2019-08-20 16:27:55,488 INFO BlockStateChange: BLOCK* addStoredBlock: blockMap updated: 10.104.108.170:50010 is added to blk_1089864054_16247563{blockUCState=UNDER_CONSTRUCTION, primaryNodeIndex=-1, replicas=[ReplicaUnderConstruction[[DISK]DS-77fcebb8-363e-4b79-8eb6-974db40231cb:NORMAL:10.104.108.157:50010|RBW], ReplicaUnderConstruction[[DISK]DS-6425eb5e-a10e-4f44-ae1e-eb0170d7e5c5:NORMAL:10.104.108.212:50010|RBW], ReplicaUnderConstruction[[DISK]DS-16b95ffb-ac8a-4c34-86bc-e0ee58380a60:NORMAL:10.104.108.170:50010|RBW]]} size 0

2019-08-20 16:27:55,489 INFO BlockStateChange: BLOCK* addStoredBlock: blockMap updated: 10.104.108.212:50010 is added to blk_1089864054_16247563{blockUCState=UNDER_CONSTRUCTION, primaryNodeIndex=-1, replicas=[ReplicaUnderConstruction[[DISK]DS-77fcebb8-363e-4b79-8eb6-974db40231cb:NORMAL:10.104.108.157:50010|RBW], ReplicaUnderConstruction[[DISK]DS-6425eb5e-a10e-4f44-ae1e-eb0170d7e5c5:NORMAL:10.104.108.212:50010|RBW], ReplicaUnderConstruction[[DISK]DS-16b95ffb-ac8a-4c34-86bc-e0ee58380a60:NORMAL:10.104.108.170:50010|RBW]]} size 0

2019-08-20 16:27:55,489 INFO BlockStateChange: BLOCK* addStoredBlock: blockMap updated: 10.104.108.157:50010 is added to blk_1089864054_16247563{blockUCState=UNDER_CONSTRUCTION, primaryNodeIndex=-1, replicas=[ReplicaUnderConstruction[[DISK]DS-77fcebb8-363e-4b79-8eb6-974db40231cb:NORMAL:10.104.108.157:50010|RBW], ReplicaUnderConstruction[[DISK]DS-6425eb5e-a10e-4f44-ae1e-eb0170d7e5c5:NORMAL:10.104.108.212:50010|RBW], ReplicaUnderConstruction[[DISK]DS-16b95ffb-ac8a-4c34-86bc-e0ee58380a60:NORMAL:10.104.108.170:50010|RBW]]} size 0

2019-08-20 16:27:55,492 INFO org.apache.hadoop.hdfs.StateChange: DIR* completeFile: /user/hive/warehouse/bi_ucar.db/fact_complaint_detail/.hive-staging_hive_2019-08-20_16-27-19_940_4374739327044819866-1/-ext-10000/_temporary/0/_temporary/attempt_201908201627_0030_m_000199_0/part-00199 is closed by DFSClient_NONMAPREDUCE_1289526722_42

2019-08-20 16:27:55,511 INFO BlockStateChange: BLOCK* BlockManager: ask 10.104.132.196:50010 to delete [blk_1089864025_16247534, blk_1089863850_16247357]

2019-08-20 16:27:55,511 INFO BlockStateChange: BLOCK* BlockManager: ask 10.104.108.213:50010 to delete [blk_1089864033_16247542, blk_1089864028_16247537]

2019-08-20 16:27:55,568 WARN org.apache.hadoop.security.UserGroupInformation: No groups available for user hadoop

2019-08-20 16:27:55,616 WARN org.apache.hadoop.security.UserGroupInformation: No groups available for user hadoop

2019-08-20 16:27:55,715 WARN org.apache.hadoop.security.UserGroupInformation: No groups available for user hadoop

2019-08-20 16:27:55,715 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: there are no corrupt file blocks.

2019-08-20 16:27:56,661 WARN org.apache.hadoop.security.UserGroupInformation: No groups available for user hadoop

2019-08-20 16:27:56,665 WARN org.apache.hadoop.security.UserGroupInformation: No groups available for user hadoop

active节点刷新节点失败报rpc连接重置错误

DataNode节点大量报与NN的RPC连接重置错误

2019-08-20 16:20:15,284 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Copied BP-535417423-10.104.104.128-1535976912717:blk_1089743067_16126311 to /10.104.132.198:22528

2019-08-20 16:20:15,288 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Copied BP-535417423-10.104.104.128-1535976912717:blk_1089743066_16126310 to /10.104.132.198:22540

2019-08-20 16:20:17,097 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Receiving BP-535417423-10.104.104.128-1535976912717:blk_1089862867_16246374 src: /10.104.108.170:55257 dest: /10.104.108.156:50010

2019-08-20 16:20:17,111 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Received BP-535417423-10.104.104.128-1535976912717:blk_1089862867_16246374 src: /10.104.108.170:55257 dest: /10.104.108.156:50010 of size 43153

2019-08-20 16:20:19,102 WARN org.apache.hadoop.hdfs.server.datanode.DataNode: IOException in offerService

java.io.IOException: Failed on local exception: java.io.IOException: Connection reset by peer; Host Details : local host is: "datanodetest17.bi/10.104.108.156"; destination host is: "namenodetest02.bi.10101111.com":9001;

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:772)

at org.apache.hadoop.ipc.Client.call(Client.java:1473)

at org.apache.hadoop.ipc.Client.call(Client.java:1400)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:232)

at com.sun.proxy.$Proxy13.sendHeartbeat(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.DatanodeProtocolClientSideTranslatorPB.sendHeartbeat(DatanodeProtocolClientSideTranslatorPB.java:140)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.sendHeartBeat(BPServiceActor.java:617)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.offerService(BPServiceActor.java:715)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.run(BPServiceActor.java:889)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.io.IOException: Connection reset by peer

at sun.nio.ch.FileDispatcherImpl.read0(Native Method)

at sun.nio.ch.SocketDispatcher.read(SocketDispatcher.java:39)

at sun.nio.ch.IOUtil.readIntoNativeBuffer(IOUtil.java:223)

at sun.nio.ch.IOUtil.read(IOUtil.java:197)

at sun.nio.ch.SocketChannelImpl.read(SocketChannelImpl.java:380)

at org.apache.hadoop.net.SocketInputStream$Reader.performIO(SocketInputStream.java:57)

at org.apache.hadoop.net.SocketIOWithTimeout.doIO(SocketIOWithTimeout.java:142)

at org.apache.hadoop.net.SocketInputStream.read(SocketInputStream.java:161)

at org.apache.hadoop.net.SocketInputStream.read(SocketInputStream.java:131)

at java.io.FilterInputStream.read(FilterInputStream.java:133)

at java.io.FilterInputStream.read(FilterInputStream.java:133)

at org.apache.hadoop.ipc.Client$Connection$PingInputStream.read(Client.java:514)

at java.io.BufferedInputStream.fill(BufferedInputStream.java:246)

at java.io.BufferedInputStream.read(BufferedInputStream.java:265)

at java.io.DataInputStream.readInt(DataInputStream.java:387)

at org.apache.hadoop.ipc.Client$Connection.receiveRpcResponse(Client.java:1072)

at org.apache.hadoop.ipc.Client$Connection.run(Client.java:967)

2019-08-20 16:20:20,458 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Receiving BP-535417423-10.104.104.128-1535976912717:blk_1089862871_16246378 src: /10.104.108.170:55263 dest: /10.104.108.156:50010

2019-08-20 16:20:20,712 INFO org.apache.hadoop.hdfs.server.datanode.DataNode.clienttrace: src: /10.104.108.170:55263, dest: /10.104.108.156:50010, bytes: 196672, op: HDFS_WRITE, cliID: DFSClient_NONMAPREDUCE_-1795425359_1146224, offset: 0, srvID: 9f1f3a39-a45d-4961-859f-c1953bde9a73, blockid: BP-535417423-10.104.104.128-1535976912717:blk_1089862871_16246378, duration: 98410715

其他方案:

在尝试解决的过程中,大神指点了尝试调大以下参数,默认是10

dfs.namenode.handler.count

dfs.namenode.service.handler.count

我认为目前在未有明确报错信息的情况下,不要盲目更改参数,否则可能有其他副作用文章来源:https://www.toymoban.com/news/detail-632927.html

总结:

大量删除文件过程中,必须时刻关注active和stanby的NN的状态和日志,一旦发现异常信息,及时停止删除,避免发生后续NN挂掉或者其数据丢失问题

当active和stanby的NN都启动且WebUI中的DN列表如果都是dead的情况下,可以尝试先刷新节点让其重新注册,有机会恢复正常

stanby的NN在大量的操作导致edits过大,standby节点合并的时候就可能发生gc暂停时间过长而退出,应避免连续的大量文件操作

rpc端口有时可以连接有时超时,连接中有大量的close_wait,一般情况下说明程序已经没有响应了,导致客户端主动断开请求,可能是NN所在节点的对内存不足触发gc,导致大量线程阻塞使得rpc请求超时而重置

调整NameNode的堆内存大小,在hadoop-env.sh中配置HADOOP_NAMENODE_OPTS文章来源地址https://www.toymoban.com/news/detail-632927.html

到了这里,关于大量删除hdfs历史文件导致全部DataNode心跳汇报超时为死亡状态问题解决的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!