The code is taken from Mu Li's *Dive into Deep Learning* (PyTorch edition).

Data processing starts with the following code:

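```python
import os
import random

import torch
from d2l import torch as d2l

def load_data_wiki(batch_size, max_len):
    """Load the WikiText-2 dataset."""
    num_workers = d2l.get_dataloader_workers()
    data_dir = d2l.download_extract('wikitext-2', 'wikitext-2')
    # The two lines above (worker count, download/extraction) are not explained further
    paragraphs = _read_wiki(data_dir)
    train_set = _WikiTextDataset(paragraphs, max_len)
    # num_workers was computed above but never used; pass it to the DataLoader
    train_iter = torch.utils.data.DataLoader(train_set, batch_size,
                                             shuffle=True,
                                             num_workers=num_workers)
    return train_iter, train_set.vocab
```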

```python
d2l.DATA_HUB['wikitext-2'] = (
    'https://s3.amazonaws.com/research.metamind.io/wikitext/'
    'wikitext-2-v1.zip', '3c914d17d80b1459be871a5039ac23e752a53cbe')

#@save
def _read_wiki(data_dir):
    file_name = os.path.join(data_dir, 'wiki.train.tokens')
    with open(file_name, 'r', encoding='utf-8') as f:
        lines = f.readlines()
    # Convert uppercase letters to lowercase. A line is kept only if it
    # contains at least two sentences (split on ' . '); otherwise it is discarded
    paragraphs = [line.strip().lower().split(' . ')
                  for line in lines if len(line.split(' . ')) >= 2]
    random.shuffle(paragraphs)
    return paragraphs
```

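The text is read first. Every paragraph must contain at least two sentences, because the second pre-training task, next sentence prediction, judges whether two sentences are consecutive. Part of `paragraphs` looks like this:

This paragraph contains four sentences; each quoted string is one sentence.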

```
['common starlings are trapped for food in some mediterranean countries',
 'the meat is tough and of low quality , so it is <unk> or made into <unk>',
 'one recipe said it should be <unk> " until tender , however long that may be "',
 'even when correctly prepared , it may still be seen as an acquired taste .']
```
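You can check the filtering rule without downloading WikiText-2 by applying the same list comprehension to a few in-memory lines. The helper name `split_into_paragraphs` and the sample lines are made up for illustration. A seeded `random.Random` stands in for the global `random.shuffle` so the result is reproducible.

```python
import random

def split_into_paragraphs(lines, seed=None):
    """Hypothetical in-memory version of _read_wiki's filtering step."""
    # Same rule as _read_wiki: keep a line only if splitting on ' . ' gives
    # at least two pieces, then lowercase it and split it into sentences
    paragraphs = [line.strip().lower().split(' . ')
                  for line in lines if len(line.split(' . ')) >= 2]
    random.Random(seed).shuffle(paragraphs)
    return paragraphs

lines = [
    ' Common starlings are trapped for food . The meat is tough . \n',
    ' = Heading = \n',            # no ' . ' separator, so it is dropped
    ' One sentence only .\n',     # the period is followed by '\n', not ' ', so dropped
]
result = split_into_paragraphs(lines, seed=0)
print(result)
# [['common starlings are trapped for food', 'the meat is tough .']]
```

The last sentence keeps its trailing " .", just as in the sample above. This happens because `strip()` removes the space that would otherwise complete the final ' . ' separator.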

```python
class _WikiTextDataset(torch.utils.data.Dataset):
    def __init__(self, paragraphs, max_len):
        # Each paragraph is a list of sentence strings (as shown above).
        # Tokenize every sentence into words, for example:
        # [['common', 'starlings', 'are', 'trapped', 'for', 'food', 'in', 'some', 'mediterranean', 'countries'],
        #  ['the', 'meat', 'is', 'tough', 'and', 'of', 'low', 'quality', ',', 'so', 'it', 'is', '<unk>', 'or', 'made', 'into', '<unk>'],
        #  ['one', 'recipe', 'said', 'it', 'should', 'be', '<unk>', '"', 'until', 'tender', ',', 'however', 'long', 'that', 'may', 'be', '"'],
        #  ['even', 'when', 'correctly', 'prepared', ',', 'it', 'may', 'still', 'be', 'seen', 'as', 'an', 'acquired', 'taste', '.']]
        paragraphs = [d2l.tokenize(
            paragraph, token='word') for paragraph in paragraphs]
        # Flatten the paragraphs into a single list of tokenized sentences
        sentences = [sentence for paragraph in paragraphs
                     for sentence in paragraph]
        # Build the vocabulary; words occurring fewer than min_freq=5 times
        # are not added (they map to '<unk>')
        self.vocab = d2l.Vocab(sentences, min_freq=5, reserved_tokens=[
            '<pad>', '<mask>', '<cls>', '<sep>'])
        # Get the data for the next sentence prediction (NSP) task
        examples = []
        for paragraph in paragraphs:
            examples.extend(_get_nsp_data_from_paragraph(
                paragraph, paragraphs, self.vocab, max_len))
```
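This post does not show `d2l.Vocab`. The sketch below is a rough stand-in that builds a vocabulary in the order the indices in the later examples suggest: `'<unk>'` comes first, then the reserved tokens, then the words whose count reaches `min_freq`. That order is why `<pad>` is 1 and `<cls>` is 3 in the tensors further down. A few details are assumptions:

- `SimpleVocab` is a hypothetical class.
- Word tokenization is plain whitespace splitting, which is what `token='word'` does in d2l.
- Frequency ties are broken alphabetically only to make the output deterministic.

```python
from collections import Counter

class SimpleVocab:
    """Hypothetical minimal vocabulary in the spirit of d2l.Vocab."""
    def __init__(self, sentences, min_freq=1, reserved_tokens=()):
        counter = Counter(tok for sent in sentences for tok in sent)
        # Index 0 is '<unk>', followed by the reserved tokens
        self.idx_to_token = ['<unk>'] + list(reserved_tokens)
        # Then frequent words, most frequent first (ties: alphabetical)
        for token, freq in sorted(counter.items(), key=lambda kv: (-kv[1], kv[0])):
            if freq >= min_freq and token not in self.idx_to_token:
                self.idx_to_token.append(token)
        self.token_to_idx = {t: i for i, t in enumerate(self.idx_to_token)}

    def __len__(self):
        return len(self.idx_to_token)

    def __getitem__(self, tokens):
        # Unknown or too-rare tokens map to index 0 ('<unk>')
        if isinstance(tokens, (list, tuple)):
            return [self[t] for t in tokens]
        return self.token_to_idx.get(tokens, 0)

paragraphs = [['the meat is tough .', 'the meat is cheap .']]
# Word-level tokenization: split every sentence on whitespace
tokenized = [[s.split() for s in p] for p in paragraphs]
sentences = [s for p in tokenized for s in p]
vocab = SimpleVocab(sentences, min_freq=2,
                    reserved_tokens=['<pad>', '<mask>', '<cls>', '<sep>'])
print(vocab.idx_to_token)
print(vocab[['<cls>', 'meat', 'cheap']])
```

With `min_freq=2`, "tough" and "cheap" occur only once, so they fall back to `<unk>` (index 0).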

The NSP examples are built by `_get_nsp_data_from_paragraph`. It passes each pair of adjacent sentences a and b to `_get_next_sentence`, which replaces b with some other sentence with a certain probability (0.5 in d2l):

```python
def _get_nsp_data_from_paragraph(paragraph, paragraphs, vocab, max_len):
    nsp_data_from_paragraph = []
    for i in range(len(paragraph) - 1):
        # Pass adjacent sentences a and b; b may be replaced by a random sentence
        tokens_a, tokens_b, is_next = _get_next_sentence(
            paragraph[i], paragraph[i + 1], paragraphs)
        # Discard the pair if it exceeds BERT's length limit
        # (+3 for one '<cls>' and two '<sep>' tokens)
        if len(tokens_a) + len(tokens_b) + 3 > max_len:
            continue
        # Add '<cls>' and '<sep>'; segments record which sentence each token belongs to
        tokens, segments = d2l.get_tokens_and_segments(tokens_a, tokens_b)
        nsp_data_from_paragraph.append((tokens, segments, is_next))
    return nsp_data_from_paragraph
```

Here is an example of `tokens` and `segments`. `True` means the two sentences are adjacent. `False` would mean b was replaced at random, so a and b are not adjacent.

```
(['<cls>', 'mushrooms', 'grow', '<unk>', 'or', 'in', '"', '<unk>', 'groups', '"', 'in', 'late', 'summer', 'and', 'throughout',
  'autumn', ',', 'though', 'it', 'is', 'not', 'commonly', 'encountered', 'species', '<sep>', 'it',
  'can', 'be', 'found', 'in', 'europe', ',', 'asia', 'and', 'north', 'america', '.', '<sep>'],
 [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1,
  1, 1, 1, 1, 1], True)
```
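The post does not list the two d2l helpers called above. The sketch below re-implements them with the standard library only, under two assumptions:

- `_get_next_sentence` keeps the true next sentence with probability 0.5 and otherwise draws a random sentence from a random paragraph. The d2l version works this way.
- `get_tokens_and_segments` wraps the pair in `<cls>`/`<sep>`.

The underscore-free function names are hypothetical stand-ins. An explicit `random.Random` is passed in for reproducibility.

```python
import random

def get_tokens_and_segments(tokens_a, tokens_b=None):
    """Add '<cls>'/'<sep>' and build segment ids (0 = sentence A, 1 = sentence B)."""
    tokens = ['<cls>'] + tokens_a + ['<sep>']
    segments = [0] * (len(tokens_a) + 2)          # '<cls>', sentence A, first '<sep>'
    if tokens_b is not None:
        tokens += tokens_b + ['<sep>']
        segments += [1] * (len(tokens_b) + 1)     # sentence B, second '<sep>'
    return tokens, segments

def get_next_sentence(sentence, next_sentence, paragraphs, rng):
    """Keep the true next sentence with probability 0.5, else swap in a random one."""
    if rng.random() < 0.5:
        is_next = True
    else:
        # A random sentence from a random paragraph (by chance it could
        # still be the true next sentence, but it is labeled False)
        next_sentence = rng.choice(rng.choice(paragraphs))
        is_next = False
    return sentence, next_sentence, is_next

def get_nsp_pairs(paragraph, paragraphs, max_len, rng):
    pairs = []
    for i in range(len(paragraph) - 1):
        a, b, is_next = get_next_sentence(paragraph[i], paragraph[i + 1],
                                          paragraphs, rng)
        if len(a) + len(b) + 3 > max_len:   # one '<cls>' + two '<sep>'
            continue
        tokens, segments = get_tokens_and_segments(a, b)
        pairs.append((tokens, segments, is_next))
    return pairs

tokens, segments = get_tokens_and_segments(['it', 'grows'], ['in', 'europe', '.'])
print(tokens)    # ['<cls>', 'it', 'grows', '<sep>', 'in', 'europe', '.', '<sep>']
print(segments)  # [0, 0, 0, 0, 1, 1, 1, 1]
```

The segment ids cover both `<sep>` tokens. This is why, in the mushroom example above, the 0s run through the first `<sep>` and the 1s through the final one.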

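Next, the data for the masked language model (MLM) task is generated. At this point every word is replaced by its index in the vocabulary. Each entry of `examples` has the form (token ids, prediction positions, label ids, segments, is_next).

In the entry below, `[13]` means the token at position 13 was selected for prediction. Positions count from 0, and position 0 holds `<cls>`, whose index is 3. A selected token may be kept, replaced by another word, or replaced by `<mask>`. Here it was kept: position 13 still holds 60, the same as the label `[60]`. The 0s and 1s distinguish the two sentences, and `False` means the two sentences are not adjacent.

```
([3, 2510, 31, 337, 9, 0, 6, 6891, 8, 11621, 6, 21, 11, 60, 3405, 14, 1542, 9546, 4, 2524,
  21, 185, 4421, 649, 38, 277, 2872, 13233, 4], [13], [60],
 [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],
 False)
```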
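The masking step inside `_get_mlm_data_from_tokens` is not shown either. Below is a standard-library sketch of the usual BERT recipe, assuming the d2l rules:

- About 15% of the tokens (at least one) are chosen, never `<cls>` or `<sep>`.
- A chosen token becomes `<mask>` 80% of the time, a random word 10% of the time, and stays unchanged 10% of the time. The last case is what happened at position 13 above.

`mask_tokens` is a hypothetical name. A single uniform draw replaces d2l's two nested draws, with the same overall probabilities.

```python
import random

def mask_tokens(tokens, vocab_tokens, rng, mask_rate=0.15):
    """Hypothetical sketch of BERT's 80/10/10 masking on a token list."""
    # Special tokens are never chosen for prediction
    candidates = [i for i, t in enumerate(tokens) if t not in ('<cls>', '<sep>')]
    rng.shuffle(candidates)
    num_preds = max(1, round(len(tokens) * mask_rate))
    masked = list(tokens)
    chosen = []
    for pos in candidates[:num_preds]:
        r = rng.random()
        if r < 0.8:
            masked[pos] = '<mask>'                  # 80%: replace with '<mask>'
        elif r < 0.9:
            masked[pos] = rng.choice(vocab_tokens)  # 10%: replace with a random word
        # remaining 10%: keep the original token
        chosen.append((pos, tokens[pos]))           # the label is always the original token
    chosen.sort()                                   # order the predictions by position
    pred_positions = [pos for pos, _ in chosen]
    labels = [label for _, label in chosen]
    return masked, pred_positions, labels

tokens = (['<cls>'] + 'the meat is tough and of low quality'.split() + ['<sep>']
          + 'so it is cooked'.split() + ['<sep>'])
masked, pred_positions, labels = mask_tokens(tokens, ['meat', 'food'], random.Random(1))
print(masked, pred_positions, labels)
```

In d2l, the masked tokens and the labels are then mapped to vocabulary indices. That mapping produces tuples like the `examples` entry above.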

The rest of `__init__` attaches the MLM data to each NSP example and pads all inputs:

```python
        # Get the data for the masked language model (MLM) task
        examples = [(_get_mlm_data_from_tokens(tokens, self.vocab)
                     + (segments, is_next))
                    for tokens, segments, is_next in examples]
        # _pad_bert_inputs pads the data; in all_mlm_weights, 1 marks a
        # position to predict and 0 marks padding, e.g.
        # all_mlm_weights = tensor([1., 0., 0., 0., 0., 0., 0., 0., 0., 0.])
        (self.all_token_ids, self.all_segments, self.valid_lens,
         self.all_pred_positions, self.all_mlm_weights,
         self.all_mlm_labels, self.nsp_labels) = _pad_bert_inputs(
            examples, max_len, self.vocab)
```

```python
    def __getitem__(self, idx):
        return (self.all_token_ids[idx], self.all_segments[idx],
                self.valid_lens[idx], self.all_pred_positions[idx],
                self.all_mlm_weights[idx], self.all_mlm_labels[idx],
                self.nsp_labels[idx])

    def __len__(self):
        return len(self.all_token_ids)
```

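That completes the data processing. Here is one processed example (batch size 1).

`token_ids`: the token indices, padded with 1 (the index of `<pad>`) up to length 64:

```
tensor([[ 3, 5, 0, 18306, 23, 11, 2659, 156, 5779, 382,
 1296, 110, 158, 22, 5, 1771, 496, 0, 3398, 2,
 5, 3496, 110, 5038, 179, 4, 16, 11, 19837, 6,
 58, 13, 5, 685, 7, 66, 156, 0, 3063, 77,
 3842, 19, 4, 1, 1, 1, 1, 1, 1, 1,
 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,
 1, 1, 1, 1]])
```

`segments` distinguishes the two sentences. A 0 marks a token of the first sentence and a 1 marks a token of the second; the trailing 0s are padding:

```
tensor([[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,
 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0,
 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0]])
```

`valid_lens`: the number of real (non-padded) tokens:

```
tensor([43.])
```

`pred_positions`: the positions to predict, with 0 as padding. Position 19 holds token id 2, i.e. `<mask>`:

```
tensor([[19, 0, 0, 0, 0, 0, 0, 0, 0, 0]])
```

`mlm_weights`: 1 for each real prediction and 0 for padding. Here only one word is predicted:

```
tensor([[1., 0., 0., 0., 0., 0., 0., 0., 0., 0.]])
```

`mlm_labels`: the true labels at the prediction positions, with 0 as padding:

```
tensor([[22, 0, 0, 0, 0, 0, 0, 0, 0, 0]])
```

`nsp_labels`: whether the two sentences are adjacent (0, i.e. not adjacent):

```
tensor([0])
```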
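The padded layout above can be reproduced in plain Python. Judging from the output, `_pad_bert_inputs` pads as follows:

- token ids with 1 (`<pad>`);
- segments with 0;
- the prediction slots up to `round(max_len * 0.15)`, which is 10 for `max_len = 64`.

`pad_bert_example` is a hypothetical helper that handles a single example and returns lists instead of tensors. It is applied to the MLM `examples` entry shown earlier.

```python
def pad_bert_example(token_ids, pred_positions, mlm_label_ids, segments, is_next,
                     max_len, pad_id=1):
    """Hypothetical single-example analogue of _pad_bert_inputs (lists, not tensors)."""
    max_num_mlm_preds = round(max_len * 0.15)      # 10 when max_len = 64
    n_pad = max_len - len(token_ids)
    n_pred_pad = max_num_mlm_preds - len(pred_positions)
    return {
        'token_ids': token_ids + [pad_id] * n_pad,     # pad with '<pad>' (index 1)
        'segments': segments + [0] * n_pad,            # padding uses segment 0
        'valid_len': float(len(token_ids)),            # number of real tokens
        'pred_positions': pred_positions + [0] * n_pred_pad,
        'mlm_weights': [1.0] * len(pred_positions) + [0.0] * n_pred_pad,
        'mlm_labels': mlm_label_ids + [0] * n_pred_pad,
        'nsp_label': int(is_next),
    }

token_ids = [3, 2510, 31, 337, 9, 0, 6, 6891, 8, 11621, 6, 21, 11, 60, 3405, 14,
             1542, 9546, 4, 2524, 21, 185, 4421, 649, 38, 277, 2872, 13233, 4]
padded = pad_bert_example(token_ids, [13], [60], [0] * 19 + [1] * 10, False,
                          max_len=64)
print(padded['valid_len'], padded['pred_positions'], padded['mlm_weights'])
```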

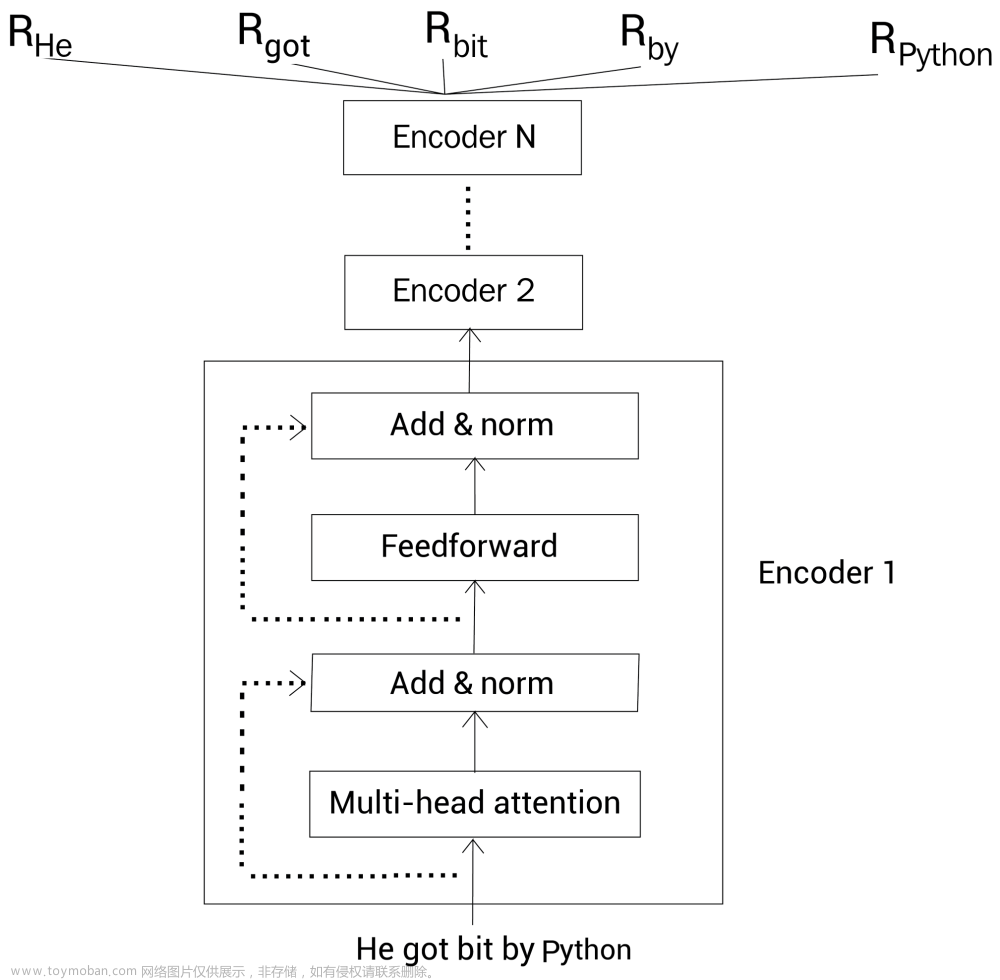

Next, the processed data is fed into BERT. `BERTEncoder` runs the following code:

```python
def forward(self, tokens, segments, valid_lens):
    # Shape of `X` remains unchanged in the following code snippet:
    # (batch size, max sequence length, `num_hiddens`)
    # Embed the tokens and the segments separately, then add them
    X = self.token_embedding(tokens) + self.segment_embedding(segments)
    # Add the learnable positional embedding. Indexing the Parameter directly
    # (instead of `.data`) keeps it in the autograd graph so it gets trained
    X = X + self.pos_embedding[:, :X.shape[1], :]
    for blk in self.blks:
        X = blk(X, valid_lens)
    return X
```
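Each position therefore receives the sum of three vectors: a token embedding, a segment embedding, and the positional embedding for its index. The slice `[:, :X.shape[1], :]` keeps only the first `seq_len` rows of the positional table. Below is a plain-Python sketch with tiny hand-made tables; the values are hypothetical and 2-dimensional instead of 128-dimensional.

```python
def embed(tokens, segments, token_table, segment_table, pos_table):
    """X[i] = token_table[tokens[i]] + segment_table[segments[i]] + pos_table[i]."""
    X = []
    for i, (tok, seg) in enumerate(zip(tokens, segments)):
        X.append([t + s + p for t, s, p in
                  zip(token_table[tok], segment_table[seg], pos_table[i])])
    return X

token_table = [[0, 0], [1, 1], [2, 2], [3, 3]]                # vocab size 4, dim 2
segment_table = [[10, 10], [20, 20]]                          # segments 0 and 1
pos_table = [[100, 100], [200, 200], [300, 300], [400, 400]]  # max_len 4
# The sequence has length 3, so only the first 3 positional rows are used
X = embed([3, 1, 2], [0, 0, 1], token_table, segment_table, pos_table)
print(X)  # [[113, 113], [211, 211], [322, 322]]
```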

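Each encoder block then applies multi-head self-attention and a position-wise feed-forward network. Each sublayer is followed by a residual connection and layer normalization (AddNorm):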

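```python
def forward(self, X, valid_lens):
    # Multi-head self-attention (padding masked via valid_lens), then residual + layer norm
    Y = self.addnorm1(X, self.attention(X, X, X, valid_lens))
    # Position-wise feed-forward network, then residual + layer norm
    return self.addnorm2(Y, self.ffn(Y))
```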
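Inside the attention layer, `valid_lens` keeps padded positions from being attended to. d2l's `masked_softmax` does this by replacing the scores of key positions beyond the valid length with a large negative value (-1e6) before the softmax. Here is a one-row plain-Python sketch of that idea; the function name is hypothetical.

```python
import math

def masked_softmax_row(scores, valid_len, fill=-1e6):
    """Softmax over one row of attention scores, ignoring keys at index >= valid_len."""
    masked = [s if j < valid_len else fill for j, s in enumerate(scores)]
    m = max(masked)                       # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

# The last two keys are padding: despite their high scores they get ~0 weight
weights = masked_softmax_row([1.0, 1.0, 5.0, 5.0], valid_len=2)
print([round(w, 3) for w in weights])  # [0.5, 0.5, 0.0, 0.0]
```

With `valid_lens = 43` in the example above, the 21 padding positions would likewise receive zero attention weight.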

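The result is returned to the code below. Here `encoded_X.shape` is `torch.Size([1, 64, 128])`:

- The batch size is 1, since each batch in this setup contains only one line of text.
- Each line consists of 64 tokens.
- BERT represents each token with a 128-dimensional vector.

Two tasks follow: predicting the masked words, and judging whether the two sentences are adjacent.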

```python
def forward(self, tokens, segments, valid_lens=None, pred_positions=None):
    encoded_X = self.encoder(tokens, segments, valid_lens)
    if pred_positions is not None:
        mlm_Y_hat = self.mlm(encoded_X, pred_positions)
    else:
        mlm_Y_hat = None
    # The hidden layer of the MLP classifier for next sentence prediction.
    # 0 is the index of the '<cls>' token
    nsp_Y_hat = self.nsp(self.hidden(encoded_X[:, 0, :]))
    return encoded_X, mlm_Y_hat, nsp_Y_hat
```

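The first task is predicting the masked words.

Take a batch size of 1, so `X` is 1×64×128, with `num_pred_positions` = 10:

1. `batch_idx` is repeated to `[0, 0, 0, 0, 0, 0, 0, 0, 0, 0]`, and `pred_positions` is, for example, `[3, 6, 10, 12, 15, 20, 0, 0, 0, 0]`.
2. `X[batch_idx, pred_positions]` picks out the vectors at the positions to predict, which are reshaped into a 1×10×128 tensor.
3. These go through an MLP. Its hidden layer is followed by layer normalization and then `nn.Linear(num_hiddens, vocab_size)`, which produces predictions over the vocabulary.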

```python
def forward(self, X, pred_positions):
    num_pred_positions = pred_positions.shape[1]
    pred_positions = pred_positions.reshape(-1)
    batch_size = X.shape[0]
    batch_idx = torch.arange(0, batch_size)
    # Suppose that `batch_size` = 2, `num_pred_positions` = 3, then
    # `batch_idx` is `torch.tensor([0, 0, 0, 1, 1, 1])`
    batch_idx = torch.repeat_interleave(batch_idx, num_pred_positions)
    masked_X = X[batch_idx, pred_positions]
    masked_X = masked_X.reshape((batch_size, num_pred_positions, -1))
    mlm_Y_hat = self.mlp(masked_X)
    return mlm_Y_hat
```
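The indexing can be traced with nested lists. `gather_pred_vectors` below is a hypothetical plain-Python analogue of the method above. Its list `batch_idx` plays the role of `torch.repeat_interleave`, and pairing it with the flattened positions reproduces `X[batch_idx, pred_positions]`.

```python
def gather_pred_vectors(X, pred_positions):
    """X: batch_size x seq_len x num_hiddens (nested lists);
    pred_positions: batch_size x num_pred_positions.
    Returns batch_size x num_pred_positions x num_hiddens."""
    batch_size = len(X)
    num_pred_positions = len(pred_positions[0])
    # Like torch.repeat_interleave(arange(batch_size), num_pred_positions)
    batch_idx = [b for b in range(batch_size) for _ in range(num_pred_positions)]
    flat_positions = [p for row in pred_positions for p in row]       # reshape(-1)
    masked_X = [X[b][p] for b, p in zip(batch_idx, flat_positions)]   # X[batch_idx, pred_positions]
    # reshape((batch_size, num_pred_positions, -1))
    return [masked_X[b * num_pred_positions:(b + 1) * num_pred_positions]
            for b in range(batch_size)]

# batch_size = 2, seq_len = 4, num_hiddens = 2; X[b][s] = [10*b + s, -(10*b + s)]
X = [[[10 * b + s, -(10 * b + s)] for s in range(4)] for b in range(2)]
out = gather_pred_vectors(X, [[1, 3, 0], [2, 0, 0]])
print(out)
```

Padded prediction slots (position 0) simply gather the `<cls>` vector. Their predictions are discarded later because their `mlm_weights` are 0.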

Article source: https://www.toymoban.com/news/detail-647467.html

When `MaskLM` finishes, control returns to `BERTModel.forward` shown above. To judge whether the two sentences are consecutive, the `<cls>` vector `encoded_X[:, 0, :]` (index 0 holds the `<cls>` token) is fed through the MLP hidden layer `self.hidden`. `self.nsp` then applies `nn.Linear(num_inputs, 2)`, turning NSP into a binary classification problem:

```python
# 0 is the index of the '<cls>' token
nsp_Y_hat = self.nsp(self.hidden(encoded_X[:, 0, :]))
```

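Finally, the loss is computed. First, reshape `mlm_Y_hat` to `(-1, vocab_size)` and compute the per-position loss against `mlm_Y`. Multiplying by `mlm_weights_X` then zeroes out the padded positions, and dividing the weighted sum by the number of real predictions gives the average MLM loss.

For this masking to take effect, `loss` must return per-element losses, i.e. `nn.CrossEntropyLoss(reduction='none')`. With the default `reduction='mean'` it returns a single scalar, and the weights cancel out.

```python
# Assumes loss = nn.CrossEntropyLoss(reduction='none'), i.e. per-element losses
mlm_l = loss(mlm_Y_hat.reshape(-1, vocab_size), mlm_Y.reshape(-1)) * mlm_weights_X.reshape(-1)
# Average over the real (non-padded) predictions only
mlm_l = mlm_l.sum() / (mlm_weights_X.sum() + 1e-8)
nsp_l = loss(nsp_Y_hat, nsp_y).mean()
l = mlm_l + nsp_l
```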
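A small numeric check shows why the weights matter, assuming per-position losses as above. A padded slot with a huge loss changes nothing once its weight is 0. `cross_entropy` and `masked_mlm_loss` are hypothetical plain-Python helpers.

```python
import math

def cross_entropy(logits, label):
    """-log(softmax(logits)[label]), computed stably via log-sum-exp."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[label]

def masked_mlm_loss(all_logits, labels, weights):
    """Per-position losses times 0/1 weights, averaged over the real predictions."""
    losses = [cross_entropy(z, y) * w for z, y, w in zip(all_logits, labels, weights)]
    return sum(losses) / (sum(weights) + 1e-8)

logits = [[0.0, 0.0], [-50.0, 50.0]]   # second row: a padded slot with a huge loss
labels = [0, 0]
weights = [1.0, 0.0]                   # only the first prediction is real
loss = masked_mlm_loss(logits, labels, weights)
unmasked = sum(cross_entropy(z, y) for z, y in zip(logits, labels)) / len(labels)
print(round(loss, 4), round(unmasked, 4))
```

The masked loss stays at log 2 (about 0.6931), the loss of the single real prediction. The unmasked average is dominated by the padded slot.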

This concludes the walkthrough of BERT data processing, the model, and pre-training.
