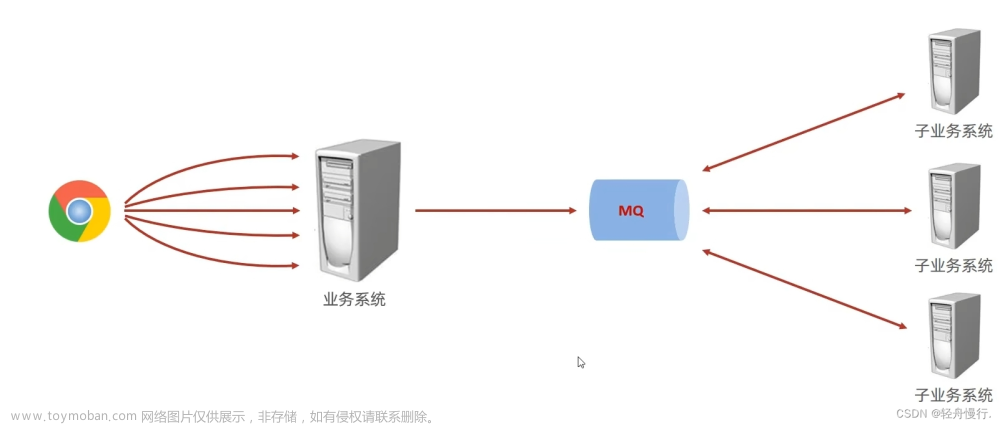

Spring Boot and Kafka integration: notes

Configure pom.xml

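My Spring Boot version here is 2.3.8.RELEASE. The Kafka version is given as 2.12, which is probably the Scala build number from the distribution name (kafka_2.12-x.y.z) rather than a Kafka release; the client libraries pinned in the pom are spring-kafka 2.3.6.RELEASE and kafka-clients 2.3.1. Source: https://www.toymoban.com/news/detail-651649.html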

    <dependencyManagement>
        <dependencies>
            <!-- Import the Spring Boot BOM so that starter versions are managed centrally -->
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-dependencies</artifactId>
                <version>2.3.8.RELEASE</version>
                <type>pom</type>
                <scope>import</scope>
            </dependency>
            <!-- Explicit pins; these take precedence over the versions in the imported BOM -->
            <dependency>
                <groupId>org.springframework.kafka</groupId>
                <artifactId>spring-kafka</artifactId>
                <version>2.3.6.RELEASE</version>
            </dependency>
            <dependency>
                <groupId>org.apache.kafka</groupId>
                <artifactId>kafka-clients</artifactId>
                <version>2.3.1</version>
            </dependency>
        </dependencies>
    </dependencyManagement>

    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
            <groupId>org.springframework.kafka</groupId>
            <artifactId>spring-kafka</artifactId>
            <version>2.3.6.RELEASE</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-clients</artifactId>
            <version>2.3.1</version>
        </dependency>
        <!-- Lombok supplies the @Slf4j annotation used by the service classes; its version is managed by the Boot BOM -->
        <dependency>
            <groupId>org.projectlombok</groupId>
            <artifactId>lombok</artifactId>
            <scope>provided</scope>
        </dependency>
    </dependencies>

Configure application.yml

    spring:
      kafka:
        bootstrap-servers: 10.1.5.212:9092

Add the @EnableKafka annotation

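    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.kafka.annotation.EnableKafka;
    import org.springframework.scheduling.annotation.EnableScheduling;

    @EnableKafka        // enables @KafkaListener processing (Boot's Kafka auto-configuration normally does this too)
    @EnableScheduling   // required for the @Scheduled test job
    @SpringBootApplication
    public class DemoApplication {

        public static void main(String[] args) {
            SpringApplication.run(DemoApplication.class, args);
        }
    }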

Define the Kafka message producer

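    package cn.test.kafka;

    import lombok.extern.slf4j.Slf4j;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.stereotype.Service;

    @Slf4j
    @Service
    public class KafkaProducerService {

        // Auto-configured by Spring Boot; uses String serializers for key and value
        @Autowired
        private KafkaTemplate<String, String> kafkaTemplate;

        // Target topic; must match the topic the consumer listens on
        private String topic = "my816topic";

        public void sendMessage(String message) {
            // Log text means "Sending message to Kafka: {}"
            log.info("向kafka发送消息:{}", message);
            // Asynchronous send without a key; the returned future is ignored here
            kafkaTemplate.send(topic, message);
        }
    }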

Define the Kafka message consumer

    package cn.test.kafka;

    import lombok.extern.slf4j.Slf4j;
    import org.springframework.kafka.annotation.KafkaListener;
    import org.springframework.stereotype.Service;

    @Slf4j
    @Service
    public class KafkaConsumerService {

        // Subscribes to my816topic as a member of consumer group "mygroup"
        @KafkaListener(topics = "my816topic", groupId = "mygroup")
        public void listen(String message) {
            log.info("rec msg:{}", message);
        }
    }
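Because the listener joins the consumer group `mygroup`, where it starts reading is decided per partition by the group's committed offset and, failing that, by `auto.offset.reset`. The console log in this post shows `auto.offset.reset = latest` and a committed offset of 288 for `my816topic-0`. The sketch below models that rule in plain Java without any Kafka dependency. `StartOffsetDemo`, its method and its parameters are hypothetical names for illustration, the log-end value 300 is made up, and the sketch assumes the committed offset is still within the partition's valid range.

```java
import java.util.OptionalLong;

/** Plain-Java model of how a consumer picks its starting offset (illustration only, not Kafka code). */
final class StartOffsetDemo {

    /**
     * @param committed   offset committed by the group for this partition, if any
     * @param resetPolicy value of auto.offset.reset: "earliest", "latest" or "none"
     * @param beginning   first offset still available in the partition
     * @param end         offset the next produced record would receive
     */
    static long startOffset(OptionalLong committed, String resetPolicy, long beginning, long end) {
        if (committed.isPresent()) {
            // A committed offset takes priority over the reset policy
            return committed.getAsLong();
        }
        switch (resetPolicy) {
            case "earliest":
                return beginning;
            case "latest":
                return end;
            default:
                // "none": the real client raises an error instead of guessing
                throw new IllegalStateException("no committed offset and auto.offset.reset=" + resetPolicy);
        }
    }

    public static void main(String[] args) {
        // Existing group, as in the log: resumes at the committed offset
        System.out.println(startOffset(OptionalLong.of(288), "latest", 0, 300));   // prints 288
        // Brand-new group with "latest": only sees records produced from now on
        System.out.println(startOffset(OptionalLong.empty(), "latest", 0, 300));   // prints 300
        // Brand-new group with "earliest": replays the partition from the start
        System.out.println(startOffset(OptionalLong.empty(), "earliest", 0, 300)); // prints 0
    }
}
```

In practice this means that a new `groupId` with the default `latest` policy will not see messages that were produced before the listener first joined.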

Test sending Kafka messages

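    import cn.test.kafka.KafkaProducerService;
    import com.fasterxml.jackson.core.JsonProcessingException;
    import com.fasterxml.jackson.databind.ObjectMapper;
    import lombok.extern.slf4j.Slf4j;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.scheduling.annotation.Scheduled;
    import org.springframework.stereotype.Component;

    @Component
    @Slf4j
    public class TestJob {

        @Autowired
        private ObjectMapper objectMapper;

        @Autowired
        private KafkaProducerService kafkaProducerService;

        // Fires at seconds 0, 13, 26, 39 and 52 of every minute
        @Scheduled(cron = "0/13 * * * * ?")
        public void hwreg() {
            String s;
            try {
                // CityInfo is the author's POJO; its source is not shown in this post
                s = objectMapper.writeValueAsString(new CityInfo("hefei" + System.currentTimeMillis(), 117.17, 31.52));
            } catch (JsonProcessingException e) {
                // Skip this run instead of sending a null payload
                log.error("Failed to serialize CityInfo", e);
                return;
            }
            // Test sending a string message
            kafkaProducerService.sendMessage(s);
        }
    }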
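The `CityInfo` class used by the job is not shown in the post. Judging from the JSON that appears in the console log (`{"city":...,"longitude":...,"latitude":...}`) and from the constructor call above, it might look roughly like the following sketch. This is a hypothetical reconstruction: the field names and their order are inferred from the logged JSON, and the original may well use Lombok or differ in other ways.

```java
/** Hypothetical reconstruction of the CityInfo POJO; the original class is not shown in the post. */
class CityInfo {

    // Declaration order matches the property order seen in the logged JSON
    private final String city;
    private final double longitude;
    private final double latitude;

    public CityInfo(String city, double longitude, double latitude) {
        this.city = city;
        this.longitude = longitude;
        this.latitude = latitude;
    }

    // Public getters are what a default Jackson ObjectMapper reads when serializing
    public String getCity() {
        return city;
    }

    public double getLongitude() {
        return longitude;
    }

    public double getLatitude() {
        return latitude;
    }

    public static void main(String[] args) {
        CityInfo info = new CityInfo("hefei" + System.currentTimeMillis(), 117.17, 31.52);
        System.out.println(info.getCity() + " @ " + info.getLongitude() + ", " + info.getLatitude());
    }
}
```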

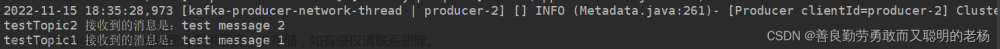

Test sending Kafka messages: console log

2023-08-16 19:16:50,832 INFO [17324] [main] [] o.a.k.c.c.ConsumerConfig [AbstractConfig.java : 347] ConsumerConfig values:

allow.auto.create.topics = true

auto.commit.interval.ms = 5000

auto.offset.reset = latest

bootstrap.servers = [10.1.5.212:9092]

check.crcs = true

client.dns.lookup = default

client.id =

client.rack =

connections.max.idle.ms = 540000

default.api.timeout.ms = 60000

enable.auto.commit = false

exclude.internal.topics = true

fetch.max.bytes = 52428800

fetch.max.wait.ms = 500

fetch.min.bytes = 1

group.id = mygroup

group.instance.id = null

heartbeat.interval.ms = 3000

interceptor.classes = []

internal.leave.group.on.close = true

isolation.level = read_uncommitted

key.deserializer = class org.apache.kafka.common.serialization.StringDeserializer

max.partition.fetch.bytes = 1048576

max.poll.interval.ms = 300000

max.poll.records = 500

metadata.max.age.ms = 300000

metric.reporters = []

metrics.num.samples = 2

metrics.recording.level = INFO

metrics.sample.window.ms = 30000

partition.assignment.strategy = [class org.apache.kafka.clients.consumer.RangeAssignor]

receive.buffer.bytes = 65536

reconnect.backoff.max.ms = 1000

reconnect.backoff.ms = 50

request.timeout.ms = 30000

retry.backoff.ms = 100

sasl.client.callback.handler.class = null

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin = 60000

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds = 300

sasl.login.refresh.min.period.seconds = 60

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism = GSSAPI

security.protocol = PLAINTEXT

send.buffer.bytes = 131072

session.timeout.ms = 10000

ssl.cipher.suites = null

ssl.enabled.protocols = [TLSv1.2, TLSv1.1, TLSv1]

ssl.endpoint.identification.algorithm = https

ssl.key.password = null

ssl.keymanager.algorithm = SunX509

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = JKS

ssl.protocol = TLS

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = PKIX

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = JKS

value.deserializer = class org.apache.kafka.common.serialization.StringDeserializer

2023-08-16 19:16:50,884 INFO [17324] [main] [] o.a.k.c.u.AppInfoParser [AppInfoParser.java : 117] Kafka version: 2.3.1

2023-08-16 19:16:50,884 INFO [17324] [main] [] o.a.k.c.u.AppInfoParser [AppInfoParser.java : 118] Kafka commitId: 18a913733fb71c01

2023-08-16 19:16:50,884 INFO [17324] [main] [] o.a.k.c.u.AppInfoParser [AppInfoParser.java : 119] Kafka startTimeMs: 1692184610882

2023-08-16 19:16:50,887 INFO [17324] [main] [] o.a.k.c.c.KafkaConsumer [KafkaConsumer.java : 964] [Consumer clientId=consumer-1, groupId=mygroup] Subscribed to topic(s): my816topic

2023-08-16 19:16:51,094 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.Metadata [Metadata.java : 261] [Consumer clientId=consumer-1, groupId=mygroup] Cluster ID: mUe0Z0StRd2_X-_m_S557A

2023-08-16 19:16:51,096 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.AbstractCoordinator [AbstractCoordinator.java : 728] [Consumer clientId=consumer-1, groupId=mygroup] Discovered group coordinator 10.1.5.212:9092 (id: 2147483647 rack: null)

2023-08-16 19:16:51,099 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.ConsumerCoordinator [ConsumerCoordinator.java : 476] [Consumer clientId=consumer-1, groupId=mygroup] Revoking previously assigned partitions []

2023-08-16 19:16:51,099 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.s.k.l.KafkaMessageListenerContainer [LogAccessor.java : 292] mygroup: partitions revoked: []

2023-08-16 19:16:51,100 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.AbstractCoordinator [AbstractCoordinator.java : 505] [Consumer clientId=consumer-1, groupId=mygroup] (Re-)joining group

2023-08-16 19:16:51,114 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.AbstractCoordinator [AbstractCoordinator.java : 505] [Consumer clientId=consumer-1, groupId=mygroup] (Re-)joining group

2023-08-16 19:16:51,126 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.AbstractCoordinator [AbstractCoordinator.java : 469] [Consumer clientId=consumer-1, groupId=mygroup] Successfully joined group with generation 9

2023-08-16 19:16:51,130 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.ConsumerCoordinator [ConsumerCoordinator.java : 283] [Consumer clientId=consumer-1, groupId=mygroup] Setting newly assigned partitions: my816topic-0

2023-08-16 19:16:51,141 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.a.k.c.c.i.ConsumerCoordinator [ConsumerCoordinator.java : 525] [Consumer clientId=consumer-1, groupId=mygroup] Setting offset for partition my816topic-0 to the committed offset FetchPosition{offset=288, offsetEpoch=Optional.empty, currentLeader=LeaderAndEpoch{leader=10.1.5.212:9092 (id: 0 rack: null), epoch=0}}

2023-08-16 19:16:51,152 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] o.s.k.l.KafkaMessageListenerContainer [LogAccessor.java : 292] mygroup: partitions assigned: [my816topic-0]

2023-08-16 19:16:52,049 INFO [17324] [scheduling-1] [] c.t.k.KafkaProducerService [KafkaProducerService.java : 19] 向kafka发送消息:{"city":"hefei1692184612007","longitude":117.17,"latitude":31.52}

2023-08-16 19:16:52,053 INFO [17324] [scheduling-1] [] o.a.k.c.p.ProducerConfig [AbstractConfig.java : 347] ProducerConfig values:

acks = 1

batch.size = 16384

bootstrap.servers = [10.1.5.212:9092]

buffer.memory = 33554432

client.dns.lookup = default

client.id =

compression.type = none

connections.max.idle.ms = 540000

delivery.timeout.ms = 120000

enable.idempotence = false

interceptor.classes = []

key.serializer = class org.apache.kafka.common.serialization.StringSerializer

linger.ms = 0

max.block.ms = 60000

max.in.flight.requests.per.connection = 5

max.request.size = 1048576

metadata.max.age.ms = 300000

metric.reporters = []

metrics.num.samples = 2

metrics.recording.level = INFO

metrics.sample.window.ms = 30000

partitioner.class = class org.apache.kafka.clients.producer.internals.DefaultPartitioner

receive.buffer.bytes = 32768

reconnect.backoff.max.ms = 1000

reconnect.backoff.ms = 50

request.timeout.ms = 30000

retries = 2147483647

retry.backoff.ms = 100

sasl.client.callback.handler.class = null

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin = 60000

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds = 300

sasl.login.refresh.min.period.seconds = 60

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism = GSSAPI

security.protocol = PLAINTEXT

send.buffer.bytes = 131072

ssl.cipher.suites = null

ssl.enabled.protocols = [TLSv1.2, TLSv1.1, TLSv1]

ssl.endpoint.identification.algorithm = https

ssl.key.password = null

ssl.keymanager.algorithm = SunX509

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = JKS

ssl.protocol = TLS

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = PKIX

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = JKS

transaction.timeout.ms = 60000

transactional.id = null

value.serializer = class org.apache.kafka.common.serialization.StringSerializer

2023-08-16 19:16:52,072 INFO [17324] [scheduling-1] [] o.a.k.c.u.AppInfoParser [AppInfoParser.java : 117] Kafka version: 2.3.1

2023-08-16 19:16:52,072 INFO [17324] [scheduling-1] [] o.a.k.c.u.AppInfoParser [AppInfoParser.java : 118] Kafka commitId: 18a913733fb71c01

2023-08-16 19:16:52,072 INFO [17324] [scheduling-1] [] o.a.k.c.u.AppInfoParser [AppInfoParser.java : 119] Kafka startTimeMs: 1692184612071

2023-08-16 19:16:52,079 INFO [17324] [kafka-producer-network-thread | producer-1] [] o.a.k.c.Metadata [Metadata.java : 261] [Producer clientId=producer-1] Cluster ID: mUe0Z0StRd2_X-_m_S557A

2023-08-16 19:16:52,115 INFO [17324] [org.springframework.kafka.KafkaListenerEndpointContainer#0-0-C-1] [] c.t.k.KafkaConsumerService [KafkaConsumerService.java : 14] rec msg:{"city":"hefei1692184612007","longitude":117.17,"latitude":31.52}
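The send at 19:16:52 in the log lines up with the job's cron expression `0/13 * * * * ?`. In the seconds field, `0/13` means "start at second 0 and step by 13 within the minute", so the job fires at seconds 0, 13, 26, 39 and 52, and the gap from 52 to the next minute's 0 is only 8 seconds. The plain-Java sketch below expands such a field to make this visible; `CronSecondsDemo` and its methods are made-up names for illustration and this is not Spring's cron parser.

```java
import java.util.ArrayList;
import java.util.List;

/** Expands a "start/step" cron seconds field by hand (illustration only). */
final class CronSecondsDemo {

    /** Seconds within a minute (0-59) at which a "start/step" field fires. */
    static List<Integer> firingSeconds(int start, int step) {
        if (start < 0 || start > 59 || step <= 0) {
            throw new IllegalArgumentException("start must be 0-59 and step must be positive");
        }
        List<Integer> seconds = new ArrayList<>();
        for (int s = start; s < 60; s += step) {
            seconds.add(s);
        }
        return seconds;
    }

    /** Gaps in seconds between consecutive firings, including the wrap into the next minute. */
    static List<Integer> gaps(List<Integer> seconds) {
        List<Integer> gaps = new ArrayList<>();
        for (int i = 0; i < seconds.size(); i++) {
            int current = seconds.get(i);
            int next = seconds.get((i + 1) % seconds.size());
            int gap = Math.floorMod(next - current, 60);
            // A single firing per minute wraps onto itself, which is a 60-second gap
            gaps.add(gap == 0 ? 60 : gap);
        }
        return gaps;
    }

    public static void main(String[] args) {
        List<Integer> seconds = firingSeconds(0, 13);
        System.out.println(seconds);       // prints [0, 13, 26, 39, 52]
        System.out.println(gaps(seconds)); // prints [13, 13, 13, 13, 8]
    }
}
```

If an even 13-second interval is what is wanted, `@Scheduled(fixedRate = 13000)` is the usual alternative to a cron expression.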
