Original article: https://blog.csdn.net/m0_52910424/article/details/127819278

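One detail to keep in mind is how mini-batch stochastic gradient descent is implemented. Setting shuffle=True when constructing the DataLoader reshuffles the samples at the start of every epoch, so each mini-batch is a random subset of the training set.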

traindataloader1 = torch.utils.data.DataLoader(dataset=traindataset1, batch_size=batch_size, shuffle=True)
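To make the effect of shuffle=True concrete, here is a minimal sketch on a toy dataset. The names `toy_features`, `toy_labels`, `toy_loader` and `epoch_order` are hypothetical and exist only for this illustration; they are not part of the original scripts.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)  # only to make the illustration repeatable

# Toy dataset (assumption for illustration): 10 samples whose label equals the sample index
toy_features = torch.arange(10, dtype=torch.float32).unsqueeze(1)
toy_labels = torch.arange(10)
toy_loader = DataLoader(TensorDataset(toy_features, toy_labels), batch_size=4, shuffle=True)

def epoch_order(loader):
    """Return the sample indices in the order the loader yields them."""
    return [int(label) for _, labels in loader for label in labels]

first_epoch = epoch_order(toy_loader)    # one full pass over the data
second_epoch = epoch_order(toy_loader)   # a second pass, reshuffled
batch_sizes = [len(labels) for _, labels in toy_loader]

print(first_epoch)
print(second_epoch)
print(batch_sizes)
```

Each pass visits every sample exactly once, but in a freshly shuffled order, and the last batch is smaller because 10 is not a multiple of 4 (the default is drop_last=False).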

Manual implementation of the FNN:

import time

import matplotlib.pyplot as plt

import numpy as np

import torch

import torch.nn as nn

import torchvision

from torch.nn.functional import cross_entropy, binary_cross_entropy

from torch.nn import CrossEntropyLoss

from torchvision import transforms

from sklearn import metrics

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")  # use the GPU if one is available to speed up computation
print(f'Current device: {device}')

# Dataset definitions
# Build the regression dataset - traindataloader1, testdataloader1
data_num, train_num, test_num = 10000, 7000, 3000  # total, training, and test sample counts
true_w, true_b = 0.0056 * torch.ones(500, 1), 0.028
features = torch.randn(data_num, 500)  # features drawn from a standard normal distribution
labels = torch.matmul(features, true_w) + true_b
labels += torch.tensor(np.random.normal(0, 0.01, size=labels.size()), dtype=torch.float32)  # add Gaussian noise

# Split into training and test sets
train_features, test_features = features[:train_num, :], features[train_num:, :]
train_labels, test_labels = labels[:train_num], labels[train_num:]
batch_size = 128
traindataset1 = torch.utils.data.TensorDataset(train_features, train_labels)
testdataset1 = torch.utils.data.TensorDataset(test_features, test_labels)
traindataloader1 = torch.utils.data.DataLoader(dataset=traindataset1, batch_size=batch_size, shuffle=True)
testdataloader1 = torch.utils.data.DataLoader(dataset=testdataset1, batch_size=batch_size, shuffle=True)

# Build the binary classification dataset
data_num, train_num, test_num = 10000, 7000, 3000  # per-class total, training, and test sample counts

# First class: features drawn from a normal distribution with mean 0.2 and standard deviation 2
features1 = torch.normal(mean=0.2, std=2, size=(data_num, 200), dtype=torch.float32)
labels1 = torch.ones(data_num)

# Second class: features drawn from a normal distribution with mean -0.2 and standard deviation 2
features2 = torch.normal(mean=-0.2, std=2, size=(data_num, 200), dtype=torch.float32)
labels2 = torch.zeros(data_num)

# Build the training set
train_features2 = torch.cat((features1[:train_num], features2[:train_num]), dim=0)  # torch.Size([14000, 200])
train_labels2 = torch.cat((labels1[:train_num], labels2[:train_num]), dim=-1)  # torch.Size([14000])

# Build the test set
test_features2 = torch.cat((features1[train_num:], features2[train_num:]), dim=0)  # torch.Size([6000, 200])
test_labels2 = torch.cat((labels1[train_num:], labels2[train_num:]), dim=-1)  # torch.Size([6000])
batch_size = 128

# Build the training and testing dataset
traindataset2 = torch.utils.data.TensorDataset(train_features2, train_labels2)
testdataset2 = torch.utils.data.TensorDataset(test_features2, test_labels2)
traindataloader2 = torch.utils.data.DataLoader(dataset=traindataset2, batch_size=batch_size, shuffle=True)
testdataloader2 = torch.utils.data.DataLoader(dataset=testdataset2, batch_size=batch_size, shuffle=True)

# Define the multi-class dataset - traindataloader3, testdataloader3
batch_size = 128

# Build the training and testing dataset
traindataset3 = torchvision.datasets.FashionMNIST(root='.\\FashionMNIST\\Train',
                                                  train=True,
                                                  download=True,
                                                  transform=transforms.ToTensor())
testdataset3 = torchvision.datasets.FashionMNIST(root='.\\FashionMNIST\\Test',
                                                 train=False,
                                                 download=True,
                                                 transform=transforms.ToTensor())
traindataloader3 = torch.utils.data.DataLoader(traindataset3, batch_size=batch_size, shuffle=True)
testdataloader3 = torch.utils.data.DataLoader(testdataset3, batch_size=batch_size, shuffle=False)

# Plotting helper
def picture(name, trainl, testl, type='Loss'):
    plt.rcParams["font.sans-serif"] = ["SimHei"]  # font with CJK glyph support
    plt.rcParams["axes.unicode_minus"] = False  # render the minus sign correctly with this font
    plt.title(name)  # subplot title
    plt.plot(trainl, c='g', label='Train ' + type)
    plt.plot(testl, c='r', label='Test ' + type)
    plt.xlabel('Epoch')
    plt.ylabel(type)  # label the y-axis with the plotted metric
    plt.legend()
    plt.grid(True)

print(f'Regression dataset: total samples {len(traindataset1) + len(testdataset1)}, training samples {len(traindataset1)}, test samples {len(testdataset1)}')
print(f'Binary classification dataset: total samples {len(traindataset2) + len(testdataset2)}, training samples {len(traindataset2)}, test samples {len(testdataset2)}')
print(f'Multi-class dataset: total samples {len(traindataset3) + len(testdataset3)}, training samples {len(traindataset3)}, test samples {len(testdataset3)}')

# Define the feedforward network by hand
class MyNet1():
    def __init__(self):
        # Input, hidden, and output layer sizes
        num_inputs, num_hiddens, num_outputs = 500, 256, 1
        w_1 = torch.tensor(np.random.normal(0, 0.01, (num_hiddens, num_inputs)), dtype=torch.float32, requires_grad=True)
        b_1 = torch.zeros(num_hiddens, dtype=torch.float32, requires_grad=True)
        w_2 = torch.tensor(np.random.normal(0, 0.01, (num_outputs, num_hiddens)), dtype=torch.float32, requires_grad=True)
        b_2 = torch.zeros(num_outputs, dtype=torch.float32, requires_grad=True)
        self.params = [w_1, b_1, w_2, b_2]
        # Model structure
        self.input_layer = lambda x: x.view(x.shape[0], -1)
        self.hidden_layer = lambda x: self.my_relu(torch.matmul(x, w_1.t()) + b_1)
        self.output_layer = lambda x: torch.matmul(x, w_2.t()) + b_2

    def my_relu(self, x):
        return torch.max(input=x, other=torch.tensor(0.0))

    # Forward pass
    def forward(self, x):
        x = self.input_layer(x)
        x = self.hidden_layer(x)  # hidden_layer already applies ReLU
        x = self.output_layer(x)
        return x

def mySGD(params, lr, batchsize):
    # Note: mse() already averages over the batch, so this division
    # further scales the effective step size to lr / batchsize
    for param in params:
        param.data -= lr * param.grad / batchsize

def mse(pred, true):
    # Mean squared error over the batch
    ans = torch.sum((true - pred) ** 2) / len(pred)
    return ans

# Training
model1 = MyNet1()  # regression model
lr = 0.05  # learning rate
batchsize = 128
epochs = 40  # number of training epochs
train_all_loss1 = []  # training loss per epoch
test_all_loss1 = []  # test loss per epoch
begintime1 = time.time()
for epoch in range(epochs):
    train_l = 0
    for data, labels in traindataloader1:
        pred = model1.forward(data)
        train_each_loss = mse(pred.view(-1, 1), labels.view(-1, 1))  # loss for this batch
        train_each_loss.backward()  # backpropagation
        mySGD(model1.params, lr, batchsize)  # mini-batch SGD parameter update
        train_l += train_each_loss.item()
        # Zero the gradients
        for param in model1.params:
            param.grad.data.zero_()
    train_all_loss1.append(train_l)  # summed batch losses for this epoch
    with torch.no_grad():
        test_loss = 0
        for data, labels in testdataloader1:  # evaluate on the held-out test set
            pred = model1.forward(data)
            test_each_loss = mse(pred, labels)
            test_loss += test_each_loss.item()
        test_all_loss1.append(test_loss)
    if epoch == 0 or (epoch + 1) % 4 == 0:
        print('epoch: %d | train loss:%.5f | test loss:%.5f' % (epoch + 1, train_all_loss1[-1], test_all_loss1[-1]))
endtime1 = time.time()
print("Manual feedforward network - regression: %d epochs, total time: %.3fs" % (epochs, endtime1 - begintime1))
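As a sanity check on the hand-written update rule, the sketch below compares one manual SGD step with one step of torch.optim.SGD on a tiny least-squares problem. It is an illustration under assumptions rather than part of the original experiment: `manual_sgd`, `w_manual`, `w_optim` and the random toy data are hypothetical names, and the manual step here deliberately does not divide by the batch size.

```python
import torch

def manual_sgd(params, lr):
    """Plain SGD step: p <- p - lr * grad (hypothetical helper for this sketch)."""
    with torch.no_grad():
        for p in params:
            p -= lr * p.grad

torch.manual_seed(0)
x = torch.randn(8, 3)   # toy inputs (assumption)
y = torch.randn(8, 1)   # toy targets (assumption)

# Two copies of the same initial weights
w_manual = torch.randn(3, 1, requires_grad=True)
w_optim = w_manual.detach().clone().requires_grad_(True)
optimizer = torch.optim.SGD([w_optim], lr=0.1)

# One manual step on a mean-reduced squared error
loss_manual = ((x @ w_manual - y) ** 2).mean()
loss_manual.backward()
manual_sgd([w_manual], lr=0.1)

# One optimizer step on the same loss
loss_optim = ((x @ w_optim - y) ** 2).mean()
optimizer.zero_grad()
loss_optim.backward()
optimizer.step()

same = torch.allclose(w_manual, w_optim)
print(same)
```

Because the loss is already averaged over the batch, the two updates coincide without any extra division. Dividing the gradient by the batch size once more, as the `mySGD` used in the regression experiment does, amounts to training with a learning rate of lr / batchsize.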

# 定义自己的前馈神经网络

class MyNet2():

def __init__(self):

# 设置隐藏层和输出层的节点数

num_inputs, num_hiddens, num_outputs = 200, 256, 1

w_1 = torch.tensor(np.random.normal(0, 0.01, (num_hiddens, num_inputs)), dtype=torch.float32,

requires_grad=True)

b_1 = torch.zeros(num_hiddens, dtype=torch.float32, requires_grad=True)

w_2 = torch.tensor(np.random.normal(0, 0.01, (num_outputs, num_hiddens)), dtype=torch.float32,

requires_grad=True)

b_2 = torch.zeros(num_outputs, dtype=torch.float32, requires_grad=True)

self.params = [w_1, b_1, w_2, b_2]

# 定义模型结构

self.input_layer = lambda x: x.view(x.shape[0], -1)

self.hidden_layer = lambda x: self.my_relu(torch.matmul(x, w_1.t()) + b_1)

self.output_layer = lambda x: torch.matmul(x, w_2.t()) + b_2

self.fn_logistic = self.logistic

def my_relu(self, x):

return torch.max(input=x, other=torch.tensor(0.0))

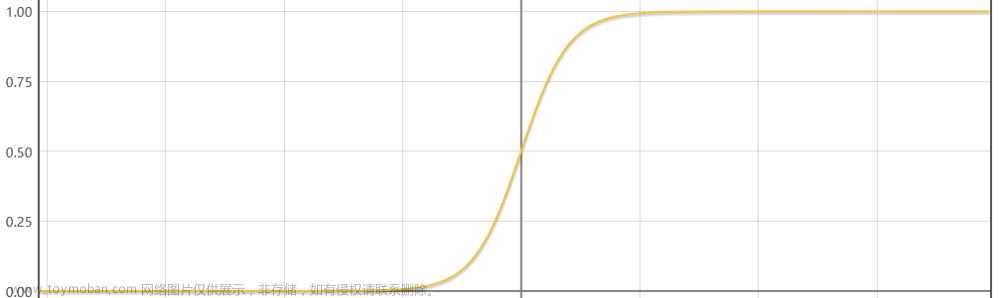

def logistic(self, x): # 定义logistic函数

x = 1.0 / (1.0 + torch.exp(-x))

return x

# 定义前向传播

def forward(self, x):

x = self.input_layer(x)

x = self.my_relu(self.hidden_layer(x))

x = self.fn_logistic(self.output_layer(x))

return x

def mySGD(params, lr):

for param in params:

param.data -= lr * param.grad

# Training
model2 = MyNet2()  # binary classification model
lr = 0.01  # learning rate
epochs = 40  # number of training epochs
train_all_loss2 = []  # training loss per epoch
test_all_loss2 = []  # test loss per epoch
train_Acc12, test_Acc12 = [], []  # training and test accuracy per epoch
begintime2 = time.time()
for epoch in range(epochs):
    train_l, train_epoch_count = 0, 0
    for data, labels in traindataloader2:
        pred = model2.forward(data)
        train_each_loss = binary_cross_entropy(pred.view(-1), labels.view(-1))  # loss for this batch
        train_l += train_each_loss.item()
        train_each_loss.backward()  # backpropagation
        mySGD(model2.params, lr)  # SGD parameter update
        # Zero the gradients
        for param in model2.params:
            param.grad.data.zero_()
        # Predict class 1 when the output probability exceeds 0.5
        train_epoch_count += ((pred.view(-1) > 0.5).float() == labels.view(-1)).sum()
    train_Acc12.append((train_epoch_count / len(traindataset2)).item())
    train_all_loss2.append(train_l)  # summed batch losses for this epoch
    with torch.no_grad():
        test_l, test_epoch_count = 0, 0
        for data, labels in testdataloader2:
            pred = model2.forward(data)
            test_each_loss = binary_cross_entropy(pred.view(-1), labels.view(-1))
            test_l += test_each_loss.item()
            test_epoch_count += ((pred.view(-1) > 0.5).float() == labels.view(-1)).sum()
        test_Acc12.append((test_epoch_count / len(testdataset2)).item())
        test_all_loss2.append(test_l)
    if epoch == 0 or (epoch + 1) % 4 == 0:
        print('epoch: %d | train loss:%.5f | test loss:%.5f | train acc:%.5f | test acc:%.5f' % (epoch + 1, train_all_loss2[-1], test_all_loss2[-1], train_Acc12[-1], test_Acc12[-1]))
endtime2 = time.time()
print("Manual feedforward network - binary classification: %d epochs, total time: %.3fs" % (epochs, endtime2 - begintime2))
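The binary classifier above feeds a hand-written logistic output into binary_cross_entropy and thresholds the probability at 0.5. The sketch below, on three made-up logits (the values and variable names are assumptions for illustration), checks that the hand-written logistic matches torch.sigmoid and that the binary cross-entropy can be reproduced from its definition.

```python
import torch
from torch.nn.functional import binary_cross_entropy

def logistic(x):
    """Logistic function, written the same way as in the network above."""
    return 1.0 / (1.0 + torch.exp(-x))

# Made-up raw outputs and labels (assumption for illustration)
logits = torch.tensor([-2.0, 1.0, 3.0])
labels = torch.tensor([0.0, 1.0, 1.0])

probs = logistic(logits)

# Binary cross-entropy from its definition, averaged over the batch
manual_bce = -(labels * torch.log(probs) + (1 - labels) * torch.log(1 - probs)).mean()
library_bce = binary_cross_entropy(probs, labels)

# Threshold at 0.5 to obtain hard predictions, then compute accuracy
predicted = (probs > 0.5).float()
accuracy = (predicted == labels).float().mean().item()

print(manual_bce.item(), library_bce.item(), accuracy)
```

The thresholding uses only torch operations, so it also works unchanged on GPU tensors without a round trip through NumPy.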

# Define the feedforward network by hand
class MyNet3():
    def __init__(self):
        # Input, hidden, and output layer sizes
        num_inputs, num_hiddens, num_outputs = 28 * 28, 256, 10  # 10-class problem
        w_1 = torch.tensor(np.random.normal(0, 0.01, (num_hiddens, num_inputs)), dtype=torch.float32,
                           requires_grad=True)
        b_1 = torch.zeros(num_hiddens, dtype=torch.float32, requires_grad=True)
        w_2 = torch.tensor(np.random.normal(0, 0.01, (num_outputs, num_hiddens)), dtype=torch.float32,
                           requires_grad=True)
        b_2 = torch.zeros(num_outputs, dtype=torch.float32, requires_grad=True)
        self.params = [w_1, b_1, w_2, b_2]
        # Model structure
        self.input_layer = lambda x: x.view(x.shape[0], -1)
        self.hidden_layer = lambda x: self.my_relu(torch.matmul(x, w_1.t()) + b_1)
        self.output_layer = lambda x: torch.matmul(x, w_2.t()) + b_2

    def my_relu(self, x):
        return torch.max(input=x, other=torch.tensor(0.0))

    # Forward pass: returns raw logits, cross_entropy applies the softmax
    def forward(self, x):
        x = self.input_layer(x)
        x = self.hidden_layer(x)
        x = self.output_layer(x)
        return x

def mySGD(params, lr, batchsize):
    for param in params:
        param.data -= lr * param.grad / batchsize

# Training
model3 = MyNet3()  # multi-class classification model
criterion = cross_entropy  # loss function
lr = 0.15  # learning rate
epochs = 40  # number of training epochs
train_all_loss3 = []  # training loss per epoch
test_all_loss3 = []  # test loss per epoch
train_ACC13, test_ACC13 = [], []  # training and test accuracy per epoch
begintime3 = time.time()
for epoch in range(epochs):
    train_l, train_acc_num = 0, 0
    for data, labels in traindataloader3:
        pred = model3.forward(data)
        train_each_loss = criterion(pred, labels)  # loss for this batch
        train_l += train_each_loss.item()
        train_each_loss.backward()  # backpropagation
        mySGD(model3.params, lr, 128)  # mini-batch SGD parameter update
        train_acc_num += (pred.argmax(dim=1) == labels).sum().item()
        # Zero the gradients
        for param in model3.params:
            param.grad.data.zero_()
    train_all_loss3.append(train_l)  # summed batch losses for this epoch
    train_ACC13.append(train_acc_num / len(traindataset3))  # training accuracy for this epoch
    with torch.no_grad():
        test_l, test_acc_num = 0, 0
        for data, labels in testdataloader3:
            pred = model3.forward(data)
            test_each_loss = criterion(pred, labels)
            test_l += test_each_loss.item()
            test_acc_num += (pred.argmax(dim=1) == labels).sum().item()
        test_all_loss3.append(test_l)
        test_ACC13.append(test_acc_num / len(testdataset3))  # test accuracy for this epoch
    if epoch == 0 or (epoch + 1) % 4 == 0:
        print('epoch: %d | train loss:%.5f | test loss:%.5f | train acc: %.2f | test acc: %.2f'
              % (epoch + 1, train_l, test_l, train_ACC13[-1], test_ACC13[-1]))
endtime3 = time.time()
print("Manual feedforward network - multi-class classification: %d epochs, total time: %.3fs" % (epochs, endtime3 - begintime3))
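The multi-class network above returns raw scores (logits) and leaves the softmax to cross_entropy. As a rough illustration of what that loss computes, the sketch below rebuilds it from log-softmax on two made-up rows of logits; the tensors and variable names are assumptions chosen for this example.

```python
import torch
from torch.nn.functional import cross_entropy

# Two made-up samples with three classes each (assumption for illustration)
logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])
targets = torch.tensor([0, 2])

# log-softmax: subtract the log of the summed exponentials from each logit
log_probs = logits - torch.logsumexp(logits, dim=1, keepdim=True)

# Negative log-probability of the correct class, averaged over the batch
manual_loss = -log_probs[torch.arange(len(targets)), targets].mean()
library_loss = cross_entropy(logits, targets)

# The predicted class is the index of the largest logit
predicted = logits.argmax(dim=1)

print(manual_loss.item(), library_loss.item(), predicted.tolist())
```

Since softmax preserves the ordering of the logits, taking argmax over the raw outputs gives the same prediction as taking it over the probabilities, which is why the accuracy computation above needs no explicit softmax.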

plt.figure(figsize=(12, 3))
plt.subplot(131)
picture('FNN - Regression - Loss', train_all_loss1, test_all_loss1)
plt.subplot(132)
picture('FNN - Binary classification - Loss', train_all_loss2, test_all_loss2)
plt.subplot(133)
picture('FNN - Multi-class classification - Loss', train_all_loss3, test_all_loss3)
plt.show()

plt.figure(figsize=(8, 3))
plt.subplot(121)
picture('FNN - Binary classification - ACC', train_Acc12, test_Acc12, type='ACC')
plt.subplot(122)
picture('FNN - Multi-class classification - ACC', train_ACC13, test_ACC13, type='ACC')
plt.show()

Implementation with torch.nn (including 3 activation functions and multiple hidden layers):

import time

import matplotlib.pyplot as plt

import numpy as np

import torch

import torch.nn as nn

import torchvision

from torch.nn.functional import cross_entropy, binary_cross_entropy

from torch.nn import CrossEntropyLoss

from torchvision import transforms

from sklearn import metrics

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")  # use the GPU if one is available to speed up computation
print(f'Current device: {device}')

# Dataset definitions
# Build the regression dataset - traindataloader1, testdataloader1
data_num, train_num, test_num = 10000, 7000, 3000  # total, training, and test sample counts
true_w, true_b = 0.0056 * torch.ones(500, 1), 0.028
features = torch.randn(data_num, 500)  # features drawn from a standard normal distribution
labels = torch.matmul(features, true_w) + true_b
labels += torch.tensor(np.random.normal(0, 0.01, size=labels.size()), dtype=torch.float32)  # add Gaussian noise

# Split into training and test sets
train_features, test_features = features[:train_num, :], features[train_num:, :]
train_labels, test_labels = labels[:train_num], labels[train_num:]
batch_size = 128
traindataset1 = torch.utils.data.TensorDataset(train_features, train_labels)
testdataset1 = torch.utils.data.TensorDataset(test_features, test_labels)
traindataloader1 = torch.utils.data.DataLoader(dataset=traindataset1, batch_size=batch_size, shuffle=True)
testdataloader1 = torch.utils.data.DataLoader(dataset=testdataset1, batch_size=batch_size, shuffle=True)

# Build the binary classification dataset
data_num, train_num, test_num = 10000, 7000, 3000  # per-class total, training, and test sample counts

# First class: features drawn from a normal distribution with mean 0.2 and standard deviation 2
features1 = torch.normal(mean=0.2, std=2, size=(data_num, 200), dtype=torch.float32)
labels1 = torch.ones(data_num)

# Second class: features drawn from a normal distribution with mean -0.2 and standard deviation 2
features2 = torch.normal(mean=-0.2, std=2, size=(data_num, 200), dtype=torch.float32)
labels2 = torch.zeros(data_num)

# Build the training set
train_features2 = torch.cat((features1[:train_num], features2[:train_num]), dim=0)  # torch.Size([14000, 200])
train_labels2 = torch.cat((labels1[:train_num], labels2[:train_num]), dim=-1)  # torch.Size([14000])

# Build the test set
test_features2 = torch.cat((features1[train_num:], features2[train_num:]), dim=0)  # torch.Size([6000, 200])
test_labels2 = torch.cat((labels1[train_num:], labels2[train_num:]), dim=-1)  # torch.Size([6000])
batch_size = 128

# Build the training and testing dataset
traindataset2 = torch.utils.data.TensorDataset(train_features2, train_labels2)
testdataset2 = torch.utils.data.TensorDataset(test_features2, test_labels2)
traindataloader2 = torch.utils.data.DataLoader(dataset=traindataset2, batch_size=batch_size, shuffle=True)
testdataloader2 = torch.utils.data.DataLoader(dataset=testdataset2, batch_size=batch_size, shuffle=True)

# Define the multi-class dataset - traindataloader3, testdataloader3
batch_size = 128

# Build the training and testing dataset
traindataset3 = torchvision.datasets.FashionMNIST(root='.\\FashionMNIST\\Train',
                                                  train=True,
                                                  download=True,
                                                  transform=transforms.ToTensor())
testdataset3 = torchvision.datasets.FashionMNIST(root='.\\FashionMNIST\\Test',
                                                 train=False,
                                                 download=True,
                                                 transform=transforms.ToTensor())
traindataloader3 = torch.utils.data.DataLoader(traindataset3, batch_size=batch_size, shuffle=True)
testdataloader3 = torch.utils.data.DataLoader(testdataset3, batch_size=batch_size, shuffle=False)

# Plotting helper
def picture(name, trainl, testl, type='Loss'):
    plt.rcParams["font.sans-serif"] = ["SimHei"]  # font with CJK glyph support
    plt.rcParams["axes.unicode_minus"] = False  # render the minus sign correctly with this font
    plt.title(name)  # subplot title
    plt.plot(trainl, c='g', label='Train ' + type)
    plt.plot(testl, c='r', label='Test ' + type)
    plt.xlabel('Epoch')
    plt.ylabel(type)  # label the y-axis with the plotted metric
    plt.legend()
    plt.grid(True)

print(f'Regression dataset: total samples {len(traindataset1) + len(testdataset1)}, training samples {len(traindataset1)}, test samples {len(testdataset1)}')
print(f'Binary classification dataset: total samples {len(traindataset2) + len(testdataset2)}, training samples {len(traindataset2)}, test samples {len(testdataset2)}')
print(f'Multi-class dataset: total samples {len(traindataset3) + len(testdataset3)}, training samples {len(traindataset3)}, test samples {len(testdataset3)}')

# Comparison plot: four curves, labelled by activation function (flag='act')
# or by hidden-layer configuration (any other flag)
def ComPlot(datalist, title='1', ylabel='Loss', flag='act'):
    plt.rcParams["font.sans-serif"] = ["SimHei"]  # font with CJK glyph support
    plt.rcParams["axes.unicode_minus"] = False  # render the minus sign correctly with this font
    plt.title(title)
    plt.xlabel('Epoch')
    plt.ylabel(ylabel)
    plt.plot(datalist[0], label='Tanh' if flag == 'act' else '[128]')
    plt.plot(datalist[1], label='Sigmoid' if flag == 'act' else '[512 256]')
    plt.plot(datalist[2], label='ELU' if flag == 'act' else '[512 256 128 64]')
    plt.plot(datalist[3], label='ReLU' if flag == 'act' else '[256]')
    plt.legend()
    plt.grid(True)

from torch.optim import SGD

from torch.nn import MSELoss

# Feedforward network with torch.nn - regression task
# Define the feedforward network
class MyNet21(nn.Module):
    def __init__(self):
        super(MyNet21, self).__init__()
        # Input, hidden, and output layer sizes
        num_inputs, num_hiddens, num_outputs = 500, 256, 1
        # Model structure
        self.input_layer = nn.Flatten()
        self.hidden_layer = nn.Linear(num_inputs, num_hiddens)
        self.output_layer = nn.Linear(num_hiddens, num_outputs)
        self.relu = nn.ReLU()

    # Forward pass
    def forward(self, x):
        x = self.input_layer(x)
        x = self.relu(self.hidden_layer(x))
        x = self.output_layer(x)
        return x

# Training
model21 = MyNet21()  # regression model
model21 = model21.to(device)
print(model21)
criterion = MSELoss()  # loss function
criterion = criterion.to(device)
optimizer = SGD(model21.parameters(), lr=0.1)  # optimizer
epochs = 40  # number of training epochs
train_all_loss21 = []  # training loss per epoch
test_all_loss21 = []  # test loss per epoch
begintime21 = time.time()
for epoch in range(epochs):
    train_l = 0
    for data, labels in traindataloader1:
        data, labels = data.to(device=device), labels.to(device)
        pred = model21(data)
        train_each_loss = criterion(pred.view(-1, 1), labels.view(-1, 1))  # loss for this batch
        optimizer.zero_grad()  # zero the gradients
        train_each_loss.backward()  # backpropagation
        optimizer.step()  # parameter update
        train_l += train_each_loss.item()
    train_all_loss21.append(train_l)  # summed batch losses for this epoch
    with torch.no_grad():
        test_loss = 0
        for data, labels in testdataloader1:
            data, labels = data.to(device), labels.to(device)
            pred = model21(data)
            test_each_loss = criterion(pred, labels)
            test_loss += test_each_loss.item()
        test_all_loss21.append(test_loss)
    if epoch == 0 or (epoch + 1) % 10 == 0:
        print('epoch: %d | train loss:%.5f | test loss:%.5f' % (epoch + 1, train_all_loss21[-1], test_all_loss21[-1]))
endtime21 = time.time()
print("torch.nn feedforward network - regression: %d epochs, total time: %.3fs" % (epochs, endtime21 - begintime21))

# Feedforward network with torch.nn - binary classification task
import time
from torch.optim import SGD
from torch.nn.functional import binary_cross_entropy

# Define the feedforward network
class MyNet22(nn.Module):
    def __init__(self):
        super(MyNet22, self).__init__()
        # Input, hidden, and output layer sizes
        num_inputs, num_hiddens, num_outputs = 200, 256, 1
        # Model structure
        self.input_layer = nn.Flatten()
        self.hidden_layer = nn.Linear(num_inputs, num_hiddens)
        self.output_layer = nn.Linear(num_hiddens, num_outputs)
        self.relu = nn.ReLU()

    def logistic(self, x):  # logistic (sigmoid) function
        x = 1.0 / (1.0 + torch.exp(-x))
        return x

    # Forward pass
    def forward(self, x):
        x = self.input_layer(x)
        x = self.relu(self.hidden_layer(x))
        x = self.logistic(self.output_layer(x))
        return x

# Training
model22 = MyNet22()  # binary classification model with a logistic output
model22 = model22.to(device)
print(model22)
optimizer = SGD(model22.parameters(), lr=0.001)  # optimizer
epochs = 40  # number of training epochs
train_all_loss22 = []  # training loss per epoch
test_all_loss22 = []  # test loss per epoch
train_ACC22, test_ACC22 = [], []  # training and test accuracy per epoch
begintime22 = time.time()
for epoch in range(epochs):
    # Per-epoch training loss, correct training predictions, correct test predictions
    train_l, train_epoch_count, test_epoch_count = 0, 0, 0
    for data, labels in traindataloader2:
        data, labels = data.to(device), labels.to(device)
        pred = model22(data)
        train_each_loss = binary_cross_entropy(pred.view(-1), labels.view(-1))  # loss for this batch
        optimizer.zero_grad()  # zero the gradients
        train_each_loss.backward()  # backpropagation
        optimizer.step()  # parameter update
        train_l += train_each_loss.item()
        pred_labels = (pred.view(-1) > 0.5).float()  # predict 1 when the probability exceeds 0.5, else 0
        each_count = (pred_labels == labels.view(-1)).sum().item()  # correct predictions in this batch
        train_epoch_count += each_count  # correct predictions in this epoch
    train_ACC22.append(train_epoch_count / len(traindataset2))
    train_all_loss22.append(train_l)  # summed batch losses for this epoch
    with torch.no_grad():
        test_loss, each_count = 0, 0
        for data, labels in testdataloader2:
            data, labels = data.to(device), labels.to(device)
            pred = model22(data)
            test_each_loss = binary_cross_entropy(pred.view(-1), labels)
            test_loss += test_each_loss.item()
            pred_labels = (pred.view(-1) > 0.5).float()
            each_count = (pred_labels == labels.view(-1)).sum().item()
            test_epoch_count += each_count
        test_all_loss22.append(test_loss)
        test_ACC22.append(test_epoch_count / len(testdataset2))
    if epoch == 0 or (epoch + 1) % 4 == 0:
        print('epoch: %d | train loss:%.5f | test loss:%.5f | train acc:%.5f | test acc:%.5f' % (epoch + 1, train_all_loss22[-1],
              test_all_loss22[-1], train_ACC22[-1], test_ACC22[-1]))
endtime22 = time.time()
print("torch.nn feedforward network - binary classification: %d epochs, total time: %.3fs" % (epochs, endtime22 - begintime22))

# Feedforward network with torch.nn - multi-class classification task
from collections import OrderedDict
from torch.nn import CrossEntropyLoss
from torch.optim import SGD

# Define the feedforward network
class MyNet23(nn.Module):
    """
    Parameters:
        num_hiddenlayer: number of hidden layers
        num_inputs: number of input features
        num_hiddens: list with the number of neurons in each hidden layer
        num_outs: number of output neurons
        act: activation function name ('relu', 'sigmoid', 'tanh' or 'elu')
    """
    def __init__(self, num_hiddenlayer=1, num_inputs=28*28, num_hiddens=[256], num_outs=10, act='relu'):
        super(MyNet23, self).__init__()
        # Layer sizes (a 10-class problem by default)
        self.num_inputs, self.num_hiddens, self.num_outputs = num_inputs, num_hiddens, num_outs
        # Select the activation function
        if act == 'relu':
            self.act = nn.ReLU()
        elif act == 'sigmoid':
            self.act = nn.Sigmoid()
        elif act == 'tanh':
            self.act = nn.Tanh()
        elif act == 'elu':
            self.act = nn.ELU()
        else:
            raise ValueError(f'Unsupported activation function: {act}')
        print(f'Activation function used in this run: {act}')
        # Model structure
        self.input_layer = nn.Flatten()
        if num_hiddenlayer == 1:  # a single hidden layer
            self.hidden_layers = nn.Linear(self.num_inputs, self.num_hiddens[-1])
        else:  # several hidden layers
            # The activation is placed between consecutive linear layers;
            # without it the stack would collapse into a single linear map
            self.hidden_layers = nn.Sequential()
            self.hidden_layers.add_module("hidden_layer1", nn.Linear(self.num_inputs, self.num_hiddens[0]))
            for i in range(0, num_hiddenlayer - 1):
                self.hidden_layers.add_module('act' + str(i + 1), self.act)
                name = 'hidden_layer' + str(i + 2)
                self.hidden_layers.add_module(name, nn.Linear(self.num_hiddens[i], self.num_hiddens[i + 1]))
        self.output_layer = nn.Linear(self.num_hiddens[-1], self.num_outputs)

    def logistic(self, x):  # logistic (sigmoid) function
        x = 1.0 / (1.0 + torch.exp(-x))
        return x

    # Forward pass: returns raw logits, CrossEntropyLoss applies the softmax
    def forward(self, x):
        x = self.input_layer(x)
        x = self.act(self.hidden_layers(x))
        x = self.output_layer(x)
        return x

# Training
# Use the default parameters: num_inputs=28*28, num_hiddens=[256], num_outs=10, act='relu'
model23 = MyNet23()
model23 = model23.to(device)

# Wrap training in a function so that experiments 3 and 4 can reuse it
def train_and_test(model=model23):
    MyModel = model
    print(MyModel)
    optimizer = SGD(MyModel.parameters(), lr=0.01)  # optimizer
    epochs = 40  # number of training epochs
    criterion = CrossEntropyLoss()  # loss function
    train_all_loss23 = []  # training loss per epoch
    test_all_loss23 = []  # test loss per epoch
    train_ACC23, test_ACC23 = [], []  # training and test accuracy per epoch
    begintime23 = time.time()
    for epoch in range(epochs):
        train_l, train_epoch_count, test_epoch_count = 0, 0, 0
        for data, labels in traindataloader3:
            data, labels = data.to(device), labels.to(device)
            pred = MyModel(data)
            train_each_loss = criterion(pred, labels.view(-1))  # loss for this batch
            optimizer.zero_grad()  # zero the gradients
            train_each_loss.backward()  # backpropagation
            optimizer.step()  # parameter update
            train_l += train_each_loss.item()
            train_epoch_count += (pred.argmax(dim=1) == labels).sum().item()
        train_ACC23.append(train_epoch_count / len(traindataset3))
        train_all_loss23.append(train_l)  # summed batch losses for this epoch
        with torch.no_grad():
            test_loss, test_epoch_count = 0, 0
            for data, labels in testdataloader3:
                data, labels = data.to(device), labels.to(device)
                pred = MyModel(data)
                test_each_loss = criterion(pred, labels)
                test_loss += test_each_loss.item()
                test_epoch_count += (pred.argmax(dim=1) == labels).sum().item()
            test_all_loss23.append(test_loss)
            test_ACC23.append(test_epoch_count / len(testdataset3))
        if epoch == 0 or (epoch + 1) % 4 == 0:
            print('epoch: %d | train loss:%.5f | test loss:%.5f | train acc:%.5f | test acc:%.5f' % (epoch + 1, train_all_loss23[-1], test_all_loss23[-1],
                  train_ACC23[-1], test_ACC23[-1]))
    endtime23 = time.time()
    print("torch.nn feedforward network - multi-class classification: %d epochs, total time: %.3fs" % (epochs, endtime23 - begintime23))
    # Return the loss and accuracy histories for the training and test sets
    return train_all_loss23, test_all_loss23, train_ACC23, test_ACC23

train_all_loss23, test_all_loss23, train_ACC23, test_ACC23 = train_and_test(model=model23)

plt.figure(figsize=(12, 3))
plt.subplot(131)
picture('FNN - Regression - Loss', train_all_loss21, test_all_loss21)
plt.subplot(132)
picture('FNN - Binary classification - Loss', train_all_loss22, test_all_loss22)
plt.subplot(133)
picture('FNN - Multi-class classification - Loss', train_all_loss23, test_all_loss23)
plt.show()

plt.figure(figsize=(8, 3))
plt.subplot(121)
picture('FNN - Binary classification - ACC', train_ACC22, test_ACC22, type='ACC')
plt.subplot(122)
picture('FNN - Multi-class classification - ACC', train_ACC23, test_ACC23, type='ACC')
plt.show()

# Activation-function comparison. The *31, *32 and *33 histories are not defined in the
# code shown here; judging by the ComPlot labels they presumably come from train_and_test
# runs with the tanh, sigmoid and ELU activations respectively.
plt.figure(figsize=(16, 3))
plt.subplot(141)
ComPlot([train_all_loss31, train_all_loss32, train_all_loss33, train_all_loss23], title='Train_Loss')
plt.subplot(142)
ComPlot([test_all_loss31, test_all_loss32, test_all_loss33, test_all_loss23], title='Test_Loss')
plt.subplot(143)
ComPlot([train_ACC31, train_ACC32, train_ACC33, train_ACC23], title='Train_ACC')
plt.subplot(144)
ComPlot([test_ACC31, test_ACC32, test_ACC33, test_ACC23], title='Test_ACC')
plt.show()
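The final comparison plot uses histories such as train_all_loss31 that the code shown never produces. One plausible way to set up such a comparison is to build one network per activation function (and one per hidden-layer configuration) and pass each to the training function. The sketch below covers only the model-building part; `build_mlp` and `ACTIVATIONS` are hypothetical names introduced here, not the author's code, and the random batch merely stands in for a FashionMNIST batch.

```python
import torch
import torch.nn as nn

# Hypothetical lookup from activation name to module class
ACTIVATIONS = {'relu': nn.ReLU, 'sigmoid': nn.Sigmoid, 'tanh': nn.Tanh, 'elu': nn.ELU}

def build_mlp(num_inputs=28 * 28, num_hiddens=(256,), num_outs=10, act='relu'):
    """Build an MLP with the chosen activation after every hidden layer."""
    layers = [nn.Flatten()]
    in_features = num_inputs
    for width in num_hiddens:
        layers.append(nn.Linear(in_features, width))
        layers.append(ACTIVATIONS[act]())  # nonlinearity after each hidden layer
        in_features = width
    layers.append(nn.Linear(in_features, num_outs))  # output layer returns raw logits
    return nn.Sequential(*layers)

# One model per activation function, in the order used by the ComPlot labels
models = {name: build_mlp(act=name) for name in ('tanh', 'sigmoid', 'elu', 'relu')}

# A deeper variant matching the '[512 256 128 64]' label
deep = build_mlp(num_hiddens=(512, 256, 128, 64))

# Random stand-in for a batch of 4 grayscale 28x28 images (assumption)
batch = torch.randn(4, 1, 28, 28)
shapes = {name: tuple(model(batch).shape) for name, model in models.items()}
deep_shape = tuple(deep(batch).shape)

print(shapes)
print(deep_shape)
```

Each of these models could then be moved to the device and handed to a training routine like train_and_test, with the four returned histories collected for the comparison plot.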
