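I. Nova architecture

Nova is the core OpenStack service. It maintains and manages the compute resources of the cloud environment, so the entire lifecycle of a cloud instance is handled by Nova.

1.1 nova-api

Receives and responds to clients' API calls.

1.2 compute core

nova-scheduler

Decides which compute node a virtual machine runs on.

nova-compute

Manages the virtual machine lifecycle by calling the hypervisor. It normally runs on the compute nodes.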

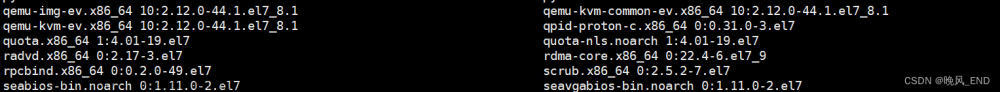

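hypervisor

The management software that virtualizes hardware for virtual machines, such as KVM and VMware.

nova-conductor

nova-compute has to update the database constantly, for example to record a VM's state. For security and scalability reasons it does not access the database directly; it goes through nova-conductor instead.

1.3 database

Usually MySQL, installed on the controller node. Nova stores part of its data in this database.

1.4 Message Queue

Used for communication among Nova's sub-services, which decouples them from one another. RabbitMQ is the usual choice.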

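II. Source walkthrough: how Nova creates an instance

1. Execution in the nova-api process:

a. nova:api:openstack:compute:servers.py:ServersController:create():

This function parses the instance data it needs from the req and body of the user's API request, for example the flavor (inst_type), image ID (image_uuid), availability zone (availability_zone), forced host and node (forced_host, forced_node), metadata (metadata), and the networks to attach (requested_networks). It then calls nova:compute:api.py:API:create() to actually start creating the instance, and finally returns the response to the user.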
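To make the inputs concrete, here is a minimal sketch of what a create-server request body can look like and how an availability_zone string of the form zone:host:node splits into the three values the controller passes on. All field values are placeholders, and split_availability_zone is a simplified, hypothetical stand-in for compute_api.parse_availability_zone, not the real Nova implementation.

```python
# Illustrative request body for POST /servers; every value is a placeholder.
body = {
    "server": {
        "name": "demo-vm",
        "imageRef": "IMAGE_UUID",
        "flavorRef": "1",
        "availability_zone": "nova:compute01:node01",
        "metadata": {"purpose": "demo"},
        "networks": [{"uuid": "NETWORK_UUID"}],
    }
}


def split_availability_zone(value):
    """Split 'zone[:host[:node]]' into (zone, host, node).

    Hypothetical helper: missing or empty parts come back as None.
    """
    if not value:
        return None, None, None
    parts = value.split(":")
    if len(parts) > 3:
        raise ValueError("expected zone[:host[:node]], got %r" % value)
    parts += [None] * (3 - len(parts))
    zone, host, node = (part or None for part in parts)
    return zone, host, node


server_dict = body["server"]
zone, forced_host, forced_node = split_availability_zone(
    server_dict.get("availability_zone"))
print(zone, forced_host, forced_node)
```

When only a zone is given, host and node stay None, which matches the `if host or node:` policy check in the controller code.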

    def create(self, req, body):
        """Creates a new server for a given user."""
        context = req.environ['nova.context']
        server_dict = body['server']
        password = self._get_server_admin_password(server_dict)
        name = common.normalize_name(server_dict['name'])
        description = name
        if api_version_request.is_supported(req, min_version='2.19'):
            description = server_dict.get('description')

        # Arguments to be passed to instance create function
        create_kwargs = {}

        # TODO(alex_xu): This is for back-compatible with stevedore
        # extension interface. But the final goal is that merging
        # all of extended code into ServersController.
        self._create_by_func_list(server_dict, create_kwargs, body)

        availability_zone = server_dict.pop("availability_zone", None)

        if api_version_request.is_supported(req, min_version='2.52'):
            create_kwargs['tags'] = server_dict.get('tags')

        helpers.translate_attributes(helpers.CREATE,
                                     server_dict, create_kwargs)

        target = {
            'project_id': context.project_id,
            'user_id': context.user_id,
            'availability_zone': availability_zone}
        context.can(server_policies.SERVERS % 'create', target)

        # TODO(Shao He, Feng) move this policy check to os-availability-zone
        # extension after refactor it.
        parse_az = self.compute_api.parse_availability_zone
        try:
            availability_zone, host, node = parse_az(context,
                                                     availability_zone)
        except exception.InvalidInput as err:
            raise exc.HTTPBadRequest(explanation=six.text_type(err))
        if host or node:
            context.can(server_policies.SERVERS % 'create:forced_host', {})

        # NOTE(danms): Don't require an answer from all cells here, as
        # we assume that if a cell isn't reporting we won't schedule into
        # it anyway. A bit of a gamble, but a reasonable one.
        min_compute_version = service_obj.get_minimum_version_all_cells(
            nova_context.get_admin_context(), ['nova-compute'])
        supports_device_tagging = (min_compute_version >=
                                   DEVICE_TAGGING_MIN_COMPUTE_VERSION)

        block_device_mapping = create_kwargs.get("block_device_mapping")
        # TODO(Shao He, Feng) move this policy check to os-block-device-mapping
        # extension after refactor it.
        if block_device_mapping:
            context.can(server_policies.SERVERS % 'create:attach_volume',
                        target)
            for bdm in block_device_mapping:
                if bdm.get('tag', None) and not supports_device_tagging:
                    msg = _('Block device tags are not yet supported.')
                    raise exc.HTTPBadRequest(explanation=msg)

        image_uuid = self._image_from_req_data(server_dict, create_kwargs)

        # NOTE(cyeoh): Although upper layer can set the value of
        # return_reservation_id in order to request that a reservation
        # id be returned to the client instead of the newly created
        # instance information we do not want to pass this parameter
        # to the compute create call which always returns both. We use
        # this flag after the instance create call to determine what
        # to return to the client
        return_reservation_id = create_kwargs.pop('return_reservation_id',
                                                  False)

        requested_networks = server_dict.get('networks', None)

        if requested_networks is not None:
            requested_networks = self._get_requested_networks(
                requested_networks, supports_device_tagging)

        # Skip policy check for 'create:attach_network' if there is no
        # network allocation request.
        if requested_networks and len(requested_networks) and \
                not requested_networks.no_allocate:
            context.can(server_policies.SERVERS % 'create:attach_network',
                        target)

        flavor_id = self._flavor_id_from_req_data(body)
        try:
            inst_type = flavors.get_flavor_by_flavor_id(
                flavor_id, ctxt=context, read_deleted="no")

            supports_multiattach = common.supports_multiattach_volume(req)
            (instances, resv_id) = self.compute_api.create(context,
                inst_type,
                image_uuid,
                display_name=name,
                display_description=description,
                availability_zone=availability_zone,
                forced_host=host, forced_node=node,
                metadata=server_dict.get('metadata', {}),
                admin_password=password,
                requested_networks=requested_networks,
                check_server_group_quota=True,
                supports_multiattach=supports_multiattach,
                **create_kwargs)
        ......

        # If the caller wanted a reservation_id, return it
        if return_reservation_id:
            return wsgi.ResponseObject({'reservation_id': resv_id})

        req.cache_db_instances(instances)
        server = self._view_builder.create(req, instances[0])

        if CONF.api.enable_instance_password:
            server['server']['adminPass'] = password

        robj = wsgi.ResponseObject(server)

        return self._add_location(robj)

b. nova:compute:api.py:API:create():

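This function checks whether specific IPs or ports were requested when more than one instance is being created, checks that the requested availability zone is available, builds the filter properties, and finally calls _create_instance().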

    def create(self, context, instance_type,
               image_href, kernel_id=None, ramdisk_id=None,
               min_count=None, max_count=None,
               display_name=None, display_description=None,
               key_name=None, key_data=None, security_groups=None,
               availability_zone=None, forced_host=None, forced_node=None,
               user_data=None, metadata=None, injected_files=None,
               admin_password=None, block_device_mapping=None,
               access_ip_v4=None, access_ip_v6=None, requested_networks=None,
               config_drive=None, auto_disk_config=None, scheduler_hints=None,
               legacy_bdm=True, shutdown_terminate=False,
               check_server_group_quota=False, tags=None,
               supports_multiattach=False):
        if requested_networks and max_count is not None and max_count > 1:
            self._check_multiple_instances_with_specified_ip(
                requested_networks)
            if utils.is_neutron():
                self._check_multiple_instances_with_neutron_ports(
                    requested_networks)

        if availability_zone:
            available_zones = availability_zones.\
                get_availability_zones(context.elevated(), True)
            if forced_host is None and availability_zone not in \
                    available_zones:
                msg = _('The requested availability zone is not available')
                raise exception.InvalidRequest(msg)

        filter_properties = scheduler_utils.build_filter_properties(
            scheduler_hints, forced_host, forced_node, instance_type)

        return self._create_instance(
            context, instance_type,
            image_href, kernel_id, ramdisk_id,
            min_count, max_count,
            display_name, display_description,
            key_name, key_data, security_groups,
            availability_zone, user_data, metadata,
            injected_files, admin_password,
            access_ip_v4, access_ip_v6,
            requested_networks, config_drive,
            block_device_mapping, auto_disk_config,
            filter_properties=filter_properties,
            legacy_bdm=legacy_bdm,
            shutdown_terminate=shutdown_terminate,
            check_server_group_quota=check_server_group_quota,
            tags=tags, supports_multiattach=supports_multiattach)

c. nova:compute:api.py:API:_create_instance():

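The main code of this function has three parts: (1) it calls _provision_instances() to write the instance parameters to the database; (2) if Cells v1 is enabled (CONF.cells.enable), it calls build_instances(); (3) otherwise it calls schedule_and_build_instances().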

    def _create_instance(self, context, instance_type,
                         image_href, kernel_id, ramdisk_id,
                         min_count, max_count,
                         display_name, display_description,
                         key_name, key_data, security_groups,
                         availability_zone, user_data, metadata, injected_files,
                         admin_password, access_ip_v4, access_ip_v6,
                         requested_networks, config_drive,
                         block_device_mapping, auto_disk_config, filter_properties,
                         reservation_id=None, legacy_bdm=True, shutdown_terminate=False,
                         check_server_group_quota=False, tags=None,
                         supports_multiattach=False):
        ......
        instances_to_build = self._provision_instances(
            context, instance_type, min_count, max_count, base_options,
            boot_meta, security_groups, block_device_mapping,
            shutdown_terminate, instance_group, check_server_group_quota,
            filter_properties, key_pair, tags, supports_multiattach)

        instances = []
        request_specs = []
        build_requests = []
        for rs, build_request, im in instances_to_build:
            build_requests.append(build_request)
            instance = build_request.get_new_instance(context)
            instances.append(instance)
            request_specs.append(rs)

        if CONF.cells.enable:
            # NOTE(danms): CellsV1 can't do the new thing, so we
            # do the old thing here. We can remove this path once
            # we stop supporting v1.
            for instance in instances:
                instance.create()
            # NOTE(melwitt): We recheck the quota after creating the objects
            # to prevent users from allocating more resources than their
            # allowed quota in the event of a race. This is configurable
            # because it can be expensive if strict quota limits are not
            # required in a deployment.
            if CONF.quota.recheck_quota:
                try:
                    compute_utils.check_num_instances_quota(
                        context, instance_type, 0, 0,
                        orig_num_req=len(instances))
                except exception.TooManyInstances:
                    with excutils.save_and_reraise_exception():
                        # Need to clean up all the instances we created
                        # along with the build requests, request specs,
                        # and instance mappings.
                        self._cleanup_build_artifacts(instances,
                                                      instances_to_build)

            self.compute_task_api.build_instances(context,
                instances=instances, image=boot_meta,
                filter_properties=filter_properties,
                admin_password=admin_password,
                injected_files=injected_files,
                requested_networks=requested_networks,
                security_groups=security_groups,
                block_device_mapping=block_device_mapping,
                legacy_bdm=False)
        else:
            self.compute_task_api.schedule_and_build_instances(
                context,
                build_requests=build_requests,
                request_spec=request_specs,
                image=boot_meta,
                admin_password=admin_password,
                injected_files=injected_files,
                requested_networks=requested_networks,
                block_device_mapping=block_device_mapping,
                tags=tags)

        return instances, reservation_id

Let us first look at the first part of _create_instance(), the _provision_instances() function:

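This function mainly creates four kinds of records:

| Object | Description |
| --- | --- |
| req_spec | The data the scheduler needs to place the VM; stored in the request_specs table of the nova-api database. |
| instance | Information about the VM; stored in the nova database. |
| build_request | While a VM is being created, nova-api does not write it to the instances table of the nova database; it stores it in the build_requests table of the nova-api database instead. |
| inst_mapping | The mapping of instances to cells; stored in the instance_mappings table of the nova-api database. |

Finally, let us look at the third part, schedule_and_build_instances(), which is where scheduling of the VM begins.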

d. nova:conductor:api.py:ComputeTaskAPI:schedule_and_build_instances()

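This function calls nova:conductor:rpcapi.py:ComputeTaskAPI:schedule_and_build_instances(), which packages the parameters produced by nova-api and makes an asynchronous RPC call (a cast). Because the call is asynchronous, nova-api returns immediately and goes on serving user API requests. From this point on, the conductor receives the RPC message and carries on with scheduling the VM.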
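The difference between an asynchronous cast and a synchronous call can be illustrated with a small in-process sketch. It uses only the Python standard library; ToyRpcServer, cast, and call are hypothetical names invented for this illustration and are not the messaging API Nova actually uses.

```python
import queue
import threading


class ToyRpcServer:
    """Hypothetical stand-in for a service that consumes a message queue."""

    def __init__(self):
        self.inbox = queue.Queue()
        self.handled = []
        worker = threading.Thread(target=self._serve, daemon=True)
        worker.start()

    def _serve(self):
        while True:
            method, kwargs, reply_q = self.inbox.get()
            result = "%s(%s) done" % (method, sorted(kwargs))
            self.handled.append(method)
            if reply_q is not None:  # only a call carries a reply queue
                reply_q.put(result)
            self.inbox.task_done()


def cast(server, method, **kwargs):
    """Fire and forget: enqueue the message and return immediately."""
    server.inbox.put((method, kwargs, None))


def call(server, method, timeout=5, **kwargs):
    """Enqueue the message, then block until the server replies."""
    reply_q = queue.Queue(maxsize=1)
    server.inbox.put((method, kwargs, reply_q))
    return reply_q.get(timeout=timeout)


service = ToyRpcServer()
cast(service, "schedule_and_build_instances", build_requests=[])
print(call(service, "select_destinations", spec_obj=None))
service.inbox.join()  # wait until every queued message has been handled
```

The caller of cast gets nothing back and never waits, which is why nova-api can respond to the user while the build continues elsewhere. The caller of call is blocked until the reply arrives.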

During the steps above, vm_state is building and task_state is scheduling. These are set in _populate_instance_for_create() of the API class in nova/compute/api.py, which sets vm_state in the instance record to BUILDING and task_state to SCHEDULING to show that scheduling is in progress.

_populate_instance_for_create() is called from _provision_instances() when the instance record is created.

2. Execution in the nova-conductor process

nova:conductor:manager.py:ComputeTaskManager:schedule_and_build_instances():

The nova-conductor process receives the RPC message from nova-api in this function. It mainly calls _schedule_instances(), which calls nova:scheduler:client:__init__.py:SchedulerClient:select_destinations(), which calls nova:scheduler:client:query.py:select_destinations(), which finally calls nova:scheduler:rpcapi.py:SchedulerAPI:select_destinations(). This is another RPC, but a synchronous one (a call): the caller waits until the result comes back. At this point the nova-scheduler process receives the RPC message and the actual scheduling begins.

    def schedule_and_build_instances(self, context, build_requests,
                                     request_specs, image,
                                     admin_password, injected_files,
                                     requested_networks, block_device_mapping,
                                     tags=None):
        ......
        with obj_target_cell(instance, cell) as cctxt:
            self.compute_rpcapi.build_and_run_instance(
                cctxt, instance=instance, image=image,
                request_spec=request_spec,
                filter_properties=filter_props,
                admin_password=admin_password,
                injected_files=injected_files,
                requested_networks=requested_networks,
                security_groups=legacy_secgroups,
                block_device_mapping=instance_bdms,
                host=host.service_host, node=host.nodename,
                limits=host.limits, host_list=host_list)

3. Execution in the nova-scheduler process

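nova:scheduler:manager.py:SchedulerManager:select_destinations():

In this function the nova-scheduler process receives the RPC message in which nova-conductor asks it to schedule the VM. Internally it calls the driver's select_destinations(). The driver is the scheduler driver: setting the scheduler_driver option in nova.conf to filter_scheduler selects filter_scheduler as the scheduler (the alternatives are caching_scheduler, chance_scheduler, and fake_scheduler). The filter_scheduler algorithm applies the configured filters (also specified in nova.conf) to drop compute nodes that do not meet the requirements, then computes a weight for each remaining node and picks the node with the highest weight to create the VM on. The filters themselves will be covered in a later post.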
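The filter-then-weigh idea can be sketched in a few lines. This is a toy model, not Nova's filter scheduler: the Host fields, the two filters, and the weigher are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    free_ram_mb: int
    free_vcpus: int


def ram_filter(host, request):
    """Keep hosts with enough free RAM for the request."""
    return host.free_ram_mb >= request["ram_mb"]


def vcpu_filter(host, request):
    """Keep hosts with enough free vCPUs for the request."""
    return host.free_vcpus >= request["vcpus"]


def ram_weigher(host):
    """Prefer the host with the most free RAM."""
    return host.free_ram_mb


def select_destination(hosts, request, filters, weigher):
    """Drop hosts that fail any filter, then pick the highest weight."""
    candidates = [h for h in hosts if all(f(h, request) for f in filters)]
    if not candidates:
        raise LookupError("no host satisfies the request")
    return max(candidates, key=weigher)


hosts = [
    Host("node1", free_ram_mb=2048, free_vcpus=4),
    Host("node2", free_ram_mb=8192, free_vcpus=1),
    Host("node3", free_ram_mb=4096, free_vcpus=8),
]
request = {"ram_mb": 1024, "vcpus": 2}
best = select_destination(hosts, request, [ram_filter, vcpu_filter], ram_weigher)
print(best.name)  # node3: node2 has the most RAM but fails the vCPU filter
```

Filtering comes first so that weighing only ranks hosts that can actually run the VM.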

    def select_destinations(self, ctxt, request_spec=None,
            filter_properties=None, spec_obj=_sentinel, instance_uuids=None,
            return_objects=False, return_alternates=False):
        ......
        # Only return alternates if both return_objects and return_alternates
        # are True.
        return_alternates = return_alternates and return_objects
        selections = self.driver.select_destinations(ctxt, spec_obj,
                instance_uuids, alloc_reqs_by_rp_uuid, provider_summaries,
                allocation_request_version, return_alternates)
        ......
        return selections

Once the target compute node has been chosen, nova-scheduler returns it to nova-conductor, because nova-conductor made a synchronous RPC call. Execution then returns to nova:conductor:manager.py:ComputeTaskManager:schedule_and_build_instances(), and the nova-conductor process carries on.

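4. Execution in the nova-conductor process

nova:conductor:manager.py:ComputeTaskManager:schedule_and_build_instances():

In this function nova-conductor performs a series of processing steps and finally calls nova:compute:rpcapi.py:ComputeAPI:build_and_run_instance(). That function makes the now-familiar RPC call, telling the nova-compute process to deploy the VM on the compute node it runs on. Note that this call is asynchronous.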
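build_and_run_instance() also negotiates the RPC version: if the peer cannot accept the newer message version, it falls back to an older one and drops the argument that the older version does not know. A minimal sketch of that pattern follows. ToyClient and prepare_message are hypothetical, and the version check is simplified to a plain tuple comparison.

```python
class ToyClient:
    """Hypothetical client that knows the highest version the peer accepts."""

    def __init__(self, max_version):
        self.max_version = max_version

    @staticmethod
    def _as_tuple(version):
        # Compare numerically: "4.5" must sort below "4.19".
        return tuple(int(part) for part in version.split("."))

    def can_send_version(self, version):
        return self._as_tuple(version) <= self._as_tuple(self.max_version)


def prepare_message(client, kwargs, wanted="4.19", fallback="4.0",
                    new_args=("host_list",)):
    """Return (version, kwargs), dropping new arguments on fallback."""
    kwargs = dict(kwargs)  # do not mutate the caller's dict
    if client.can_send_version(wanted):
        return wanted, kwargs
    for name in new_args:
        kwargs.pop(name, None)
    return fallback, kwargs


message = {"instance": "vm-1", "host_list": ["node1", "node2"]}
print(prepare_message(ToyClient("4.21"), message))
print(prepare_message(ToyClient("4.5"), message))
```

Dropping host_list on fallback keeps an older nova-compute from receiving a keyword argument it does not accept.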

    def build_and_run_instance(self, ctxt, instance, host, image, request_spec,
            filter_properties, admin_password=None, injected_files=None,
            requested_networks=None, security_groups=None,
            block_device_mapping=None, node=None, limits=None,
            host_list=None):
        # NOTE(edleafe): compute nodes can only use the dict form of limits.
        if isinstance(limits, objects.SchedulerLimits):
            limits = limits.to_dict()
        kwargs = {"instance": instance,
                  "image": image,
                  "request_spec": request_spec,
                  "filter_properties": filter_properties,
                  "admin_password": admin_password,
                  "injected_files": injected_files,
                  "requested_networks": requested_networks,
                  "security_groups": security_groups,
                  "block_device_mapping": block_device_mapping,
                  "node": node,
                  "limits": limits,
                  "host_list": host_list,
                  }
        client = self.router.client(ctxt)
        version = self._ver(ctxt, '4.19')
        if not client.can_send_version(version):
            version = '4.0'
            kwargs.pop("host_list")
        cctxt = client.prepare(server=host, version=version)
        cctxt.cast(ctxt, 'build_and_run_instance', **kwargs)

5. Execution in the nova-compute process

nova:compute:manager.py:ComputeManager:build_and_run_instance():

This function goes on to call _do_build_and_run_instance(), which sets vm_state in the instance record to BUILDING (in effect unchanged) and task_state to None.
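The save in this step passes expected_task_state, so the update only goes through if the stored task_state is one the caller expects. The sketch below shows that guard with an in-memory dictionary. ToyInstanceStore and UnexpectedTaskState are hypothetical names, and a real implementation would perform the check and the update atomically in the database.

```python
_NO_CHECK = object()  # sentinel: None is itself a valid expected state


class UnexpectedTaskState(Exception):
    """Raised when the stored task_state is not one the caller expected."""


class ToyInstanceStore:
    """Hypothetical in-memory stand-in for the instance table."""

    def __init__(self):
        self.rows = {}

    def save(self, uuid, updates, expected_task_state=_NO_CHECK):
        row = self.rows[uuid]
        if expected_task_state is not _NO_CHECK:
            if isinstance(expected_task_state, (tuple, list)):
                allowed = tuple(expected_task_state)
            else:
                allowed = (expected_task_state,)
            if row["task_state"] not in allowed:
                raise UnexpectedTaskState(
                    "expected %r, found %r" % (allowed, row["task_state"]))
        row.update(updates)
        return row


store = ToyInstanceStore()
store.rows["vm-1"] = {"vm_state": "building", "task_state": "scheduling"}

# Same shape as instance.save(expected_task_state=(SCHEDULING, None)).
store.save("vm-1", {"task_state": None},
           expected_task_state=("scheduling", None))

try:
    # task_state is now None, so expecting "spawning" is rejected.
    store.save("vm-1", {"vm_state": "active"}, expected_task_state="spawning")
except UnexpectedTaskState as err:
    print("rejected:", err)
```

The guard keeps a stale or duplicate request from overwriting the state of an instance that has already moved on.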

    def _do_build_and_run_instance(self, context, instance, image,
            request_spec, filter_properties, admin_password, injected_files,
            requested_networks, security_groups, block_device_mapping,
            node=None, limits=None, host_list=None):
        try:
            LOG.debug('Starting instance...', instance=instance)
            instance.vm_state = vm_states.BUILDING
            instance.task_state = None
            instance.save(expected_task_state=
                    (task_states.SCHEDULING, None))
        ......

_do_build_and_run_instance() then calls _build_and_run_instance(), which calls _build_resources() to request network and disk resources. Once the resources are allocated, task_state is updated to SPAWNING while vm_state stays BUILDING. Next it calls the spawn function of the driver (here libvirt.LibvirtDriver, the hypervisor driver, set by compute_driver in nova.conf; the same driver is meant below) to create the VM. This is the longest step. When creation finishes and the call returns, vm_state in the instance record becomes ACTIVE, task_state becomes None, and power_state becomes RUNNING. That completes the creation of the VM.

Article source: https://www.toymoban.com/news/detail-709214.html

    def _build_and_run_instance(self, context, instance, image, injected_files,
            admin_password, requested_networks, security_groups,
            block_device_mapping, node, limits, filter_properties,
            request_spec=None):
        ......
        with self._build_resources(context, instance,
                requested_networks, security_groups, image_meta,
                block_device_mapping) as resources:
            instance.vm_state = vm_states.BUILDING
            instance.task_state = task_states.SPAWNING
            # NOTE(JoshNang) This also saves the changes to the
            # instance from _allocate_network_async, as they aren't
            # saved in that function to prevent races.
            instance.save(expected_task_state=
                    task_states.BLOCK_DEVICE_MAPPING)
            block_device_info = resources['block_device_info']
            network_info = resources['network_info']
            allocs = resources['allocations']
            LOG.debug('Start spawning the instance on the hypervisor.',
                      instance=instance)
            with timeutils.StopWatch() as timer:
                self.driver.spawn(context, instance, image_meta,
                                  injected_files, admin_password,
                                  allocs, network_info=network_info,
                                  block_device_info=block_device_info)
            LOG.info('Took %0.2f seconds to spawn the instance on '
                     'the hypervisor.', timer.elapsed(),
                     instance=instance)
        ......
        compute_utils.notify_about_instance_create(context, instance,
                self.host, phase=fields.NotificationPhase.END,
                bdms=block_device_mapping)

Next, let us look at how _build_resources() is implemented.

First, it calls _build_networks_for_instance() to allocate network resources for the VM. That function uses the driver to obtain MAC addresses for the VM (the IP address is assigned by DHCP when the VM boots), then calls _allocate_network() to allocate the network asynchronously and updates task_state to NETWORKING, leaving vm_state unchanged.

Second, before preparing block devices, it calls prepare_networks_before_block_device_mapping() to configure the VM's network.

Third, it changes task_state to BLOCK_DEVICE_MAPPING, again leaving vm_state unchanged, and calls _prep_block_device() to allocate block devices for the VM, which in turn relies on the driver to do the actual work.
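Because _build_resources() is used in a with statement, the resources it prepares are handed to the spawn step and can be cleaned up if that step fails. The following sketch shows the pattern with contextlib. The resource values, the log entries, and the single "cleanup" step are placeholders, not Nova's actual cleanup logic.

```python
from contextlib import contextmanager


@contextmanager
def build_resources(log):
    """Prepare network and block devices, then yield them to the caller."""
    resources = {}
    log.append("task_state=networking")
    resources["network_info"] = ["port-1"]  # placeholder value
    log.append("task_state=block_device_mapping")
    resources["block_device_info"] = {"root_device_name": "/dev/vda"}
    try:
        yield resources
    except Exception:
        # The body of the with block failed: release what was allocated.
        log.append("cleanup")
        raise


def spawn(resources, log, fail=False):
    """Stand-in for driver.spawn(); optionally simulates a failure."""
    log.append("task_state=spawning")
    if fail:
        raise RuntimeError("spawn failed")
    log.append("vm_state=active")


log = []
with build_resources(log) as resources:
    spawn(resources, log)
print(log)
```

With fail=True the exception propagates out of the with block after "cleanup" is logged, so a failed spawn does not leave the prepared resources behind.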

    def _build_resources(self, context, instance, requested_networks,
                         security_groups, image_meta, block_device_mapping):
        resources = {}
        network_info = None
        try:
            LOG.debug('Start building networks asynchronously for instance.',
                      instance=instance)
            network_info = self._build_networks_for_instance(context, instance,
                    requested_networks, security_groups)
            resources['network_info'] = network_info
        ......
        try:
            # Depending on a virt driver, some network configuration is
            # necessary before preparing block devices.
            self.driver.prepare_networks_before_block_device_mapping(
                instance, network_info)

            # Verify that all the BDMs have a device_name set and assign a
            # default to the ones missing it with the help of the driver.
            self._default_block_device_names(instance, image_meta,
                                             block_device_mapping)

            LOG.debug('Start building block device mappings for instance.',
                      instance=instance)
            instance.vm_state = vm_states.BUILDING
            instance.task_state = task_states.BLOCK_DEVICE_MAPPING
            instance.save()

            block_device_info = self._prep_block_device(context, instance,
                    block_device_mapping)
            resources['block_device_info'] = block_device_info
        ......
            raise exception.BuildAbortException(
                    instance_uuid=instance.uuid,
                    reason=six.text_type(exc))
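Taken together, the state changes described in this walkthrough form a short sequence. The sketch below lists them as plain data. The stage labels are informal descriptions written for this summary, and the lowercase strings are the values the vm_state and task_state constants are assumed to hold.

```python
# (stage, vm_state, task_state), in the order described in the walkthrough.
CREATE_FLOW = [
    ("nova-api creates the instance record", "building", "scheduling"),
    ("nova-compute starts the build", "building", None),
    ("network allocation", "building", "networking"),
    ("block device preparation", "building", "block_device_mapping"),
    ("driver.spawn()", "building", "spawning"),
    ("spawn finished", "active", None),
]


def transitions(flow):
    """Return (old, new) state pairs for each consecutive pair of stages."""
    states = [(vm, task) for _stage, vm, task in flow]
    return list(zip(states, states[1:]))


for stage, vm_state, task_state in CREATE_FLOW:
    print("%-40s vm_state=%-9s task_state=%s" % (stage, vm_state, task_state))
```

Only task_state changes while the build is in progress; vm_state leaves building once, at the very end.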