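1. Open a terminal and run the first command below, pressing Enter at every prompt to accept the defaults; then append the generated public key to ~/.ssh/authorized_keys: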

ssh-keygen -t rsa

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

2. In System Settings, go to Sharing > Remote Login and turn it on, as shown below:

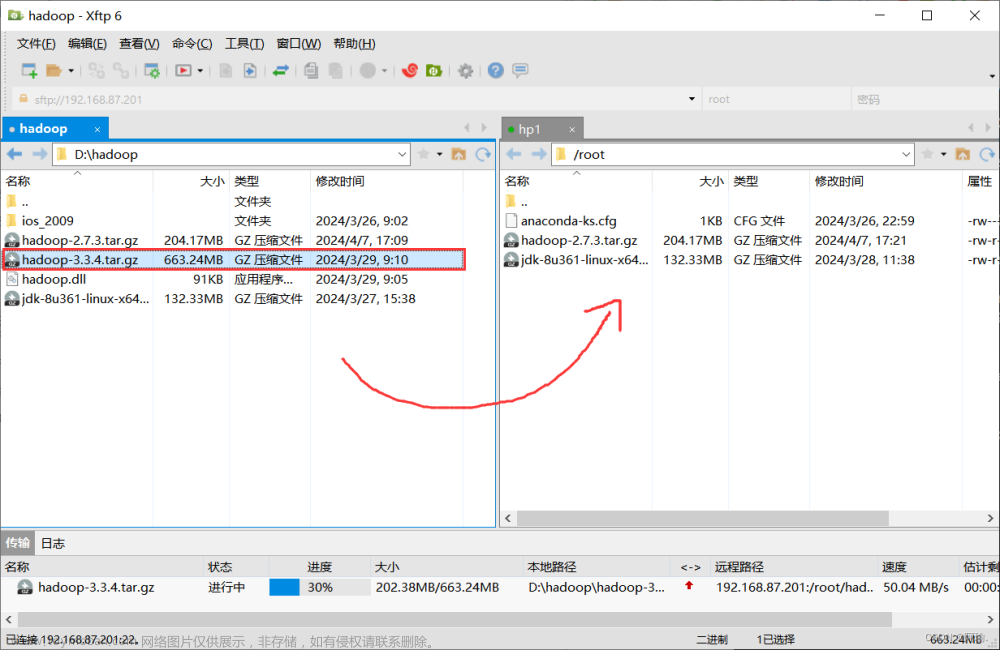
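The start scripts used in step 10 log in to the local machine over SSH, which is why the key from step 1 has to be accepted without a password. The sketch below shows one way to make the append step safe to repeat. `add_key_once` is a hypothetical helper name (not part of OpenSSH), and the default key path from step 1 is assumed.

```shell
# add_key_once PUBKEY_FILE AUTHORIZED_KEYS_FILE
# Hypothetical helper: appends the public key only if that exact line is not
# already present, then tightens permissions on the directory and the file.
add_key_once() {
  local pub="$1" auth="$2"
  mkdir -p "$(dirname "$auth")"
  chmod 700 "$(dirname "$auth")"
  touch "$auth"
  # -x: match whole lines, -F: fixed strings, -f: read the pattern from the key file
  grep -qxF -f "$pub" "$auth" || cat "$pub" >> "$auth"
  chmod 600 "$auth"
}

# Example (commented out so that sourcing this snippet changes nothing):
# add_key_once ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
# ssh -o BatchMode=yes localhost true && echo "passwordless login works"
```

Running the plain `cat ... >>` command twice would leave a duplicate line in authorized_keys; that is harmless, but the check above keeps the file tidy.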

3. Download the release package from the official website and extract it:

Apache Hadoop

cd ~

tar -zxvf hadoop-3.1.4.tar.gz
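After extraction it can be worth confirming that the scripts and the configuration directory used in the later steps are where this guide expects them. This is a minimal sketch; `check_hadoop_layout` is a hypothetical helper, and the file list is simply the set of paths that appear elsewhere in this article.

```shell
# check_hadoop_layout DIR
# Hypothetical helper: prints every expected file that is missing under DIR
# and returns non-zero if at least one is missing.
check_hadoop_layout() {
  local home="$1" missing=0 f
  for f in bin/hdfs bin/mapred sbin/start-dfs.sh sbin/start-yarn.sh etc/hadoop/core-site.xml; do
    if [ ! -e "$home/$f" ]; then
      echo "missing: $home/$f"
      missing=1
    fi
  done
  return "$missing"
}

# Example (commented out; assumes the archive was extracted in the home directory):
# check_hadoop_layout ~/hadoop-3.1.4 && echo "layout looks complete"
```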

4. Edit core-site.xml

cd ~/hadoop-3.1.4/etc/hadoop

vim core-site.xml

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/Users/winhye/hadoop/tmp</value>

</property>

<property>

<!-- fs.default.name is deprecated; use fs.defaultFS instead -->

<name>fs.defaultFS</name>

<value>hdfs://hadoop:9000</value>

</property>

<!-- Buffer size in bytes; tune it to the server's capacity -->

<property>

<name>io.file.buffer.size</name>

<value>4096</value>

</property>

<!-- Enable the trash feature so that deleted data can be recovered from the trash; the value is in minutes -->

<property>

<name>fs.trash.interval</name>

<value>10080</value>

</property>

</configuration>
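To double-check what was saved, the values can be read back from the file. The sketch below is a naive, line-based lookup and not a real XML parser: it assumes each `<name>` and `<value>` element sits on a single line, as in the listing above. `get_prop` and `minutes_to_days` are hypothetical helper names.

```shell
# get_prop FILE NAME
# Hypothetical helper: prints the <value> that follows the given <name>.
# Line-based on purpose; it does not understand comments or multi-line values.
get_prop() {
  awk -v key="$2" '
    /<name>/  { n = $0; sub(/.*<name>/, "", n); sub(/<\/name>.*/, "", n) }
    /<value>/ { if (n == key) { v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v); print v; exit } }
  ' "$1"
}

# fs.trash.interval is expressed in minutes; convert it to whole days.
minutes_to_days() { echo $(( $1 / 60 / 24 )); }

minutes_to_days 10080   # prints 7

# Example (commented out; assumes the install path used in this guide):
# conf=~/hadoop-3.1.4/etc/hadoop/core-site.xml
# get_prop "$conf" fs.defaultFS
# minutes_to_days "$(get_prop "$conf" fs.trash.interval)"
```

With the settings above, deleted files stay in the trash for 7 days. Once the environment from step 8 is in place, `hdfs getconf -confKey fs.defaultFS` should report the same value as Hadoop itself sees it.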

5. Edit hdfs-site.xml

cd ~/hadoop-3.1.4/etc/hadoop

vim hdfs-site.xml

<configuration>

<!-- Binding to 0.0.0.0 allows access from outside the server -->

<property>

<name>dfs.namenode.http-address</name>

<value>0.0.0.0:9870</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>0.0.0.0:9868</value>

</property>

<!-- Data storage locations; separate multiple directories with commas -->

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/Users/winhye/hadoop_bigdata/data/hadoop/namenode</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/Users/winhye/hadoop_bigdata/data/hadoop/datanode</value>

</property>

<!-- Directories for the metadata edit log and the checkpoint logs -->

<property>

<name>dfs.namenode.edits.dir</name>

<value>file:/Users/winhye/hadoop_bigdata/data/hadoop/edits</value>

</property>

<property>

<name>dfs.namenode.checkpoint.dir</name>

<value>file:/Users/winhye/hadoop_bigdata/data/hadoop/snn/checkpoint</value>

</property>

<property>

<name>dfs.namenode.checkpoint.edits.dir</name>

<value>file:/Users/winhye/hadoop_bigdata/data/hadoop/snn/edits</value>

</property>

<!-- Temporary file directory -->

<property>

<name>dfs.tmp.dir</name>

<value>file:/Users/winhye/hadoop_bigdata/data/hadoop/tmp</value>

</property>

<!-- Number of replicas kept for each block -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<!-- HDFS file permission checking -->

<property>

<name>dfs.permissions.enabled</name>

<value>true</value>

</property>

<!-- Each block is 128 MB; the value is in bytes -->

<property>

<name>dfs.blocksize</name>

<value>134217728</value>

</property>

</configuration>
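Two details in this file are easy to get wrong: the directories are given as `file:` URIs rather than plain paths, and dfs.blocksize is in bytes. The sketch below converts a URI to a path, creates the directories up front, and reproduces the block-size arithmetic. Hadoop usually creates these directories itself, so pre-creating them is only a way to surface permission problems early. All three function names are hypothetical.

```shell
# uri_to_path URI: strips a leading "file:" scheme, leaving a filesystem path.
uri_to_path() { printf '%s\n' "${1#file:}"; }

# make_dirs URI...: creates the directory behind each file: URI.
make_dirs() {
  local u
  for u in "$@"; do
    mkdir -p "$(uri_to_path "$u")" || return 1
  done
}

# mb_to_bytes N: N mebibytes expressed in bytes.
mb_to_bytes() { echo $(( $1 * 1024 * 1024 )); }

uri_to_path file:/Users/winhye/hadoop_bigdata/data/hadoop/namenode
mb_to_bytes 128   # prints 134217728, the dfs.blocksize value used above

# Example (commented out; paths copied from the configuration above):
# make_dirs file:/Users/winhye/hadoop_bigdata/data/hadoop/namenode \
#           file:/Users/winhye/hadoop_bigdata/data/hadoop/datanode
```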

6. Edit yarn-site.xml

cd ~/hadoop-3.1.4/etc/hadoop

vim yarn-site.xml

<configuration>

<property>

<!-- Allows access from outside the server -->

<name>yarn.resourcemanager.hostname</name>

<value>0.0.0.0</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>0.0.0.0:8088</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>2048</value>

</property>

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>512</value>

</property>

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>2.1</value>

</property>

</configuration>
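The memory and retention numbers above are related by simple arithmetic, sketched below with hypothetical helper names. The container count looks at memory only and assumes every container asks for the minimum allocation; real jobs usually request more.

```shell
# max_containers NODE_MB MIN_ALLOC_MB: upper bound on minimum-size containers.
max_containers() { echo $(( $1 / $2 )); }

# vmem_limit_mb PMEM_MB RATIO: virtual memory allowed for a container,
# i.e. its physical allocation times yarn.nodemanager.vmem-pmem-ratio.
vmem_limit_mb() { awk -v p="$1" -v r="$2" 'BEGIN { printf "%.1f\n", p * r }'; }

# seconds_to_days SECONDS: whole days in the given number of seconds.
seconds_to_days() { echo $(( $1 / 86400 )); }

max_containers 2048 512   # prints 4
vmem_limit_mb 512 2.1     # prints 1075.2
seconds_to_days 604800    # prints 7
```

So with these settings the NodeManager can hold at most four 512 MB containers, each allowed about 1075 MB of virtual memory, and aggregated logs are kept for 7 days.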

7. Edit mapred-site.xml

cd ~/hadoop-3.1.4/etc/hadoop

vim mapred-site.xml

<configuration>

<!-- Host and port of the job history server; 0.0.0.0 allows access from outside the server -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>0.0.0.0:10020</value>

</property>

<!-- Host and port of the job history web UI -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>0.0.0.0:19888</value>

</property>

</configuration>
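One property this file does not set is mapreduce.framework.name. Its default value in Hadoop is `local`, so MapReduce jobs would run in a single local process instead of on the YARN setup from step 6. If running jobs on YARN is the goal (an assumption; this guide does not say), a property like the one printed below would go inside `<configuration>`. `hadoop_property` is a hypothetical helper that only prints text and does not escape XML special characters.

```shell
# hadoop_property NAME VALUE
# Hypothetical helper: prints one <property> element for a Hadoop *-site.xml file.
hadoop_property() {
  printf '<property>\n  <name>%s</name>\n  <value>%s</value>\n</property>\n' "$1" "$2"
}

hadoop_property mapreduce.framework.name yarn
```

The output can be pasted into mapred-site.xml by hand; the snippet deliberately does not edit the file itself.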

8. Configure the environment variables

vim ~/.bash_profile

export JAVA_8_HOME=/Library/Java/JavaVirtualMachines/jdk1.8.0_202.jdk/Contents/Home

export JAVA_HOME=$JAVA_8_HOME

export PATH=$JAVA_HOME/bin:$PATH

export HADOOP_HOME=/Users/winhye/hadoop-3.1.4

export PATH=$HADOOP_HOME/sbin:$HADOOP_HOME/bin:$PATH

9. Configure the hosts file

sudo vim /etc/hosts

127.0.0.1 hadoop

10. Start the services

# Format the NameNode first:

cd ~/hadoop-3.1.4/

# Format command:

bin/hdfs namenode -format # or the older, deprecated form: bin/hadoop namenode -format

# Start HDFS:

sbin/start-dfs.sh

# Start YARN:

sbin/start-yarn.sh

# Start the HistoryServer:

sbin/mr-jobhistory-daemon.sh start historyserver

# Note: the command above is deprecated; use this one to start the HistoryServer instead:

bin/mapred --daemon start historyserver
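Once everything has been started, `jps` (shipped with the JDK) lists the running Java processes, and the Hadoop daemons appear under their class names. The sketch below reports which of the expected daemons are absent from that listing. `missing_daemons` is a hypothetical helper, and the list of names assumes all of HDFS, YARN and the HistoryServer were started as described above.

```shell
# missing_daemons: reads `jps` output on stdin ("<pid> <name>" per line) and
# prints the name of each expected Hadoop daemon that is not listed.
missing_daemons() {
  local jps_out d
  jps_out="$(cat)"
  for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager JobHistoryServer; do
    # Compare the second field exactly, so SecondaryNameNode does not count as NameNode.
    printf '%s\n' "$jps_out" |
      awk -v d="$d" '$2 == d { found = 1 } END { exit !found }' || echo "$d"
  done
}

# Example (commented out; needs a running installation):
# jps | missing_daemons
```

No output means all six daemons are up. With the addresses configured in steps 5 to 7, the web interfaces should then be reachable on ports 9870 (NameNode), 8088 (ResourceManager) and 19888 (job history).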