Deploying the YOLOv5 algorithm on Rockchip platforms

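This walkthrough is aimed at beginners. On the PC we first run simulated inference three times: with the model shipped in the Rockchip project, with the yolov5 PyTorch model, and with a self-trained yolov5 model. Once simulation works, we repeat the same three cases on the board: the model provided by RK, the yolov5 PyTorch model, and the self-trained yolov5 model. Finally we deploy the self-trained yolov5 model for real-time video. The path goes from simulation to the board and from still images to video, so it is fairly long; feel free to skip the chapters that do not apply to you. Let's get started!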

1. Deployment overview

Environment: Ubuntu 20.04, Python 3.8

Chip: RK3568

Board OS: Buildroot

Development board: RP-RK3568-B

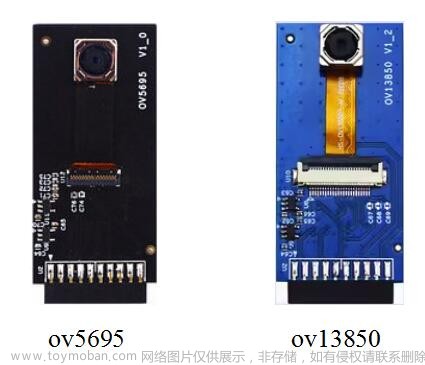

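Dependencies: Qt + OpenCV + RKNN + RGA, covering video capture, scaling, detection and display output. USB and MIPI cameras are supported as input; MIPI and HDMI are supported as output.

Main reference documents: Rockchip_Quick_Start_RKNN_Toolkit2_CN-1.4.0.pdf and Rockchip_User_Guide_RKNN_Toolkit2_CN-1.4.0.pdf

2. Training the yolov5 model

Training is already well covered by blog posts elsewhere, so only the steps are recorded briefly here. Do pay attention to the yolov5 version mentioned below.

2.1 Creating the conda environment

PC: Windows 10

CUDA: 11.7

# Create the conda environment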

conda create -n rkyolov5 python=3.8

# Activate the conda environment

conda activate rkyolov5

# Remove all configured mirror channels

conda config --remove-key channels

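# Install PyTorch

conda install pytorch torchvision torchaudio pytorch-cuda=11.7 -c pytorch -c nvidia

Install the PyTorch build that matches your CUDA version, as listed on the official PyTorch website.

2.2 Training yolov5

The matching yolov5 version: https://github.com/ultralytics/yolov5/tree/c5360f6e7009eb4d05f14d1cc9dae0963e949213

This version is v5.0; the corresponding weight files can be downloaded from the official site.

Change the yolov5 activation function from SiLU to ReLU. This costs some accuracy but improves performance.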
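To make the trade-off concrete, here is a minimal pure-Python sketch of the two activations. It is only an illustration of the math, not the yolov5 code itself. In the yolov5 sources the activation is, as far as I can tell, set in the Conv block of models/common.py, so treat that location as an assumption and check your checkout.

```python
import math


def silu(x: float) -> float:
    """SiLU (swish): x * sigmoid(x). Needs one exponential per element."""
    return x / (1.0 + math.exp(-x))


def relu(x: float) -> float:
    """ReLU: max(0, x). A single comparison, no exponential."""
    return x if x > 0.0 else 0.0


if __name__ == "__main__":
    # Print both activations side by side for a few sample inputs.
    for v in (-2.0, -0.5, 0.0, 0.5, 2.0):
        print(f"x={v:+.1f}  silu={silu(v):+.4f}  relu={relu(v):+.4f}")
```

ReLU drops the smooth negative tail that SiLU keeps, which is one plausible reason for the accuracy loss mentioned above, while avoiding the exponential makes it cheaper to evaluate.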

The following Q&A records the errors encountered during training.

Q: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at TensorShape.cpp:2228.) return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

A: In functional.py, change return _VF.meshgrid(tensors, **kwargs) to return _VF.meshgrid(tensors, **kwargs, indexing='ij')
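For readers unsure what the indexing argument changes, the following pure-Python stand-in (a hypothetical helper, not the torch implementation) shows the two conventions for a two-input meshgrid. The 'ij' layout is the one the fix above selects.

```python
def meshgrid(a, b, indexing="ij"):
    """Pure-Python stand-in for a two-input meshgrid (illustration only)."""
    if indexing == "ij":
        # Result shape is (len(a), len(b)): rows follow a, columns follow b.
        grid_a = [[x for _ in b] for x in a]
        grid_b = [[y for y in b] for _ in a]
    elif indexing == "xy":
        # Result shape is (len(b), len(a)): the first two dimensions are swapped.
        grid_a = [[x for x in a] for _ in b]
        grid_b = [[y for _ in a] for y in b]
    else:
        raise ValueError("indexing must be 'ij' or 'xy'")
    return grid_a, grid_b


if __name__ == "__main__":
    ga, gb = meshgrid([0, 1, 2], [10, 20], indexing="ij")
    print(ga)
    print(gb)
```

A less invasive alternative to patching the installed functional.py may be to pass indexing='ij' at the call site inside the yolov5 code, provided your PyTorch version already accepts that argument; that is a suggestion, not something the original author tested.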

Q: RuntimeError: result type Float can't be cast to the desired output type __int64

A: Make two changes in loss.py:

- Change anchors = self.anchors[i] to anchors, shape = self.anchors[i], p[i].shape

- Change

indices.append((b, a, gj.clamp_(0, gain[3] - 1), gi.clamp_(0, gain[2] - 1))) # image, anchor, grid indices

to

indices.append((b, a, gj.clamp_(0, shape[2] - 1), gi.clamp_(0, shape[3] - 1))) # image, anchor, grid

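3. Setting up the Ubuntu environment

According to the documentation, Rockchip provides two ways to set up the environment: install with pip, the Python package manager, or run the Docker image that ships with the complete RKNN-Toolkit2.

The Docker image is recommended, because it avoids later problems caused by the environment setup. The package that contains the Docker image is available through the Baidu enterprise netdisk link on Rockchip's GitHub project page. The only difference between the rknn-toolkit2 downloaded from the netdisk and the one pulled from GitHub is whether the Docker image is included. The rknn-npu2 project can be pulled directly from github/rockchip-linux/rknpu2 to get the latest code.

Docker commands commonly used in this project:

docker images // list the images in Docker

<ctrl + D> // exit the container

docker ps // list the running containers

docker ps -a // list all containers

docker start -i <container id> // start a container

After installing Docker, run the following commands:

# Load the image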

rockchip@rockchip-virtual-machine:~/NPU/rknn-toolkit2-1.4.0/docker$ docker load --input rknn-toolkit2-1.4.0-cp38-docker.tar.gz

feef05f055c9: Loading layer [==================================================>] 75.13MB/75.13MB

27a0fcbed699: Loading layer [==================================================>] 3.584kB/3.584kB

f62852363a2c: Loading layer [==================================================>] 424MB/424MB

d3193fc26692: Loading layer [==================================================>] 4.608kB/4.608kB

85943b0adcca: Loading layer [==================================================>] 9.397MB/9.397MB

0bec62724c1a: Loading layer [==================================================>] 9.303MB/9.303MB

e71db98f482d: Loading layer [==================================================>] 262.1MB/262.1MB

bde01abfb33a: Loading layer [==================================================>] 4.498MB/4.498MB

da9eed9f1e11: Loading layer [==================================================>] 5.228GB/5.228GB

85893de9b3b8: Loading layer [==================================================>] 106.7MB/106.7MB

0c9ec6e0b723: Loading layer [==================================================>] 106.7MB/106.7MB

d16b85c303bc: Loading layer [==================================================>] 106.7MB/106.7MB

Loaded image: rknn-toolkit2:1.4.0-cp38

# Check the image

rockchip@rockchip-virtual-machine:~/NPU/rknn-toolkit2-1.4.0/docker$ docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

rknn-toolkit2 1.4.0-cp38 afab63ce3679 7 months ago 6.29GB

hello-world latest feb5d9fea6a5 19 months ago 13.3kB

# Run the image and map examples into the container. Adjust the paths in the command to match your own.

rockchip@rockchip-virtual-machine:~/NPU/rknn-toolkit2-1.4.0/docker$ docker run -t -i --privileged -v /dev/bus/usb:/dev/bus/usb -v /home/rockchip/NPU/rknn-toolkit2-1.4.0/examples/:/examples rknn-toolkit2:1.4.0-cp38 /bin/bash

root@129da9263f1e:/#

root@129da9263f1e:/#

root@129da9263f1e:/#

root@129da9263f1e:/# ls

bin boot dev etc examples home lib lib32 lib64 libx32 media mnt opt packages proc root run sbin srv sys tmp usr var

# Run the demo

root@129da9263f1e:/examples/tflite/mobilenet_v1# python3 test.py

W __init__: rknn-toolkit2 version: 1.4.0-22dcfef4

--> Config model

W config: 'target_platform' is None, use rk3566 as default, Please set according to the actual platform!

done

--> Loading model

2023-04-20 15:21:12.334160: W tensorflow/stream_executor/platform/default/dso_loader.cc:64] Could not load dynamic library 'libcudart.so.11.0'; dlerror: libcudart.so.11.0: cannot open shared object file: No such file or directory; LD_LIBRARY_PATH: /usr/local/lib/python3.8/dist-packages/cv2/../../lib64:

2023-04-20 15:21:12.334291: I tensorflow/stream_executor/cuda/cudart_stub.cc:29] Ignore above cudart dlerror if you do not have a GPU set up on your machine.

done

--> Building model

I base_optimize ...

I base_optimize done.

I …………………………………………

D RKNN: [15:21:23.735] ----------------------------------------

D RKNN: [15:21:23.735] Total Weight Memory Size: 4365632

D RKNN: [15:21:23.735] Total Internal Memory Size: 1756160

D RKNN: [15:21:23.735] Predict Internal Memory RW Amount: 10331296

D RKNN: [15:21:23.735] Predict Weight Memory RW Amount: 4365552

D RKNN: [15:21:23.735] ----------------------------------------

D RKNN: [15:21:23.735] <<<<<<<< end: N4rknn21RKNNMemStatisticsPassE

I rknn buiding done

done

--> Export rknn model

done

--> Init runtime environment

W init_runtime: Target is None, use simulator!

done

--> Running model

Analysing : 100%|█████████████████████████████████████████████████| 60/60 [00:00<00:00, 1236.81it/s]

Preparing : 100%|██████████████████████████████████████████████████| 60/60 [00:00<00:00, 448.14it/s]

mobilenet_v1

-----TOP 5-----

[156]: 0.9345703125

[155]: 0.0570068359375

[205]: 0.00429534912109375

[284]: 0.003116607666015625

[285]: 0.00017178058624267578

done

The environment is now fully configured.

4. Testing the yolov5s.onnx provided by Rockchip

Go to /NPU/rknn-toolkit2-1.4.0/examples/onnx/yolov5 and run:

>>> python3 test.py

class: person, score: 0.8223356008529663

box coordinate left,top,right,down: [473.26745200157166, 231.93780636787415, 562.1268351078033, 519.7597033977509]

class: person, score: 0.817978024482727

box coordinate left,top,right,down: [211.9896697998047, 245.0290389060974, 283.70787048339844, 513.9374527931213]

class: person, score: 0.7971192598342896

box coordinate left,top,right,down: [115.24964022636414, 232.44154334068298, 207.7837154865265, 546.1097872257233]

class: person, score: 0.4627230763435364

box coordinate left,top,right,down: [79.09242534637451, 339.18042743206024, 121.60038471221924, 514.234916806221]

class: bus , score: 0.7545359134674072

box coordinate left,top,right,down: [86.41703361272812, 134.41848754882812, 558.1083570122719, 460.4184875488281]

When it finishes, a result.jpg is generated in this directory; open it to see the predictions drawn on the image.
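If you want to compare the simulator output with later on-board results, it can help to turn the printed lines into structured data. The sketch below parses the "class: ..., score: ..." and "box coordinate ..." line pairs shown above; the Detection type and parse_detections function are hypothetical helpers, not part of the Rockchip example.

```python
import ast
import re
from typing import List, NamedTuple


class Detection(NamedTuple):
    label: str
    score: float
    box: List[float]  # left, top, right, bottom in pixels


_CLASS_RE = re.compile(r"class:\s*(?P<label>.+?)\s*,\s*score:\s*(?P<score>[0-9.eE+-]+)")
_BOX_RE = re.compile(r"box coordinate left,top,right,down:\s*(?P<box>\[.*\])")


def parse_detections(text: str) -> List[Detection]:
    """Pair each 'class/score' line with the 'box coordinate' line that follows it."""
    detections = []
    pending = None
    for line in text.splitlines():
        match = _CLASS_RE.search(line)
        if match:
            pending = (match.group("label"), float(match.group("score")))
            continue
        match = _BOX_RE.search(line)
        if match and pending is not None:
            box = [float(v) for v in ast.literal_eval(match.group("box"))]
            detections.append(Detection(pending[0], pending[1], box))
            pending = None
    return detections


if __name__ == "__main__":
    sample = (
        "class: bus , score: 0.7545359134674072\n"
        "box coordinate left,top,right,down: "
        "[86.41703361272812, 134.41848754882812, 558.1083570122719, 460.4184875488281]\n"
    )
    print(parse_detections(sample))
```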

5. Converting the yolov5s PyTorch model and testing inference

5.1 Converting pt to ONNX

Watch the version of every package! The versions matter!

pytorch==1.7.1 torchvision==0.8.2 torchaudio==0.7.2 cudatoolkit=11.0

pillow==8.4.0

protobuf==3.20

onnx==1.9.0

opset version: 12
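Because version mismatches are the most common cause of export failures here, a quick check before exporting can save time. The following is a minimal sketch using only the standard library; the PINNED table copies the versions listed above, and the pip distribution names (for example torch for PyTorch) are my assumption about how the packages were installed.

```python
from importlib import metadata  # part of the standard library since Python 3.8

# Versions copied from the list above; the distribution names are assumptions.
PINNED = {
    "torch": "1.7.1",
    "torchvision": "0.8.2",
    "torchaudio": "0.7.2",
    "pillow": "8.4.0",
    "protobuf": "3.20",
    "onnx": "1.9.0",
}


def installed_versions(names):
    """Return {name: version or None} for each distribution name."""
    found = {}
    for name in names:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            found[name] = None
    return found


def mismatches(pinned, found):
    """Return {name: (wanted, installed)} for packages that do not match."""
    bad = {}
    for name, want in pinned.items():
        have = found.get(name)
        # Drop a local build tag such as "+cu110" before comparing.
        base = have.split("+")[0] if have else None
        # Prefix match so that "3.20" also accepts "3.20.0", "3.20.3", ...
        if base is None or not (base == want or base.startswith(want + ".")):
            bad[name] = (want, have)
    return bad


if __name__ == "__main__":
    for name, (want, have) in mismatches(PINNED, installed_versions(PINNED)).items():
        print(f"{name}: want {want}, found {have}")
```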

Conversion steps:

- Modify models/yolo.py: change the forward function of class Detect(nn.Module). Note: make this change only for the conversion, and restore the original before training again!

    # def forward(self, x):
    #     z = []  # inference output
    #     for i in range(self.nl):
    #         x[i] = self.m[i](x[i])  # conv
    #         bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,20,20,85)
    #         x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()
    #
    #         if not self.training:  # inference
    #             if self.grid[i].shape[2:4] != x[i].shape[2:4] or self.onnx_dynamic:
    #                 self.grid[i] = self._make_grid(nx, ny).to(x[i].device)
    #
    #             y = x[i].sigmoid()
    #             if self.inplace:
    #                 y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
    #                 y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
    #             else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
    #                 xy = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
    #                 wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i].view(1, self.na, 1, 1, 2)  # wh
    #                 y = torch.cat((xy, wh, y[..., 4:]), -1)
    #             z.append(y.view(bs, -1, self.no))
    #
    #     return x if self.training else (torch.cat(z, 1), x)

    def forward(self, x):
        z = []  # inference output
        for i in range(self.nl):
            x[i] = self.m[i](x[i])  # conv
        return x

- In export.py, set --opset to 12

- Run export.py

- Simplify the model

python -m onnxsim weights/yolov5s.onnx weights/yolov5s-sim.onnx
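With the simplified Detect.forward above, the exported model returns the three raw convolution outputs instead of decoded boxes. A small sketch of the shapes to expect follows, assuming the usual yolov5s layout of 3 anchors per scale and strides 8, 16 and 32 (the function name is hypothetical). The results agree with the output dims printed in the on-board logs later in this article.

```python
def head_output_shapes(num_classes, input_size=640, strides=(8, 16, 32),
                       anchors_per_scale=3, batch=1):
    """Shapes (N, C, H, W) of the raw detection-head outputs.

    Each anchor predicts 4 box values + 1 objectness score + num_classes scores.
    """
    channels = anchors_per_scale * (num_classes + 5)
    return [(batch, channels, input_size // s, input_size // s) for s in strides]


if __name__ == "__main__":
    print(head_output_shapes(80))  # COCO model with 80 classes
    print(head_output_shapes(4))   # custom model with 4 classes
```

Checking these shapes in a model viewer after export is a quick way to confirm that the forward function was actually patched before running export.py.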

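5.2 Converting ONNX to RKNN and simulating on the PC

The steps are the same as in section 4. Make a copy of the yolov5 folder named myolov5, put the yolov5s-sim model from section 5.1 into it, rename it to yolov5s.onnx, then run test.py. The inference result is as follows:

6. Converting a self-trained model and testing inference

The author trained a simple model on a custom dataset for testing; the model has 4 classes.

6.1 Converting pt to ONNX

The steps are the same as in section 5.1.

6.2 Converting ONNX to RKNN and simulating on the PC

Modify examples/onnx/myolov5/test.py:

- Change the ONNX path

- Change the RKNN save path

- Change the test image path

- Change the number of classes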
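The four items above correspond roughly to the conversion flow sketched below, written against the RKNN-Toolkit2 Python API (config, load_onnx, build, export_rknn). It is a simplified outline, not a copy of the official test.py: the SETTINGS values, the file names and the last two class names are placeholders, and the mean/std values assume the 0 to 255 input normalization used for yolov5.

```python
# Hypothetical settings for a 4-class model; adjust every value to your project.
SETTINGS = {
    "onnx_model": "best-sim.onnx",
    "rknn_model": "best-sim.rknn",
    "img_path": "./per_car.jpg",
    "dataset": "./dataset.txt",
    "classes": ("person", "car", "class_2", "class_3"),  # last two are placeholders
}


def convert_onnx_to_rknn(onnx_path, rknn_path, dataset_txt, target_platform="rk3568"):
    """Quantize an ONNX model and export it as an RKNN file."""
    # Imported lazily so this file can be loaded on machines without the toolkit.
    from rknn.api import RKNN

    rknn = RKNN(verbose=True)
    try:
        # Assumption: inputs are scaled from 0..255 to 0..1, as for yolov5.
        rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]],
                    target_platform=target_platform)
        if rknn.load_onnx(model=onnx_path) != 0:
            raise RuntimeError("load_onnx failed")
        if rknn.build(do_quantization=True, dataset=dataset_txt) != 0:
            raise RuntimeError("build failed")
        if rknn.export_rknn(rknn_path) != 0:
            raise RuntimeError("export_rknn failed")
    finally:
        rknn.release()


if __name__ == "__main__":
    convert_onnx_to_rknn(SETTINGS["onnx_model"], SETTINGS["rknn_model"],
                         SETTINGS["dataset"])
```

Setting target_platform explicitly also avoids the "use rk3566 as default" warning that appears in the demo log earlier.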

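After these changes, run the script to get the predicted box coordinates and the RKNN model file. The inference result is as follows:

7. On-board inference with the model provided by Rockchip

Before running:

- Make sure the cross-compilation toolchain is configured on the PC

- Flash a Linux system onto the board; Buildroot was used for this test

Go to /NPU/rknpu2/examples/rknn_yolov5_demo_v5/convert_rknn_demo, make a copy named yolov5_3568, enter that directory, edit onnx2rknn.py and run the script to convert the ONNX model to RKNN.

Go to rockchip/NPU/rknpu2/examples/rknn_yolov5_demo and run the build script:

bash ./build-linux_RK356X.sh

A successful build produces an install/ folder and a build/ folder.

Copy everything under the install folder to the board, then run the program on the board to test inference: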

[root@RK356X:/mnt/rknn_yolov5_demo_Linux]# ./rknn_yolov5_demo ./model/RK356X/yolov5s-640-640.rknn ./model/bus.jpg

post process config: box_conf_threshold = 0.25, nms_threshold = 0.45

Read ./model/bus.jpg ...

img width = 640, img height = 640

Loading mode...

sdk version: 1.4.0 (a10f100eb@2022-09-09T09:07:14) driver version: 0.4.2

model input num: 1, output num: 3

index=0, name=images, n_dims=4, dims=[1, 640, 640, 3], n_elems=1228800, size=1228800, fmt=NHWC, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

index=0, name=334, n_dims=4, dims=[1, 255, 80, 80], n_elems=1632000, size=1632000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=77, scale=0.080445

index=1, name=353, n_dims=4, dims=[1, 255, 40, 40], n_elems=408000, size=408000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=56, scale=0.080794

index=2, name=372, n_dims=4, dims=[1, 255, 20, 20], n_elems=102000, size=102000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=69, scale=0.081305

model is NHWC input fmt

model input height=640, width=640, channel=3

once run use 61.952000 ms

loadLabelName ./model/coco_80_labels_list.txt

person @ (114 235 212 527) 0.819099

person @ (210 242 284 509) 0.814970

person @ (479 235 561 520) 0.790311

bus @ (99 141 557 445) 0.693320

person @ (78 338 122 520) 0.404960

loop count = 10 , average run 60.485800 ms
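The log above reports type=INT8, qnt_type=AFFINE together with a zero point (zp) and a scale for every tensor. Affine quantization maps between stored integers and real values with real = (q - zp) * scale. The sketch below illustrates that relation in Python; the function names are hypothetical, and this is not the demo's C post-processing code.

```python
def dequantize_affine(q: int, zero_point: int, scale: float) -> float:
    """Convert a stored INT8 value back to a real number."""
    return (q - zero_point) * scale


def quantize_affine(x: float, zero_point: int, scale: float) -> int:
    """Convert a real number to INT8, clamping to the representable range."""
    q = round(x / scale) + zero_point
    return max(-128, min(127, q))


if __name__ == "__main__":
    # zp and scale taken from the first output tensor in the log above.
    zp, scale = 77, 0.080445
    for q in (-128, 77, 127):
        print(q, dequantize_affine(q, zp, scale))
```

For the input tensor (zp=-128, scale=0.003922, roughly 1/255) this maps the stored range -128..127 onto approximately 0..1, which fits a pixel value divided by 255.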

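The inference result is as follows:

8. On-board inference with the yolov5s PyTorch model

Go to rockchip/NPU/rknpu2/examples/rknn_yolov5_demo_v5/convert_rknn_demo/yolov5 and edit onnx2rknn.py:

Run onnx2rknn.py:

Copy the model to rockchip/NPU/rknpu2/examples/rknn_yolov5_demo_v5/model/RK356X and run build-linux_RK356X.sh. A successful build produces an install/ folder and a build/ folder.

Copy the whole install folder to /mnt on the board (for example via an SD card), make rknn_yolov5_demo executable, and run the program to get the inference result: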

[root@RK356X:/mnt/rknn_yolov5_demo_Linux]# ./rknn_yolov5_demo ./model/RK356X/yolov5s-640-640.rknn ./model/bus.jpg

post process config: box_conf_threshold = 0.25, nms_threshold = 0.45

Read ./model/bus.jpg ...

img width = 640, img height = 640

Loading mode...

sdk version: 1.4.0 (a10f100eb@2022-09-09T09:07:14) driver version: 0.4.2

model input num: 1, output num: 3

index=0, name=images, n_dims=4, dims=[1, 640, 640, 3], n_elems=1228800, size=1228800, fmt=NHWC, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

index=0, name=334, n_dims=4, dims=[1, 255, 80, 80], n_elems=1632000, size=1632000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=77, scale=0.080445

index=1, name=353, n_dims=4, dims=[1, 255, 40, 40], n_elems=408000, size=408000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=56, scale=0.080794

index=2, name=372, n_dims=4, dims=[1, 255, 20, 20], n_elems=102000, size=102000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=69, scale=0.081305

model is NHWC input fmt

model input height=640, width=640, channel=3

once run use 62.407000 ms

loadLabelName ./model/coco_80_labels_list.txt

person @ (114 235 212 527) 0.819099

person @ (210 242 284 509) 0.814970

person @ (479 235 561 520) 0.790311

bus @ (99 141 557 445) 0.693320

person @ (78 338 122 520) 0.404960

loop count = 10 , average run 77.491700 ms
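The first line of the log prints box_conf_threshold and nms_threshold. As a rough picture of what these two values control, here is a minimal per-class non-maximum suppression sketch in Python. It is an illustration with hypothetical function names, not the demo's C implementation.

```python
def iou(a, b):
    """Intersection over union of two (left, top, right, bottom) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def filter_and_nms(dets, conf_threshold=0.25, nms_threshold=0.45):
    """dets is a list of (label, score, box); returns kept items, best score first."""
    # Step 1: drop everything below the confidence threshold.
    candidates = sorted((d for d in dets if d[1] >= conf_threshold),
                        key=lambda d: d[1], reverse=True)
    kept = []
    for det in candidates:
        # Step 2: keep a box unless it overlaps a better box of the same class.
        if all(det[0] != k[0] or iou(det[2], k[2]) <= nms_threshold for k in kept):
            kept.append(det)
    return kept


if __name__ == "__main__":
    boxes = [
        ("person", 0.90, (0, 0, 10, 10)),
        ("person", 0.80, (1, 1, 11, 11)),   # overlaps the first box heavily
        ("bus", 0.70, (0, 0, 10, 10)),      # same place, different class
        ("person", 0.10, (50, 50, 60, 60)),  # below the confidence threshold
    ]
    for det in filter_and_nms(boxes):
        print(det)
```

Lowering nms_threshold suppresses more overlapping boxes, while raising conf_threshold removes weak detections such as the 0.40 person in the log.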

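Image inference result:

9. On-board inference with a model trained on a custom dataset

1. Go to rockchip/NPU/rknpu2/examples/rknn_yolov5_demo_v5/convert_rknn_demo/yolov5 and edit onnx2rknn.py.

2. Edit dataset.txt.

3. Run onnx2rknn.py.

4. Copy the RKNN model to rockchip/NPU/rknpu2/examples/rknn_yolov5_demo_c4/model/RK356X.

5. Go to rockchip/NPU/rknpu2/examples/rknn_yolov5_demo_c4/model and edit coco_80_labels_list.txt.

6. Edit rockchip/NPU/rknpu2/examples/rknn_yolov5_demo_c4/include/postprocess.h to change the number of classes and the confidence threshold.

7. Run build-linux_RK356X.sh. A successful build produces an install/ folder and a build/ folder.

8. Copy the whole install folder to /mnt on the board (for example via an SD card), make rknn_yolov5_demo executable, and run the program to get the inference result: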

[root@RK356X:/mnt/rknn_yolov5_demo_Linux_c4]# chmod a+x rknn_yolov5_demo

[root@RK356X:/mnt/rknn_yolov5_demo_Linux_c4]# ./rknn_yolov5_demo ./model/RK356X/best-sim.rknn ./model/per_car.jpg

post process config: box_conf_threshold = 0.25, nms_threshold = 0.25

Read ./model/per_car.jpg ...

img width = 640, img height = 640

Loading mode...

sdk version: 1.4.0 (a10f100eb@2022-09-09T09:07:14) driver version: 0.4.2

model input num: 1, output num: 3

index=0, name=images, n_dims=4, dims=[1, 640, 640, 3], n_elems=1228800, size=1228800, fmt=NHWC, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

index=0, name=output, n_dims=4, dims=[1, 27, 80, 80], n_elems=172800, size=172800, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=64, scale=0.122568

index=1, name=320, n_dims=4, dims=[1, 27, 40, 40], n_elems=43200, size=43200, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=38, scale=0.110903

index=2, name=321, n_dims=4, dims=[1, 27, 20, 20], n_elems=10800, size=10800, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=36, scale=0.099831

model is NHWC input fmt

model input height=640, width=640, channel=3

once run use 86.341000 ms

loadLabelName ./model/coco_80_labels_list.txt

person @ (61 190 284 520) 0.907933

car @ (381 370 604 446) 0.897453

car @ (343 366 412 394) 0.873785

car @ (404 367 429 388) 0.628984

car @ (425 361 494 388) 0.365345

loop count = 10 , average run 96.655700 ms
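One of the steps in this section edits dataset.txt, the list of calibration images used for quantization, with one image path per line. If you have a folder of representative images from your own dataset, a short script can generate that file. The sketch below is a hypothetical helper, and the folder name in the usage line is a placeholder.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def write_dataset_txt(image_dir, dataset_txt, limit=None):
    """Write one image path per line and return the number of images listed."""
    images = sorted(
        p for p in Path(image_dir).iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    if limit is not None:
        images = images[:limit]
    Path(dataset_txt).write_text("".join(f"{p}\n" for p in images), encoding="utf-8")
    return len(images)


if __name__ == "__main__":
    # "calib_images" is a placeholder folder name.
    count = write_dataset_txt("calib_images", "dataset.txt", limit=50)
    print(f"listed {count} calibration images")
```

Calibration images that resemble the deployment scenes are generally a better choice than arbitrary pictures, since the quantization ranges are derived from them.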

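The inference result looks like this:

10. Real-time on-board video inference with a model trained on a custom dataset

10.1 Debugging video input and output

The original plan was to use the rockit framework for video capture through to output, with Qt fetching the images. Its API is easy to pick up for anyone with HiSilicon platform experience. During debugging, however, rockit worked from capture to output on the 3588 platform but always got stuck at opening the camera on the 3568. If you are using RK3588/RK3588S, rockit can handle the raw video capture. The author ended up using OpenCV to grab camera frames and send them to the display.

10.2 Testing image capture in the Qt framework

Capturing images within Qt depends on OpenCV; blog posts online describe how to cross-compile OpenCV.

A QImage is converted to a Mat via OpenCV, the Mat is fed to RKNN for inference and box drawing, and the Mat is then converted back to a QImage and on to a QPixmap for display.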
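One detail in this pipeline is that the model sees a 640x640 image while the camera frame usually has a different size, so the detected boxes have to be mapped back before drawing. The sketch below shows that mapping in Python, assuming the frame is resized by plain stretching rather than letterboxing; that assumption and the function name are mine, so check how your own resize step works.

```python
def map_box_to_frame(box, frame_w, frame_h, model_w=640, model_h=640):
    """Map a (left, top, right, bottom) box from model space to frame pixels.

    Assumes the frame was stretched to model_w x model_h (no letterbox padding).
    """
    scale_w = model_w / frame_w
    scale_h = model_h / frame_h

    def clamp(value, upper):
        # Round to the nearest pixel and keep the result inside the frame.
        return max(0, min(upper, int(round(value))))

    left, top, right, bottom = box
    return (
        clamp(left / scale_w, frame_w - 1),
        clamp(top / scale_h, frame_h - 1),
        clamp(right / scale_w, frame_w - 1),
        clamp(bottom / scale_h, frame_h - 1),
    )


if __name__ == "__main__":
    # A box in 640x640 model space mapped onto a 1280x720 camera frame.
    print(map_box_to_frame((320, 320, 480, 480), 1280, 720))
```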

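The Qt capture and display program was integrated with the official yolo detection program; after debugging, it performs real-time yolov5 detection. The complete project code has been open-sourced on GitHub.

The result looks like this:

PS: Fixing frequencies and setting performance mode for the CPU, GPU, NPU and DDR on RK platforms

To get the most out of the chip, the CPU, NPU and other hardware can be tuned as follows.

List the frequencies the NPU can be set to: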

[root@RK356X:/]# cat /sys/class/devfreq/fde40000.npu/available_frequencies

200000000 297000000 400000000 600000000 700000000 800000000 900000000

Check the current NPU frequency:

[root@RK356X:/]# cat /sys/class/devfreq/fde40000.npu/cur_freq

600000000

Set the NPU frequency:

[root@RK356X:/]# echo 900000000 > /sys/class/devfreq/fde40000.npu/userspace/set_freq

[root@RK356X:/]# cat /sys/class/devfreq/fde40000.npu/cur_freq

900000000

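Running RKNN inference again, the time per image drops by 10 ms. To reach maximum performance, set the CPU, DDR and GPU frequencies to their maximum as well. The fastest time measured on the rk3568 was 70 ms.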
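The same steps can be scripted. The sketch below reads the available NPU frequencies and writes the highest one, using the sysfs path from the commands above. It also writes userspace to the governor file first, on the assumption that set_freq is only honored under the userspace governor on your kernel; the function names are hypothetical, and the script needs root on the board.

```python
from pathlib import Path

# Path taken from the shell commands above; it may differ on other boards.
NPU_DEVFREQ = Path("/sys/class/devfreq/fde40000.npu")


def available_frequencies(devfreq_dir=NPU_DEVFREQ):
    """Return the supported frequencies in Hz, lowest first."""
    text = (Path(devfreq_dir) / "available_frequencies").read_text()
    return sorted(int(token) for token in text.split())


def current_frequency(devfreq_dir=NPU_DEVFREQ):
    """Return the current frequency in Hz."""
    return int((Path(devfreq_dir) / "cur_freq").read_text().strip())


def set_max_frequency(devfreq_dir=NPU_DEVFREQ):
    """Pin the device to its highest available frequency and return it."""
    devfreq_dir = Path(devfreq_dir)
    top = available_frequencies(devfreq_dir)[-1]
    # Assumption: the userspace governor must be active for set_freq to apply.
    (devfreq_dir / "governor").write_text("userspace")
    (devfreq_dir / "userspace" / "set_freq").write_text(str(top))
    return top


if __name__ == "__main__":
    print("before:", current_frequency())
    print("requested:", set_max_frequency())
    print("after:", current_frequency())
```

Passing a different directory to these functions lets the same code handle other devfreq devices, such as the GPU or DDR nodes, once you have looked up their paths on your board.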

Another way to speed things up is to change SiLU to ReLU; according to tests reported online, a single image can then reach 40 ms. Give it a try if you are interested.

Reference: https://www.yii666.com/blog/354522.html
