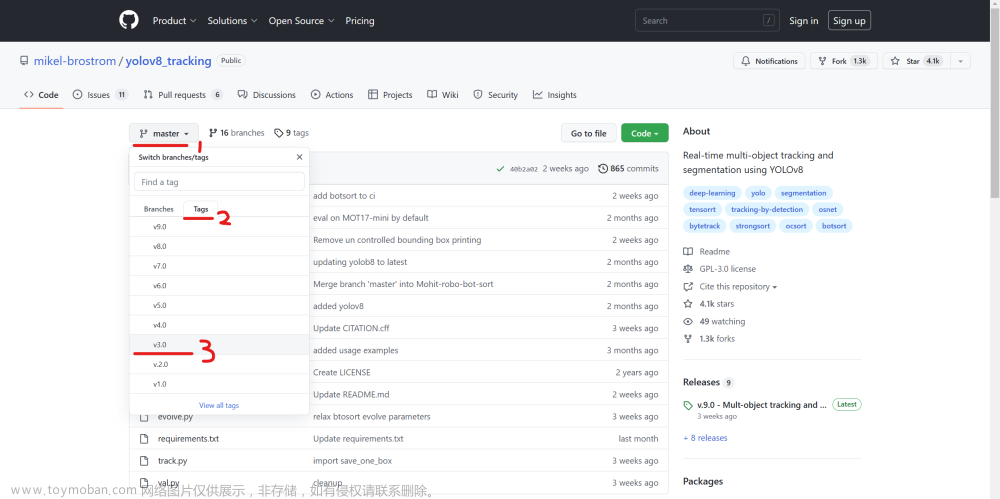

Download the source code

https://github.com/shaoshengsong/DeepSORT

Install onnxruntime

For the installation procedure, see the referenced blog post.

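Install Eigen3

Eigen3 is a powerful and popular choice among linear algebra libraries. It is a C++ template library that provides vectors, matrices, matrix operations, linear system solvers, and more. Its main features are:

High performance: Eigen3 uses optimized algorithms and makes efficient use of memory. It relies on expression templates to generate efficient code at compile time, which avoids some runtime overhead.

Easy to use: Eigen3 offers an intuitive API with extensive operator overloading, so linear algebra code stays short and readable.

Broad functionality: Eigen3 supports basic vector and matrix operations, solving linear systems, computing eigenvalues and eigenvectors, matrix decompositions (such as LU and QR), and generalized eigenvalue problems. It also supports sparse matrices and dynamically sized matrices.

Cross-platform: Eigen3 works across operating systems and compilers. It uses standard C++ and does not depend on any particular hardware or operating system.

Open source and free: Eigen3 is released under the MPL 2.0 license and can be used and modified free of charge. Its source code is hosted on GitLab, which makes it easy to customize and extend.

Eigen3 is widely used in scientific computing, machine learning, computer graphics, and other fields, in both academic research and production applications.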

sudo apt-get install libeigen3-dev

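Build OpenCV

Build steps: download and extract the OpenCV 4 source package, download the contrib extension package, and enable the dnn module.

Reading video files requires FFmpeg. Install the following packages: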

sudo apt-get install build-essential

sudo apt-get install cmake git libgtk2.0-dev pkg-config libavcodec-dev libavformat-dev libswscale-dev

sudo apt-get install python-dev python-numpy libtbb2 libtbb-dev libjpeg-dev libpng-dev libtiff-dev libjasper-dev libdc1394-22-dev

If libjasper-dev fails to install:

apt-get install software-properties-common

add-apt-repository "deb http://security.ubuntu.com/ubuntu xenial-security main"

apt update

apt-get install libjasper-dev

sudo apt-get install ffmpeg

Get the FFmpeg include directories:

pkg-config --cflags libavformat

Build:

cmake -DCMAKE_BUILD_TYPE=Release -DOPENCV_EXTRA_MODULES_PATH=/home/opencv_contrib-4.7.0/opencv_contrib-4.7.0/modules -DWITH_OPENCL=OFF -DBUILD_DOCS=OFF -DBUILD_EXAMPLES=OFF -DBUILD_WITH_DEBUG_INFO=OFF -DBUILD_TESTS=OFF -DWITH_1394=OFF -DWITH_CUDA=OFF -DWITH_CUBLAS=OFF -DWITH_CUFFT=OFF -DWITH_OPENCLAMDBLAS=OFF -DWITH_OPENCLAMDFFT=OFF -DINSTALL_C_EXAMPLES=OFF -DINSTALL_PYTHON_EXAMPLES=OFF -DINSTALL_TO_MANGLED_PATHS=OFF -DBUILD_ANDROID_EXAMPLES=OFF -DBUILD_opencv_python=OFF -DBUILD_opencv_python_bindings_generator=OFF -DBUILD_opencv_apps=OFF -DBUILD_opencv_calib3d=OFF -DBUILD_opencv_features2d=OFF -DBUILD_opencv_flann=OFF -DBUILD_opencv_java_bindings_generator=OFF -DBUILD_opencv_js=OFF -DBUILD_opencv_ml=OFF -DBUILD_opencv_objdetect=OFF -DBUILD_opencv_photo=OFF -DBUILD_opencv_python3=OFF -DBUILD_opencv_python_tests=OFF -DBUILD_opencv_shape=OFF -DBUILD_opencv_stitching=OFF -DBUILD_opencv_superres=OFF -DBUILD_opencv_ts=OFF -DBUILD_opencv_videostab=OFF -DBUILD_opencv_world=ON -DBUILD_opencv_dnn=ON -D WITH_FFMPEG=ON -D WITH_TIFF=OFF -D BUILD_TIFF=OFF -DWITH_FFMPEG=ON -DFFMPEG_LIBRARIES=/usr/local/lib/ -D FFMPEG_INCLUDE_DIRS=/usr/local/include/ ..

make install

Modify the corresponding DeepSORT cpp file:

#include <chrono>

#include <fstream>

#include <iostream>

#include <memory>

#include <sstream>

#include <string>

#include <vector>

#include <opencv2/imgproc.hpp>

#include <opencv2/opencv.hpp>

#include <opencv2/dnn.hpp>

#include "YOLOv5Detector.h"

#include "FeatureTensor.h"

#include "BYTETracker.h" //bytetrack

#include "tracker.h"//deepsort

//Deep SORT parameter

const int nn_budget=100;

const float max_cosine_distance=0.2;

void get_detections(DETECTBOX box,float confidence,DETECTIONS& d)

{

DETECTION_ROW tmpRow;

tmpRow.tlwh = box;//DETECTBOX(x, y, w, h);

tmpRow.confidence = confidence;

d.push_back(tmpRow);

}

void test_deepsort(cv::Mat& frame, std::vector<detect_result>& results,tracker& mytracker)

{

std::vector<detect_result> objects;

DETECTIONS detections;

for (detect_result dr : results)

{

//cv::putText(frame, classes[dr.classId], cv::Point(dr.box.tl().x+10, dr.box.tl().y - 10), cv::FONT_HERSHEY_SIMPLEX, .8, cv::Scalar(0, 255, 0));

if(dr.classId == 0) //person

{

objects.push_back(dr);

cv::rectangle(frame, dr.box, cv::Scalar(255, 0, 0), 2);

get_detections(DETECTBOX(dr.box.x, dr.box.y,dr.box.width, dr.box.height),dr.confidence, detections);

}

}

std::cout<<"begin track"<<std::endl;

if(FeatureTensor::getInstance()->getRectsFeature(frame, detections))

{

std::cout << "get feature succeed!"<<std::endl;

mytracker.predict();

mytracker.update(detections);

std::vector<RESULT_DATA> result;

for(Track& track : mytracker.tracks) {

if(!track.is_confirmed() || track.time_since_update > 1) continue;

result.push_back(std::make_pair(track.track_id, track.to_tlwh()));

}

for(unsigned int k = 0; k < detections.size(); k++)

{

DETECTBOX tmpbox = detections[k].tlwh;

cv::Rect rect(tmpbox(0), tmpbox(1), tmpbox(2), tmpbox(3));

cv::rectangle(frame, rect, cv::Scalar(0,0,255), 4);

// cv::Scalar stores channels in B-G-R order; CV_RGB takes them in R-G-B order

}

// Draw each confirmed track and its ID once per frame

for(unsigned int k = 0; k < result.size(); k++)

{

DETECTBOX tmp = result[k].second;

cv::Rect rect = cv::Rect(tmp(0), tmp(1), tmp(2), tmp(3));

cv::rectangle(frame, rect, cv::Scalar(255, 255, 0), 2);

std::string label = cv::format("%d", result[k].first);

cv::putText(frame, label, cv::Point(rect.x, rect.y), cv::FONT_HERSHEY_SIMPLEX, 0.8, cv::Scalar(255, 255, 0), 2);

}

}

std::cout<<"end track"<<std::endl;

}
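The `max_cosine_distance` value of 0.2 passed to the tracker limits how different two appearance feature vectors may be while still being treated as the same person. The sketch below only illustrates that idea with plain `std::vector<float>`; `cosine_distance` and `appearance_match` are hypothetical helper names, and the repository's own matching code may differ in detail.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper, not part of the DeepSORT repository:
// cosine distance = 1 - cos(angle between a and b).
float cosine_distance(const std::vector<float>& a, const std::vector<float>& b)
{
    assert(a.size() == b.size() && !a.empty());
    float dot = 0.f, norm_a = 0.f, norm_b = 0.f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        dot    += a[i] * b[i];
        norm_a += a[i] * a[i];
        norm_b += b[i] * b[i];
    }
    const float denom = std::sqrt(norm_a) * std::sqrt(norm_b);
    if (denom == 0.f) return 1.f;  // a zero vector has no direction; report distance 1 (assumption)
    return 1.f - dot / denom;
}

// Assumed gating rule: an appearance match is plausible only at or below the threshold.
bool appearance_match(const std::vector<float>& a, const std::vector<float>& b,
                      float max_cosine_distance = 0.2f)
{
    return cosine_distance(a, b) <= max_cosine_distance;
}
```

With this definition, identical directions give a distance of 0 and orthogonal vectors give 1, so a threshold of 0.2 accepts only fairly similar features.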

void test_bytetrack(cv::Mat& frame, std::vector<detect_result>& results,BYTETracker& tracker)

{

std::vector<detect_result> objects;

for (detect_result dr : results)

{

if(dr.classId == 0) //person

{

objects.push_back(dr);

}

}

std::vector<STrack> output_stracks = tracker.update(objects);

for (unsigned long i = 0; i < output_stracks.size(); i++)

{

std::vector<float> tlwh = output_stracks[i].tlwh;

bool vertical = tlwh[2] / tlwh[3] > 1.6;

if (tlwh[2] * tlwh[3] > 20 && !vertical)

{

cv::Scalar s = tracker.get_color(output_stracks[i].track_id);

cv::putText(frame, cv::format("%d", output_stracks[i].track_id), cv::Point(tlwh[0], tlwh[1] - 5),

0, 0.6, cv::Scalar(0, 0, 255), 2, cv::LINE_AA);

cv::rectangle(frame, cv::Rect(tlwh[0], tlwh[1], tlwh[2], tlwh[3]), s, 2);

}

}

}
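The drawing condition in `test_bytetrack` can be read as a small predicate: a box is drawn only if its area exceeds 20 and its width-to-height ratio is at most 1.6. Note that the flag named `vertical` in the listing is true for wide boxes, not tall ones. A minimal sketch of the same check, where `should_draw` and its parameter names are illustrative and not taken from the repository:

```cpp
#include <cassert>

// Hypothetical standalone version of the filter used in test_bytetrack.
// min_area and max_aspect mirror the literals 20 and 1.6 in the listing.
bool should_draw(float width, float height,
                 float min_area = 20.f, float max_aspect = 1.6f)
{
    assert(width >= 0.f && height >= 0.f);
    if (height <= 0.f) return false;  // avoid dividing by zero (added guard, an assumption)
    const bool too_wide = width / height > max_aspect;
    return width * height > min_area && !too_wide;
}
```

Pulling the check into a named function makes the two thresholds easier to tune for a given camera setup.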

int main(int argc, char *argv[])

{

//deepsort

tracker mytracker(max_cosine_distance, nn_budget);

//bytetrack

int fps=20;

BYTETracker bytetracker(fps, 30);

//-----------------------------------------------------------------------

// Load class names

std::vector<std::string> classes;

std::string file="./coco_80_labels_list.txt";

std::ifstream ifs(file);

if (!ifs.is_open())

CV_Error(cv::Error::StsError, "File " + file + " not found");

std::string line;

while (std::getline(ifs, line))

{

classes.push_back(line);

}

//-----------------------------------------------------------------------

std::cout<<"classes:"<<classes.size();

std::shared_ptr<YOLOv5Detector> detector(new YOLOv5Detector());

detector->init("/home/DeepSORT-master/DeepSORT-master/build/yolov5x.onnx");

std::cout<<"begin read video"<<std::endl;

const std::string source = "/home/DeepSORT-master/DeepSORT-master/build/test.mp4";

cv::VideoCapture capture(source);

if (!capture.isOpened()) {

printf("could not read this video file...\n");

return -1;

}

std::cout<<"end read video"<<std::endl;

std::vector<detect_result> results;

int num_frames = 0;

// The size given here must match the size of the frames written below (1920x1080 assumed)

cv::VideoWriter video("out.avi",cv::VideoWriter::fourcc('M','J','P','G'),10, cv::Size(1920,1080));

while (true)

{

cv::Mat frame;

if (!capture.read(frame)) // if not success, break loop

{

std::cout<<"\n Cannot read the video file. please check your video.\n";

break;

}

num_frames ++;

// Units: second (s) / millisecond (ms) / microsecond (us)

auto start = std::chrono::system_clock::now();

detector->detect(frame, results);

auto end = std::chrono::system_clock::now();

auto detect_time =std::chrono::duration_cast<std::chrono::milliseconds>(end - start).count();//ms

std::cout<<classes.size()<<":"<<results.size()<<":"<<num_frames<<std::endl;

//test_deepsort(frame, results,mytracker);

test_bytetrack(frame, results,bytetracker);

//cv::imshow("YOLOv5-6.x", frame);

video.write(frame);

if(cv::waitKey(30) == 27) // Wait for 'esc' key press to exit

{

break;

}

results.clear();

}

capture.release();

video.release();

cv::destroyAllWindows();

}
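The loop in `main` measures detection time with `std::chrono::system_clock`, which can jump if the system time is adjusted. For interval timing, `std::chrono::steady_clock` is generally the safer choice. One possible helper, assuming the hypothetical names `elapsed_ms` and `to_fps`, neither of which exists in the repository:

```cpp
#include <cassert>
#include <chrono>
#include <utility>

// Hypothetical helper: run `fn` once and return the elapsed time in
// milliseconds, measured with the monotonic std::chrono::steady_clock.
template <typename Fn>
long long elapsed_ms(Fn&& fn)
{
    const auto start = std::chrono::steady_clock::now();
    std::forward<Fn>(fn)();
    const auto end = std::chrono::steady_clock::now();
    return std::chrono::duration_cast<std::chrono::milliseconds>(end - start).count();
}

// Convert a per-frame time to frames per second; 0 ms has no finite rate,
// so 0.0 is returned in that case (a convention chosen for this sketch).
double to_fps(long long ms)
{
    assert(ms >= 0);
    return ms > 0 ? 1000.0 / static_cast<double>(ms) : 0.0;
}
```

In the loop this could be used as `auto detect_time = elapsed_ms([&] { detector->detect(frame, results); });`, followed by printing `to_fps(detect_time)`.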

Build and run:
