stop-all.sh

tar -zxvf apache-zookeeper-3.8.3-bin.tar.gz -C /opt/module/

ls /opt/module/

cd /opt/module/apache-zookeeper-3.8.3-bin/conf/

mv zoo_sample.cfg zoo.cfg

pwd

vi /opt/module/apache-zookeeper-3.8.3-bin/conf/zoo.cfg

dataDir=/opt/module/apache-zookeeper-3.8.3-bin/zkData

server.1=192.168.63.101:2888:3888

server.2=192.168.63.102:2888:3888

server.3=192.168.63.103:2888:3888

mkdir -p /opt/module/apache-zookeeper-3.8.3-bin/zkData/

vi /opt/module/apache-zookeeper-3.8.3-bin/zkData/myid
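The myid file holds a single number, and it has to match the N of that host's server.N line in zoo.cfg (1 on 192.168.63.101, 2 on 192.168.63.102, 3 on 192.168.63.103). Because xsync copies the same zkData directory to every node, the file needs to be corrected on each node after syncing. A minimal sketch of deriving the id from zoo.cfg follows; `zk_myid_for` is a hypothetical helper, not something shipped with ZooKeeper.

```shell
# Hypothetical helper (not part of ZooKeeper): print the N from the
# "server.N=HOST:2888:3888" line in zoo.cfg whose HOST equals the second argument.
# Usage: zk_myid_for ZOO_CFG HOST
zk_myid_for() {
  awk -v host="$2" '
    /^server\.[0-9]+=/ {
      split($0, kv, "=")         # kv[1] = "server.N", kv[2] = "HOST:2888:3888"
      split(kv[2], addr, ":")    # addr[1] = HOST
      if (addr[1] == host) {
        sub(/^server\./, "", kv[1])
        print kv[1]
      }
    }
  ' "$1"
}

# Example, to be run on 192.168.63.102 (paths as used above):
#   zk_myid_for /opt/module/apache-zookeeper-3.8.3-bin/conf/zoo.cfg 192.168.63.102 \
#     > /opt/module/apache-zookeeper-3.8.3-bin/zkData/myid
```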

/root/bin/xsync /opt/module/

/opt/module/apache-zookeeper-3.8.3-bin/bin/zkServer.sh start

/opt/module/apache-zookeeper-3.8.3-bin/bin/zkServer.sh status

/opt/module/apache-zookeeper-3.8.3-bin/bin/zkServer.sh restart

On hadoop102:

/opt/module/apache-zookeeper-3.8.3-bin/bin/zkServer.sh start

/opt/module/apache-zookeeper-3.8.3-bin/bin/zkServer.sh status
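A three-server ensemble only forms a quorum once at least two servers are running, which is likely why the status is checked again after the second node starts. Once the quorum exists, `zkServer.sh status` prints a `Mode:` line (leader or follower) for each server. Here is a small sketch for pulling out that line. `zk_mode` is a hypothetical helper, and the loop in the comment assumes passwordless SSH to hosts named hadoop101 to hadoop103.

```shell
# Hypothetical helper: read `zkServer.sh status` output on stdin and print only
# the role from its "Mode: ..." line (for example leader or follower).
zk_mode() {
  sed -n 's/^Mode:[[:space:]]*//p'
}

# Example (assumes passwordless SSH to each node):
#   for h in hadoop101 hadoop102 hadoop103; do
#     mode=$(ssh "$h" /opt/module/apache-zookeeper-3.8.3-bin/bin/zkServer.sh status 2>&1 | zk_mode)
#     echo "$h: ${mode:-not running}"
#   done
```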

Environment variables:

vi /etc/profile

#ZK_HOME

export ZK_HOME=/opt/module/apache-zookeeper-3.8.3-bin

export PATH=$PATH:$ZK_HOME/bin

/root/bin/xsync /etc/profile

source /etc/profile

Firewall:

systemctl stop firewalld && systemctl disable firewalld

setenforce 0

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

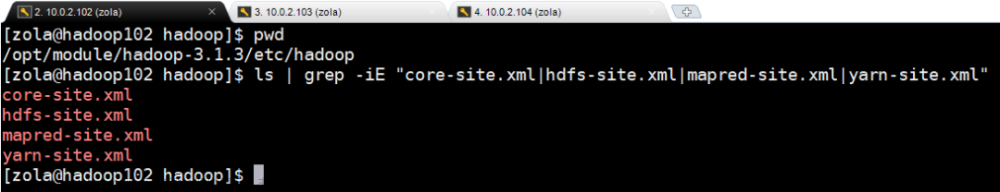

Configuration files:

cd /opt/module/hadoop-2.7.2/etc/hadoop/

vi core-site.xml

<configuration>

<!-- Address of the NameNode in HDFS (here the logical nameservice) -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<!-- Directory for files Hadoop generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-2.7.2/data/tmp</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>hadoop101:2181,hadoop102:2181,hadoop103:2181</value>

</property>

</configuration>

vi hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop103:50090</value>

</property>

<!-- Logical name of the fully distributed cluster (nameservice) -->

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<!-- NameNodes that belong to the cluster -->

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<!-- RPC address of nn1 -->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>hadoop101:9000</value>

</property>

<!-- RPC address of nn2 -->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>hadoop102:9000</value>

</property>

<!-- HTTP address of nn1 -->

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>hadoop101:50070</value>

</property>

<!-- HTTP address of nn2 -->

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>hadoop102:50070</value>

</property>

<!-- Location on the JournalNodes where NameNode metadata (the shared edit log) is stored -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://hadoop101:8485;hadoop102:8485;hadoop103:8485/mycluster</value>

</property>

<!-- Fencing method: ensures only one NameNode serves clients at any given time -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<!-- sshfence requires passwordless SSH login; this is the private key it uses -->

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_rsa</value>

</property>

<!-- Local storage directory of the JournalNode servers -->

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/opt/ha/hadoop-2.7.2/data/jn</value>

</property>

<!-- Disable permission checking -->

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

<!-- Client failover proxy provider: the class clients use to find the active NameNode of mycluster and fail over automatically -->

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

</configuration>
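Several of these settings have to agree with each other. The host part of fs.defaultFS in core-site.xml must be the nameservice named in dfs.nameservices, and every id listed in dfs.ha.namenodes.mycluster needs its own rpc-address property. The sketch below checks both points. `hadoop_prop` and `check_ha_conf` are hypothetical helpers, and the parsing assumes each `<name>` and `<value>` element sits on a single line, as in the files above.

```shell
# Hypothetical helper: print the <value> of property NAME in a Hadoop XML file.
# Usage: hadoop_prop FILE NAME
hadoop_prop() {
  awk -v want="$2" '
    /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n) }
    /<value>/ { v = $0; gsub(/.*<value>|<\/value>.*/, "", v)
                if (n == want) { print v; exit } }
  ' "$1"
}

# Hypothetical check: returns 0 if core-site.xml and hdfs-site.xml in DIR agree.
# Usage: check_ha_conf DIR
check_ha_conf() {
  dir="$1"
  ns=$(hadoop_prop "$dir/hdfs-site.xml" dfs.nameservices)
  fs=$(hadoop_prop "$dir/core-site.xml" fs.defaultFS)
  if [ "$fs" != "hdfs://$ns" ]; then
    echo "fs.defaultFS ($fs) does not point at nameservice $ns" >&2
    return 1
  fi
  # Every NameNode id needs an rpc-address entry.
  ids=$(hadoop_prop "$dir/hdfs-site.xml" "dfs.ha.namenodes.$ns" | tr ',' ' ')
  for id in $ids; do
    addr=$(hadoop_prop "$dir/hdfs-site.xml" "dfs.namenode.rpc-address.$ns.$id")
    if [ -z "$addr" ]; then
      echo "missing dfs.namenode.rpc-address.$ns.$id" >&2
      return 1
    fi
    echo "$id -> $addr"
  done
}

# Example:
#   check_ha_conf /opt/module/hadoop-2.7.2/etc/hadoop
```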

vi yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<!-- How reducers fetch data -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Enable ResourceManager HA -->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<!-- Declare the addresses of the two ResourceManagers -->

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>cluster-yarn1</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>hadoop102</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>hadoop103</value>

</property>

<!-- Address of the ZooKeeper ensemble -->

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>hadoop101:2181,hadoop102:2181,hadoop103:2181</value>

</property>

<!-- Enable automatic recovery -->

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<!-- Store ResourceManager state in the ZooKeeper ensemble -->

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

</configuration>

hadoop-daemon.sh start journalnode    # on all three nodes

rm -rf /opt/module/hadoop-2.7.2/data/    # on all three nodes

hdfs namenode -format

/root/bin/xsync /opt/module/hadoop-2.7.2/

hadoop-daemon.sh start namenode    # on hadoop101 and hadoop102

hdfs namenode -bootstrapStandby    # on hadoop102: sync metadata from the NameNode on hadoop101

hadoop-daemon.sh start datanode

hdfs haadmin -transitionToActive nn1 --forcemanual    # make nn1 the active NameNode

start-yarn.sh    # on hadoop102: starts the ResourceManager and the NodeManagers

yarn-daemon.sh start resourcemanager    # on hadoop103: starts the second ResourceManager
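After this sequence, one NameNode and one ResourceManager should be active and the other of each pair standby. Both `hdfs haadmin` and `yarn rmadmin` provide a `-getServiceState` subcommand for checking this. The following is a minimal sketch using the ids configured above (nn1, nn2, rm1, rm2). `ha_states` is a hypothetical wrapper and assumes the Hadoop bin directory is on PATH.

```shell
# Hypothetical wrapper: print "<id>=<state>" for each NameNode and ResourceManager id.
ha_states() {
  for id in nn1 nn2; do
    echo "$id=$(hdfs haadmin -getServiceState "$id" 2>/dev/null)"
  done
  for id in rm1 rm2; do
    echo "$id=$(yarn rmadmin -getServiceState "$id" 2>/dev/null)"
  done
}

# Example: run on any node of the cluster.
#   ha_states
```

An empty state after the `=` sign suggests that the corresponding daemon could not be reached.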
