Highly Available Rancher on Kubernetes

1. What is Rancher?

In one sentence: Rancher can deploy K8S clusters and manage the workloads deployed on them.

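2. How can Rancher be deployed?

2.1 Docker installation

This method is recommended for small teams, or for teams that are just starting to use Rancher to manage K8S clusters. Rancher runs as a single container here, so it is not highly available.

2.2 Helm deployment onto a K8S cluster

For teams of a certain size that already have some K8S management experience, we recommend deploying Rancher on Kubernetes so that Rancher itself is highly available.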

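3. Deploying Rancher on a Kubernetes cluster

3.1 Quickly deploying a K8S cluster

The K8S cluster can be deployed with RKE or with any other quick deployment method. This example uses KubeKey.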

3.1.1 Host preparation

| Hostname | IP address | Notes |
|---|---|---|
| k8s-master01 | 192.168.10.140/24 | master |
| k8s-worker01 | 192.168.10.141/24 | worker |
| k8s-worker02 | 192.168.10.142/24 | worker |

# vim /etc/hosts

# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.10.140 k8s-master01

192.168.10.141 k8s-worker01

192.168.10.142 k8s-worker02
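The same entries are needed on every node, so editing each file by hand is easy to get wrong. Below is a minimal sketch that appends an entry only when the hostname is not mapped yet. The function name `add_host_entry` and the `HOSTS_FILE` override are hypothetical helpers introduced for illustration, not part of any tool used in this article.

```shell
#!/bin/sh
# HOSTS_FILE is a hypothetical override so the sketch can be tried on a copy first.
HOSTS_FILE="${HOSTS_FILE:-/etc/hosts}"

# Append "IP NAME" to $HOSTS_FILE unless NAME already appears as a hostname
# on a non-comment line.
add_host_entry() {
    ip="$1"
    name="$2"
    if awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$HOSTS_FILE"; then
        return 0    # already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
}

# Example calls (run as root on each node):
#   add_host_entry 192.168.10.140 k8s-master01
#   add_host_entry 192.168.10.141 k8s-worker01
#   add_host_entry 192.168.10.142 k8s-worker02
```

Running the calls a second time leaves the file unchanged, which makes the sketch safe to repeat on nodes that were already prepared.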

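3.1.2 Software preparation

Requirements for Kubernetes 1.18 or later:

| Package | Required? |
|---|---|
| socat | Required |
| conntrack | Required |
| ebtables | Optional but recommended |
| ipset | Optional but recommended |
| ipvsadm | Optional but recommended |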

# yum -y install socat conntrack ebtables ipset ipvsadm
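After the install, it can be worth confirming that the tools are really on the PATH of each node before KubeKey runs. The sketch below prints whichever commands are still missing; `missing_tools` is a hypothetical helper name, and the command names are assumed to match the package names in the table above.

```shell
#!/bin/sh
# Print every command from the argument list that cannot be found in PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Example call (run on each node); no output means everything was found:
#   missing_tools socat conntrack ebtables ipset ipvsadm
```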

3.1.3 Deploying a multi-node K8S cluster with KubeKey

3.1.3.1 Downloading KubeKey

[root@k8s-master01 ~]# curl -sfL https://get-kk.kubesphere.io | sh -

[root@k8s-master01 ~]# ls

kk kubekey-v3.0.7-linux-amd64.tar.gz

[root@k8s-master01 ~]# mv kk /usr/local/bin/

3.1.3.2 Multi-node K8S cluster deployment

Reference: https://www.kubesphere.io/zh/docs/v3.3/installing-on-linux/introduction/multioverview/

Reference: https://github.com/kubesphere/kubekey

3.1.3.2.1 Creating the kk configuration file for the K8S cluster

[root@k8s-master01 ~]# kk create config -f multi-node-k8s.yaml

Output:

Generate KubeKey config file successfully

[root@k8s-master01 ~]# ls

multi-node-k8s.yaml

[root@k8s-master01 ~]# vim multi-node-k8s.yaml

[root@k8s-master01 ~]# cat multi-node-k8s.yaml

apiVersion: kubekey.kubesphere.io/v1alpha2
kind: Cluster
metadata:
  name: member1
spec:
  hosts:
  - {name: k8s-master01, address: 192.168.10.140, internalAddress: 192.168.10.140, user: root, password: "centos"}
  - {name: k8s-worker01, address: 192.168.10.141, internalAddress: 192.168.10.141, user: root, password: "centos"}
  - {name: k8s-worker02, address: 192.168.10.142, internalAddress: 192.168.10.142, user: root, password: "centos"}
  roleGroups:
    etcd:
    - k8s-master01
    control-plane:
    - k8s-master01
    worker:
    - k8s-worker01
    - k8s-worker02
  controlPlaneEndpoint:
    ## Internal loadbalancer for apiservers
    # internalLoadbalancer: haproxy
    domain: lb.kubemsb.com
    address: ""
    port: 6443
  kubernetes:
    version: v1.23.10
    clusterName: cluster.local
    autoRenewCerts: true
    containerManager: docker
  etcd:
    type: kubekey
  network:
    plugin: calico
    kubePodsCIDR: 10.244.0.0/16
    kubeServiceCIDR: 10.96.0.0/16
    ## multus support. https://github.com/k8snetworkplumbingwg/multus-cni
    multusCNI:
      enabled: false
  registry:
    privateRegistry: ""
    namespaceOverride: ""
    registryMirrors: []
    insecureRegistries: []
  addons: []

For host authentication, the following forms can also be used.

Password login (the user defaults to root when omitted):

hosts:
  - {name: master, address: 192.168.10.140, internalAddress: 192.168.10.140, password: centos}

Passwordless login with an SSH key:

hosts:
  - {name: master, address: 192.168.10.140, internalAddress: 192.168.10.140, privateKeyPath: "~/.ssh/id_rsa"}

3.1.3.2.2 Creating the K8S cluster with kk

[root@k8s-master01 ~]# kk create cluster -f multi-node-k8s.yaml

When the installation finishes:

18:28:03 CST Pipeline[CreateClusterPipeline] execute successfully

Installation is complete.

Please check the result using the command:

kubectl get pod -A

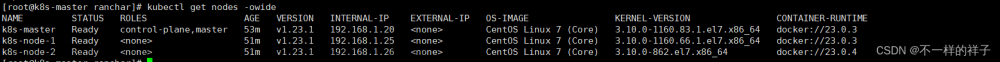

Check the nodes:

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready control-plane,master 81s v1.23.10

k8s-worker01 Ready worker 59s v1.23.10

k8s-worker02 Ready worker 59s v1.23.10
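On a larger cluster, reading the table by eye gets tedious. As a small sketch, the filter below prints only the nodes whose STATUS column is not exactly `Ready`; `not_ready_nodes` is a hypothetical helper name, and it assumes the column layout shown above (name first, status second).

```shell
#!/bin/sh
# Read `kubectl get nodes --no-headers` output on stdin and print the names
# of nodes whose STATUS column is anything other than "Ready".
# Note: a cordoned node ("Ready,SchedulingDisabled") is reported as well.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Example call; no output means every node is Ready:
#   kubectl get nodes --no-headers | not_ready_nodes
```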

Check all Pods:

[root@k8s-master01 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-84897d7cdf-6mkhc 1/1 Running 0 63s

kube-system calico-node-2vjw7 1/1 Running 0 63s

kube-system calico-node-4lvzb 1/1 Running 0 63s

kube-system calico-node-zgt7p 1/1 Running 0 63s

kube-system coredns-b7c47bcdc-lqbzj 1/1 Running 0 75s

kube-system coredns-b7c47bcdc-rtj6b 1/1 Running 0 75s

kube-system kube-apiserver-k8s-master01 1/1 Running 0 89s

kube-system kube-controller-manager-k8s-master01 1/1 Running 0 88s

kube-system kube-proxy-29pmp 1/1 Running 0 69s

kube-system kube-proxy-59fqk 1/1 Running 0 69s

kube-system kube-proxy-nwm4r 1/1 Running 0 76s

kube-system kube-scheduler-k8s-master01 1/1 Running 0 89s

kube-system nodelocaldns-q8nvj 1/1 Running 0 69s

kube-system nodelocaldns-wbd29 1/1 Running 0 76s

kube-system nodelocaldns-xkhb9 1/1 Running 0 69s

3.2 Preparing the MetalLB load balancer

3.2.1 Official website

3.2.2 Changing the kube-proxy proxy mode and IPVS configuration

[root@k8s-master01 ~]# kubectl get configmap -n kube-system

NAME DATA AGE

kube-proxy 2 7h1m

[root@k8s-master01 ~]# kubectl edit configmap kube-proxy -n kube-system

......
    ipvs:
      excludeCIDRs: null
      minSyncPeriod: 0s
      scheduler: ""
      strictARP: true   # changed from false to true
      syncPeriod: 0s
      tcpFinTimeout: 0s
      tcpTimeout: 0s
      udpTimeout: 0s
    kind: KubeProxyConfiguration
    metricsBindAddress: ""
    mode: ipvs   # change this
    nodePortAddresses: null
    oomScoreAdj: null
    portRange: ""
    showHiddenMetricsForVersion: ""
    udpIdleTimeout: 0s

[root@k8s-master01 ~]# kubectl rollout restart daemonset kube-proxy -n kube-system
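The interactive edit can also be scripted, which helps when the step has to be repeated. The following is a sketch under assumptions: `patch_kube_proxy` is a hypothetical helper, it expects the ConfigMap to contain `strictARP: false` and an empty `mode: ""` as in a default configuration, and it writes the mode as `"ipvs"` (quoted), which is equivalent YAML to the unquoted form. If the mode is already set, that line is left untouched.

```shell
#!/bin/sh
# Read a kube-proxy ConfigMap on stdin and write the patched version to stdout:
# strictARP false -> true, and an empty mode -> "ipvs".
patch_kube_proxy() {
    sed -e 's/strictARP: false/strictARP: true/' \
        -e 's/^\( *\)mode: ""$/\1mode: "ipvs"/'
}

# Example usage against the live cluster:
#   kubectl get configmap kube-proxy -n kube-system -o yaml \
#     | patch_kube_proxy \
#     | kubectl apply -f - -n kube-system
#   kubectl rollout restart daemonset kube-proxy -n kube-system
```

The `mode` pattern is anchored to the start of the line so that other keys ending in `Mode` are not rewritten by accident.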

3.2.3 Deploying MetalLB

[root@k8s-master01 ~]# kubectl apply -f https://raw.githubusercontent.com/metallb/metallb/v0.13.10/config/manifests/metallb-native.yaml

3.2.4 Configuring the IP address pool and enabling Layer 2 advertisement

[root@k8s-master01 ~]# vim ippool.yaml

[root@k8s-master01 ~]# cat ippool.yaml

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: first-pool
  namespace: metallb-system
spec:
  addresses:
  - 192.168.10.240-192.168.10.250

[root@k8s-master01 ~]# kubectl apply -f ippool.yaml

[root@k8s-master01 ~]# vim l2.yaml

[root@k8s-master01 ~]# cat l2.yaml

apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: example
  namespace: metallb-system

[root@k8s-master01 ~]# kubectl apply -f l2.yaml

3.3 Deploying the ingress-nginx proxy service

[root@k8s-master01 ~]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.8.1/deploy/static/provider/cloud/deploy.yaml

[root@k8s-master01 ~]# vim deploy.yaml

......
  externalTrafficPolicy: Cluster   # changed from Local to Cluster
  ipFamilies:
  - IPv4
  ipFamilyPolicy: SingleStack
  ports:
  - appProtocol: http
    name: http
    port: 80
    protocol: TCP
    targetPort: http
  - appProtocol: https
    name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
  type: LoadBalancer   # note this line

[root@k8s-master01 ~]# kubectl apply -f deploy.yaml
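The change to `externalTrafficPolicy` can also be made without opening an editor. This is a minimal sketch, assuming the downloaded manifest contains the line `externalTrafficPolicy: Local` exactly as described above; `set_traffic_policy_cluster` and the output file name `deploy-cluster.yaml` are hypothetical names used for illustration.

```shell
#!/bin/sh
# Read a manifest on stdin and switch externalTrafficPolicy from Local to
# Cluster, preserving the original indentation of the line.
set_traffic_policy_cluster() {
    sed 's/^\( *\)externalTrafficPolicy: Local$/\1externalTrafficPolicy: Cluster/'
}

# Example usage:
#   set_traffic_policy_cluster < deploy.yaml > deploy-cluster.yaml
#   kubectl apply -f deploy-cluster.yaml
```

Writing to a separate file keeps the downloaded manifest intact, so the two versions can be compared with `diff` before applying.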

[root@k8s-master01 ~]# kubectl get all -n ingress-nginx

NAME READY STATUS RESTARTS AGE

pod/ingress-nginx-admission-create-6trx2 0/1 Completed 0 4h23m

pod/ingress-nginx-admission-patch-xwbsx 0/1 Completed 2 4h23m

pod/ingress-nginx-controller-bdcdb7d6d-758cc 1/1 Running 0 4h23m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/ingress-nginx-controller LoadBalancer 10.233.32.199 192.168.10.240 80:32061/TCP,443:32737/TCP 4h23m

service/ingress-nginx-controller-admission ClusterIP 10.233.13.200 <none> 443/TCP 4h23m

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/ingress-nginx-controller 1/1 1 1 4h23m

NAME DESIRED CURRENT READY AGE

replicaset.apps/ingress-nginx-controller-bdcdb7d6d 1 1 1 4h23m

NAME COMPLETIONS DURATION AGE

job.batch/ingress-nginx-admission-create 1/1 43s 4h23m

job.batch/ingress-nginx-admission-patch 1/1 57s 4h23m
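The EXTERNAL-IP in this output (192.168.10.240) should come from the MetalLB pool configured in 3.2.4. As an illustrative sketch, the helpers below check whether an IPv4 address falls inside a range; `ip_to_int` and `ip_in_range` are hypothetical names, and addresses are assumed to be plain dotted decimals without leading zeros.

```shell
#!/bin/sh
# Convert a dotted IPv4 address to a single integer.
ip_to_int() {
    old_ifs="$IFS"
    IFS=.
    set -- $1          # split the address into four octets
    IFS="$old_ifs"
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed when address $1 lies within the inclusive range $2..$3.
ip_in_range() {
    ip="$(ip_to_int "$1")"
    lo="$(ip_to_int "$2")"
    hi="$(ip_to_int "$3")"
    [ "$ip" -ge "$lo" ] && [ "$ip" -le "$hi" ]
}

# Example usage:
#   external_ip="$(kubectl get svc ingress-nginx-controller -n ingress-nginx \
#       -o jsonpath='{.status.loadBalancer.ingress[0].ip}')"
#   ip_in_range "$external_ip" 192.168.10.240 192.168.10.250 \
#       && echo "assigned from first-pool"
```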

3.4 Preparing Helm

Helm is already installed when the K8S cluster is deployed with KubeKey. If it is not available, install it as follows.

[root@k8s-master01 ~]# wget https://get.helm.sh/helm-v3.12.3-linux-amd64.tar.gz

[root@k8s-master01 ~]# tar xf helm-v3.12.3-linux-amd64.tar.gz

[root@k8s-master01 ~]# mv linux-amd64/helm /usr/local/bin/helm

[root@k8s-master01 ~]# helm version

3.5 Preparing the Helm chart repository

[root@k8s-master01 ~]# helm repo add rancher-stable https://releases.rancher.com/server-charts/stable

[root@k8s-master01 ~]# helm repo list

NAME URL

rancher-stable https://releases.rancher.com/server-charts/stable

[root@k8s-master01 ~]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "rancher-stable" chart repository

Update Complete. ⎈Happy Helming!⎈

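3.6 Deploying cert-manager

Rancher Manager Server requires SSL/TLS by default to secure access, so cert-manager must be deployed to issue certificates automatically.

Alternatively, a real domain name with a certificate issued for that domain can be used.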

[root@k8s-master01 ~]# kubectl apply -f https://github.com/cert-manager/cert-manager/releases/download/v1.11.0/cert-manager.crds.yaml

customresourcedefinition.apiextensions.k8s.io/clusterissuers.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/challenges.acme.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/certificaterequests.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/issuers.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/certificates.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/orders.acme.cert-manager.io created

[root@k8s-master01 ~]# helm repo add jetstack https://charts.jetstack.io

"jetstack" has been added to your repositories

[root@k8s-master01 ~]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "jetstack" chart repository

...Successfully got an update from the "rancher-stable" chart repository

Update Complete. ⎈Happy Helming!⎈

[root@k8s-master01 ~]# helm install cert-manager jetstack/cert-manager \
  --namespace cert-manager \
  --create-namespace \
  --version v1.11.0

Output:

NAME: cert-manager

LAST DEPLOYED: Fri Aug 18 12:05:53 2023

NAMESPACE: cert-manager

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

cert-manager v1.11.0 has been deployed successfully!

In order to begin issuing certificates, you will need to set up a ClusterIssuer

or Issuer resource (for example, by creating a 'letsencrypt-staging' issuer).

More information on the different types of issuers and how to configure them

can be found in our documentation:

https://cert-manager.io/docs/configuration/

For information on how to configure cert-manager to automatically provision

Certificates for Ingress resources, take a look at the `ingress-shim`

documentation:

https://cert-manager.io/docs/usage/ingress/

[root@k8s-master01 ~]# kubectl get pods -n cert-manager

NAME READY STATUS RESTARTS AGE

cert-manager-6b4d84674-c29gd 1/1 Running 0 8m39s

cert-manager-cainjector-59f8d9f696-trrl5 1/1 Running 0 8m39s

cert-manager-webhook-56889bfc96-59ddj 1/1 Running 0 8m39s

3.7 Deploying Rancher

[root@k8s-master01 ~]# kubectl create namespace cattle-system

namespace/cattle-system created

[root@k8s-master01 ~]# kubectl get ns

NAME STATUS AGE

cattle-system Active 7s

default Active 178m

ingress-nginx Active 2m4s

kube-node-lease Active 178m

kube-public Active 178m

kube-system Active 178m

kubekey-system Active 178m

metallb-system Active 10m

[root@k8s-master01 ~]# vim rancher-install.sh

[root@k8s-master01 ~]# cat rancher-install.sh

helm install rancher rancher-stable/rancher \
  --namespace cattle-system \
  --set hostname=www.kubex.com.cn \
  --set bootstrapPassword=admin \
  --set ingress.tls.source=rancher \
  --set ingress.extraAnnotations.'kubernetes\.io/ingress\.class'=nginx

[root@k8s-master01 ~]# sh rancher-install.sh

[root@k8s-master01 ~]# kubectl -n cattle-system rollout status deploy/rancher

The following output indicates success:

Waiting for deployment "rancher" rollout to finish: 0 of 3 updated replicas are available...

Waiting for deployment "rancher" rollout to finish: 1 of 3 updated replicas are available...

Waiting for deployment "rancher" rollout to finish: 2 of 3 updated replicas are available...

deployment "rancher" successfully rolled out

3.8 Accessing Rancher

[root@k8s-master01 ~]# echo https://www.kubex.com.cn/dashboard/?setup=$(kubectl get secret --namespace cattle-system bootstrap-secret -o go-template='{{.data.bootstrapPassword|base64decode}}')

https://www.kubex.com.cn/dashboard/?setup=admin
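The go-template in the command above decodes the password because Kubernetes stores Secret values base64-encoded. The sketch below shows the same step done by hand, which may help when debugging; `setup_url` is a hypothetical helper, and it assumes the password contains no characters that need URL encoding.

```shell
#!/bin/sh
# Build the first-login URL from a hostname and a base64-encoded password,
# as stored in the bootstrap-secret Secret.
setup_url() {
    host="$1"
    password="$(printf '%s' "$2" | base64 -d)"
    printf 'https://%s/dashboard/?setup=%s\n' "$host" "$password"
}

# Example usage:
#   encoded="$(kubectl get secret bootstrap-secret -n cattle-system \
#       -o jsonpath='{.data.bootstrapPassword}')"
#   setup_url www.kubex.com.cn "$encoded"
```

Unless www.kubex.com.cn is served by real DNS, the machine running the browser presumably also needs to resolve that name to the ingress EXTERNAL-IP (192.168.10.240 in the output in 3.3), for example through a hosts entry.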
