I. Introduction

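Continuing from my previous notes: Scrapy can smoothly extract the information we need from ordinary static pages that have no anti-scraping measures. Most of the time, however, the data we want sits on a page that is loaded dynamically (the front end renders it after an AJAX request returns, JavaScript functions build it, and so on). In that case we need Selenium to simulate a person operating the browser, which lets us automate collecting data from dynamic pages.

Article source: https://www.toymoban.com/news/detail-765925.html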

II. Environment Setup

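- Scrapy's basic dependencies (covered in my earlier posts)
- Selenium dependencies:
  - `pip install selenium==4.0.0a6.post2`
  - `pip install certifi`
  - `pip install urllib3==1.25.11`
- Firefox and the matching browser driver (geckodriver):
  - I'm using the latest Firefox, 121.0
  - The driver version is 0.3.0; see the resource link above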

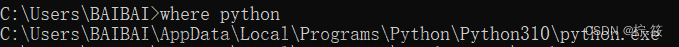

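- Put the driver in the `Scripts` folder of your Python environment (on Windows this folder is normally on `PATH`, so Selenium can find the driver there)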

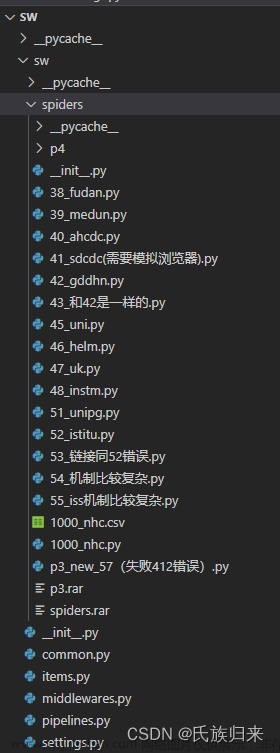
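Before writing any Scrapy code, it can help to confirm the driver is actually discoverable. The sketch below is a hypothetical helper (`find_geckodriver` is my own name, not part of Selenium) that uses the standard library's `shutil.which` to look up `geckodriver` on `PATH`, which is roughly where Selenium looks when you don't pass a driver path yourself.

```python
import os
import shutil
from typing import Optional


def find_geckodriver(search_path: Optional[str] = None) -> Optional[str]:
    """Return the full path of geckodriver, or None if it cannot be found.

    search_path: an os.pathsep-separated list of directories to search;
    None means "use the current PATH environment variable".
    """
    # shutil.which honours PATHEXT on Windows, so "geckodriver" also matches geckodriver.exe
    return shutil.which("geckodriver", path=search_path)


if __name__ == "__main__":
    found = find_geckodriver()
    if found:
        print(f"geckodriver found at: {found}")
    else:
        print("geckodriver not found; check that its folder (e.g. Python's Scripts) is on PATH")
        print("PATH =", os.environ.get("PATH", ""))
```

If the script reports "not found", Selenium will most likely fail to start Firefox as well, so fix `PATH` first.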

III. Implementation

- settings.py: enable (uncomment) the middlewares and item pipeline generated by `scrapy startproject`

```python
SPIDER_MIDDLEWARES = {
    'stock_spider.middlewares.StockSpiderSpiderMiddleware': 543,
}

DOWNLOADER_MIDDLEWARES = {
    'stock_spider.middlewares.StockSpiderDownloaderMiddleware': 543,
}

ITEM_PIPELINES = {
    'stock_spider.pipelines.StockSpiderPipeline': 300,
}
```

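- Middleware (middlewares.py): create the Firefox WebDriver and attach it to the spider

```python
from selenium import webdriver
from selenium.webdriver.firefox.options import Options as firefox_options

# Runs inside a middleware method, where `spider` is available
spider.driver = webdriver.Firefox(options=firefox_options())  # use Firefox as the browser
```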
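The snippet above creates the browser but does not show it being closed, so a Firefox process can be left running after the crawl. Below is a minimal sketch of one way to tie the driver to the spider's lifetime, assuming you hook Scrapy's `spider_opened` and `spider_closed` signals from `from_crawler`, the way the middleware template generated by `scrapy startproject` already does for `spider_opened`. `SeleniumDriverMiddleware` is a hypothetical name; in this project the methods would go into `StockSpiderDownloaderMiddleware`.

```python
class SeleniumDriverMiddleware:  # hypothetical name; merge into StockSpiderDownloaderMiddleware
    @classmethod
    def from_crawler(cls, crawler):
        # Imported inside the method; moving it to the top of middlewares.py works the same
        from scrapy import signals

        middleware = cls()
        crawler.signals.connect(middleware.spider_opened, signal=signals.spider_opened)
        crawler.signals.connect(middleware.spider_closed, signal=signals.spider_closed)
        return middleware

    def spider_opened(self, spider):
        from selenium import webdriver
        from selenium.webdriver.firefox.options import Options as firefox_options

        # One Firefox instance per spider, shared by process_request/process_response
        spider.driver = webdriver.Firefox(options=firefox_options())

    def spider_closed(self, spider):
        # quit() closes all browser windows and stops the geckodriver process
        driver = getattr(spider, "driver", None)
        if driver is not None:
            driver.quit()
            spider.driver = None
```

Keeping one browser for the whole crawl avoids paying Firefox's startup cost on every request; the trade-off is that all requests share the same cookies and session.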

- `process_request`: load the requested page in Firefox

```python
def process_request(self, request, spider):
    # Called for each request that goes through the downloader middleware.
    # Must either:
    # - return None: continue processing this request
    # - or return a Response object
    # - or return a Request object
    # - or raise IgnoreRequest: process_exception() methods of
    #   installed downloader middleware will be called

    # Open the requested URL in Firefox so the page's JavaScript runs
    spider.driver.get(request.url)
    return None
```

- `process_response`: replace the downloaded body with the HTML rendered by Firefox

```python
from scrapy.http import HtmlResponse

def process_response(self, request, response, spider):
    # Called with the response returned from the downloader.
    # Must either:
    # - return a Response object
    # - return a Request object
    # - or raise IgnoreRequest

    # page_source is the DOM after JavaScript has run, serialized to HTML
    response_body = spider.driver.page_source
    return HtmlResponse(url=request.url, body=response_body, encoding='utf-8', request=request)
```
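With `process_request` returning `None`, Scrapy still downloads each URL with its own downloader, and `process_response` then discards that body in favour of Firefox's `page_source`, so every page is fetched twice. Scrapy's downloader-middleware contract also allows `process_request` to return a `Response`, in which case Scrapy skips its own download (the `process_response` methods of installed middlewares still run). A minimal sketch of that variant, assuming the driver is attached as `spider.driver` as above; `render_page` and `SeleniumRenderMiddleware` are hypothetical names.

```python
def render_page(driver, url):
    """Load url in the Selenium-controlled browser and return the rendered HTML."""
    # With the default page-load strategy, get() waits for the page's load event;
    # content fetched later by AJAX may still be missing at this point
    driver.get(url)
    return driver.page_source


class SeleniumRenderMiddleware:  # hypothetical name
    def process_request(self, request, spider):
        from scrapy.http import HtmlResponse

        html = render_page(spider.driver, request.url)
        # Returning a Response tells Scrapy not to download this URL itself
        return HtmlResponse(url=request.url, body=html, encoding="utf-8", request=request)

    def process_response(self, request, response, spider):
        # The body already came from Firefox, so pass it through unchanged
        return response
```

If you adopt this variant, the body swap in `process_response` is no longer needed.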

After you start the spider you will see it launch the browser through the driver; from there you can implement all kinds of simulated user actions.
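As one example of such a simulated user action, many dynamic pages only load more data when you scroll down. The sketch below is a common pattern, not something from the original post: it repeatedly scrolls to the bottom with Selenium's `driver.execute_script` until the page height stops growing or a round limit is reached. `scroll_to_bottom`, `pause` and `max_rounds` are hypothetical names; you could call it in `process_request` after `spider.driver.get(...)` and before reading `page_source`.

```python
import time


def scroll_to_bottom(driver, pause=1.0, max_rounds=20):
    """Scroll until document.body.scrollHeight stops growing; return the number of scrolls."""
    last_height = driver.execute_script("return document.body.scrollHeight")
    rounds = 0
    while rounds < max_rounds:
        # Jump to the bottom of the page, which is what most infinite-scroll loaders react to
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        rounds += 1
        time.sleep(pause)  # give the page's AJAX requests time to append content
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            break  # height unchanged: nothing new was loaded
        last_height = new_height
    return rounds
```

A fixed `pause` is the simplest choice; for a specific element, Selenium's `WebDriverWait` with an expected condition is usually more reliable than sleeping.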

That concludes this article on advanced Python scraping: using Selenium in Scrapy to drive the Firefox browser and scrape web page information.