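What are the features of k8s?

- Service discovery & load balancing: services are easy to expose to external users

- Easy rollbacks and failure recovery

- Backed by deep-pocketed sponsors (Google, Red Hat, and the like)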

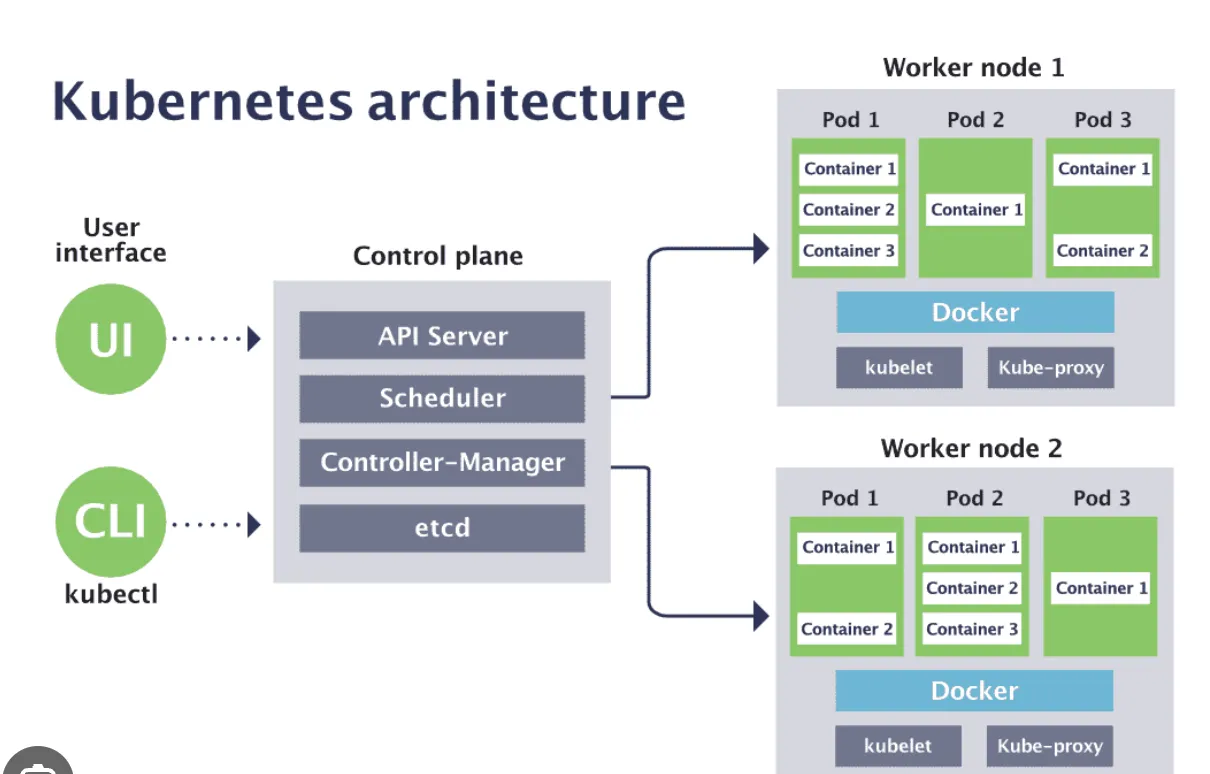

Architecture

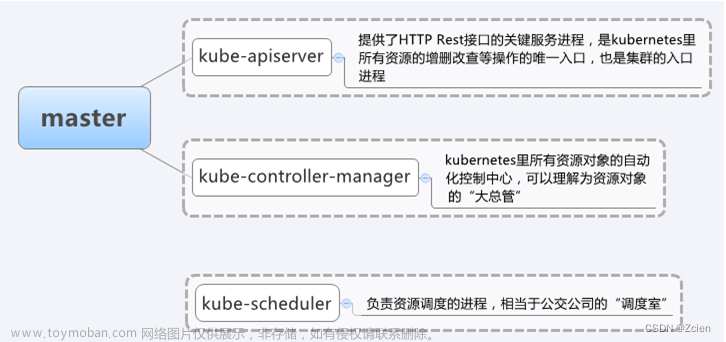

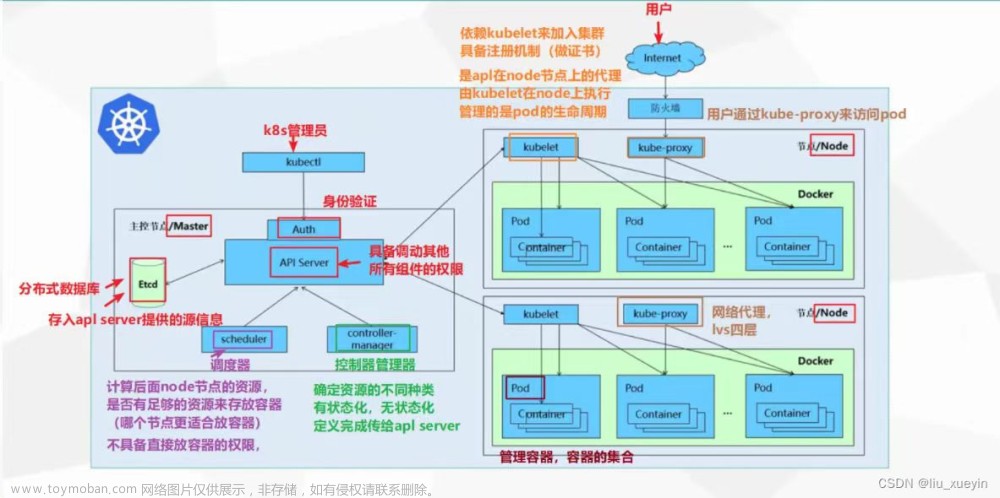

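- master (also called the Control Plane) has 4 components

  - etcd: stores the state of this distributed cluster

  - kube-apiserver: the hub that all communication goes through

  - kube-controller-manager: the apiserver's first sidekick, a gangster with no ideas of his own; it just keeps driving the cluster toward whatever state the apiserver has on record

  - kube-scheduler: the apiserver's second sidekick, in charge of scheduling, i.e. picking a node for each pod

- worker has 3 components

  - kubelet: the apiserver's third sidekick, the errand runner that actually starts the pods on its node

  - kube-proxy: responsible for Service networking, which matters a lot: without it, service discovery is dead

  - container runtime: another errand runner, it runs the containers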

CKA mock exam question 1

How do you join a new node, worker03, to the cluster?

# 1 Log in to the master node

ssh master

# 2 Generate the join command

kubeadm

kubeadm token -h

kubeadm token create -h

kubeadm token create --print-join-command

# 3 Copy the output of the last command (kubeadm token create --print-join-command), then run it on the worker03 node

exit

ssh worker03

# It looks something like this

kubeadm join 192.168.240.231:6443 --token xz51cy.bn85l1lh3xaargy8 --discovery-token-ca-cert-hash sha256:30502614fdcc0043f0cd2a4720b051f55feecdfd4db5ffee1b11250adead22b4

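# 4 Wait about 5 minutes for the node to become Ready

# Verify (from a node where kubectl is configured, e.g. master)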

kubectl get nodes
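Rather than waiting a fixed five minutes, the check can be scripted. The following is only a minimal sketch: `node_is_ready` is a hypothetical helper name, and the sample text stands in for real `kubectl get nodes --no-headers` output (the node names, ages, and versions in it are made up for illustration).

```shell
#!/usr/bin/env bash
# node_is_ready NODE: reads `kubectl get nodes --no-headers` output on stdin
# and succeeds only if NODE's STATUS column is exactly "Ready".
node_is_ready() {
  awk -v node="$1" '$1 == node && $2 == "Ready" { found = 1 } END { exit !found }'
}

# Hypothetical sample output, used here instead of a live cluster.
sample='master     Ready      control-plane   30d   v1.28.2
worker01   Ready      <none>          30d   v1.28.2
worker03   NotReady   <none>          20s   v1.28.2'

if printf '%s\n' "$sample" | node_is_ready worker03; then
  echo "worker03 is Ready"
else
  echo "worker03 is not Ready yet"
fi
```

Against a real cluster this would be used as `kubectl get nodes --no-headers | node_is_ready worker03`. Note that the exact match deliberately treats a cordoned node (`Ready,SchedulingDisabled`) as not ready. `kubectl wait --for=condition=Ready node/worker03 --timeout=300s` should achieve much the same thing without any parsing.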

CKA mock exam question 2

Find out which network plugin (CNI) this cluster uses

# whatever shows up under /etc/cni/net.d/ is, most of the time, the answer

ls -l /etc/cni/net.d/

# e.g. 05-cilium.conflist

k get po --all-namespaces | grep cilium

k get po -A | grep cilium
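The "look at the file name" trick can be written down as a tiny helper. This is a sketch under the assumption that the config file follows the common `NN-<plugin>.conflist` naming pattern, which is a convention rather than a guarantee; `cni_name_from_conf` is a hypothetical name, and the pod listing above remains the more reliable check.

```shell
#!/usr/bin/env bash
# cni_name_from_conf FILE: strips the directory, a leading numeric ordering
# prefix such as "05-", and a .conflist/.conf/.json extension from a CNI
# config file name, leaving what is usually the plugin name.
cni_name_from_conf() {
  basename "$1" | sed -E 's/^[0-9]+-//; s/\.(conflist|conf|json)$//'
}

cni_name_from_conf /etc/cni/net.d/05-cilium.conflist   # prints: cilium
```

The printed name can then be fed straight into the grep, e.g. `k get po -A | grep "$(cni_name_from_conf /etc/cni/net.d/05-cilium.conflist)"`.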

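Aside: Pod QoS

A pod falls into one of the three classes below. The class matters when the cluster or a node runs short of resources: pods in the lowest class are evicted first.

- Guaranteed: every container sets resources, and both limits and requests include cpu and memory with identical values

- Burstable: at least one container sets something under resources (anything at all), and the pod does not qualify as Guaranteed

- BestEffort: no container sets resources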

k explain ns

k explain ns.spec

# create the lab environment

cat << eof | k apply -f - -n csdn-test

---

{ apiVersion: v1, kind: Namespace, metadata: { name: csdn-test } }

...

eof

for podd in `k get po -oname`; do k delete $podd ; done

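- cpu/memory limits == cpu/memory requests (p0): Guaranteed

- cpu/memory limits only (p1): Guaranteed

- cpu/memory requests only (p2): Burstable

- cpu limits only (p3): Burstable

- memory limits only (p4): Burstable

- cpu requests only (p5): Burstable

- memory requests only (p6): Burstable

- nothing set (p7): BestEffort

If no container sets any requests or limits, the pod is BestEffort.

As soon as anything is set, it is at least Burstable.

If every container sets both cpu and memory limits, and its requests equal its limits (requests left unset default to the limits), the pod is Guaranteed.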
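The three rules above can be sketched as a small classifier. This is an illustration only, not how the kubelet implements it: `qos_class` is a hypothetical helper, the four-column input format is invented for this example, and quantities are compared as plain strings (so `200m` and `0.2` would wrongly count as different).

```shell
#!/usr/bin/env bash
# qos_class: reads one container per line on stdin as four fields
#   cpu_request cpu_limit mem_request mem_limit
# where "-" means "not set", and prints the pod's QoS class.
qos_class() {
  awk '
    {
      cr = $1; cl = $2; mr = $3; ml = $4
      # A request left unset defaults to the limit when a limit is set.
      if (cr == "-" && cl != "-") cr = cl
      if (mr == "-" && ml != "-") mr = ml
      # Anything set at all rules out BestEffort.
      if (cr != "-" || cl != "-" || mr != "-" || ml != "-") any = 1
      # A missing limit or request != limit rules out Guaranteed.
      if (cl == "-" || ml == "-" || cr != cl || mr != ml) not_guaranteed = 1
    }
    END {
      if (!any)                 print "BestEffort"
      else if (!not_guaranteed) print "Guaranteed"
      else                      print "Burstable"
    }'
}

printf '%s\n' '200m 200m 200Mi 200Mi' | qos_class           # Guaranteed
printf '%s\n' '- 200m - 200Mi' | qos_class                  # Guaranteed (limits only)
printf '%s\n' '200m - 200Mi -' | qos_class                  # Burstable (requests only)
printf '%s\n' '- - - -' | qos_class                         # BestEffort
printf '%s\n' '200m 200m 200Mi 200Mi' '- - - -' | qos_class # Burstable (two containers)
```

The last call shows why the rule says "every container": one container with nothing set is enough to drop an otherwise Guaranteed pod to Burstable.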

cat << eof | k apply -f - -n csdn-test
---
apiVersion: v1
kind: Pod
metadata:
  name: p0
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      requests: { cpu: 200m, memory: 200Mi }
      limits: { cpu: 200m, memory: 200Mi }
---
apiVersion: v1
kind: Pod
metadata:
  name: p1
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
---
apiVersion: v1
kind: Pod
metadata:
  name: p2
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      requests: { cpu: 200m, memory: 200Mi }
---
apiVersion: v1
kind: Pod
metadata:
  name: p3
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { cpu: 100m }
...
---
apiVersion: v1
kind: Pod
metadata:
  name: p4
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { memory: 200Mi }
...
---
apiVersion: v1
kind: Pod
metadata:
  name: p5
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      requests: { cpu: 100m }
...
---
apiVersion: v1
kind: Pod
metadata:
  name: p6
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      requests: { memory: 200Mi }
...
---
apiVersion: v1
kind: Pod
metadata:
  name: p7
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
...
eof

# the pods are named p0..p(len-1), so count from 0 rather than 1

len=$(k get po -oname | wc -l)

for i in $(seq 0 $((len - 1))); do

QoS=$(k describe po/p${i} | awk '/QoS/{print $3}')

printf '%s\t%s\n' "p$i" "$QoS"

done

# k describe po/p1 | sed -ne 's/QoS Class\:\s*//gp'

for podd in `k get po -oname`; do

k delete $podd &

done

cat << eof | k apply -f - -n csdn-test
---
# p1: both containers set limits only -> Guaranteed
apiVersion: v1
kind: Pod
metadata:
  name: p1
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
  - name: c2
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
---
# p2: c1 sets limits, c2 sets nothing -> Burstable
apiVersion: v1
kind: Pod
metadata:
  name: p2
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
  - name: c2
    image: nginx
---
# p3: both containers set limits == requests -> Guaranteed
apiVersion: v1
kind: Pod
metadata:
  name: p3
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
      requests: { cpu: 200m, memory: 200Mi }
  - name: c2
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
      requests: { cpu: 200m, memory: 200Mi }
---
# p4: c1 sets limits == requests, c2 sets nothing -> Burstable
apiVersion: v1
kind: Pod
metadata:
  name: p4
  namespace: csdn-test
spec:
  containers:
  - name: c1
    image: nginx
    resources:
      limits: { cpu: 200m, memory: 200Mi }
      requests: { cpu: 200m, memory: 200Mi }
  - name: c2
    image: nginx
eof

Aside: creating resources

# pod

k run po -h

# run it directly (the container command must be passed as /bin/sh -c '...')

k run po --labels="aa=bb,bb=cc" --image=nginx --namespace=csdn-test --restart=Never --image-pull-policy='Always' --port=80 --expose=true --command -- /bin/sh -c 'sleep 3600'

# or print the YAML so it can be edited first

k run po --labels="aa=bb,bb=cc" --image=nginx --namespace=csdn-test --restart=Never --image-pull-policy='Always' --port=80 --expose=true --dry-run=client -oyaml

Aside: static pods

ssh master

ls /etc/kubernetes/manifests -l

-rw-------. 1 root root 2445 Dec  2 20:31 etcd.yaml

-rw------- 1 root root 3445 Dec 24 00:29 kube-apiserver.yaml

-rw-------. 1 root root 2901 Dec  2 20:31 kube-controller-manager.yaml

-rw-------. 1 root root 1487 Dec  2 20:31 kube-scheduler.yaml

The 4 files above are the static pods.

k get po -n kube-system --no-headers

# a static pod's name ends with -<hostname of the control plane node>

The path /etc/kubernetes/manifests was found as follows:

systemctl status kubelet

cd /usr/lib/systemd/system/kubelet.service.d

cat 10-kubeadm.conf

cat /var/lib/kubelet/config.yaml | grep static
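The grep above can be narrowed down to just the path. The following is a minimal sketch: `static_pod_path` is a hypothetical helper, it assumes `staticPodPath` appears as a top-level key in the kubelet config (as it does in the file grepped above), and the demo runs against a throwaway file rather than the real `/var/lib/kubelet/config.yaml`.

```shell
#!/usr/bin/env bash
# static_pod_path FILE: prints the value of the top-level staticPodPath key
# from a kubelet config file, with any double quotes removed.
static_pod_path() {
  awk -F': *' '$1 == "staticPodPath" { gsub(/"/, "", $2); print $2 }' "$1"
}

# Demo on a temporary stand-in for /var/lib/kubelet/config.yaml.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
staticPodPath: /etc/kubernetes/manifests
EOF

static_pod_path "$cfg"   # prints: /etc/kubernetes/manifests
rm -f "$cfg"
```

On a real node the call would be `static_pod_path /var/lib/kubelet/config.yaml`, and the result is the directory to drop manifests into.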

Create a static pod

k run sta-po --labels="aa=bb,bb=cc" --image=nginx --namespace=csdn-test --restart=Never --image-pull-policy='Always' --port=80 --dry-run=client -oyaml > /etc/kubernetes/manifests/aa.yml

k get po -l "aa in (bb), bb in (cc)" -L aa,bb

NAME READY STATUS RESTARTS AGE AA BB

sta-po-huang-aa.xx 1/1 Running 0 70s bb cc
