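1. Official upgrade documentation

Upgrading kubeadm clusters | Kubernetes

2. Version notes

For details, see: Version Skew Policy | Kubernetes

Kubernetes versions are expressed as x.y.z, where x is the major version, y is the minor version, and z is the patch version.

Version skew constraints during an upgrade: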

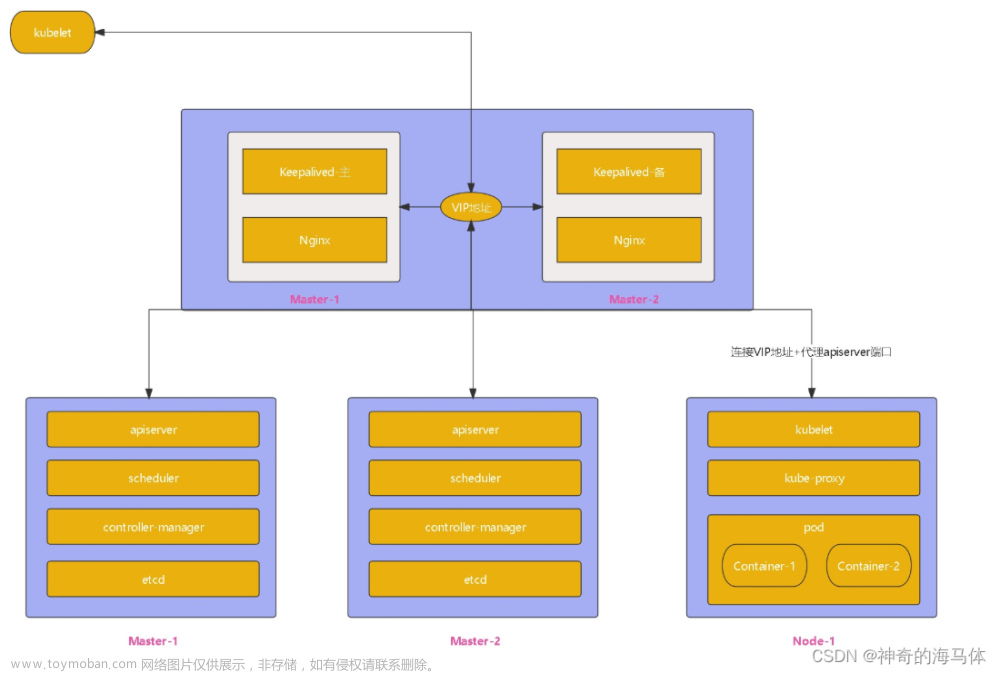

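1. The newest and oldest kube-apiserver instances may differ by at most one minor version.

2. kubelet must not be newer than kube-apiserver. It may be up to three minor versions older than kube-apiserver (a kubelet older than 1.25 may be at most two minor versions older). For example, if kube-apiserver is at 1.29, kubelet is supported at 1.29, 1.28, 1.27, and 1.26.

3. kube-proxy must not be newer than kube-apiserver. It may be up to three minor versions older than kube-apiserver (a kube-proxy older than 1.25 may be at most two minor versions older). It may also be up to three minor versions older or newer than the kubelet instance it runs alongside (for kube-proxy older than 1.25, at most two minor versions older or newer).

4. kube-controller-manager, kube-scheduler, and cloud-controller-manager must not be newer than the kube-apiserver instances they communicate with. They are expected to match the kube-apiserver minor version, but may be up to one minor version older (to allow live upgrades).

5. kubectl is supported within one minor version (older or newer) of kube-apiserver.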
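
Rule 2 can be checked mechanically before an upgrade. The following is a minimal sketch, not part of kubeadm or kubectl: the helper names minor_of and kubelet_skew_ok are hypothetical, only the kubelet rule is encoded, and the major version is assumed to be 1.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of kubeadm or kubectl) that encode rule 2.
# Assumption: both versions have major version 1, so only minors are compared.

# Print the minor version (y) of an x.y.z string; a leading "v" is allowed.
minor_of() {
  local v="${1#v}"   # strip an optional leading "v"
  v="${v#*.}"        # drop "x."
  echo "${v%%.*}"    # keep "y"
}

# Return 0 if a kubelet at version $1 is within the supported skew of a
# kube-apiserver at version $2, and 1 otherwise.
kubelet_skew_ok() {
  local kubelet_minor apiserver_minor max_skew diff
  kubelet_minor="$(minor_of "$1")"
  apiserver_minor="$(minor_of "$2")"
  # A kubelet older than 1.25 may be two minor versions older; otherwise three.
  if [ "$kubelet_minor" -lt 25 ]; then max_skew=2; else max_skew=3; fi
  diff=$((apiserver_minor - kubelet_minor))
  # diff < 0 would mean the kubelet is newer than kube-apiserver.
  [ "$diff" -ge 0 ] && [ "$diff" -le "$max_skew" ]
}

# Example from the text: with kube-apiserver at 1.29, kubelet 1.26 is allowed.
if kubelet_skew_ok 1.26.0 1.29.0; then
  echo "kubelet 1.26 with kube-apiserver 1.29: supported"
fi
```

The same pattern could be extended to the other rules by changing the allowed skew.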

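3. Overall upgrade procedure

3.1 Upgrade the master nodes first, then the worker nodes

3.1.1 Upgrade steps for each component

1) Upgrade kubeadm

At the time of writing, the Aliyun and Tsinghua apt mirrors only offer kubeadm up to 1.28.2, because they sync the legacy apt.kubernetes.io repository. You need to switch to the new community-owned package repository (pkgs.k8s.io). For how to change the source, see: Changing The Kubernetes Package Repository | Kubernetes

If you are using the Aliyun or Tsinghua mirror, run:

apt-cache madison kubeadm

The output shows that the highest kubeadm version available is 1.28.2.

Switch to the pkgs.k8s.io repository.

Download the public signing key for the Kubernetes repositories. All repositories use the same signing key, so you can ignore the version in the URL: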

curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.28/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

Add the apt repository definition:

echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.28/deb/ /" | sudo tee /etc/apt/sources.list.d/kubernetes.list
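
Unlike the signing key, the repository URL does embed the minor version, so this line has to be rewritten when moving to a later minor release. A minimal sketch, assuming a hypothetical helper named k8s_repo_line (not a Kubernetes tool) that only prints the definition and writes nothing:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the apt source line for a given Kubernetes minor
# version (for example "v1.28"). It only prints; it does not touch /etc/apt.
k8s_repo_line() {
  local minor="$1"
  local keyring="/etc/apt/keyrings/kubernetes-apt-keyring.gpg"
  echo "deb [signed-by=${keyring}] https://pkgs.k8s.io/core:/stable:/${minor}/deb/ /"
}

k8s_repo_line v1.28
```

To apply it, the output can be piped into the same tee command used above: k8s_repo_line v1.28 | sudo tee /etc/apt/sources.list.d/kubernetes.list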

Run update, then check which kubeadm versions are available:

apt-get update

apt-cache madison kubeadm
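
Picking the newest patch release out of the madison listing by eye is error-prone once many versions are published. The sketch below assumes a hypothetical helper named latest_patch that reads the listing on stdin; the sample input lines are illustrative, not captured from a real host.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `apt-cache madison <package>` output on stdin and
# print the highest package version within the given minor release ("1.28").
latest_patch() {
  local minor="$1"
  # Field 2 of each madison line is the package version, e.g. "1.28.4-1.1".
  awk -F'|' -v prefix="${minor}." '
    { gsub(/[ \t]/, "", $2) }
    index($2, prefix) == 1 { print $2 }
  ' | sort -V | tail -n 1
}

# Illustrative input only. On a real host:
#   apt-cache madison kubeadm | latest_patch 1.28
printf '%s\n' \
  ' kubeadm | 1.28.2-1.1 | https://pkgs.k8s.io/core:/stable:/v1.28/deb  Packages' \
  ' kubeadm | 1.28.4-1.1 | https://pkgs.k8s.io/core:/stable:/v1.28/deb  Packages' \
  | latest_patch 1.28
```

The helper relies on sort -V (GNU coreutils), which is available on Ubuntu, so that a patch number such as 10 sorts after 4.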

First, look at the upgrade plan:

kubeadm upgrade plan

The plan shows that kubeadm is currently at 1.28.2. Upgrade kubeadm itself to 1.28.4 first, then upgrade the cluster.

# Replace x in 1.28.x-* with the latest patch version

apt-mark unhold kubeadm && \

apt-get update && apt-get install -y kubeadm='1.28.4-1.1' && \

apt-mark hold kubeadm

2) Upgrade the master node

root@k8s-master:/etc/apt/keyrings# kubeadm upgrade apply v1.28.4

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade/version] You have chosen to change the cluster version to "v1.28.4"

[upgrade/versions] Cluster version: v1.28.2

[upgrade/versions] kubeadm version: v1.28.4

[upgrade] Are you sure you want to proceed? [y/N]: y

[upgrade/prepull] Pulling images required for setting up a Kubernetes cluster

[upgrade/prepull] This might take a minute or two, depending on the speed of your internet connection

[upgrade/prepull] You can also perform this action in beforehand using 'kubeadm config images pull'

[upgrade/apply] Upgrading your Static Pod-hosted control plane to version "v1.28.4" (timeout: 5m0s)...

[upgrade/etcd] Upgrading to TLS for etcd

[upgrade/staticpods] Preparing for "etcd" upgrade

[upgrade/staticpods] Current and new manifests of etcd are equal, skipping upgrade

[upgrade/etcd] Waiting for etcd to become available

[upgrade/staticpods] Writing new Static Pod manifests to "/etc/kubernetes/tmp/kubeadm-upgraded-manifests132025818"

[upgrade/staticpods] Preparing for "kube-apiserver" upgrade

[upgrade/staticpods] Renewing apiserver certificate

[upgrade/staticpods] Renewing apiserver-kubelet-client certificate

[upgrade/staticpods] Renewing front-proxy-client certificate

[upgrade/staticpods] Renewing apiserver-etcd-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-apiserver.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2023-12-18-17-38-58/kube-apiserver.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

[apiclient] Found 1 Pods for label selector component=kube-apiserver

[upgrade/staticpods] Component "kube-apiserver" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-controller-manager" upgrade

[upgrade/staticpods] Renewing controller-manager.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-controller-manager.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2023-12-18-17-38-58/kube-controller-manager.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

[apiclient] Found 1 Pods for label selector component=kube-controller-manager

[upgrade/staticpods] Component "kube-controller-manager" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-scheduler" upgrade

[upgrade/staticpods] Renewing scheduler.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-scheduler.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2023-12-18-17-38-58/kube-scheduler.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

[apiclient] Found 1 Pods for label selector component=kube-scheduler

[upgrade/staticpods] Component "kube-scheduler" upgraded successfully!

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upgrade] Backing up kubelet config file to /etc/kubernetes/tmp/kubeadm-kubelet-config3792099237/config.yaml

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

[upgrade/successful] SUCCESS! Your cluster was upgraded to "v1.28.4". Enjoy!

[upgrade/kubelet] Now that your control plane is upgraded, please proceed with upgrading your kubelets if you haven't already done so.

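kubeadm upgrade apply does the following:

Checks that your cluster is in an upgradeable state: the API server is reachable, all nodes are in the Ready state, and the control plane is healthy.

Enforces the version skew policies.

Makes sure the control plane images are available or can be pulled to the machine.

Generates replacements and/or uses user-supplied overrides if component configs require a version upgrade.

Upgrades the control plane components, or rolls back if any of them fails to come up.

Applies the new CoreDNS and kube-proxy manifests and makes sure that all necessary RBAC rules are created.

Creates new certificate and key files for the API server and backs up the old files if they are due to expire within 180 days.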
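
kubeadm runs these checks itself, but the node readiness condition can also be verified by hand before starting. A minimal sketch, assuming a hypothetical helper named all_nodes_ready that parses the output of kubectl get nodes --no-headers; the sample line is illustrative.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes --no-headers` output on stdin
# and succeed only if every node's STATUS column starts with "Ready".
# A cordoned node reports "Ready,SchedulingDisabled" and still passes.
all_nodes_ready() {
  awk '
    $2 !~ /^Ready/ { bad = 1; print "not ready: " $1 }
    END { exit bad + 0 }
  '
}

# Illustrative input only. On a real cluster:
#   kubectl get nodes --no-headers | all_nodes_ready
printf '%s\n' 'k8s-master   Ready   control-plane   30d   v1.28.2' \
  | all_nodes_ready && echo "all nodes Ready"
```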

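The [upgrade/successful] SUCCESS! line in the kubeadm upgrade apply output indicates that the upgrade succeeded.

Note: kubeadm upgrade also automatically renews the certificates that kubeadm manages on this node. To skip certificate renewal, use the flag --certificate-renewal=false.

Check the certificate expiration dates: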

kubeadm certs check-expiration

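Note:

For versions earlier than v1.28, kubeadm defaulted to a mode that upgrades the addons (including CoreDNS and kube-proxy) immediately during kubeadm upgrade apply, regardless of whether other master node instances had not yet been upgraded. This may cause compatibility problems. Since v1.28, kubeadm defaults to a mode that checks whether all master node instances have been upgraded before it starts to upgrade the addons. You must upgrade the master nodes sequentially, or at least ensure that the upgrade of the last master node instance is not started until all other master node instances have been fully upgraded; the addons are upgraded after the last master node instance is upgraded. If you want to keep the old upgrade behavior, enable the UpgradeAddonsBeforeControlPlane feature gate with kubeadm upgrade apply --feature-gates=UpgradeAddonsBeforeControlPlane=true. The Kubernetes project does not in general recommend enabling this feature gate; you should instead change your upgrade process or cluster addons so that you do not need the legacy behavior. The UpgradeAddonsBeforeControlPlane feature gate will be removed in a future release.

3) Upgrade the CNI plugin

Check whether your network plugin is compatible with the current Kubernetes version; if it is not, upgrade it.

For plugin details, see the official documentation:

Installing Addons | Kubernetes

If the CNI provider runs as a DaemonSet, this step is not required on the other control plane nodes.

For example, this cluster uses Calico 3.26.3.

Check the Calico documentation: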

About Calico | Calico Documentation

The Calico documentation lists the Kubernetes versions that this release supports.

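4) Upgrade the other master nodes and the worker nodes

Use the command:

sudo kubeadm upgrade node

kubeadm upgrade node does the following on the other master nodes:

Fetches the kubeadm ClusterConfiguration from the cluster.

Optionally backs up the kube-apiserver certificate.

Upgrades the static Pod manifests for the master node components.

Upgrades the kubelet configuration for this node.

kubeadm upgrade node does the following on worker nodes:

Fetches the kubeadm ClusterConfiguration from the cluster.

Upgrades the kubelet configuration for this node.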
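
The split between kubeadm upgrade apply on the first master node and kubeadm upgrade node everywhere else can be written down as a checklist. The sketch below assumes a hypothetical helper named plan_node_upgrades; it executes nothing, and the node names other than k8s-master are invented for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical planner: print which kubeadm command to run on each node.
# Arguments: target version, the first master node, then every other node
# (remaining master nodes first, worker nodes last). Nothing is executed.
plan_node_upgrades() {
  local version="$1"; shift
  local first="$1"; shift
  echo "${first}: kubeadm upgrade apply ${version}"
  local node
  for node in "$@"; do
    echo "${node}: sudo kubeadm upgrade node"
  done
}

# k8s-master2 and k8s-worker1 are made-up names for illustration.
plan_node_upgrades v1.28.4 k8s-master k8s-master2 k8s-worker1
```

Listing the remaining master nodes before the worker nodes keeps the order described in section 3.1.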

5) Upgrade the worker nodes

# First evict the pods from the node (this also cordons the node, marking it unschedulable)

kubectl drain k8s-master --ignore-daemonsets

---

root@k8s-master:/etc/apt/keyrings# kubectl drain k8s-master --ignore-daemonsets

node/k8s-master cordoned

Warning: ignoring DaemonSet-managed Pods: calico-system/calico-node-2fcr6, calico-system/csi-node-driver-s4zvc, ingress-nginx/ingress-nginx-controller-6w5d7, kube-system/kube-proxy-x6pv5

evicting pod tigera-operator/tigera-operator-597bf4ddf6-gjthp

evicting pod default/curl-b747fd9ff-mvdtp

evicting pod calico-apiserver/calico-apiserver-7ff86ffc-b65hp

evicting pod calico-apiserver/calico-apiserver-7ff86ffc-sk6kz

evicting pod calico-system/calico-kube-controllers-6d5984f57f-rfw74

evicting pod calico-system/calico-typha-7d7d7c7d67-zmx2p

evicting pod ingress-nginx/ingress-nginx-admission-patch-xl8l2

evicting pod default/nginx-statefulset-0

evicting pod ingress-nginx/ingress-nginx-admission-create-789zw

evicting pod kube-system/coredns-66f779496c-459f4

evicting pod kube-system/coredns-66f779496c-s5blf

pod/ingress-nginx-admission-patch-xl8l2 evicted

pod/ingress-nginx-admission-create-789zw evicted

pod/tigera-operator-597bf4ddf6-gjthp evicted

I1218 17:47:25.344453 2767592 request.go:697] Waited for 1.070127575s due to client-side throttling, not priority and fairness, request: GET:https://k8s-master:6443/api/v1/namespaces/calico-apiserver/pods/calico-apiserver-7ff86ffc-b65hp

pod/nginx-statefulset-0 evicted

pod/calico-apiserver-7ff86ffc-sk6kz evicted

pod/calico-apiserver-7ff86ffc-b65hp evicted

pod/calico-kube-controllers-6d5984f57f-rfw74 evicted

pod/calico-typha-7d7d7c7d67-zmx2p evicted

pod/coredns-66f779496c-s5blf evicted

pod/coredns-66f779496c-459f4 evicted

pod/curl-b747fd9ff-mvdtp evicted

node/k8s-master drained

Now upgrade kubelet and kubectl (required on both master and worker nodes)

# Remove the version hold

root@k8s-master:~# apt-mark unhold kubelet kubectl

kubelet was already not on hold.

kubectl was already not on hold.

# Install 1.28.4-1.1

root@k8s-master:~# apt-get update && apt-get install -y kubelet='1.28.4-1.1' kubectl='1.28.4-1.1'

Hit:1 http://mirrors.aliyun.com/ubuntu jammy InRelease

Hit:2 http://mirrors.aliyun.com/ubuntu jammy-security InRelease

Hit:3 http://mirrors.aliyun.com/ubuntu jammy-updates InRelease

Hit:4 http://mirrors.aliyun.com/ubuntu jammy-proposed InRelease

Hit:5 http://mirrors.aliyun.com/ubuntu jammy-backports InRelease

Hit:6 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.28/deb InRelease

Reading package lists... Done

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following packages will be upgraded:

kubectl kubelet

2 upgraded, 0 newly installed, 0 to remove and 193 not upgraded.

Need to get 29.8 MB of archives.

After this operation, 205 kB of additional disk space will be used.

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.28/deb kubectl 1.28.4-1.1 [10.3 MB]

Get:2 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.28/deb kubelet 1.28.4-1.1 [19.5 MB]

Fetched 29.8 MB in 5s (5,638 kB/s)

(Reading database ... 89165 files and directories currently installed.)

Preparing to unpack .../kubectl_1.28.4-1.1_amd64.deb ...

Unpacking kubectl (1.28.4-1.1) over (1.28.2-00) ...

Preparing to unpack .../kubelet_1.28.4-1.1_amd64.deb ...

Unpacking kubelet (1.28.4-1.1) over (1.28.2-00) ...

Setting up kubectl (1.28.4-1.1) ...

Setting up kubelet (1.28.4-1.1) ...

Scanning processes...

Scanning linux images...

Running kernel seems to be up-to-date.

No services need to be restarted.

No containers need to be restarted.

No user sessions are running outdated binaries.

No VM guests are running outdated hypervisor (qemu) binaries on this host.

# Upgrade finished; put the packages back on hold

root@k8s-master:~# apt-mark hold kubelet kubectl

kubelet set on hold.

kubectl set on hold.

# Restart kubelet

root@k8s-master:~# systemctl daemon-reload

root@k8s-master:~# systemctl restart kubelet

# Uncordon the node so that pods can be scheduled on it again

root@k8s-master:~# kubectl uncordon k8s-master

node/k8s-master uncordoned
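
The per-node sequence above (drain, upgrade the packages, restart kubelet, uncordon) can be collected into one script. This is a minimal sketch under stated assumptions: the helper names run and upgrade_node are hypothetical, the script is run as root on the node being upgraded with kubectl access (as in the transcript above), and it defaults to a dry run that only prints the commands.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the commands shown above. With DRY_RUN=1 (the
# default here) each command is printed instead of executed.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# upgrade_node NODE PKG_VERSION, e.g. upgrade_node k8s-master 1.28.4-1.1
# The && chain stops at the first failing step, leaving the node cordoned.
upgrade_node() {
  local node="$1" pkg="$2"
  run kubectl drain "$node" --ignore-daemonsets &&
  run apt-mark unhold kubelet kubectl &&
  run apt-get update &&
  run apt-get install -y "kubelet=${pkg}" "kubectl=${pkg}" &&
  run apt-mark hold kubelet kubectl &&
  run systemctl daemon-reload &&
  run systemctl restart kubelet &&
  run kubectl uncordon "$node"
}

upgrade_node k8s-master 1.28.4-1.1
```

Set DRY_RUN=0 only after reviewing the printed commands; a failed step is easier to diagnose while the node is still drained.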

The upgrade is complete.

Article source: https://www.toymoban.com/news/detail-781706.html
