The IK Analyzer Plugin

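Elasticsearch 7.3 can already tokenize Chinese text out of the box, but the built-in support only splits it into individual characters, which is inflexible and does not match how people actually search. A number of dedicated Chinese analyzers have appeared to fill the gap. I have been using the IK analyzer lately, so that is the one covered here.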

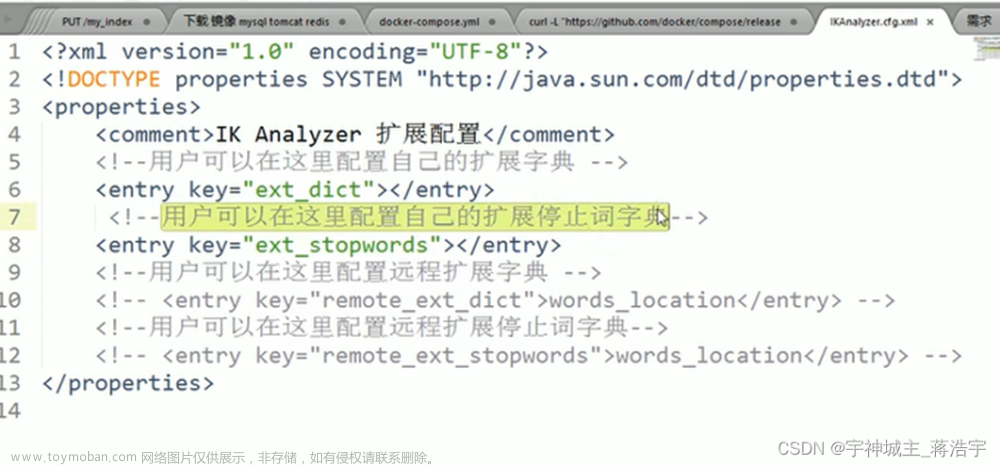

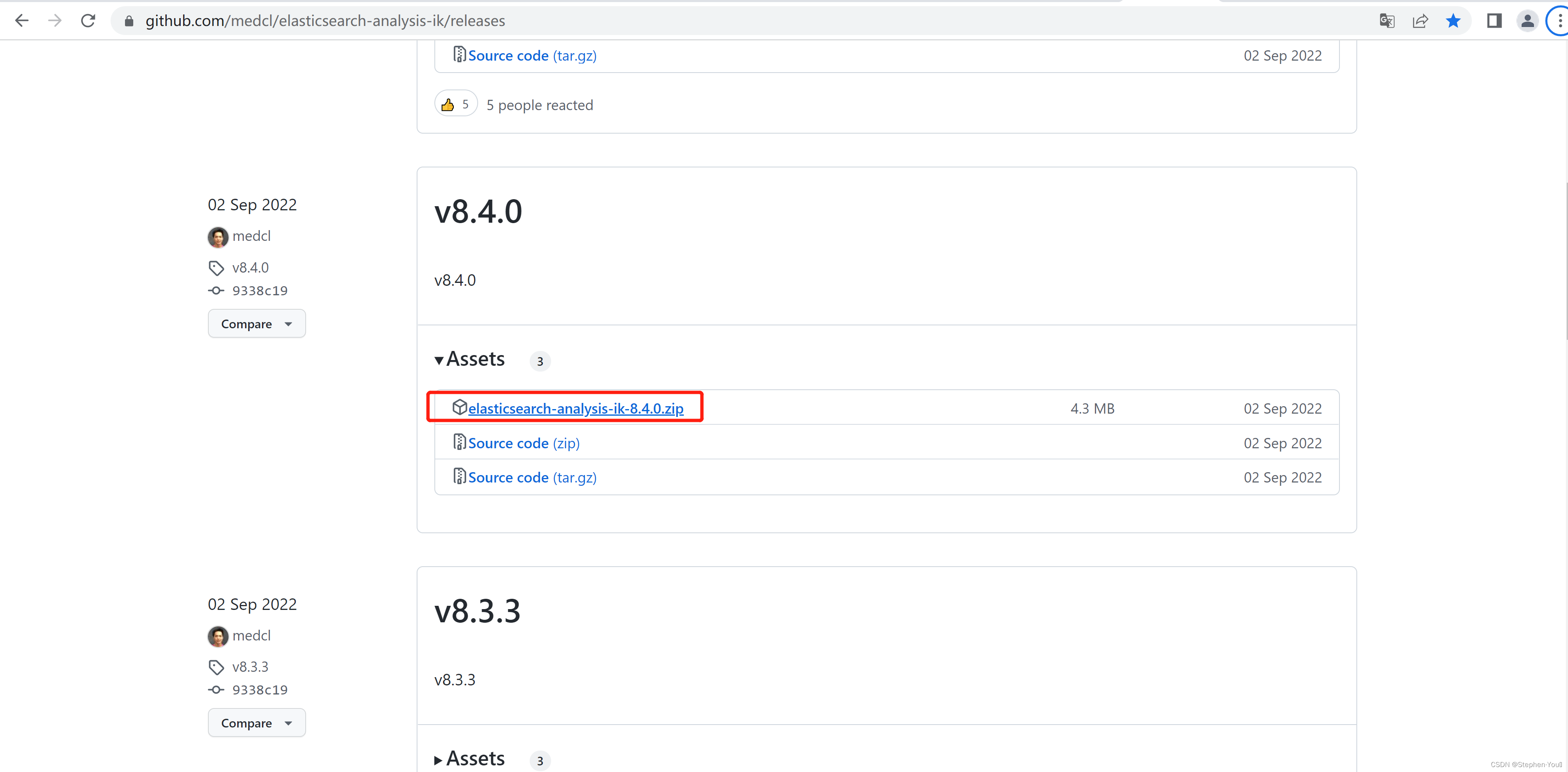

Installing the IK analyzer

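Installation tutorials are easy to find with a web search (Baidu has plenty). The one thing to watch is that the IK analyzer version must match your Elasticsearch version, otherwise you risk avoidable compatibility problems.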
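One way to confirm the match after installing is to compare the version Elasticsearch reports with the version of the installed plugin. The sketch below assumes you have already fetched the JSON responses of `GET /` and `GET /_cat/plugins?format=json`. The sample payloads are illustrative stand-ins rather than output from a real cluster, the function name `ik_matches_es` is hypothetical, and the component name `analysis-ik` is an assumption about how the plugin registers itself.

```python
def ik_matches_es(root_info, plugins, component="analysis-ik"):
    """Return True if the named plugin is installed with the same version as Elasticsearch."""
    es_version = root_info["version"]["number"]
    versions = [p["version"] for p in plugins if p["component"] == component]
    # The plugin may be listed once per node; every copy has to match.
    return bool(versions) and all(v == es_version for v in versions)


# Illustrative payloads shaped like the two API responses (not real cluster output).
root_info = {"version": {"number": "7.3.0"}}
plugins = [{"name": "node-1", "component": "analysis-ik", "version": "7.3.0"}]

print(ik_matches_es(root_info, plugins))
print(ik_matches_es({"version": {"number": "7.4.0"}}, plugins))
```

The function returns False both when the versions differ and when the plugin is not listed at all, since either case means the analyzer cannot be relied on.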

IK analysis modes

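ik_max_word: splits the text at the finest granularity, emitting every word it can find, down to single characters. The request below targets the test_analysis index, which is created in the test section further down; GET /_analyze with the same body also works without an index.

GET /test_analysis/_analyze
{
  "text": "是否分词",
  "analyzer": "ik_max_word"
}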

Result

{
  "tokens" : [
    {
      "token" : "是否",
      "start_offset" : 0,
      "end_offset" : 2,
      "type" : "CN_WORD",
      "position" : 0
    },
    {
      "token" : "是",
      "start_offset" : 0,
      "end_offset" : 1,
      "type" : "CN_WORD",
      "position" : 1
    },
    {
      "token" : "否",
      "start_offset" : 1,
      "end_offset" : 2,
      "type" : "CN_WORD",
      "position" : 2
    },
    {
      "token" : "分词",
      "start_offset" : 2,
      "end_offset" : 4,
      "type" : "CN_WORD",
      "position" : 3
    },
    {
      "token" : "分",
      "start_offset" : 2,
      "end_offset" : 3,
      "type" : "CN_WORD",
      "position" : 4
    },
    {
      "token" : "词",
      "start_offset" : 3,
      "end_offset" : 4,
      "type" : "CN_WORD",
      "position" : 5
    }
  ]
}
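To read the offsets in this result: start_offset and end_offset mark the half-open character range of the input that each token covers. The short check below copies the six tokens from the result above and verifies that slicing the input text with those offsets gives back the token. It is only a reading aid; for these characters Python string indices line up with the offsets Elasticsearch reports.

```python
text = "是否分词"

# (token, start_offset, end_offset) triples copied from the ik_max_word result above.
tokens = [
    ("是否", 0, 2), ("是", 0, 1), ("否", 1, 2),
    ("分词", 2, 4), ("分", 2, 3), ("词", 3, 4),
]

for token, start, end in tokens:
    # Each token should be exactly the slice of the input that its offsets describe.
    assert text[start:end] == token
    print(f"{token}\t[{start}, {end})")
```

Note that the tokens overlap: 是否 spans the same range as 是 and 否 together, which is what "finest granularity" means in practice.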

ik_smart: splits the text at the coarsest granularity, keeping only the longest words.

GET /test_analysis/_analyze
{
  "text": "是否分词",
  "analyzer": "ik_smart"
}

Result

{
  "tokens" : [
    {
      "token" : "是否",
      "start_offset" : 0,
      "end_offset" : 2,
      "type" : "CN_WORD",
      "position" : 0
    },
    {
      "token" : "分词",
      "start_offset" : 2,
      "end_offset" : 4,
      "type" : "CN_WORD",
      "position" : 1
    }
  ]
}
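The difference between the two modes can be imitated with a toy dictionary. The sketch below is not IK's actual algorithm: the fine-grained function simply emits every dictionary word found at each start offset, and the coarse function uses plain forward maximum matching, whereas IK applies its own dictionary and disambiguation logic. The dictionary `TOY_DICT` and both function names are made up for illustration.

```python
# Toy dictionary (illustrative only; IK ships a far larger one).
TOY_DICT = {"是否", "是", "否", "分词", "分", "词"}


def segment_fine(text, words=TOY_DICT):
    """Emit every dictionary word starting at each offset, longest first."""
    tokens = []
    covered_until = 0  # exclusive end of the furthest token emitted so far
    for start in range(len(text)):
        matches = [text[start:end]
                   for end in range(len(text), start, -1)
                   if text[start:end] in words]
        # Unknown character not covered by an earlier word: keep it as a token.
        if not matches and start >= covered_until:
            matches = [text[start]]
        tokens.extend(matches)
        if matches:
            covered_until = max(covered_until, start + len(matches[0]))
    return tokens


def segment_coarse(text, words=TOY_DICT):
    """Forward maximum matching: take the longest dictionary word, then move past it."""
    tokens = []
    start = 0
    while start < len(text):
        for end in range(len(text), start, -1):
            # A single character is always accepted, so the loop always advances.
            if text[start:end] in words or end == start + 1:
                tokens.append(text[start:end])
                start = end
                break
    return tokens


print(segment_fine("是否分词"))
print(segment_coarse("是否分词"))
```

With this dictionary the two functions produce the same token lists as the ik_max_word and ik_smart results above, which makes the trade-off visible: the fine mode yields more terms to match on, the coarse mode yields fewer and longer ones.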

In practice, ik_max_word is the mode generally used.

Testing IK analysis in Elasticsearch

Create the index

PUT /test_analysis
{
  "mappings": {
    "properties": {
      "message": {
        "type": "text",
        "analyzer": "ik_max_word"
      },
      "id": {
        "type": "keyword"
      }
    }
  }
}

Add data

POST /test_analysis/_bulk
{"index":{}}
{"id":"111", "message":"我是一个小可爱"}
{"index":{}}
{"id":"222", "message":"只是为了测试一下结果是否分词"}
{"index":{}}
{"id":"333", "message":"测试一下是否进行了ik分词"}
{"index":{}}
{"id":"444", "message":"搞一些假的数据吧"}
{"index":{}}
{"id":"555", "message":"实在不知道再写一些什么了"}
{"index":{}}
{"id":"666", "message":"就这样吧"}
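The bulk body is newline-delimited JSON: each document is preceded by an action line, every JSON object sits on a single line, and the body ends with a newline. If you build the payload in code rather than typing it into a console, a helper along these lines can generate it. This is a minimal sketch; the function name `build_bulk_body` is hypothetical and only two of the documents are included to keep it short.

```python
import json


def build_bulk_body(docs):
    """Serialize docs as NDJSON: an action line, then the document, one object per line."""
    lines = []
    for doc in docs:
        # Empty index action: Elasticsearch generates the document _id.
        lines.append(json.dumps({"index": {}}))
        lines.append(json.dumps(doc, ensure_ascii=False))
    # The bulk API expects the body to end with a newline.
    return "\n".join(lines) + "\n"


docs = [
    {"id": "111", "message": "我是一个小可爱"},
    {"id": "222", "message": "只是为了测试一下结果是否分词"},
]
body = build_bulk_body(docs)
print(body, end="")
```

Because the action lines carry no `_id`, the IDs in the search results are auto-generated strings, while the `id` field inside each document is ordinary data.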

Article source: https://www.toymoban.com/news/detail-782721.html

Query

GET /test_analysis/_search
{
  "query": {
    "bool": {
      "must": [
        {
          "match": {
            "message": "是否分词"
          }
        }
      ]
    }
  }
}

Query results

{
  "took" : 1,
  "timed_out" : false,
  "_shards" : {
    "total" : 1,
    "successful" : 1,
    "skipped" : 0,
    "failed" : 0
  },
  "hits" : {
    "total" : {
      "value" : 3,
      "relation" : "eq"
    },
    "max_score" : 5.104265,
    "hits" : [
      {
        "_index" : "test_analysis",
        "_type" : "_doc",
        "_id" : "MDXEe4wBS_Neyb68FBdy",
        "_score" : 5.104265,
        "_source" : {
          "id" : "333",
          "message" : "测试一下是否进行了ik分词"
        }
      },
      {
        "_index" : "test_analysis",
        "_type" : "_doc",
        "_id" : "LzXEe4wBS_Neyb68FBdy",
        "_score" : 5.0611815,
        "_source" : {
          "id" : "222",
          "message" : "只是为了测试一下结果是否分词"
        }
      },
      {
        "_index" : "test_analysis",
        "_type" : "_doc",
        "_id" : "LjXEe4wBS_Neyb68FBdy",
        "_score" : 0.728194,
        "_source" : {
          "id" : "111",
          "message" : "我是一个小可爱"
        }
      }
    ]
  }
}
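The third hit may look surprising, since 我是一个小可爱 contains neither 是否 nor 分词. A match query analyzes the query string with the field's analyzer and, by default, combines the resulting terms with OR, so a document matches if it shares any single term. The sketch below illustrates that rule with a crude stand-in: it uses the six terms from the ik_max_word output earlier and a plain substring test instead of Elasticsearch's indexed tokens, so it shows the OR logic only, not the real matching or scoring.

```python
# Terms the query "是否分词" is split into, per the ik_max_word output shown earlier.
query_terms = ["是否", "是", "否", "分词", "分", "词"]

docs = {
    "111": "我是一个小可爱",
    "222": "只是为了测试一下结果是否分词",
    "333": "测试一下是否进行了ik分词",
    "444": "搞一些假的数据吧",
    "555": "实在不知道再写一些什么了",
    "666": "就这样吧",
}


def matched_terms(message):
    # Crude stand-in for term matching: substring test instead of indexed tokens.
    return [term for term in query_terms if term in message]


# OR semantics: a document is a hit as soon as it shares one term with the query.
hits = {doc_id: matched_terms(msg) for doc_id, msg in docs.items() if matched_terms(msg)}
for doc_id, terms in hits.items():
    print(doc_id, terms)
```

Under this approximation documents 111, 222 and 333 are the hits, the same three as in the real response, and 111 shares only 是 with the query, which is consistent with its much lower score.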
