Preface

This article is a survey of GANs in the field of computer vision.

Main Text

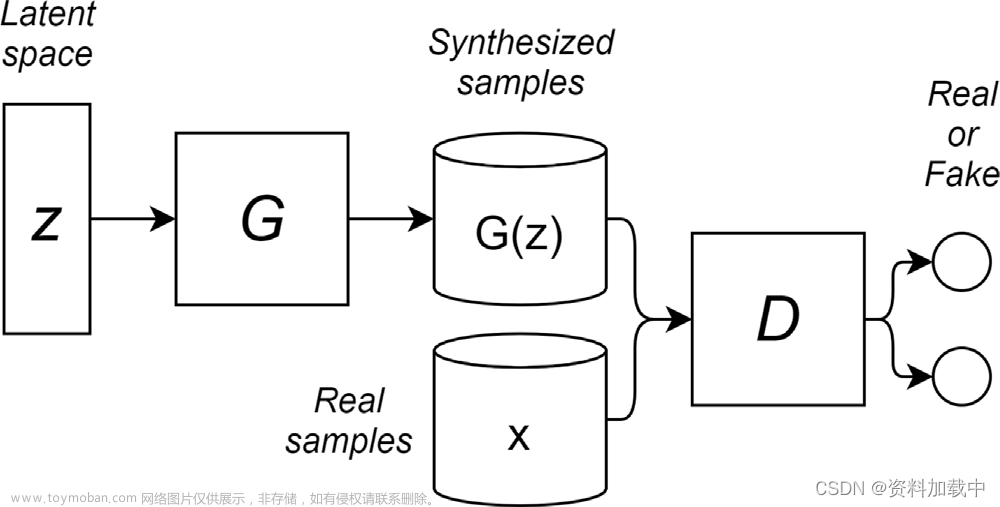

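A generative adversarial network (GAN) is a generative model grounded in game theory, in which neural networks are used to model a data distribution. Its application areas include language generation, image generation, image-to-image translation, image generation from text descriptions, and video generation. A GAN can replicate a data distribution and generate synthetic data, applying some variance so that the generated samples are new and previously unseen.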
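The game-theoretic framing can be written down using the value function from the original GAN formulation [1]. The notation is an assumption introduced here rather than taken from the text above: $G$ is the generator, $D$ the discriminator, $p_{\text{data}}$ the real data distribution, and $p_z$ the noise prior fed to the generator.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to maximize $V$ by scoring real samples high and generated samples low, while the generator tries to minimize it by producing samples the discriminator accepts as real.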

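Figure 1 shows how the GAN architecture is composed. Because of the complexity of this architecture, GANs suffer from instability during training [16–18]. This instability leads to problems such as mode collapse, which has therefore been studied in [19–23]. As defined in [24], mode collapse occurs when a GAN generates outputs of the same class for different inputs.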
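To make the definition of mode collapse concrete, here is a minimal sketch on a toy problem. It is not a method from the surveyed papers: the eight-mode ring of 2-D points, the two stand-in "generators", and all function names are hypothetical and chosen only for illustration. No network is trained; the stand-ins simply mimic a healthy sampler and a collapsed one, and the code counts how many modes each one reaches.

```python
import math
import random


def ring_centers(n_modes=8, radius=2.0):
    """Centers of a toy 2-D mixture: n_modes points evenly spaced on a circle."""
    return [
        (radius * math.cos(2 * math.pi * k / n_modes),
         radius * math.sin(2 * math.pi * k / n_modes))
        for k in range(n_modes)
    ]


def mode_histogram(samples, centers):
    """Count how many samples fall closest to each mode center."""
    counts = [0] * len(centers)
    for x, y in samples:
        nearest = min(
            range(len(centers)),
            key=lambda k: (x - centers[k][0]) ** 2 + (y - centers[k][1]) ** 2,
        )
        counts[nearest] += 1
    return counts


def covered_modes(samples, centers, min_fraction=0.01):
    """Number of modes that receive at least min_fraction of all samples."""
    counts = mode_histogram(samples, centers)
    threshold = min_fraction * len(samples)
    return sum(1 for c in counts if c >= threshold)


def healthy_generator(rng, centers, noise=0.05):
    """Hypothetical stand-in: different random inputs reach different modes."""
    cx, cy = rng.choice(centers)
    return (cx + rng.gauss(0, noise), cy + rng.gauss(0, noise))


def collapsed_generator(rng, centers, noise=0.05):
    """Hypothetical stand-in: every random input lands on the same mode."""
    cx, cy = centers[0]
    return (cx + rng.gauss(0, noise), cy + rng.gauss(0, noise))


rng = random.Random(0)
centers = ring_centers()
healthy = [healthy_generator(rng, centers) for _ in range(2000)]
collapsed = [collapsed_generator(rng, centers) for _ in range(2000)]

print("modes covered (healthy):  ", covered_modes(healthy, centers))
print("modes covered (collapsed):", covered_modes(collapsed, centers))
```

In this toy setup the collapsed sampler reaches only one of the eight modes even though its inputs differ, which is the behaviour the definition describes. For image data, a comparable check would need a pretrained classifier to assign generated samples to classes, which is outside the scope of this sketch.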

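Surveys of GANs usually focus either on model architectures [16,27] or on their application to particular tasks [28,29]. This article focuses mainly on the model architectures themselves. Surveys such as [34] concentrate on analyzing state-of-the-art general-purpose networks and go on to compare the performance of the various networks. They also offer a set of recommendations on which loss function best suits each use case. The survey in [35] looks at how different GAN architectures have been applied to different problems over the past few years, while [28] presents the different architectures used in computer vision and their applications.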

Overview of the survey

Timeline of GAN model architectures

Timeline of GAN loss functions

Timeline of GANs

Article source: https://www.toymoban.com/news/detail-797517.html


References

[1] I.J. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, Y. Bengio, Generative adversarial networks, 2014.
[2] J. Cheng, Y. Yang, X. Tang, N. Xiong, Y. Zhang, F. Lei, Generative adversarial networks: A literature review, KSII Trans. Internet Inf. Syst. 14 (12) (2020).
[3] T. Karras, T. Aila, S. Laine, J. Lehtinen, Progressive growing of GANs for improved quality, stability, and variation, 2018.
[4] I. Gulrajani, F. Ahmed, M. Arjovsky, V. Dumoulin, A. Courville, Improved training of wasserstein GANs, in: Proceedings of the 31st International Conference on Neural Information Processing Systems, NIPS ’17, Curran Associates Inc., Red Hook, NY, USA, 2017, pp. 5769–5779.
[5] J. Xu, X. Ren, J. Lin, X. Sun, Diversity-promoting GAN: A cross-entropy based generative adversarial network for diversified text generation, in: Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Association for Computational Linguistics, Brussels, Belgium, 2018, pp. 3940–3949.
[6] T. Karras, S. Laine, T. Aila, A style-based generator architecture for generative adversarial networks, 2019.
[7] J.-Y. Zhu, T. Park, P. Isola, A.A. Efros, Unpaired image-to-image translation using cycle-consistent adversarial networks, in: 2017 IEEE International Conference on Computer Vision, ICCV, 2017, pp. 2242–2251.
[8] P. Isola, J.-Y. Zhu, T. Zhou, A.A. Efros, Image-to-image translation with conditional adversarial networks, 2018.
[9] M. Zhu, P. Pan, W. Chen, Y. Yang, DM-GAN: Dynamic memory generative adversarial networks for text-to-image synthesis, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR, 2019.
[10] Y. Li, M. Min, D. Shen, D. Carlson, L. Carin, Video generation from text, in: Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 32, 2018, p. 1.
[11] S.W. Kim, Y. Zhou, J. Philion, A. Torralba, S. Fidler, Learning to simulate dynamic environments with gamegan, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 1231–1240.
[12] D.H. Ackley, G.E. Hinton, T.J. Sejnowski, A learning algorithm for Boltzmann machines, Cogn. Sci. 9 (1) (1985) 147–169.
[13] D. Bank, N. Koenigstein, R. Giryes, Autoencoders, 2021.
[14] A. van den Oord, N. Kalchbrenner, Pixel RNN, in: ICML, 2016.
[15] Y. Sun, L. Xu, L. Guo, Y. Li, Y. Wang, A comparison study of VAE and GAN for software fault prediction, in: S. Wen, A. Zomaya, L.T. Yang (Eds.), Algorithms and Architectures for Parallel Processing, Springer International Publishing, Cham, 2020, pp. 82–96.
[16] M. Wiatrak, S.V. Albrecht, Stabilizing generative adversarial network training: A survey, 2019, arXiv.
[17] H. Thanh-Tung, T. Tran, S. Venkatesh, Improving generalization and stability of generative adversarial networks, 2019.
[18] X. Mao, Q. Li, H. Xie, R.Y. Lau, Z. Wang, S. Paul Smolley, Least squares generative adversarial networks, in: Proceedings of the IEEE International Conference on Computer Vision, ICCV, 2017.
[19] Bhagyashree, V. Kushwaha, G.C. Nandi, Study of prevention of mode collapse in generative adversarial network (GAN), in: 2020 IEEE 4th Conference on Information Communication Technology, CICT, 2020, pp. 1–6.
[20] D. Bang, H. Shim, MGGAN: Solving mode collapse using manifold guided training, 2018.
[21] S. Adiga, M.A. Attia, W.-T. Chang, R. Tandon, On the tradeoff between mode collapse and sample quality in generative adversarial networks, in: 2018 IEEE Global Conference on Signal and Information Processing (GlobalSIP), 2018, pp. 1184–1188.
[22] D. Bau, J.-Y. Zhu, J. Wulff, W. Peebles, H. Strobelt, B. Zhou, A. Torralba, Seeing what a GAN cannot generate, in: Proceedings of the IEEE/CVF International Conference on Computer Vision, ICCV, 2019.
[23] R. Durall, A. Chatzimichailidis, P. Labus, J. Keuper, Combating mode collapse in GAN training: An empirical analysis using hessian eigenvalues, 2020.
[24] H. Thanh-Tung, T. Tran, Catastrophic forgetting and mode collapse in GANs, in: 2020 International Joint Conference on Neural Networks, IJCNN, 2020, pp. 1–10.
[25] A. Aggarwal, M. Mittal, G. Battineni, Generative adversarial network: An overview of theory and applications, Int. J. Inf. Manage. Data Insights 1 (1) (2021) 100004.
[26] M. Arjovsky, S. Chintala, L. Bottou, Wasserstein GAN, 2017.
[27] B. Ghosh, I.K. Dutta, M. Totaro, M. Bayoumi, A survey on the progression and performance of generative adversarial networks, in: 2020 11th International Conference on Computing, Communication and Networking Technologies, ICCCNT, 2020, pp. 1–8.
[28] Z. Wang, Q. She, T.E. Ward, Generative adversarial networks in computer vision: A survey and taxonomy, 2020.
[29] H. Alqahtani, M. Kavakli-Thorne, D.G. Kumar Ahuja, Applications of generative adversarial networks (GANs): An updated review, Arch. Comput. Methods Eng. 28 (2019).
[30] Z. Pan, W. Yu, X. Yi, A. Khan, F. Yuan, Y. Zheng, Recent progress on generative adversarial networks (GANs): A survey, IEEE Access 7 (2019) 36322–36333.
[31] K. Wang, C. Gou, Y. Duan, Y. Lin, X. Zheng, F.-Y. Wang, Generative adversarial networks: introduction and outlook, IEEE/CAA J. Autom. Sin. 4 (4) (2017) 588–598.
[32] V. Sampath, I. Maurtua, J.J.A. Martín, A. Gutierrez, A survey on generative adversarial networks for imbalance problems in computer vision tasks, J. Big Data 8 (1) (2021) 1–59.
[33] X. Wu, K. Xu, P. Hall, A survey of image synthesis and editing with generative adversarial networks, Tsinghua Sci. Technol. 22 (6) (2017) 660–674.
[34] Z. Pan, W. Yu, B. Wang, H. Xie, V.S. Sheng, J. Lei, S. Kwong, Loss functions of generative adversarial networks (GANs): opportunities and challenges, IEEE Trans. Emerg. Top. Comput. Intell. 4 (4) (2020) 500–522.
[35] J. Gui, Z. Sun, Y. Wen, D. Tao, J. Ye, A review on generative adversarial networks: Algorithms, theory, and applications, 2020.
[36] H. Zhang, Z. Le, Z. Shao, H. Xu, J. Ma, MFF-GAN: An unsupervised generative adversarial network with adaptive and gradient joint constraints for multi-focus image fusion, Inf. Fusion 66 (2021) 40–53.
[37] R. Liu, Y. Ge, C.L. Choi, X. Wang, H. Li, DivCo: Diverse conditional image synthesis via contrastive generative adversarial network, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR, 2021, pp. 16377–16386.
[38] D.M. De Silva, G. Poravi, A review on generative adversarial networks, in: 2021 6th International Conference for Convergence in Technology (I2CT), 2021, pp. 1–4.
[39] L. Metz, B. Poole, D. Pfau, J. Sohl-Dickstein, Unrolled generative adversarial networks, 2017.
[40] S. Suh, H. Lee, P. Lukowicz, Y.O. Lee, CEGAN: Classification enhancement generative adversarial networks for unraveling data imbalance problems, Neural Netw. 133 (2021) 69–86.
[41] J. Nash, Non-cooperative games, Ann. of Math. (1951) 286–295.
[42] F. Farnia, A. Ozdaglar, GANs may have no Nash equilibria, 2020.
[43] M. Heusel, H. Ramsauer, T. Unterthiner, B. Nessler, S. Hochreiter, Gans trained by a two time-scale update rule converge to a local nash equilibrium, Adv. Neural Inf. Process. Syst. 30 (2017).
[44] Á. González-Prieto, A. Mozo, E. Talavera, S. Gómez-Canaval, Dynamics of Fourier modes in torus generative adversarial networks, Mathematics 9 (4) (2021).
[45] T. Salimans, I. Goodfellow, W. Zaremba, V. Cheung, A. Radford, X. Chen, Improved techniques for training GANs, 2016.
[46] Z. Zhang, C. Luo, J. Yu, Towards the gradient vanishing, divergence mismatching and mode collapse of generative adversarial nets, in: Proceedings of the 28th ACM International Conference on Information and Knowledge Management, CIKM ’19, Association for Computing Machinery, New York, NY, USA, 2019, pp. 2377–2380.
[47] H.D. Meulemeester, J. Schreurs, M. Fanuel, B.D. Moor, J.A.K. Suykens, The bures metric for generative adversarial networks, 2021.
[48] W. Li, L. Fan, Z. Wang, C. Ma, X. Cui, Tackling mode collapse in multigenerator GANs with orthogonal vectors, Pattern Recognit. 110 (2021) 107646.
[49] I. Goodfellow, NIPS 2016 tutorial: Generative adversarial networks, 2017.
[50] S. Pei, R.Y. Da Xu, G. Meng, dp-GAN: Alleviating mode collapse in GAN via diversity penalty module, 2021, arXiv preprint arXiv:2108.02353.
[51] J. Su, GAN-QP: A novel GAN framework without gradient vanishing and Lipschitz constraint, 2018.
[52] Y. Zuo, G. Avraham, T. Drummond, Improved training of generative adversarial networks using decision forests, in: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, WACV, 2021, pp. 3492–3501.
[53] S. Liu, O. Bousquet, K. Chaudhuri, Approximation and convergence properties of generative adversarial learning, 2017.
[54] S.A. Barnett, Convergence problems with generative adversarial networks (GANs), 2018.
[55] A. Borji, Pros and cons of GAN evaluation measures, Comput. Vis. Image Underst. 179 (2019) 41–65.
[56] C. Szegedy, V. Vanhoucke, S. Ioffe, J. Shlens, Z. Wojna, Rethinking the inception architecture for computer vision, 2015.
[57] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, L. Fei-Fei, Imagenet: A large-scale hierarchical image database, in: 2009 IEEE Conference on Computer Vision and Pattern Recognition, IEEE, 2009, pp. 248–255.
[58] S. Nowozin, B. Cseke, R. Tomioka, f-GAN: Training generative neural samplers using variational divergence minimization, 2016.
[59] S. Gurumurthy, R.K. Sarvadevabhatla, V.B. Radhakrishnan, DeLiGAN: Generative adversarial networks for diverse and limited data, 2017.
[60] T. Karras, M. Aittala, S. Laine, E. Härkönen, J. Hellsten, J. Lehtinen, T. Aila, Alias-free generative adversarial networks, 2021, arXiv preprint arXiv:2106.12423.
[61] G. Daras, A. Odena, H. Zhang, A.G. Dimakis, Your local GAN: Designing two dimensional local attention mechanisms for generative models, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 14531–14539.
[62] Z. Wang, E. Simoncelli, A. Bovik, Multiscale structural similarity for image quality assessment, in: The Thirty-Seventh Asilomar Conference on Signals, Systems & Computers, 2003, Vol. 2, 2003, pp. 1398–1402.
[63] K. Kurach, M. Lucic, X. Zhai, M. Michalski, S. Gelly, The GAN landscape: Losses, architectures, regularization, and normalization, 2019.
[64] E.L. Lehmann, J.P. Romano, Testing Statistical Hypotheses, Springer Science & Business Media, 2006.
[65] D. Lopez-Paz, M. Oquab, Revisiting classifier two-sample tests, 2018.
[66] K. Simonyan, A. Zisserman, Very deep convolutional networks for large-scale image recognition, in: International Conference on Learning Representations, 2015.
[67] W. Bounliphone, E. Belilovsky, M.B. Blaschko, I. Antonoglou, A. Gretton, A test of relative similarity for model selection in generative models, 2016.
[68] C.-L. Li, W.-C. Chang, Y. Cheng, Y. Yang, B. Póczos, MMD GAN: Towards deeper understanding of moment matching network, 2017.
[69] A. Radford, L. Metz, S. Chintala, Unsupervised representation learning with deep convolutional generative adversarial networks, 2016.
[70] J. Jumper, R. Evans, A. Pritzel, T. Green, M. Figurnov, K. Tunyasuvunakool, O. Ronneberger, R. Bates, A. Žídek, A. Bridgland, et al., High accuracy protein structure prediction using deep learning, in: Fourteenth Critical Assessment of Techniques for Protein Structure Prediction (Abstract Book), Vol. 22, 2020, p. 24.
[71] J.T. Springenberg, A. Dosovitskiy, T. Brox, M. Riedmiller, Striving for simplicity: The all convolutional net, 2015.
[72] R. Ayachi, M. Afif, Y. Said, M. Atri, Strided convolution instead of max pooling for memory efficiency of convolutional neural networks, in: M.S. Bouhlel, S. Rovetta (Eds.), Proceedings of the 8th International Conference on Sciences of Electronics, Technologies of Information and Telecommunications (SETIT’18), Vol. 1, Springer International Publishing, Cham, 2020, pp. 234–243.
[73] Y. Li, N. Xiao, W. Ouyang, Improved boundary equilibrium generative adversarial networks, IEEE Access 6 (2018) 11342–11348.
[74] S. Wu, G. Li, L. Deng, L. Liu, D. Wu, Y. Xie, L. Shi, L1 norm batch normalization for efficient training of deep neural networks, IEEE Trans. Neural Netw. Learn. Syst. 30 (7) (2019) 2043–2051.
[75] D.H. Hubel, T.N. Wiesel, Receptive fields of single neurones in the cat’s striate cortex, J. Physiol. 148 (3) (1959) 574–591.
[76] M. Mirza, S. Osindero, Conditional generative adversarial nets, 2014.
[77] M. Loey, G. Manogaran, N.E.M. Khalifa, A deep transfer learning model with classical data augmentation and cgan to detect covid-19 from chest ct radiography digital images, Neural Comput. Appl. (2020) 1–13.
[78] Y. Ma, X. Chen, W. Zhu, X. Cheng, D. Xiang, F. Shi, Speckle noise reduction in optical coherence tomography images based on edge-sensitive cGAN, Biomed. Opt. Express 9 (11) (2018) 5129–5146.
[79] Y. Li, R. Fu, X. Meng, W. Jin, F. Shao, A SAR-to-optical image translation method based on conditional generation adversarial network (cGAN), IEEE Access 8 (2020) 60338–60343.
[80] X. Chen, Y. Duan, R. Houthooft, J. Schulman, I. Sutskever, P. Abbeel, Infogan: Interpretable representation learning by information maximizing generative adversarial nets, in: Proceedings of the 30th International Conference on Neural Information Processing Systems, 2016, pp. 2180–2188.
[81] A. Odena, C. Olah, J. Shlens, Conditional image synthesis with auxiliary classifier gans, in: International Conference on Machine Learning, PMLR, 2017, pp. 2642–2651.
[82] C.E. Shannon, A mathematical theory of communication, Bell Syst. Tech. J. 27 (3) (1948) 379–423.
[83] K. He, X. Zhang, S. Ren, J. Sun, Deep residual learning for image recognition, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.
G. Iglesias, E. Talavera and A. Díaz-Álvarez, Computer Science Review 48 (2023) 100553
[84] C. Szegedy, W. Liu, Y. Jia, P. Sermanet, S. Reed, D. Anguelov, D. Erhan, V. Vanhoucke, A. Rabinovich, Going deeper with convolutions, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015, pp. 1–9.
[85] Y. Zhou, T.L. Berg, Learning temporal transformations from time-lapse videos, in: European Conference on Computer Vision, Springer, 2016, pp. 262–277.
[86] J. Johnson, A. Alahi, L. Fei-Fei, Perceptual losses for real-time style transfer and super-resolution, in: European Conference on Computer Vision, Springer, 2016, pp. 694–711.
[87] M. Liu, J. Zhu, A. Tao, J. Kautz, B. Catanzaro, High-resolution image synthesis and semantic manipulation with conditional gans, in: ICCV, 2017.
[88] Y. Qu, Y. Chen, J. Huang, Y. Xie, Enhanced pix2pix dehazing network, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 8160–8168.
[89] M. Mori, T. Fujioka, L. Katsuta, Y. Kikuchi, G. Oda, T. Nakagawa, Y. Kitazume, K. Kubota, U. Tateishi, Feasibility of new fat suppression for breast MRI using pix2pix, Jpn. J. Radiol. 38 (11) (2020) 1075–1081.
[90] W. Pan, C. Torres-Verdín, M.J. Pyrcz, Stochastic pix2pix: a new machine learning method for geophysical and well conditioning of rule-based channel reservoir models, Natural Resour. Res. 30 (2) (2021) 1319–1345.
[91] M. Drob, RF PIX2PIX unsupervised wi-fi to video translation, 2021, arXiv preprint arXiv:2102.09345.
[92] N. Sundaram, T. Brox, K. Keutzer, Dense point trajectories by gpu-accelerated large displacement optical flow, in: European Conference on Computer Vision, Springer, 2010, pp. 438–451.
[93] Z. Kalal, K. Mikolajczyk, J. Matas, Forward-backward error: Automatic detection of tracking failures, in: 2010 20th International Conference on Pattern Recognition, IEEE, 2010, pp. 2756–2759.
[94] Z. Yi, H. Zhang, P. Tan, M. Gong, Dualgan: Unsupervised dual learning for image-to-image translation, in: Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 2849–2857.
[95] J. Ye, Y. Ji, X. Wang, X. Gao, M. Song, Data-free knowledge amalgamation via group-stack dual-gan, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 12516–12525.
[96] D. Prokopenko, J.V. Stadelmann, H. Schulz, S. Renisch, D.V. Dylov, Synthetic CT generation from MRI using improved DualGAN, 2019, arXiv preprint arXiv:1909.08942.
[97] W. Liang, D. Ding, G. Wei, An improved DualGAN for near-infrared image colorization, Infrared Phys. Technol. 116 (2021) 103764.
[98] C.L.M. Veillon, N. Obin, A. Roebel, Towards end-to-end F0 voice conversion based on dual-GAN with convolutional wavelet kernels, 2021, arXiv preprint arXiv:2104.07283.
[99] F. Yger, A. Rakotomamonjy, Wavelet kernel learning, Pattern Recognit. 44 (10–11) (2011) 2614–2629.
[100] Z. Luo, J. Chen, T. Takiguchi, Y. Ariki, Emotional voice conversion using dual supervised adversarial networks with continuous wavelet transform f0 features, IEEE/ACM Trans. Audio Speech Lang. Process. 27 (10) (2019) 1535–1548.
[101] T. Kim, M. Cha, H. Kim, J.K. Lee, J. Kim, Learning to discover cross-domain relations with generative adversarial networks, in: International Conference on Machine Learning, PMLR, 2017, pp. 1857–1865.
[102] C.R.A. Chaitanya, A.S. Kaplanyan, C. Schied, M. Salvi, A. Lefohn, D. Nowrouzezahrai, T. Aila, Interactive reconstruction of Monte Carlo image sequences using a recurrent denoising autoencoder, ACM Trans. Graph. 36 (4) (2017) 1–12.
[103] I.A. Luchnikov, A. Ryzhov, P.-J. Stas, S.N. Filippov, H. Ouerdane, Variational autoencoder reconstruction of complex many-body physics, Entropy 21 (11) (2019) 1091.
[104] J. Mehta, A. Majumdar, Rodeo: robust de-aliasing autoencoder for real-time medical image reconstruction, Pattern Recognit. 63 (2017) 499–510.
[105] S. Hicsonmez, N. Samet, E. Akbas, P. Duygulu, GANILLA: Generative adversarial networks for image to illustration translation, Image Vis. Comput. 95 (2020) 103886.
[106] A.A. Rusu, N.C. Rabinowitz, G. Desjardins, H. Soyer, J. Kirkpatrick, K. Kavukcuoglu, R. Pascanu, R. Hadsell, Progressive neural networks, 2016, arXiv preprint arXiv:1606.04671.
[107] A. Krizhevsky, G. Hinton, et al., Learning multiple layers of features from tiny images, 2009.
[108] H. Yang, J. Liu, L. Zhang, Y. Li, H. Zhang, ProEGAN-MS: A progressive growing generative adversarial networks for electrocardiogram generation, IEEE Access 9 (2021) 52089–52100.
[109] V. Bhagat, S. Bhaumik, Data augmentation using generative adversarial networks for pneumonia classification in chest xrays, in: 2019 Fifth International Conference on Image Information Processing, ICIIP, IEEE, 2019, pp. 574–579.
[110] L. Liu, Y. Zhang, J. Deng, S. Soatto, Dynamically grown generative adversarial networks, in: Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 35, 2021, pp. 8680–8687.
[111] T. Sainburg, M. Thielk, B. Theilman, B. Migliori, T. Gentner, Generative adversarial interpolative autoencoding: adversarial training on latent space interpolations encourage convex latent distributions, 2018, arXiv preprint arXiv:1807.06650.
[112] S. Laine, Feature-Based Metrics for Exploring the Latent Space of Generative Models, ICLR Workshop Poster, 2018.
[113] X. Huang, S. Belongie, Arbitrary style transfer in real-time with adaptive instance normalization, in: Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 1501–1510.
[114] M. Tancik, P.P. Srinivasan, B. Mildenhall, S. Fridovich-Keil, N. Raghavan, U. Singhal, R. Ramamoorthi, J.T. Barron, R. Ng, Fourier features let networks learn high frequency functions in low dimensional domains, 2020, arXiv preprint arXiv:2006.10739.
[115] R. Xu, X. Wang, K. Chen, B. Zhou, C.C. Loy, Positional encoding as spatial inductive bias in gans, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 13569–13578.
[116] H. Zhang, I. Goodfellow, D. Metaxas, A. Odena, Self-attention generative adversarial networks, in: International Conference on Machine Learning, PMLR, 2019, pp. 7354–7363.
[117] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A.N. Gomez, Ł. Kaiser, I. Polosukhin, Attention is all you need, in: Advances in Neural Information Processing Systems, 2017, pp. 5998–6008.
[118] A. Brock, J. Donahue, K. Simonyan, Large scale GAN training for high fidelity natural image synthesis, 2018, arXiv preprint arXiv:1809.11096.
[119] A.G. Dimakis, P.B. Godfrey, Y. Wu, M.J. Wainwright, K. Ramchandran, Network coding for distributed storage systems, IEEE Trans. Inform. Theory 56 (9) (2010) 4539–4551.
[120] Y. Chen, G. Li, C. Jin, S. Liu, T. Li, SSD-GAN: Measuring the realness in the spatial and spectral domains, 2020, arXiv preprint arXiv:2012.05535.
[121] P. Benioff, The computer as a physical system: A microscopic quantum mechanical Hamiltonian model of computers as represented by turing machines, J. Stat. Phys. 22 (5) (1980) 563–591.
[122] E.R. MacQuarrie, C. Simon, S. Simmons, E. Maine, The emerging commercial landscape of quantum computing, Nat. Rev. Phys. 2 (11) (2020) 596–598.
[123] Y. Cao, J. Romero, J.P. Olson, M. Degroote, P.D. Johnson, M. Kieferová, I.D. Kivlichan, T. Menke, B. Peropadre, N.P. Sawaya, et al., Quantum chemistry in the age of quantum computing, Chem. Rev. 119 (19) (2019) 10856–10915.
[124] S.A. Stein, B. Baheri, R.M. Tischio, Y. Mao, Q. Guan, A. Li, B. Fang, S. Xu, Qugan: A generative adversarial network through quantum states, 2020, arXiv preprint arXiv:2010.09036.
[125] M.Y. Niu, A. Zlokapa, M. Broughton, S. Boixo, M. Mohseni, V. Smelyanskyi, H. Neven, Entangling quantum generative adversarial networks, 2021, arXiv preprint arXiv:2105.00080.
[126] W.W. Ng, J. Hu, D.S. Yeung, S. Yin, F. Roli, Diversified sensitivity-based undersampling for imbalance classification problems, IEEE Trans. Cybern. 45 (11) (2014) 2402–2412.
[127] E. Ramentol, Y. Caballero, R. Bello, F. Herrera, SMOTE-RS B*: a hybrid preprocessing approach based on oversampling and undersampling for high imbalanced data-sets using SMOTE and rough sets theory, Knowl. Inf. Syst. 33 (2) (2012) 245–265.
[128] Z. Pan, F. Yuan, J. Lei, W. Li, N. Ling, S. Kwong, MIEGAN: Mobile image enhancement via a multi-module cascade neural network, IEEE Trans. Multimed. 24 (2021) 519–533.
[129] G. Qi, Loss-sensitive generative adversarial networks on lipschitz densities, 2017, CoRR abs/1701.06264, arXiv preprint arXiv:1701.06264.
[130] L. Weng, From gan to wgan, 2019, arXiv preprint arXiv:1904.08994.
[131] J. Cao, L. Mo, Y. Zhang, K. Jia, C. Shen, M. Tan, Multi-marginal wasserstein gan, Adv. Neural Inf. Process. Syst. 32 (2019) 1776–1786.
[132] Y. Xiangli, Y. Deng, B. Dai, C.C. Loy, D. Lin, Real or not real, that is the question, 2020, arXiv preprint arXiv:2002.05512.
[133] T. Miyato, T. Kataoka, M. Koyama, Y. Yoshida, Spectral normalization for generative adversarial networks, 2018, arXiv preprint arXiv:1802.05957.
[134] T. Salimans, D.P. Kingma, Weight normalization: A simple reparameterization to accelerate training of deep neural networks, Adv. Neural Inf. Process. Syst. 29 (2016) 901–909.
[135] K.B. Kancharagunta, S.R. Dubey, Csgan: Cyclic-synthesized generative adversarial networks for image-to-image transformation, 2019, arXiv preprint arXiv:1901.03554.
[136] X. Wang, X. Tang, Face photo-sketch synthesis and recognition, IEEE Trans. Pattern Anal. Mach. Intell. 31 (11) (2008) 1955–1967.
[137] R. Tyleček, R. Šára, Spatial pattern templates for recognition of objects with regular structure, in: German Conference on Pattern Recognition, Springer, 2013, pp. 364–374.
[138] L. Wang, V. Sindagi, V. Patel, High-quality facial photo-sketch synthesis using multi-adversarial networks, in: 2018 13th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2018), IEEE, 2018, pp. 83–90.
[139] N. Barzilay, T.B. Shalev, R. Giryes, MISS GAN: A multi-IlluStrator style generative adversarial network for image to illustration translation, Pattern Recognit. Lett. (2021).
[140] S.W. Park, J. Kwon, Sphere generative adversarial network based on geometric moment matching, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 4292–4301.
[141] C. Ledig, L. Theis, F. Huszár, J. Caballero, A. Cunningham, A. Acosta, A. Aitken, A. Tejani, J. Totz, Z. Wang, et al., Photo-realistic single image super-resolution using a generative adversarial network, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 4681–4690.
[142] H. Zhang, T. Zhu, X. Chen, L. Zhu, D. Jin, P. Fei, Super-resolution generative adversarial network (SRGAN) enabled on-chip contact microscopy, J. Phys. D: Appl. Phys. 54 (39) (2021) 394005.
[143] O. Dehzangi, S.H. Gheshlaghi, A. Amireskandari, N.M. Nasrabadi, A. Rezai, OCT image segmentation using neural architecture search and SRGAN, in: 2020 25th International Conference on Pattern Recognition, ICPR, IEEE, 2021, pp. 6425–6430.
[144] S. Zhao, Y. Fang, L. Qiu, Deep learning-based channel estimation with SRGAN in OFDM systems, in: 2021 IEEE Wireless Communications and Networking Conference, WCNC, IEEE, 2021, pp. 1–6.
[145] B. Liu, J. Chen, A super resolution algorithm based on attention mechanism and SRGAN network, IEEE Access (2021).
[146] A. Genevay, G. Peyré, M. Cuturi, GAN and VAE from an optimal transport point of view, 2017, arXiv preprint arXiv:1706.01807.
[147] E. Denton, A. Hanna, R. Amironesei, A. Smart, H. Nicole, M.K. Scheuerman, Bringing the people back in: Contesting benchmark machine learning datasets, 2020, arXiv preprint arXiv:2007.07399.
[148] Y. LeCun, L. Bottou, Y. Bengio, P. Haffner, Gradient-based learning applied to document recognition, Proc. IEEE 86 (11) (1998) 2278–2324.
[149] J. Susskind, A. Anderson, G.E. Hinton, The Toronto Face Dataset, Tech. Rep., Technical Report UTML TR 2010-001, U. Toronto, 2010.
[150] R. Zhang, P. Isola, A.A. Efros, E. Shechtman, O. Wang, The unreasonable effectiveness of deep features as a perceptual metric, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 586–595.
[151] J. Lin, Y. Xia, T. Qin, Z. Chen, T.-Y. Liu, Conditional image-to-image translation, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 5524–5532.
[152] Q. Guo, W. Feng, R. Gao, Y. Liu, S. Wang, Exploring the effects of blur and deblurring to visual object tracking, IEEE Trans. Image Process. 30 (2021) 1812–1824.
[153] K. Zhang, W. Luo, Y. Zhong, L. Ma, B. Stenger, W. Liu, H. Li, Deblurring by realistic blurring, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 2737–2746.
[154] M.A. Younus, T.M. Hasan, Effective and fast deepfake detection method based on haar wavelet transform, in: 2020 International Conference on Computer Science and Software Engineering, CSASE, IEEE, 2020, pp. 186–190.
[155] X. Ren, Z. Qian, Q. Chen, Video deblurring by fitting to test data, 2020, arXiv preprint arXiv:2012.05228.
[156] M. Westerlund, The emergence of deepfake technology: A review, Technol. Innov. Manage. Rev. 9 (11) (2019).
[157] V.C. Martínez, G.P. Castillo, Historia del ‘‘fake’’ audiovisual: ‘‘deepfake’’ y la mujer en un imaginario falsificado y perverso, Hist. Comun. Soc. 24 (2) (2019) 55.
[158] A.O. Kwok, S.G. Koh, Deepfake: A social construction of technology perspective, Curr. Issues Tour. 24 (13) (2021) 1798–1802.
[159] P. Korshunov, S. Marcel, Vulnerability assessment and detection of deepfake videos, in: 2019 International Conference on Biometrics, ICB, IEEE, 2019, pp. 1–6.
[160] B. Dolhansky, J. Bitton, B. Pflaum, J. Lu, R. Howes, M. Wang, C. Canton Ferrer, The deepfake detection challenge dataset, 2020, arXiv e-prints arXiv–2006.
[161] N. Carlini, H. Farid, Evading deepfake-image detectors with white- and black-box attacks, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2020, pp. 658–659.
[162] H. Zhao, W. Zhou, D. Chen, T. Wei, W. Zhang, N. Yu, Multi-attentional deepfake detection, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 2185–2194.
[163] Y. Chen, Y. Pan, T. Yao, X. Tian, T. Mei, Mocycle-gan: Unpaired video-to-video translation, in: Proceedings of the 27th ACM International Conference on Multimedia, 2019, pp. 647–655.
[164] A. Bansal, S. Ma, D. Ramanan, Y. Sheikh, Recycle-gan: Unsupervised video retargeting, in: Proceedings of the European Conference on Computer Vision, ECCV, 2018, pp. 119–135.
[165] L. Kurup, M. Narvekar, R. Sarvaiya, A. Shah, Evolution of neural text generation: Comparative analysis, in: Advances in Computer, Communication and Computational Sciences, Springer, 2021, pp. 795–804.
[166] H. Zhang, T. Xu, H. Li, S. Zhang, X. Wang, X. Huang, D.N. Metaxas, Stackgan: Text to photo-realistic image synthesis with stacked generative adversarial networks, in: Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 5907–5915.
[167] H. Zhang, T. Xu, H. Li, S. Zhang, X. Wang, X. Huang, D.N. Metaxas, Stackgan++: Realistic image synthesis with stacked generative adversarial networks, IEEE Trans. Pattern Anal. Mach. Intell. 41 (8) (2018) 1947–1962.
[168] C. Gulcehre, S. Chandar, K. Cho, Y. Bengio, Dynamic neural turing machine with soft and hard addressing schemes, 2016, arXiv preprint arXiv:1607.00036.
[169] J. Weston, S. Chopra, A. Bordes, Memory networks, 2014, arXiv preprint arXiv:1410.3916.
[170] M. Tao, H. Tang, S. Wu, N. Sebe, X.-Y. Jing, F. Wu, B. Bao, Df-gan: Deep fusion generative adversarial networks for text-to-image synthesis, 2020, arXiv preprint arXiv:2008.05865.
[171] L. Gao, D. Chen, Z. Zhao, J. Shao, H.T. Shen, Lightweight dynamic conditional GAN with pyramid attention for text-to-image synthesis, Pattern Recognit. 110 (2021) 107384.
[172] S. Reed, Z. Akata, X. Yan, L. Logeswaran, B. Schiele, H. Lee, Generative adversarial text to image synthesis, in: International Conference on Machine Learning, PMLR, 2016, pp. 1060–1069.
[173] S.E. Reed, Z. Akata, S. Mohan, S. Tenka, B. Schiele, H. Lee, Learning what and where to draw, Adv. Neural Inf. Process. Syst. 29 (2016) 217–225.
[174] T.-Y. Lin, M. Maire, S. Belongie, J. Hays, P. Perona, D. Ramanan, P. Dollár, C.L. Zitnick, Microsoft coco: Common objects in context, in: European Conference on Computer Vision, Springer, 2014, pp. 740–755.
[175] C. Wah, S. Branson, P. Welinder, P. Perona, S. Belongie, The caltech-ucsd birds-200–2011 dataset, 2011.
[176] M.-E. Nilsback, A. Zisserman, Automated flower classification over a large number of classes, in: 2008 Sixth Indian Conference on Computer Vision, Graphics & Image Processing, IEEE, 2008, pp. 722–729.
[177] S. Hochreiter, J. Schmidhuber, Long short-term memory, Neural Comput. 9 (8) (1997) 1735–1780.
[178] A.M. Dai, Q.V. Le, Semi-supervised sequence learning, Adv. Neural Inf. Process. Syst. 28 (2015) 3079–3087.
[179] Y. Zhang, Z. Gan, L. Carin, Generating text via adversarial training, in: NIPS Workshop on Adversarial Training, Vol. 21, academia. edu, 2016, pp. 21–32.
[180] S. Bengio, O. Vinyals, N. Jaitly, N. Shazeer, Scheduled sampling for sequence prediction with recurrent neural networks, 2015, arXiv preprint arXiv:1506.03099.
[181] L. Yu, W. Zhang, J. Wang, Y. Yu, Seqgan: Sequence generative adversarial nets with policy gradient, in: Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 31, 2017.
[182] C.B. Browne, E. Powley, D. Whitehouse, S.M. Lucas, P.I. Cowling, P. Rohlfshagen, S. Tavener, D. Perez, S. Samothrakis, S. Colton, A survey of monte carlo tree search methods, IEEE Trans. Comput. Intell. AI Games 4 (1) (2012) 1–43.
[183] L. Floridi, M. Chiriatti, GPT-3: Its nature, scope, limits, and consequences, Minds Mach. 30 (4) (2020) 681–694.
[184] N.-T. Tran, V.-H. Tran, N.-B. Nguyen, T.-K. Nguyen, N.-M. Cheung, On data augmentation for GAN training, IEEE Trans. Image Process. 30 (2021) 1882–1897.
[185] M. Frid-Adar, E. Klang, M. Amitai, J. Goldberger, H. Greenspan, Synthetic data augmentation using GAN for improved liver lesion classification, in: 2018 IEEE 15th International Symposium on Biomedical Imaging (ISBI 2018), IEEE, 2018, pp. 289–293.
[186] D. Kiyasseh, G.A. Tadesse, L. Thwaites, T. Zhu, D. Clifton, et al., Plethaugment: Gan-based ppg augmentation for medical diagnosis in low-resource settings, IEEE J. Biomed. Health Inf. 24 (11) (2020) 3226–3235.
[187] C. Qi, J. Chen, G. Xu, Z. Xu, T. Lukasiewicz, Y. Liu, SAG-GAN: Semisupervised attention-guided GANs for data augmentation on medical images, 2020, arXiv preprint arXiv:2011.07534.
[188] M. Hammami, D. Friboulet, R. Kechichian, Cycle GAN-based data augmentation for multi-organ detection in CT images via yolo, in: 2020 IEEE International Conference on Image Processing, ICIP, IEEE, 2020, pp. 390–393.
[189] A. Graves, G. Wayne, I. Danihelka, Neural turing machines, 2014, arXiv preprint arXiv:1410.5401.
[190] P. Guo, P. Wang, J. Zhou, V.M. Patel, S. Jiang, Lesion mask-based simultaneous synthesis of anatomic and molecular mr images using a gan, in: International Conference on Medical Image Computing and Computer-Assisted Intervention, Springer, 2020, pp. 104–113.
[191] T.C. Mok, A. Chung, Learning data augmentation for brain tumor segmentation with coarse-to-fine generative adversarial networks, in: International MICCAI Brainlesion Workshop, Springer, 2018, pp. 70–80.
[192] H. Uzunova, J. Ehrhardt, H. Handels, Generation of annotated brain tumor MRIs with tumor-induced tissue deformations for training and assessment of neural networks, in: International Conference on Medical Image Computing and Computer-Assisted Intervention, Springer, 2020, pp. 501–511.
[193] A. Segato, V. Corbetta, M. Di Marzo, L. Pozzi, E. De Momi, Data augmentation of 3D brain environment using deep convolutional refined auto-encoding alpha GAN, IEEE Trans. Med. Robot. Bionics 3 (1) (2020) 269–272.
[194] T. Kossen, P. Subramaniam, V.I. Madai, A. Hennemuth, K. Hildebrand, A. Hilbert, J. Sobesky, M. Livne, I. Galinovic, A.A. Khalil, et al., Synthesizing anonymized and labeled TOF-MRA patches for brain vessel segmentation using generative adversarial networks, Comput. Biol. Med. 131 (2021) 104254.
[195] T. Xia, A. Chartsias, C. Wang, S.A. Tsaftaris, A.D.N. Initiative, et al., Learning to synthesise the ageing brain without longitudinal data, Med. Image Anal. 73 (2021) 102169.
[196] Y. Chen, X.-H. Yang, Z. Wei, A.A. Heidari, N. Zheng, Z. Li, H. Chen, H. Hu, Q. Zhou, Q. Guan, Generative adversarial networks in medical image augmentation: a review, Comput. Biol. Med. (2022) 105382.
[197] M. Li, G. Zhou, A. Chen, J. Yi, C. Lu, M. He, Y. Hu, FWDGAN-based data augmentation for tomato leaf disease identification, Comput. Electron. Agric. 194 (2022) 106779.
[198] M. Xu, S. Yoon, A. Fuentes, J. Yang, D.S. Park, Style-consistent image translation: A novel data augmentation paradigm to improve plant disease recognition, Front. Plant Sci. 12 (2021) 773142.
[199] H. Jin, Y. Li, J. Qi, J. Feng, D. Tian, W. Mu, GrapeGAN: Unsupervised image enhancement for improved grape leaf disease recognition, Comput. Electron. Agric. 198 (2022) 107055.
[200] Y. Jing, Y. Bian, Z. Hu, L. Wang, X.-Q.S. Xie, Deep learning for drug design: an artificial intelligence paradigm for drug discovery in the big data era, AAPS J. 20 (3) (2018) 1–10.
[201] D. Dana, S.V. Gadhiya, L.G. St. Surin, D. Li, F. Naaz, Q. Ali, L. Paka, M.A. Yamin, M. Narayan, I.D. Goldberg, et al., Deep learning in drug discovery and medicine; scratching the surface, Molecules 23 (9) (2018) 2384.
[202] A. Kadurin, A. Aliper, A. Kazennov, P. Mamoshina, Q. Vanhaelen, K. Khrabrov, A. Zhavoronkov, The cornucopia of meaningful leads: Applying deep adversarial autoencoders for new molecule development in oncology, Oncotarget 8 (7) (2017) 10883.
[203] A. Kadurin, S. Nikolenko, K. Khrabrov, A. Aliper, A. 
Zhavoronkov, druGAN:an advanced generative adversarial autoencoder model for de novogeneration of new molecules with desired molecular properties in silico,Mol. Pharmaceut. 14 (9) (2017) 3098–3104.[204] G.R. Padalkar, S.D. Patil, M.M. Hegadi, N.K. Jaybhaye, Drug discovery usinggenerative adversarial network with reinforcement learning, in: 2021International Conference on Computer Communication and Informatics,ICCCI, IEEE, 2021, pp. 1–3.[205] D. Manu, Y. Sheng, J. Yang, J. Deng, T. Geng, A. Li, C. Ding, W. Jiang,L. Yang, FL-DISCO: Federated generative adversarial network for graphbased molecule drug discovery: Special session paper, in: 2021 IEEE/ACMInternational Conference on Computer Aided Design, ICCAD, IEEE, 2021,pp. 1–7.[206] J. Konečn`y, H.B. McMahan, F.X. Yu, P. Richtárik, A.T. Suresh, D. Bacon,Federated learning: Strategies for improving communication efficiency,2016, arXiv preprint arXiv:1610.05492 .[207] P. Dhariwal, A. Nichol, Diffusion models beat gans on image synthesis,Adv. Neural Inf. Process. Syst. 34 (2021) 8780–8794.[208] J. Ho, A. Jain, P. Abbeel, Denoising diffusion probabilistic models, Adv.Neural Inf. Process. Syst. 33 (2020) 6840–6851.[209] Y. Song, S. Ermon, Generative modeling by estimating gradients of thedata distribution, Adv. Neural Inf. Process. Syst. 32 (2019).[210] F.-A. Croitoru, V. Hondru, R.T. Ionescu, M. Shah, Diffusion models invision: A survey, 2022, arXiv preprint arXiv:2209.04747 .[211] C. Saharia, W. Chan, H. Chang, C. Lee, J. Ho, T. Salimans, D. Fleet, M.Norouzi, Palette: Image-to-image diffusion models, in: ACM SIGGRAPH2022 Conference Proceedings, 2022, pp. 1–10.[212] Y. Jiang, S. Chang, Z. Wang, Transgan: Two transformers can make onestrong gan, 2021, arXiv preprint arXiv:2102.07074 1, 3.[213] Z. Lv, X. Huang, W. Cao, An improved GAN with transformers forpedestrian trajectory prediction models, Int. J. Intell. Syst. 37 (8) (2022)4417–4436.

To be continued...
