下载源码

cd ~/Downloads/ai

git clone --depth=1 https://gitee.com/ymcui/Chinese-LLaMA-Alpaca-2创建venv

python3 -m venv venv

source venv/bin/activate

安装依赖

pip install -r requirements.txt已安装依赖列表

(venv) yeqiang@yeqiang-MS-7B23:~/Downloads/ai/Chinese-LLaMA-Alpaca-2$ pip list

Package Version

------------------------ ----------

accelerate 0.26.1

bitsandbytes 0.41.1

certifi 2023.11.17

charset-normalizer 3.3.2

cmake 3.28.1

filelock 3.13.1

fsspec 2023.12.2

huggingface-hub 0.17.3

idna 3.6

Jinja2 3.1.3

lit 17.0.6

MarkupSafe 2.1.3

mpmath 1.3.0

networkx 3.2.1

numpy 1.26.3

nvidia-cublas-cu11 11.10.3.66

nvidia-cuda-cupti-cu11 11.7.101

nvidia-cuda-nvrtc-cu11 11.7.99

nvidia-cuda-runtime-cu11 11.7.99

nvidia-cudnn-cu11 8.5.0.96

nvidia-cufft-cu11 10.9.0.58

nvidia-curand-cu11 10.2.10.91

nvidia-cusolver-cu11 11.4.0.1

nvidia-cusparse-cu11 11.7.4.91

nvidia-nccl-cu11 2.14.3

nvidia-nvtx-cu11 11.7.91

packaging 23.2

peft 0.3.0

pip 22.0.2

psutil 5.9.7

PyYAML 6.0.1

regex 2023.12.25

requests 2.31.0

safetensors 0.4.1

sentencepiece 0.1.99

setuptools 59.6.0

sympy 1.12

tokenizers 0.14.1

torch 2.0.1

tqdm 4.66.1

transformers 4.35.0

triton 2.0.0

typing_extensions 4.9.0

urllib3 2.1.0

wheel 0.42.0

下载编译llama.cpp

cd ~/Downloads/ai/

git clone --depth=1 https://gh.api.99988866.xyz/https://github.com/ggerganov/llama.cpp

cd llma.cpp

make -j6

编译成功

创建软链接

cd ~/Downloads/ai/Chinese-LLaMA-Alpaca-2/scripts/llama-cpp/

ln -s ~/Downloads/ai/llama.cpp/main .

下载模型

由于只有6G显存,只下载基础的对话模型chinese-alpaca-2-1.3b

浏览器打开地址:hfl/chinese-alpaca-2-1.3b at main

放到~/Downloads/ai 目录下

启动chat报错

继续折腾:

这两个文件需要手动在浏览器内下载到~/Downloads/ai/chinese-alpaca-2-1.3b

参考文档

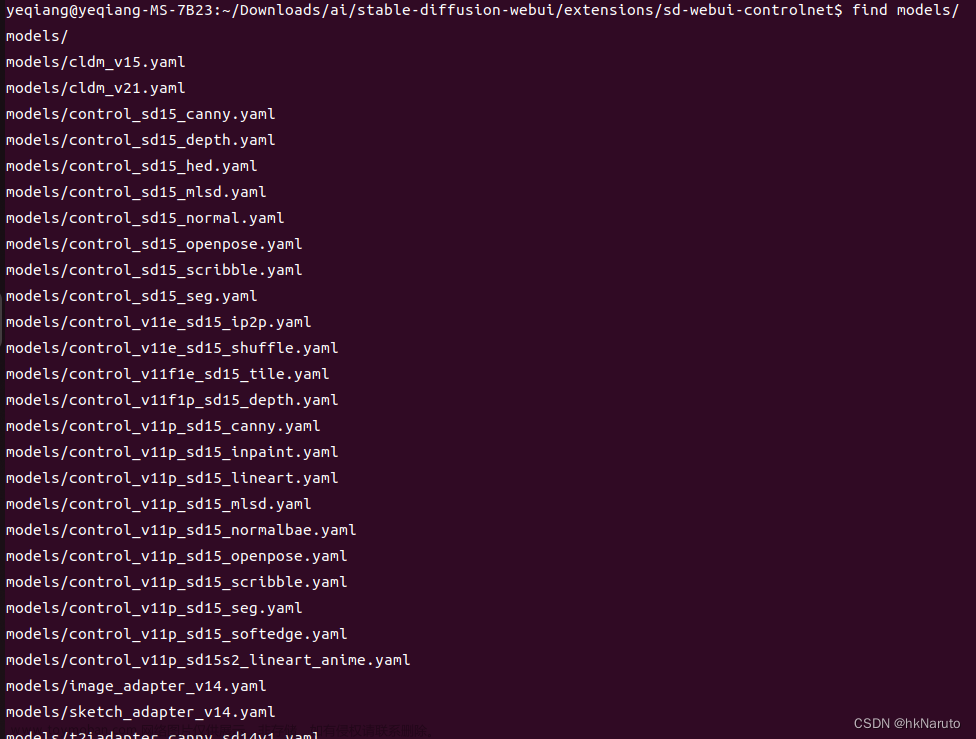

转换模型

rm models/ -rf

mkdir models

cp ~/Downloads/ai/chinese-alpaca-2-1.3b models/ -v

python ~/Downloads/ai/llama.cpp/convert.py models/chinese-alpaca-2-1.3b/转换日志

(venv) yeqiang@yeqiang-MS-7B23:~/Downloads/ai/Chinese-LLaMA-Alpaca-2$ python ~/Downloads/ai/llama.cpp/convert.py models/chinese-alpaca-2-1.3b/

/home/yeqiang/Downloads/ai/llama.cpp/gguf-py

Loading model file models/chinese-alpaca-2-1.3b/pytorch_model.bin

params = Params(n_vocab=55296, n_embd=4096, n_layer=4, n_ctx=4096, n_ff=11008, n_head=32, n_head_kv=32, f_norm_eps=1e-05, n_experts=None, n_experts_used=None, rope_scaling_type=None, f_rope_freq_base=10000.0, f_rope_scale=None, n_orig_ctx=None, rope_finetuned=None, ftype=None, path_model=PosixPath('models/chinese-alpaca-2-1.3b'))

Loading vocab file 'models/chinese-alpaca-2-1.3b/tokenizer.model', type 'spm'

Permuting layer 0

Permuting layer 1

Permuting layer 2

Permuting layer 3

model.embed_tokens.weight -> token_embd.weight | F16 | [55296, 4096]

model.layers.0.self_attn.q_proj.weight -> blk.0.attn_q.weight | F16 | [4096, 4096]

model.layers.0.self_attn.k_proj.weight -> blk.0.attn_k.weight | F16 | [4096, 4096]

model.layers.0.self_attn.v_proj.weight -> blk.0.attn_v.weight | F16 | [4096, 4096]

model.layers.0.self_attn.o_proj.weight -> blk.0.attn_output.weight | F16 | [4096, 4096]

skipping tensor blk.0.attn_rot_embd

model.layers.0.mlp.gate_proj.weight -> blk.0.ffn_gate.weight | F16 | [11008, 4096]

model.layers.0.mlp.up_proj.weight -> blk.0.ffn_up.weight | F16 | [11008, 4096]

model.layers.0.mlp.down_proj.weight -> blk.0.ffn_down.weight | F16 | [4096, 11008]

model.layers.0.input_layernorm.weight -> blk.0.attn_norm.weight | F16 | [4096]

model.layers.0.post_attention_layernorm.weight -> blk.0.ffn_norm.weight | F16 | [4096]

model.layers.1.self_attn.q_proj.weight -> blk.1.attn_q.weight | F16 | [4096, 4096]

model.layers.1.self_attn.k_proj.weight -> blk.1.attn_k.weight | F16 | [4096, 4096]

model.layers.1.self_attn.v_proj.weight -> blk.1.attn_v.weight | F16 | [4096, 4096]

model.layers.1.self_attn.o_proj.weight -> blk.1.attn_output.weight | F16 | [4096, 4096]

skipping tensor blk.1.attn_rot_embd

model.layers.1.mlp.gate_proj.weight -> blk.1.ffn_gate.weight | F16 | [11008, 4096]

model.layers.1.mlp.up_proj.weight -> blk.1.ffn_up.weight | F16 | [11008, 4096]

model.layers.1.mlp.down_proj.weight -> blk.1.ffn_down.weight | F16 | [4096, 11008]

model.layers.1.input_layernorm.weight -> blk.1.attn_norm.weight | F16 | [4096]

model.layers.1.post_attention_layernorm.weight -> blk.1.ffn_norm.weight | F16 | [4096]

model.layers.2.self_attn.q_proj.weight -> blk.2.attn_q.weight | F16 | [4096, 4096]

model.layers.2.self_attn.k_proj.weight -> blk.2.attn_k.weight | F16 | [4096, 4096]

model.layers.2.self_attn.v_proj.weight -> blk.2.attn_v.weight | F16 | [4096, 4096]

model.layers.2.self_attn.o_proj.weight -> blk.2.attn_output.weight | F16 | [4096, 4096]

skipping tensor blk.2.attn_rot_embd

model.layers.2.mlp.gate_proj.weight -> blk.2.ffn_gate.weight | F16 | [11008, 4096]

model.layers.2.mlp.up_proj.weight -> blk.2.ffn_up.weight | F16 | [11008, 4096]

model.layers.2.mlp.down_proj.weight -> blk.2.ffn_down.weight | F16 | [4096, 11008]

model.layers.2.input_layernorm.weight -> blk.2.attn_norm.weight | F16 | [4096]

model.layers.2.post_attention_layernorm.weight -> blk.2.ffn_norm.weight | F16 | [4096]

model.layers.3.self_attn.q_proj.weight -> blk.3.attn_q.weight | F16 | [4096, 4096]

model.layers.3.self_attn.k_proj.weight -> blk.3.attn_k.weight | F16 | [4096, 4096]

model.layers.3.self_attn.v_proj.weight -> blk.3.attn_v.weight | F16 | [4096, 4096]

model.layers.3.self_attn.o_proj.weight -> blk.3.attn_output.weight | F16 | [4096, 4096]

skipping tensor blk.3.attn_rot_embd

model.layers.3.mlp.gate_proj.weight -> blk.3.ffn_gate.weight | F16 | [11008, 4096]

model.layers.3.mlp.up_proj.weight -> blk.3.ffn_up.weight | F16 | [11008, 4096]

model.layers.3.mlp.down_proj.weight -> blk.3.ffn_down.weight | F16 | [4096, 11008]

model.layers.3.input_layernorm.weight -> blk.3.attn_norm.weight | F16 | [4096]

model.layers.3.post_attention_layernorm.weight -> blk.3.ffn_norm.weight | F16 | [4096]

model.norm.weight -> output_norm.weight | F16 | [4096]

lm_head.weight -> output.weight | F16 | [55296, 4096]

Writing models/chinese-alpaca-2-1.3b/ggml-model-f16.gguf, format 1

Ignoring added_tokens.json since model matches vocab size without it.

gguf: This GGUF file is for Little Endian only

gguf: Setting special token type bos to 1

gguf: Setting special token type eos to 2

gguf: Setting special token type pad to 0

gguf: Setting add_bos_token to True

gguf: Setting add_eos_token to False

[ 1/39] Writing tensor token_embd.weight | size 55296 x 4096 | type F16 | T+ 1

[ 2/39] Writing tensor blk.0.attn_q.weight | size 4096 x 4096 | type F16 | T+ 1

[ 3/39] Writing tensor blk.0.attn_k.weight | size 4096 x 4096 | type F16 | T+ 1

[ 4/39] Writing tensor blk.0.attn_v.weight | size 4096 x 4096 | type F16 | T+ 1

[ 5/39] Writing tensor blk.0.attn_output.weight | size 4096 x 4096 | type F16 | T+ 1

[ 6/39] Writing tensor blk.0.ffn_gate.weight | size 11008 x 4096 | type F16 | T+ 1

[ 7/39] Writing tensor blk.0.ffn_up.weight | size 11008 x 4096 | type F16 | T+ 1

[ 8/39] Writing tensor blk.0.ffn_down.weight | size 4096 x 11008 | type F16 | T+ 1

[ 9/39] Writing tensor blk.0.attn_norm.weight | size 4096 | type F32 | T+ 2

[10/39] Writing tensor blk.0.ffn_norm.weight | size 4096 | type F32 | T+ 2

[11/39] Writing tensor blk.1.attn_q.weight | size 4096 x 4096 | type F16 | T+ 2

[12/39] Writing tensor blk.1.attn_k.weight | size 4096 x 4096 | type F16 | T+ 2

[13/39] Writing tensor blk.1.attn_v.weight | size 4096 x 4096 | type F16 | T+ 2

[14/39] Writing tensor blk.1.attn_output.weight | size 4096 x 4096 | type F16 | T+ 2

[15/39] Writing tensor blk.1.ffn_gate.weight | size 11008 x 4096 | type F16 | T+ 2

[16/39] Writing tensor blk.1.ffn_up.weight | size 11008 x 4096 | type F16 | T+ 2

[17/39] Writing tensor blk.1.ffn_down.weight | size 4096 x 11008 | type F16 | T+ 2

[18/39] Writing tensor blk.1.attn_norm.weight | size 4096 | type F32 | T+ 2

[19/39] Writing tensor blk.1.ffn_norm.weight | size 4096 | type F32 | T+ 2

[20/39] Writing tensor blk.2.attn_q.weight | size 4096 x 4096 | type F16 | T+ 2

[21/39] Writing tensor blk.2.attn_k.weight | size 4096 x 4096 | type F16 | T+ 2

[22/39] Writing tensor blk.2.attn_v.weight | size 4096 x 4096 | type F16 | T+ 2

[23/39] Writing tensor blk.2.attn_output.weight | size 4096 x 4096 | type F16 | T+ 2

[24/39] Writing tensor blk.2.ffn_gate.weight | size 11008 x 4096 | type F16 | T+ 2

[25/39] Writing tensor blk.2.ffn_up.weight | size 11008 x 4096 | type F16 | T+ 2

[26/39] Writing tensor blk.2.ffn_down.weight | size 4096 x 11008 | type F16 | T+ 2

[27/39] Writing tensor blk.2.attn_norm.weight | size 4096 | type F32 | T+ 2

[28/39] Writing tensor blk.2.ffn_norm.weight | size 4096 | type F32 | T+ 2

[29/39] Writing tensor blk.3.attn_q.weight | size 4096 x 4096 | type F16 | T+ 2

[30/39] Writing tensor blk.3.attn_k.weight | size 4096 x 4096 | type F16 | T+ 2

[31/39] Writing tensor blk.3.attn_v.weight | size 4096 x 4096 | type F16 | T+ 2

[32/39] Writing tensor blk.3.attn_output.weight | size 4096 x 4096 | type F16 | T+ 2

[33/39] Writing tensor blk.3.ffn_gate.weight | size 11008 x 4096 | type F16 | T+ 3

[34/39] Writing tensor blk.3.ffn_up.weight | size 11008 x 4096 | type F16 | T+ 3

[35/39] Writing tensor blk.3.ffn_down.weight | size 4096 x 11008 | type F16 | T+ 4

[36/39] Writing tensor blk.3.attn_norm.weight | size 4096 | type F32 | T+ 4

[37/39] Writing tensor blk.3.ffn_norm.weight | size 4096 | type F32 | T+ 4

[38/39] Writing tensor output_norm.weight | size 4096 | type F32 | T+ 4

[39/39] Writing tensor output.weight | size 55296 x 4096 | type F16 | T+ 4

Wrote models/chinese-alpaca-2-1.3b/ggml-model-f16.gguf

(venv) yeqiang@yeqiang-MS-7B23:~/Downloads/ai/Chinese-LLaMA-Alpaca-2$

进一步对FP16模型进行4-bit量化

~/Downloads/ai/llama.cpp/quantize models/chinese-alpaca-2-1.3b/ggml-model-f16.gguf models/chinese-alpaca-2-1.3b/ggml-model-q4_0.bin q4_0日志

(venv) yeqiang@yeqiang-MS-7B23:~/Downloads/ai/Chinese-LLaMA-Alpaca-2$ ~/Downloads/ai/llama.cpp/quantize models/chinese-alpaca-2-1.3b/ggml-model-f16.gguf models/chinese-alpaca-2-1.3b/ggml-model-q4_0.bin q4_0

main: build = 1 (5c99960)

main: built with cc (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0 for x86_64-linux-gnu

main: quantizing 'models/chinese-alpaca-2-1.3b/ggml-model-f16.gguf' to 'models/chinese-alpaca-2-1.3b/ggml-model-q4_0.bin' as Q4_0

llama_model_loader: loaded meta data with 21 key-value pairs and 39 tensors from models/chinese-alpaca-2-1.3b/ggml-model-f16.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = llama

llama_model_loader: - kv 1: general.name str = LLaMA v2

llama_model_loader: - kv 2: llama.context_length u32 = 4096

llama_model_loader: - kv 3: llama.embedding_length u32 = 4096

llama_model_loader: - kv 4: llama.block_count u32 = 4

llama_model_loader: - kv 5: llama.feed_forward_length u32 = 11008

llama_model_loader: - kv 6: llama.rope.dimension_count u32 = 128

llama_model_loader: - kv 7: llama.attention.head_count u32 = 32

llama_model_loader: - kv 8: llama.attention.head_count_kv u32 = 32

llama_model_loader: - kv 9: llama.attention.layer_norm_rms_epsilon f32 = 0.000010

llama_model_loader: - kv 10: llama.rope.freq_base f32 = 10000.000000

llama_model_loader: - kv 11: general.file_type u32 = 1

llama_model_loader: - kv 12: tokenizer.ggml.model str = llama

llama_model_loader: - kv 13: tokenizer.ggml.tokens arr[str,55296] = ["<unk>", "<s>", "</s>", "<0x00>", "<...

llama_model_loader: - kv 14: tokenizer.ggml.scores arr[f32,55296] = [0.000000, 0.000000, 0.000000, 0.0000...

llama_model_loader: - kv 15: tokenizer.ggml.token_type arr[i32,55296] = [2, 3, 3, 6, 6, 6, 6, 6, 6, 6, 6, 6, ...

llama_model_loader: - kv 16: tokenizer.ggml.bos_token_id u32 = 1

llama_model_loader: - kv 17: tokenizer.ggml.eos_token_id u32 = 2

llama_model_loader: - kv 18: tokenizer.ggml.padding_token_id u32 = 0

llama_model_loader: - kv 19: tokenizer.ggml.add_bos_token bool = true

llama_model_loader: - kv 20: tokenizer.ggml.add_eos_token bool = false

llama_model_loader: - type f32: 9 tensors

llama_model_loader: - type f16: 30 tensors

llama_model_quantize_internal: meta size = 1233920 bytes

[ 1/ 39] token_embd.weight - [ 4096, 55296, 1, 1], type = f16, quantizing to q4_0 .. size = 432.00 MiB -> 121.50 MiB | hist: 0.037 0.016 0.026 0.039 0.057 0.077 0.096 0.110 0.116 0.110 0.096 0.077 0.057 0.039 0.026 0.021

[ 2/ 39] blk.0.attn_q.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.016 0.027 0.040 0.056 0.074 0.092 0.109 0.121 0.110 0.093 0.076 0.058 0.042 0.027 0.021

[ 3/ 39] blk.0.attn_k.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.035 0.012 0.019 0.031 0.047 0.069 0.097 0.130 0.152 0.130 0.097 0.069 0.047 0.030 0.019 0.015

[ 4/ 39] blk.0.attn_v.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.024 0.037 0.054 0.075 0.097 0.115 0.123 0.115 0.097 0.075 0.054 0.037 0.024 0.020

[ 5/ 39] blk.0.attn_output.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.035 0.012 0.020 0.032 0.049 0.072 0.099 0.126 0.138 0.126 0.100 0.072 0.049 0.032 0.020 0.017

[ 6/ 39] blk.0.ffn_gate.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.096 0.112 0.117 0.112 0.097 0.077 0.056 0.039 0.025 0.021

[ 7/ 39] blk.0.ffn_up.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.097 0.111 0.117 0.111 0.097 0.077 0.056 0.039 0.025 0.021

[ 8/ 39] blk.0.ffn_down.weight - [11008, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.097 0.112 0.117 0.112 0.097 0.077 0.056 0.039 0.025 0.021

[ 9/ 39] blk.0.attn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 10/ 39] blk.0.ffn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 11/ 39] blk.1.attn_q.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.013 0.021 0.033 0.050 0.072 0.098 0.123 0.137 0.123 0.098 0.072 0.050 0.033 0.021 0.017

[ 12/ 39] blk.1.attn_k.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.013 0.021 0.033 0.050 0.073 0.098 0.123 0.136 0.123 0.099 0.073 0.051 0.033 0.021 0.017

[ 13/ 39] blk.1.attn_v.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.024 0.037 0.055 0.076 0.097 0.114 0.122 0.114 0.097 0.076 0.055 0.038 0.024 0.020

[ 14/ 39] blk.1.attn_output.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.025 0.038 0.056 0.076 0.097 0.112 0.118 0.112 0.097 0.077 0.056 0.038 0.025 0.020

[ 15/ 39] blk.1.ffn_gate.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.097 0.111 0.117 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 16/ 39] blk.1.ffn_up.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.096 0.111 0.117 0.112 0.097 0.077 0.056 0.039 0.025 0.021

[ 17/ 39] blk.1.ffn_down.weight - [11008, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.117 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 18/ 39] blk.1.attn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 19/ 39] blk.1.ffn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 20/ 39] blk.2.attn_q.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.024 0.037 0.054 0.075 0.097 0.116 0.125 0.116 0.097 0.075 0.054 0.037 0.024 0.020

[ 21/ 39] blk.2.attn_k.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.024 0.037 0.054 0.075 0.097 0.116 0.126 0.116 0.097 0.075 0.054 0.037 0.024 0.019

[ 22/ 39] blk.2.attn_v.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.076 0.096 0.112 0.119 0.112 0.096 0.076 0.056 0.039 0.025 0.021

[ 23/ 39] blk.2.attn_output.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.116 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 24/ 39] blk.2.ffn_gate.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.116 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 25/ 39] blk.2.ffn_up.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.096 0.111 0.117 0.111 0.097 0.077 0.057 0.039 0.025 0.021

[ 26/ 39] blk.2.ffn_down.weight - [11008, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.117 0.111 0.097 0.077 0.057 0.039 0.025 0.021

[ 27/ 39] blk.2.attn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 28/ 39] blk.2.ffn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 29/ 39] blk.3.attn_q.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.024 0.038 0.055 0.076 0.097 0.113 0.121 0.113 0.097 0.076 0.055 0.038 0.025 0.020

[ 30/ 39] blk.3.attn_k.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.015 0.024 0.038 0.055 0.076 0.097 0.114 0.121 0.114 0.097 0.076 0.055 0.038 0.024 0.020

[ 31/ 39] blk.3.attn_v.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.076 0.096 0.112 0.118 0.112 0.096 0.076 0.056 0.039 0.025 0.021

[ 32/ 39] blk.3.attn_output.weight - [ 4096, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 32.00 MiB -> 9.00 MiB | hist: 0.037 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.116 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 33/ 39] blk.3.ffn_gate.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.037 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.116 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 34/ 39] blk.3.ffn_up.weight - [ 4096, 11008, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.037 0.016 0.025 0.039 0.057 0.077 0.096 0.111 0.116 0.111 0.096 0.077 0.057 0.039 0.025 0.021

[ 35/ 39] blk.3.ffn_down.weight - [11008, 4096, 1, 1], type = f16, quantizing to q4_0 .. size = 86.00 MiB -> 24.19 MiB | hist: 0.036 0.016 0.025 0.039 0.056 0.077 0.096 0.111 0.117 0.111 0.097 0.077 0.057 0.039 0.025 0.021

[ 36/ 39] blk.3.attn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 37/ 39] blk.3.ffn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 38/ 39] output_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 39/ 39] output.weight - [ 4096, 55296, 1, 1], type = f16, quantizing to q6_K .. size = 432.00 MiB -> 177.19 MiB

llama_model_quantize_internal: model size = 2408.14 MB

llama_model_quantize_internal: quant size = 733.08 MB

llama_model_quantize_internal: hist: 0.036 0.015 0.025 0.038 0.056 0.076 0.096 0.112 0.119 0.112 0.097 0.076 0.056 0.038 0.025 0.021

main: quantize time = 5131.57 ms

main: total time = 5131.57 ms

启动chat.sh

mv scripts/llama-cpp/main .

bash scripts/llama-cpp/chat.sh models/chinese-alpaca-2-1.3b/ggml-model-q4_0.bin启动成功了,日志

(venv) yeqiang@yeqiang-MS-7B23:~/Downloads/ai/Chinese-LLaMA-Alpaca-2$ bash scripts/llama-cpp/chat.sh models/chinese-alpaca-2-1.3b/ggml-model-q4_0.bin

Log start

main: build = 1 (5c99960)

main: built with cc (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0 for x86_64-linux-gnu

main: seed = 1705481300

llama_model_loader: loaded meta data with 22 key-value pairs and 39 tensors from models/chinese-alpaca-2-1.3b/ggml-model-q4_0.bin (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = llama

llama_model_loader: - kv 1: general.name str = LLaMA v2

llama_model_loader: - kv 2: llama.context_length u32 = 4096

llama_model_loader: - kv 3: llama.embedding_length u32 = 4096

llama_model_loader: - kv 4: llama.block_count u32 = 4

llama_model_loader: - kv 5: llama.feed_forward_length u32 = 11008

llama_model_loader: - kv 6: llama.rope.dimension_count u32 = 128

llama_model_loader: - kv 7: llama.attention.head_count u32 = 32

llama_model_loader: - kv 8: llama.attention.head_count_kv u32 = 32

llama_model_loader: - kv 9: llama.attention.layer_norm_rms_epsilon f32 = 0.000010

llama_model_loader: - kv 10: llama.rope.freq_base f32 = 10000.000000

llama_model_loader: - kv 11: general.file_type u32 = 2

llama_model_loader: - kv 12: tokenizer.ggml.model str = llama

llama_model_loader: - kv 13: tokenizer.ggml.tokens arr[str,55296] = ["<unk>", "<s>", "</s>", "<0x00>", "<...

llama_model_loader: - kv 14: tokenizer.ggml.scores arr[f32,55296] = [0.000000, 0.000000, 0.000000, 0.0000...

llama_model_loader: - kv 15: tokenizer.ggml.token_type arr[i32,55296] = [2, 3, 3, 6, 6, 6, 6, 6, 6, 6, 6, 6, ...

llama_model_loader: - kv 16: tokenizer.ggml.bos_token_id u32 = 1

llama_model_loader: - kv 17: tokenizer.ggml.eos_token_id u32 = 2

llama_model_loader: - kv 18: tokenizer.ggml.padding_token_id u32 = 0

llama_model_loader: - kv 19: tokenizer.ggml.add_bos_token bool = true

llama_model_loader: - kv 20: tokenizer.ggml.add_eos_token bool = false

llama_model_loader: - kv 21: general.quantization_version u32 = 2

llama_model_loader: - type f32: 9 tensors

llama_model_loader: - type q4_0: 29 tensors

llama_model_loader: - type q6_K: 1 tensors

llm_load_vocab: mismatch in special tokens definition ( 889/55296 vs 259/55296 ).

llm_load_print_meta: format = GGUF V3 (latest)

llm_load_print_meta: arch = llama

llm_load_print_meta: vocab type = SPM

llm_load_print_meta: n_vocab = 55296

llm_load_print_meta: n_merges = 0

llm_load_print_meta: n_ctx_train = 4096

llm_load_print_meta: n_embd = 4096

llm_load_print_meta: n_head = 32

llm_load_print_meta: n_head_kv = 32

llm_load_print_meta: n_layer = 4

llm_load_print_meta: n_rot = 128

llm_load_print_meta: n_embd_head_k = 128

llm_load_print_meta: n_embd_head_v = 128

llm_load_print_meta: n_gqa = 1

llm_load_print_meta: n_embd_k_gqa = 4096

llm_load_print_meta: n_embd_v_gqa = 4096

llm_load_print_meta: f_norm_eps = 0.0e+00

llm_load_print_meta: f_norm_rms_eps = 1.0e-05

llm_load_print_meta: f_clamp_kqv = 0.0e+00

llm_load_print_meta: f_max_alibi_bias = 0.0e+00

llm_load_print_meta: n_ff = 11008

llm_load_print_meta: n_expert = 0

llm_load_print_meta: n_expert_used = 0

llm_load_print_meta: rope scaling = linear

llm_load_print_meta: freq_base_train = 10000.0

llm_load_print_meta: freq_scale_train = 1

llm_load_print_meta: n_yarn_orig_ctx = 4096

llm_load_print_meta: rope_finetuned = unknown

llm_load_print_meta: model type = ?B

llm_load_print_meta: model ftype = Q4_0

llm_load_print_meta: model params = 1.26 B

llm_load_print_meta: model size = 733.08 MiB (4.87 BPW)

llm_load_print_meta: general.name = LLaMA v2

llm_load_print_meta: BOS token = 1 '<s>'

llm_load_print_meta: EOS token = 2 '</s>'

llm_load_print_meta: UNK token = 0 '<unk>'

llm_load_print_meta: PAD token = 0 '<unk>'

llm_load_print_meta: LF token = 13 '<0x0A>'

llm_load_tensors: ggml ctx size = 0.01 MiB

llm_load_tensors: offloading 0 repeating layers to GPU

llm_load_tensors: offloaded 0/5 layers to GPU

llm_load_tensors: CPU buffer size = 733.08 MiB

..............................

llama_new_context_with_model: n_ctx = 4096

llama_new_context_with_model: freq_base = 10000.0

llama_new_context_with_model: freq_scale = 1

llama_kv_cache_init: CPU KV buffer size = 256.00 MiB

llama_new_context_with_model: KV self size = 256.00 MiB, K (f16): 128.00 MiB, V (f16): 128.00 MiB

llama_new_context_with_model: graph splits (measure): 1

llama_new_context_with_model: CPU compute buffer size = 288.00 MiB

system_info: n_threads = 8 / 6 | AVX = 1 | AVX_VNNI = 0 | AVX2 = 1 | AVX512 = 0 | AVX512_VBMI = 0 | AVX512_VNNI = 0 | FMA = 1 | NEON = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 0 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 |

main: interactive mode on.

Input prefix with BOS

Input prefix: ' [INST] '

Input suffix: ' [/INST]'

sampling:

repeat_last_n = 64, repeat_penalty = 1.100, frequency_penalty = 0.000, presence_penalty = 0.000

top_k = 40, tfs_z = 1.000, top_p = 0.900, min_p = 0.050, typical_p = 1.000, temp = 0.500

mirostat = 0, mirostat_lr = 0.100, mirostat_ent = 5.000

sampling order:

CFG -> Penalties -> top_k -> tfs_z -> typical_p -> top_p -> min_p -> temp

generate: n_ctx = 4096, n_batch = 512, n_predict = -1, n_keep = 0

== Running in interactive mode. ==

- Press Ctrl+C to interject at any time.

- Press Return to return control to LLaMa.

- To return control without starting a new line, end your input with '/'.

- If you want to submit another line, end your input with '\'.

[INST] <<SYS>>

You are a helpful assistant. 你是一个乐于助人的助手。

<</SYS>>

[/INST] 您好,有什么我可以帮助您的吗?

[INST]

这是完全基于CPU实现的?

编译llama.cpp项目没有启动cuda?

-----

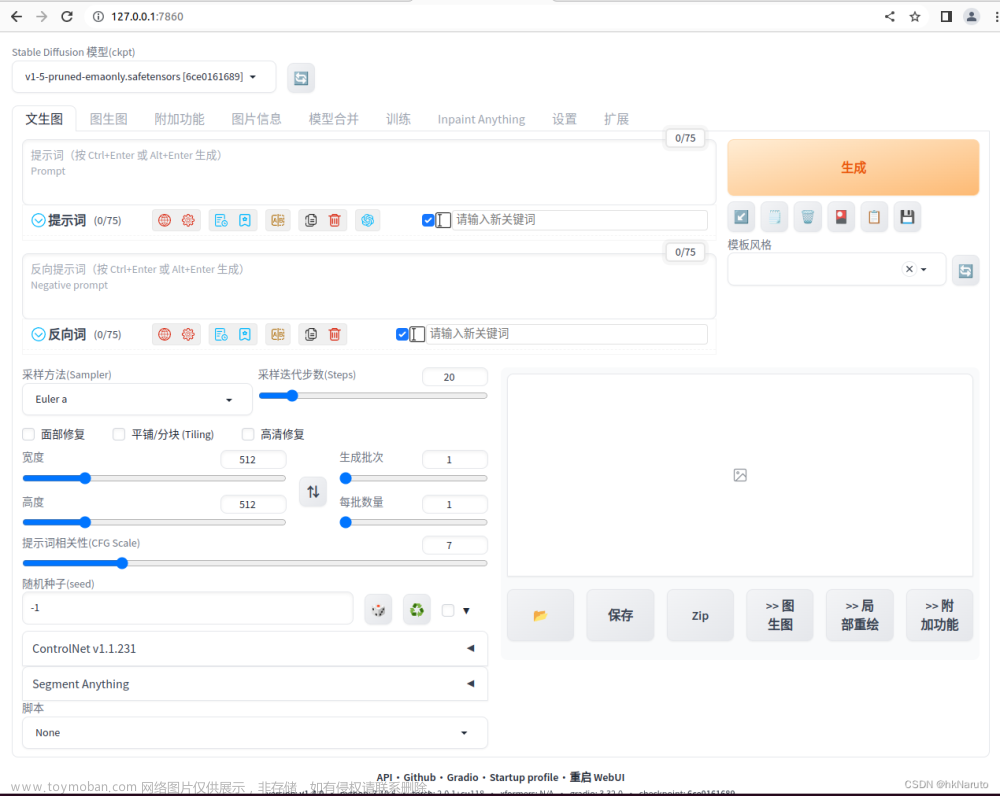

试试web

参考资料

安装gradio

pip install gradio报错

git下载模型,报错

手动把之前的模型拷贝进目录

启动gradio

安装xformers

(venv) yeqiang@yeqiang-MS-7B23:~/Downloads/ai/Chinese-LLaMA-Alpaca-2$ pip install xformers scipy

崩溃了。。。

github加速参考:

FAST-GitHub | Fast-GitHub

huggingface加速参考文章来源:https://www.toymoban.com/news/detail-800342.html

hfl/chinese-alpaca-2-1.3b at main文章来源地址https://www.toymoban.com/news/detail-800342.html

到了这里,关于【AI】RTX2060 6G Ubuntu 22.04.1 LTS (Jammy Jellyfish) 部署Chinese-LLaMA-Alpaca-2的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!