Introduction

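Selenium is a browser automation tool that is mainly used for testing web applications. It can also serve as a crawler: by simulating a user's actions in the browser, it can extract data from web pages. The key points about crawling with Selenium are: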

-

浏览器自动化: Selenium允许你通过编程方式控制浏览器的行为,包括打开网页、点击按钮、填写表单等。这样你可以模拟用户在浏览器中的操作。

-

支持多种浏览器: Selenium支持多种主流浏览器,包括Chrome、Firefox、Edge等。你可以选择适合你需求的浏览器来进行自动化操作。

-

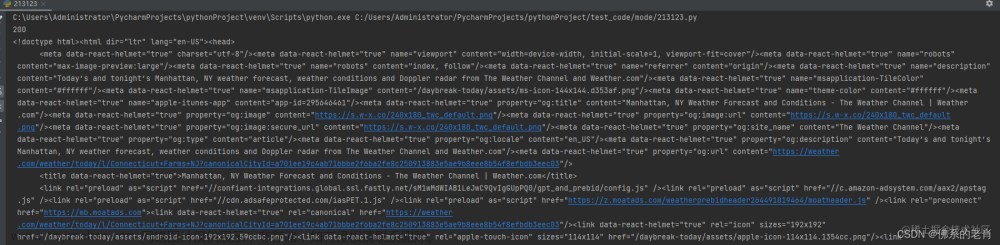

网页数据提取: 利用Selenium,你可以加载网页并提取页面上的数据。这对于一些动态加载内容或需要用户交互的网页来说特别有用。

-

等待元素加载: 由于网页可能会异步加载,Selenium提供了等待机制,确保在继续执行之前等待特定的元素加载完成。

-

选择器: Selenium支持各种选择器,类似于使用CSS选择器或XPath来定位网页上的元素。

-

动态网页爬取: 对于使用JavaScript动态生成内容的网页,Selenium是一个有力的工具,因为它可以执行JavaScript代码并获取渲染后的结果。
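The points above can be sketched in a few lines. This is a minimal sketch, assuming Selenium 4 and a local Chrome installation; `chrome_arguments` and `fetch_rendered_html` are hypothetical helper names, and the `--headless=new` flag assumes a recent Chrome build.

```python
def chrome_arguments(headless=True, width=900, height=900):
    """Build the Chrome command-line arguments used below (hypothetical helper)."""
    args = [f"--window-size={width},{height}"]
    if headless:
        # Assumes a recent Chrome; older builds use plain "--headless".
        args.append("--headless=new")
    return args


def fetch_rendered_html(url, css_selector, timeout=10):
    """Open url, wait until css_selector is present, return the rendered HTML."""
    # Imported here so chrome_arguments() can be used without Selenium installed.
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = webdriver.ChromeOptions()
    for arg in chrome_arguments():
        options.add_argument(arg)
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(url)
        # Explicit wait: block until the element exists or the timeout expires.
        WebDriverWait(driver, timeout).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, css_selector))
        )
        # page_source holds the DOM after JavaScript has run.
        return driver.page_source
    finally:
        # Always close the browser, even if the wait times out.
        driver.quit()
```

Calling `fetch_rendered_html(url, "#some-id")` opens a real browser, so it only runs on a machine where Chrome and a matching driver are available.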

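Selenium makes crawling convenient, but it has trade-offs. First, it is slow, because it drives a real browser the way a user would. Second, sites may detect an automated browser and take countermeasures against crawlers, so use Selenium with care and follow each site's terms of use and policies.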

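This article assumes some familiarity with the Scrapy crawling framework: here Selenium is plugged into Scrapy through a downloader middleware. For the background, see the prerequisite article: https://blog.csdn.net/shizuguilai/article/details/135554205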

Environment setup

1、建议先安装conda

参考连接:https://blog.csdn.net/Q_fairy/article/details/129158178

2、创建虚拟环境并安装对应的包

# 创建名字为scrapy的包

conda create -n scrapy

# 进入虚拟环境

conda activate scrapy

# 下载对应的包

pip install scrapy

pip install selenium

3、下载对应的谷歌驱动以及与驱动对应的浏览器

参考连接:https://zhuanlan.zhihu.com/p/665018772

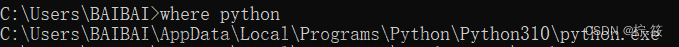

记得配置好环境变量
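One quick way to confirm the PATH setup is to ask Python where the driver executable resolves. This is a minimal sketch using only the standard library; `find_driver` is a hypothetical helper, and `chromedriver` is assumed to be the executable name on your system.

```python
import shutil


def find_driver(name="chromedriver"):
    """Return the full path of the driver executable found on PATH, or None."""
    return shutil.which(name)


if __name__ == "__main__":
    path = find_driver()
    if path is None:
        print("chromedriver was not found on PATH")
    else:
        print(f"chromedriver found at {path}")
```

Recent Selenium 4 releases can also locate a driver on their own through Selenium Manager, in which case the PATH entry may not be needed.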

Code

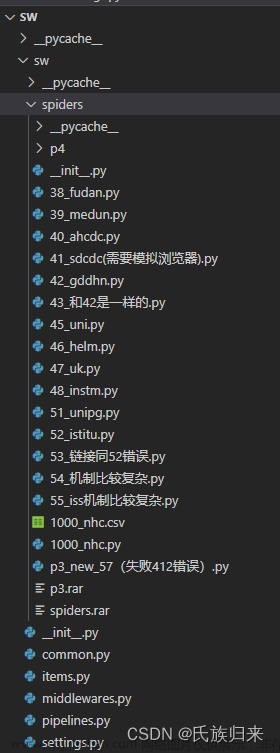

Directory structure: the spiders directory is where I keep my Scrapy scripts. Article source: https://www.toymoban.com/news/detail-807847.html


settings.py configuration

# Scrapy settings for sw project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://docs.scrapy.org/en/latest/topics/settings.html

# https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

# https://docs.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = "sw"

SPIDER_MODULES = ["sw.spiders"]

NEWSPIDER_MODULE = "sw.spiders"

DOWNLOAD_DELAY = 3

RANDOMIZE_DOWNLOAD_DELAY = True

USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'

COOKIES_ENABLED = True

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = "sw (+http://www.yourdomain.com)"

# Obey robots.txt rules

ROBOTSTXT_OBEY = False

# In settings.py

# ----------- Selenium settings -------------

SELENIUM_TIMEOUT = 25 # page-load timeout for the Selenium browser, in seconds

LOAD_IMAGE = True # whether to load images

WINDOW_HEIGHT = 900 # browser window size, in pixels

WINDOW_WIDTH = 900

# Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",

# "Accept-Language": "en",

#}

# Enable or disable spider middlewares

# See https://docs.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# "sw.middlewares.SwSpiderMiddleware": 543,

#}

# Enable or disable downloader middlewares

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

#DOWNLOADER_MIDDLEWARES = {

# "sw.middlewares.SwDownloaderMiddleware": 543,

#}

# Enable or disable extensions

# See https://docs.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# "scrapy.extensions.telnet.TelnetConsole": None,

#}

# Configure item pipelines

# See https://docs.scrapy.org/en/latest/topics/item-pipeline.html

#ITEM_PIPELINES = {

# "sw.pipelines.SwPipeline": 300,

#}

# ITEM_PIPELINES = {

# "sw.pipelines.SwPipeline": 300,

# }

# DB_SETTINGS = {

# 'host': '127.0.0.1',

# 'port': 3306,

# 'user': 'root',

# 'password': '123456',

# 'db': 'scrapy_news_2024_01_08',

# 'charset': 'utf8mb4',

# }

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = "httpcache"

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = "scrapy.extensions.httpcache.FilesystemCacheStorage"

# Set settings whose default value is deprecated to a future-proof value

REQUEST_FINGERPRINTER_IMPLEMENTATION = "2.7"

TWISTED_REACTOR = "twisted.internet.asyncioreactor.AsyncioSelectorReactor"

FEED_EXPORT_ENCODING = "utf-8"

# REDIRECT_ENABLED = False
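With RANDOMIZE_DOWNLOAD_DELAY enabled, Scrapy's documentation describes the actual wait between requests as a random value between 0.5 and 1.5 times DOWNLOAD_DELAY. The sketch below only reproduces that arithmetic for the values in this file; `delay_bounds` is a hypothetical helper and not part of Scrapy.

```python
def delay_bounds(download_delay, randomize=True):
    """Return the (min, max) wait in seconds between two requests."""
    if not randomize:
        # Without randomization the delay is fixed.
        return (download_delay, download_delay)
    return (0.5 * download_delay, 1.5 * download_delay)


print(delay_bounds(3))  # (1.5, 4.5)
```

Note that a spider's `custom_settings` takes precedence over settings.py, so a spider that sets `DOWNLOAD_DELAY` to 0 there will not wait between requests at all.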

Scrapy spider script (for reference)

"""

Created on 2024/01/06 14:00 by Fxy

"""

import scrapy
from sw.items import SwItem
from datetime import datetime

# Scrapy signal-related imports
from scrapy.utils.project import get_project_settings
# The import below is deprecated, so it is not used
# from scrapy.xlib.pydispatch import dispatcher
from scrapy import signals
# Current approach: import dispatcher from pydispatch (the PyDispatcher package)
from pydispatch import dispatcher

from selenium import webdriver
from selenium.webdriver.support.ui import WebDriverWait

class NhcSpider(scrapy.Spider):
    '''
    Scrapy attributes
    '''
    # Spider name
    name = "1000_nhc"
    # Domains the spider is allowed to crawl
    allowed_domains = ["xxxx.cn"]
    # Start URL of the crawl
    start_urls = ["xxxx.shtml"]
    # Create a SwItem instance
    item = SwItem()
    custom_settings = {
        'LOG_LEVEL': 'INFO',
        'DOWNLOAD_DELAY': 0,
        'COOKIES_ENABLED': False,  # enabled by default
        'DOWNLOADER_MIDDLEWARES': {
            # The Selenium middleware
            'sw.middlewares.SeleniumMiddleware': 543,  # the number sets the middleware's order in the chain
            # Disable Scrapy's default user-agent middleware
            'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,
        }
    }

    '''
    Custom attributes
    '''
    # Organization name
    org = "xxxx数据"
    # Organization name in English
    org_e = "None"
    # Date formats
    site_date_format = '发布时间:\n \t%Y-%m-%d\n '  # date format used on the web page
    date_format = '%d.%m.%Y %H:%M:%S'  # target date format
    # Language of the site
    language_type = "zh2zh"  # Chinese-to-Chinese language code, used when calling the translation API
    # Request meta that routes a request through the Selenium middleware
    meta = {'usedSelenium': name, 'dont_redirect': True}

    # Initialize Chrome inside the spider so the browser becomes an attribute of the spider
    def __init__(self, timeout=40, isLoadImage=True, windowHeight=None, windowWidth=None):
        # Read the parameters from settings.py
        self.mySetting = get_project_settings()
        self.timeout = self.mySetting['SELENIUM_TIMEOUT']
        self.isLoadImage = self.mySetting['LOAD_IMAGE']
        self.windowHeight = self.mySetting['WINDOW_HEIGHT']
        self.windowWidth = self.mySetting['WINDOW_WIDTH']  # the key must match the name defined in settings.py
        # Build the Chrome options
        options = webdriver.ChromeOptions()
        options.add_experimental_option('useAutomationExtension', False)  # hide Selenium fingerprints
        options.add_experimental_option('excludeSwitches', ['enable-automation'])  # hide Selenium fingerprints
        options.add_argument('--ignore-certificate-errors')  # ignore certificate errors
        options.add_argument('--ignore-certificate-errors-spki-list')
        options.add_argument('--ignore-ssl-errors')  # ignore SSL errors
        # chrome_options = webdriver.ChromeOptions()
        # chrome_options.binary_location = "E:\\学校的一些资料\\文档\研二上\\chrome-win64\\chrome.exe"  # replace with the path to your specific Chrome build
        # 1. Create a Chrome or Firefox browser object; this opens a browser window on the machine
        # browser = webdriver.Chrome(executable_path ="E:\\chromedriver\\chromedriver", chrome_options=chrome_options)  # first argument: driver path; second: options pointing at the browser binary
        self.browser = webdriver.Chrome(options=options)  # Selenium 4 expects `options`; `chrome_options` is the deprecated name
        self.browser.execute_cdp_cmd("Page.addScriptToEvaluateOnNewDocument", {  # hide Selenium fingerprints
            "source": """
                Object.defineProperty(navigator, 'webdriver', {
                    get: () => undefined
                })
            """
        })
        if self.windowHeight and self.windowWidth:
            # Use the configured size rather than hard-coded values
            self.browser.set_window_size(self.windowWidth, self.windowHeight)
        self.browser.set_page_load_timeout(self.timeout)  # page-load timeout
        self.wait = WebDriverWait(self.browser, 30)  # timeout when waiting for a specific element
        super(NhcSpider, self).__init__()
        # Connect the signal: when spider_closed fires, call mySpiderCloseHandle to close Chrome
        dispatcher.connect(receiver=self.mySpiderCloseHandle,
                           signal=signals.spider_closed
                           )

    # Signal handler: close the Chrome browser
    def mySpiderCloseHandle(self, spider):
        print("mySpiderCloseHandle: enter ")
        self.browser.quit()

    def start_requests(self):
        yield scrapy.Request(url=self.start_urls[0],
                             meta=self.meta,
                             callback=self.parse,
                             # errback = self.error
                             )

    # Main entry point of the spider: collect all archive article links from the response
    def parse(self, response):
        # locale.setlocale(locale.LC_TIME, 'en_US')  # set the locale to English  //*[@id="538034"]/div
        achieve_links = response.xpath('//ul[@class="zxxx_list"]/li/a/@href').extract()
        print("achieve_links", achieve_links)
        for achieve_link in achieve_links:
            full_achieve_link = "http://xxxx.cn" + achieve_link  # the scheme needs two slashes
            print("full_achieve_link", full_achieve_link)
            # Follow each archive link
            yield scrapy.Request(full_achieve_link, callback=self.parse_item, dont_filter=True, meta=self.meta)

        # Pagination logic ("下一页" is the text of the site's "next page" link)
        xpath_expression = '//*[@id="page_div"]/div[@class="pagination_index"]/span/a[text()="下一页"]/@href'
        next_page = response.xpath(xpath_expression).extract_first()
        print("next_page = ", next_page)
        # Go to the next page
        if next_page is not None:
            # print(next_page)
            # print('next page')
            full_next_page = "http://xxxx/" + next_page
            print("full_next_page", full_next_page)
            meta_page = {'usedSelenium': self.name, "whether_wait_id": True}  # the pagination meta must differ from the article request meta
            yield scrapy.Request(full_next_page, callback=self.parse, dont_filter=True, meta=meta_page)

    # Extract the content of each article and store it in an item
    def parse_item(self, response):
        source_url = response.url
        title_o = response.xpath('//div[@class="tit"]/text()').extract_first().strip()
        # title_t = my_tools.get_trans(title_o, "de2zh")
        publish_time = response.xpath('//div[@class="source"]/span[1]/text()').extract_first()
        date_object = datetime.strptime(publish_time, self.site_date_format)  # parse with the site's date format first
        date_object = date_object.strftime(self.date_format)  # convert to a string in the target format
        publish_time = datetime.strptime(date_object, self.date_format)  # parse the formatted string back into a datetime
        content_o = [content.strip() for content in response.xpath('//div[@id="xw_box"]//text()').extract()]
        # content_o = ' '.join(content_o)  # content_o is a list of strings, so it would need joining into one string
        # content_t = my_tools.get_trans(content_o, "de2zh")
        print("source_url:", source_url)
        print("title_o:", title_o)
        # print("title_t:", title_t)
        print("publish_time:", publish_time)  # 15.01.2008
        print("content_o:", content_o)
        # print("content_t:", content_t)
        print("-" * 50)
        page_data = {
            'source_url': source_url,
            'title_o': title_o,
            # 'title_t' : title_t,
            'publish_time': publish_time,
            'content_o': content_o,
            # 'content_t': content_t,
            'org': self.org,
            'org_e': self.org_e,
        }
        # Build a fresh item for each page so that items already yielded are not overwritten
        item = SwItem()
        item['url'] = page_data['source_url']
        item['title'] = page_data['title_o']
        # item['title_t'] = page_data['title_t']
        item['time'] = page_data['publish_time']
        item['content'] = page_data['content_o']
        # item['content_t'] = page_data['content_t']
        # Get the current time
        current_time = datetime.now()
        # Format it as a string
        formatted_time = current_time.strftime(self.date_format)
        # Convert the string back into a datetime object (this drops the microseconds)
        datetime_object = datetime.strptime(formatted_time, self.date_format)
        item['scrapy_time'] = datetime_object
        item['org'] = page_data['org']
        item['trans_org'] = page_data['org_e']
        yield item
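Two steps in the spider are easy to get wrong: building absolute URLs by string concatenation, and parsing a date whose surrounding whitespace has to match the format string exactly. Below is a sketch of more forgiving versions that uses only the standard library. `absolute_url` and `parse_publish_date` are hypothetical helpers, and the example URL and text are made up for illustration.

```python
import re
from datetime import datetime
from urllib.parse import urljoin


def absolute_url(page_url, href):
    """Resolve a possibly relative href against the URL of the page it came from."""
    return urljoin(page_url, href)


def parse_publish_date(raw_text):
    """Find the first YYYY-MM-DD date in raw_text and return it as a datetime."""
    match = re.search(r"\d{4}-\d{2}-\d{2}", raw_text)
    if match is None:
        # No date found; let the caller decide how to handle it.
        return None
    return datetime.strptime(match.group(0), "%Y-%m-%d")


print(absolute_url("http://example.com/news/list.shtml", "../item/1.shtml"))
print(parse_publish_date("发布时间:\n \t2024-01-06\n "))
```

Inside a Scrapy callback, `response.urljoin(href)` performs the same resolution against `response.url`.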

Middleware: middlewares.py

# Define here the models for your spider middleware
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/spider-middleware.html

from scrapy import signals

# useful for handling different item types with a single interface
from itemadapter import is_item, ItemAdapter


class SwSpiderMiddleware:
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the spider middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_spider_input(self, response, spider):
        # Called for each response that goes through the spider
        # middleware and into the spider.

        # Should return None or raise an exception.
        return None

    def process_spider_output(self, response, result, spider):
        # Called with the results returned from the Spider, after
        # it has processed the response.

        # Must return an iterable of Request, or item objects.
        for i in result:
            yield i

    def process_spider_exception(self, response, exception, spider):
        # Called when a spider or process_spider_input() method
        # (from other spider middleware) raises an exception.

        # Should return either None or an iterable of Request or item objects.
        pass

    def process_start_requests(self, start_requests, spider):
        # Called with the start requests of the spider, and works
        # similarly to the process_spider_output() method, except
        # that it doesn't have a response associated.

        # Must return only requests (not items).
        for r in start_requests:
            yield r

    def spider_opened(self, spider):
        spider.logger.info("Spider opened: %s" % spider.name)

class SwDownloaderMiddleware:
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the downloader middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_request(self, request, spider):
        # Called for each request that goes through the downloader
        # middleware.

        # Must either:
        # - return None: continue processing this request
        # - or return a Response object
        # - or return a Request object
        # - or raise IgnoreRequest: process_exception() methods of
        #   installed downloader middleware will be called
        return None

    def process_response(self, request, response, spider):
        # Called with the response returned from the downloader.

        # Must either;
        # - return a Response object
        # - return a Request object
        # - or raise IgnoreRequest
        return response

    def process_exception(self, request, exception, spider):
        # Called when a download handler or a process_request()
        # (from other downloader middleware) raises an exception.

        # Must either:
        # - return None: continue processing this exception
        # - return a Response object: stops process_exception() chain
        # - return a Request object: stops process_exception() chain
        pass

    def spider_opened(self, spider):
        spider.logger.info("Spider opened: %s" % spider.name)

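# -*- coding: utf-8 -*- Selenium-based middleware
from selenium import webdriver
from selenium.common.exceptions import TimeoutException
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.common.keys import Keys
from scrapy.http import HtmlResponse
from logging import getLogger
import time


class SeleniumMiddleware():
    # Maximum number of retries after a timeout (an assumed value; the original code did not define one)
    max_retries = 3

    # The middleware receives the spider object, from which the Chrome objects created in __init__ can be accessed
    def process_request(self, request, spider):
        '''
        Fetch the page with Chrome
        :param request: the Request object
        :param spider: the Spider object
        :return: an HtmlResponse
        '''
        print(f"chrome is getting page = {request.url}")
        # The flag in meta decides whether Selenium is used for this request
        usedSelenium = request.meta.get('usedSelenium', None)  # read usedSelenium from request.meta; defaults to None if absent
        # print("Reached the middleware?")
        if usedSelenium == "1000_nhc":
            try:
                spider.browser.get(request.url)
                time.sleep(4)
                if request.meta.get('whether_wait_id', False):  # read whether_wait_id from request.meta; defaults to False if absent
                    print("Waiting for the pagination element to appear...")
                    # Use WebDriverWait to wait for the page to finish loading
                    wait = WebDriverWait(spider.browser, 20)  # maximum wait time: 20 seconds
                    # Example: wait for a specific element on the page; adjust to your case
                    wait.until(EC.presence_of_element_located((By.ID, "page_div")))  # continue only once the pagination element is present
            except TimeoutException:  # the element never appeared, so retry the request
                print("Timeout waiting for element. Retrying the request.")
                return self.retry_request(request, spider)
            except Exception as e:
                print(f"chrome getting page error, Exception = {e}")
                return HtmlResponse(url=request.url, status=500, request=request)
            else:
                time.sleep(4)
                # The page was fetched successfully: build a successful response (HtmlResponse is a subclass of Response)
                return HtmlResponse(url=request.url,
                                    body=spider.browser.page_source,
                                    request=request,
                                    # ideally chosen to match the page's actual encoding
                                    encoding='utf-8',
                                    status=200)

    def retry_request(self, request, spider):
        # The original code called this method without defining it. This version
        # reschedules a copy of the request and gives up after max_retries attempts.
        retries = request.meta.get('selenium_retries', 0)
        if retries >= self.max_retries:
            return HtmlResponse(url=request.url, status=500, request=request)
        retry = request.replace(dont_filter=True)  # bypass the duplicate filter
        retry.meta['selenium_retries'] = retries + 1
        return retry  # returning a Request makes Scrapy reschedule it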

        # Earlier example, kept for reference: type a query into a search box and wait for the results
        # try:
        #     spider.browser.get(request.url)
        #     # Wait for the search box to appear
        #     input = spider.wait.until(
        #         EC.presence_of_element_located((By.XPATH, "//div[@class='nav-search-field ']/input"))
        #     )
        #     time.sleep(2)
        #     input.clear()
        #     input.send_keys("iphone 7s")
        #     # Press Enter to run the search
        #     input.send_keys(Keys.RETURN)
        #     # Wait for the search results to appear
        #     searchRes = spider.wait.until(
        #         EC.presence_of_element_located((By.XPATH, "//div[@id='resultsCol']"))
        #     )
        # except Exception as e:
        #     print(f"chrome getting page error, Exception = {e}")
        #     return HtmlResponse(url=request.url, status=500, request=request)
        # else:
        #     time.sleep(3)
        #     # The page was fetched successfully: build a successful response (HtmlResponse is a subclass of Response)
        #     return HtmlResponse(url=request.url,
        #                         body=spider.browser.page_source,
        #                         request=request,
        #                         # ideally chosen to match the page's actual encoding
        #                         encoding='utf-8',
        #                         status=200)
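The fixed `time.sleep(4)` calls in the middleware always cost four seconds, whereas an explicit wait returns as soon as its condition holds. The idea behind such a wait can be sketched in plain Python. This illustrates the polling pattern only and is not Selenium's implementation; `wait_until` is a hypothetical helper.

```python
import time


def wait_until(condition, timeout=20.0, poll_interval=0.5):
    """Poll condition() until it returns a truthy value or timeout seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)


# Usage: the condition becomes true on the third poll.
calls = {"count": 0}


def ready():
    calls["count"] += 1
    return calls["count"] >= 3


print(wait_until(ready, timeout=2.0, poll_interval=0.01))  # True
```

In the middleware, `WebDriverWait(...).until(...)` plays this role, with a `TimeoutException` raised when the element never appears.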

Appendix: Selenium tutorials

Reference 1, how to wait for a specific element in Selenium: https://selenium-python-zh.readthedocs.io/en/latest/waits.html

Reference 2, Selenium usage in detail: https://pythondjango.cn/python/tools/7-python_selenium/#%E5%85%83%E7%B4%A0%E5%AE%9A%E4%BD%8D%E6%96%B9%E6%B3%95

Reference 3, a worked example by another author: https://blog.csdn.net/zwq912318834/article/details/79773870
