The conclusion first: the table schema cached in the Spark SQL catalog is generally not refreshed automatically.

The experiment is as follows:

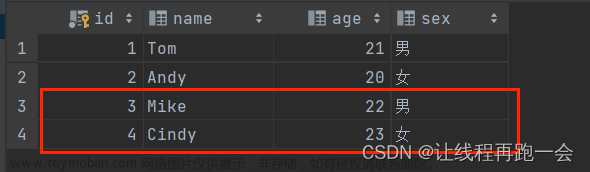

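- In PostgreSQL, create a table `t1` with a single column, `c1 int`.

- In Spark SQL, register the table and query it:

  - `./bin/spark-sql --driver-class-path postgresql-42.7.1.jar --jars postgresql-42.7.1.jar`

  - `spark-sql (default)> create table c1v using org.apache.spark.sql.jdbc options (url "jdbc:postgresql://localhost:5432/postgres", dbtable "public.t1", user 'postgres', password 'postgres');`

  - `spark-sql (default)> select * from c1v;`

  - Result: one row.

- In PostgreSQL, add a column `c2` to `t1` and insert the row `2,2`.

- In Spark SQL, query the table again:

  - `spark-sql (default)> select * from c1v;`

  - Result: two rows, but the `c2` column is missing.

- In PostgreSQL, drop the `c1` column.

- In Spark SQL, query the table once more:

  - `spark-sql (default)> select * from c1v;`

  - Result: an error saying that column `c1` does not exist.

- The PostgreSQL query log also shows that Spark kept sending `SELECT "c1" FROM public.wkt` the whole time (the logs refer to the table as `wkt` rather than `t1`). In other words, Spark was unaware of any of the schema changes above.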
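
The three stages of the experiment can be mimicked without Spark or PostgreSQL. The sketch below is only a model of the observed behavior, under several assumptions: an in-memory SQLite database stands in for PostgreSQL, the `CachedTable` class is a hypothetical stand-in for the catalog entry (it is not a Spark API), identifiers are left unquoted, and the column is "dropped" by rebuilding the table so that the script does not depend on a recent SQLite version.

```python
import sqlite3


class CachedTable:
    """Hypothetical stand-in for a catalog entry: the column list is read once."""

    def __init__(self, conn, table):
        self.conn = conn
        self.table = table
        # Snapshot the schema at registration time (column name is field 1).
        self.columns = [row[1] for row in conn.execute(f"PRAGMA table_info({table})")]

    def select_all(self):
        # "SELECT *" is expanded from the cached column list, not the live table.
        sql = f"SELECT {', '.join(self.columns)} FROM {self.table}"
        return self.conn.execute(sql).fetchall()


def run_experiment():
    db = sqlite3.connect(":memory:")  # plays the role of PostgreSQL
    db.execute("CREATE TABLE t1 (c1 INT)")
    db.execute("INSERT INTO t1 VALUES (1)")

    c1v = CachedTable(db, "t1")       # schema is captured here
    results = [c1v.select_all()]      # stage 1: one row

    db.execute("ALTER TABLE t1 ADD COLUMN c2 INT")
    db.execute("INSERT INTO t1 VALUES (2, 2)")
    results.append(c1v.select_all())  # stage 2: two rows, c2 not visible

    # Stage 3: remove c1 by rebuilding the table with only c2.
    db.execute("CREATE TABLE t1_new AS SELECT c2 FROM t1")
    db.execute("DROP TABLE t1")
    db.execute("ALTER TABLE t1_new RENAME TO t1")
    try:
        c1v.select_all()
    except sqlite3.OperationalError as exc:
        results.append(str(exc))      # the database rejects the stale column
    return results


if __name__ == "__main__":
    for step in run_experiment():
        print(step)
```

As in the real experiment, the stale column list goes unnoticed as long as the cached columns still exist, and the mismatch only surfaces as a database-side error once one of them is gone.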

Spark reports the following error:

    spark-sql (default)> select * from c1v;
    24/01/09 20:18:04 ERROR Executor: Exception in task 0.0 in stage 5.0 (TID 5)
    org.postgresql.util.PSQLException: ERROR: column "c1" does not exist
      Hint: Perhaps you meant to reference the column "wkt.c2".
      Position: 8
        at org.postgresql.core.v3.QueryExecutorImpl.receiveErrorResponse(QueryExecutorImpl.java:2712)
        at org.postgresql.core.v3.QueryExecutorImpl.processResults(QueryExecutorImpl.java:2400)
        at org.postgresql.core.v3.QueryExecutorImpl.execute(QueryExecutorImpl.java:367)
        at org.postgresql.jdbc.PgStatement.executeInternal(PgStatement.java:498)
        at org.postgresql.jdbc.PgStatement.execute(PgStatement.java:415)
        at org.postgresql.jdbc.PgPreparedStatement.executeWithFlags(PgPreparedStatement.java:190)
        at org.postgresql.jdbc.PgPreparedStatement.executeQuery(PgPreparedStatement.java:134)
        at org.apache.spark.sql.execution.datasources.jdbc.JDBCRDD.compute(JDBCRDD.scala:320)
        at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:364)
        at org.apache.spark.rdd.RDD.iterator(RDD.scala:328)
        at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
        at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:364)
        at org.apache.spark.rdd.RDD.iterator(RDD.scala:328)
        at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
        at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:364)
        at org.apache.spark.rdd.RDD.iterator(RDD.scala:328)
        at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:92)
        at org.apache.spark.TaskContext.runTaskWithListeners(TaskContext.scala:161)
        at org.apache.spark.scheduler.Task.run(Task.scala:139)
        at org.apache.spark.executor.Executor$TaskRunner.$anonfun$run$3(Executor.scala:554)
        at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1529)
        at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:557)
        at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1128)
        at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:628)
        at java.base/java.lang.Thread.run(Thread.java:829)

    24/01/09 20:18:04 WARN TaskSetManager: Lost task 0.0 in stage 5.0 (TID 5) (172.18.203.110 executor driver): org.postgresql.util.PSQLException: ERROR: column "c1" does not exist
      Hint: Perhaps you meant to reference the column "wkt.c2".
      Position: 8
        ... (stack trace identical to the first one above)
    24/01/09 20:18:04 ERROR TaskSetManager: Task 0 in stage 5.0 failed 1 times; aborting job
    Job aborted due to stage failure: Task 0 in stage 5.0 failed 1 times, most recent failure: Lost task 0.0 in stage 5.0 (TID 5) (172.18.203.110 executor driver): org.postgresql.util.PSQLException: ERROR: column "c1" does not exist
      Hint: Perhaps you meant to reference the column "wkt.c2".
      Position: 8
        ... (stack trace identical to the first one above)
    Driver stacktrace:
    org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 5.0 failed 1 times, most recent failure: Lost task 0.0 in stage 5.0 (TID 5) (172.18.203.110 executor driver): org.postgresql.util.PSQLException: ERROR: column "c1" does not exist
      Hint: Perhaps you meant to reference the column "wkt.c2".
      Position: 8
        ... (stack trace identical to the first one above)

    Driver stacktrace:
        at org.apache.spark.scheduler.DAGScheduler.failJobAndIndependentStages(DAGScheduler.scala:2785)
        at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2(DAGScheduler.scala:2721)
        at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2$adapted(DAGScheduler.scala:2720)
        at scala.collection.immutable.List.foreach(List.scala:333)
        at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:2720)
        at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1(DAGScheduler.scala:1206)
        at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1$adapted(DAGScheduler.scala:1206)
        at scala.Option.foreach(Option.scala:437)
        at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:1206)
        at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:2984)
        at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2923)
        at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2912)
        at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:49)
        at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:971)
        at org.apache.spark.SparkContext.runJob(SparkContext.scala:2263)
        at org.apache.spark.SparkContext.runJob(SparkContext.scala:2284)
        at org.apache.spark.SparkContext.runJob(SparkContext.scala:2303)
        at org.apache.spark.SparkContext.runJob(SparkContext.scala:2328)
        at org.apache.spark.rdd.RDD.$anonfun$collect$1(RDD.scala:1019)
        at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)
        at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:112)
        at org.apache.spark.rdd.RDD.withScope(RDD.scala:405)
        at org.apache.spark.rdd.RDD.collect(RDD.scala:1018)
        at org.apache.spark.sql.execution.SparkPlan.executeCollect(SparkPlan.scala:448)
        at org.apache.spark.sql.execution.SparkPlan.executeCollectPublic(SparkPlan.scala:475)
        at org.apache.spark.sql.execution.HiveResult$.hiveResultString(HiveResult.scala:76)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLDriver.$anonfun$run$2(SparkSQLDriver.scala:69)
        at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$6(SQLExecution.scala:118)
        at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:195)
        at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:103)
        at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:827)
        at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:65)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLDriver.run(SparkSQLDriver.scala:69)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processCmd(SparkSQLCLIDriver.scala:415)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1(SparkSQLCLIDriver.scala:533)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1$adapted(SparkSQLCLIDriver.scala:527)
        at scala.collection.IterableOnceOps.foreach(IterableOnce.scala:563)
        at scala.collection.IterableOnceOps.foreach$(IterableOnce.scala:561)
        at scala.collection.AbstractIterable.foreach(Iterable.scala:926)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processLine(SparkSQLCLIDriver.scala:527)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver$.main(SparkSQLCLIDriver.scala:307)
        at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.main(SparkSQLCLIDriver.scala)
        at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
        at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
        at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
        at java.base/java.lang.reflect.Method.invoke(Method.java:566)
        at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)
        at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:1020)
        at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:192)
        at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:215)
        at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:91)
        at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1111)
        at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1120)
        at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

    Caused by: org.postgresql.util.PSQLException: ERROR: column "c1" does not exist
      Hint: Perhaps you meant to reference the column "wkt.c2".
      Position: 8
        ... (stack trace identical to the first one above)

Looking more closely at the Spark SQL error, the failure is raised at `org.apache.spark.sql.execution.datasources.jdbc.JDBCRDD.compute(JDBCRDD.scala:320)`. The code there shows that Spark assembles the SQL statement directly from the table schema cached in the catalog and sends it to PostgreSQL. Only when PostgreSQL actually executes the statement is it discovered that the `c1` column no longer exists.

    var builder = dialect
      .getJdbcSQLQueryBuilder(options)
      .withColumns(columns)
      .withPredicates(predicates, part)
      .withSortOrders(sortOrders)
      .withLimit(limit)
      .withOffset(offset)

    groupByColumns.foreach { groupByKeys =>
      builder = builder.withGroupByColumns(groupByKeys)
    }

    sample.foreach { tableSampleInfo =>
      builder = builder.withTableSample(tableSampleInfo)
    }

    val sqlText = builder.build()
    stmt = conn.prepareStatement(sqlText,
      ResultSet.TYPE_FORWARD_ONLY, ResultSet.CONCUR_READ_ONLY)
    stmt.setFetchSize(options.fetchSize)
    stmt.setQueryTimeout(options.queryTimeout)
    rs = stmt.executeQuery()
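
To make the point of the excerpt concrete, here is a minimal sketch of a query builder that, like the code above, turns a column list supplied by the caller into SQL text. `ToyQueryBuilder` and its methods are hypothetical and heavily simplified; they are not Spark's `JdbcSQLQueryBuilder`, and the double-quote identifier quoting is an assumption chosen to match the statement seen in the PostgreSQL query log.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class ToyQueryBuilder:
    """Hypothetical, simplified builder; not Spark's JdbcSQLQueryBuilder."""

    table: str
    columns: Tuple[str, ...] = ()
    predicates: Tuple[str, ...] = ()
    limit: Optional[int] = None

    def with_columns(self, columns):
        return replace(self, columns=tuple(columns))

    def with_predicates(self, predicates):
        return replace(self, predicates=tuple(predicates))

    def with_limit(self, limit):
        return replace(self, limit=limit)

    def build(self):
        # Only the column names handed in by the caller are used; the remote
        # database is never asked whether they still exist.
        # Falls back to a constant when no columns are requested.
        cols = ", ".join(f'"{c}"' for c in self.columns) or "1"
        sql = f"SELECT {cols} FROM {self.table}"
        if self.predicates:
            sql += " WHERE " + " AND ".join(f"({p})" for p in self.predicates)
        if self.limit is not None:
            sql += f" LIMIT {self.limit}"
        return sql


cached_columns = ["c1"]  # schema captured when the table was registered
sql_text = ToyQueryBuilder("public.wkt").with_columns(cached_columns).build()
print(sql_text)  # SELECT "c1" FROM public.wkt
```

Because the builder never consults the database, the generated text stays the same however the remote table changes, which is consistent with the unchanged statement observed in the query log.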