@ARTICLE{10105495,
  author={Li, Hui and Xu, Tianyang and Wu, Xiao-Jun and Lu, Jiwen and Kittler, Josef},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  title={LRRNet: A Novel Representation Learning Guided Fusion Network for Infrared and Visible Images},
  year={2023},
  volume={45},
  number={9},
  pages={11040-11052},
  doi={10.1109/TPAMI.2023.3268209}
}

Paper tier: SCI A1

Impact factor: 23.6

📖[Paper download link]

💽[Code download link]

📖Paper walkthrough

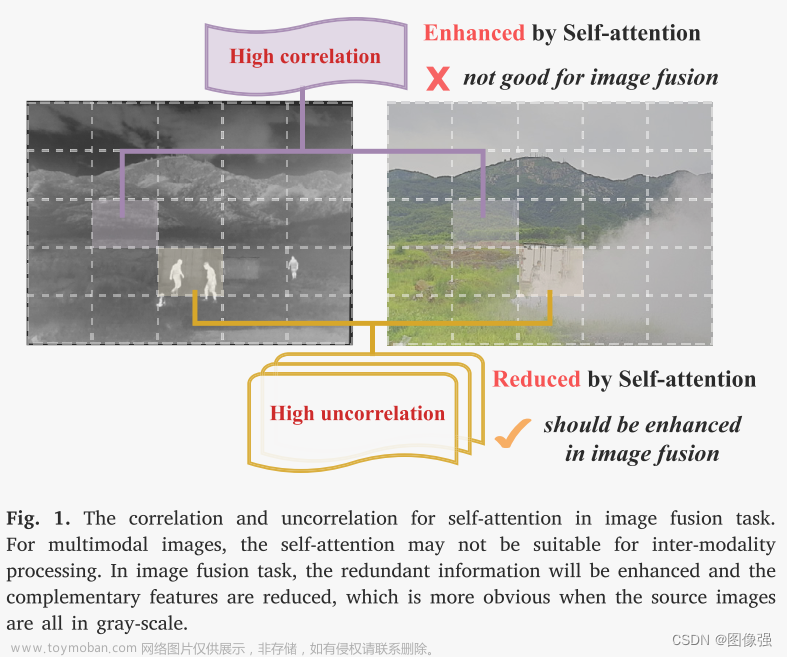

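The authors build an 【end-to-end】, 【lightweight】 fusion network whose construction avoids the time-consuming, trial-and-error process of designing a network architecture empirically. Specifically, they apply a 【learnable representation approach】 to the fusion task: the network architecture is derived under the guidance of the optimisation algorithm that produces the learnable model. 【Low-rank representation】 (【LRR】) is the foundation of the method.

The authors also propose a new detail-to-semantic information loss function.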
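For background, the classical low-rank representation objective can be written as below. This is the textbook LRR formulation, given here only as an assumed starting point; the learnable variant used in LRRNet may differ in its terms and constraints, so the paper remains the reference for the exact objective. The symbol names are illustrative: X is the data matrix, D a dictionary, Z the low-rank coefficient matrix, E a sparse error term, and λ > 0 a trade-off weight.

```latex
\min_{Z,\,E} \; \|Z\|_{*} + \lambda \|E\|_{2,1}
\quad \text{s.t.} \quad X = DZ + E
```

The nuclear norm \|Z\|_{*} (the sum of singular values) is the usual convex surrogate for rank, and \|E\|_{2,1} sums the ℓ2 norms of the columns of E, which encourages column-wise sparsity.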

🔑Keywords

image fusion, network architecture, optimal model, infrared image, visible image.

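💭Core idea

I did not fully understand this paper. It feels somewhat similar to CDDFuse: both extract two different kinds of features from each source image, concatenate the features of the same kind across the source images, fuse them, and then reconstruct the fused image. The main innovation here is probably the LLRR-Blocks: with them, there is no need to hand-design a complex network structure, because the authors formulate the problem mathematically. (My understanding is still shallow.)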
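The "split each source into two parts, combine like with like, then reconstruct" flow described above can be sketched with a toy NumPy example. This is not the LRRNet architecture: a truncated SVD stands in for the learned LLRR-Blocks, and the fusion rules (average the low-rank bases, keep the stronger detail) are assumptions chosen only for illustration. The function names `split_low_rank` and `toy_fuse` are hypothetical.

```python
import numpy as np


def split_low_rank(img: np.ndarray, rank: int):
    """Split an image into a rank-`rank` base (truncated SVD) and the residual detail."""
    u, s, vt = np.linalg.svd(img, full_matrices=False)
    base = (u[:, :rank] * s[:rank]) @ vt[:rank]
    return base, img - base


def toy_fuse(ir: np.ndarray, vis: np.ndarray, rank: int = 4) -> np.ndarray:
    """Toy fusion (assumed rules): average the bases, keep the stronger detail, recombine."""
    ir_base, ir_detail = split_low_rank(ir, rank)
    vis_base, vis_detail = split_low_rank(vis, rank)
    # Combine parts of the same kind across the two sources.
    fused_base = 0.5 * (ir_base + vis_base)
    fused_detail = np.where(
        np.abs(ir_detail) >= np.abs(vis_detail), ir_detail, vis_detail
    )
    # Reconstruct the fused image from the two fused parts.
    return fused_base + fused_detail


# Random arrays stand in for a registered infrared / visible image pair.
rng = np.random.default_rng(0)
ir = rng.random((32, 32))
vis = rng.random((32, 32))
fused = toy_fuse(ir, vis)
```

In the paper, the decomposition and the fusion are learned end to end rather than fixed as here; the sketch only shows the data flow.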

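I will come back to this later.

To be updated…

Reference links

[What is image fusion? (An easy-to-follow introduction)]

🪢Network architecture

The network architecture proposed by the authors is shown below.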


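📉Loss function

🔢Datasets

- Train: KAIST

- Test: TNO, VOT2020-RGBT

Image fusion dataset links

[A collection of commonly used image fusion datasets]

🎢Training settings

🔬Experiments

📏Evaluation metrics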

- EN

- SD

- SSIMm

- MI

- VIFm

- Nabf
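Three of the metrics above (EN, SD, MI) have simple histogram-based definitions, sketched below with NumPy. This is a minimal sketch assuming 8-bit grayscale inputs; the binning and normalisation used in the paper's evaluation code may differ, and the function names are illustrative. SSIMm, VIFm and Nabf are more involved and are not covered here.

```python
import numpy as np


def entropy(img: np.ndarray, bins: int = 256) -> float:
    """EN: Shannon entropy (in bits) of the grey-level histogram."""
    hist, _ = np.histogram(img, bins=bins, range=(0, 256))
    p = hist / hist.sum()
    p = p[p > 0]  # skip empty bins so log2 is defined
    return float(-(p * np.log2(p)).sum())


def standard_deviation(img: np.ndarray) -> float:
    """SD: standard deviation of the pixel intensities."""
    return float(np.std(img.astype(np.float64)))


def mutual_information(a: np.ndarray, b: np.ndarray, bins: int = 256) -> float:
    """MI: mutual information (in bits) between two images via their joint histogram."""
    joint, _, _ = np.histogram2d(
        a.ravel(), b.ravel(), bins=bins, range=[[0, 256], [0, 256]]
    )
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)  # marginal of a, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)  # marginal of b, shape (1, bins)
    nz = pxy > 0
    return float((pxy[nz] * np.log2(pxy[nz] / (px @ py)[nz])).sum())


# Tiny example: half the pixels are 0, half are 255.
half = np.zeros((4, 4), dtype=np.uint8)
half[:, 2:] = 255
flat = np.full((4, 4), 7, dtype=np.uint8)
```

For fusion, MI is commonly reported as the sum of `mutual_information(fused, ir)` and `mutual_information(fused, vis)`; whether a given benchmark does so should be checked against its evaluation script.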

References

[An analysis of quantitative metrics for image fusion]

🥅Baseline

- DenseFuse, FusionGAN, IFCNN, CUNet, RFN-Nest, Res2Fusion, YDTR, SwinFusion, U2Fusion

✨✨✨References

✨✨✨Highly recommended blog post: [Image fusion paper baselines and their network models]✨✨✨

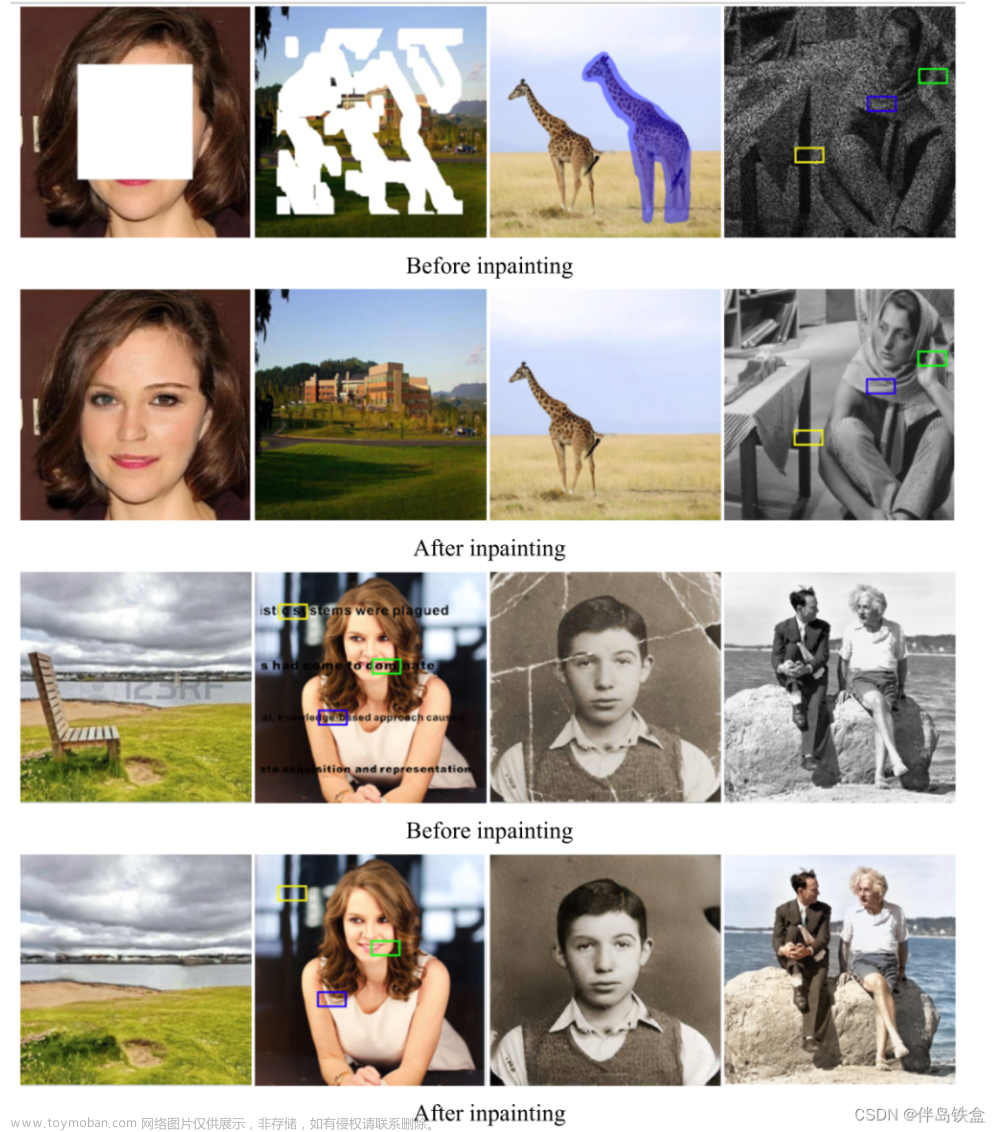

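🔬Experimental results

More experimental results and analysis can be found in the original paper:

📖[Paper download link]

💽[Code download link]

🚀Quick links

📑Reading notes on image fusion papers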

📑[(DeFusion)Fusion from decomposition: A self-supervised decomposition approach for image fusion]

📑[ReCoNet: Recurrent Correction Network for Fast and Efficient Multi-modality Image Fusion]

📑[RFN-Nest: An end-to-end residual fusion network for infrared and visible images]

📑[SwinFuse: A Residual Swin Transformer Fusion Network for Infrared and Visible Images]

📑[SwinFusion: Cross-domain Long-range Learning for General Image Fusion via Swin Transformer]

📑[(MFEIF)Learning a Deep Multi-Scale Feature Ensemble and an Edge-Attention Guidance for Image Fusion]

📑[DenseFuse: A fusion approach to infrared and visible images]

📑[DeepFuse: A Deep Unsupervised Approach for Exposure Fusion with Extreme Exposure Image Pair]

📑[GANMcC: A Generative Adversarial Network With Multiclassification Constraints for IVIF]

📑[DIDFuse: Deep Image Decomposition for Infrared and Visible Image Fusion]

📑[IFCNN: A general image fusion framework based on convolutional neural network]

📑[(PMGI) Rethinking the image fusion: A fast unified image fusion network based on proportional maintenance of gradient and intensity]

📑[SDNet: A Versatile Squeeze-and-Decomposition Network for Real-Time Image Fusion]

📑[DDcGAN: A Dual-Discriminator Conditional Generative Adversarial Network for Multi-Resolution Image Fusion]

📑[FusionGAN: A generative adversarial network for infrared and visible image fusion]

📑[PIAFusion: A progressive infrared and visible image fusion network based on illumination aw]

📑[CDDFuse: Correlation-Driven Dual-Branch Feature Decomposition for Multi-Modality Image Fusion]

📑[U2Fusion: A Unified Unsupervised Image Fusion Network]

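📑Survey: [Visible and Infrared Image Fusion Using Deep Learning]

📚Summary of image fusion baselines

📚[Image fusion paper baselines and their network models]

📑Other papers

📑[3D object detection survey: Multi-Modal 3D Object Detection in Autonomous Driving: A Survey]

🎈Other summaries

🎈[CVPR 2023 and ICCV 2023 paper titles with word-frequency statistics]

✨Featured posts

✨[The most complete collection of image fusion papers and code]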

✨[A collection of commonly used image fusion datasets]

If you have questions, contact: 420269520@qq.com

Writing these notes takes effort. 【Follow, bookmark, like】 is what keeps me updating. Wishing you all early papers and a smooth graduation~
