A First Look at Pandas

Level 1: Scrape a table from a web page

import requests
from bs4 import BeautifulSoup

# Code begins
response = requests.get("https://tjj.hunan.gov.cn/hntj/tjfx/tjgb/pcgbv/202105/t20210519_19079329.html")
response.encoding = 'utf-8'
soup = BeautifulSoup(response.text, "html.parser")
bg = soup.find('table')  # first <table> element on the page
# Code ends
print(bg)

Level 2: Scrape specific cells from the table

import requests
from bs4 import BeautifulSoup

url = "https://tjj.hunan.gov.cn/hntj/tjfx/tjgb/pcgbv/202105/t20210519_19079329.html"
r = requests.get(url)
r.encoding = 'utf-8'
soup = BeautifulSoup(r.text, "html.parser")
bg = soup.find('table')
# Code begins
alltr = bg.find_all('tr')
for index, i in enumerate(alltr, 1):  # enumerate gives a 1-based row index
    if index >= 4:  # start printing from the fourth row
        allspan = i.find_all('span')
        for count, j in enumerate(allspan, 1):
            print(j.text, end=" ")
        print()  # newline once the inner loop has finished
# Code ends

Level 3: Save the cell data to a list and sort it

import requests
from bs4 import BeautifulSoup

url = "https://tjj.hunan.gov.cn/hntj/tjfx/tjgb/pcgbv/202105/t20210519_19079329.html"
r = requests.get(url)
r.encoding = 'utf-8'
soup = BeautifulSoup(r.text, "html.parser")
bg = soup.find('table')
lb = []
# Code begins
name_num = {}
alltr = bg.find_all('tr')
for index, i in enumerate(alltr, 1):  # enumerate gives a 1-based row index
    if index >= 4:  # data rows start at the fourth row
        allspan = i.find_all('span')
        name = allspan[0].text
        num = allspan[1].text
        name_num[name] = int(num)
# Sort by the number, largest first; equal numbers keep their order of appearance
lb = [[k, v] for k, v in sorted(name_num.items(), key=lambda item: item[1], reverse=True)]
# Code ends
for lbxx in lb:
    print(lbxx[0], lbxx[1])

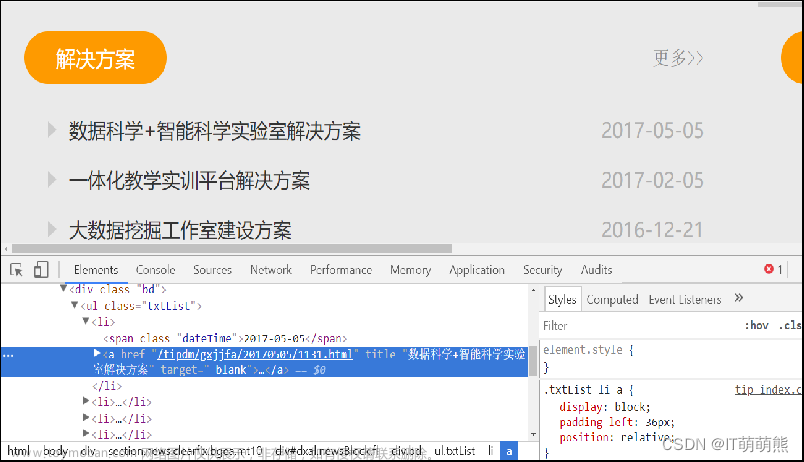
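The sorting step in Level 3 can be tried offline without fetching the page. The following is a minimal sketch that assumes a few made-up (name, number) rows in place of the scraped `<span>` texts; the names `rows` and `sort_by_count` are illustrative and do not come from the exercise.

```python
def sort_by_count(rows):
    """Return [name, count] pairs sorted by count, largest first."""
    # int() tolerates surrounding whitespace, which scraped text often has
    name_num = {name: int(num) for name, num in rows}
    ordered = sorted(name_num.items(), key=lambda item: item[1], reverse=True)
    return [[k, v] for k, v in ordered]


# Made-up sample rows standing in for the scraped cell texts
rows = [("A", "120"), ("B", " 450 "), ("C", "120"), ("D", "300")]
result = sort_by_count(rows)
for name, count in result:
    print(name, count)
```

Because Python's sort is stable even with `reverse=True`, rows with equal numbers (here A and C) stay in the order in which they appeared.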

Level 4: Scrape information from div tags

import requests
from bs4 import BeautifulSoup

url = 'https://www.hnu.edu.cn/xysh/xshd.htm'
r = requests.get(url)
r.encoding = 'utf-8'
# Code begins
soup = BeautifulSoup(r.text, 'html.parser')
jzsj = soup.find('div', class_='xinwen-sj-top').string.strip()
# attrs must be a dict mapping attribute name to value, not a set
jzbt = soup.find('div', attrs={'class': 'xinwen-wen-bt'}).string.strip()
jzdd = soup.find('div', attrs={'class': 'xinwen-wen-zy'}).text.strip()
# Code ends
f1 = open("jzxx.txt", "w")
f1.write(jzsj + "\n")
f1.write(jzbt + "\n")
f1.write(jzdd + "\n")
f1.close()

Level 5: Scrape multiple div tags on a single page

import requests
from bs4 import BeautifulSoup

url = 'https://www.hnu.edu.cn/xysh/xshd.htm'
r = requests.get(url)
r.encoding = 'utf-8'
jzxx = []
# Code begins
# Code ends
f1 = open("jzxx2.txt", "w")
for xx in jzxx:
    f1.write(",".join(xx) + "\n")
f1.close()
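The source leaves the Level 5 answer area empty. As a rough sketch only, and not the original author's solution, the snippet below shows one way to fill `jzxx`. It assumes every entry on the page uses the same three class names seen in Level 4 and that the three kinds of div appear equally often and in matching order. The HTML in `SAMPLE_HTML` is made up so the code runs offline, and `extract_entries` is a hypothetical helper name.

```python
from bs4 import BeautifulSoup

# Made-up HTML that mimics the assumed structure of the listing page
SAMPLE_HTML = """
<div class="xinwen-sj-top"> 2023-10-01 </div>
<div class="xinwen-wen-bt">Lecture A</div>
<div class="xinwen-wen-zy">Room 101</div>
<div class="xinwen-sj-top">2023-10-08</div>
<div class="xinwen-wen-bt">Lecture B</div>
<div class="xinwen-wen-zy">Room 202</div>
"""


def extract_entries(html):
    """Collect one [first, second, third] text triple per entry."""
    soup = BeautifulSoup(html, "html.parser")
    tops = soup.find_all("div", class_="xinwen-sj-top")
    titles = soup.find_all("div", class_="xinwen-wen-bt")
    details = soup.find_all("div", class_="xinwen-wen-zy")
    # zip pairs the n-th div of each class; it stops at the shortest list
    return [[a.text.strip(), b.text.strip(), c.text.strip()]
            for a, b, c in zip(tops, titles, details)]


jzxx = extract_entries(SAMPLE_HTML)
for xx in jzxx:
    print(",".join(xx))
```

On the real page, `extract_entries(r.text)` would take the place of the sample string, provided the class names still match the live markup.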

Level 6: Scrape multiple div tags across multiple pages

# Copyright Chen Juan, College of Computer Science and Electronic Engineering, Hunan University
import requests
from bs4 import BeautifulSoup

f1 = open("jz.txt", "w", encoding="utf8")
# Code begins
# Code ends
f1.close()

Scrapy Crawler Basics

Level 1: Installing Scrapy and creating a project

#include <iostream>
using namespace std;

int main() {
    int x; cin >> x;
    while (x--) {
        scrapy genspider Hello www.educoder.net
    }
    return 0;
}

Level 2: Scrapy core principles

# -*- coding: utf-8 -*-
import scrapy


class WorldSpider(scrapy.Spider):
    name = 'world'
    allowed_domains = ['www.baidu.com']
    start_urls = ['http://www.baidu.com/']

    def parse(self, response):
        # ********** Begin *********#
        # Save the fetched page source to a local file
        baidu = response.url.split(".")[1] + '.html'  # "baidu.html" for the start URL
        with open(baidu, 'wb') as f:
            f.write(response.body)
        # ********** End *********#
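The file name in `parse` comes from splitting the whole URL on dots, which works for `http://www.baidu.com/` but can pick up path fragments for other URLs. The sketch below is one possible alternative that uses only the standard library; `filename_from_url` is a hypothetical helper, and taking the label just before the top-level domain is a simplifying assumption that does not hold for multi-part suffixes such as `.co.uk`.

```python
from urllib.parse import urlparse


def filename_from_url(url):
    """Build '<domain label>.html' from the host name of a URL."""
    host = urlparse(url).hostname or ""
    labels = host.split(".")
    # Use the label just before the top-level domain when there is one
    name = labels[-2] if len(labels) >= 2 else host
    return name + ".html"


first = filename_from_url("http://www.baidu.com/")
second = filename_from_url("http://example.com/a.b/page")
print(first)
print(second)

# For comparison: splitting the full URL on dots includes part of the path
naive = "http://example.com/a.b/page".split(".")[1] + ".html"
print(naive)
```

For the spider's own start URL both approaches give the same file name, so the original code passes the exercise as written.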

Parsing Web Page Data

Level 1: Parsing a page with XPath

import urllib.request
from lxml import etree


def get_data(url):
    '''
    :param url: request URL
    :return: None
    '''
    response = urllib.request.urlopen(url=url)
    html = response.read().decode("utf-8")
    # *************** Begin *************** #
    parse = etree.HTML(html)
    # Text of every link under div.left > ul > li > span
    item_list = parse.xpath("//div[@class='left']/ul/li/span/a/text()")
    # *************** End ***************** #
    print(item_list)

Level 2: Parsing a page with BeautifulSoup

import requests
from bs4 import BeautifulSoup


def get_data(url, headers):
    '''
    Two parameters
    :param url: uniform resource locator, the request URL
    :param headers: request headers
    :return data: list containing the text of every classical poem
    '''
    # ***************** Begin ******************** #
    obj = requests.get(url, headers=headers)
    soup = BeautifulSoup(obj.content, "lxml", from_encoding="utf-8")
    data = soup.find("div", class_='left').ul.find_all("li")
    data = [i.p.text for i in data]
    # ****************** end ********************* #
    return data

requests Crawler

Level 1: requests basics

import requests


def get_html(url):
    '''
    One parameter
    :param url: uniform resource locator, the request URL
    :return: html
    '''
    # ***************** Begin ******************** #
    # Fill in the request headers
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36'
    }
    # Request the page with GET
    res = requests.get(url, headers=headers)
    res.encoding = 'utf-8'
    # Get the page content as text
    html = res.text
    # ***************** End ******************** #
    return html

Level 2: Advanced requests

import requests


def get_html(url):
    '''
    One parameter
    :param url: uniform resource locator, the request URL
    :return html: source of the page
    :return sess: the session that was created
    '''
    # ***************** Begin ******************** #
    # Fill in the request headers
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36"
    }
    # Create a Session and use it for the requests
    sess = requests.session()
    data = {
        "name": "hblgysl",
        "password": "hblgzsx",
    }
    # Log in first; the session keeps the cookies for the next request
    res = sess.post(url, headers=headers, data=data)
    res1 = sess.get(url, headers=headers)
    # Get the page content as text
    html = res1.text
    # ****************** End ********************* #
    return html, sess
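A session can also carry the headers itself, so they do not need to be passed on every call. The sketch below illustrates this without touching the network by preparing a request and inspecting it rather than sending it. The URL, user agent string, and credentials are placeholders invented for the example.

```python
import requests

sess = requests.Session()
# Session-level headers are merged into every request made through the session
sess.headers.update({"User-Agent": "demo-agent/1.0"})

# Hypothetical login URL and credentials, for illustration only
login = requests.Request(
    "POST",
    "http://example.com/login",
    data={"name": "demo", "password": "secret"},
)
prepared = sess.prepare_request(login)  # builds the request without sending it

print(prepared.headers["User-Agent"])
print(prepared.headers["Content-Type"])
print(prepared.body)
```

With the headers stored on the session, later calls such as `sess.get(url)` send the same `User-Agent` without repeating the `headers=` argument.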

JSON Basics

Level 1: JSON fundamentals

{
    "students": [
        { "name": "赵昊", "age": 15, "ismale": true },
        { "name": "龙傲天", "age": 16, "ismale": true },
        { "name": "玛丽苏", "age": 15, "ismale": false }
    ],
    "count": 3
}

Level 2: Using the json library

import json


def Func():
    data = open("step2/2017.txt", "r", encoding="utf-8")
    obj = json.load(data)
    data.close()
    #********** Begin *********#
    obj = {
        "count": 4,
        "infos": [
            {"name": "赵昊", "age": 16, "height": 1.83, "sex": "男性"},
            {"name": "龙傲天", "age": 17, "height": 2.00, "sex": "男性"},
            {"name": "玛丽苏", "age": 16, "height": 1.78, "sex": "女性"},
            {"name": "叶良辰", "age": 17, "height": 1.87, "sex": "男性"}
        ]
    }
    #********** End **********#
    output = open("step2/2018.txt", "w", encoding="utf-8")
    json.dump(obj, output)  # write to the file
    output.close()
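By default `json.dump` writes non-ASCII characters as `\uXXXX` escapes, which is what the code above produces. The short sketch below compares that with `ensure_ascii=False` and checks a round trip through an in-memory file. The one-record `obj` is a trimmed copy of the data above and the variable names are illustrative.

```python
import io
import json

obj = {"count": 1, "infos": [{"name": "赵昊", "age": 16, "height": 1.83, "sex": "男性"}]}

escaped = json.dumps(obj)                       # default: non-ASCII is escaped
readable = json.dumps(obj, ensure_ascii=False)  # keeps the characters as written

# Round trip through an in-memory file, mirroring json.dump and json.load
buffer = io.StringIO()
json.dump(obj, buffer, ensure_ascii=False)
buffer.seek(0)
restored = json.load(buffer)

print(escaped)
print(readable)
print(restored == obj)
```

Both forms load back to the same Python object, so the choice only affects how readable the file is. Whether the exercise's checker expects the escaped form is not stated in the source.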

Article source: https://www.toymoban.com/news/detail-815204.html
