Optimizing Model Parameters 优化模型参数

Optimizing Model Parameters 优化模型参数

现在我们有了模型和数据,是时候通过优化数据上的参数来训练、验证和测试我们的模型了。训练模型是一个迭代过程;在每次迭代中,模型都会对输出进行猜测,计算其猜测中的误差(损失),收集相对于其参数的导数的误差(如我们在上一节中看到的),并使用梯度下降优化这些参数。有关此过程的更详细演练,请观看3Blue1Brown 的反向传播有关视频。

前置代码

我们加载前面有关数据集和数据加载器 以及构建模型的代码。

import torch

from torch import nn

from torch.utils.data import DataLoader

from torchvision import datasets

from torchvision.transforms import ToTensor

training_data = datasets.FashionMNIST(

root="data",

train=True,

download=True,

transform=ToTensor()

)

test_data = datasets.FashionMNIST(

root="data",

train=False,

download=True,

transform=ToTensor()

)

train_dataloader = DataLoader(training_data, batch_size=64)

test_dataloader = DataLoader(test_data, batch_size=64)

class NeuralNetwork(nn.Module):

def __init__(self):

super().__init__()

self.flatten = nn.Flatten()

self.linear_relu_stack = nn.Sequential(

nn.Linear(28*28, 512),

nn.ReLU(),

nn.Linear(512, 512),

nn.ReLU(),

nn.Linear(512, 10),

)

def forward(self, x):

x = self.flatten(x)

logits = self.linear_relu_stack(x)

return logits

model = NeuralNetwork()

Out:

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz to data/FashionMNIST/raw/train-images-idx3-ubyte.gz

0%| | 0/26421880 [00:00<?, ?it/s]

0%| | 65536/26421880 [00:00<01:12, 363612.43it/s]

1%| | 229376/26421880 [00:00<00:38, 681614.69it/s]

4%|3 | 950272/26421880 [00:00<00:11, 2185311.70it/s]

13%|#2 | 3375104/26421880 [00:00<00:03, 6583220.66it/s]

35%|###5 | 9306112/26421880 [00:00<00:00, 19065043.44it/s]

45%|####5 | 11894784/26421880 [00:00<00:00, 17548305.75it/s]

66%|######6 | 17465344/26421880 [00:01<00:00, 22251664.98it/s]

87%|########6 | 22937600/26421880 [00:01<00:00, 29364458.17it/s]

100%|#########9| 26378240/26421880 [00:01<00:00, 26030331.64it/s]

100%|##########| 26421880/26421880 [00:01<00:00, 18174652.40it/s]

Extracting data/FashionMNIST/raw/train-images-idx3-ubyte.gz to data/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz to data/FashionMNIST/raw/train-labels-idx1-ubyte.gz

0%| | 0/29515 [00:00<?, ?it/s]

100%|##########| 29515/29515 [00:00<00:00, 327007.45it/s]

Extracting data/FashionMNIST/raw/train-labels-idx1-ubyte.gz to data/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz to data/FashionMNIST/raw/t10k-images-idx3-ubyte.gz

0%| | 0/4422102 [00:00<?, ?it/s]

1%|1 | 65536/4422102 [00:00<00:12, 363040.95it/s]

5%|5 | 229376/4422102 [00:00<00:06, 683295.29it/s]

21%|##1 | 950272/4422102 [00:00<00:01, 2194682.48it/s]

75%|#######4 | 3309568/4422102 [00:00<00:00, 7145453.07it/s]

100%|##########| 4422102/4422102 [00:00<00:00, 6093019.93it/s]

Extracting data/FashionMNIST/raw/t10k-images-idx3-ubyte.gz to data/FashionMNIST/raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz to data/FashionMNIST/raw/t10k-labels-idx1-ubyte.gz

0%| | 0/5148 [00:00<?, ?it/s]

100%|##########| 5148/5148 [00:00<00:00, 38905003.59it/s]

Extracting data/FashionMNIST/raw/t10k-labels-idx1-ubyte.gz to data/FashionMNIST/raw

Hyperparameters 超参数

超参数是可调整的参数,可让您控制模型优化过程。不同的超参数值会影响模型训练和收敛速度(阅读有关超参数调整的更多信息)

我们定义以下训练超参数:

- Number of Epochs - 迭代数据集的次数

- Batch Size - 参数更新之前,通过网络传播的数据样本数量

- Learning Rate- 每个batch/epoch更新模型参数的量。较小的值会导致学习速度较慢,而较大的值可能会导致训练期间出现不可预测的行为。

learning_rate = 1e-3

batch_size = 64

epochs = 5

Optimization Loop 优化循环

一旦我们设置了超参数,我们就可以使用优化循环来训练和优化我们的模型。优化循环的每次迭代称为一个epoch。

每个 epoch由两个主要部分组成:

- The Train Loop- 迭代训练数据集并尝试收敛到最佳参数。

- **The Validation/Test Loop **- 迭代测试数据集以检查模型性能是否有所改善。

让我们简单熟悉一下训练循环中使用的一些概念。向前跳转查看优化循环的完整实现。

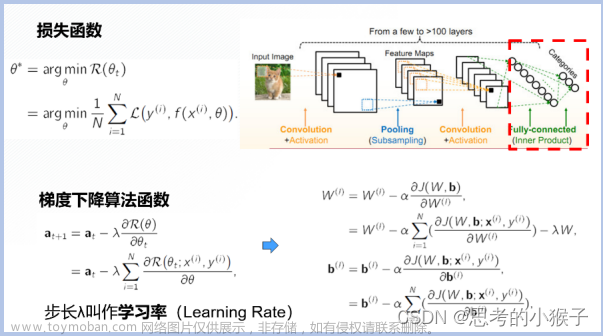

Loss Function 损失函数

当提供一些训练数据时,我们未经训练的网络可能不会给出正确的答案。损失函数衡量的是得到的结果与目标值的不相似程度,它是我们在训练时想要最小化的损失函数。为了计算损失,我们使用给定数据样本的输入进行预测,并将其与真实数据标签值进行比较。

常见的损失函数包括用于回归任务的nn.MSELoss(Mean Square Error 均方误差)和 用于分类的nn.NLLLoss(Negative Log Likelihood 负对数似然)。 nn.CrossEntropyLoss结合了nn.LogSoftmax和nn.NLLLoss。

我们将模型的输出 logits 传递给nn.CrossEntropyLoss,这将标准化 logits 并计算预测误差。

# Initialize the loss function

loss_fn = nn.CrossEntropyLoss()

Optimizer 优化器

优化是调整模型参数以减少每个训练步骤中模型误差的过程。Optimization algorithms定义了如何执行此过程(在本例中我们使用随机梯度下降)。所有优化逻辑都封装在optimizer对象中。这里,我们使用SGD优化器;此外,PyTorch 中还有许多不同的优化器 ,例如 ADAM 和 RMSProp,它们可以更好地处理不同类型的模型和数据。

注册需要训练的模型参数,并传入学习率超参数。我们通过这种方式,来初始化优化器。

optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

在训练循环中,优化分三个步骤进行:

- 调用

optimizer.zero_grad()重置模型参数的梯度。默认情况下渐变相加;为了防止重复计算,我们在每次迭代时明确地将它们归零。 - 通过调用

loss.backward()来反向传播预测损失。PyTorch 存储每个参数的损失梯度。 - 一旦我们有了梯度,通过后向传递中收集的梯度,我们就可以调用

optimizer.step()来调整参数。

Full Implementation 全面实施

我们定义了train_loop优化代码的循环,test_loop根据我们的测试数据评估模型的性能。

def train_loop(dataloader, model, loss_fn, optimizer):

size = len(dataloader.dataset)

# Set the model to training mode - important for batch normalization and dropout layers

# Unnecessary in this situation but added for best practices

model.train()

for batch, (X, y) in enumerate(dataloader):

# Compute prediction and loss

pred = model(X)

loss = loss_fn(pred, y)

# Backpropagation

loss.backward()

optimizer.step()

optimizer.zero_grad()

if batch % 100 == 0:

loss, current = loss.item(), (batch + 1) * len(X)

print(f"loss: {loss:>7f} [{current:>5d}/{size:>5d}]")

def test_loop(dataloader, model, loss_fn):

# Set the model to evaluation mode - important for batch normalization and dropout layers

# Unnecessary in this situation but added for best practices

model.eval()

size = len(dataloader.dataset)

num_batches = len(dataloader)

test_loss, correct = 0, 0

# Evaluating the model with torch.no_grad() ensures that no gradients are computed during test mode

# also serves to reduce unnecessary gradient computations and memory usage for tensors with requires_grad=True

with torch.no_grad():

for X, y in dataloader:

pred = model(X)

test_loss += loss_fn(pred, y).item()

correct += (pred.argmax(1) == y).type(torch.float).sum().item()

test_loss /= num_batches

correct /= size

print(f"Test Error: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")

我们初始化损失函数和优化器,并将其传递给train_loop和test_loop。请随意增加epoch数来跟踪模型改进的性能。

loss_fn = nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

epochs = 10

for t in range(epochs):

print(f"Epoch {t+1}\n-------------------------------")

train_loop(train_dataloader, model, loss_fn, optimizer)

test_loop(test_dataloader, model, loss_fn)

print("Done!")

Out:

Epoch 1

-------------------------------

loss: 2.298730 [ 64/60000]

loss: 2.289123 [ 6464/60000]

loss: 2.273286 [12864/60000]

loss: 2.269406 [19264/60000]

loss: 2.249603 [25664/60000]

loss: 2.229407 [32064/60000]

loss: 2.227368 [38464/60000]

loss: 2.204261 [44864/60000]

loss: 2.206193 [51264/60000]

loss: 2.166651 [57664/60000]

Test Error:

Accuracy: 50.9%, Avg loss: 2.166725

Epoch 2

-------------------------------

loss: 2.176750 [ 64/60000]

loss: 2.169595 [ 6464/60000]

loss: 2.117500 [12864/60000]

loss: 2.129272 [19264/60000]

loss: 2.079674 [25664/60000]

loss: 2.032928 [32064/60000]

loss: 2.050115 [38464/60000]

loss: 1.985236 [44864/60000]

loss: 1.987887 [51264/60000]

loss: 1.907162 [57664/60000]

Test Error:

Accuracy: 55.9%, Avg loss: 1.915486

Epoch 3

-------------------------------

loss: 1.951612 [ 64/60000]

loss: 1.928685 [ 6464/60000]

loss: 1.815709 [12864/60000]

loss: 1.841552 [19264/60000]

loss: 1.732467 [25664/60000]

loss: 1.692914 [32064/60000]

loss: 1.701714 [38464/60000]

loss: 1.610632 [44864/60000]

loss: 1.632870 [51264/60000]

loss: 1.514263 [57664/60000]

Test Error:

Accuracy: 58.8%, Avg loss: 1.541525

Epoch 4

-------------------------------

loss: 1.616448 [ 64/60000]

loss: 1.582892 [ 6464/60000]

loss: 1.427595 [12864/60000]

loss: 1.487950 [19264/60000]

loss: 1.359332 [25664/60000]

loss: 1.364817 [32064/60000]

loss: 1.371491 [38464/60000]

loss: 1.298706 [44864/60000]

loss: 1.336201 [51264/60000]

loss: 1.232145 [57664/60000]

Test Error:

Accuracy: 62.2%, Avg loss: 1.260237

Epoch 5

-------------------------------

loss: 1.345538 [ 64/60000]

loss: 1.327798 [ 6464/60000]

loss: 1.153802 [12864/60000]

loss: 1.254829 [19264/60000]

loss: 1.117322 [25664/60000]

loss: 1.153248 [32064/60000]

loss: 1.171765 [38464/60000]

loss: 1.110263 [44864/60000]

loss: 1.154467 [51264/60000]

loss: 1.070921 [57664/60000]

Test Error:

Accuracy: 64.1%, Avg loss: 1.089831

Epoch 6

-------------------------------

loss: 1.166889 [ 64/60000]

loss: 1.170514 [ 6464/60000]

loss: 0.979435 [12864/60000]

loss: 1.113774 [19264/60000]

loss: 0.973411 [25664/60000]

loss: 1.015192 [32064/60000]

loss: 1.051113 [38464/60000]

loss: 0.993591 [44864/60000]

loss: 1.039709 [51264/60000]

loss: 0.971077 [57664/60000]

Test Error:

Accuracy: 65.8%, Avg loss: 0.982440

Epoch 7

-------------------------------

loss: 1.045165 [ 64/60000]

loss: 1.070583 [ 6464/60000]

loss: 0.862304 [12864/60000]

loss: 1.022265 [19264/60000]

loss: 0.885213 [25664/60000]

loss: 0.919528 [32064/60000]

loss: 0.972762 [38464/60000]

loss: 0.918728 [44864/60000]

loss: 0.961629 [51264/60000]

loss: 0.904379 [57664/60000]

Test Error:

Accuracy: 66.9%, Avg loss: 0.910167

Epoch 8

-------------------------------

loss: 0.956964 [ 64/60000]

loss: 1.002171 [ 6464/60000]

loss: 0.779057 [12864/60000]

loss: 0.958409 [19264/60000]

loss: 0.827240 [25664/60000]

loss: 0.850262 [32064/60000]

loss: 0.917320 [38464/60000]

loss: 0.868384 [44864/60000]

loss: 0.905506 [51264/60000]

loss: 0.856353 [57664/60000]

Test Error:

Accuracy: 68.3%, Avg loss: 0.858248

Epoch 9

-------------------------------

loss: 0.889765 [ 64/60000]

loss: 0.951220 [ 6464/60000]

loss: 0.717035 [12864/60000]

loss: 0.911042 [19264/60000]

loss: 0.786085 [25664/60000]

loss: 0.798370 [32064/60000]

loss: 0.874939 [38464/60000]

loss: 0.832796 [44864/60000]

loss: 0.863254 [51264/60000]

loss: 0.819742 [57664/60000]

Test Error:

Accuracy: 69.5%, Avg loss: 0.818780

Epoch 10

-------------------------------

loss: 0.836395 [ 64/60000]

loss: 0.910220 [ 6464/60000]

loss: 0.668506 [12864/60000]

loss: 0.874338 [19264/60000]

loss: 0.754805 [25664/60000]

loss: 0.758453 [32064/60000]

loss: 0.840451 [38464/60000]

loss: 0.806153 [44864/60000]

loss: 0.830360 [51264/60000]

loss: 0.790281 [57664/60000]

Test Error:

Accuracy: 71.0%, Avg loss: 0.787271

Done!

Further Reading 进一步阅读

- Loss Functions

- torch.optim

- Warmstart Training a Model

参考文献

Optimizing Model Parameters — PyTorch Tutorials 2.2.0+cu121 documentation

Optimizing Model Parameters — PyTorch Tutorials 2.2.0+cu121 documentation

Github

storm-ice/Get_started_with_PyTorch文章来源地址https://www.toymoban.com/news/detail-820556.html文章来源:https://www.toymoban.com/news/detail-820556.html

storm-ice/Get_started_with_PyTorch

到了这里,关于【深度学习PyTorch入门】6.Optimizing Model Parameters 优化模型参数的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!