一、实施步骤:

(1)客户端也要有cent用户:

useradd cent && echo "123" | passwd --stdin cent

echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph

chmod 440 /etc/sudoers.d/ceph

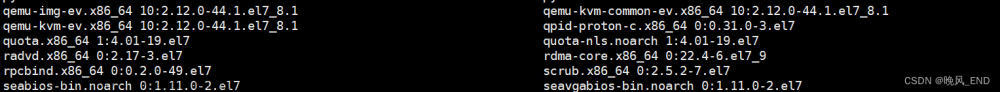

(2)openstack要用ceph的节点(比如compute-node和storage-node)安装下载的软件包:

yum localinstall ./* -y

或则:每个节点安装 clients(要访问ceph集群的节点):

yum install python-rbdyum

install ceph-common

如果先采用上面的方式安装客户端,其实这两个包在rpm包中早已经安装过了

(3)部署节点上执行,为openstack节点安装ceph:

ceph-deploy install controller

ceph-deploy admin controller

(4)客户端执行

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring| 1 |

(5)create pools,只需在一个ceph节点上操作即可:

ceph osd pool create images 1024

ceph osd pool create vms 1024

ceph osd pool create volumes 1024

显示pool的状态

ceph osd lspools

(6)在ceph集群中,创建glance和cinder用户, 只需在一个ceph节点上操作即可:

ceph auth get-or-create client.glance mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=images'

ceph auth get-or-create client.cinder mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=volumes, allow rwx pool=vms, allow rx pool=images'

nova使用cinder用户,就不单独创建了

(7)拷贝ceph-ring, 只需在一个ceph节点上操作即可:

| 1 2 |

ceph auth get-or-create client.glance > /etc/ceph/ceph.client.glance.keyring ceph auth get-or-create client.cinder > /etc/ceph/ceph.client.cinder.keyring |

使用scp拷贝到其他节点(ceph集群节点和openstack的要用ceph的节点比如compute-node和storage-node,本次对接的是一个all-in-one的环境,所以copy到controller节点即可 )

| 1 2 3 4 |

[root@yunwei ceph]# ls ceph.client.admin.keyring ceph.client.cinder.keyring ceph.client.glance.keyring ceph.conf rbdmap tmpR3uL7W [root@yunwei ceph]# [root@yunwei ceph]# scp ceph.client.glance.keyring ceph.client.cinder.keyring controller:/etc/ceph/ |

(8)更改文件的权限(所有客户端节点均执行)

| 1 2 |

chown glance:glance /etc/ceph/ceph.client.glance.keyring chown cinder:cinder /etc/ceph/ceph.client.cinder.keyring |

(9)更改libvirt权限(只需在nova-compute节点上操作即可,每个计算节点都做)

uuidgen

940f0485-e206-4b49-b878-dcd0cb9c70a4

在/etc/ceph/目录下(在什么目录没有影响,放到/etc/ceph目录方便管理):

cat > secret.xml <<EOF

<secret ephemeral='no' private='no'>

<uuid>940f0485-e206-4b49-b878-dcd0cb9c70a4</uuid>

<usage type='ceph'>

<name>client.cinder secret</name>

</usage>

</secret>

EOF

将 secret.xml 拷贝到所有compute节点,并执行::

virsh secret-define --file secret.xml

ceph auth get-key client.cinder > ./client.cinder.key

virsh secret-set-value --secret 940f0485-e206-4b49-b878-dcd0cb9c70a4 --base64 $(cat ./client.cinder.key)配置Glance, 在所有的controller节点上做如下更改:

vim /etc/glance/glance-api.conf

[DEFAULT]

default_store = rbd

[cors]

[cors.subdomain]

[database]

connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance

[glance_store]

stores = rbd

default_store = rbd

rbd_store_pool = images

rbd_store_user = glance

rbd_store_ceph_conf = /etc/ceph/ceph.conf

rbd_store_chunk_size = 8

[image_format]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = glance

[matchmaker_redis]

[oslo_concurrency]

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[paste_deploy]

flavor = keystone

[profiler]

[store_type_location_strategy]

[task]

[taskflow_executor]

在所有的controller节点上做如下更改

| 1 2 |

systemctl restart openstack-glance-api.service systemctl status openstack-glance-api.service |

创建image验证:

| 1 2 3 4 5 |

[root@controller ~]# openstack image create "cirros" --file cirros-0.3.3-x86_64-disk.img.img --disk-format qcow2 --container-format bare --public

[root@controller ~]# rbd ls images 9ce5055e-4217-44b4-a237-e7b577a20dac **********有输出镜像说明成功了 |

(8)配置 Cinder:

vim /etc/cinder/cinder.conf

[DEFAULT]

my_ip = 172.16.254.63

glance_api_servers = http://controller:9292

auth_strategy = keystone

enabled_backends = ceph

state_path = /var/lib/cinder

transport_url = rabbit://openstack:admin@controller

[backend]

[barbican]

[brcd_fabric_example]

[cisco_fabric_example]

[coordination]

[cors]

[cors.subdomain]

[database]

connection = mysql+pymysql://cinder:CINDER_DBPASS@controller/cinder

[fc-zone-manager]

[healthcheck]

[key_manager]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = cinder

password = cinder

[matchmaker_redis]

[oslo_concurrency]

lock_path = /var/lib/cinder/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[oslo_reports]

[oslo_versionedobjects]

[profiler]

[ssl]

[ceph]

volume_driver = cinder.volume.drivers.rbd.RBDDriver

rbd_pool = volumes

rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_flatten_volume_from_snapshot = false

rbd_max_clone_depth = 5

rbd_store_chunk_size = 4

rados_connect_timeout = -1

glance_api_version = 2

rbd_user = cinder

rbd_secret_uuid = 940f0485-e206-4b49-b878-dcd0cb9c70a4

volume_backend_name=ceph

重启cinder服务:

| 1 2 |

systemctl restart openstack-cinder-api.service openstack-cinder-scheduler.service openstack-cinder-volume.service systemctl status openstack-cinder-api.service openstack-cinder-scheduler.service openstack-cinder-volume.service |

创建volume验证:

| 1 2 |

[root@controller gfs]# rbd ls volumes volume-43b7c31d-a773-4604-8e4a-9ed78ec18996 |

(9)配置Nova:

vim /etc/nova/nova.conf

[DEFAULT]

my_ip=172.16.254.63

use_neutron = True

firewall_driver = nova.virt.firewall.NoopFirewallDriver

enabled_apis=osapi_compute,metadata

transport_url = rabbit://openstack:admin@controller

[api]

auth_strategy = keystone

[api_database]

connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api

[barbican]

[cache]

[cells]

[cinder]

os_region_name = RegionOne

[cloudpipe]

[conductor]

[console]

[consoleauth]

[cors]

[cors.subdomain]

[crypto]

[database]

connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://controller:9292

[guestfs]

[healthcheck]

[hyperv]

[image_file_url]

[ironic]

[key_manager]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = nova

[libvirt]

virt_type=qemu

images_type = rbd

images_rbd_pool = vms

images_rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_user = cinder

rbd_secret_uuid = 940f0485-e206-4b49-b878-dcd0cb9c70a4

[matchmaker_redis]

[metrics]

[mks]

[neutron]

url = http://controller:9696

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = neutron

service_metadata_proxy = true

metadata_proxy_shared_secret = METADATA_SECRET

[notifications]

[osapi_v21]

[oslo_concurrency]

lock_path=/var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

os_region_name = RegionOne

auth_type = password

auth_url = http://controller:35357/v3

project_name = service

project_domain_name = Default

username = placement

password = placement

user_domain_name = Default

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[ssl]

[trusted_computing]

[upgrade_levels]

[vendordata_dynamic_auth]

[vmware]

[vnc]

enabled=true

vncserver_listen=$my_ip

vncserver_proxyclient_address=$my_ip

novncproxy_base_url = http://172.16.254.63:6080/vnc_auto.html

[workarounds]

[wsgi]

[xenserver]

[xvp]

重启nova服务:

| 1 2 |

systemctl restart openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-compute.service openstack-nova-cert.service systemctl status openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-no |

ceph常用命令

1、查看ceph集群配置信息

| 1 |

ceph daemon /var/run/ceph/ceph-mon.$(hostname -s).asok config show |

2、在部署节点修改了ceph.conf文件,将新配置推送至全部的ceph节点

| 1 |

ceph-deploy --overwrite-conf config push dlp node1 node2 node3 |

3、检查仲裁状态,查看mon添加是否成功

| 1 |

ceph quorum_status --format json-pretty |

4、列式pool列表

| 1 |

ceph osd lspools |

5、列示pool详细信息

| 1 |

ceph osd dump |grep pool |

6、检查pool的副本数

| 1 |

ceph osd dump|grep -i size |

7、创建pool

| 1 |

ceph osd pool create pooltest 128 |

8、删除pool

| 1 2 |

ceph osd pool delete data ceph osd pool delete data data --yes-i-really-really-mean-it |

9、设置pool副本数

| 1 2 |

ceph osd pool get data size ceph osd pool set data size 3 |

10、设置pool配额

| 1 2 |

ceph osd pool set-quota data max_objects 100 #最大100个对象 ceph osd pool set-quota data max_bytes $((10 * 1024 * 1024 * 1024)) #容量大小最大为10G |

11、重命名pool

| 1 |

ceph osd pool rename data date |

12、PG, Placement Groups。CRUSH先将数据分解成一组对象,然后根据对象名称、复制级别和系统中的PG数等信息执行散列操作,再将结果生成PG ID。可以将PG看做一个逻辑容器,这个容器包含多个对 象,同时这个逻辑对象映射之多个OSD上。如果没有PG,在成千上万个OSD上管理和跟踪数百万计的对象的复制和传播是相当困难的。没有PG这一层,管理海量的对象所消耗的计算资源也是不可想象的。建议每个OSD上配置50~100个PG。

PGP是为了实现定位而设置的PG,它的值应该和PG的总数(即pg_num)保持一致。对于Ceph的一个pool而言,如果增加pg_num,还应该调整pgp_num为同样的值,这样集群才可以开始再平衡。

参数pg_num定义了PG的数量,PG映射至OSD。当任意pool的PG数增加时,PG依然保持和源OSD的映射。直至目前,Ceph还未开始再平衡。此时,增加pgp_num的值,PG才开始从源OSD迁移至其他的OSD,正式开始再平衡。PGP,Placement Groups of Placement。

计算PG数:

ceph集群中的PG总数

| 1 |

PG总数 = (OSD总数 * 100) / 最大副本数 ** 结果必须舍入到最接近的2的N次方幂的值 |

ceph集群中每个pool中的PG总数

| 1 |

存储池PG总数 = (OSD总数 * 100 / 最大副本数) / 池数 |

获取现有的PG数和PGP数值

| 1 2 |

ceph osd pool get data pg_num ceph osd pool get data pgp_num |

13、修改存储池的PG和PGP

| 1 2 |

ceph osd pool set data pg_num = 1文章来源:https://www.toymoban.com/news/detail-829441.html ceph osd pool set data pgp_num = 1文章来源地址https://www.toymoban.com/news/detail-829441.html |

到了这里,关于Openstack云计算(六)Openstack环境对接ceph的文章就介绍完了。如果您还想了解更多内容,请在右上角搜索TOY模板网以前的文章或继续浏览下面的相关文章,希望大家以后多多支持TOY模板网!