Kubernetes cluster planning

| Hostname | IP address | Role |
|---|---|---|
| k8s-master01 | 192.168.0.109 | master |
| k8s-node1 | 192.168.0.108 | node1 |
| k8s-node2 | 192.168.0.107 | node2 |
| k8s-node3 | 192.168.0.105 | node3 |

🍇 Preparation

1. Host configuration

[root@k8s-master01 ~]# hostnamectl set-hostname k8s-master01

[root@k8s-master01 ~]# hostname

k8s-master01

[root@localhost ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.0.109 k8s-master01

192.168.0.108 k8s-node1

192.168.0.107 k8s-node2

192.168.0.105 k8s-node3

systemctl stop firewalld && systemctl disable firewalld

setenforce 0 && sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

sestatus # check the SELinux status

# Time synchronization

ntpdate time1.aliyun.com
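Every node needs the same /etc/hosts entries as shown above. A minimal sketch for checking that on a node; the helper name `missing_hosts` is made up for this illustration and is not part of any tool:

```shell
# missing_hosts FILE NAME...
# Prints each NAME that has no entry in the hosts FILE (hypothetical helper).
missing_hosts() {
  local file="$1" name
  shift
  for name in "$@"; do
    # A name counts as present if it appears as a hostname field
    # (second field or later) on a line that is not a comment.
    awk -v n="$name" '
      /^[[:space:]]*#/ { next }
      { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }
    ' "$file" || echo "$name"
  done
}

# Example:
#   missing_hosts /etc/hosts k8s-master01 k8s-node1 k8s-node2 k8s-node3
```

No output means every listed hostname has an entry.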

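2. Upgrade the kernel

Import the ELRepo GPG key, which is used to verify packages

# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

Install the ELRepo YUM repository

# yum -y install https://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

Install the kernel-lt package. In ELRepo, kernel-ml is the mainline (latest stable) kernel and kernel-lt is the long-term support kernel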

# yum --enablerepo="elrepo-kernel" -y install kernel-lt.x86_64

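Set the GRUB2 default boot entry to 0 so that the newly installed kernel is used after reboot

# grub2-set-default 0

Regenerate the GRUB2 configuration file

# grub2-mkconfig -o /boot/grub2/grub.cfg

After the update, reboot so that the upgraded kernel takes effect.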

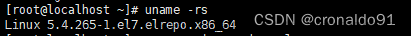

# reboot

After rebooting, check the kernel version

# uname -r

[root@k8s-master01 ~]# uname -r

5.4.265-1.el7.elrepo.x86_64

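3. Configure kernel forwarding and bridge filtering

Add a configuration file for bridge filtering and kernel forwarding. Comments go on their own lines because sysctl configuration files do not support comments after a value.

cat > /etc/sysctl.d/k8s.conf << EOF
# Pass bridged IPv6 packets through ip6tables.
net.bridge.bridge-nf-call-ip6tables = 1
# Pass bridged IPv4 packets through iptables.
net.bridge.bridge-nf-call-iptables = 1
# Enable IPv4 packet forwarding.
net.ipv4.ip_forward = 1
# Avoid swapping; prefer physical memory over swap space.
vm.swappiness = 0
EOF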

Load the br_netfilter module, then apply the settings

# modprobe br_netfilter

# sysctl -p /etc/sysctl.d/k8s.conf

Check that the module is loaded

# lsmod | grep br_netfilter

[root@k8s-master01 ~]# lsmod | grep br_netfilter

br_netfilter 28672 0

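To load the module at boot, create /etc/sysconfig/modules/br_netfilter.modules with the following content:

vi /etc/sysconfig/modules/br_netfilter.modules

#!/bin/bash

modprobe br_netfilter

Make the script executable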

# chmod 755 /etc/sysconfig/modules/br_netfilter.modules
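To confirm that the settings in /etc/sysctl.d/k8s.conf are actually live, each key can be compared with the value the kernel exposes under /proc/sys (dots in the key become slashes in the path). A minimal sketch; the function name `check_sysctl` and its optional second argument are assumptions made for this example:

```shell
# check_sysctl CONF [ROOT]
# Compares every "key = value" line in CONF with the live value under ROOT
# (default /proc/sys). Returns non-zero if any key differs or is missing.
check_sysctl() {
  local conf="$1" root="${2:-/proc/sys}" key value path rc=0
  while IFS='=' read -r key value; do
    key=$(echo "$key" | tr -d '[:space:]')
    value=$(echo "$value" | tr -d '[:space:]')
    # Skip blank lines and comment lines.
    case $key in ''|'#'*) continue ;; esac
    path="$root/$(echo "$key" | tr . /)"
    if [ "$(cat "$path" 2>/dev/null)" = "$value" ]; then
      echo "OK   $key = $value"
    else
      echo "FAIL $key (expected $value)"
      rc=1
    fi
  done < "$conf"
  return $rc
}

# Example:
#   check_sysctl /etc/sysctl.d/k8s.conf
```

A FAIL on the two net.bridge keys usually means br_netfilter is not loaded, since those entries only exist once the module is present.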

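4. Install ipset and ipvsadm. IPVS (IP Virtual Server) is a load-balancing facility provided by Linux kernel modules; kube-proxy can use it in place of its default iptables mode. IPVS offers more efficient and more scalable load balancing, which matters most in large clusters.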

yum -y install ipset ipvsadm

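Configure how the IPVS modules are loaded

Add the modules that need to be loaded

# cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack
EOF

Set permissions, run the script, and check that the modules are loaded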

[root@k8s-master01 ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack

ip_vs_sh 16384 0

ip_vs_wrr 16384 0

ip_vs_rr 16384 0

ip_vs 155648 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_conntrack 147456 4 xt_conntrack,nf_nat,xt_MASQUERADE,ip_vs

nf_defrag_ipv6 24576 2 nf_conntrack,ip_vs

nf_defrag_ipv4 16384 1 nf_conntrack

libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs

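What each module does:

ip_vs: the core IPVS module, which implements IP load balancing. It intercepts traffic and forwards it to backend service nodes to provide load balancing and high availability.

ip_vs_rr: implements the round-robin scheduling algorithm, which hands requests to the backend nodes in turn.

ip_vs_wrr: implements the weighted round-robin scheduling algorithm, which distributes requests according to node weights so that nodes with higher weights handle more requests.

ip_vs_sh: implements the source-hash scheduling algorithm, which dispatches requests by source IP address. Requests from the same source IP always go to the same backend node.

nf_conntrack: provides connection tracking, which records the state of each connection so that load-balanced traffic is handled correctly.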
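The three scheduling algorithms described above can be illustrated with a toy simulation. This is only a sketch of the ideas, not how the kernel modules are implemented: the backend addresses, the weights, the use of `cksum` as the hash, and all function names are assumptions chosen for the example.

```shell
BACKENDS="10.0.0.1 10.0.0.2 10.0.0.3"        # hypothetical backend addresses
WEIGHTED="10.0.0.1:3 10.0.0.2:1 10.0.0.3:1"  # hypothetical address:weight pairs

# pick LIST N: print word number (N mod count) of LIST, counting from 0.
pick() { echo "$1" | awk -v n="$2" '{ i = (n % NF) + 1; print $i }'; }

# Round robin: request number N goes to backend N mod count.
rr_pick() { pick "$BACKENDS" "$1"; }

# Weighted round robin (naive form): repeat each backend "weight" times,
# then rotate through that longer list.
wrr_pick() {
  expanded=""
  for pair in $WEIGHTED; do
    addr=${pair%%:*}
    weight=${pair##*:}
    while [ "$weight" -gt 0 ]; do
      expanded="$expanded $addr"
      weight=$((weight - 1))
    done
  done
  pick "$expanded" "$1"
}

# Source hash: checksum the client IP and map it to a backend,
# so one client always reaches the same backend.
sh_pick() { pick "$BACKENDS" "$(printf '%s' "$1" | cksum | cut -d' ' -f1)"; }

# Example:
#   for n in 0 1 2 3 4; do rr_pick "$n"; done
#   sh_pick 192.168.0.50
```

With the weights above, the first backend receives three of every five requests under `wrr_pick`, while `sh_pick` returns the same backend for a given client address on every call.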

5. Disable the swap partition

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
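Because the sed expression above edits /etc/fstab in place, it can be worth trying it on a copy first and then confirming that no swap device is still active. A minimal sketch, assuming GNU sed; the function names are made up for this illustration:

```shell
# comment_swap FILE: comment out every line containing " swap "
# (the same sed expression as in the command above).
comment_swap() { sed -i '/ swap / s/^\(.*\)$/#\1/g' "$1"; }

# swap_in_use [FILE]: succeed if FILE (default /proc/swaps) lists an active
# swap device. The first line of /proc/swaps is a header, so it is skipped.
swap_in_use() { [ "$(tail -n +2 "${1:-/proc/swaps}" | wc -l)" -gt 0 ]; }

# Dry run on a copy, then check the live state:
#   cp /etc/fstab /tmp/fstab.test && comment_swap /tmp/fstab.test
#   diff /etc/fstab /tmp/fstab.test
#   swap_in_use && echo "swap is still active"
```

Note that the pattern only matches when `swap` is surrounded by spaces; an fstab that separates fields with tabs would need a different pattern.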

🥭 Deploy containerd

1. Download

wget https://github.com/containerd/containerd/releases/download/v1.7.7/cri-containerd-1.7.7-linux-amd64.tar.gz

scp -r ./cri-containerd-1.7.7-linux-amd64.tar.gz root@192.168.0.108:/root/

scp -r ./cri-containerd-1.7.7-linux-amd64.tar.gz root@192.168.0.107:/root/

scp -r ./cri-containerd-1.7.7-linux-amd64.tar.gz root@192.168.0.105:/root/

tar -xvf cri-containerd-1.7.7-linux-amd64.tar.gz -C /

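Create the configuration file. containerd reads /etc/containerd/config.toml by default, so the directory name must be spelled exactly.

mkdir -p /etc/containerd

containerd config default > /etc/containerd/config.toml

vi /etc/containerd/config.toml

sandbox_image = "registry.k8s.io/pause:3.9" # changed from 3.8 to 3.9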
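The same edit can be made without opening an editor. A minimal sketch, assuming GNU sed and that the generated config contains a single `sandbox_image = ...` line; the function name `set_sandbox_image` is hypothetical:

```shell
# set_sandbox_image FILE IMAGE
# Rewrites the value of the sandbox_image key, keeping the line's indentation.
set_sandbox_image() {
  local file="$1" image="$2"
  sed -i "s|^\([[:space:]]*sandbox_image = \).*|\1\"$image\"|" "$file"
}

# Example (containerd's default config path):
#   set_sandbox_image /etc/containerd/config.toml registry.k8s.io/pause:3.9
#   grep sandbox_image /etc/containerd/config.toml
```

containerd only picks up the change after it is restarted.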

Start containerd and enable it at boot

systemctl enable --now containerd

Prepare runc (to replace the bundled runc, which has problems)

GitHub: https://github.com/opencontainers/runc/releases/tag/v1.1.9

Prepare libseccomp

Download the source package

tar -xvf libseccomp-2.5.4.tar.gz

yum -y install gperf

cd libseccomp-2.5.4

./configure

make

make install

find / -name "libseccomp.so"

[root@k8s-master01 libseccomp-2.5.4]# find / -name "libseccomp.so"

/root/libseccomp-2.5.4/src/.libs/libseccomp.so

/usr/local/lib/libseccomp.so

Install runc

GitHub: https://github.com/opencontainers/runc/releases/download/v1.1.9/runc.amd64

rm -rf `which runc`

chmod +x runc.amd64

mv runc.amd64 runc

mv runc /usr/local/sbin/

[root@k8s-master01 ~]# runc -version

runc version 1.1.9

commit: v1.1.9-0-gccaecfcb

spec: 1.0.2-dev

go: go1.20.3

libseccomp: 2.5.4

Deploy Kubernetes

1. Install the Kubernetes cluster packages. Choose one of the two yum repositories below.

cat > /etc/yum.repos.d/k8s.repo <<EOF
[kubernetes]
name=Kubernetes
baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg
       https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg
EOF

cat > /etc/yum.repos.d/k8s.repo <<EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Refresh the yum cache and check that the 1.28 packages are available

yum clean all

yum makecache

yum list kubeadm.x86_64 --showduplicates

yum -y install kubeadm-1.28.0-0 kubelet-1.28.0-0 kubectl-1.28.0-0

2. Initial Kubernetes configuration

# Configure kubelet

[root@k8s-master wrap]# vi /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--cgroup-driver=systemd"

systemctl enable kubelet

3. Initialize the cluster

kubeadm init --kubernetes-version=v1.28.0 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.0.109 --cri-socket unix:///var/run/containerd/containerd.sock

Newer versions can omit --cri-socket; kubeadm detects containerd automatically when it is the only runtime installed

If pulling images fails, use:

kubeadm init --image-repository=registry.aliyuncs.com/google_containers --kubernetes-version=v1.28.1 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.0.109 --cri-socket unix:///var/run/containerd/containerd.sock

kubeadm token create --print-join-command # regenerate the join command after the token expires
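The long flag list can also be kept in a kubeadm configuration file, which is easier to review and reuse. The sketch below writes a file that should be equivalent to the flags used above, using the kubeadm.k8s.io/v1beta3 API that kubeadm 1.28 accepts; the function name and the output file name are assumptions made for this example:

```shell
# write_kubeadm_config FILE
# Writes a kubeadm config mirroring the command-line flags used above.
write_kubeadm_config() {
  cat > "$1" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.0.109
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.28.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
EOF
}

# Example:
#   write_kubeadm_config kubeadm-config.yaml
#   kubeadm init --config kubeadm-config.yaml
```

Drop the `imageRepository` line to pull from the default registry instead of the mirror.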

4. Pull the images manually (if the images cannot be pulled automatically, they can be pulled by hand)

kubeadm config images list

[root@k8s-master01 ~]# kubeadm config images list

W1228 02:55:13.724483 8989 version.go:104] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get "https://cdn.dl.k8s.io/release/stable-1.txt": dial tcp 146.75.113.55:443: i/o timeout (Client.Timeout exceeded while awaiting headers)

W1228 02:55:13.724662 8989 version.go:105] falling back to the local client version: v1.28.0

registry.k8s.io/kube-apiserver:v1.28.0

registry.k8s.io/kube-controller-manager:v1.28.0

registry.k8s.io/kube-scheduler:v1.28.0

registry.k8s.io/kube-proxy:v1.28.0

registry.k8s.io/pause:3.9

registry.k8s.io/etcd:3.5.9-0

registry.k8s.io/coredns/coredns:v1.10.1

ctr -n k8s.io image pull docker.io/gotok8s/kube-apiserver:v1.28.0

ctr -n k8s.io image pull docker.io/gotok8s/kube-controller-manager:v1.28.0

ctr -n k8s.io image pull docker.io/gotok8s/kube-scheduler:v1.28.0

ctr -n k8s.io image pull docker.io/gotok8s/kube-proxy:v1.28.0

ctr -n k8s.io image pull docker.io/gotok8s/pause:3.9

ctr -n k8s.io image pull docker.io/gotok8s/etcd:3.5.9-0

ctr -n k8s.io image pull docker.io/gotok8s/coredns:v1.10.1

ctr -n k8s.io i tag docker.io/gotok8s/kube-apiserver:v1.28.0 registry.k8s.io/kube-apiserver:v1.28.0

ctr -n k8s.io i tag docker.io/gotok8s/kube-controller-manager:v1.28.0 registry.k8s.io/kube-controller-manager:v1.28.0

ctr -n k8s.io i tag docker.io/gotok8s/kube-scheduler:v1.28.0 registry.k8s.io/kube-scheduler:v1.28.0

ctr -n k8s.io i tag docker.io/gotok8s/kube-proxy:v1.28.0 registry.k8s.io/kube-proxy:v1.28.0

ctr -n k8s.io i tag docker.io/gotok8s/pause:3.9 registry.k8s.io/pause:3.9

ctr -n k8s.io i tag docker.io/gotok8s/etcd:3.5.9-0 registry.k8s.io/etcd:3.5.9-0

ctr -n k8s.io i tag docker.io/gotok8s/coredns:v1.10.1 registry.k8s.io/coredns/coredns:v1.10.1
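The pull and tag commands follow one pattern per image, so they can be generated from the output of `kubeadm config images list` instead of being typed out. A minimal sketch that only prints the commands; the function name `mirror_cmds` is hypothetical, and it assumes the mirror stores every image under its last path component, as the gotok8s names above do:

```shell
# mirror_cmds [MIRROR]
# Reads image names on stdin and prints a pull + tag command pair for each
# registry.k8s.io image. Lines that are not image names (warnings) are skipped.
mirror_cmds() {
  local mirror="${1:-docker.io/gotok8s}" image name
  while read -r image; do
    case $image in registry.k8s.io/*) ;; *) continue ;; esac
    name=${image#registry.k8s.io/}
    name=${name##*/}   # coredns/coredns:v1.10.1 -> coredns:v1.10.1
    echo "ctr -n k8s.io image pull $mirror/$name"
    echo "ctr -n k8s.io image tag $mirror/$name $image"
  done
}

# Review the generated commands first, then pipe them to sh to run them:
#   kubeadm config images list | mirror_cmds
#   kubeadm config images list | mirror_cmds | sh
```

The commands use the `k8s.io` containerd namespace because that is where the kubelet looks for images through the CRI plugin.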

5. Save the kubeconfig file

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

export KUBECONFIG=/etc/kubernetes/admin.conf

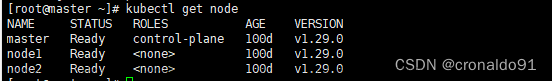

[root@k8s-master01 kubernetes-1.28.0]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady control-plane 53m v1.28.0

k8s-node1 NotReady <none> 28s v1.28.0

k8s-node2 NotReady <none> 16s v1.28.0

k8s-node3 NotReady <none> 10s v1.28.0

Network plugin

flannel

sudo docker pull rancher/mirrored-flannelcni-flannel-cni-plugin:v1.2.0

wget https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml -O kube-flannel.yml

sed -i 's|docker.io/flannel/flannel-cni-plugin:v1.2.0|ccr.ccs.tencentyun.com/google_cn/mirrored-flannelcni-flannel-cni-plugin:v1.1.0|g' kube-flannel.yml

sed -i 's|docker.io/flannel/flannel:v0.23.0|ccr.ccs.tencentyun.com/google_cn/mirrored-flannelcni-flannel:v0.18.1|g' kube-flannel.yml

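# The network in kube-flannel.yml must match the --pod-network-cidr passed to kubeadm init (10.244.0.0/16 above). Run the next line only if the cluster was initialized with a different CIDR, for example 10.1.0.0/16:

# sed -i 's|10.244.0.0/16|10.1.0.0/16|' kube-flannel.yml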

kubectl apply -f kube-flannel.yml

Deploy Calico

Calico documentation: https://projectcalico.docs.tigera.io/about/about-calico

# kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/tigera-operator.yaml

# wget https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/custom-resources.yaml

# vim custom-resources.yaml

# This section includes base Calico installation configuration.
# For more information, see: https://projectcalico.docs.tigera.io/master/reference/installation/api#operator.tigera.io/v1.Installation
apiVersion: operator.tigera.io/v1
kind: Installation
metadata:
  name: default
spec:
  # Configures Calico networking.
  calicoNetwork:
    # Note: The ipPools section cannot be modified post-install.
    ipPools:
    - blockSize: 26
      cidr: 10.244.0.0/16 # change this to the pod network CIDR defined at kubeadm init
      encapsulation: VXLANCrossSubnet
      natOutgoing: Enabled
      nodeSelector: all()

---

# This section configures the Calico API server.
# For more information, see: https://projectcalico.docs.tigera.io/master/reference/installation/api#operator.tigera.io/v1.APIServer
apiVersion: operator.tigera.io/v1
kind: APIServer
metadata:
  name: default
spec: {}

kubectl create -f custom-resources.yaml
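The `cidr` field has to be set before custom-resources.yaml is created, since the ipPools section cannot be changed after installation. Instead of editing the file by hand, the value could be set with sed. A minimal sketch, assuming GNU sed and a single `cidr:` line in the file; the function name `set_calico_cidr` is hypothetical:

```shell
# set_calico_cidr FILE CIDR
# Replaces the value of the cidr key, keeping the line's indentation.
set_calico_cidr() {
  local file="$1" cidr="$2"
  sed -i "s|^\([[:space:]]*cidr:\).*|\1 $cidr|" "$file"
}

# Example, using the pod CIDR passed to kubeadm init:
#   set_calico_cidr custom-resources.yaml 10.244.0.0/16
#   grep 'cidr:' custom-resources.yaml
```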

Error 1

[root@k8s-master01 ~]# kubeadm init --image-repository=registry.aliyuncs.com/google_containers --kubernetes-version=v1.28.1 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.0.109

[init] Using Kubernetes version: v1.28.1

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR CRI]: container runtime is not running: output: time="2023-12-28T02:42:09-08:00" level=fatal msg="validate service connection: validate CRI v1 runtime API for endpoint \"unix:///var/run/containerd/containerd.sock\": rpc error: code = Unimplemented desc = unknown service runtime.v1.RuntimeService"

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Fix

vim /etc/containerd/config.toml

Change disabled_plugins = ["cri"] to disabled_plugins = []

sudo systemctl restart containerd

Error 2

[init] Using Kubernetes version: v1.28.1

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

W1228 02:50:26.797707 8490 checks.go:835] detected that the sandbox image "registry.k8s.io/pause:3.8" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image.

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.0.109]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.0.109 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.0.109 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

# View the error messages

journalctl -u kubelet -f

The error is roughly:

Failed to create pod sandbox: rpc error: code = Unknown desc = failed to get sandbox image "registry.k8s.io/pause:3.8": failed to pull image "registry.k8s.io/pause:3.8":

failed to pull and unpack image "registry.k8s.io/pause:3.8": failed to resolve reference "registry.k8s.io/pause:3.8":

failed to do request: Head "https://europe-west2-docker.pkg.dev/v2/k8s-artifacts-prod/images/pause/manifests/3.8": dial tcp 173.194.174.82:443: i/o timeout

Fix

crictl pull registry.aliyuncs.com/google_containers/pause:3.9

ctr -n k8s.io images tag registry.aliyuncs.com/google_containers/pause:3.9 registry.k8s.io/pause:3.8

kubeadm reset

kubeadm init --image-repository=registry.aliyuncs.com/google_containers --kubernetes-version=v1.28.0 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.0.109

Result

[root@k8s-master01 contained]# kubectl get po -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-flannel kube-flannel-ds-6nbh9 1/1 Running 0 156m

kube-flannel kube-flannel-ds-vpghq 1/1 Running 0 16m

kube-flannel kube-flannel-ds-wv5hb 1/1 Running 0 15m

kube-flannel kube-flannel-ds-zdsfg 1/1 Running 0 16m

kube-system coredns-66f779496c-fcxnb 1/1 Running 0 4h16m

kube-system coredns-66f779496c-snjk2 1/1 Running 0 4h16m

kube-system etcd-k8s-master01 1/1 Running 0 4h16m

kube-system kube-apiserver-k8s-master01 1/1 Running 0 4h16m

kube-system kube-controller-manager-k8s-master01 1/1 Running 0 4h16m

kube-system kube-proxy-6vdts 1/1 Running 0 4h16m

kube-system kube-proxy-7thjs 1/1 Running 0 3m11s

kube-system kube-proxy-nc5zh 1/1 Running 0 3m11s

kube-system kube-proxy-sgwcc 1/1 Running 0 2m43s

kube-system kube-scheduler-k8s-master01 1/1 Running 0 4h16m

Resource monitoring

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-view: "true"
  name: system:aggregated-metrics-reader
rules:
- apiGroups:
  - metrics.k8s.io
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - nodes/metrics
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server-auth-reader
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  ports:
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    k8s-app: metrics-server
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  replicas: 2
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 1
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchLabels:
                k8s-app: metrics-server
            namespaces:
            - kube-system
            topologyKey: kubernetes.io/hostname
      containers:
      - args:
        - --cert-dir=/tmp
        - --secure-port=4443
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        - --kubelet-insecure-tls
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server:v0.6.4
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 3
          httpGet:
            path: /livez
            port: https
            scheme: HTTPS
          periodSeconds: 10
        name: metrics-server
        ports:
        - containerPort: 4443
          name: https
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /readyz
            port: https
            scheme: HTTPS
          initialDelaySeconds: 20
          periodSeconds: 10
        resources:
          requests:
            cpu: 100m
            memory: 200Mi
        securityContext:
          allowPrivilegeEscalation: false
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
---
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: metrics-server
  namespace: kube-system
spec:
  minAvailable: 1
  selector:
    matchLabels:
      k8s-app: metrics-server
---
apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  labels:
    k8s-app: metrics-server
  name: v1beta1.metrics.k8s.io
spec:
  group: metrics.k8s.io
  groupPriorityMinimum: 100
  insecureSkipTLSVerify: true
  service:
    name: metrics-server
    namespace: kube-system
  version: v1beta1
  versionPriority: 100
