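Overview

Hadoop: an infrastructure framework for distributed systems

Problems it solves: storing massive amounts of data, and analyzing and computing over massive amounts of data

Official site: https://hadoop.apache.org/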

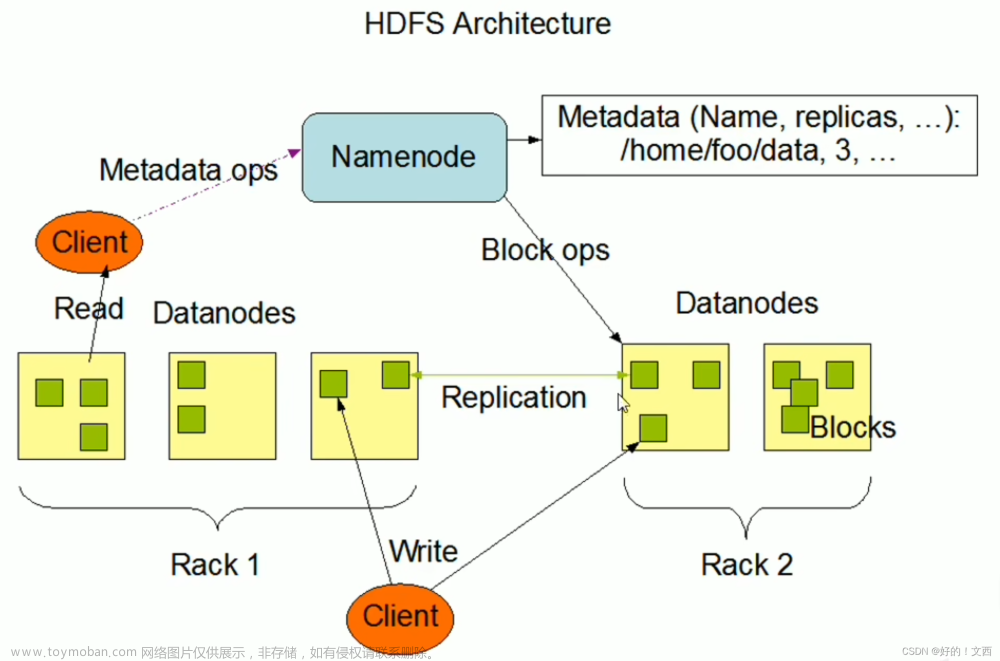

HDFS (Hadoop Distributed File System): the distributed file system, used to store data

Defaults behind core-site.xml (core-default.xml): https://hadoop.apache.org/docs/r3.3.6/hadoop-project-dist/hadoop-common/core-default.xml. Configures properties and common parameters shared by all components of the cluster, for better performance and reliability. Bundled in <hadoop dir>/share/hadoop/common/hadoop-common-3.3.6.jar

Defaults behind hdfs-site.xml (hdfs-default.xml): https://hadoop.apache.org/docs/r3.3.6/hadoop-project-dist/hadoop-hdfs/hdfs-default.xml. Configures HDFS parameters (such as block size and heartbeat interval) for better performance and reliability. Bundled in <hadoop dir>/share/hadoop/hdfs/hadoop-hdfs-3.3.6.jar

Defaults behind mapred-site.xml (mapred-default.xml): https://hadoop.apache.org/docs/r3.3.6/hadoop-mapreduce-client/hadoop-mapreduce-client-core/mapred-default.xml. Tunes and optimizes how MapReduce jobs are executed. Bundled in <hadoop dir>/share/hadoop/mapreduce/hadoop-mapreduce-client-core-3.3.6.jar

Defaults behind yarn-site.xml (yarn-default.xml): https://hadoop.apache.org/docs/r3.3.6/hadoop-yarn/hadoop-yarn-common/yarn-default.xml. Configures the behavior of the YARN ResourceManager and NodeManager. Bundled in <hadoop dir>/share/hadoop/yarn/hadoop-yarn-common-3.3.6.jar

Basics

Hadoop components

Hadoop configuration files

Configuration file directory: <hadoop dir>/etc/hadoop

Environment preparation

Configuration

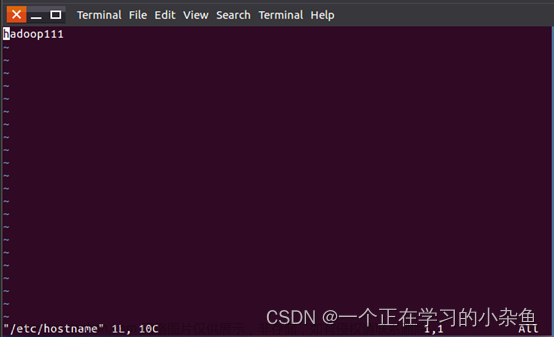

// Change the hostname

// more /etc/sysconfig/network shows the contents below. Give each machine its own HOSTNAME; never reuse the same one

NETWORKING=yes

HOSTNAME=hadoop107

//=======================

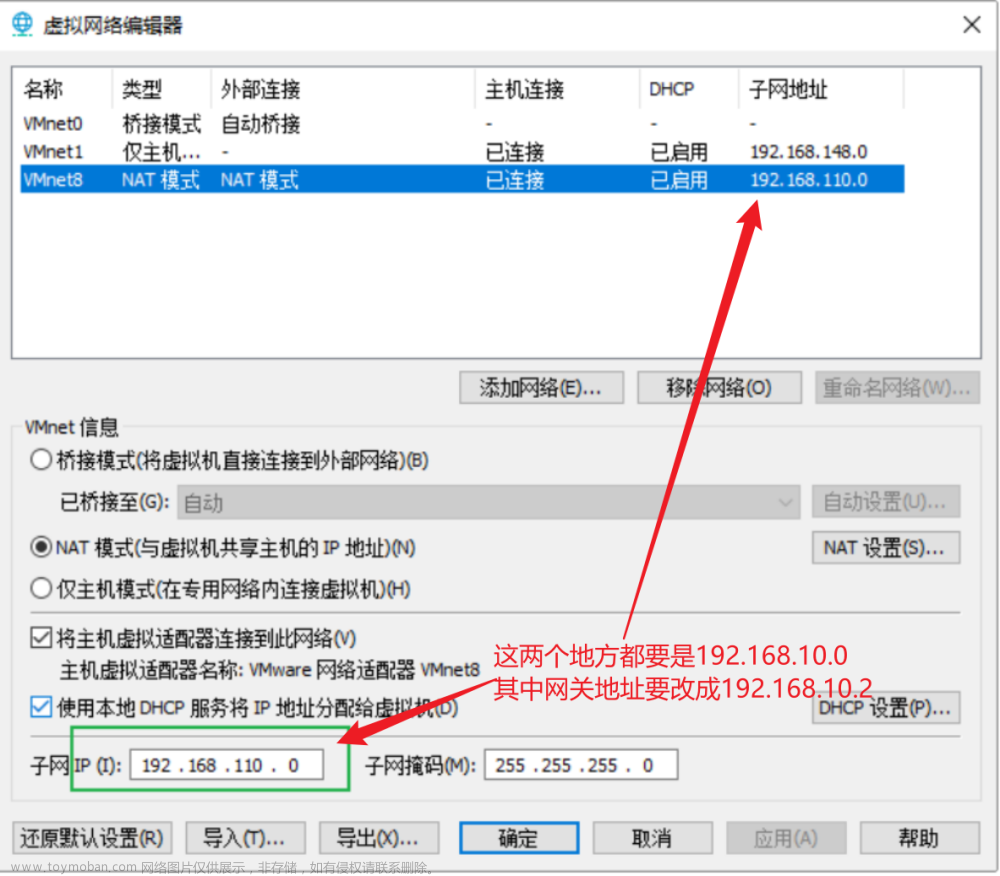

// Assign a static IP address (look up the exact steps for your distribution)

ifconfig

more /etc/sysconfig/network-scripts/ifcfg-ens33
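The static-IP step is left to the reader above. As a minimal sketch, assuming a CentOS 7-style system that still uses network-scripts, the hypothetical helper below writes an ifcfg file with a static address. The device ens33 and the address 192.168.19.107 come from this article; the gateway, netmask and DNS values are assumptions (192.168.19.2 is only a common VMware NAT gateway) and must match your own network.

```shell
# Hypothetical helper: write a static-IP ifcfg file (CentOS 7 network-scripts format).
# Usage: write_static_ifcfg OUT_FILE DEVICE IP GATEWAY [NETMASK] [DNS]
# Assumption: NETMASK defaults to 255.255.255.0 and DNS defaults to the gateway.
write_static_ifcfg() {
  local out=$1 dev=$2 ip=$3 gw=$4
  local mask=${5:-255.255.255.0} dns=${6:-$4}
  # Unquoted EOF so that the shell variables are expanded into the file
  cat > "$out" <<EOF
TYPE=Ethernet
DEVICE=$dev
NAME=$dev
ONBOOT=yes
BOOTPROTO=static
IPADDR=$ip
NETMASK=$mask
GATEWAY=$gw
DNS1=$dns
EOF
}
```

Usage (as root, after backing up the original file, which may hold UUID or HWADDR lines worth keeping): write_static_ifcfg /etc/sysconfig/network-scripts/ifcfg-ens33 ens33 192.168.19.107 192.168.19.2, then systemctl restart network and check the address with ifconfig.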

//=======================

// Check the custom hostname-to-IP mappings: more /etc/hosts

ping <hostname>
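Each node needs a line in /etc/hosts that maps its hostname to its IP. A small sketch with a hypothetical helper that appends the mapping only when the hostname is not listed yet, so re-running the setup does not create duplicates; 192.168.19.107 and hadoop107 are the values used in this article.

```shell
# Hypothetical helper: append "IP HOSTNAME" to a hosts file unless that
# hostname is already mapped on a non-comment line.
# Usage: ensure_host_mapping FILE IP HOSTNAME
ensure_host_mapping() {
  local file=$1 ip=$2 host=$3
  # awk exits 0 if any non-comment line already lists the hostname
  if awk -v h="$host" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 } END { exit !found }' "$file"; then
    echo "$host already mapped in $file"
  else
    printf '%s %s\n' "$ip" "$host" >> "$file"
  fi
}
```

Usage (as root): ensure_host_mapping /etc/hosts 192.168.19.107 hadoop107, then ping -c 3 hadoop107.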

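Hadoop setup

Download

Official site: https://hadoop.apache.org/releases.html

Configure environment variables

// Extract the archive into the target directory

mkdir -p /opt/module/ && tar -zxvf hadoop-3.3.6.tar.gz -C /opt/module/

// Enter the extracted directory

cd /opt/module/hadoop-3.3.6

// Set the environment variables

vim /etc/profile

// Append the following four lines to the end of the file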

## Hadoop

export HADOOP_HOME=/opt/module/hadoop-3.3.6

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

// Reload the profile so the variables take effect

source /etc/profile
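Appending to /etc/profile by hand adds duplicate lines if the step is repeated. A minimal sketch of an idempotent version, using a hypothetical helper that writes the same four lines as above and uses the "## Hadoop" comment as a marker; the default HADOOP_HOME is this article's /opt/module/hadoop-3.3.6.

```shell
# Hypothetical helper: append the Hadoop environment block to a profile
# file only if the "## Hadoop" marker line is not there yet.
# Usage: add_hadoop_env PROFILE_FILE [HADOOP_HOME]
add_hadoop_env() {
  local profile=$1 home=${2:-/opt/module/hadoop-3.3.6}
  if grep -qx '## Hadoop' "$profile" 2>/dev/null; then
    echo "Hadoop block already present in $profile"
    return 0
  fi
  # Unquoted EOF: $home is expanded now, while \$PATH and \$HADOOP_HOME
  # are escaped so they stay literal in the profile file
  cat >> "$profile" <<EOF
## Hadoop
export HADOOP_HOME=$home
export PATH=\$PATH:\$HADOOP_HOME/bin
export PATH=\$PATH:\$HADOOP_HOME/sbin
EOF
}
```

Usage (as root): add_hadoop_env /etc/profile && source /etc/profile && hadoop version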

Hadoop run modes

Standalone Operation (local mode)

Reference: https://hadoop.apache.org/docs/r3.3.6/hadoop-project-dist/hadoop-common/SingleCluster.html#Standalone_Operation

Official demo

The official demo counts how often words matching a regular expression occur in a set of files

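# Hadoop directory

cd /opt/module/hadoop-3.3.6

# Create the input directory used by the demo below

mkdir input

# Copy in some ordinary files

cp etc/hadoop/*.xml input

# Count the words in input that match 'dfs[a-z.]+' and write the result to the output directory under the current directory. Do not create output in advance: the job fails with an error if it already exists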

bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.6.jar grep input output 'dfs[a-z.]+'

# View the result

cat output/*

cat output/part-r-00000

# The result matches what a plain grep finds
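To cross-check the job, the same count can be reproduced with standard grep. A sketch with a hypothetical helper that prints count<TAB>match with the highest count first, which is the layout the example job writes to part-r-00000; equal counts are ordered alphabetically here and may come out in a different order from Hadoop's.

```shell
# Hypothetical helper: count regex matches across files with plain grep.
# Usage: count_matches REGEX FILE...
# Prints "count<TAB>match", highest count first.
count_matches() {
  local regex=$1; shift
  # -o: one match per line; -h: omit file names; -E: extended regex
  grep -ohE "$regex" "$@" \
    | LC_ALL=C sort | uniq -c \
    | awk '{ printf "%s\t%s\n", $1, $2 }' \
    | LC_ALL=C sort -k1,1nr -k2,2
}
```

Usage: cd /opt/module/hadoop-3.3.6 && count_matches 'dfs[a-z.]+' input/*.xml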

WordCount demo

// Create the data directory

mkdir -p /opt/module/hadoop-3.3.6/input/wordCountData && cd /opt/module/hadoop-3.3.6/input/

// Create test data for the demo

echo "cat apple banana" >> wordCountData/data1.txt

echo "dog" >> wordCountData/data1.txt

echo " elephant" >> wordCountData/data1.txt

echo "cat apple banana" >> wordCountData/data2.txt

echo "dog" >> wordCountData/data2.txt

echo " elephant queen" >> wordCountData/data2.txt

// Inspect the data

more wordCountData/data1.txt

more wordCountData/data2.txt

// Count the words in the wordCountData directory; the result goes to ./wordCountDataoutput, relative to the current directory

hadoop jar /opt/module/hadoop-3.3.6/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.6.jar wordcount /opt/module/hadoop-3.3.6/input/wordCountData wordCountDataoutput

// View the result

cd /opt/module/hadoop-3.3.6/input/wordCountDataoutput

cat ./*
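WordCount splits each line on whitespace, emits a count of 1 for every word, and sums the counts per word; the output lines are word<TAB>count, sorted by word. A rough local equivalent, written as a hypothetical helper, is handy for checking the job's result. It assumes byte-order sorting (LC_ALL=C) matches Hadoop's key order, which holds for plain ASCII words like the ones above.

```shell
# Hypothetical helper: word count over local files, mimicking the output
# layout of the wordcount example (word<TAB>count, byte-order sorted).
# Usage: local_wordcount FILE...
local_wordcount() {
  cat "$@" \
    | tr -s ' \t\r\f' '\n' \
    | grep -v '^$' \
    | LC_ALL=C sort | uniq -c \
    | awk '{ printf "%s\t%s\n", $2, $1 }'
}
```

For the two data files created above, local_wordcount /opt/module/hadoop-3.3.6/input/wordCountData/*.txt prints apple, banana, cat, dog and elephant with a count of 2 and queen with a count of 1.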

Pseudo-Distributed Operation

Reference: https://hadoop.apache.org/docs/r3.3.6/hadoop-project-dist/hadoop-common/SingleCluster.html#Pseudo-Distributed_Operation

Overview: a distributed system running on a single node (for testing)

Configuration changes

Edit the core configuration file: vim /opt/module/hadoop-3.3.6/etc/hadoop/core-site.xml

<configuration>

<!-- Default is the local file system, file:/// -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://192.168.19.107:9000</value>

</property>

<!-- Temporary directory; default /tmp/hadoop-${user.name} -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-3.3.6/tmp</value>

</property>

</configuration>
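Instead of editing each file in vim, a *-site.xml file can be generated in one step. A minimal sketch with a hypothetical helper, write_site_xml; it overwrites the whole file (including the license header the shipped file contains), so keep a backup, and it writes values verbatim, so they must not contain XML special characters.

```shell
# Hypothetical helper: write a Hadoop *-site.xml from name=value pairs.
# Usage: write_site_xml FILE name=value [name=value ...]
write_site_xml() {
  local file=$1; shift
  {
    echo '<?xml version="1.0" encoding="UTF-8"?>'
    echo '<configuration>'
    local pair
    for pair in "$@"; do
      # Split on the first "=" only, so values may themselves contain "="
      printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' \
        "${pair%%=*}" "${pair#*=}"
    done
    echo '</configuration>'
  } > "$file"
}
```

Usage: write_site_xml /opt/module/hadoop-3.3.6/etc/hadoop/core-site.xml fs.defaultFS=hdfs://192.168.19.107:9000 hadoop.tmp.dir=/opt/module/hadoop-3.3.6/tmp, then confirm the effective value with hdfs getconf -confKey fs.defaultFS.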

Edit the HDFS configuration file: vim /opt/module/hadoop-3.3.6/etc/hadoop/hdfs-site.xml

<configuration>

<!-- Replication factor: 1 for this single-node setup (default 3) -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>
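dfs.replication is the number of copies HDFS keeps of every block, so the raw disk space a file uses is roughly its size times the replication factor. A small arithmetic sketch with a hypothetical helper, assuming the Hadoop 3 default block size of 128 MB (dfs.blocksize = 134217728 bytes).

```shell
# Hypothetical helper: estimate the block count and raw disk usage of one file.
# Usage: hdfs_footprint SIZE_BYTES REPLICATION [BLOCK_SIZE_BYTES]
hdfs_footprint() {
  local size=$1 repl=$2 bs=${3:-134217728}   # 128 MB, the default dfs.blocksize
  local blocks=$(( (size + bs - 1) / bs ))   # ceiling division
  echo "blocks=$blocks raw_bytes=$(( size * repl ))"
}
```

For example, a 300 MB file (314572800 bytes) occupies 3 blocks; with replication 1 it uses about 300 MB of raw disk, with the default of 3 about 900 MB. On a single DataNode the default of 3 cannot be satisfied and the blocks are reported as under-replicated, which is why the value is set to 1 here.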

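Start HDFS (web UI port 9870)

Format HDFS (this erases existing HDFS data; run it once, before the first start)

hdfs namenode -format

Start the HDFS daemons

Note: startup may fail because of non-root user issues or because JAVA_HOME cannot be found; see the troubleshooting notes below.

# Java processes running before Hadoop is started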

jps

# Start the HDFS daemons

sh /opt/module/hadoop-3.3.6/sbin/start-dfs.sh

# Check which Java processes were added

jps
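After start-dfs.sh, jps on a pseudo-distributed node normally lists NameNode, DataNode and SecondaryNameNode. A sketch with a hypothetical helper that reads jps output on stdin and reports any expected process that is missing.

```shell
# Hypothetical helper: verify that the expected Java daemons appear in jps output.
# Usage: jps | check_daemons NameNode DataNode SecondaryNameNode
check_daemons() {
  local listing missing=0 name
  listing=$(cat)   # jps prints "<pid> <MainClass>" per line
  for name in "$@"; do
    # Exact match on the second column, so NameNode does not match SecondaryNameNode
    if ! printf '%s\n' "$listing" | awk -v n="$name" '$2 == n { ok = 1 } END { exit !ok }'; then
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}
```

The same check works for the later steps: ResourceManager and NodeManager after start-yarn.sh, and JobHistoryServer after the history server is started.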

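Open the HDFS web UI (the NameNode web port differs between Hadoop versions: 9870 in 3.x, 50070 in 2.x)

NameNode web UI in a browser: http://192.168.19.107:9870/

Using dfs commands (mainly for file operations)

Help: hdfs dfs -help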

Copy local files into HDFS

hdfs dfs -mkdir /test

hdfs dfs -put /opt/module/hadoop-3.3.6/input /test

List files and read file contents

hdfs dfs -ls -R /

hdfs dfs -cat /test/input/core-site.xml

Create directories and files

hdfs dfs -mkdir -p /test/linrc

hdfs dfs -touch /test/linrc/1.txt
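Re-running the upload step fails once the target already exists in HDFS. A sketch with a hypothetical helper that checks with hdfs dfs -test -e first and only creates the parent directory and uploads when the target is absent.

```shell
# Hypothetical helper: upload a local path into an HDFS directory unless it is already there.
# Usage: put_if_absent LOCAL_PATH HDFS_PARENT_DIR
put_if_absent() {
  local src=$1 parent=$2
  local target="$parent/$(basename "$src")"
  # "hdfs dfs -test -e" exits 0 when the path exists
  if hdfs dfs -test -e "$target"; then
    echo "skip: $target already exists"
    return 0
  fi
  hdfs dfs -mkdir -p "$parent" && hdfs dfs -put "$src" "$parent"
}
```

Usage: put_if_absent /opt/module/hadoop-3.3.6/input /test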

Run MapReduce on a directory stored in HDFS

hdfs dfs -ls /test/input

# Count the words in an HDFS directory

hadoop jar /opt/module/hadoop-3.3.6/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.6.jar wordcount /test/input/wordCountData /test/input/wordCountDataoutput2

hdfs dfs -ls /test/input

# View the result

hdfs dfs -cat /test/input/wordCountDataoutput2/*
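Because the job refuses to start when its output directory already exists, this article switches to a new output name for every run. A sketch with a hypothetical helper that instead deletes the old output first (hdfs dfs -rm -r -f does not fail when the path is missing) and then prints the result; it assumes the jar location used throughout this article, and it permanently discards the previous output.

```shell
# Hypothetical helper: re-run the wordcount example on HDFS paths,
# deleting the old output directory first.
# Usage: run_wordcount INPUT_DIR OUTPUT_DIR
run_wordcount() {
  local in=$1 out=$2
  local jar=${HADOOP_HOME:-/opt/module/hadoop-3.3.6}/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.6.jar
  # -f: no error if the path does not exist
  hdfs dfs -rm -r -f "$out" || return 1
  # The glob is quoted so HDFS, not the local shell, expands it
  hadoop jar "$jar" wordcount "$in" "$out" && hdfs dfs -cat "$out/part-r-*"
}
```

Usage: run_wordcount /test/input/wordCountData /test/input/wordCountDataoutput2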

Start YARN (web UI port 8088)

Configuration changes

Set JAVA_HOME explicitly for YARN in: /opt/module/hadoop-3.3.6/etc/hadoop/yarn-env.sh

export JAVA_HOME=/www/server/jdk8/jdk1.8.0_202
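The JDK path above is specific to the author's machine. A small sketch with a hypothetical helper that checks a candidate directory really contains an executable bin/java before it goes into yarn-env.sh (or mapred-env.sh below).

```shell
# Hypothetical helper: check that a directory looks like a usable JDK home.
# Usage: check_java_home DIR
check_java_home() {
  local dir=$1
  if [ -x "$dir/bin/java" ]; then
    echo "ok: $dir"
  else
    echo "not a JDK home (no executable bin/java): $dir" >&2
    return 1
  fi
}
```

Usage: check_java_home /www/server/jdk8/jdk1.8.0_202. If you do not know where your JDK is, readlink -f "$(which java)" shows the real java binary; drop the trailing /bin/java (or /jre/bin/java for a JDK 8 layout) to get the home directory.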

Add the following properties to yarn-site.xml: /opt/module/hadoop-3.3.6/etc/hadoop/yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties == https://hadoop.apache.org/docs/r3.3.6/hadoop-yarn/hadoop-yarn-common/yarn-default.xml -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>192.168.19.107</value>

</property>

<property>

<name>yarn.nodemanager.env-whitelist</name>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_HOME,PATH,LANG,TZ,HADOOP_MAPRED_HOME</value>

</property>

<!-- Keeps job-log links from redirecting to localhost, which would make the page fail to load -->

<property>

<name>yarn.timeline-service.hostname</name>

<value>192.168.19.107</value>

</property>

</configuration>

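Start

// Very important: run the script from inside the Hadoop directory (I don't know why)

cd /opt/module/hadoop-3.3.6

// Do not launch it with the sh command, otherwise it fails (I don't know why either)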

./sbin/start-yarn.sh

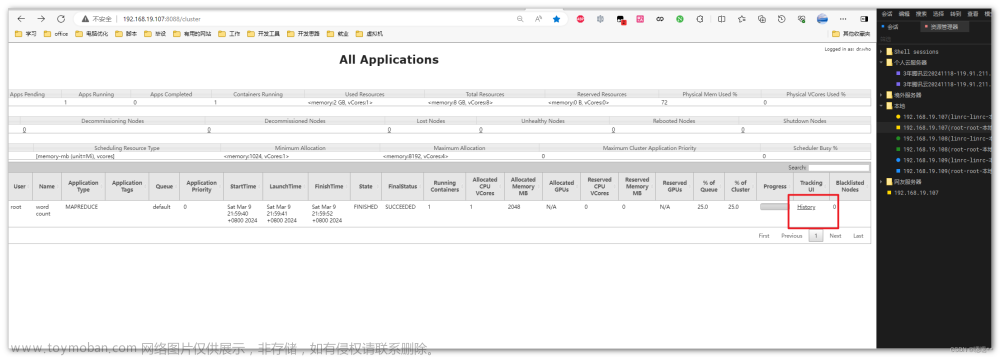
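Both rules above (run from the Hadoop directory, do not use sh) can be wrapped in a hypothetical helper. It changes directory inside a subshell, so your own shell's working directory is untouched, and it executes the script directly so that the script's own shebang line chooses the interpreter.

```shell
# Hypothetical helper: run a script from $HADOOP_HOME/sbin with the Hadoop
# directory as the working directory, without invoking it through sh.
# Usage: hadoop_sbin start-yarn.sh
hadoop_sbin() {
  local home=${HADOOP_HOME:-/opt/module/hadoop-3.3.6}
  # The parentheses create a subshell, so the cd does not leak to the caller
  ( cd "$home" && "./sbin/$1" )
}
```

Usage: hadoop_sbin start-yarn.sh, and later hadoop_sbin stop-yarn.sh.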

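Open the YARN web UI

Browser: http://<ip>:8088

The web UI port is set by yarn.resourcemanager.webapp.address; see https://hadoop.apache.org/docs/r3.3.6/hadoop-yarn/hadoop-yarn-common/yarn-default.xml

Run a word count over every file in an HDFS directory; the run then shows up in the YARN web UI

// Start the word count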

hadoop jar /opt/module/hadoop-3.3.6/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.6.jar wordcount /test/input/wordCountData /test/input/wordCountDataoutput3

Start MapReduce

Configuration changes

Set JAVA_HOME explicitly for MapReduce in: /opt/module/hadoop-3.3.6/etc/hadoop/mapred-env.sh

export JAVA_HOME=/www/server/jdk8/jdk1.8.0_202

Add the following to mapred-site.xml: /opt/module/hadoop-3.3.6/etc/hadoop/mapred-site.xml

<configuration>

<!-- The runtime framework for executing MapReduce jobs. Can be one of local, classic or yarn -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.application.classpath</name>

<value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value>

</property>

<!-- JobHistory server, which collects MapReduce job history -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>192.168.19.107:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>192.168.19.107:19888</value>

</property>

</configuration>

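Start the job history server

mapred --daemon start historyserver

View job logs

Enable log aggregation (the execution details of each job are uploaded to HDFS)

Before enabling

Enabling

Note: if YARN is already running, it must be restarted after its configuration changes for them to take effect

# Stop the job history server

mapred --daemon stop historyserver

# Stop YARN

cd /opt/module/hadoop-3.3.6

./sbin/stop-yarn.sh

Add the following to yarn-site.xml: /opt/module/hadoop-3.3.6/etc/hadoop/yarn-site.xml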

<configuration>

<!-- Site specific YARN configuration properties == https://hadoop.apache.org/docs/r3.3.6/hadoop-yarn/hadoop-yarn-common/yarn-default.xml -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>192.168.19.107</value>

</property>

<property>

<name>yarn.nodemanager.env-whitelist</name>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_HOME,PATH,LANG,TZ,HADOOP_MAPRED_HOME</value>

</property>

<property>

<name>yarn.timeline-service.hostname</name>

<value>192.168.19.107</value>

</property>

<!-- Enable log aggregation -->

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<!-- How long aggregated logs are kept, in seconds -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>2592000</value>

</property>

</configuration>
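yarn.log-aggregation.retain-seconds is given in seconds, so a retention period in days has to be converted. A one-line sketch with a hypothetical helper; 30 days gives the 2592000 used above (the default, -1, never deletes aggregated logs).

```shell
# Hypothetical helper: convert a retention period in days into the
# seconds expected by yarn.log-aggregation.retain-seconds.
days_to_seconds() {
  echo $(( $1 * 24 * 60 * 60 ))
}

days_to_seconds 30   # prints 2592000
```

Once aggregation is on, the logs of a finished job can also be read from the command line with yarn logs -applicationId <application id>.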

# Start YARN

cd /opt/module/hadoop-3.3.6

./sbin/start-yarn.sh

# Start the job history server

mapred --daemon start historyserver

# Run a job again

hadoop jar /opt/module/hadoop-3.3.6/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.6.jar wordcount /test/input/wordCountData /test/input/wordCountDataoutput5
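The stop and start steps above can be bundled so that every later change to yarn-site.xml or mapred-site.xml is applied in the same order. A sketch with a hypothetical helper, assuming the same HADOOP_HOME as the rest of this article; edit the configuration files first, then call it.

```shell
# Hypothetical helper: restart YARN and the JobHistory server in the order
# used above, so configuration changes take effect.
restart_yarn_and_history() {
  local home=${HADOOP_HOME:-/opt/module/hadoop-3.3.6}
  mapred --daemon stop historyserver
  # Run the sbin scripts from the Hadoop directory, in subshells
  ( cd "$home" && ./sbin/stop-yarn.sh ) || return 1
  ( cd "$home" && ./sbin/start-yarn.sh ) || return 1
  mapred --daemon start historyserver
}
```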

Source: https://www.toymoban.com/news/detail-840262.html

