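Today I want to share a thin wrapper I wrote around the official Qwen 1.5 usage example (the source is also available on the model's Hugging Face page). There is nothing technically deep here. The point is to record how to deploy a large model locally from scratch, in plain code and without third-party tools, which I think is genuinely useful for engineers.

Anyone can now deploy a local model with one click using a tool like ollama, but integrating a model deeply into an existing system that way gets awkward. So I think the plain-code approach is still worth sharing, and I hope it helps more people.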

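1. Downloading the model

As covered in an earlier article, Hugging Face models can be downloaded from within China through https://hf-mirror.com/. Once the environment variable is configured, Qwen can be downloaded with the following commands: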

yuanzhenhui@MacBook-Pro ~ % cd /Users/yuanzhenhui/Documents/code_space/git/processing/python/tcm_assistant/transformer/model/qwen
yuanzhenhui@MacBook-Pro qwen % huggingface-cli download --resume-download Qwen/Qwen1.5-7B-Chat --local-dir .
Consider using `hf_transfer` for faster downloads. This solution comes with some limitations. See https://huggingface.co/docs/huggingface_hub/hf_transfer for more details.
Fetching 14 files: 7%|█▊ | 1/14 [00:00<00:06, 1.87it/s]downloading https://hf-mirror.com/Qwen/Qwen1.5-7B-Chat/resolve/294483ad23713036574b30587b186713373f4271/README.md to /Users/yuanzhenhui/.cache/huggingface/hub/models--Qwen--Qwen1.5-7B-Chat/blobs/0963c198257a0607c4d2def66a84aec172240afd.incomplete
README.md: 4.26kB [00:00, 6.40MB/s]
Fetching 14 files: 100%|████████████████████████| 14/14 [00:01<00:00, 12.81it/s]
/Users/yuanzhenhui/Documents/code_space/git/processing/python/tcm_assistant/transformer/model/qwen

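Because I had already downloaded the model once, I get the output above. On a first download you may have to wait a while for everything to finish (the model is fairly large, after all).

On macOS the model ends up at the following path: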
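For anyone who wants to script this step instead of typing it, here is a minimal sketch that builds the same huggingface-cli invocation as an argument list. The article does not name the environment variable it configures; I am assuming it is HF_ENDPOINT, and the helper names `build_download_command` and `mirror_env` are hypothetical. The sketch only prints the command and does not start a download.

```python
import os
import shlex

# Mirror named in the article. HF_ENDPOINT is an assumption: the article only
# says "the environment variable" without naming it.
HF_MIRROR = "https://hf-mirror.com"


def build_download_command(repo_id, local_dir="."):
    """Return the huggingface-cli call shown above as an argv list."""
    return [
        "huggingface-cli", "download",
        "--resume-download", repo_id,
        "--local-dir", local_dir,
    ]


def mirror_env(base_env=None):
    """Copy an environment mapping and point HF_ENDPOINT at the mirror."""
    env = dict(os.environ if base_env is None else base_env)
    env["HF_ENDPOINT"] = HF_MIRROR
    return env


cmd = build_download_command("Qwen/Qwen1.5-7B-Chat")
print(shlex.join(cmd))
# To actually download, one could run:
#   subprocess.run(cmd, env=mirror_env(), check=True)
```

Keeping the command as a list rather than a single string avoids shell quoting problems if the target directory contains spaces.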

(base) yuanzhenhui@MacBook-Pro hub % pwd
/Users/yuanzhenhui/.cache/huggingface/hub
(base) yuanzhenhui@MacBook-Pro hub % ls
models--BAAI--bge-large-zh-v1.5 models--Qwen--Qwen1.5-7B-Chat version.txt
(base) yuanzhenhui@MacBook-Pro hub %

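Because the repository is "Qwen/Qwen1.5-7B-Chat", it is stored in a folder named "models--Qwen--Qwen1.5-7B-Chat".

At this point you may ask: doesn't the huggingface-cli command include the "--local-dir ." argument? If the model isn't saved to the location that argument points to, what is the argument for? And what does the "." (the current directory) mean?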
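The naming rule can be written down in a couple of lines. This is a small sketch of the convention as I understand it (repo type plus "s", then the repo id with "/" replaced by "--"); the function names are my own, and the default path assumes the cache location has not been overridden through environment variables.

```python
from pathlib import Path


def cache_folder_name(repo_id, repo_type="model"):
    """Turn a repo id such as 'Qwen/Qwen1.5-7B-Chat' into its cache folder name."""
    return "--".join([f"{repo_type}s", *repo_id.split("/")])


def default_cache_path(repo_id):
    """Assumed default location: ~/.cache/huggingface/hub/<folder name>."""
    return Path.home() / ".cache" / "huggingface" / "hub" / cache_folder_name(repo_id)


print(cache_folder_name("Qwen/Qwen1.5-7B-Chat"))
print(default_cache_path("BAAI/bge-large-zh-v1.5"))
```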

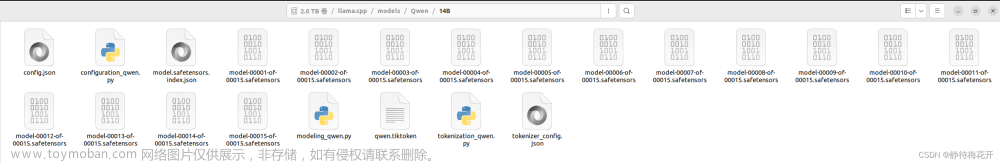

What --local-dir actually holds is the model's symbolic links and configuration files, as shown below:

(base) yuanzhenhui@MacBook-Pro qwen % tree -l
.
├── LICENSE
├── README.md
├── config.json
├── generation_config.json
├── merges.txt
├── model-00001-of-00004.safetensors -> ../../../../../../../../../.cache/huggingface/hub/models--Qwen--Qwen1.5-7B-Chat/blobs/9e8f7873d7c4c74b8883db207a08bf8a783ec8c26da6b3d660a0929048ce6422
├── model-00002-of-00004.safetensors -> ../../../../../../../../../.cache/huggingface/hub/models--Qwen--Qwen1.5-7B-Chat/blobs/e573fdaf3eba785c4b31b8858288f762f3541f09d75b53dfb1ae4d8ee5011d65
├── model-00003-of-00004.safetensors -> ../../../../../../../../../.cache/huggingface/hub/models--Qwen--Qwen1.5-7B-Chat/blobs/1d7cd36508251baa069a894f6ee98da5929e8b0788ff9c9faa7934ad102f845a
├── model-00004-of-00004.safetensors -> ../../../../../../../../../.cache/huggingface/hub/models--Qwen--Qwen1.5-7B-Chat/blobs/189e7a297229937ec93912c940ef05019738c56ff0720c811fd083f5dd400dce
├── model.safetensors.index.json
├── tokenizer.json -> ../../../../../../../../../.cache/huggingface/hub/models--Qwen--Qwen1.5-7B-Chat/blobs/33ea6c72ebb92a237fa2bdf26c5ff16592efcdae
├── tokenizer_config.json
└── vocab.json

0 directories, 13 files

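model-00001-of-00004.safetensors, model-00002-of-00004.safetensors and the other large files are all links into …/.cache/huggingface/hub. Presumably the authors had reuse in mind: rather than downloading the weights straight into the directory you specify, the tool keeps them in the shared cache and creates symbolic links in that directory. I won't dig into it further here.

2. Deploying and using the model in code

Before actually using the model, the official Qwen 1.5 notes say that transformers must be upgraded to version 4.37.0 or later.

In that case, just run pip install --upgrade: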
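To check which files in a download directory are real files and which are links into the cache, something like the following sketch works with the standard library alone. The layout it inspects is a throwaway directory with made-up names (`cache/blobs/abc123`, `qwen/`), built only so the example runs anywhere; it is not the real cache. The symlink behaviour described above is what this environment produced (the pip log further down shows huggingface-hub 0.20.3), and other versions may behave differently.

```python
import os
import tempfile
from pathlib import Path


def describe_entries(directory):
    """Map each entry name to its symlink target, or None for a regular file."""
    return {
        entry.name: (os.readlink(entry) if entry.is_symlink() else None)
        for entry in sorted(Path(directory).iterdir())
    }


def demo():
    """Build a tiny fake cache plus local dir (hypothetical names) and inspect it."""
    with tempfile.TemporaryDirectory() as tmp:
        blobs = Path(tmp) / "cache" / "blobs"
        local_dir = Path(tmp) / "qwen"
        blobs.mkdir(parents=True)
        local_dir.mkdir()
        (blobs / "abc123").write_bytes(b"weights")
        (local_dir / "config.json").write_text("{}")
        # Relative link from the local dir back into the fake cache
        target = os.path.join("..", "cache", "blobs", "abc123")
        os.symlink(target, local_dir / "model.safetensors")
        return describe_entries(local_dir)


for name, target in demo().items():
    print(name, "->", target if target else "(regular file)")
```

Pointing `describe_entries` at the real download directory should list the same links that `tree -l` shows.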

(base) yuanzhenhui@MacBook-Pro qwen % pip install --upgrade transformers
Requirement already satisfied: transformers in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (4.37.0)
Collecting transformers
Downloading transformers-4.39.3-py3-none-any.whl.metadata (134 kB)
━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 134.8/134.8 kB 358.9 kB/s eta 0:00:00
Requirement already satisfied: filelock in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (3.13.1)
Requirement already satisfied: huggingface-hub<1.0,>=0.19.3 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (0.20.3)
Requirement already satisfied: numpy>=1.17 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (1.26.4)
Requirement already satisfied: packaging>=20.0 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (23.2)
Requirement already satisfied: pyyaml>=5.1 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (6.0.1)
Requirement already satisfied: regex!=2019.12.17 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (2023.10.3)
Requirement already satisfied: requests in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (2.31.0)
Requirement already satisfied: tokenizers<0.19,>=0.14 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (0.15.2)
Requirement already satisfied: safetensors>=0.4.1 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (0.4.2)
Requirement already satisfied: tqdm>=4.27 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from transformers) (4.65.0)
Requirement already satisfied: fsspec>=2023.5.0 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from huggingface-hub<1.0,>=0.19.3->transformers) (2023.10.0)
Requirement already satisfied: typing-extensions>=3.7.4.3 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from huggingface-hub<1.0,>=0.19.3->transformers) (4.9.0)
Requirement already satisfied: charset-normalizer<4,>=2 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from requests->transformers) (2.0.4)
Requirement already satisfied: idna<4,>=2.5 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from requests->transformers) (3.4)
Requirement already satisfied: urllib3<3,>=1.21.1 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from requests->transformers) (2.0.7)
Requirement already satisfied: certifi>=2017.4.17 in /Users/yuanzhenhui/anaconda3/lib/python3.11/site-packages (from requests->transformers) (2024.2.2)
Downloading transformers-4.39.3-py3-none-any.whl (8.8 MB)
━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 8.8/8.8 MB 2.6 MB/s eta 0:00:00
Installing collected packages: transformers
Attempting uninstall: transformers
Found existing installation: transformers 4.37.0
Uninstalling transformers-4.37.0:
Successfully uninstalled transformers-4.37.0
Successfully installed transformers-4.39.3
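If the script is going to run on machines you don't control, the 4.37.0 requirement can also be checked at startup. The sketch below is one way to do that with the standard library only; `version_tuple` and `transformers_is_recent_enough` are hypothetical helper names, and the parser deliberately looks at just the first three numeric components of the version string.

```python
from importlib import metadata

# Minimum version stated in the Qwen 1.5 notes
MIN_TRANSFORMERS = (4, 37, 0)


def version_tuple(version):
    """Parse '4.39.3' or '4.40.0.dev0' into a 3-tuple of ints."""
    parts = []
    for piece in version.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits or 0))
    while len(parts) < 3:  # pad '4.37' to (4, 37, 0)
        parts.append(0)
    return tuple(parts)


def transformers_is_recent_enough(installed=None):
    """Check an explicit version string, or the installed package if omitted."""
    if installed is None:
        try:
            installed = metadata.version("transformers")
        except metadata.PackageNotFoundError:
            return False  # not installed at all
    return version_tuple(installed) >= MIN_TRANSFORMERS


print(transformers_is_recent_enough())
```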

That upgraded it to 4.39.3 with no trouble. Next comes the deployment code:

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

"""
Qwen 1.5 is integrated into transformers, so this script uses the standard transformers API.
"""

# Model name and model instance
model_name = "Qwen/Qwen1.5-7B-Chat"
model = AutoModelForCausalLM.from_pretrained(model_name, torch_dtype="auto", device_map="auto")

# System prompt that defines the model's role
sys_content = "You are a helpful assistant"


# Load the Qwen tokenizer
def setup_qwen_tokenizer():
    return AutoTokenizer.from_pretrained(model_name)


# Build the model inputs for one question
def setup_model_input(tokenizer, prompt):
    # Use CUDA when it is available, otherwise fall back to the CPU
    if torch.cuda.is_available():
        device = torch.device("cuda")
    else:
        device = torch.device("cpu")
    # The conversation as a list of role/content messages
    messages = [
        {"role": "system", "content": sys_content},
        {"role": "user", "content": prompt}
    ]
    # Render the messages into prompt text with the tokenizer's chat template,
    # then tokenize that text into PyTorch tensors
    text = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
    return tokenizer([text], return_tensors="pt").to(device)


# Submit a question and return the reply
def msg_generate(prompt):
    tokenizer = setup_qwen_tokenizer()
    # Prepare the inputs the model needs
    model_inputs = setup_model_input(tokenizer, prompt)
    # Generate the output token ids
    generated_ids = model.generate(model_inputs.input_ids, max_new_tokens=512)
    # Drop the prompt tokens so that only the newly generated ids remain
    generated_ids = [output_ids[len(input_ids):] for input_ids, output_ids in zip(
        model_inputs.input_ids, generated_ids)]
    # Decode the remaining ids into the reply text
    return tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]


if __name__ == '__main__':
    # Example call. The prompt asks, in Chinese: "Can TCM theory explain and
    # resolve general fatigue accompanied by a rapid heartbeat?"
    prompt = "中医药理论是否能解释并解决全身乏力伴随心跳过速的症状?"
    response = msg_generate(prompt)
    print(">>> "+response)
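Two steps in the script are less obvious than the rest: what apply_chat_template produces, and why the generated ids are sliced. The sketch below imitates both in plain Python so they can be inspected without loading the model. `render_chatml` is my own approximation of the ChatML-style layout that, to my understanding, Qwen 1.5's chat template uses; the tokenizer's real template remains the authority. `strip_prompt_tokens` is the same slicing as in `msg_generate`, applied to ordinary lists with made-up ids.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Approximate the prompt text built from role/content messages (assumed ChatML layout)."""
    text = ""
    for message in messages:
        text += f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
    if add_generation_prompt:
        # Open the assistant turn so the model continues from here
        text += "<|im_start|>assistant\n"
    return text


def strip_prompt_tokens(input_ids, generated_ids):
    """generate() returns prompt + reply ids per sequence; keep only the reply part."""
    return [out[len(inp):] for inp, out in zip(input_ids, generated_ids)]


messages = [
    {"role": "system", "content": "You are a helpful assistant"},
    {"role": "user", "content": "hello"},
]
print(render_chatml(messages))

# Made-up ids: the first three belong to the prompt, the last two to the reply
print(strip_prompt_tokens([[11, 12, 13]], [[11, 12, 13, 21, 22]]))
```

Without the slicing step, the decoded text would begin with the system and user messages before the model's answer.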

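Once msg_generate is wrapped like this, it can be exposed as a service wherever you need it (put a Flask layer around it and you have an API; add a polished UI and the boss can cash in on another round of hype while the engineers keep their jobs... just kidding). Here is what a run looks like: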

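(base) yuanzhenhui@MacBook-Pro qwen % python qwen_model.py
Loading checkpoint shards: 100%|██████████████████| 4/4 [00:00<00:00, 8.75it/s]
WARNING:root:Some parameters are on the meta device device because they were offloaded to the disk and cpu.
Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.
>>> [reply translated from Chinese] TCM theory can indeed attempt to explain and treat the combined symptoms of general fatigue and a rapid heartbeat, but this requires syndrome differentiation for the individual case, because TCM regards illness as the result of an imbalance between the internal and external environment and in the function of the organs. Possible explanations and treatments include:

1. General fatigue: TCM regards this as a sign of "qi deficiency", "blood deficiency" or "deficiency of both yin and yang". Qi deficiency may reduce the body's functions and weaken transport and transformation; blood deficiency may impair the blood's nourishment of the body and cause weakness in the limbs. Palpitations (rapid heartbeat) may stem from unsettled heart qi, a restless heart spirit, insufficient heart blood, or stasis in the heart vessels. Treatment may include tonifying qi and nourishing blood, and benefiting the heart and calming the spirit, with herbs such as astragalus (huangqi), ginseng, angelica (danggui), prepared rehmannia (shudi) and jujube seed (zaoren).

2. Rapid heartbeat: TCM attributes this to heart fire flaring upward, heart blood stasis, a restless heart spirit and similar causes. Depending on the cause, the approach may be clearing heat and removing toxins, invigorating blood and resolving stasis, or sedating and calming the spirit, with herbs such as coptis (huanglian), salvia (danshen), ophiopogon (maidong) and arborvitae seed (baiziren).

3. In practice, a TCM practitioner combines the four examinations (looking, listening and smelling, asking, and pulse-taking) to understand the patient's overall condition and then writes an individualized prescription. If the rapid heartbeat comes with other symptoms such as chest tightness, palpitations, insomnia or a pale complexion, the results of a Western-medicine electrocardiogram and other tests may also need to be considered.

4. Note that although TCM has its own distinctive efficacy, for severe or persistent symptoms it is still advisable to see a doctor promptly, relying mainly on Western-medicine diagnosis and treatment with TCM as a supporting therapy.

Overall, TCM has distinctive strengths in regulating the body's general condition and improving sub-health states, especially in treating some chronic illnesses, but for acute illness and severe symptoms you should follow medical advice, and a combination of Chinese and Western medicine is preferable.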
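The remark above about putting an HTTP layer in front of msg_generate can be sketched as follows. To keep the example free of dependencies I have swapped Flask for the standard library's http.server, so this is not the Flask setup itself, only the same idea: accept a JSON body with a "prompt" field and return the reply as JSON. The names `make_handler`, `serve` and `ask`, the field names, and the echo function that stands in for the real model are all assumptions made for illustration.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def make_handler(generate):
    """Build a request handler that answers POSTed prompts with generate(prompt)."""
    class ChatHandler(BaseHTTPRequestHandler):
        def do_POST(self):
            length = int(self.headers.get("Content-Length", 0))
            try:
                prompt = json.loads(self.rfile.read(length))["prompt"]
            except (ValueError, KeyError, TypeError):
                self._send(400, {"error": "expected a JSON body with a 'prompt' field"})
                return
            self._send(200, {"response": generate(prompt)})

        def _send(self, status, payload):
            body = json.dumps(payload, ensure_ascii=False).encode("utf-8")
            self.send_response(status)
            self.send_header("Content-Type", "application/json; charset=utf-8")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, *args):
            pass  # keep the example quiet

    return ChatHandler


def serve(generate, host="127.0.0.1", port=0):
    """Start the server in a background thread; port 0 picks a free port."""
    server = ThreadingHTTPServer((host, port), make_handler(generate))
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def ask(server, prompt):
    """Small client used to try the endpoint (bypasses any configured proxy)."""
    url = f"http://127.0.0.1:{server.server_address[1]}/"
    data = json.dumps({"prompt": prompt}).encode("utf-8")
    request = urllib.request.Request(url, data=data, headers={"Content-Type": "application/json"})
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(request) as reply:
        return json.loads(reply.read())["response"]


# Stand-in for msg_generate so the sketch runs without loading the model
server = serve(lambda prompt: "echo: " + prompt)
print(ask(server, "hello"))
server.shutdown()
server.server_close()
```

In a real deployment the lambda would be replaced by `msg_generate`, and given how long one CPU-bound answer takes, generous client timeouts would be needed.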

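Since I'm on a Mac, I could only run this on the CPU, and this single question took nearly 20 minutes, so for local deployment I recommend using a GPU. The Qwen team has also thoughtfully published GGUF builds (aimed at CPU users), but I haven't tried them, so I won't say more. Article source: https://www.toymoban.com/news/detail-852072.html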

Still, being able to deploy in code leaves a lot of room to build on. I expect more companies to join the "AI+" push, and I really want to thank Alibaba's Qwen team for their hard work and generous sharing.
